LingEcho-App

LingEcho is an intelligent voice interaction platform that provides a comprehensive AI voice interaction solution. It integrates advanced speech recognition (ASR), text-to-speech (TTS), large language models (LLM) and real-time communication, supporting real-time calls, voice cloning, knowledge base management and other enterprise-level features.

Stars: 252

LingEcho is an enterprise-grade intelligent voice interaction platform that integrates advanced speech recognition, text-to-speech, large language models, and real-time communication technologies. It provides features such as AI character real-time calls, voice cloning, workflow automation, knowledge base management, application integration, device management, alert system, billing system, organization management, key management, VAD voice activity detection, voiceprint recognition service, ASR-TTS service, MCP service, and hardware device support.

README:

Intelligent Voice Interaction Platform - Giving AI a Real Voice

Experience LingEcho online: https://lingecho.com

LingEcho is an enterprise-grade intelligent voice interaction platform based on Go + React, providing a complete AI voice interaction solution. It integrates advanced speech recognition (ASR), text-to-speech (TTS), large language models (LLM), and real-time communication technologies, supporting real-time calls, voice cloning, knowledge base management, workflow automation, device management, alerting, billing, and other enterprise-level features.

- AI Character Real-time Calls - Real-time voice calls with AI characters based on WebRTC technology, supporting high-quality audio transmission and low-latency interaction

- Voice Cloning & Training - Support for custom voice training and cloning, allowing AI assistants to have exclusive voice characteristics for personalized voice experiences

- Workflow Automation - Visual workflow designer with multiple trigger types (API, Event, Schedule, Webhook, Assistant), supporting complex business process automation

- Knowledge Base Management - Powerful knowledge base management system supporting document storage, retrieval, and AI analysis, providing intelligent knowledge services

- Application Integration - Quick integration of new applications through JS injection, an API gateway, and key management

- Device Management - Complete device management system with OTA firmware updates, device monitoring, and remote control

- Alert System - Comprehensive alerting system with rule-based monitoring, multi-channel notifications, and alert management

- Billing System - Flexible billing and usage tracking system with detailed usage records, bill generation, and quota management

- Organization Management - Multi-tenant organization management with group collaboration, member management, and resource sharing

- Key Management & API Platform - Enterprise-level key management system and API development platform

- VAD Voice Activity Detection - Standalone SileroVAD service supporting PCM and OPUS formats

- Voiceprint Recognition Service - ModelScope-based voiceprint recognition service supporting speaker identification

- ASR-TTS Service - Standalone ASR (Whisper) and TTS (edge-tts) service supporting speech recognition and text-to-speech synthesis

- MCP Service - Model Context Protocol service supporting SSE and stdio transports

- Hardware Device Support - Support for xiaozhi protocol hardware devices with complete WebSocket communication
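To make the VAD feature above more concrete, here is a minimal sketch of frame-level voice activity detection on raw 16-bit mono PCM. It uses a simple energy threshold, not SileroVAD, and the sample rate, frame length, threshold, and function names are all assumptions chosen for illustration rather than values taken from LingEcho.

```python
import math
import struct

SAMPLE_RATE = 16000  # assumed sample rate in Hz
FRAME_MS = 30        # assumed frame length in milliseconds


def split_frames(pcm: bytes, sample_rate: int = SAMPLE_RATE, frame_ms: int = FRAME_MS):
    """Split 16-bit mono PCM into fixed-size frames, dropping a trailing partial frame."""
    frame_bytes = sample_rate * frame_ms // 1000 * 2  # 2 bytes per sample
    return [pcm[i:i + frame_bytes]
            for i in range(0, len(pcm) - frame_bytes + 1, frame_bytes)]


def rms(frame: bytes) -> float:
    """Root-mean-square amplitude of a little-endian 16-bit PCM frame."""
    count = len(frame) // 2
    samples = struct.unpack(f"<{count}h", frame)
    return math.sqrt(sum(s * s for s in samples) / count)


def speech_flags(pcm: bytes, threshold: float = 500.0):
    """Return one boolean per frame: True when the frame energy exceeds the threshold."""
    return [rms(frame) > threshold for frame in split_frames(pcm)]


# Synthetic input: one frame of silence followed by one frame of a 440 Hz tone.
n = SAMPLE_RATE * FRAME_MS // 1000
silence = struct.pack(f"<{n}h", *([0] * n))
tone = struct.pack(
    f"<{n}h",
    *(int(8000 * math.sin(2 * math.pi * 440 * t / SAMPLE_RATE)) for t in range(n)),
)
flags = speech_flags(silence + tone)
print(flags)  # [False, True]
```

A model-based detector such as SileroVAD replaces the energy test with a neural classifier, but the framing step stays the same in spirit: audio is cut into short fixed-length windows and each window gets a speech or non-speech decision.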

| Service | Port | Tech Stack | Description |
|---|---|---|---|
| Main Service | 7072 | Go + Gin | Core backend service with RESTful API and WebSocket support |
| VAD Service | 7073 | Python + FastAPI | Voice activity detection service (SileroVAD) |
| Voiceprint Service | 7074 | Python + FastAPI | Voiceprint recognition service (ModelScope) |
| ASR-TTS Service | 7075 | Python + FastAPI | ASR (Whisper) and TTS (edge-tts) service |
| MCP Service | 3001 | Go | Model Context Protocol service (SSE transport, optional) |
| Frontend Service | 5173 | React + Vite | Development frontend (Vite dev server) |
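When several of these services run side by side, it can help to check which ports are actually accepting connections. The following is a rough sketch using only the Python standard library. The port numbers come from the table above, while the host `127.0.0.1`, the short service names, and the helper functions are assumptions for a local setup.

```python
import socket

# Ports copied from the service table; the keys are illustrative short names.
SERVICES = {
    "main": 7072,
    "vad": 7073,
    "voiceprint": 7074,
    "asr-tts": 7075,
    "mcp": 3001,
    "frontend": 5173,
}


def is_listening(port: int, host: str = "127.0.0.1", timeout: float = 0.5) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def report(services=SERVICES, host: str = "127.0.0.1") -> dict:
    """Map each service name to whether its port currently accepts connections."""
    return {name: is_listening(port, host) for name, port in services.items()}


if __name__ == "__main__":
    for name, up in report().items():
        print(f"{name:<12} {'up' if up else 'down'}")
```

An open port only shows that something is listening there. It does not confirm that the listener is the expected LingEcho service or that it is healthy.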

For detailed architecture documentation, see Architecture Documentation.

- Go >= 1.24.0

- Node.js >= 18.0.0

- npm >= 8.0.0 or pnpm >= 8.0.0

- Git

- Python >= 3.10 (for optional services: VAD, Voiceprint, ASR-TTS)

- Docker & Docker Compose (for containerized deployment, recommended)

The easiest way to get started with LingEcho is using Docker Compose:

docker run -d --name neo4j \

-p 7474:7474 -p 7687:7687 \

-e NEO4J_AUTH=neo4j/admin123 \

neo4j:latest

# Clone the project

git clone https://github.com/your-username/LingEcho.git

cd LingEcho

# Copy environment configuration

cp server/env.example .env

# Edit .env file and configure your settings

# At minimum, set: SESSION_SECRET, LLM_API_KEY

# Start services with Docker Compose

docker-compose up -d

# View logs

docker-compose logs -f lingecho

Access the Application:

- Frontend Interface: http://localhost:7072

- Backend API: http://localhost:7072/api

- API Documentation: http://localhost:7072/api/docs
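The setup comments above ask for at least `SESSION_SECRET` and `LLM_API_KEY` in `.env`. As a hedged sketch, the snippet below shows one way to spot missing values before starting the containers and to generate a random secret. The parsing rules are deliberately simplified (plain `KEY=VALUE` lines, no quoting or interpolation), and the sample contents and helper names are hypothetical.

```python
import secrets

# The minimum keys named in the setup comments.
REQUIRED_KEYS = ("SESSION_SECRET", "LLM_API_KEY")


def parse_env(text: str) -> dict:
    """Parse KEY=VALUE lines, ignoring blank lines and # comments."""
    values = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip()
    return values


def missing_keys(text: str, required=REQUIRED_KEYS) -> list:
    """Return the required keys that are absent or empty."""
    env = parse_env(text)
    return [key for key in required if not env.get(key)]


# Hypothetical .env contents, for illustration only.
sample = """
# example configuration
SESSION_SECRET=
LLM_API_KEY=sk-example
"""

print(missing_keys(sample))        # ['SESSION_SECRET']
print(len(secrets.token_hex(32)))  # 64 hex characters, usable as a session secret
```

To check a real file, the same function can be fed `open(".env").read()`. Whether LingEcho imposes a particular length or format on `SESSION_SECRET` is not stated here, so the 32-byte value is only a reasonable default.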

Optional Services:

# Start with PostgreSQL database

docker-compose --profile postgres up -d

# Start with Redis cache

docker-compose --profile redis up -d

# Start with Nginx reverse proxy

docker-compose --profile nginx up -d

# Start frontend development server

docker-compose --profile dev up -d

# Manual setup: install native build dependencies with Homebrew

brew install pkg-config

brew install opus opusfile

# Clone the project

git clone https://github.com/your-username/LingEcho.git

cd LingEcho

# Backend setup

cd server

go mod tidy

cp env.example .env

# Edit .env file with your configuration

# Frontend setup

cd ../web

npm install # or pnpm install

npm run build # For production

# OR

npm run dev # For development (runs on port 5173)

# Start backend (from server directory)

cd ../server

go run ./cmd/server/main.go -mode=dev

Access the Application:

- Frontend Interface: http://localhost:5173 (dev) or http://localhost:7072 (production)

- Backend API: http://localhost:7072/api

- API Documentation: http://localhost:7072/api/docs

Optional Services (if needed):

# Start VAD service

cd services/vad-service

docker-compose up -d

# Or manually: python vad_service.py

# Start Voiceprint service

cd services/voiceprint-api

docker-compose up -d

# Or manually: python -m app.main

# Start ASR-TTS service

cd services/asr-tts-service

docker-compose up -d

# Or manually: python -m app.main

# Start MCP service (optional)

cd server

go run ./cmd/mcp/main.go --transport sse --port 3001

For detailed installation instructions, see Installation Guide.
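The MCP service above is started with the SSE transport, which delivers messages as a `text/event-stream` body. The sketch below parses that wire format from a string so the framing rules are visible: `event:` and `data:` fields, `:` comment lines, and a blank line ending each event. It is not a client for LingEcho's MCP endpoint, whose paths and payloads are not documented here, and the sample stream is made up.

```python
def parse_sse(stream_text: str) -> list:
    """Parse a text/event-stream payload into a list of {'event', 'data'} dicts."""
    events = []
    event_type, data_lines = "message", []  # "message" is the default event type
    for line in stream_text.splitlines():
        if line == "":
            # A blank line dispatches the buffered event, if it has any data.
            if data_lines:
                events.append({"event": event_type, "data": "\n".join(data_lines)})
            event_type, data_lines = "message", []
        elif line.startswith(":"):
            continue  # comment line, often used as a keep-alive
        else:
            field, _, value = line.partition(":")
            if value.startswith(" "):
                value = value[1:]  # a single leading space after the colon is dropped
            if field == "event":
                event_type = value
            elif field == "data":
                data_lines.append(value)
    return events


# Hypothetical stream contents, for illustration only.
sample_stream = (
    "event: ping\n"
    "data: first\n"
    "data: second\n"
    "\n"
    ": keep-alive\n"
    "\n"
    'data: {"ok": true}\n'
    "\n"
)

for event in parse_sse(sample_stream):
    print(event)
```

Multiple `data:` lines in one event are joined with newlines, and an event without a terminating blank line is discarded, which matches how browsers treat the format.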

- Installation Guide - Detailed installation and configuration instructions

- Features Documentation - Complete feature list with screenshots and examples

- Architecture Documentation - System architecture and design

- Development Guide - Development setup and contribution guidelines

- Services Documentation - Detailed service component documentation

We welcome all forms of contributions! Please check our Development Guide for details.

- Fork the Project - Click the Fork button in the top right corner

- Create a Branch - git checkout -b feature/your-feature

- Commit Changes - git commit -m 'Add some feature'

- Push Branch - git push origin feature/your-feature

- Create PR - Create a Pull Request on GitHub

A core team of two full-stack engineers focused on innovation and application of AI voice technology.

| Member | Role | Responsibilities |

|---|---|---|

| chenting | Full-stack Engineer + Project Manager | Responsible for overall project architecture design and full-stack development, leading product direction and technology selection |

| wangyueran | Full-stack Engineer | Responsible for frontend interface development and user experience optimization, ensuring product usability |

- Email: [email protected]


Alternative AI tools for LingEcho-App

Similar Open Source Tools


astron-rpa

AstronRPA is an enterprise-grade Robotic Process Automation (RPA) desktop application that supports low-code/no-code development. It enables users to rapidly build workflows and automate desktop software and web pages. The tool offers comprehensive automation support for various applications, highly component-based design, enterprise-grade security and collaboration features, developer-friendly experience, native agent empowerment, and multi-channel trigger integration. It follows a frontend-backend separation architecture with components for system operations, browser automation, GUI automation, AI integration, and more. The tool is deployed via Docker and designed for complex RPA scenarios.

atom

Atom is an open-source, self-hosted AI agent platform that allows users to automate workflows by interacting with AI agents. Users can speak or type requests, and Atom's specialty agents can plan, verify, and execute complex workflows across various tech stacks. Unlike SaaS alternatives, Atom runs entirely on the user's infrastructure, ensuring data privacy. The platform offers features such as voice interface, specialty agents for sales, marketing, and engineering, browser and device automation, universal memory and context, agent governance system, deep integrations, dynamic skills, and more. Atom is designed for business automation, multi-agent workflows, and enterprise governance.

sandboxed.sh

sandboxed.sh is a self-hosted cloud orchestrator for AI coding agents that provides isolated Linux workspaces with Claude Code, OpenCode & Amp runtimes. It allows users to hand off entire development cycles, run multi-day operations unattended, and keep sensitive data local by analyzing data against scientific literature. The tool features dual runtime support, mission control for remote agent management, isolated workspaces, a git-backed library, MCP registry, and multi-platform support with a web dashboard and iOS app.

claude-007-agents

Claude Code Agents is an open-source AI agent system designed to enhance development workflows by providing specialized AI agents for orchestration, resilience engineering, and organizational memory. These agents offer specialized expertise across technologies, AI system with organizational memory, and an agent orchestration system. The system includes features such as engineering excellence by design, advanced orchestration system, Task Master integration, live MCP integrations, professional-grade workflows, and organizational intelligence. It is suitable for solo developers, small teams, enterprise teams, and open-source projects. The system requires a one-time bootstrap setup for each project to analyze the tech stack, select optimal agents, create configuration files, set up Task Master integration, and validate system readiness.

evi-run

evi-run is a powerful, production-ready multi-agent AI system built on Python using the OpenAI Agents SDK. It offers instant deployment, ultimate flexibility, built-in analytics, Telegram integration, and scalable architecture. The system features memory management, knowledge integration, task scheduling, multi-agent orchestration, custom agent creation, deep research, web intelligence, document processing, image generation, DEX analytics, and Solana token swap. It supports flexible usage modes like private, free, and pay mode, with upcoming features including NSFW mode, task scheduler, and automatic limit orders. The technology stack includes Python 3.11, OpenAI Agents SDK, Telegram Bot API, PostgreSQL, Redis, and Docker & Docker Compose for deployment.

neuropilot

NeuroPilot is an open-source AI-powered education platform that transforms study materials into interactive learning resources. It provides tools like contextual chat, smart notes, flashcards, quizzes, and AI podcasts. Supported by various AI models and embedding providers, it offers features like WebSocket streaming, JSON or vector database support, file-based storage, and configurable multi-provider setup for LLMs and TTS engines. The technology stack includes Node.js, TypeScript, Vite, React, TailwindCSS, JSON database, multiple LLM providers, and Docker for deployment. Users can contribute to the project by integrating AI models, adding mobile app support, improving performance, enhancing accessibility features, and creating documentation and tutorials.

serverless-openclaw

An open-source project, Serverless OpenClaw, that runs OpenClaw on-demand on AWS serverless infrastructure, providing a web UI and Telegram as interfaces. It minimizes cost, offers predictive pre-warming, supports multi-LLM providers, task automation, and one-command deployment. The project aims for cost optimization, easy management, scalability, and security through various features and technologies. It follows a specific architecture and tech stack, with a roadmap for future development phases and estimated costs. The project structure is organized as an npm workspaces monorepo with TypeScript project references, and detailed documentation is available for contributors and users.

AionUi

AionUi is a user interface library for building modern and responsive web applications. It provides a set of customizable components and styles to create visually appealing user interfaces. With AionUi, developers can easily design and implement interactive web interfaces that are both functional and aesthetically pleasing. The library is built using the latest web technologies and follows best practices for performance and accessibility. Whether you are working on a personal project or a professional application, AionUi can help you streamline the UI development process and deliver a seamless user experience.

transformerlab-app

Transformer Lab is an app for experimenting with Large Language Models through a simple cross-platform GUI. Its features include one-click download of popular models, finetuning across different hardware, RLHF and Preference Optimization, support for different operating systems, chatting with models, a choice of inference engines, model evaluation, dataset building for training, embedding calculation, a full REST API, running in the cloud, model conversion across platforms, plugin support, an embedded Monaco code editor, prompt editing, and inference logs.

layra

LAYRA is the world's first visual-native AI automation engine that sees documents like a human, preserves layout and graphical elements, and executes arbitrarily complex workflows with full Python control. It empowers users to build next-generation intelligent systems with no limits or compromises. Built for Enterprise-Grade deployment, LAYRA features a modern frontend, high-performance backend, decoupled service architecture, visual-native multimodal document understanding, and a powerful workflow engine.

bifrost

Bifrost is a high-performance AI gateway that unifies access to multiple providers through a single OpenAI-compatible API. It offers features like automatic failover, load balancing, semantic caching, and enterprise-grade functionalities. Users can deploy Bifrost in seconds with zero configuration, benefiting from its core infrastructure, advanced features, enterprise and security capabilities, and developer experience. The repository structure is modular, allowing for maximum flexibility. Bifrost is designed for quick setup, easy configuration, and seamless integration with various AI models and tools.

nodetool

NodeTool is a platform designed for AI enthusiasts, developers, and creators, providing a visual interface to access a variety of AI tools and models. It simplifies access to advanced AI technologies, offering resources for content creation, data analysis, automation, and more. With features like a visual editor, seamless integration with leading AI platforms, model manager, and API integration, NodeTool caters to both newcomers and experienced users in the AI field.

AgentNeo

AgentNeo is an advanced, open-source Agentic AI Application Observability, Monitoring, and Evaluation Framework designed to provide deep insights into AI agents, Large Language Model (LLM) calls, and tool interactions. It offers robust logging, visualization, and evaluation capabilities to help debug and optimize AI applications with ease. With features like tracing LLM calls, monitoring agents and tools, tracking interactions, detailed metrics collection, flexible data storage, simple instrumentation, interactive dashboard, project management, execution graph visualization, and evaluation tools, AgentNeo empowers users to build efficient, cost-effective, and high-quality AI-driven solutions.

claude-code-plugins-plus-skills

Claude Code Skills & Plugins Hub is a comprehensive marketplace for agent skills and plugins, offering 1537 production-ready agent skills and 270 total plugins. It provides a learning lab with guides, diagrams, and examples for building production agent workflows. The package manager CLI allows users to discover, install, and manage plugins from their terminal, with features like searching, listing, installing, updating, and validating plugins. The marketplace is not on GitHub Marketplace and does not support built-in monetization. It is community-driven, actively maintained, and focuses on quality over quantity, aiming to be the definitive resource for Claude Code plugins.

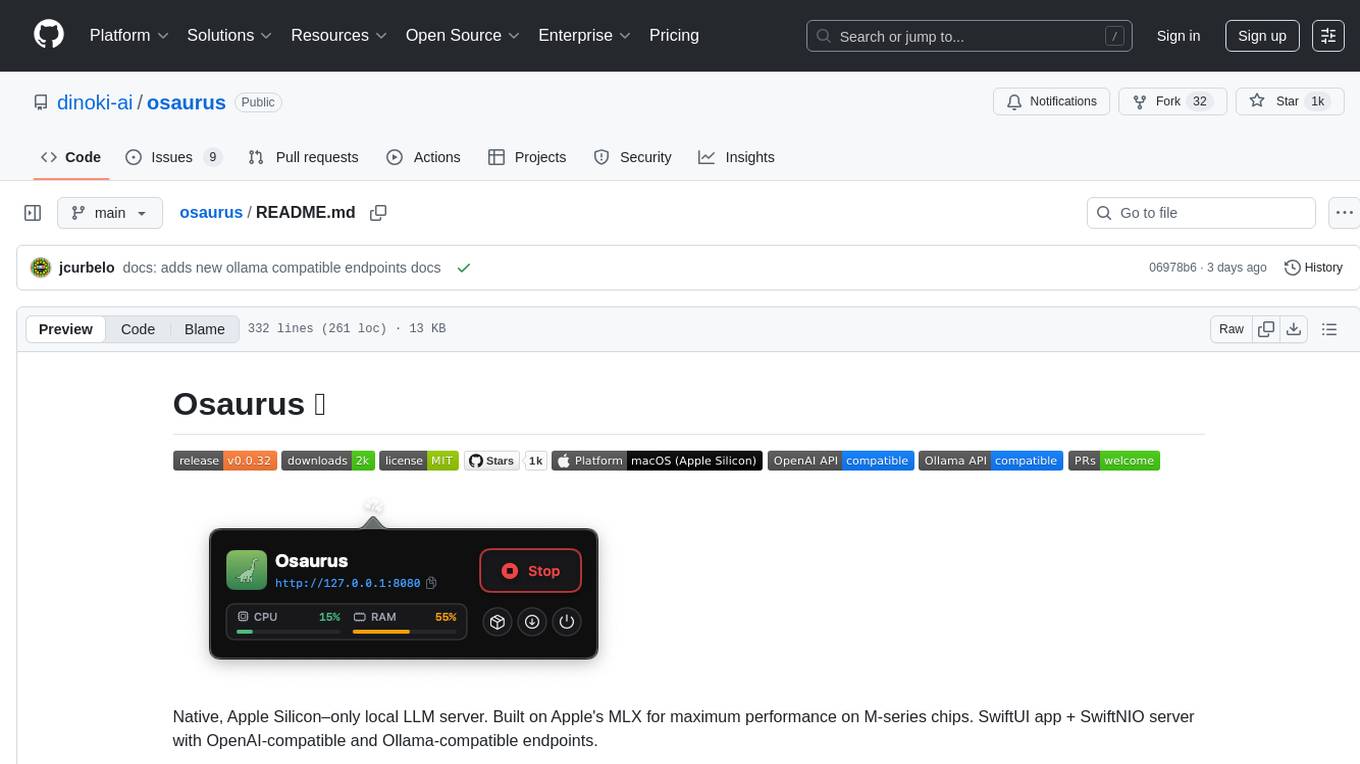

osaurus

Osaurus is a native, Apple Silicon-only local LLM server built on Apple's MLX for maximum performance on M‑series chips. It is a SwiftUI app + SwiftNIO server with OpenAI‑compatible and Ollama‑compatible endpoints. The tool supports native MLX text generation, model management, streaming and non‑streaming chat completions, OpenAI‑compatible function calling, real-time system resource monitoring, and path normalization for API compatibility. Osaurus is designed for macOS 15.5+ and Apple Silicon (M1 or newer) with Xcode 16.4+ required for building from source.

For similar tasks

autogen

AutoGen is a framework that enables the development of LLM applications using multiple agents that can converse with each other to solve tasks. AutoGen agents are customizable, conversable, and seamlessly allow human participation. They can operate in various modes that employ combinations of LLMs, human inputs, and tools.

tracecat

Tracecat is an open-source automation platform for security teams. It's designed to be simple but powerful, with a focus on AI features and a practitioner-obsessed UI/UX. Tracecat can be used to automate a variety of tasks, including phishing email investigation, evidence collection, and remediation plan generation.

ciso-assistant-community

CISO Assistant is a tool that helps organizations manage their cybersecurity posture and compliance. It provides a centralized platform for managing security controls, threats, and risks. CISO Assistant also includes a library of pre-built frameworks and tools to help organizations quickly and easily implement best practices.

ck

Collective Mind (CM) is a collection of portable, extensible, technology-agnostic and ready-to-use automation recipes with a human-friendly interface (aka CM scripts) to unify and automate all the manual steps required to compose, run, benchmark and optimize complex ML/AI applications on any platform with any software and hardware. CM scripts require Python 3.7+ with minimal dependencies and are continuously extended by the community and MLCommons members to run natively on Ubuntu, macOS, Windows, RHEL, Debian, Amazon Linux and any other operating system, in a cloud or inside automatically generated containers, while keeping backward compatibility. Please report encountered issues and contact the team via the public Discord server to help this collaborative engineering effort.

CM scripts were originally developed based on the following requirements from the MLCommons members to help them automatically compose and optimize complex MLPerf benchmarks, applications and systems across diverse and continuously changing models, data sets, software and hardware from Nvidia, Intel, AMD, Google, Qualcomm, Amazon and other vendors:

- must work out of the box with the default options and without the need to edit paths, environment variables and configuration files;

- must be non-intrusive, easy to debug and must reuse existing user scripts and automation tools (such as cmake, make, ML workflows, python poetry and containers) rather than substituting them;

- must have a very simple and human-friendly command line with a Python API and minimal dependencies;

- must require minimal or zero learning curve by using plain Python, native scripts, environment variables and simple JSON/YAML descriptions instead of inventing new workflow languages;

- must have the same interface to run all automations natively, in a cloud or inside containers.

CM scripts were successfully validated by MLCommons to modularize MLPerf inference benchmarks and help the community automate more than 95% of all performance and power submissions in the v3.1 round across more than 120 system configurations (models, frameworks, hardware) while reducing development and maintenance costs.

zenml

ZenML is an extensible, open-source MLOps framework for creating portable, production-ready machine learning pipelines. By decoupling infrastructure from code, ZenML enables developers across your organization to collaborate more effectively as they develop to production.

clearml

ClearML is a suite of tools designed to streamline the machine learning workflow. It includes an experiment manager, MLOps/LLMOps, data management, and model serving capabilities. ClearML is open-source and offers a free tier hosting option. It supports various ML/DL frameworks and integrates with Jupyter Notebook and PyCharm. ClearML provides extensive logging capabilities, including source control info, execution environment, hyper-parameters, and experiment outputs. It also offers automation features, such as remote job execution and pipeline creation. ClearML is designed to be easy to integrate, requiring only two lines of code to add to existing scripts. It aims to improve collaboration, visibility, and data transparency within ML teams.

devchat

DevChat is an open-source workflow engine that enables developers to create intelligent, automated workflows for engaging with users through a chat panel within their IDEs. It combines script writing flexibility, latest AI models, and an intuitive chat GUI to enhance user experience and productivity. DevChat simplifies the integration of AI in software development, unlocking new possibilities for developers.

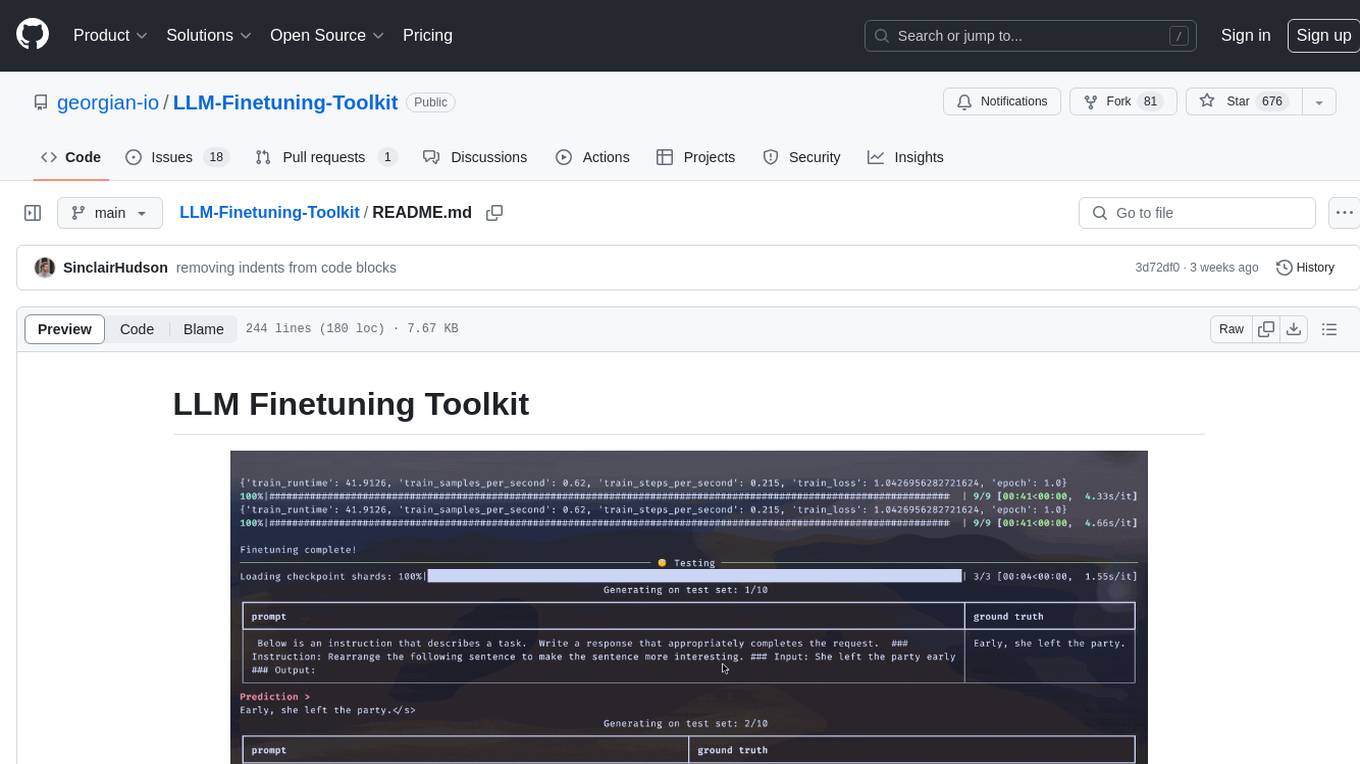

LLM-Finetuning-Toolkit

LLM Finetuning toolkit is a config-based CLI tool for launching a series of LLM fine-tuning experiments on your data and gathering their results. It allows users to control all elements of a typical experimentation pipeline - prompts, open-source LLMs, optimization strategy, and LLM testing - through a single YAML configuration file. The toolkit supports basic, intermediate, and advanced usage scenarios, enabling users to run custom experiments, conduct ablation studies, and automate fine-tuning workflows. It provides features for data ingestion, model definition, training, inference, quality assurance, and artifact outputs, making it a comprehensive tool for fine-tuning large language models.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers

- Track LLM usage on a per user and per organization basis

- Block or redact requests containing PIIs

- Improve LLM reliability with failovers, retries and caching

- Distribute API keys with rate limits and cost limits for internal development/production use cases

- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.