voyant

Fact-Verified Travel AI Agent

Stars: 66

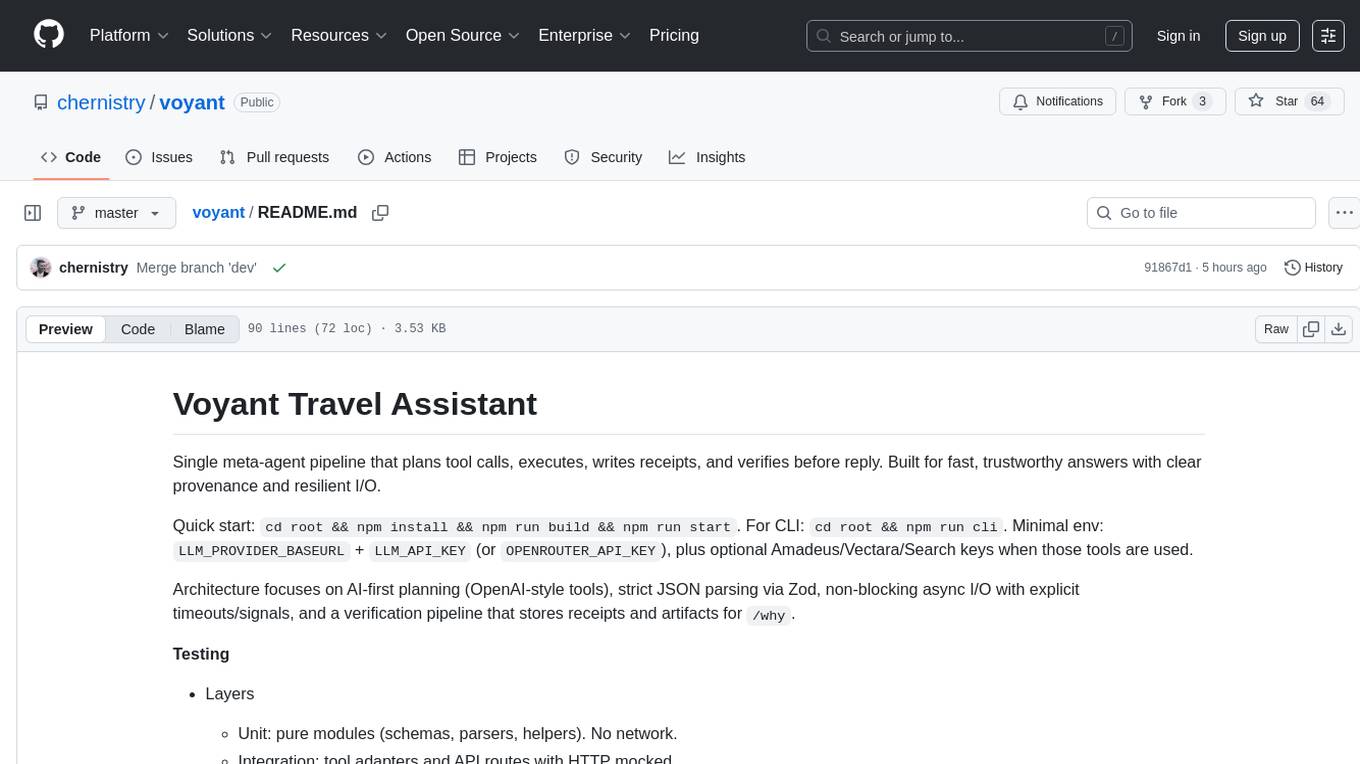

Voyant Travel Assistant is a meta-agent pipeline designed for fast, trustworthy answers with clear provenance and resilient I/O. It focuses on AI-first planning, strict JSON parsing, non-blocking async I/O, and verification pipelines. The tool analyzes user messages, plans tool calls, executes actions, and blends responses from various tools. It supports tools like weather, country information, attractions, travel search, flight information, and policy extraction. Users can interact with the tool through a CLI interface and benefit from its architecture that ensures reliable responses and observability through stored receipts and verification processes.

README:

Single meta‑agent pipeline that plans tool calls, executes, writes receipts, and verifies before reply. Built for fast, trustworthy answers with clear provenance and resilient I/O.

Quick start: cd root && npm install && npm run build && npm run start.

For CLI: cd root && npm run cli. Minimal env: LLM_PROVIDER_BASEURL + LLM_API_KEY (or OPENROUTER_API_KEY), plus optional Amadeus/Vectara/Search keys when those tools are used.
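The minimal environment can be checked up front before starting the server or CLI. The sketch below is illustrative only: `checkLlmEnv` and its result type are hypothetical names, not part of the project, and it assumes that either the `LLM_PROVIDER_BASEURL` + `LLM_API_KEY` pair or `OPENROUTER_API_KEY` alone is sufficient, with the provider pair taking precedence when both are set.

```typescript
// Hypothetical helper; only the environment variable names come from the README.
type Env = Record<string, string | undefined>;

type LlmEnvCheck =
  | { ok: true; source: "provider" | "openrouter" }
  | { ok: false; missing: string[] };

function checkLlmEnv(env: Env): LlmEnvCheck {
  // Treat unset, empty, and whitespace-only values as missing.
  const has = (name: string): boolean => (env[name] ?? "").trim().length > 0;

  // Assumption: the base URL and API key must be provided together.
  if (has("LLM_PROVIDER_BASEURL") && has("LLM_API_KEY")) {
    return { ok: true, source: "provider" };
  }
  // Assumption: an OpenRouter key is enough on its own.
  if (has("OPENROUTER_API_KEY")) {
    return { ok: true, source: "openrouter" };
  }
  // Report which half of the provider pair is still missing.
  const missing = ["LLM_PROVIDER_BASEURL", "LLM_API_KEY"].filter((n) => !has(n));
  return { ok: false, missing };
}
```

In a Node process this would typically be called as `checkLlmEnv(process.env)`, failing fast with the `missing` list when the result is not `ok`.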

Architecture focuses on AI‑first planning (OpenAI‑style tools), strict JSON parsing via Zod, non‑blocking async I/O with explicit timeouts/signals, and a verification pipeline that stores receipts and artifacts for /why.
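To illustrate what strict JSON parsing means for the planner output, here is a minimal sketch. The project uses Zod for this; to stay dependency-free, the sketch swaps in a hand-written type guard instead. Only the three top-level keys (`route`, `missing`, `calls`) come from the flowchart in this README; the shape of each call (`tool`, `args`) and the function name `parseControlPlan` are assumptions.

```typescript
// Assumed shape of the planner's CONTROL JSON (only route/missing/calls are from the README).
interface ToolCall {
  tool: string;
  args: Record<string, unknown>;
}

interface ControlPlan {
  route: string;
  missing: string[];
  calls: ToolCall[];
}

function isRecord(v: unknown): v is Record<string, unknown> {
  return typeof v === "object" && v !== null && !Array.isArray(v);
}

// Returns a typed plan, or null when the input is not valid, strictly shaped JSON.
function parseControlPlan(raw: string): ControlPlan | null {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    return null; // not JSON at all
  }
  if (!isRecord(data)) return null;

  // Strict mode: reject unknown top-level keys instead of silently ignoring them.
  const allowed = new Set(["route", "missing", "calls"]);
  if (Object.keys(data).some((k) => !allowed.has(k))) return null;

  const { route, missing, calls } = data;
  if (typeof route !== "string") return null;
  if (!Array.isArray(missing) || !missing.every((m) => typeof m === "string")) return null;
  if (!Array.isArray(calls)) return null;

  const parsedCalls: ToolCall[] = [];
  for (const c of calls) {
    if (!isRecord(c) || typeof c.tool !== "string" || !isRecord(c.args)) return null;
    parsedCalls.push({ tool: c.tool, args: c.args });
  }
  return { route, missing, calls: parsedCalls };
}
```

With Zod, the equivalent check would typically be a `z.object({...}).strict()` schema evaluated with `safeParse`, which returns a success flag rather than throwing.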

Testing

- Layers
  - Unit: pure modules (schemas, parsers, helpers). No network.
  - Integration: tool adapters and API routes with HTTP mocked.
  - Golden: real meta‑agent conversations that persist receipts, then call the verifier (verify.md) via an LLM pass‑through; assertions read the stored verification artifact (no re‑verify on /why).

- Commands
  - `cd root && npm run test:unit`
  - `cd root && npm run test:integration`
  - `cd root && VERIFY_LLM=1 AUTO_VERIFY_REPLIES=true npm run test:golden`

- Golden prerequisites
  - Provide LLM_PROVIDER_BASEURL + LLM_API_KEY or OPENROUTER_API_KEY.
  - Golden tests are skipped unless VERIFY_LLM=1.

Agent Decision Flow

flowchart TD

U["User message"] --> API["POST /chat\\nroot/src/api/routes.ts"]

API --> HANDLE["handleChat()\\nroot/src/core/blend.ts"]

HANDLE --> RUN["runMetaAgentTurn()\\nroot/src/agent/meta_agent.ts"]

subgraph MetaAgent["Meta Agent\\nAnalyze → Plan → Act → Blend"]

RUN --> LOAD["Load meta_agent.md\\nlog prompt hash/version"]

LOAD --> PLAN["Analyze + Plan (LLM)\\nCONTROL JSON route/missing/calls"]

PLAN --> ACT["chatWithToolsLLM()\\nexecute tool plan"]

ACT --> BLEND["Blend (LLM) grounded reply"]

subgraph Tools["Tools Registry\\nroot/src/agent/tools/index.ts"]

ACT --> T1["weather / getCountry / getAttractions"]

ACT --> T2["searchTravelInfo (Tavily/Brave)"]

ACT --> T3["vectaraQuery (RAG locator)"]

ACT --> T4["extractPolicyWithCrawlee / deepResearch"]

ACT --> T5["Amadeus resolveCity / airports / flights"]

end

BLEND --> RECEIPTS["setLastReceipts()\\nslot_memory.ts"]

end

RECEIPTS --> MET["observeStage / addMeta* metrics\\nutil/metrics.ts"]

MET --> AUTO{"AUTO_VERIFY_REPLIES=true?"}

AUTO -->|Yes| VERIFY["verifyAnswer()\\ncore/verify.ts\\nctx: getContext + slots + intent"]

VERIFY --> STORE["setLastVerification()\\nslot_memory.ts"]

VERIFY --> VERDICT{"verdict = fail & revised answer?"}

VERDICT -->|Yes| REPLACE["Use revised answer\\npushMessage(thread, revised)"]

VERDICT -->|No| FINAL["Return meta reply"]

STORE --> FINAL

AUTO -->|No| FINAL

REPLACE --> FINAL

FINAL --> RESP["ChatOutput → caller"]

RESP --> WHY["/why command\\nreads receipts + stored verification"]

Prompts Flow

flowchart TD

U["User message"] --> SYS["meta_agent.md<br/>System prompt"]

SYS --> PLAN["Planning request (LLM)<br/>CONTROL JSON route/missing/calls"]

PLAN --> ACT["chatWithToolsLLM<br/>Meta Agent execution"]

ACT --> BLEND["Blend instructions<br/>within meta_agent.md"]

BLEND --> RECEIPTS["Persist receipts\\nslot_memory.setLastReceipts"]

RECEIPTS --> VERQ{"Auto-verify or /why?"}

VERQ -->|Yes| VERIFY["verify.md<br/>STRICT JSON verdict"]

VERIFY --> OUT["Reply + receipts"]

VERQ -->|No| OUT

Docs: see docs/index.html for quick links to prompts/observability.
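The auto-verify branch of the Agent Decision Flow can be sketched as a small pure function. This is not the project's code: `finalizeReply`, `Verification`, `TurnResult`, and the `revisedAnswer` field are hypothetical names, the verifier is passed in as a synchronous callback for simplicity (the real `verifyAnswer()` calls an LLM and is presumably asynchronous), and `"fail"` is the only verdict value the flowchart names.

```typescript
// Hypothetical types; only the "fail" verdict and the idea of a revised answer come from the README.
interface Verification {
  verdict: string;        // other verdict values are not specified in the README
  revisedAnswer?: string; // assumed field name for the verifier's corrected reply
}

interface TurnResult {
  reply: string;               // what is returned to the caller
  verification?: Verification; // what would be stored for /why to read later
}

function finalizeReply(
  metaReply: string,
  autoVerify: boolean, // stands in for AUTO_VERIFY_REPLIES=true
  verify: (answer: string) => Verification,
): TurnResult {
  // Without auto-verify, the meta reply is returned untouched and nothing is stored.
  if (!autoVerify) return { reply: metaReply };

  const verification = verify(metaReply);
  const revised = verification.revisedAnswer?.trim();

  // Replace the reply only when the verdict is "fail" AND a non-empty revision exists.
  if (verification.verdict === "fail" && revised) {
    return { reply: revised, verification };
  }
  return { reply: metaReply, verification };
}
```

Keeping the verification on the result mirrors the flow's design: the verdict is stored once at reply time, so `/why` can read it back without running the verifier again.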

Alternative AI tools for voyant

Similar Open Source Tools

Acontext

Acontext is a context data platform designed for production AI agents, offering unified storage, built-in context management, and observability features. It helps agents scale from local demos to production without the need to rebuild context infrastructure. The platform provides solutions for challenges like scattered context data, long-running agents requiring context management, and tracking states from multi-modal agents. Acontext offers core features such as context storage, session management, disk storage, agent skills management, and sandbox for code execution and analysis. Users can connect to Acontext, install SDKs, initialize clients, store and retrieve messages, perform context engineering, and utilize agent storage tools. The platform also supports building agents using end-to-end scripts in Python and Typescript, with various templates available. Acontext's architecture includes client layer, backend with API and core components, infrastructure with PostgreSQL, S3, Redis, and RabbitMQ, and a web dashboard. Join the Acontext community on Discord and follow updates on GitHub.

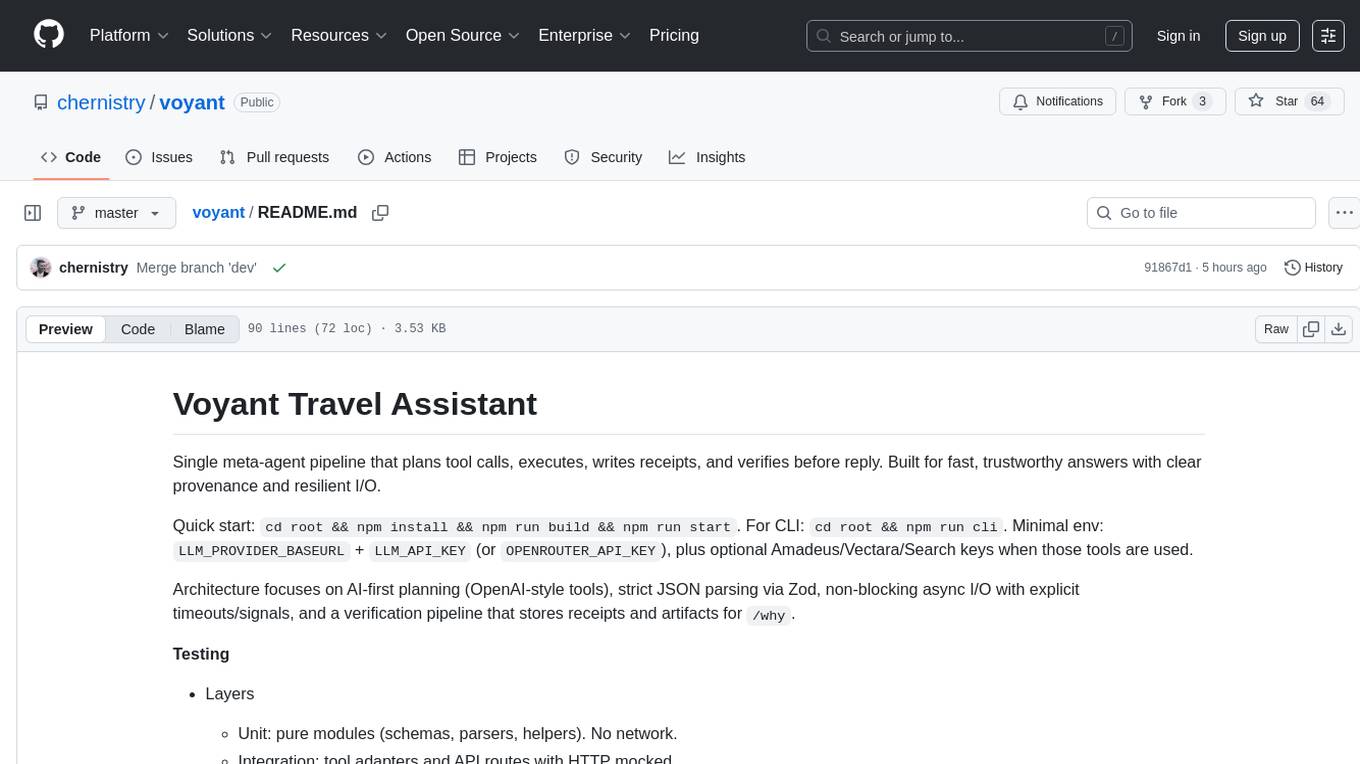

agentops

AgentOps is a toolkit for evaluating and developing robust and reliable AI agents. It provides benchmarks, observability, and replay analytics to help developers build better agents. AgentOps is in open beta. Key features include:

- Session replays in 3 lines of code: initialize the AgentOps client and automatically get analytics on every LLM call.
- Time travel debugging (coming soon).
- Agent Arena (coming soon).
- Callback handlers: AgentOps works seamlessly with applications built using Langchain and LlamaIndex.

LocalAGI

LocalAGI is a powerful, self-hostable AI Agent platform that allows you to design AI automations without writing code. It provides a complete drop-in replacement for OpenAI's Responses APIs with advanced agentic capabilities. With LocalAGI, you can create customizable AI assistants, automations, chat bots, and agents that run 100% locally, without the need for cloud services or API keys. The platform offers features like no-code agents, web-based interface, advanced agent teaming, connectors for various platforms, comprehensive REST API, short & long-term memory capabilities, planning & reasoning, periodic tasks scheduling, memory management, multimodal support, extensible custom actions, fully customizable models, observability, and more.

ai00_server

AI00 RWKV Server is an inference API server for the RWKV language model based upon the web-rwkv inference engine. It supports VULKAN parallel and concurrent batched inference and can run on all GPUs that support VULKAN. No need for Nvidia cards!!! AMD cards and even integrated graphics can be accelerated!!! No need for bulky pytorch, CUDA and other runtime environments, it's compact and ready to use out of the box! Compatible with OpenAI's ChatGPT API interface. 100% open source and commercially usable, under the MIT license. If you are looking for a fast, efficient, and easy-to-use LLM API server, then AI00 RWKV Server is your best choice. It can be used for various tasks, including chatbots, text generation, translation, and Q&A.

logicstamp-context

LogicStamp Context is a static analyzer that extracts deterministic component contracts from TypeScript codebases, providing structured architectural context for AI coding assistants. It helps AI assistants understand architecture by extracting props, hooks, and dependencies without implementation noise. The tool works with React, Next.js, Vue, Express, and NestJS, and is compatible with various AI assistants like Claude, Cursor, and MCP agents. It offers features like watch mode for real-time updates, breaking change detection, and dependency graph creation. LogicStamp Context is a security-first tool that protects sensitive data, runs locally, and is non-opinionated about architectural decisions.

lingo.dev

Replexica AI automates software localization end-to-end, producing authentic translations instantly across 60+ languages. Teams can do localization 100x faster with state-of-the-art quality, reaching more paying customers worldwide. The tool offers a GitHub Action for CI/CD automation and supports various formats like JSON, YAML, CSV, and Markdown. With lightning-fast AI localization, auto-updates, native quality translations, developer-friendly CLI, and scalability for startups and enterprise teams, Replexica is a top choice for efficient and effective software localization.

agentic-rag-for-dummies

Agentic RAG for Dummies is a production-ready system that demonstrates how to build an Agentic RAG (Retrieval-Augmented Generation) system using LangGraph with minimal code. It bridges the gap between basic RAG tutorials and production readiness by providing learning materials and deployable code. The system includes features like conversation memory, hierarchical indexing, query clarification, agent orchestration, multi-agent map-reduce, self-correction, and context compression. Users can interact with the system through an interactive notebook for learning or a modular project for production-ready architecture.

sonarqube-mcp-server

The SonarQube MCP Server is a Model Context Protocol (MCP) server that enables seamless integration with SonarQube Server or Cloud for code quality and security. It supports the analysis of code snippets directly within the agent context. The server provides various tools for analyzing code, managing issues, accessing metrics, and interacting with SonarQube projects. It also supports advanced features like dependency risk analysis, enterprise portfolio management, and system health checks. The server can be configured for different transport modes, proxy settings, and custom certificates. Telemetry data collection can be disabled if needed.

volga

Volga is a general purpose real-time data processing engine in Python for modern AI/ML systems. It aims to be a Python-native alternative to Flink/Spark Streaming with extended functionality for real-time AI/ML workloads. It provides a hybrid push+pull architecture, Entity API for defining data entities and feature pipelines, DataStream API for general data processing, and customizable data connectors. Volga can run on a laptop or a distributed cluster, making it suitable for building custom real-time AI/ML feature platforms or general data pipelines without relying on third-party platforms.

mcphub.nvim

MCPHub.nvim is a powerful Neovim plugin that integrates MCP (Model Context Protocol) servers into your workflow. It offers a centralized config file for managing servers and tools, with an intuitive UI for testing resources. Ideal for LLM integration, it provides programmatic API access and interactive testing through the `:MCPHub` command.

genai-toolbox

Gen AI Toolbox for Databases is an open source server that simplifies building Gen AI tools for interacting with databases. It handles complexities like connection pooling, authentication, and more, enabling easier, faster, and more secure tool development. The toolbox sits between the application's orchestration framework and the database, providing a control plane to modify, distribute, or invoke tools. It offers simplified development, better performance, enhanced security, and end-to-end observability. Users can install the toolbox as a binary, container image, or compile from source. Configuration is done through a 'tools.yaml' file, defining sources, tools, and toolsets. The project follows semantic versioning and welcomes contributions.

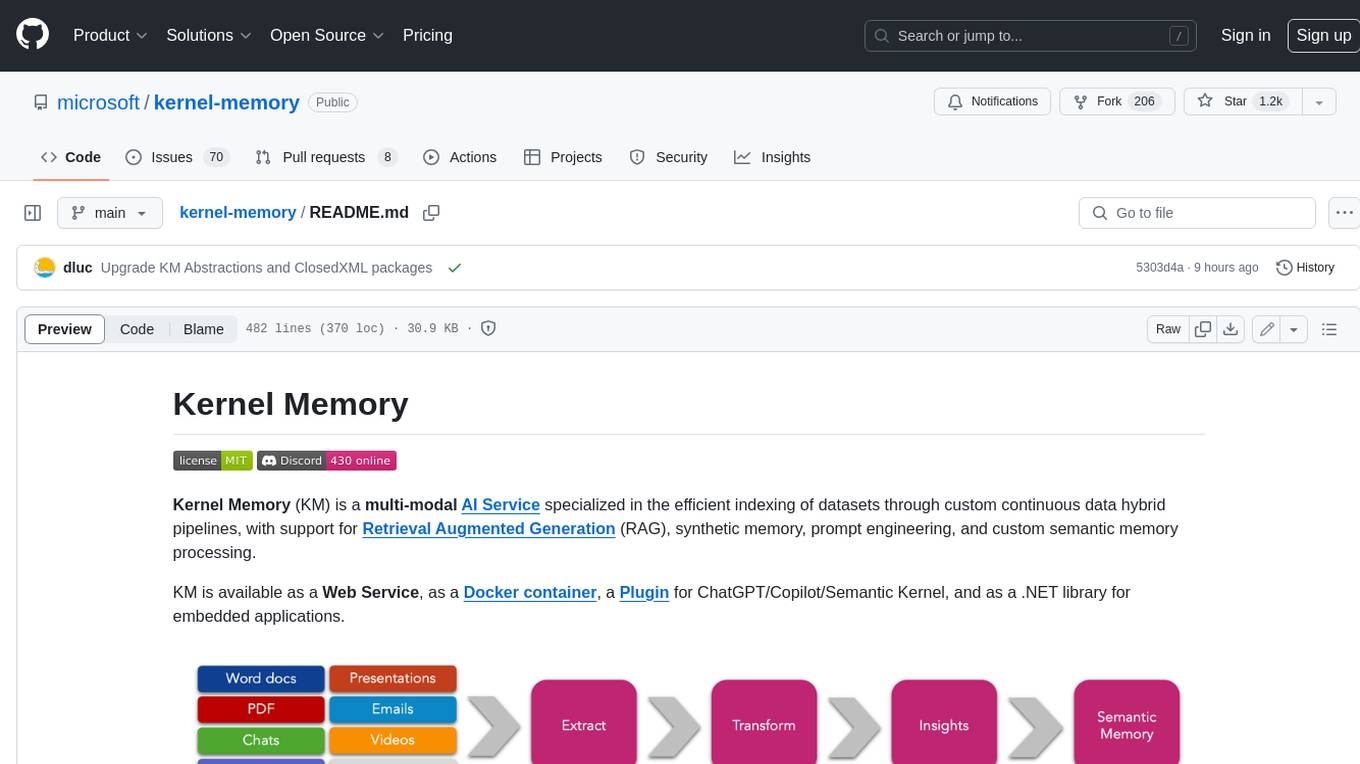

kernel-memory

Kernel Memory (KM) is a multi-modal AI Service specialized in the efficient indexing of datasets through custom continuous data hybrid pipelines, with support for Retrieval Augmented Generation (RAG), synthetic memory, prompt engineering, and custom semantic memory processing. KM is available as a Web Service, as a Docker container, a Plugin for ChatGPT/Copilot/Semantic Kernel, and as a .NET library for embedded applications. Utilizing advanced embeddings and LLMs, the system enables Natural Language querying for obtaining answers from the indexed data, complete with citations and links to the original sources. Designed for seamless integration as a Plugin with Semantic Kernel, Microsoft Copilot and ChatGPT, Kernel Memory enhances data-driven features in applications built for most popular AI platforms.

open-edison

OpenEdison is a secure MCP control panel that connects AI to data/software with additional security controls to reduce data exfiltration risks. It helps address the lethal trifecta problem by providing visibility, monitoring potential threats, and alerting on data interactions. The tool offers features like data leak monitoring, controlled execution, easy configuration, visibility into agent interactions, a simple API, and Docker support. It integrates with LangGraph, LangChain, and plain Python agents for observability and policy enforcement. OpenEdison provides observability, control, and policy enforcement for AI interactions with systems of record, existing company software, and data, reducing the risk of AI-caused data leakage.

zeroclaw

ZeroClaw is a fast, small, and fully autonomous AI assistant infrastructure built with Rust. It features a lean runtime, cost-efficient deployment, fast cold starts, and a portable architecture. It is secure by design, fully swappable, and supports OpenAI-compatible providers. The tool is designed for low-cost boards and small cloud instances, with a memory footprint of less than 5MB. It is suitable for deploying AI assistants and for setups where providers, channels, and tools need to be swapped or plugged in.

matchlock

Matchlock is a CLI tool designed for running AI agents in isolated and disposable microVMs with network allowlisting and secret injection capabilities. It ensures that your secrets never enter the VM, providing a secure environment for AI agents to execute code without risking access to your machine. The tool offers features such as sealing the network to only allow traffic to specified hosts, injecting real credentials in-flight by the host, and providing a full Linux environment for the agent's operations while maintaining isolation from the host machine. Matchlock supports quick booting of Linux environments, sandbox lifecycle management, image building, and SDKs for Go and Python for embedding sandboxes in applications.

For similar tasks

tribe

Tribe AI is a low code tool designed to rapidly build and coordinate multi-agent teams. It leverages the langgraph framework to customize and coordinate teams of agents, allowing tasks to be split among agents with different strengths for faster and better problem-solving. The tool supports persistent conversations, observability, tool calling, human-in-the-loop functionality, easy deployment with Docker, and multi-tenancy for managing multiple users and teams.

crewAI

CrewAI is a cutting-edge framework designed to orchestrate role-playing autonomous AI agents. By fostering collaborative intelligence, CrewAI empowers agents to work together seamlessly, tackling complex tasks. It enables AI agents to assume roles, share goals, and operate in a cohesive unit, much like a well-oiled crew. Whether you're building a smart assistant platform, an automated customer service ensemble, or a multi-agent research team, CrewAI provides the backbone for sophisticated multi-agent interactions. With features like role-based agent design, autonomous inter-agent delegation, flexible task management, and support for various LLMs, CrewAI offers a dynamic and adaptable solution for both development and production workflows.

aip-community-registry

AIP Community Registry is a collection of community-built applications and projects leveraging Palantir's AIP Platform. It showcases real-world implementations from developers using AIP in production. The registry features various solutions demonstrating practical implementations and integration patterns across different use cases.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use case. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers
- Track LLM usage on a per user and per organization basis
- Block or redact requests containing PII
- Improve LLM reliability with failovers, retries and caching
- Distribute API keys with rate limits and cost limits for internal development/production use cases
- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.