evalplus

Rigorous evaluation of LLM-synthesized code - NeurIPS 2023 & COLM 2024


EvalPlus is a rigorous evaluation framework for LLM4Code, providing HumanEval+ and MBPP+ tests to evaluate large language models on code generation tasks. It offers precise evaluation and ranking, analysis of coding rigorousness, and pre-generated code samples. Users can use EvalPlus to generate code solutions, post-process them, and evaluate their quality, with tooling for both code generation and test input generation across various backends.



Who's using EvalPlus datasets? EvalPlus has been used by various LLM teams, including:

- Meta Llama 3.1 and 3.3

- Allen AI TÜLU 1/2/3

- Qwen2.5-Coder

- CodeQwen 1.5

- DeepSeek-Coder V2

- Qwen2

- Snowflake Arctic

- StarCoder2

- Magicoder

- WizardCoder

Notable updates to EvalPlus:

- [2024-10-20 v0.3.1]: EvalPlus v0.3.1 is officially released! Highlights: (i) code efficiency evaluation via EvalPerf, (ii) one command to run everything: generation + post-processing + evaluation, (iii) support for more inference backends, such as Google Gemini and Anthropic.

- [2024-06-09 pre v0.3.0]: Improved ground-truth solutions for MBPP+ tasks (IDs: 459, 102, 559). Thanks to EvalArena.

- [2024-04-17 pre v0.3.0]: MBPP+ is upgraded to v0.2.0 by removing some broken tasks (399 -> 378 tasks), so a ~4pp pass@1 improvement can be expected.

Earlier news:

- (v0.2.1) You can use EvalPlus datasets via bigcode-evaluation-harness! HumanEval+ oracle fixes (32).

- (v0.2.0) MBPP+ is released! HumanEval contract & input fixes (0/3/9/148/114/1/2/99/28/32/35/160).

- (v0.1.7) Leaderboard release; HumanEval+ contract and input fixes (32/166/126/6).

- (v0.1.6) Configurable and conservative-by-default timeout settings; HumanEval+ contract & ground-truth fixes (129/148/75/53/0/3/9/140).

- (v0.1.5) HumanEval+ mini is released for ultra-fast evaluation when you have too many samples!

- (v0.1.1) Improved user experience: evaluation speed, PyPI package, Docker, etc.

- (v0.1.0) HumanEval+ is released!
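Several of the updates above are stated in terms of pass@1. For reference, here is a minimal Python sketch of the unbiased pass@k estimator introduced with the original HumanEval benchmark (Chen et al., 2021), assuming EvalPlus follows that standard formulation; `pass_at_k` and `mean_pass_at_k` are our own names, not EvalPlus APIs.

```python
from math import comb
from typing import List, Tuple


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for a single task.

    n: number of samples generated for the task
    c: number of those samples that pass all tests
    k: number of samples a user is allowed to try
    """
    if not 0 <= c <= n:
        raise ValueError("need 0 <= c <= n")
    if not 1 <= k <= n:
        raise ValueError("need 1 <= k <= n")
    if n - c < k:
        # Every size-k subset of the samples contains a correct one.
        return 1.0
    # 1 - P(all k samples drawn without replacement are incorrect)
    return 1.0 - comb(n - c, k) / comb(n, k)


def mean_pass_at_k(results: List[Tuple[int, int]], k: int) -> float:
    """Average pass@k over tasks, given (n, c) for each task."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)


if __name__ == "__main__":
    # 10 samples, 3 correct: pass@1 is 3/10.
    print(pass_at_k(10, 3, 1))
```

With greedy decoding there is one sample per task (n = 1), so pass@1 reduces to the fraction of tasks whose single sample passes every test.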

EvalPlus is a rigorous evaluation framework for LLM4Code, with:

- ✨ HumanEval+: 80x more tests than the original HumanEval!

- ✨ MBPP+: 35x more tests than the original MBPP!

- ✨ EvalPerf: evaluating the efficiency of LLM-generated code!

- ✨ Framework: our packages, images, and tools can easily and safely evaluate LLMs on the above benchmarks.
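The extra tests matter because a solution is only counted as correct when it behaves like the ground truth on every input. The toy Python example below is our own illustration, not the EvalPlus test harness: a fragile `is_prime` passes a small hand-written test set but is exposed once edge-case inputs are added, which is the kind of gap HumanEval+ and MBPP+ aim to close.

```python
from typing import Callable, Iterable, List


def reference_is_prime(n: int) -> bool:
    """Ground-truth oracle using trial division."""
    if n < 2:
        return False
    i = 2
    while i * i <= n:
        if n % i == 0:
            return False
        i += 1
    return True


def candidate_is_prime(n: int) -> bool:
    """A plausible but fragile generated solution: it forgets that
    numbers below 2 are not prime."""
    for i in range(2, n):
        if n % i == 0:
            return False
    return True


def failing_inputs(candidate: Callable[[int], bool],
                   oracle: Callable[[int], bool],
                   inputs: Iterable[int]) -> List[int]:
    """Inputs on which the candidate disagrees with the oracle."""
    return [x for x in inputs if candidate(x) != oracle(x)]


base_inputs = [2, 3, 4, 9, 13]                      # small hand-written test set
extra_inputs = base_inputs + [0, 1, -7, 97, 100]    # added edge cases

print(failing_inputs(candidate_is_prime, reference_is_prime, base_inputs))   # []
print(failing_inputs(candidate_is_prime, reference_is_prime, extra_inputs))  # [0, 1, -7]
```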

Why EvalPlus?

- ✨ Precise evaluation: See our leaderboard for the latest LLM rankings before and after rigorous evaluation.

- ✨ Coding rigorousness: Look at the score differences, especially before and after using EvalPlus tests! A smaller drop means more rigorous code generation, while a bigger drop means the generated code tends to be fragile.

- ✨ Code efficiency: Beyond correctness, our EvalPerf dataset evaluates the efficiency of LLM-generated code via performance-exercising coding tasks and test inputs.
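To make the "smaller drop means more rigor" reading concrete, the Python sketch below compares pass@1 on the original tests with pass@1 on the EvalPlus tests. The model names and scores are hypothetical, and it assumes the EvalPlus tests are a superset of the original ones, so the plus score can never exceed the base score.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScoreDrop:
    absolute_pp: float  # percentage points lost once EvalPlus tests are added
    relative: float     # fraction of the original score that was lost


def score_drop(base_pass1: float, plus_pass1: float) -> ScoreDrop:
    """Compare pass@1 (in %) on the original tests with pass@1 on the
    extended tests for the same set of samples."""
    if plus_pass1 > base_pass1:
        # Assumption: extra tests can only reject more solutions, never accept more.
        raise ValueError("plus score should not exceed base score")
    absolute = base_pass1 - plus_pass1
    relative = absolute / base_pass1 if base_pass1 > 0 else 0.0
    return ScoreDrop(absolute, relative)


# Hypothetical (base, plus) pass@1 scores; not real leaderboard numbers.
scores = {"model-A": (80.0, 76.0), "model-B": (80.0, 68.0)}

# Smallest drop first: by the reasoning above, model-A's code is less fragile.
ranking = sorted(scores, key=lambda m: score_drop(*scores[m]).absolute_pp)
print(ranking)  # ['model-A', 'model-B']
```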

Want to know more details? Read our papers & materials!

- EvalPlus: NeurIPS'23 paper, Slides, Poster, Leaderboard

- EvalPerf: COLM'24 paper, Poster, Documentation, Leaderboard

```bash
pip install --upgrade "evalplus[vllm] @ git+https://github.com/evalplus/evalplus"
# Or `pip install "evalplus[vllm]" --upgrade` for the latest stable release

evalplus.evaluate --model "ise-uiuc/Magicoder-S-DS-6.7B" \
                  --dataset [humaneval|mbpp] \
                  --backend vllm \
                  --greedy
```

🛡️ Safe code execution within Docker:

```bash
# Local generation
evalplus.codegen --model "ise-uiuc/Magicoder-S-DS-6.7B" \
                 --dataset humaneval \
                 --backend vllm \
                 --greedy

# Code execution within Docker
docker run --rm --pull=always -v $(pwd)/evalplus_results:/app ganler/evalplus:latest \
           evalplus.evaluate --dataset humaneval \
           --samples /app/humaneval/ise-uiuc--Magicoder-S-DS-6.7B_vllm_temp_0.0.jsonl
```

To evaluate code efficiency with EvalPerf:

```bash
pip install --upgrade "evalplus[perf,vllm] @ git+https://github.com/evalplus/evalplus"
# Or `pip install "evalplus[perf,vllm]" --upgrade` for the latest stable release

sudo sh -c 'echo 0 > /proc/sys/kernel/perf_event_paranoid' # Enable perf
evalplus.evalperf --model "ise-uiuc/Magicoder-S-DS-6.7B" --backend vllm
```

🛡️ Safe code execution within Docker:

```bash
# Local generation
evalplus.codegen --model "ise-uiuc/Magicoder-S-DS-6.7B" \
                 --dataset evalperf \
                 --backend vllm \
                 --temperature 1.0 \
                 --n-samples 100

# Code execution within Docker
sudo sh -c 'echo 0 > /proc/sys/kernel/perf_event_paranoid' # Enable perf
docker run --cap-add PERFMON --rm --pull=always -v $(pwd)/evalplus_results:/app ganler/evalplus:latest \
           evalplus.evalperf --samples /app/evalperf/ise-uiuc--Magicoder-S-DS-6.7B_vllm_temp_1.0.jsonl
```
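EvalPerf relies on Linux performance counters, which is why both workflows above lower `perf_event_paranoid` to 0 (and the Docker variant adds `--cap-add PERFMON`). A pre-flight check like the Python sketch below could catch a missing setting before a long sampling run; the helper names are hypothetical, and treating "0 or lower" as sufficient is an assumption based on the command above.

```python
from pathlib import Path
from typing import Optional

# Linux-only sysctl that the commands above set to 0.
PARANOID_PATH = Path("/proc/sys/kernel/perf_event_paranoid")


def parse_paranoid(text: str) -> Optional[int]:
    """Parse the sysctl file contents (e.g. "2\\n"); None if malformed."""
    try:
        return int(text.strip())
    except ValueError:
        return None


def read_paranoid(path: Path = PARANOID_PATH) -> Optional[int]:
    """Current perf_event_paranoid level, or None if the file is unavailable
    (for example, when not running on Linux)."""
    try:
        return parse_paranoid(path.read_text())
    except OSError:
        return None


def perf_ready(level: Optional[int], required_max: int = 0) -> bool:
    """Assumed requirement: level <= 0, as set by `echo 0 > ...` above."""
    return level is not None and level <= required_max


if __name__ == "__main__":
    level = read_paranoid()
    if perf_ready(level):
        print(f"perf_event_paranoid={level}: looks ready for EvalPerf")
    else:
        print(f"perf_event_paranoid={level}: consider running "
              "sudo sh -c 'echo 0 > /proc/sys/kernel/perf_event_paranoid'")
```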

- `transformers` backend:

```bash
evalplus.evaluate --model "ise-uiuc/Magicoder-S-DS-6.7B" \
                  --dataset [humaneval|mbpp] \
                  --backend hf \
                  --greedy
```

> [!Note]
>
> EvalPlus uses different prompts for base and chat models. By default, the mode is detected via `tokenizer.chat_template` when using `hf`/`vllm` as the backend. For other backends, only chat mode is allowed.
>
> Therefore, if your base models come with a `tokenizer.chat_template`, please add `--force-base-prompt` to avoid being evaluated in chat mode.
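The prompt-mode rule in the note can be restated as a small decision function. This Python sketch only illustrates the documented behavior and is not EvalPlus code; `select_prompt_mode` is a hypothetical name, and raising an error when `--force-base-prompt` is combined with a chat-only backend is our assumption.

```python
from typing import Optional

# Per the note, auto-detection only applies to these backends.
AUTO_DETECT_BACKENDS = {"hf", "vllm"}


def select_prompt_mode(backend: str, chat_template: Optional[str],
                       force_base_prompt: bool = False) -> str:
    """Return "base" or "chat" following the rule described in the note."""
    if backend not in AUTO_DETECT_BACKENDS:
        # Other backends (openai, anthropic, google, ...) are chat-only.
        if force_base_prompt:
            # Assumption: treat this combination as a configuration error.
            raise ValueError(f"backend {backend!r} only supports chat mode")
        return "chat"
    if force_base_prompt:
        # --force-base-prompt overrides a chat template shipped with a base model.
        return "base"
    # A non-empty tokenizer.chat_template signals a chat model.
    return "chat" if chat_template else "base"


if __name__ == "__main__":
    print(select_prompt_mode("vllm", chat_template=None))           # base
    print(select_prompt_mode("hf", chat_template="{{ messages }}"))  # chat
```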

Enable Flash Attention 2:

```bash
# Install Flash Attention 2
pip install packaging ninja
pip install flash-attn --no-build-isolation
# Note: if you have installation problems, consider using pre-built
# wheels from https://github.com/Dao-AILab/flash-attention/releases

# Run evaluation with FA2
evalplus.evaluate --model "ise-uiuc/Magicoder-S-DS-6.7B" \
                  --dataset [humaneval|mbpp] \
                  --backend hf \
                  --attn-implementation [flash_attention_2|sdpa] \
                  --greedy
```
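If you script evaluations across machines, you may want to choose the `--attn-implementation` value automatically. The Python sketch below, with hypothetical helper names, prefers `flash_attention_2` when the `flash_attn` package is importable and otherwise falls back to `sdpa`; an importable package does not guarantee that your GPU supports FlashAttention 2.

```python
import importlib.util
import shlex
from typing import Callable, List, Optional


def pick_attn_implementation(
    find_spec: Callable[[str], Optional[object]] = importlib.util.find_spec,
) -> str:
    """Return "flash_attention_2" if `flash_attn` is importable, else "sdpa"."""
    return "flash_attention_2" if find_spec("flash_attn") is not None else "sdpa"


def build_evaluate_cmd(model: str, dataset: str, attn: str) -> List[str]:
    """Argument list mirroring the FA2 command above (hf backend, greedy)."""
    return [
        "evalplus.evaluate",
        "--model", model,
        "--dataset", dataset,
        "--backend", "hf",
        "--attn-implementation", attn,
        "--greedy",
    ]


if __name__ == "__main__":
    cmd = build_evaluate_cmd("ise-uiuc/Magicoder-S-DS-6.7B", "humaneval",
                             pick_attn_implementation())
    # Print a shell-ready command; pass `cmd` to subprocess.run to execute it.
    print(shlex.join(cmd))
```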

- `vllm` backend:

```bash
evalplus.evaluate --model "ise-uiuc/Magicoder-S-DS-6.7B" \
                  --dataset [humaneval|mbpp] \
                  --backend vllm \
                  --tp [TENSOR_PARALLEL_SIZE] \
                  --greedy
```
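For `--tp`, vLLM generally requires the model's attention-head count to be divisible by the tensor-parallel size. The Python sketch below (a hypothetical helper, not part of EvalPlus) picks the largest valid size that fits the available GPUs; the head count would typically come from `num_attention_heads` in the model's Hugging Face `config.json`.

```python
def pick_tensor_parallel_size(num_gpus: int, num_attention_heads: int) -> int:
    """Largest tp <= num_gpus that evenly divides num_attention_heads."""
    if num_gpus < 1 or num_attention_heads < 1:
        raise ValueError("need at least one GPU and one attention head")
    # Try the biggest candidates first; tp = 1 always divides, so the loop returns.
    for tp in range(min(num_gpus, num_attention_heads), 0, -1):
        if num_attention_heads % tp == 0:
            return tp
    raise AssertionError("unreachable: tp = 1 always divides")


if __name__ == "__main__":
    # 6 GPUs and a model with 32 attention heads -> use --tp 4
    print(pick_tensor_parallel_size(6, 32))
```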

- `openai` compatible servers (e.g., vLLM):

```bash
# OpenAI models
export OPENAI_API_KEY="{KEY}" # https://platform.openai.com/settings/organization/api-keys
evalplus.evaluate --model "gpt-4o-2024-08-06" \
                  --dataset [humaneval|mbpp] \
                  --backend openai --greedy

# DeepSeek
export OPENAI_API_KEY="{KEY}" # https://platform.deepseek.com/api_keys
evalplus.evaluate --model "deepseek-chat" \
                  --dataset [humaneval|mbpp] \
                  --base-url https://api.deepseek.com \
                  --backend openai --greedy

# Grok
export OPENAI_API_KEY="{KEY}" # https://console.x.ai/
evalplus.evaluate --model "grok-beta" \
                  --dataset [humaneval|mbpp] \
                  --base-url https://api.x.ai/v1 \
                  --backend openai --greedy

# vLLM server
# First, launch a vLLM server: https://docs.vllm.ai/en/latest/serving/deploying_with_docker.html
evalplus.evaluate --model "ise-uiuc/Magicoder-S-DS-6.7B" \
                  --dataset [humaneval|mbpp] \
                  --base-url http://localhost:8000/v1 \
                  --backend openai --greedy

# GPTQModel
evalplus.evaluate --model "ModelCloud/Llama-3.2-1B-Instruct-gptqmodel-4bit-vortex-v1" \
                  --dataset [humaneval|mbpp] \
                  --backend gptqmodel --greedy
```

- Access OpenAI APIs from OpenAI Console:

```bash
export OPENAI_API_KEY="[YOUR_API_KEY]"
evalplus.evaluate --model "gpt-4o" \
                  --dataset [humaneval|mbpp] \
                  --backend openai \
                  --greedy
```

- Access Anthropic APIs from Anthropic Console:

```bash
export ANTHROPIC_API_KEY="[YOUR_API_KEY]"
evalplus.evaluate --model "claude-3-haiku-20240307" \
                  --dataset [humaneval|mbpp] \
                  --backend anthropic \
                  --greedy
```

- Access Gemini APIs from Google AI Studio:

```bash
export GOOGLE_API_KEY="[YOUR_API_KEY]"
evalplus.evaluate --model "gemini-1.5-pro" \
                  --dataset [humaneval|mbpp] \
                  --backend google \
                  --greedy
```

- Access Amazon Bedrock APIs:

```bash
export BEDROCK_ROLE_ARN="[BEDROCK_ROLE_ARN]"
evalplus.evaluate --model "anthropic.claude-3-5-sonnet-20241022-v2:0" \
                  --dataset [humaneval|mbpp] \
                  --backend bedrock \
                  --greedy
```

You can check out the generations and results at `evalplus_results/[humaneval|mbpp]/`.
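Judging from the two example paths in the Docker commands (`humaneval/ise-uiuc--Magicoder-S-DS-6.7B_vllm_temp_0.0.jsonl` for a `--greedy` run and `evalperf/..._vllm_temp_1.0.jsonl` for `--temperature 1.0`), each results file appears to be named after the model with `/` replaced by `--`, followed by the backend and sampling temperature. The Python sketch below encodes that inferred pattern and shows reading and writing JSON Lines sample files. The helper names are hypothetical, the pattern may not hold for every backend or version, and the `task_id`/`solution` record fields are our assumption about the sample format, so check the EvalPlus documentation before relying on them.

```python
import json
from pathlib import Path
from typing import Iterable, Iterator


def default_samples_path(root: str, dataset: str, model: str,
                         backend: str, temperature: float) -> Path:
    """Inferred pattern: <root>/<dataset>/<org>--<name>_<backend>_temp_<t>.jsonl"""
    stem = f"{model.replace('/', '--')}_{backend}_temp_{float(temperature)}"
    return Path(root) / dataset / f"{stem}.jsonl"


def write_samples(path: Path, samples: Iterable[dict]) -> None:
    """Write one JSON object per line (JSON Lines)."""
    path.parent.mkdir(parents=True, exist_ok=True)
    with path.open("w", encoding="utf-8") as f:
        for sample in samples:
            f.write(json.dumps(sample) + "\n")


def read_samples(path: Path) -> Iterator[dict]:
    """Yield one dict per non-empty line."""
    with path.open(encoding="utf-8") as f:
        for line in f:
            if line.strip():
                yield json.loads(line)


if __name__ == "__main__":
    import tempfile

    with tempfile.TemporaryDirectory() as tmp:
        path = default_samples_path(tmp, "humaneval",
                                    "ise-uiuc/Magicoder-S-DS-6.7B", "vllm", 0.0)
        # Hypothetical record: the "task_id"/"solution" field names are assumed.
        write_samples(path, [{"task_id": "HumanEval/0",
                              "solution": "def f():\n    return 1\n"}])
        print(path.name)
        print(list(read_samples(path)))
```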

⏬ Using EvalPlus as a local repo?

```bash
git clone https://github.com/evalplus/evalplus.git
cd evalplus
export PYTHONPATH=$PYTHONPATH:$(pwd)
pip install -r requirements.txt
```

To learn more about how to use EvalPlus, please refer to the documentation.

📜 Citation

```bibtex
@inproceedings{evalplus,
  title = {Is Your Code Generated by Chat{GPT} Really Correct? Rigorous Evaluation of Large Language Models for Code Generation},
  author = {Liu, Jiawei and Xia, Chunqiu Steven and Wang, Yuyao and Zhang, Lingming},
  booktitle = {Thirty-seventh Conference on Neural Information Processing Systems},
  year = {2023},
  url = {https://openreview.net/forum?id=1qvx610Cu7},
}

@inproceedings{evalperf,
  title = {Evaluating Language Models for Efficient Code Generation},
  author = {Liu, Jiawei and Xie, Songrun and Wang, Junhao and Wei, Yuxiang and Ding, Yifeng and Zhang, Lingming},
  booktitle = {First Conference on Language Modeling},
  year = {2024},
  url = {https://openreview.net/forum?id=IBCBMeAhmC},
}
```

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for evalplus

Similar Open Source Tools

evalplus

EvalPlus is a rigorous evaluation framework for LLM4Code, providing HumanEval+ and MBPP+ tests to evaluate large language models on code generation tasks. It offers precise evaluation and ranking, coding rigorousness analysis, and pre-generated code samples. Users can use EvalPlus to generate code solutions, post-process code, and evaluate code quality. The tool includes tools for code generation and test input generation using various backends.

openlrc

Open-Lyrics is a Python library that transcribes voice files using faster-whisper and translates/polishes the resulting text into `.lrc` files in the desired language using LLM, e.g. OpenAI-GPT, Anthropic-Claude. It offers well preprocessed audio to reduce hallucination and context-aware translation to improve translation quality. Users can install the library from PyPI or GitHub and follow the installation steps to set up the environment. The tool supports GUI usage and provides Python code examples for transcription and translation tasks. It also includes features like utilizing context and glossary for translation enhancement, pricing information for different models, and a list of todo tasks for future improvements.

ai00_server

AI00 RWKV Server is an inference API server for the RWKV language model based upon the web-rwkv inference engine. It supports VULKAN parallel and concurrent batched inference and can run on all GPUs that support VULKAN. No need for Nvidia cards!!! AMD cards and even integrated graphics can be accelerated!!! No need for bulky pytorch, CUDA and other runtime environments, it's compact and ready to use out of the box! Compatible with OpenAI's ChatGPT API interface. 100% open source and commercially usable, under the MIT license. If you are looking for a fast, efficient, and easy-to-use LLM API server, then AI00 RWKV Server is your best choice. It can be used for various tasks, including chatbots, text generation, translation, and Q&A.

zeroclaw

ZeroClaw is a fast, small, and fully autonomous AI assistant infrastructure built with Rust. It features a lean runtime, cost-efficient deployment, fast cold starts, and a portable architecture. It is secure by design, fully swappable, and supports OpenAI-compatible provider support. The tool is designed for low-cost boards and small cloud instances, with a memory footprint of less than 5MB. It is suitable for tasks like deploying AI assistants, swapping providers/channels/tools, and pluggable everything.

lingo.dev

Replexica AI automates software localization end-to-end, producing authentic translations instantly across 60+ languages. Teams can do localization 100x faster with state-of-the-art quality, reaching more paying customers worldwide. The tool offers a GitHub Action for CI/CD automation and supports various formats like JSON, YAML, CSV, and Markdown. With lightning-fast AI localization, auto-updates, native quality translations, developer-friendly CLI, and scalability for startups and enterprise teams, Replexica is a top choice for efficient and effective software localization.

llm_model_hub

Model Hub V2 is a one-stop platform for model fine-tuning, deployment, and debugging without code, providing users with a visual interface to quickly validate the effects of fine-tuning various open-source models, facilitating rapid experimentation and decision-making, and lowering the threshold for users to fine-tune large models. For detailed instructions, please refer to the Feishu documentation.

auto-round

AutoRound is an advanced weight-only quantization algorithm for low-bits LLM inference. It competes impressively against recent methods without introducing any additional inference overhead. The method adopts sign gradient descent to fine-tune rounding values and minmax values of weights in just 200 steps, often significantly outperforming SignRound with the cost of more tuning time for quantization. AutoRound is tailored for a wide range of models and consistently delivers noticeable improvements.

Groq2API

Groq2API is a REST API wrapper around the Groq2 model, a large language model trained by Google. The API allows you to send text prompts to the model and receive generated text responses. The API is easy to use and can be integrated into a variety of applications.

tokscale

Tokscale is a high-performance CLI tool and visualization dashboard for tracking token usage and costs across multiple AI coding agents. It helps monitor and analyze token consumption from various AI coding tools, providing real-time pricing calculations using LiteLLM's pricing data. Inspired by the Kardashev scale, Tokscale measures token consumption as users scale the ranks of AI-augmented development. It offers interactive TUI mode, multi-platform support, real-time pricing, detailed breakdowns, web visualization, flexible filtering, and social platform features.

LocalAGI

LocalAGI is a powerful, self-hostable AI Agent platform that allows you to design AI automations without writing code. It provides a complete drop-in replacement for OpenAI's Responses APIs with advanced agentic capabilities. With LocalAGI, you can create customizable AI assistants, automations, chat bots, and agents that run 100% locally, without the need for cloud services or API keys. The platform offers features like no-code agents, web-based interface, advanced agent teaming, connectors for various platforms, comprehensive REST API, short & long-term memory capabilities, planning & reasoning, periodic tasks scheduling, memory management, multimodal support, extensible custom actions, fully customizable models, observability, and more.

agentops

AgentOps is a toolkit for evaluating and developing robust and reliable AI agents. It provides benchmarks, observability, and replay analytics to help developers build better agents. AgentOps is open beta and can be signed up for here. Key features of AgentOps include: - Session replays in 3 lines of code: Initialize the AgentOps client and automatically get analytics on every LLM call. - Time travel debugging: (coming soon!) - Agent Arena: (coming soon!) - Callback handlers: AgentOps works seamlessly with applications built using Langchain and LlamaIndex.

flyte-sdk

Flyte 2 SDK is a pure Python tool for type-safe, distributed orchestration of agents, ML pipelines, and more. It allows users to write data pipelines, ML training jobs, and distributed compute in Python without any DSL constraints. With features like async-first parallelism and fine-grained observability, Flyte 2 offers a seamless workflow experience. Users can leverage core concepts like TaskEnvironments for container configuration, pure Python workflows for flexibility, and async parallelism for distributed execution. Advanced features include sub-task observability with tracing and remote task execution. The tool also provides native Jupyter integration for running and monitoring workflows directly from notebooks. Configuration and deployment are made easy with configuration files and commands for deploying and running workflows. Flyte 2 is licensed under the Apache 2.0 License.

FDAbench

FDABench is a benchmark tool designed for evaluating data agents' reasoning ability over heterogeneous data in analytical scenarios. It offers 2,007 tasks across various data sources, domains, difficulty levels, and task types. The tool provides ready-to-use data agent implementations, a DAG-based evaluation system, and a framework for agent-expert collaboration in dataset generation. Key features include data agent implementations, comprehensive evaluation metrics, multi-database support, different task types, extensible framework for custom agent integration, and cost tracking. Users can set up the environment using Python 3.10+ on Linux, macOS, or Windows. FDABench can be installed with a one-command setup or manually. The tool supports API configuration for LLM access and offers quick start guides for database download, dataset loading, and running examples. It also includes features like dataset generation using the PUDDING framework, custom agent integration, evaluation metrics like accuracy and rubric score, and a directory structure for easy navigation.

candle-vllm

Candle-vllm is an efficient and easy-to-use platform designed for inference and serving local LLMs, featuring an OpenAI compatible API server. It offers a highly extensible trait-based system for rapid implementation of new module pipelines, streaming support in generation, efficient management of key-value cache with PagedAttention, and continuous batching. The tool supports chat serving for various models and provides a seamless experience for users to interact with LLMs through different interfaces.

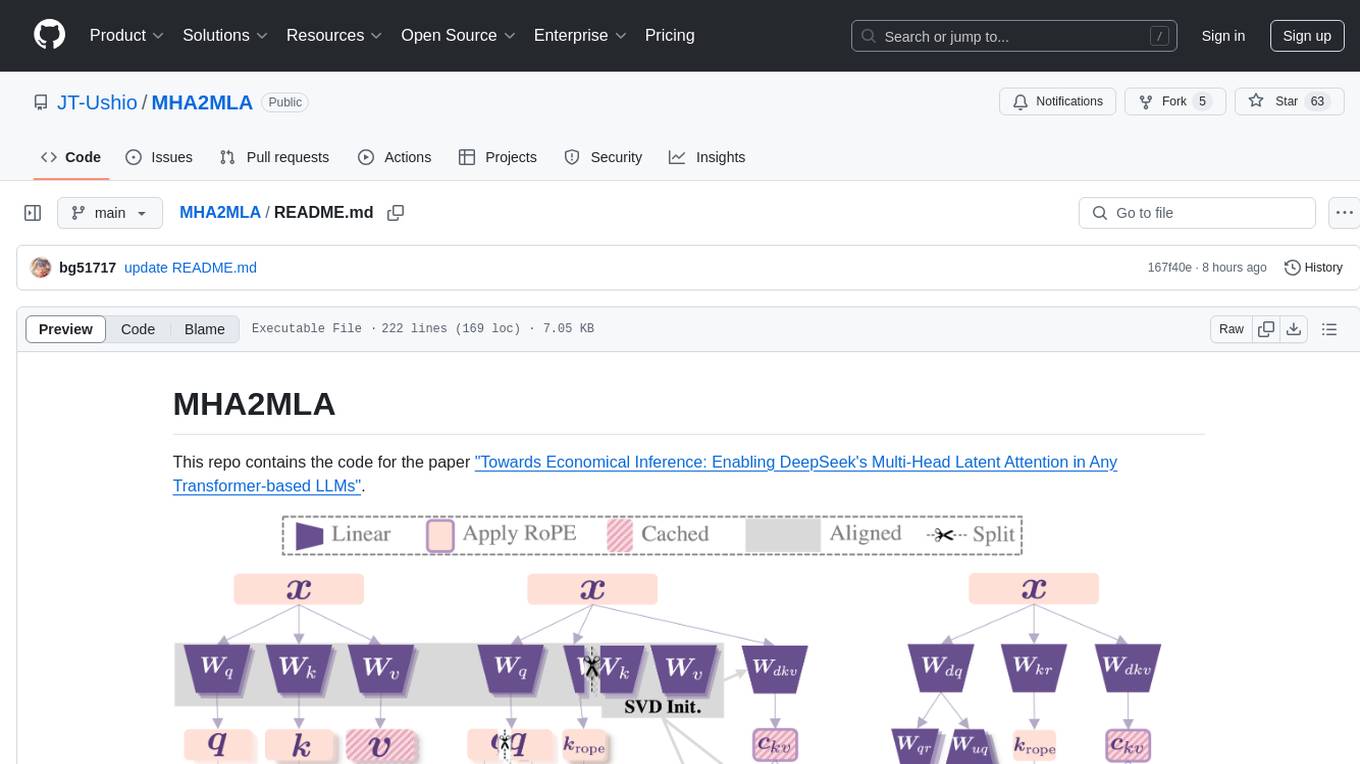

MHA2MLA

This repository contains the code for the paper 'Towards Economical Inference: Enabling DeepSeek's Multi-Head Latent Attention in Any Transformer-based LLMs'. It provides tools for fine-tuning and evaluating Llama models, converting models between different frameworks, processing datasets, and performing specific model training tasks like Partial-RoPE Fine-Tuning and Multiple-Head Latent Attention Fine-Tuning. The repository also includes commands for model evaluation using Lighteval and LongBench, along with necessary environment setup instructions.

browser4

Browser4 is a lightning-fast, coroutine-safe browser designed for AI integration with large language models. It offers ultra-fast automation, deep web understanding, and powerful data extraction APIs. Users can automate the browser, extract data at scale, and perform tasks like summarizing products, extracting product details, and finding specific links. The tool is developer-friendly, supports AI-powered automation, and provides advanced features like X-SQL for precise data extraction. It also offers RPA capabilities, browser control, and complex data extraction with X-SQL. Browser4 is suitable for web scraping, data extraction, automation, and AI integration tasks.

For similar tasks

evalplus

EvalPlus is a rigorous evaluation framework for LLM4Code, providing HumanEval+ and MBPP+ tests to evaluate large language models on code generation tasks. It offers precise evaluation and ranking, coding rigorousness analysis, and pre-generated code samples. Users can use EvalPlus to generate code solutions, post-process code, and evaluate code quality. The tool includes tools for code generation and test input generation using various backends.

web-codegen-scorer

Web Codegen Scorer is a tool designed to evaluate the quality of web code generated by Large Language Models (LLMs). It allows users to make evidence-based decisions related to AI-generated code by iterating on system prompts, comparing code quality from different models, and monitoring code quality over time. The tool focuses specifically on web code and offers various features such as configuring evaluations, specifying system instructions, using built-in checks for code quality, automatically repairing issues, and viewing results with an intuitive report viewer UI.

MathCoder

MathCoder is a repository focused on enhancing mathematical reasoning by fine-tuning open-source language models to use code for modeling and deriving math equations. It introduces MathCodeInstruct dataset with solutions interleaving natural language, code, and execution results. The repository provides MathCoder models capable of generating code-based solutions for challenging math problems, achieving state-of-the-art scores on MATH and GSM8K datasets. It offers tools for model deployment, inference, and evaluation, along with a citation for referencing the work.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM: * Set LLM usage limits for users on different pricing tiers * Track LLM usage on a per user and per organization basis * Block or redact requests containing PIIs * Improve LLM reliability with failovers, retries and caching * Distribute API keys with rate limits and cost limits for internal development/production use cases * Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.