whatsapp-ai-bot

This is a WhatsApp AI bot that uses various AI models, including Gemini, GPT, DALL-E, Flux and StabilityAI, to generate responses to user input.

Stars: 190

The WhatsApp AI Bot is a chatbot that uses the APIs of various AI models to generate responses to user input. Users interact with the bot through commands that select an AI model, such as Gemini, Gemini-Vision, CHAT-GPT, DALL-E, or Stability AI. Users can also create their own custom models to personalize the bot's behavior. The bot operates on WhatsApp Web through Puppeteer and requires API keys for Gemini, OpenAI, and Stability AI.

README:

+ We are switching to the @whiskeysockets/baileys library for our bot because it's lightweight and easy to deploy.

- This branch is still under construction 🚧. Currently, we only support the models listed below, but support for new models will be added soon.

Supported Models:

| Model | Provider | Type | Usage |
|---|---|---|---|
| Chat GPT | Open AI | text to text | !chatgpt |
| Gemini | Google | text to text | !gemini |
| Gemini Vision | Google | image to text | !gemini-vision |
| Dalle 2 | Open AI | text to image | !dalle |
| Dalle 3 | Open AI | text to image + revised text | !dalle3 |
| FLUX.1-schnell | Hugging Face | text to image | !flux |

Useful links:

| ♥ Sponsor | 💎 Bounty | 🚀 Deployment | ✉ WhatsApp Group |
|---|---|---|---|
| link | link | link | link |

The WhatsApp AI Bot is a chatbot that uses AI model APIs to generate responses to user input. The bot supports several AI models, including Gemini, Gemini-Vision, CHAT-GPT, DALL-E, and Stability AI, and users can also create their own models to customize the bot's behavior.

Demos (images omitted): Gemini, Stability AI + Chat-GPT, Dalle + Custom Model

1. Download Source Code

git clone https://github.com/Zain-ul-din/WhatsApp-Ai-bot.git

cd WhatsApp-Ai-bot

2. Get API Keys

3. Add API Keys

- Create `.env` in the root of the project.
- Set the following fields in the `.env` file:

OPENAI_API_KEY=YOUR_OPEN_AI_API_KEY

DREAMSTUDIO_API_KEY=YOUR_STABILITY_AI_API_KEY

GEMINI_API_KEY=YOUR_GEMINI_API_KEY

HF_TOKEN=HUGGING_FACE_TOKEN

4. Run the code

- Run `setup.sh` to start the bot.
- Scan the QR code.
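Before starting the bot, it can help to confirm that the keys from step 3 are actually set. The following is a minimal sketch, not part of the project: `LISTED_KEYS` and `missingKeys` are hypothetical names, and the README does not say which keys are strictly required, so the check simply reports any listed key that is absent or blank.

```typescript
// Keys the README lists for the .env file (step 3).
const LISTED_KEYS = [
  "OPENAI_API_KEY",
  "DREAMSTUDIO_API_KEY",
  "GEMINI_API_KEY",
  "HF_TOKEN",
] as const;

// Hypothetical helper: returns the listed keys that are missing or blank.
function missingKeys(env: Record<string, string | undefined>): string[] {
  return LISTED_KEYS.filter((key) => {
    const value = env[key];
    return value === undefined || value.trim() === "";
  });
}

// Example with an in-memory object; a real run would pass process.env
// after the .env file has been loaded.
const sampleEnv = { OPENAI_API_KEY: "sk-example", GEMINI_API_KEY: "" };
console.log(missingKeys(sampleEnv));
// → [ 'DREAMSTUDIO_API_KEY', 'GEMINI_API_KEY', 'HF_TOKEN' ]
```

A blank value is treated the same as a missing one, since an empty key would fail at the provider anyway.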

Default Prefix

- `!gemini`: use Gemini.
- `!gemini-vision`: use the `gemini-pro-vision` model for images.
- `!chatgpt`: use Chat-GPT.
- `!dalle`: use Dalle.
- `!dalle3`: use Dalle 3.
- `!stable`: use Stability AI.
- `!flux`: use Flux AI.
- `!bot`: use a custom model.
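The prefixes above can be thought of as a lookup from the first word of a message to a model. The sketch below illustrates that idea only; `PREFIXES`, `Route`, and `routeMessage` are hypothetical names, and the bot's actual parsing may differ. One detail worth noting is that `!dalle` is a leading substring of `!dalle3` (and `!gemini` of `!gemini-vision`), so the sketch only accepts a prefix when it is followed by whitespace or the end of the message.

```typescript
// Default prefixes from the README, mapped to a label for the model they select.
const PREFIXES: Record<string, string> = {
  "!gemini": "Gemini",
  "!gemini-vision": "Gemini Vision",
  "!chatgpt": "Chat-GPT",
  "!dalle": "Dalle",
  "!dalle3": "Dalle 3",
  "!stable": "Stability AI",
  "!flux": "Flux",
  "!bot": "Custom model",
};

interface Route {
  prefix: string;
  model: string;
  prompt: string;
}

// Hypothetical router: returns the matched prefix and the remaining prompt,
// or null when the message does not start with a known prefix.
function routeMessage(text: string): Route | null {
  const trimmed = text.trim();
  for (const [prefix, model] of Object.entries(PREFIXES)) {
    if (!trimmed.startsWith(prefix)) continue;
    const rest = trimmed.slice(prefix.length);
    // Accept only a whole-word match, so "!dalle3 ..." never matches "!dalle".
    if (rest === "" || /^\s/.test(rest)) {
      return { prefix, model, prompt: rest.trim() };
    }
  }
  return null;
}

console.log(routeMessage("!dalle3 a red bicycle"));
console.log(routeMessage("hello")); // → null
```

Messages without a known prefix return `null`, which a caller could use to ignore ordinary chat traffic.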

Note: open `src/whatsapp-ai.config.ts` to edit the configuration.

This bot uses Puppeteer to operate a real instance of WhatsApp Web to reduce the risk of being blocked. Note that OpenAI and Stability AI charge for every request made. WhatsApp does not allow bots or unofficial clients on its platform, so this method is not entirely safe and could still lead to your account being blocked.

A big thank you to these people for supporting this project.

| | | |
|---|---|---|
| Levitco 💎 | Anas Ashfaq | YOU? |

This repository is maintained by Zain-Ul-Din

Show some ❤️ by starring this awesome repository!


Similar Open Source Tools

pollinations

pollinations.ai is an open-source generative AI platform based in Berlin, empowering community projects with accessible text, image, video, and audio generation APIs. It offers a unified API endpoint for various AI generation needs, including text, images, audio, and video. The platform provides features like image generation using models such as Flux, GPT Image, Seedream, and Kontext, video generation with Seedance and Veo, and audio generation with text-to-speech and speech-to-text capabilities. Users can access the platform through a web interface or API, and authentication is managed through API keys. The platform is community-driven, transparent, and ethical, aiming to make AI technology open, accessible, and interconnected while fostering innovation and responsible development.

llm-x

LLM X is a ChatGPT-style UI for the niche group of folks who run Ollama (think of this like an offline ChatGPT server) locally. It supports sending and receiving images and text and works offline through PWA (Progressive Web App) standards. The project utilizes React, TypeScript, Lodash, Mobx State Tree, Tailwind CSS, DaisyUI, NextUI, Highlight.js, React Markdown, kbar, Yet Another React Lightbox, Vite, and Vite PWA plugin. It is inspired by ollama-ui's project and Perplexity.ai's UI advancements in the LLM UI space. The project is still under development, but it is already a great way to get started with building your own LLM UI.

cog

Cog is an open-source tool that lets you package machine learning models in a standard, production-ready container. You can deploy your packaged model to your own infrastructure, or to Replicate.

airunner

AI Runner is a multi-modal AI interface that allows users to run open-source large language models and AI image generators on their own hardware. The tool provides features such as voice-based chatbot conversations, text-to-speech, speech-to-text, vision-to-text, text generation with large language models, image generation capabilities, image manipulation tools, utility functions, and more. It aims to provide a stable and user-friendly experience with security updates, a new UI, and a streamlined installation process. The application is designed to run offline on users' hardware without relying on a web server, offering a smooth and responsive user experience.

pocketpaw

PocketPaw is a lightweight and user-friendly tool designed for managing and organizing your digital assets. It provides a simple interface for users to easily categorize, tag, and search for files across different platforms. With PocketPaw, you can efficiently organize your photos, documents, and other files in a centralized location, making it easier to access and share them. Whether you are a student looking to organize your study materials, a professional managing project files, or a casual user wanting to declutter your digital space, PocketPaw is the perfect solution for all your file management needs.

Devon

Devon is an open-source pair programmer tool designed to facilitate collaborative coding sessions. It provides features such as multi-file editing, codebase exploration, test writing, bug fixing, and architecture exploration. The tool supports Anthropic, OpenAI, and Groq APIs, with plans to add more models in the future. Devon is community-driven, with ongoing development goals including multi-model support, plugin system for tool builders, self-hostable Electron app, and setting SOTA on SWE-bench Lite. Users can contribute to the project by developing core functionality, conducting research on agent performance, providing feedback, and testing the tool.

ebook2audiobook

ebook2audiobook is a CPU/GPU converter tool that converts eBooks to audiobooks with chapters and metadata using tools like Calibre, ffmpeg, XTTSv2, and Fairseq. It supports voice cloning and a wide range of languages. The tool is designed to run on 4GB RAM and provides a new v2.0 Web GUI interface for user-friendly interaction. Users can convert eBooks to text format, split eBooks into chapters, and utilize high-quality text-to-speech functionalities. Supported languages include Arabic, Chinese, English, French, German, Hindi, and many more. The tool can be used for legal, non-DRM eBooks only and should be used responsibly in compliance with applicable laws.

yournextstore

Your Next Store is an open-source Next.js e-commerce platform designed for AI development. It offers a Stripe-native integration, ultra-fast page loads, and typed APIs. The codebase follows consistent patterns, provides blazing fast performance with Next.js 16 and edge caching, and allows direct API integration without the need for plugins. With a focus on AI coding tools, Your Next Store offers familiar patterns, typed APIs for Commerce Kit SDK methods, and a well-defined domain for commerce data models, making it easier for AI models to generate accurate suggestions and write correct code.

burp-ai-agent

Burp AI Agent is an extension for Burp Suite that integrates AI into your security workflow. It provides 7 AI backends, 53+ MCP tools, and 62 vulnerability classes. Users can configure privacy modes, perform audit logging, and connect external AI agents via MCP. The tool allows passive and active AI scanners to find vulnerabilities while users focus on manual testing. It requires Burp Suite, Java 21, and at least one AI backend configured.

postgresai

PostgresAI is an AI-native PostgreSQL observability tool designed for monitoring, health checks, and root cause analysis. It provides structured reports and metrics for AI consumption, tracks problems from detection to resolution, offers over 45 health checks including bloat, indexes, queries, settings, and security, and features Active Session History similar to Oracle ASH. PostgresAI is part of the Self-Driving Postgres initiative, aiming to make Postgres autonomous. It includes expert dashboards following the Four Golden Signals methodology and is battle-tested with companies like GitLab, Miro, Chewy, and more.

stenoai

StenoAI is an AI-powered meeting intelligence tool that allows users to record, transcribe, summarize, and query meetings using local AI models. It prioritizes privacy by processing data entirely on the user's device. The tool offers multiple AI models optimized for different use cases, making it ideal for healthcare, legal, and finance professionals with confidential data needs. StenoAI also features a macOS desktop app with a user-friendly interface, making it convenient for users to access its functionalities. The project is open-source and not affiliated with any specific company, emphasizing its focus on meeting-notes productivity and community collaboration.

seline

Seline is a local-first AI desktop application that integrates conversational AI, visual generation tools, vector search, and multi-channel connectivity. It allows users to connect WhatsApp, Telegram, or Slack to create always-on bots with full context and background task delivery. The application supports multi-channel connectivity, deep research mode, local web browsing with Puppeteer, local knowledge and privacy features, visual and creative tools, automation and agents, developer experience enhancements, and more. Seline is actively developed with a focus on improving user experience and functionality.

mistral.rs

Mistral.rs is a fast LLM inference platform written in Rust. It supports inference on a variety of devices, quantization, and easy-to-use applications through an OpenAI API-compatible HTTP server and Python bindings.

spaCy

spaCy is an industrial-strength Natural Language Processing (NLP) library in Python and Cython. It incorporates the latest research and is designed for real-world applications. The library offers pretrained pipelines supporting 70+ languages, with advanced neural network models for tasks such as tagging, parsing, named entity recognition, and text classification. It also facilitates multi-task learning with pretrained transformers like BERT, along with a production-ready training system and streamlined model packaging, deployment, and workflow management. spaCy is commercial open-source software released under the MIT license.

Nemp-memory

Nemp Memory is a smart memory tool designed for AI agents like Claude Code and OpenClaw. It helps users save and recall project-related information such as tech stack, architecture decisions, project patterns, and daily work updates. Unlike other memory plugins, Nemp is simple to use with zero dependencies, no cloud requirements, and stores data in plain JSON files. It offers features like auto-init to detect project stack, smart context for semantic search, memory suggestions based on user activity, auto-sync to CLAUDE.md for project context updates, two-way sync with CLAUDE.md, and export to CLAUDE.md for full project context. Nemp Memory is privacy-focused, keeping all data local without tracking or cloud storage.

For similar tasks

whatsapp-ai-bot

The WhatsApp AI Bot is a chatbot that utilizes various AI models APIs to generate responses to user input. Users can interact with the bot using commands to access different AI models such as Gemini, Gemini-Vision, CHAT-GPT, DALL-E, and Stability AI. Additionally, users have the flexibility to create their own custom models to personalize the bot's behavior. The bot operates on WhatsApp Web through Puppeteer and requires API keys for Gemini, OpenAI, and StabilityAI. It provides a range of functionalities and customization options for users interested in AI-powered chatbots.

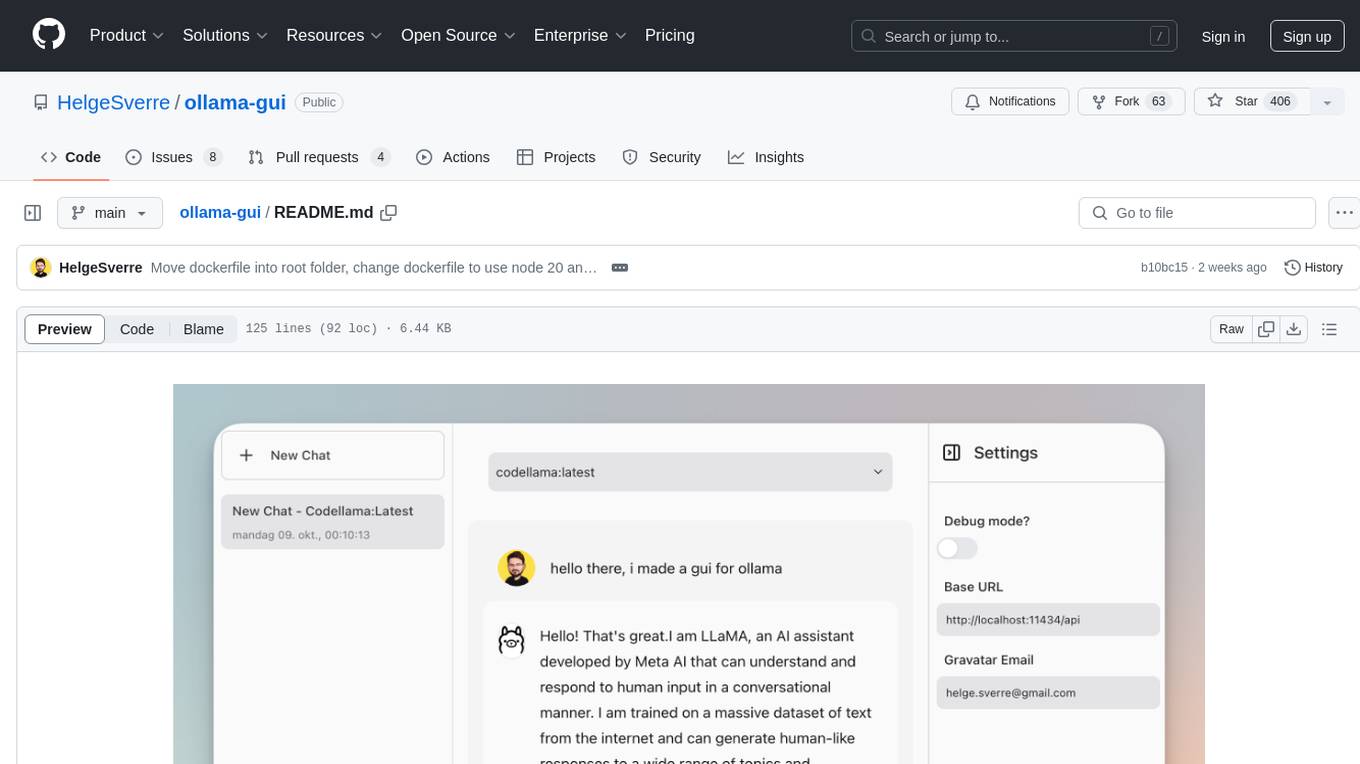

ollama-gui

Ollama GUI is a web interface for ollama.ai, a tool that enables running Large Language Models (LLMs) on your local machine. It provides a user-friendly platform for chatting with LLMs and accessing various models for text generation. Users can easily interact with different models, manage chat history, and explore available models through the web interface. The tool is built with Vue.js, Vite, and Tailwind CSS, offering a modern and responsive design for seamless user experience.

driverlessai-recipes

This repository contains custom recipes for H2O Driverless AI, which is an Automatic Machine Learning platform for the Enterprise. Custom recipes are Python code snippets that can be uploaded into Driverless AI at runtime to automate feature engineering, model building, visualization, and interpretability. Users can gain control over the optimization choices made by Driverless AI by providing their own custom recipes. The repository includes recipes for various tasks such as data manipulation, data preprocessing, feature selection, data augmentation, model building, scoring, and more. Best practices for creating and using recipes are also provided, including security considerations, performance tips, and safety measures.

semantic-router

Semantic Router is a superfast decision-making layer for your LLMs and agents. Rather than waiting for slow LLM generations to make tool-use decisions, we use the magic of semantic vector space to make those decisions — _routing_ our requests using _semantic_ meaning.

hass-ollama-conversation

The Ollama Conversation integration adds a conversation agent powered by Ollama in Home Assistant. This agent can be used in automations to query information provided by Home Assistant about your house, including areas, devices, and their states. Users can install the integration via HACS and configure settings such as API timeout, model selection, context size, maximum tokens, and other parameters to fine-tune the responses generated by the AI language model. Contributions to the project are welcome, and discussions can be held on the Home Assistant Community platform.

luna-ai

Luna AI is a virtual streamer driven by a 'brain' composed of ChatterBot, GPT, Claude, langchain, chatglm, text-generation-webui, 讯飞星火, and 智谱AI. It can interact with viewers in real time during live streams on platforms like Bilibili, Douyin, Kuaishou, and Douyu, or chat with you locally. Luna AI uses natural language processing and text-to-speech technologies like Edge-TTS, VITS-Fast, elevenlabs, bark-gui, and VALL-E-X to generate responses to viewer questions, and can change voice using so-vits-svc or DDSP-SVC. It can also collaborate with Stable Diffusion for drawing displays and loop custom texts. This project is completely free, and any identical copycat programs being sold are pirated; please stop them promptly.

KULLM

KULLM (구름) is a Korean Large Language Model developed by Korea University NLP & AI Lab and HIAI Research Institute. It is based on the upstage/SOLAR-10.7B-v1.0 model and has been fine-tuned for instruction. The model has been trained on 8×A100 GPUs and is capable of generating responses in Korean language. KULLM exhibits hallucination and repetition phenomena due to its decoding strategy. Users should be cautious as the model may produce inaccurate or harmful results. Performance may vary in benchmarks without a fixed system prompt.

cria

Cria is a Python library designed for running Large Language Models with minimal configuration. It provides an easy and concise way to interact with LLMs, offering advanced features such as custom models, streams, message history management, and running multiple models in parallel. Cria simplifies the process of using LLMs by providing a straightforward API that requires only a few lines of code to get started. It also handles model installation automatically, making it efficient and user-friendly for various natural language processing tasks.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers
- Track LLM usage on a per user and per organization basis
- Block or redact requests containing PIIs
- Improve LLM reliability with failovers, retries and caching
- Distribute API keys with rate limits and cost limits for internal development/production use cases
- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.