spaCy

💫 Industrial-strength Natural Language Processing (NLP) in Python

Stars: 30652

spaCy is an industrial-strength Natural Language Processing (NLP) library in Python and Cython. It incorporates the latest research and is designed for real-world applications. The library offers pretrained pipelines supporting 70+ languages, with advanced neural network models for tasks such as tagging, parsing, named entity recognition, and text classification. It also facilitates multi-task learning with pretrained transformers like BERT, along with a production-ready training system and streamlined model packaging, deployment, and workflow management. spaCy is commercial open-source software released under the MIT license.

README:

spaCy is a library for advanced Natural Language Processing in Python and Cython. It's built on the very latest research, and was designed from day one to be used in real products.

spaCy comes with pretrained pipelines and currently supports tokenization and training for 70+ languages. It features state-of-the-art speed and neural network models for tagging, parsing, named entity recognition, text classification and more, multi-task learning with pretrained transformers like BERT, as well as a production-ready training system and easy model packaging, deployment and workflow management. spaCy is commercial open-source software, released under the MIT license.

💫 Version 3.7 out now! Check out the release notes here.

| Documentation | |
|---|---|
| ⭐️ spaCy 101 | New to spaCy? Here's everything you need to know! |
| 📚 Usage Guides | How to use spaCy and its features. |
| 🚀 New in v3.0 | New features, backwards incompatibilities and migration guide. |
| 🪐 Project Templates | End-to-end workflows you can clone, modify and run. |
| 🎛 API Reference | The detailed reference for spaCy's API. |
| ⏩ GPU Processing | Use spaCy with CUDA-compatible GPU processing. |
| 📦 Models | Download trained pipelines for spaCy. |
| 🦙 Large Language Models | Integrate LLMs into spaCy pipelines. |
| 🌌 Universe | Plugins, extensions, demos and books from the spaCy ecosystem. |
| ⚙️ spaCy VS Code Extension | Additional tooling and features for working with spaCy's config files. |
| 👩‍🏫 Online Course | Learn spaCy in this free and interactive online course. |
| 📰 Blog | Read about current spaCy and Prodigy development, releases, talks and more from Explosion. |
| 📺 Videos | Our YouTube channel with video tutorials, talks and more. |
| 🛠 Changelog | Changes and version history. |
| 💝 Contribute | How to contribute to the spaCy project and code base. |
| 👕 Swag | Support us and our work with unique, custom-designed swag! |

| Custom NLP consulting, implementation and strategic advice by spaCy's core development team. Streamlined, production-ready, predictable and maintainable. Send us an email or take our 5-minute questionnaire, and we'll be in touch! Learn more → |

The spaCy project is maintained by the spaCy team. Please understand that we won't be able to provide individual support via email. We also believe that help is much more valuable if it's shared publicly, so that more people can benefit from it.

| Type | Platforms |
|---|---|
| 🚨 Bug Reports | GitHub Issue Tracker |
| 🎁 Feature Requests & Ideas | GitHub Discussions |
| 👩‍💻 Usage Questions | GitHub Discussions · Stack Overflow |
| 🗯 General Discussion | GitHub Discussions |

- Support for 70+ languages

- Trained pipelines for different languages and tasks

- Multi-task learning with pretrained transformers like BERT

- Support for pretrained word vectors and embeddings

- State-of-the-art speed

- Production-ready training system

- Linguistically-motivated tokenization

- Components for named entity recognition, part-of-speech tagging, dependency parsing, sentence segmentation, text classification, lemmatization, morphological analysis, entity linking and more

- Easily extensible with custom components and attributes

- Support for custom models in PyTorch, TensorFlow and other frameworks

- Built-in visualizers for syntax and NER

- Easy model packaging, deployment and workflow management

- Robust, rigorously evaluated accuracy
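To get a feel for the tokenization item in the list above, here is a minimal sketch that uses a blank English pipeline, so no trained model has to be downloaded. It assumes spaCy v3 is already installed, and the sample sentence is only illustrative.

```python
import spacy

# A blank pipeline contains only the language-specific tokenizer,
# so this runs without downloading a trained pipeline package.
nlp = spacy.blank("en")

doc = nlp("Let's go to N.Y.!")

# Collect the text of each token produced by the English tokenizer rules.
tokens = [token.text for token in doc]
print(tokens)

# Tokenization is non-destructive: joining every token with its trailing
# whitespace reproduces the original input string.
print("".join(token.text_with_ws for token in doc) == doc.text)
```

Components such as the tagger, parser or entity recognizer are not part of a blank pipeline; those come with the trained pipelines described further down.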

📖 For more details, see the facts, figures and benchmarks.

For detailed installation instructions, see the documentation.

- Operating system: macOS / OS X · Linux · Windows (Cygwin, MinGW, Visual Studio)

- Python version: Python 3.7+ (only 64 bit)

- Package managers: pip · conda (via conda-forge)
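The requirements above can be checked from Python before installing. The following is a small sketch using only the standard library; the function name `meets_requirements` is hypothetical and not part of spaCy.

```python
import sys


def meets_requirements(version_info=sys.version_info, maxsize=sys.maxsize):
    """Return True for a 64-bit interpreter running Python 3.7 or newer."""
    is_new_enough = tuple(version_info[:2]) >= (3, 7)
    # sys.maxsize exceeds 2**32 only on 64-bit builds of Python.
    is_64_bit = maxsize > 2**32
    return is_new_enough and is_64_bit


print("Interpreter meets the listed requirements:", meets_requirements())
```

The parameters default to the running interpreter and exist only so the check can be exercised with other values.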

Using pip, spaCy releases are available as source packages and binary wheels.

Before you install spaCy and its dependencies, make sure that your pip, setuptools and wheel are up to date.

pip install -U pip setuptools wheel

pip install spacy

To install additional data tables for lemmatization and normalization you can run `pip install spacy[lookups]` or install `spacy-lookups-data` separately. The lookups package is needed to create blank models with lemmatization data, and to lemmatize in languages that don't yet come with pretrained models and aren't powered by third-party libraries.

When using pip it is generally recommended to install packages in a virtual environment to avoid modifying system state:

python -m venv .env

source .env/bin/activate

pip install -U pip setuptools wheel

pip install spacy

You can also install spaCy from conda via the conda-forge channel. For the feedstock including the build recipe and configuration, check out this repository.

conda install -c conda-forge spacy

Some updates to spaCy may require downloading new statistical models. If you're running spaCy v2.0 or higher, you can use the `validate` command to check if your installed models are compatible and if not, print details on how to update them:

pip install -U spacy

python -m spacy validate

If you've trained your own models, keep in mind that your training and runtime inputs must match. After updating spaCy, we recommend retraining your models with the new version.
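If the `validate` command should run as part of a script, for example after an automated upgrade, it can be invoked through the current interpreter. This is only a sketch: the helper names are hypothetical, and it simply reports whatever exit status and output the command produces without interpreting them.

```python
import subprocess
import sys


def validate_command(python=sys.executable):
    """Build the argument list for `python -m spacy validate`."""
    return [python, "-m", "spacy", "validate"]


def run_validate():
    """Run the command and return its exit status and captured output."""
    result = subprocess.run(validate_command(), capture_output=True, text=True)
    return result.returncode, result.stdout
```

Using `sys.executable` rather than a bare `python` keeps the call inside the active virtual environment.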

📖 For details on upgrading from spaCy 2.x to spaCy 3.x, see the migration guide.

Trained pipelines for spaCy can be installed as Python packages. This means that they're a component of your application, just like any other module. Models can be installed using spaCy's `download` command, or manually by pointing pip to a path or URL.
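Because trained pipelines are ordinary Python packages, their presence can be checked with the standard import machinery. A minimal sketch, assuming a top-level package name; the helper `is_pipeline_installed` is hypothetical and not part of spaCy:

```python
import importlib.util


def is_pipeline_installed(package_name):
    """Return True if a top-level package with this name can be imported."""
    return importlib.util.find_spec(package_name) is not None


# Example: check for the small English pipeline before trying to load it.
print(is_pipeline_installed("en_core_web_sm"))
```

A check like this lets an application give a clear message about a missing pipeline instead of failing at load time.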

| Documentation | |
|---|---|
| Available Pipelines | Detailed pipeline descriptions, accuracy figures and benchmarks. |
| Models Documentation | Detailed usage and installation instructions. |
| Training | How to train your own pipelines on your data. |

# Download best-matching version of specific model for your spaCy installation

python -m spacy download en_core_web_sm

# pip install .tar.gz archive or .whl from path or URL

pip install /Users/you/en_core_web_sm-3.0.0.tar.gz

pip install /Users/you/en_core_web_sm-3.0.0-py3-none-any.whl

pip install https://github.com/explosion/spacy-models/releases/download/en_core_web_sm-3.0.0/en_core_web_sm-3.0.0.tar.gz

To load a model, use `spacy.load()` with the model name or a path to the model data directory.

import spacy

nlp = spacy.load("en_core_web_sm")

doc = nlp("This is a sentence.")

You can also import a model directly via its full name and then call its `load()` method with no arguments.

import spacy

import en_core_web_sm

nlp = en_core_web_sm.load()

doc = nlp("This is a sentence.")

📖 For more info and examples, check out the models documentation.
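When the pipeline name is only known at runtime, the import-then-`load()` pattern shown above can be written with `importlib`. This is a sketch under that assumption; `load_installed_pipeline` is a hypothetical helper, and it returns `None` rather than raising when the package is missing.

```python
import importlib


def load_installed_pipeline(package_name):
    """Import a pipeline package by name and call its load() function.

    Returns None if the package is not installed.
    """
    try:
        module = importlib.import_module(package_name)
    except ImportError:
        return None
    # Pipeline packages expose load(), as in `en_core_web_sm.load()`.
    return module.load()


nlp = load_installed_pipeline("en_core_web_sm")
if nlp is None:
    print("Pipeline package not found; install it first.")
```

For most code, calling `spacy.load()` with the package name is the simpler route; the explicit import mainly helps when the package must be discoverable by static tooling.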

The other way to install spaCy is to clone its GitHub repository and build it from source. That is the common way if you want to make changes to the code base. You'll need to make sure that you have a development environment consisting of a Python distribution including header files, a compiler, pip, virtualenv and git installed. The compiler part is the trickiest. How to do that depends on your system.

| Platform | |
|---|---|
| Ubuntu | Install system-level dependencies via apt-get: `sudo apt-get install build-essential python-dev git`. |
| Mac | Install a recent version of XCode, including the so-called "Command Line Tools". macOS and OS X ship with Python and git preinstalled. |
| Windows | Install a version of the Visual C++ Build Tools or Visual Studio Express that matches the version that was used to compile your Python interpreter. |

For more details and instructions, see the documentation on compiling spaCy from source and the quickstart widget to get the right commands for your platform and Python version.

git clone https://github.com/explosion/spaCy

cd spaCy

python -m venv .env

source .env/bin/activate

# make sure you are using the latest pip

python -m pip install -U pip setuptools wheel

pip install -r requirements.txt

pip install --no-build-isolation --editable .

To install with extras:

pip install --no-build-isolation --editable .[lookups,cuda102]

spaCy comes with an extensive test suite. In order to run the tests, you'll usually want to clone the repository and build spaCy from source. This will also install the required development dependencies and test utilities defined in the `requirements.txt`.

Alternatively, you can run `pytest` on the tests from within the installed `spacy` package. Don't forget to also install the test utilities via spaCy's `requirements.txt`:

pip install -r requirements.txt

python -m pytest --pyargs spacy

Alternative AI tools for spaCy

Similar Open Source Tools

noether

Noether is Emmi AI's open software framework for Engineering AI. It is built on transformer building blocks, delivering the full engineering stack for building, training, and operating industrial simulation models across engineering verticals. The framework eliminates the need for component re-engineering or an in-house deep learning team. Noether features a modular transformer architecture optimized for physical systems, hardware agnostic execution across CPU, MPS, and NVIDIA GPUs, industrial-grade design for high-fidelity simulations, and built-in support for Multi-GPU and SLURM cluster environments.

openlit

OpenLIT is an OpenTelemetry-native GenAI and LLM Application Observability tool. It's designed to make the integration process of observability into GenAI projects as easy as pie – literally, with just **a single line of code**. Whether you're working with popular LLM Libraries such as OpenAI and HuggingFace or leveraging vector databases like ChromaDB, OpenLIT ensures your applications are monitored seamlessly, providing critical insights to improve performance and reliability.

EvoAgentX

EvoAgentX is an open-source framework for building, evaluating, and evolving LLM-based agents or agentic workflows in an automated, modular, and goal-driven manner. It enables developers and researchers to move beyond static prompt chaining or manual workflow orchestration by introducing a self-evolving agent ecosystem. The framework includes features such as agent workflow autoconstruction, built-in evaluation, self-evolution engine, plug-and-play compatibility, comprehensive built-in tools, memory module support, and human-in-the-loop interactions.

DreamLayer

DreamLayer AI is an open-source Stable Diffusion WebUI designed for AI researchers, labs, and developers. It automates prompts, seeds, and metrics for benchmarking models, datasets, and samplers, enabling reproducible evaluations across multiple seeds and configurations. The tool integrates custom metrics and evaluation pipelines, providing a streamlined workflow for AI research. With features like automated benchmarking, reproducibility, built-in metrics, multi-modal readiness, and researcher-friendly interface, DreamLayer AI aims to simplify and accelerate the model evaluation process.

superduperdb

SuperDuperDB is a Python framework for integrating AI models, APIs, and vector search engines directly with your existing databases, including hosting of your own models, streaming inference and scalable model training/fine-tuning. Build, deploy and manage any AI application without the need for complex pipelines, infrastructure or specialized vector databases, and without moving your data there, by integrating AI at your data's source:

- Generative AI, LLMs, RAG, vector search
- Standard machine learning use-cases (classification, segmentation, regression, forecasting, recommendation etc.)
- Custom AI use-cases involving specialized models
- Even the most complex applications/workflows in which different models work together

SuperDuperDB is **not** a database. Think `db = superduper(db)`: SuperDuperDB transforms your databases into an intelligent platform that allows you to leverage the full AI and Python ecosystem. A single development and deployment environment for all your AI applications in one place, fully scalable and easy to manage.

mage-ai

Mage is an open-source data pipeline tool for transforming and integrating data. It offers an easy developer experience, engineering best practices built-in, and data as a first-class citizen. Mage makes it easy to build, preview, and launch data pipelines, and provides observability and scaling capabilities. It supports data integrations, streaming pipelines, and dbt integration.

RWKV_APP

RWKV App is an experimental application that enables users to run Large Language Models (LLMs) offline on their edge devices. It offers a privacy-first, on-device LLM experience for everyday devices. Users can engage in multi-turn conversations, text-to-speech, visual understanding, and more, all without requiring an internet connection. The app supports switching between different models, running locally without internet, and exploring various AI tasks such as chat, speech generation, and visual understanding. It is built using Flutter and Dart FFI for cross-platform compatibility and efficient communication with the C++ inference engine. The roadmap includes integrating features into the RWKV Chat app, supporting more model weights, hardware, operating systems, and devices.

Roo-Code

Roo Code is an AI-powered development tool that integrates with your code editor to help you generate code from natural language descriptions and specifications, refactor and debug existing code, write and update documentation, answer questions about your codebase, automate repetitive tasks, and utilize MCP servers. It offers different modes such as Code, Architect, Ask, Debug, and Custom Modes to adapt to various tasks and workflows. Roo Code provides tutorial and feature videos, documentation, a YouTube channel, a Discord server, a Reddit community, GitHub issues tracking, and a feature request platform. Users can set up and develop Roo Code locally by cloning the repository, installing dependencies, and running the extension in development mode or by automated/manual VSIX installation. The tool uses changesets for versioning and publishing. Please note that Roo Code, Inc. does not make any representations or warranties regarding the tools provided, and users assume all risks associated with their use.

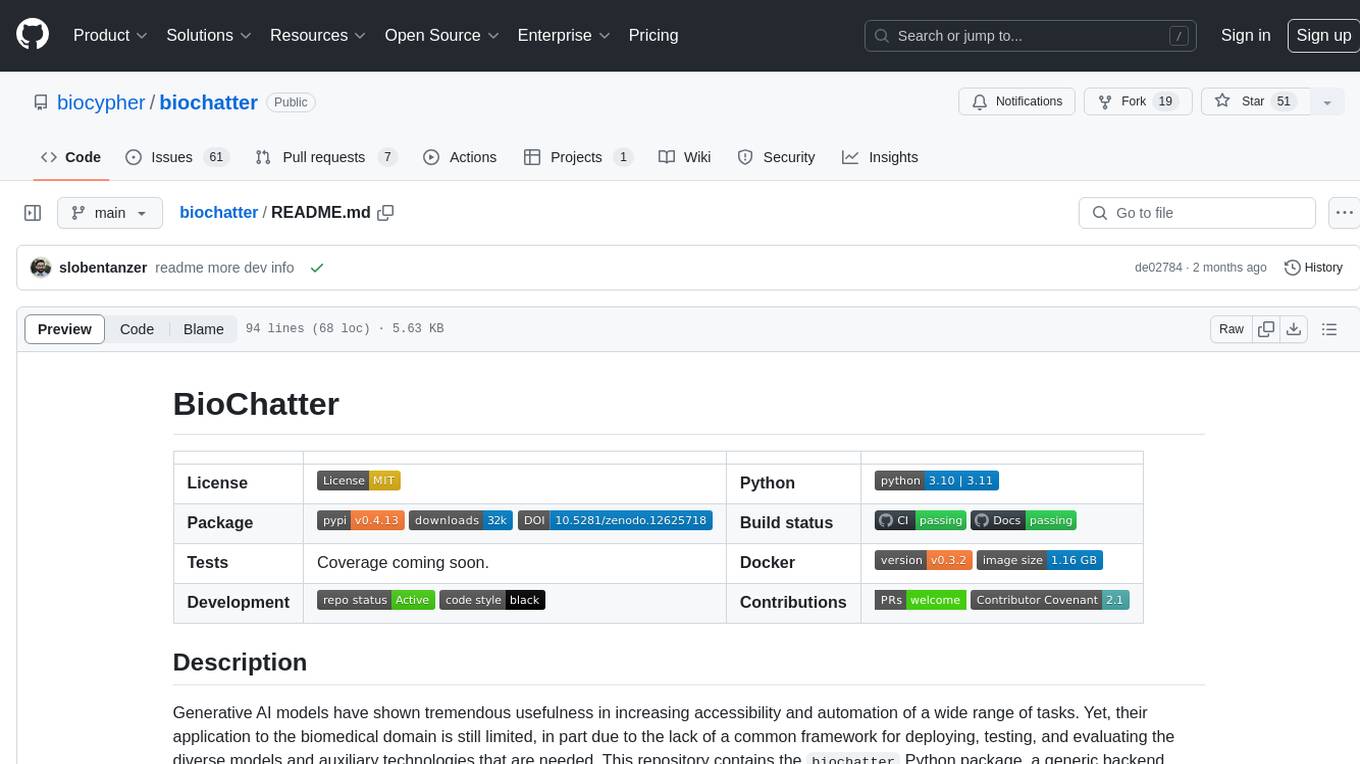

biochatter

Generative AI models have shown tremendous usefulness in increasing accessibility and automation of a wide range of tasks. This repository contains the `biochatter` Python package, a generic backend library for the connection of biomedical applications to conversational AI. It aims to provide a common framework for deploying, testing, and evaluating diverse models and auxiliary technologies in the biomedical domain. BioChatter is part of the BioCypher ecosystem, connecting natively to BioCypher knowledge graphs.

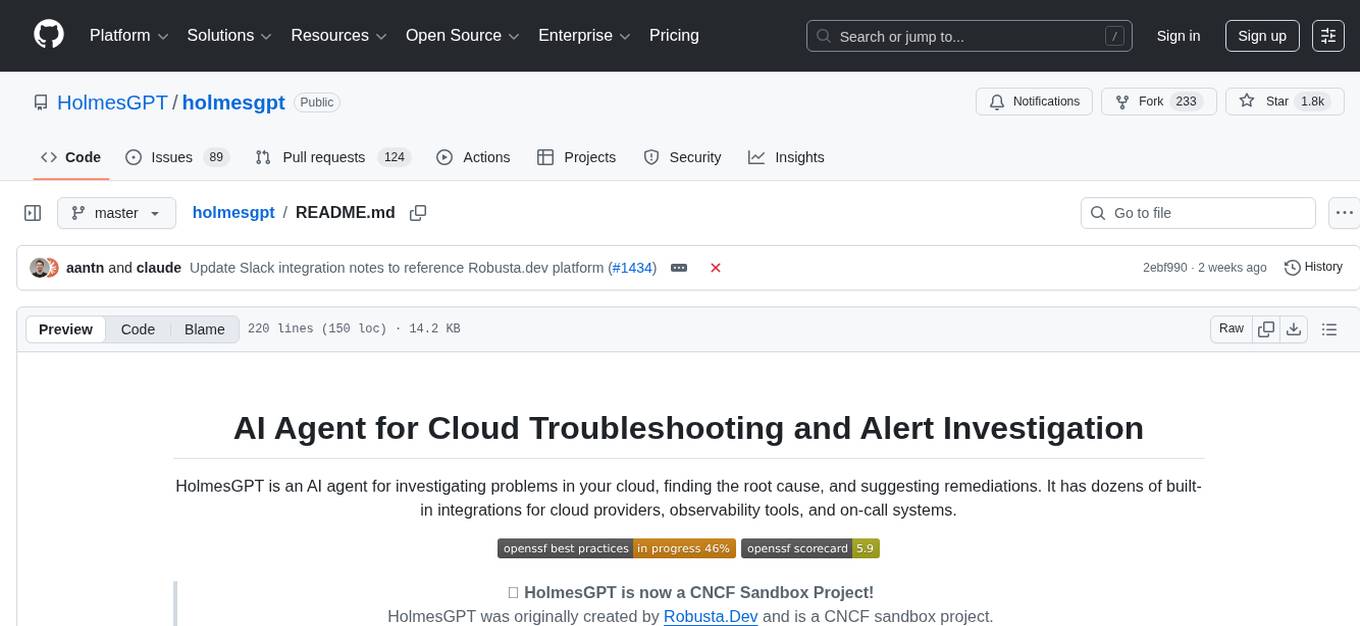

holmesgpt

HolmesGPT is an AI agent designed for troubleshooting and investigating issues in cloud environments. It utilizes AI models to analyze data from various sources, identify root causes, and provide remediation suggestions. The tool offers integrations with popular cloud providers, observability tools, and on-call systems, enabling users to streamline the troubleshooting process. HolmesGPT can automate the investigation of alerts and tickets from external systems, providing insights back to the source or communication platforms like Slack. It supports end-to-end automation and offers a CLI for interacting with the AI agent. Users can customize HolmesGPT by adding custom data sources and runbooks to enhance investigation capabilities. The tool prioritizes data privacy, ensuring read-only access and respecting RBAC permissions. HolmesGPT is a CNCF Sandbox Project and is distributed under the Apache 2.0 License.

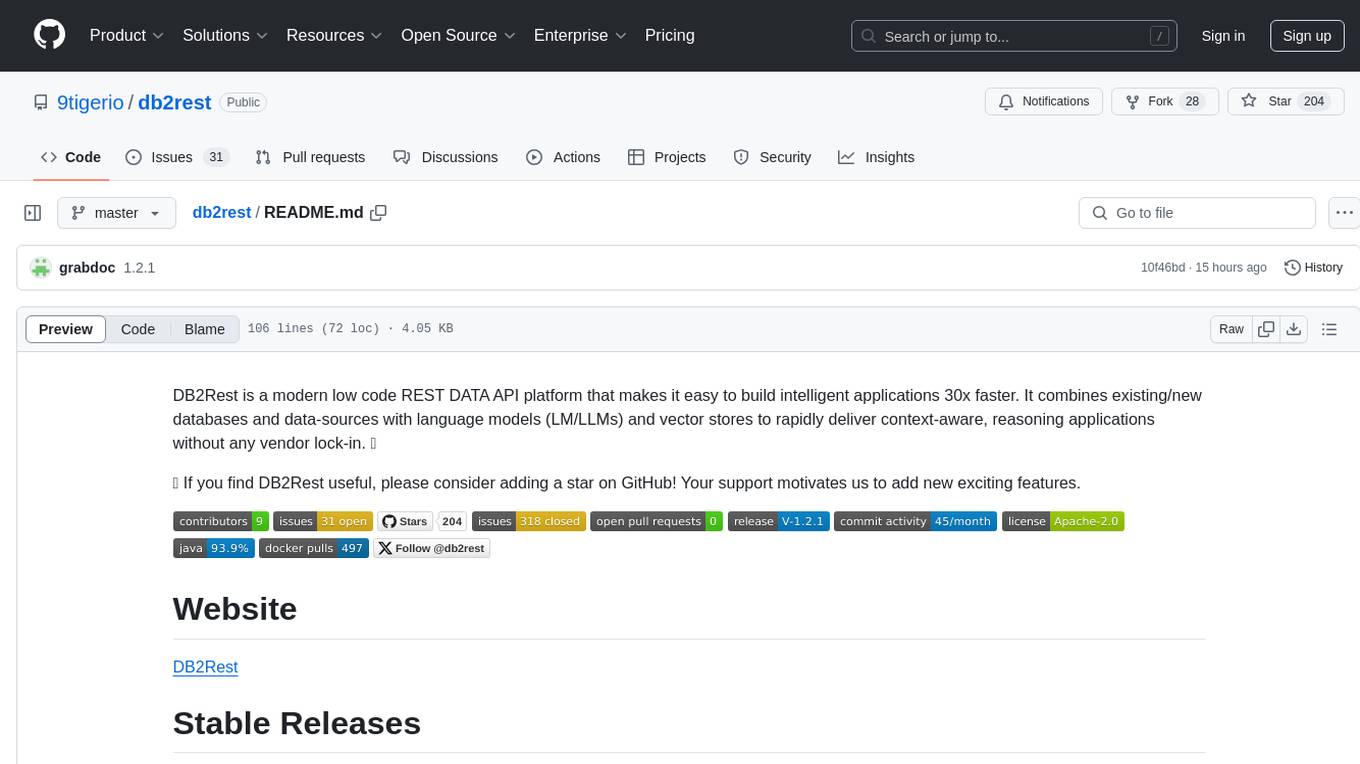

db2rest

DB2Rest is a modern low code REST DATA API platform that enables the rapid development of intelligent applications by combining databases, language models, and vector stores. It facilitates context-aware, reasoning applications without vendor lock-in. The tool accelerates application delivery, fosters faster innovation with AI, serves as a secure database gateway, and simplifies integration. It supports various databases like PostgreSQL, MySQL, MS SQL Server, Oracle, MongoDB, and more, with planned support for additional databases. Users can connect on Discord for support or reach the maintainers by email for inquiries.

EpicStaff

EpicStaff is a powerful project management tool designed to streamline team collaboration and task management. It provides a user-friendly interface for creating and assigning tasks, tracking progress, and communicating with team members in real-time. With features such as task prioritization, deadline reminders, and file sharing capabilities, EpicStaff helps teams stay organized and productive. Whether you're working on a small project or managing a large team, EpicStaff is the perfect solution to keep everyone on the same page and ensure project success.

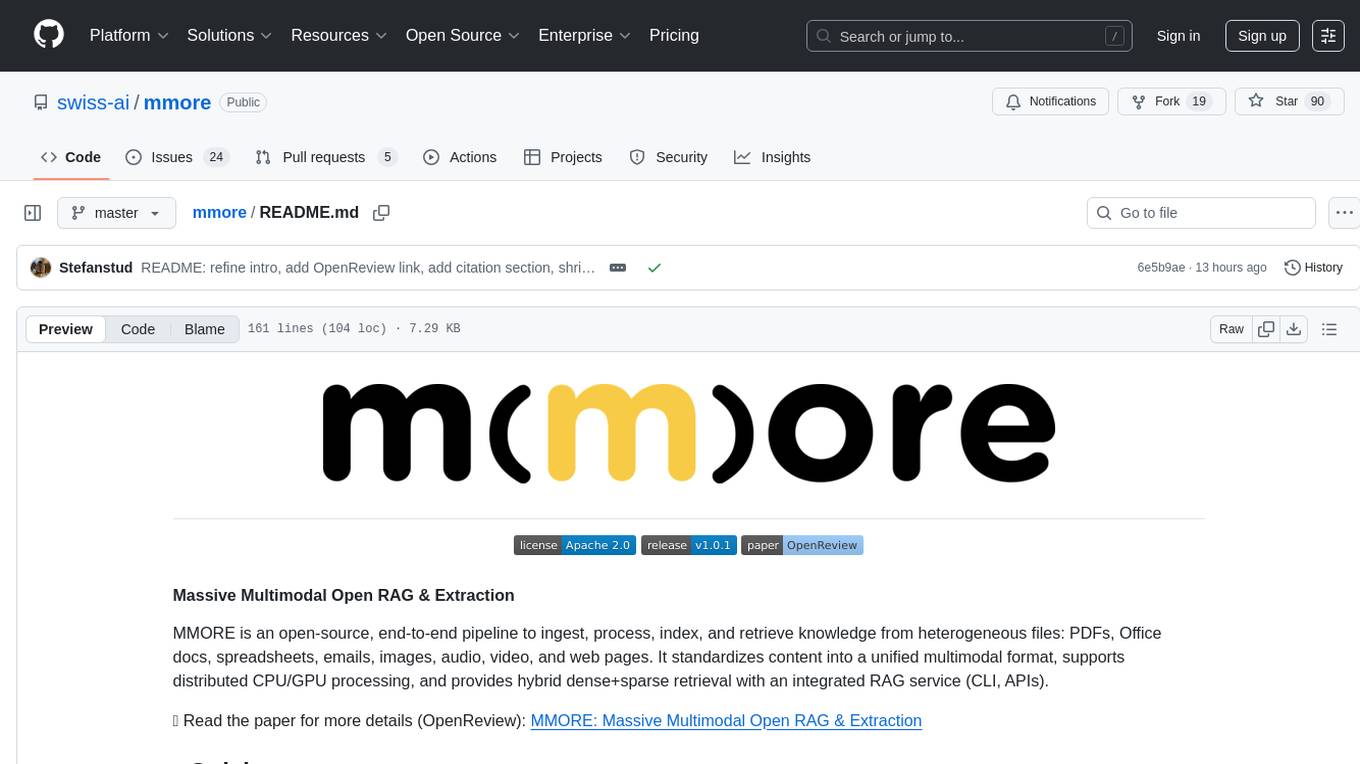

mmore

MMORE is an open-source, end-to-end pipeline for ingesting, processing, indexing, and retrieving knowledge from various file types such as PDFs, Office docs, images, audio, video, and web pages. It standardizes content into a unified multimodal format, supports distributed CPU/GPU processing, and offers hybrid dense+sparse retrieval with an integrated RAG service through CLI and APIs.

aegis-stack

Aegis Stack is a system for creating and evolving modular Python applications quickly, without the need for extensive testing or clean architecture. It allows users to go from idea to working prototype rapidly, using familiar tools. The stack includes a CLI, a built-in system dashboard called Overseer, and an optional conversational interface named Illiana. Users can start with basic components and add or remove features as needed, without being locked into initial choices. Aegis Stack aims to provide a flexible and efficient development environment for Python applications.

keras-llm-robot

The Keras-llm-robot Web UI project is an open-source tool designed for offline deployment and testing of various open-source models from the Hugging Face website. It allows users to combine multiple models through configuration to achieve functionalities like multimodal, RAG, Agent, and more. The project consists of three main interfaces: chat interface for language models, configuration interface for loading models, and tools & agent interface for auxiliary models. Users can interact with the language model through text, voice, and image inputs, and the tool supports features like model loading, quantization, fine-tuning, role-playing, code interpretation, speech recognition, image recognition, network search engine, and function calling.

For similar tasks

phospho

Phospho is a text analytics platform for LLM apps. It helps you detect issues and extract insights from text messages of your users or your app. You can gather user feedback, measure success, and iterate on your app to create the best conversational experience for your users.

OpenFactVerification

Loki is an open-source tool designed to automate the process of verifying the factuality of information. It provides a comprehensive pipeline for dissecting long texts into individual claims, assessing their worthiness for verification, generating queries for evidence search, crawling for evidence, and ultimately verifying the claims. This tool is especially useful for journalists, researchers, and anyone interested in the factuality of information.

open-parse

Open Parse is a Python library for visually discerning document layouts and chunking them effectively. It is designed to fill the gap in open-source libraries for handling complex documents. Unlike text splitting, which converts a file to raw text and slices it up, Open Parse visually analyzes documents for superior LLM input. It also supports basic markdown for parsing headings, bold, and italics, and has high-precision table support, extracting tables into clean Markdown formats with accuracy that surpasses traditional tools. Open Parse is extensible, allowing users to easily implement their own post-processing steps. It is also intuitive, with great editor support and completion everywhere, making it easy to use and learn.

NanoLLM

NanoLLM is a tool designed for optimized local inference for Large Language Models (LLMs) using HuggingFace-like APIs. It supports quantization, vision/language models, multimodal agents, speech, vector DB, and RAG. The tool aims to provide efficient and effective processing for LLMs on local devices, enhancing performance and usability for various AI applications.

ontogpt

OntoGPT is a Python package for extracting structured information from text using large language models, instruction prompts, and ontology-based grounding. It provides a command line interface and a minimal web app for easy usage. The tool has been evaluated on test data and is used in related projects like TALISMAN for gene set analysis. OntoGPT enables users to extract information from text by specifying relevant terms and provides the extracted objects as output.

lima

LIMA is a multilingual linguistic analyzer developed by the CEA LIST, LASTI laboratory. It is Free Software available under the MIT license. LIMA has state-of-the-art performance for more than 60 languages using deep learning modules. It also includes a powerful rules-based mechanism called ModEx for extracting information in new domains without annotated data.

liboai

liboai is a simple C++17 library for the OpenAI API, providing developers with access to OpenAI endpoints through a collection of methods and classes. It serves as a spiritual port of OpenAI's Python library, 'openai', with similar structure and features. The library supports various functionalities such as ChatGPT, Audio, Azure, Functions, Image DALL·E, Models, Completions, Edit, Embeddings, Files, Fine-tunes, Moderation, and Asynchronous Support. Users can easily integrate the library into their C++ projects to interact with OpenAI services.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models. From images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features:

- Self-contained, with no need for a DBMS or cloud service.
- OpenAPI interface, easy to integrate with existing infrastructure (e.g. Cloud IDE).
- Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.