jvm-openai

A minimalistic OpenAI API client for the JVM, written in Java 🤖

Stars: 70

jvm-openai is a minimalistic, unofficial OpenAI API client for the JVM, written in Java, with a focus on simplicity and minimal dependencies. It supports the following OpenAI APIs and endpoints: Audio, Chat, Embeddings, Fine-tuning, Batch, Files, Uploads, Images, Models, Moderations, Assistants, Threads, Messages, Runs, Run Steps, Vector Stores, Vector Store Files, Vector Store File Batches, Invites, Users, Projects, Project Users, Project Service Accounts, Project API Keys, and Audit Logs.

README:

A minimalistic unofficial OpenAI API client for the JVM, written in Java. The only dependency used is Jackson for JSON parsing.

jvm-openai works on Java 17+. Android support is not yet available.

implementation("io.github.stefanbratanov:jvm-openai:${version}")

<dependency>

<groupId>io.github.stefanbratanov</groupId>

<artifactId>jvm-openai</artifactId>

<version>${version}</version>

</dependency>

OpenAI openAI = OpenAI.newBuilder(System.getenv("OPENAI_API_KEY")).build();

ChatClient chatClient = openAI.chatClient();

CreateChatCompletionRequest createChatCompletionRequest = CreateChatCompletionRequest.newBuilder()

.model(OpenAIModel.GPT_3_5_TURBO)

.message(ChatMessage.userMessage("Who won the world series in 2020?"))

.build();

ChatCompletion chatCompletion = chatClient.createChatCompletion(createChatCompletionRequest);

| API | Status |
|---|---|
| Audio | ✔️ |
| Chat | ✔️ |
| Embeddings | ✔️ |
| Fine-tuning | ✔️ |
| Batch | ✔️ |
| Files | ✔️ |
| Uploads | ✔️ |
| Images | ✔️ |
| Models | ✔️ |
| Moderations | ✔️ |

| API | Status |
|---|---|
| Assistants | ✔️ |
| Threads | ✔️ |
| Messages | ✔️ |
| Runs | ✔️ |
| Run Steps | ✔️ |
| Vector Stores | ✔️ |
| Vector Store Files | ✔️ |
| Vector Store File Batches | ✔️ |

| API | Status |
|---|---|
| Admin API Keys | |
| Invites | ✔️ |
| Users | ✔️ |
| Projects | ✔️ |
| Project Users | ✔️ |
| Project Service Accounts | ✔️ |
| Project API Keys | ✔️ |
| Project rate limits | |
| Audit Logs | ✔️ |
| Usage | |

NOTE: Realtime and Legacy APIs are not supported

- Configure an organization and project

OpenAI openAI = OpenAI.newBuilder(System.getenv("OPENAI_API_KEY"))

.organization("org-zweLLamVlP6c5n66zY334ivs")

.project(System.getenv("PROJECT_ID"))

.build();

- Configure a custom base URL

OpenAI openAI = OpenAI.newBuilder(System.getenv("OPENAI_API_KEY"))

.baseUrl("https://api.foobar.com/v1/")

.build();

- Configure a custom Java `HttpClient`

HttpClient httpClient = HttpClient.newBuilder()

.connectTimeout(Duration.ofSeconds(20))

.executor(Executors.newFixedThreadPool(3))

.proxy(ProxySelector.of(new InetSocketAddress("proxy.example.com", 80)))

.build();

OpenAI openAI = OpenAI.newBuilder(System.getenv("OPENAI_API_KEY"))

.httpClient(httpClient)

.build();

- Configure a timeout for all requests

OpenAI openAI = OpenAI.newBuilder(System.getenv("OPENAI_API_KEY"))

.requestTimeout(Duration.ofSeconds(10))

.build();

- Create chat completion async

ChatClient chatClient = openAI.chatClient();

CreateChatCompletionRequest request = CreateChatCompletionRequest.newBuilder()

.model(OpenAIModel.GPT_3_5_TURBO)

.message(ChatMessage.userMessage("Who won the world series in 2020?"))

.build();

CompletableFuture<ChatCompletion> chatCompletion = chatClient.createChatCompletionAsync(request);

chatCompletion.thenAccept(System.out::println);

- Streaming

ChatClient chatClient = openAI.chatClient();

CreateChatCompletionRequest request = CreateChatCompletionRequest.newBuilder()

.message(ChatMessage.userMessage("Who won the world series in 2020?"))

.stream(true)

.build();

// with java.util.stream.Stream

chatClient.streamChatCompletion(request).forEach(System.out::println);

// with subscriber

chatClient.streamChatCompletion(request, new ChatCompletionStreamSubscriber() {

@Override

public void onChunk(ChatCompletionChunk chunk) {

System.out.println(chunk);

}

@Override

public void onException(Throwable ex) {

// ...

}

@Override

public void onComplete() {

// ...

}

});

- Create image

ImagesClient imagesClient = openAI.imagesClient();

CreateImageRequest createImageRequest = CreateImageRequest.newBuilder()

.model(OpenAIModel.DALL_E_3)

.prompt("A cute baby sea otter")

.build();

Images images = imagesClient.createImage(createImageRequest);

- Create speech

AudioClient audioClient = openAI.audioClient();

SpeechRequest request = SpeechRequest.newBuilder()

.model(OpenAIModel.TTS_1)

.input("The quick brown fox jumped over the lazy dog.")

.voice(Voice.ALLOY)

.build();

Path output = Paths.get("/tmp/speech.mp3");

audioClient.createSpeech(request, output);

- Create translation

AudioClient audioClient = openAI.audioClient();

TranslationRequest request = TranslationRequest.newBuilder()

.model(OpenAIModel.WHISPER_1)

.file(Paths.get("/tmp/german.m4a"))

.build();

String translatedText = audioClient.createTranslation(request);

- List models

ModelsClient modelsClient = openAI.modelsClient();

List<Model> models = modelsClient.listModels();

- Classify if text violates OpenAI's Content Policy

ModerationsClient moderationsClient = openAI.moderationsClient();

ModerationRequest request = ModerationRequest.newBuilder()

.input("I want to bake a cake.")

.build();

Moderation moderation = moderationsClient.createModeration(request);

boolean violence = moderation.results().get(0).categories().violence();

- Create and execute a batch
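The upload step in this example expects a JSONL file in which every line is one request. Here is a minimal JDK-only sketch of producing such a file, assuming the Batch input format described in OpenAI's documentation (`custom_id`, `method`, `url`, `body`). The class `BatchInputFile` and its methods are hypothetical helpers for illustration and are not part of jvm-openai.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper, not part of jvm-openai
final class BatchInputFile {

    /** Escapes the characters that must be escaped inside a JSON string. */
    static String escapeJson(String text) {
        StringBuilder sb = new StringBuilder();
        for (char c : text.toCharArray()) {
            switch (c) {
                case '"' -> sb.append("\\\"");
                case '\\' -> sb.append("\\\\");
                case '\n' -> sb.append("\\n");
                case '\r' -> sb.append("\\r");
                case '\t' -> sb.append("\\t");
                default -> {
                    if (c < 0x20) {
                        // Remaining control characters use the 4-digit hex form
                        sb.append(String.format("\\u%04x", (int) c));
                    } else {
                        sb.append(c);
                    }
                }
            }
        }
        return sb.toString();
    }

    /** Builds one JSONL line: a single chat completion request with its own custom_id. */
    static String requestLine(String customId, String model, String userPrompt) {
        return "{\"custom_id\":\"" + escapeJson(customId) + "\","
            + "\"method\":\"POST\","
            + "\"url\":\"/v1/chat/completions\","
            + "\"body\":{\"model\":\"" + escapeJson(model) + "\","
            + "\"messages\":[{\"role\":\"user\",\"content\":\"" + escapeJson(userPrompt) + "\"}]}}";
    }

    /** Writes one request per line to target and returns the path that was written. */
    static Path write(Path target, String model, List<String> prompts) throws IOException {
        List<String> lines = new ArrayList<>();
        for (int i = 0; i < prompts.size(); i++) {
            lines.add(requestLine("request-" + (i + 1), model, prompts.get(i)));
        }
        return Files.write(target, lines, StandardCharsets.UTF_8);
    }

    public static void main(String[] args) throws IOException {
        Path file = write(Files.createTempFile("batch-requests", ".jsonl"), "gpt-3.5-turbo",
            List.of("Who won the world series in 2020?", "What is 2 + 2?"));
        System.out.println(Files.readString(file));
    }
}
```

The returned path can be passed to `UploadFileRequest.newBuilder().file(...)` as in this section's example. Since Jackson is already a dependency of the library, building the lines with an `ObjectMapper` would be a reasonable alternative to manual escaping.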

// Upload JSONL file containing requests for the batch

FilesClient filesClient = openAI.filesClient();

UploadFileRequest uploadInputFileRequest = UploadFileRequest.newBuilder()

.file(Paths.get("/tmp/batch-requests.jsonl"))

.purpose(Purpose.BATCH)

.build();

File inputFile = filesClient.uploadFile(uploadInputFileRequest);

BatchClient batchClient = openAI.batchClient();

CreateBatchRequest request = CreateBatchRequest.newBuilder()

.inputFileId(inputFile.id())

.endpoint("/v1/chat/completions")

.completionWindow("24h")

.build();

Batch batch = batchClient.createBatch(request);

// check status of the batch

Batch retrievedBatch = batchClient.retrieveBatch(batch.id());

System.out.println(retrievedBatch.status());

- Upload large file in multiple parts
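This example assumes the part files (`/tmp/part1.jsonl`, `/tmp/part2.jsonl`) already exist on disk. Here is a minimal JDK-only sketch of cutting a large file into consecutive byte chunks. `FileSplitter` is a hypothetical helper and not part of jvm-openai. The chunk size is left to the caller: OpenAI's documentation describes a per-part size limit, so treat whatever value you pass as an assumption to check against the current API reference.

```java
import java.io.IOException;
import java.io.InputStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper, not part of jvm-openai
final class FileSplitter {

    /**
     * Splits source into consecutive chunks of at most maxPartBytes each,
     * written as part1.jsonl, part2.jsonl, ... inside targetDir.
     * Returns the part paths in upload order.
     */
    static List<Path> split(Path source, Path targetDir, int maxPartBytes) throws IOException {
        if (maxPartBytes <= 0) {
            throw new IllegalArgumentException("maxPartBytes must be positive");
        }
        List<Path> parts = new ArrayList<>();
        try (InputStream in = Files.newInputStream(source)) {
            byte[] chunk;
            // readNBytes returns fewer bytes only at end of stream, and an empty array once exhausted
            while ((chunk = in.readNBytes(maxPartBytes)).length > 0) {
                Path part = targetDir.resolve("part" + (parts.size() + 1) + ".jsonl");
                Files.write(part, chunk);
                parts.add(part);
            }
        }
        return parts;
    }

    public static void main(String[] args) throws IOException {
        Path dir = Files.createTempDirectory("upload-parts");
        Path source = Files.write(dir.resolve("training_examples.jsonl"), new byte[10]);
        // Tiny chunk size purely for demonstration
        System.out.println(split(source, dir, 4));
    }
}
```

Because the parts are plain byte ranges, a chunk may end in the middle of a JSONL line. That is fine as long as the part IDs are passed to `partIds` in the same order in which the chunks were cut.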

UploadsClient uploadsClient = openAI.uploadsClient();

CreateUploadRequest createUploadRequest = CreateUploadRequest.newBuilder()

.filename("training_examples.jsonl")

.purpose(Purpose.FINE_TUNE)

.bytes(2147483648L)

.mimeType("text/jsonl")

.build();

Upload upload = uploadsClient.createUpload(createUploadRequest);

UploadPart part1 = uploadsClient.addUploadPart(upload.id(), Paths.get("/tmp/part1.jsonl"));

UploadPart part2 = uploadsClient.addUploadPart(upload.id(), Paths.get("/tmp/part2.jsonl"));

CompleteUploadRequest completeUploadRequest = CompleteUploadRequest.newBuilder()

.partIds(List.of(part1.id(), part2.id()))

.build();

Upload completedUpload = uploadsClient.completeUpload(upload.id(), completeUploadRequest);

// the created usable File object

File file = completedUpload.file();

- Build AI Assistant

AssistantsClient assistantsClient = openAI.assistantsClient();

ThreadsClient threadsClient = openAI.threadsClient();

MessagesClient messagesClient = openAI.messagesClient();

RunsClient runsClient = openAI.runsClient();

// Step 1: Create an Assistant

CreateAssistantRequest createAssistantRequest = CreateAssistantRequest.newBuilder()

.name("Math Tutor")

.model(OpenAIModel.GPT_3_5_TURBO_1106)

.instructions("You are a personal math tutor. Write and run code to answer math questions.")

.tool(Tool.codeInterpreterTool())

.build();

Assistant assistant = assistantsClient.createAssistant(createAssistantRequest);

// Step 2: Create a Thread

CreateThreadRequest createThreadRequest = CreateThreadRequest.newBuilder().build();

Thread thread = threadsClient.createThread(createThreadRequest);

// Step 3: Add a Message to a Thread

CreateMessageRequest createMessageRequest = CreateMessageRequest.newBuilder()

.role(Role.USER)

.content("I need to solve the equation `3x + 11 = 14`. Can you help me?")

.build();

ThreadMessage message = messagesClient.createMessage(thread.id(), createMessageRequest);

// Step 4: Run the Assistant

CreateRunRequest createRunRequest = CreateRunRequest.newBuilder()

.assistantId(assistant.id())

.instructions("Please address the user as Jane Doe. The user has a premium account.")

.build();

ThreadRun run = runsClient.createRun(thread.id(), createRunRequest);

// Step 5: Check the Run status

ThreadRun retrievedRun = runsClient.retrieveRun(thread.id(), run.id());

String status = retrievedRun.status();

// Step 6: Display the Assistant's Response

MessagesClient.PaginatedThreadMessages paginatedMessages = messagesClient.listMessages(thread.id(), PaginationQueryParameters.none(), Optional.empty());

List<ThreadMessage> messages = paginatedMessages.data();

- Build AI Assistant with File Search Enabled

AssistantsClient assistantsClient = openAI.assistantsClient();

ThreadsClient threadsClient = openAI.threadsClient();

MessagesClient messagesClient = openAI.messagesClient();

RunsClient runsClient = openAI.runsClient();

VectorStoresClient vectorStoresClient = openAI.vectorStoresClient();

FilesClient filesClient = openAI.filesClient();

VectorStoreFileBatchesClient vectorStoreFileBatchesClient = openAI.vectorStoreFileBatchesClient();

// Step 1: Create a new Assistant with File Search Enabled

CreateAssistantRequest createAssistantRequest = CreateAssistantRequest.newBuilder()

.name("Financial Analyst Assistant")

.model(OpenAIModel.GPT_4_TURBO)

.instructions("You are an expert financial analyst. Use your knowledge base to answer questions about audited financial statements.")

.tool(Tool.fileSearchTool())

.build();

Assistant assistant = assistantsClient.createAssistant(createAssistantRequest);

// Step 2: Upload files and add them to a Vector Store

CreateVectorStoreRequest createVectorStoreRequest = CreateVectorStoreRequest.newBuilder()

.name("Financial Statements")

.build();

VectorStore vectorStore = vectorStoresClient.createVectorStore(createVectorStoreRequest);

UploadFileRequest uploadFileRequest1 = UploadFileRequest.newBuilder()

.file(Paths.get("edgar/goog-10k.pdf"))

.purpose(Purpose.ASSISTANTS)

.build();

File file1 = filesClient.uploadFile(uploadFileRequest1);

UploadFileRequest uploadFileRequest2 = UploadFileRequest.newBuilder()

.file(Paths.get("edgar/brka-10k.txt"))

.purpose(Purpose.ASSISTANTS)

.build();

File file2 = filesClient.uploadFile(uploadFileRequest2);

CreateVectorStoreFileBatchRequest createVectorStoreFileBatchRequest = CreateVectorStoreFileBatchRequest.newBuilder()

.fileIds(List.of(file1.id(), file2.id()))

.build();

VectorStoreFileBatch batch = vectorStoreFileBatchesClient.createVectorStoreFileBatch(vectorStore.id(), createVectorStoreFileBatchRequest);

// need to query the status of the file batch for completion

vectorStoreFileBatchesClient.retrieveVectorStoreFileBatch(vectorStore.id(), batch.id());

// Step 3: Update the assistant to use the new Vector Store

ModifyAssistantRequest modifyAssistantRequest = ModifyAssistantRequest.newBuilder()

.toolResources(ToolResources.fileSearchToolResources(vectorStore.id()))

.build();

assistantsClient.modifyAssistant(assistant.id(), modifyAssistantRequest);

// Step 4: Create a thread

CreateThreadRequest.Message message = CreateThreadRequest.Message.newBuilder()

.role(Role.USER)

.content("How many shares of AAPL were outstanding at the end of October 2023?")

.build();

CreateThreadRequest createThreadRequest = CreateThreadRequest.newBuilder()

.message(message)

.build();

Thread thread = threadsClient.createThread(createThreadRequest);

// Step 5: Create a run and check the output

CreateRunRequest createRunRequest = CreateRunRequest.newBuilder()

.assistantId(assistant.id())

.instructions("Please address the user as Jane Doe. The user has a premium account.")

.build();

ThreadRun run = runsClient.createRun(thread.id(), createRunRequest);

// check the run status

ThreadRun retrievedRun = runsClient.retrieveRun(thread.id(), run.id());

String status = retrievedRun.status();

// display the Assistant's Response

MessagesClient.PaginatedThreadMessages paginatedMessages = messagesClient.listMessages(thread.id(), PaginationQueryParameters.none(), Optional.empty());

List<ThreadMessage> messages = paginatedMessages.data();

- Create a run and stream the result of executing the run (Assistants Streaming)

RunsClient runsClient = openAI.runsClient();

CreateRunRequest createRunRequest = CreateRunRequest.newBuilder()

.assistantId(assistant.id())

.instructions("Please address the user as Jane Doe. The user has a premium account.")

.stream(true)

.build();

// with java.util.stream.Stream

runsClient.createRunAndStream(thread.id(), createRunRequest).forEach(assistantStreamEvent -> {

System.out.println(assistantStreamEvent.event());

System.out.println(assistantStreamEvent.data());

});

// with subscriber

runsClient.createRunAndStream(thread.id(), createRunRequest, new AssistantStreamEventSubscriber() {

@Override

public void onThread(String event, Thread thread) {

// ...

}

@Override

public void onThreadRun(String event, ThreadRun threadRun) {

// ...

}

@Override

public void onThreadRunStep(String event, ThreadRunStep threadRunStep) {

// ...

}

@Override

public void onThreadRunStepDelta(String event, ThreadRunStepDelta threadRunStepDelta) {

// ...

}

@Override

public void onThreadMessage(String event, ThreadMessage threadMessage) {

// ...

}

@Override

public void onThreadMessageDelta(String event, ThreadMessageDelta threadMessageDelta) {

// ...

}

@Override

public void onUnknownEvent(String event, String data) {

// ...

}

@Override

public void onException(Throwable ex) {

// ...

}

@Override

public void onComplete() {

// ...

}

});

// "createThreadAndRunAndStream" and "submitToolOutputsAndStream" methods are also available

- List all the users in an organization
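OpenAI's list endpoints are cursor-paginated, so one list call may return only the first page. Here is a minimal sketch of walking every page, assuming the response exposes the items, the ID of the last item and a "has more" flag as OpenAI's API reference describes. `CursorPagination` and `Page` are hypothetical stand-ins. Wiring them to `usersClient.listUsers` depends on that method's argument order and on the response accessors, neither of which this README spells out.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Optional;
import java.util.function.Function;

// Hypothetical helper, not part of jvm-openai
final class CursorPagination {

    /** Hypothetical page shape mirroring OpenAI list responses (data, last_id, has_more). */
    record Page<T>(List<T> data, String lastId, boolean hasMore) {}

    /**
     * Calls fetchPage with an empty cursor first, then with the previous page's lastId,
     * until a page reports that nothing more is available.
     */
    static <T> List<T> fetchAll(Function<Optional<String>, Page<T>> fetchPage) {
        List<T> all = new ArrayList<>();
        Optional<String> after = Optional.empty();
        while (true) {
            Page<T> page = fetchPage.apply(after);
            all.addAll(page.data());
            if (!page.hasMore() || page.lastId() == null) {
                return all;
            }
            after = Optional.of(page.lastId());
        }
    }

    public static void main(String[] args) {
        // Fake two-page source standing in for a real client call
        List<String> users = fetchAll(after -> after.isEmpty()
            ? new Page<String>(List.of("user-1", "user-2"), "user-2", true)
            : new Page<String>(List.of("user-3"), "user-3", false));
        System.out.println(users);
    }
}
```

Keeping the cursor logic behind a `Function` makes the loop independent of any particular client, so the same helper could serve other paginated list calls.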

OpenAI openAI = OpenAI.newBuilder()

.adminKey(System.getenv("OPENAI_ADMIN_KEY"))

.build();

UsersClient usersClient = openAI.usersClient();

List<User> users = usersClient.listUsers(Optional.empty(), Optional.empty()).data();

- List user actions and configuration changes within an organization

OpenAI openAI = OpenAI.newBuilder()

.adminKey(System.getenv("OPENAI_ADMIN_KEY"))

.build();

AuditLogsClient auditLogsClient = openAI.auditLogsClient();

ListAuditLogsQueryParameters queryParameters = ListAuditLogsQueryParameters.newBuilder()

.eventTypes(List.of("invite.sent", "invite.deleted"))

.build();

List<AuditLog> auditLogs = auditLogsClient.listAuditLogs(queryParameters).data();
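The batch, vector store file batch and run examples above each retrieve a status only once, while in practice the status is usually polled until it is terminal. Here is a minimal JDK-only sketch of such a loop. `StatusPoller` is a hypothetical helper and not part of jvm-openai, and the set of terminal status strings is an assumption the caller supplies based on OpenAI's API reference.

```java
import java.time.Duration;
import java.util.Iterator;
import java.util.List;
import java.util.Set;
import java.util.function.Supplier;

// Hypothetical helper, not part of jvm-openai
final class StatusPoller {

    /**
     * Calls fetchStatus until it returns a value contained in terminalStatuses,
     * sleeping for interval between attempts. Throws IllegalStateException if no
     * terminal status is seen within maxAttempts calls or the thread is interrupted.
     */
    static String pollUntilTerminal(Supplier<String> fetchStatus, Set<String> terminalStatuses,
                                    Duration interval, int maxAttempts) {
        for (int attempt = 1; attempt <= maxAttempts; attempt++) {
            String status = fetchStatus.get();
            if (terminalStatuses.contains(status)) {
                return status;
            }
            if (attempt < maxAttempts) {
                try {
                    java.lang.Thread.sleep(interval.toMillis());
                } catch (InterruptedException e) {
                    // Restore the interrupt flag so callers can still observe it
                    java.lang.Thread.currentThread().interrupt();
                    throw new IllegalStateException("Interrupted while polling", e);
                }
            }
        }
        throw new IllegalStateException("No terminal status after " + maxAttempts + " attempts");
    }

    public static void main(String[] args) {
        // Fake status sequence standing in for repeated retrieve calls
        Iterator<String> statuses = List.of("queued", "in_progress", "completed").iterator();
        String result = pollUntilTerminal(statuses::next,
            Set.of("completed", "failed", "cancelled", "expired"), Duration.ofMillis(10), 5);
        System.out.println(result);
    }
}
```

With the names from the assistant examples, the supplier could be `() -> runsClient.retrieveRun(thread.id(), run.id()).status()`. `java.lang.Thread` is written out in full because those examples also use a library type named `Thread`.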


Similar Open Source Tools


nb_utils

nb_utils is a Flutter package that provides a collection of useful methods, extensions, widgets, and utilities to simplify Flutter app development. It includes features like shared preferences, text styles, decorations, widgets, extensions for strings, colors, build context, date time, device, numbers, lists, scroll controllers, system methods, network utils, JWT decoding, and custom dialogs. The package aims to enhance productivity and streamline common tasks in Flutter development.

mediapipe-rs

MediaPipe-rs is a Rust library designed for MediaPipe tasks on WasmEdge WASI-NN. It offers easy-to-use low-code APIs similar to mediapipe-python, with low overhead and flexibility for custom media input. The library supports various tasks like object detection, image classification, gesture recognition, and more, including TfLite models, TF Hub models, and custom models. Users can create task instances, run sessions for pre-processing, inference, and post-processing, and speed up processing by reusing sessions. The library also provides support for audio tasks using audio data from symphonia, ffmpeg, or raw audio. Users can choose between CPU, GPU, or TPU devices for processing.

langchain-rust

LangChain Rust is a library for building applications with Large Language Models (LLMs) through composability. It provides a set of tools and components that can be used to create conversational agents, document loaders, and other applications that leverage LLMs. LangChain Rust supports a variety of LLMs, including OpenAI, Azure OpenAI, Ollama, and Anthropic Claude. It also supports a variety of embeddings, vector stores, and document loaders. LangChain Rust is designed to be easy to use and extensible, making it a great choice for developers who want to build applications with LLMs.

solon-ai

Solon-AI is a Java AI & MCP application development framework that supports various AI development capabilities. It is designed to be versatile, efficient, and open for integration with frameworks like SpringBoot, jFinal, and Vert.x. The framework provides examples of embedding solon-ai(& mcp) and showcases interfaces for chat models, function calling, vision, RAG (EmbeddingModel, Repository, DocumentLoader, RerankingModel), Ai Flow, MCP server, MCP client, and MCP Proxy. Solon-AI is part of the Solon project ecosystem, which includes other repositories for different functionalities.

openai-scala-client

This is a no-nonsense async Scala client for OpenAI API supporting all the available endpoints and params including streaming, chat completion, vision, and voice routines. It provides a single service called OpenAIService that supports various calls such as Models, Completions, Chat Completions, Edits, Images, Embeddings, Batches, Audio, Files, Fine-tunes, Moderations, Assistants, Threads, Thread Messages, Runs, Run Steps, Vector Stores, Vector Store Files, and Vector Store File Batches. The library aims to be self-contained with minimal dependencies and supports API-compatible providers like Azure OpenAI, Azure AI, Anthropic, Google Vertex AI, Groq, Grok, Fireworks AI, OctoAI, TogetherAI, Cerebras, Mistral, Deepseek, Ollama, FastChat, and more.

island-ai

island-ai is a TypeScript toolkit tailored for developers engaging with structured outputs from Large Language Models. It offers streamlined processes for handling, parsing, streaming, and leveraging AI-generated data across various applications. The toolkit includes packages like zod-stream for interfacing with LLM streams, stream-hooks for integrating streaming JSON data into React applications, and schema-stream for JSON streaming parsing based on Zod schemas. Additionally, related packages like @instructor-ai/instructor-js focus on data validation and retry mechanisms, enhancing the reliability of data processing workflows.

Code

A3S Code is an embeddable AI coding agent framework in Rust that allows users to build agents capable of reading, writing, and executing code with tool access, planning, and safety controls. It is production-ready with features like permission system, HITL confirmation, skill-based tool restrictions, and error recovery. The framework is extensible with 19 trait-based extension points and supports lane-based priority queue for scalable multi-machine task distribution.

agents-flex

Agents-Flex is an LLM application framework for Java, similar to LangChain. It provides a set of tools and components for building LLM applications, including LLM Visit, Prompt and Prompt Template Loader, Function Calling Definer, Invoker and Running, Memory, Embedding, Vector Storage, Resource Loaders, Document, Splitter, Loader, Parser, LLMs Chain, and Agents Chain.

retinify

Retinify is an advanced AI-powered stereo vision library designed for robotics, enabling real-time, high-precision 3D perception by leveraging GPU and NPU acceleration. It is open source under Apache-2.0 license, offers high precision 3D mapping and object recognition, runs computations on GPU for fast performance, accepts stereo images from any rectified camera setup, is cost-efficient using minimal hardware, and has minimal dependencies on CUDA Toolkit, cuDNN, and TensorRT. The tool provides a pipeline for stereo matching and supports various image data types independently of OpenCV.

zenu

ZeNu is a high-performance deep learning framework implemented in pure Rust, featuring a pure Rust implementation for safety and performance, GPU performance comparable to PyTorch with CUDA support, a simple and intuitive API, and a modular design for easy extension. It supports various layers like Linear, Convolution 2D, LSTM, and optimizers such as SGD and Adam. ZeNu also provides device support for CPU and CUDA (NVIDIA GPU) with CUDA 12.3 and cuDNN 9. The project structure includes main library, automatic differentiation engine, neural network layers, matrix operations, optimization algorithms, CUDA implementation, and other support crates. Users can find detailed implementations like MNIST classification, CIFAR10 classification, and ResNet implementation in the examples directory. Contributions to ZeNu are welcome under the MIT License.

EAGLE

Eagle is a family of Vision-Centric High-Resolution Multimodal LLMs that enhance multimodal LLM perception using a mix of vision encoders and various input resolutions. The model features a channel-concatenation-based fusion for vision experts with different architectures and knowledge, supporting up to over 1K input resolution. It excels in resolution-sensitive tasks like optical character recognition and document understanding.

pgmpy

pgmpy is a Python library for causal and probabilistic modeling using graphical models. It provides a uniform API for building, learning, and analyzing models, such as Bayesian Networks, Dynamic Bayesian Networks, Directed Acyclic Graphs (DAGs), and Structural Equation Models (SEMs). By integrating tools from both probabilistic inference and causal inference, pgmpy enables users to seamlessly transition between predictive and causal analyses. Key features include causal discovery/structure learning, causal validation, parameter learning, probabilistic inference, causal inference, and simulations. The library offers resources such as example notebooks, tutorial notebooks, blog posts, documentation, bug reports, and feature requests.

zig-aio

zig-aio is a library that provides an io_uring-like asynchronous API and coroutine-powered IO tasks for the Zig programming language. It offers support for different operating systems and backends, such as io_uring, iocp, and posix. The library aims to provide efficient IO operations by leveraging coroutines and async IO mechanisms. Users can create servers and clients with ease using the provided API functions for socket operations, sending and receiving data, and managing connections.

Janus

Janus is a series of unified multimodal understanding and generation models, including Janus-Pro, Janus, and JanusFlow. Janus-Pro is an advanced version that improves both multimodal understanding and visual generation significantly. Janus decouples visual encoding for unified multimodal understanding and generation, surpassing previous models. JanusFlow harmonizes autoregression and rectified flow for unified multimodal understanding and generation, achieving comparable or superior performance to specialized models. The models are available for download and usage, supporting a broad range of research in academic and commercial communities.

SwiftAgent

A type-safe, declarative framework for building AI agents in Swift, SwiftAgent is built on Apple FoundationModels. It allows users to compose agents by combining Steps in a declarative syntax similar to SwiftUI. The framework ensures compile-time checked input/output types, native Apple AI integration, structured output generation, and built-in security features like permission, sandbox, and guardrail systems. SwiftAgent is extensible with MCP integration, distributed agents, and a skills system. Users can install SwiftAgent with Swift 6.2+ on iOS 26+, macOS 26+, or Xcode 26+ using Swift Package Manager.

For similar tasks

AIHub

AIHub is a client that integrates the capabilities of multiple large models, allowing users to quickly and easily build their own personalized AI assistants. It supports custom plugins for endless possibilities. The tool provides powerful AI capabilities, rich configuration options, customization of AI assistants for text and image conversations, AI drawing, installation of custom plugins, personal knowledge base building, AI calendar generation, support for AI mini programs, and ongoing development of additional features. Users can download the application package from the release section, resolve issues related to macOS app installation, and contribute ideas by submitting issues. The project development involves installation, development, and building processes for different operating systems.


second-brain-ai-assistant-course

This open-source course teaches how to build an advanced RAG and LLM system using LLMOps and ML systems best practices. It helps you create an AI assistant that leverages your personal knowledge base to answer questions, summarize documents, and provide insights. The course covers topics such as LLM system architecture, pipeline orchestration, large-scale web crawling, model fine-tuning, and advanced RAG features. It is suitable for ML/AI engineers and data/software engineers & data scientists looking to level up to production AI systems. The course is free, with minimal costs for tools like OpenAI's API and Hugging Face's Dedicated Endpoints. Participants will build two separate Python applications for offline ML pipelines and online inference pipeline.

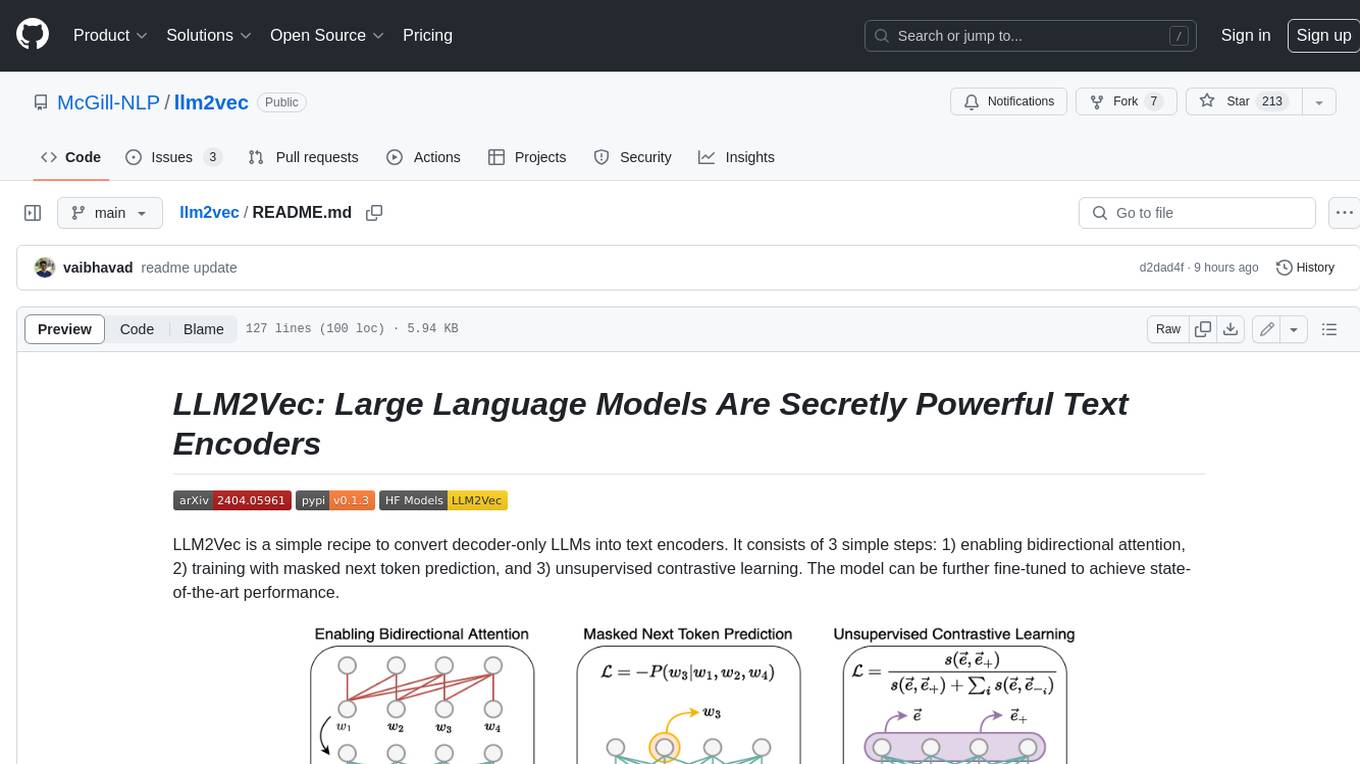

llm2vec

LLM2Vec is a simple recipe to convert decoder-only LLMs into text encoders. It consists of 3 simple steps: 1) enabling bidirectional attention, 2) training with masked next token prediction, and 3) unsupervised contrastive learning. The model can be further fine-tuned to achieve state-of-the-art performance.

marvin

Marvin is a lightweight AI toolkit for building natural language interfaces that are reliable, scalable, and easy to trust. Each of Marvin's tools is simple and self-documenting, using AI to solve common but complex challenges like entity extraction, classification, and generating synthetic data. Each tool is independent and incrementally adoptable, so you can use them on their own or in combination with any other library. Marvin is also multi-modal, supporting both image and audio generation as well as using images as inputs for extraction and classification. Marvin is for developers who care more about _using_ AI than _building_ AI, and we are focused on creating an exceptional developer experience. Marvin users should feel empowered to bring tightly-scoped "AI magic" into any traditional software project with just a few extra lines of code. Marvin aims to merge the best practices for building dependable, observable software with the best practices for building with generative AI into a single, easy-to-use library. It's a serious tool, but we hope you have fun with it. Marvin is open-source, free to use, and made with 💙 by the team at Prefect.

curated-transformers

Curated Transformers is a transformer library for PyTorch that provides state-of-the-art models composed of reusable components. It supports various transformer architectures, including encoders like ALBERT, BERT, and RoBERTa, and decoders like Falcon, Llama, and MPT. The library emphasizes consistent type annotations, minimal dependencies, and ease of use for education and research. It has been production-tested by Explosion and will be the default transformer implementation in spaCy 3.7.

txtai

Txtai is an all-in-one embeddings database for semantic search, LLM orchestration, and language model workflows. It combines vector indexes, graph networks, and relational databases to enable vector search with SQL, topic modeling, retrieval augmented generation, and more. Txtai can stand alone or serve as a knowledge source for large language models (LLMs). Key features include vector search with SQL, object storage, topic modeling, graph analysis, multimodal indexing, embedding creation for various data types, pipelines powered by language models, workflows to connect pipelines, and support for Python, JavaScript, Java, Rust, and Go. Txtai is open-source under the Apache 2.0 license.

bert4torch

**bert4torch** is a high-level framework for training and deploying transformer models in PyTorch. It provides a simple and efficient API for building, training, and evaluating transformer models, and supports a wide range of pre-trained models, including BERT, RoBERTa, ALBERT, XLNet, and GPT-2. bert4torch also includes a number of useful features, such as data loading, tokenization, and model evaluation. It is a powerful and versatile tool for natural language processing tasks.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers
- Track LLM usage on a per user and per organization basis
- Block or redact requests containing PIIs
- Improve LLM reliability with failovers, retries and caching
- Distribute API keys with rate limits and cost limits for internal development/production use cases
- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.