simple-dataengineering-ai-stack

Stars: 156

This repository provides curated, dockerized blueprints for building and demoing modern data and AI platforms. Users can spin up end-to-end environments including data lake foundations, pipeline orchestration, observability, and AI-friendly tooling with just a few commands. The vision is to accelerate experimentation, stay modular, promote best practices, and bridge different personas in data and AI fields. The repository includes various directories focusing on different aspects of data engineering and AI, such as data infrastructure, data pipeline orchestration, AI-powered job orchestration, and more. Users can choose a stack, launch it locally, and compose their platform by running multiple stacks side-by-side. Typical use cases include prototyping a lakehouse, trialing ETL & AI pipelines, providing sandboxes for analysts, and validating monitoring/backup strategies.

README:

Build and demo modern data and AI platforms without waiting on infrastructure tickets. This repository collects curated, dockerized blueprints that let data engineers, ML teams, and platform builders spin up end-to-end environments—data lake foundations, pipeline orchestration, observability, and AI-friendly tooling—in a few commands.

- Accelerate experimentation: Stand up realistic data/AI environments locally or on a single VM, then iterate on pipelines, models, and dashboards with production-inspired defaults.

- Stay modular: Each stack is self-contained and composable—pick the lakehouse, orchestration, or monitoring pieces you need today and combine them as your platform grows.

- Promote best practices: Included services cover security, backups, health checks, and resource monitoring so teams focus on insights, not plumbing.

- Bridge personas: Empower data engineers, AI engineers, analytics developers, and operators to collaborate against the same sandbox with role-aligned interfaces.

| Directory | Focus | Highlights | Docs |
|---|---|---|---|
| data-Infrastructure/ | Platform foundations | Opinionated essays covering the why behind stack choices; start with the hidden pitfalls that derail data platforms before they scale | The Hidden Problems in Data Infrastructure |
| datalake/ | Data infrastructure | PostgreSQL-based lake with connection pooling, Redis cache, no-code access, backups, and uptime monitoring | Postgres Lake README |
| data_pipeline_orchestration/ | Data & AI engineering | Apache Airflow bundle with MinIO object storage, customizable ETL worker, resource monitoring, and helper scripts | Airflow Stack README |
| ducklake-ai-platform/ | Lakehouse + AI workspace | DuckDB + DuckLake core with Marimo notebooks, MinIO object storage, Postgres metadata, and vector search-ready defaults | DuckLake README |
| dataengineering-dashboard-vision/ | Observability agent | Conversational Grafana + Prometheus assistant that delivers root-cause context and anomaly summaries via chat | Dashboard Agent README |
| dwh-rag-framework/ | Warehouse-first RAG lab | DuckDB snapshots feeding LightRAG indexing with Marimo notebooks and Cronicle automation for agent validation | RAG Framework README |
| n8n-data-ai-orchestration/ | AI-powered job orchestration | Customer retention workflow that blends SQL, enrichment, OpenAI strategy generation, Slack/email reporting, and failure alerting in n8n | n8n Flow README |
| mcp-data-server/ | Universal data loader MCP | Format-agnostic FastAPI server with auto-detect parsers, DuckDB SQL querying, and REST endpoints for instant file-to-query workflows | MCP Data Server README |
| data-agent-sdk/ | Data engineering agent SDK | Minimal SDK for building data agents with SQL/Polars tools, governance hooks, lineage tracking, and MCP server support in ~2,000 lines | Data Agent SDK README |
| python-redis-streaming/ | Streaming ingestion engine | Async Python + Redis Streams + Postgres stack with uv tooling, DLQ handling, and CLI helpers for monitoring and benchmarks | Python Redis Streaming README |
| redis-postgres-pipeline/ | High-performance pipeline | Production-ready data pipeline with Redis queues, dedup, caching, Postgres 18 async I/O, UNLOGGED staging, materialized views, and Polars; handles 500M records without Spark | Redis Postgres Pipeline README |
| postgres-duckdb-sync/ | Postgres → DuckDB sync lab | 150-line Polars loop that copies live Postgres rows to DuckDB via Parquet, with SQLite checkpoints, schema drift detection, and soft-delete support, exactly as the “Copying Postgres to DuckDB” post prescribes | Postgres → DuckDB Sync README |
| spark-to-polars-migration/ | Spark-to-single-node rewrite lab | Side-by-side Spark UDF baseline with Polars and DuckDB replacements, Dockerized for benchmarking single-node performance | Spark-to-Polars README |
| data-pipeline-security/ | Data Pipeline Security | Secrets & Identity | Data-pipeline-security README |
| elasticsearch-vs-vector-search/ | Search architecture lab | Hands-on comparison of Elasticsearch keyword search vs pgvector semantic search with hybrid approach, performance benchmarks, and production decision framework | Elasticsearch vs Vector Search README |
| knowledge-search-hybrid/ | Local hybrid search stack | Config-driven Lucene BM25 + local embeddings + HNSW kNN + RAG answers in one container; Polars ETL on JSONL drops, autocomplete, and disk-backed indexes | Hybrid Knowledge Search README |
| mdm-polars-duckdb/ | MDM golden customer table | Implements “Creating One Clean Customer Table from 7 Conflicting Sources” with Polars, Pandera, RapidFuzz, and DuckDB; includes synthetic messy inputs, uv workflow, and Docker image for five-minute runs | Polars + DuckDB Golden Table README |
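The postgres-duckdb-sync/ entry mentions checkpoints and soft-delete support. As a rough illustration of that idea only, and not the repository's actual loop, the sketch below uses three in-memory `sqlite3` databases as stand-ins for Postgres, DuckDB, and the checkpoint store. The `customers` table, its `updated_at`/`deleted_at` columns, and the `sync_once` helper are all hypothetical; the Parquet hand-off and schema drift detection are left out.

```python
import sqlite3


def sync_once(source, target, state, table="customers"):
    """Copy rows changed since the last checkpoint; return how many were seen."""
    state.execute(
        "CREATE TABLE IF NOT EXISTS checkpoints (tbl TEXT PRIMARY KEY, last_seen TEXT)"
    )
    row = state.execute(
        "SELECT last_seen FROM checkpoints WHERE tbl = ?", (table,)
    ).fetchone()
    last_seen = row[0] if row else ""  # empty string sorts before any ISO timestamp

    changed = source.execute(
        f"SELECT id, name, updated_at, deleted_at FROM {table} "
        "WHERE updated_at > ? ORDER BY updated_at",
        (last_seen,),
    ).fetchall()

    for id_, name, updated_at, deleted_at in changed:
        if deleted_at is not None:
            # Soft-deleted upstream: remove the row from the analytical copy.
            target.execute(f"DELETE FROM {table} WHERE id = ?", (id_,))
        else:
            # Insert new rows or overwrite changed ones, keyed on the primary key.
            target.execute(
                f"INSERT OR REPLACE INTO {table} (id, name, updated_at) VALUES (?, ?, ?)",
                (id_, name, updated_at),
            )

    if changed:
        # Advance the checkpoint to the newest timestamp seen in this batch.
        state.execute(
            "INSERT OR REPLACE INTO checkpoints (tbl, last_seen) VALUES (?, ?)",
            (table, changed[-1][2]),
        )
    target.commit()
    state.commit()
    return len(changed)


# Stand-in databases (assumptions: real stacks would use Postgres and DuckDB).
source = sqlite3.connect(":memory:")
target = sqlite3.connect(":memory:")
state = sqlite3.connect(":memory:")
source.execute(
    "CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, updated_at TEXT, deleted_at TEXT)"
)
target.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, updated_at TEXT)")
source.executemany(
    "INSERT INTO customers VALUES (?, ?, ?, ?)",
    [(1, "Ada", "2024-01-01T00:00:00", None), (2, "Lin", "2024-01-02T00:00:00", None)],
)

first = sync_once(source, target, state)  # initial load
source.execute(
    "UPDATE customers SET deleted_at = '2024-01-03T00:00:00', "
    "updated_at = '2024-01-03T00:00:00' WHERE id = 1"
)
second = sync_once(source, target, state)  # picks up the soft delete
third = sync_once(source, target, state)  # nothing new
remaining = target.execute("SELECT id, name FROM customers ORDER BY id").fetchall()
```

One design caveat: a strict `updated_at >` comparison can miss rows that commit later with the same timestamp as the checkpoint, so a real loop would likely add an overlap window or a tie-breaking key.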

Pair the conceptual deep dives with the hands-on stack READMEs: skim data-Infrastructure/ to understand the platform philosophy, then jump into the stack directory that matches your next experiment for deployment steps and credentials.

- Install prerequisites: Docker + Docker Compose v2 on a machine with adequate CPU, RAM, and disk (see stack-specific READMEs for sizing).

- Clone the repo: `git clone https://github.com/hottechstack/simple-data-ai-stack.git`, then `cd simple-data-ai-stack`.

- Choose a stack: Browse the directories above and open the corresponding README for detailed instructions.

- Launch locally: Most stacks run with a single command (`docker compose up -d`, `./start_pipeline.sh start`, etc.). Scripts expose health checks, sample data loaders, and log helpers to keep you moving.

- Compose your platform: Run stacks side-by-side for a fuller platform: pipe object storage into the SQL lake, orchestrate model feature jobs, or layer BI tooling on top.
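After launching a stack, it can help to wait for its services to report healthy before loading data. The sketch below is one generic way to do that with the Python standard library; `wait_until` and `http_ok` are hypothetical helper names, and the health URL shown in the usage note is an assumption, since each stack's README defines its own ports and endpoints.

```python
import time
import urllib.error
import urllib.request


def http_ok(url, timeout_s=2.0):
    """Return True if `url` answers with an HTTP 2xx status."""
    try:
        with urllib.request.urlopen(url, timeout=timeout_s) as resp:
            return 200 <= resp.status < 300
    except (urllib.error.URLError, OSError):
        # Connection refused, timeout, or a non-2xx response: not ready yet.
        return False


def wait_until(probe, timeout_s=60.0, interval_s=2.0):
    """Call `probe()` repeatedly until it returns True or `timeout_s` elapses."""
    deadline = time.monotonic() + timeout_s
    while True:
        if probe():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval_s)
```

A typical call would look like `wait_until(lambda: http_ok("http://localhost:8080/health"))`, with the URL replaced by whatever the chosen stack actually exposes. Keeping the probe separate from the retry loop lets the same loop wait on a database connection or a file as well.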

- Prototype a lakehouse with production-grade components before committing to cloud services.

- Trial ETL & AI feature pipelines with real datasets and observe resource footprints.

- Provide analysts and business users a sandbox with self-service interfaces (NocoDB, pgAdmin, dashboards).

- Validate monitoring/backup strategies in isolation before promoting to shared environments.

- Land structured/unstructured data via MinIO or direct DB ingestion.

- Transform using Airflow-managed ETL jobs powered by DuckDB and Polars.

- Serve & explore through PostgreSQL, NocoDB, BI tools, or custom APIs.

- Observe everything with built-in uptime checks, metrics dashboards, and automated backups.

The stacks are designed to connect: object storage flows into transformation jobs, refined outputs land back into the data lake, and monitoring tools keep the feedback loop tight.
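The land, transform, serve, observe flow can be pictured with a toy example. This is a minimal sketch using only the Python standard library: an in-memory CSV string stands in for an object landed in MinIO, a plain aggregation stands in for a DuckDB or Polars job, and `sqlite3` stands in for PostgreSQL. The function names, the `customer_totals` table, and the sample data are all made up for illustration.

```python
import csv
import io
import sqlite3
from collections import defaultdict

# Sample "landed" file (hypothetical data).
RAW_CSV = """order_id,customer,amount
1,acme,120.50
2,acme,79.50
3,globex,300.00
"""


def land(raw_text):
    """Land: parse a raw file as it might arrive in object storage."""
    return list(csv.DictReader(io.StringIO(raw_text)))


def transform(rows):
    """Transform: total order amount per customer, sorted by customer name."""
    totals = defaultdict(float)
    for row in rows:
        totals[row["customer"]] += float(row["amount"])
    return sorted(totals.items())


def serve(conn, totals):
    """Serve: write the refined output to a queryable SQL table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customer_totals (customer TEXT PRIMARY KEY, total REAL)"
    )
    conn.executemany("INSERT OR REPLACE INTO customer_totals VALUES (?, ?)", totals)
    conn.commit()


def observe(conn, expected_rows):
    """Observe: a basic row-count check on the served table."""
    (count,) = conn.execute("SELECT COUNT(*) FROM customer_totals").fetchone()
    return count == expected_rows


conn = sqlite3.connect(":memory:")
totals = transform(land(RAW_CSV))
serve(conn, totals)
healthy = observe(conn, expected_rows=len(totals))
```

In the actual stacks each stage is a separate service, so the hand-offs happen over object storage and database connections rather than function calls.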

- ✅ Vector databases + search architecture comparison (see elasticsearch-vs-vector-search/).

- Streaming ingestion profile (Kafka/Redpanda + stream processing + materialized views).

- Notebook & model experimentation workspace with GPU-ready containers.

- Terraform modules to mirror these blueprints in managed cloud environments.

Have an idea or internal stack you want to share? Contributions are welcome—open an issue or PR to propose a new module or enhancement.

- Fork the repository and work inside a dedicated directory for your stack.

- Document your stack thoroughly (architecture, environment variables, health checks, teardown steps).

- Reuse existing patterns for Docker Compose profiles, scripts, and monitoring hooks to keep experiences consistent.

- Submit a PR describing the use case, prerequisites, and any sample data included.

Unless otherwise stated in a subdirectory, content is provided as-is for educational and production experimentation. Review upstream container licenses before deploying in regulated environments.

Alternative AI tools for simple-dataengineering-ai-stack

Similar Open Source Tools

synmetrix

Synmetrix is an open source data engineering platform and semantic layer for centralized metrics management. It provides a complete framework for modeling, integrating, transforming, aggregating, and distributing metrics data at scale. Key features include data modeling and transformations, semantic layer for unified data model, scheduled reports and alerts, versioning, role-based access control, data exploration, caching, and collaboration on metrics modeling. Synmetrix leverages Cube.js to consolidate metrics from various sources and distribute them downstream via a SQL API. Use cases include data democratization, business intelligence and reporting, embedded analytics, and enhancing accuracy in data handling and queries. The tool speeds up data-driven workflows from metrics definition to consumption by combining data engineering best practices with self-service analytics capabilities.

mlcraft

Synmetrix (prev. MLCraft) is an open source data engineering platform and semantic layer for centralized metrics management. It provides a complete framework for modeling, integrating, transforming, aggregating, and distributing metrics data at scale. Key features include data modeling and transformations, semantic layer for unified data model, scheduled reports and alerts, versioning, role-based access control, data exploration, caching, and collaboration on metrics modeling. Synmetrix leverages Cube (Cube.js) for flexible data models that consolidate metrics from various sources, enabling downstream distribution via a SQL API for integration into BI tools, reporting, dashboards, and data science. Use cases include data democratization, business intelligence, embedded analytics, and enhancing accuracy in data handling and queries. The tool speeds up data-driven workflows from metrics definition to consumption by combining data engineering best practices with self-service analytics capabilities.

aistore

AIStore is a lightweight object storage system designed for AI applications. It is highly scalable, reliable, and easy to use. AIStore can be deployed on any commodity hardware, and it can be used to store and manage large datasets for deep learning and other AI applications.

positronic

Positronic is an end-to-end toolkit for building ML-driven robotics systems, aiming to simplify data collection, messy data handling, and complex deployment in the field of robotics. It provides a Python-native stack for real-life ML robotics, covering hardware integration, dataset curation, policy training, deployment, and monitoring. The toolkit is designed to make professional-grade ML robotics approachable, without the need for ROS. Positronic offers solutions for data ops, hardware drivers, unified inference API, and iteration workflows, enabling teams to focus on developing manipulation systems for robots.

ksail

KSail is a tool that bundles common Kubernetes tooling into a single binary, providing a unified workflow for creating clusters, deploying workloads, and operating cloud-native stacks across different distributions and providers. It eliminates the need for multiple CLI tools and bespoke scripts, offering features like one binary for provisioning and deployment, support for various cluster configurations, mirror registries, GitOps integration, customizable stack selection, built-in SOPS for secrets management, AI assistant for interactive chat, and a VSCode extension for cluster management.

neuro-san-studio

Neuro SAN Studio is an open-source library for building agent networks across various industries. It simplifies the development of collaborative AI systems by enabling users to create sophisticated multi-agent applications using declarative configuration files. The tool offers features like data-driven configuration, adaptive communication protocols, safe data handling, dynamic agent network designer, flexible tool integration, robust traceability, and cloud-agnostic deployment. It has been used in various use-cases such as automated generation of multi-agent configurations, airline policy assistance, banking operations, market analysis in consumer packaged goods, insurance claims processing, intranet knowledge management, retail operations, telco network support, therapy vignette supervision, and more.

ToolJet

ToolJet is an open-source platform for building and deploying internal tools, workflows, and AI agents. It offers a visual builder with drag-and-drop UI, integrations with databases, APIs, SaaS apps, and object storage. The community edition includes features like a visual app builder, ToolJet database, multi-page apps, collaboration tools, extensibility with plugins, code execution, and security measures. ToolJet AI, the enterprise version, adds AI capabilities for app generation, query building, debugging, agent creation, security compliance, user management, environment management, GitSync, branding, access control, embedded apps, and enterprise support.

genkit

Firebase Genkit (beta) is a framework with powerful tooling to help app developers build, test, deploy, and monitor AI-powered features with confidence. Genkit is cloud optimized and code-centric, integrating with many services that have free tiers to get started. It provides unified API for generation, context-aware AI features, evaluation of AI workflow, extensibility with plugins, easy deployment to Firebase or Google Cloud, observability and monitoring with OpenTelemetry, and a developer UI for prototyping and testing AI features locally. Genkit works seamlessly with Firebase or Google Cloud projects through official plugins and templates.

video-search-and-summarization

The NVIDIA AI Blueprint for Video Search and Summarization is a repository showcasing video search and summarization agent with NVIDIA NIM microservices. It enables industries to make better decisions faster by providing insightful, accurate, and interactive video analytics AI agents. These agents can perform tasks like video summarization and visual question-answering, unlocking new application possibilities. The repository includes software components like NIM microservices, ingestion pipeline, and CA-RAG module, offering a comprehensive solution for analyzing and summarizing large volumes of video data. The target audience includes video analysts, IT engineers, and GenAI developers who can benefit from the blueprint's 1-click deployment steps, easy-to-manage configurations, and customization options. The repository structure overview includes directories for deployment, source code, and training notebooks, along with documentation for detailed instructions. Hardware requirements vary based on deployment topology and dependencies like VLM and LLM, with different deployment methods such as Launchable Deployment, Docker Compose Deployment, and Helm Chart Deployment provided for various use cases.

Genkit

Genkit is an open-source framework for building full-stack AI-powered applications, used in production by Google's Firebase. It provides SDKs for JavaScript/TypeScript (Stable), Go (Beta), and Python (Alpha) with unified interface for integrating AI models from providers like Google, OpenAI, Anthropic, Ollama. Rapidly build chatbots, automations, and recommendation systems using streamlined APIs for multimodal content, structured outputs, tool calling, and agentic workflows. Genkit simplifies AI integration with open-source SDK, unified APIs, and offers text and image generation, structured data generation, tool calling, prompt templating, persisted chat interfaces, AI workflows, and AI-powered data retrieval (RAG).

graphbit

GraphBit is an industry-grade agentic AI framework built for developers and AI teams that demand stability, scalability, and low resource usage. It is written in Rust for maximum performance and safety, delivering significantly lower CPU usage and memory footprint compared to leading alternatives. The framework is designed to run multi-agent workflows in parallel, persist memory across steps, recover from failures, and ensure 100% task success under load. With lightweight architecture, observability, and concurrency support, GraphBit is suitable for deployment in high-scale enterprise environments and low-resource edge scenarios.

doris

Doris is a lightweight and user-friendly data visualization tool designed for quick and easy exploration of datasets. It provides a simple interface for users to upload their data and generate interactive visualizations without the need for coding. With Doris, users can easily create charts, graphs, and dashboards to analyze and present their data in a visually appealing way. The tool supports various data formats and offers customization options to tailor visualizations to specific needs. Whether you are a data analyst, researcher, or student, Doris simplifies the process of data exploration and presentation.

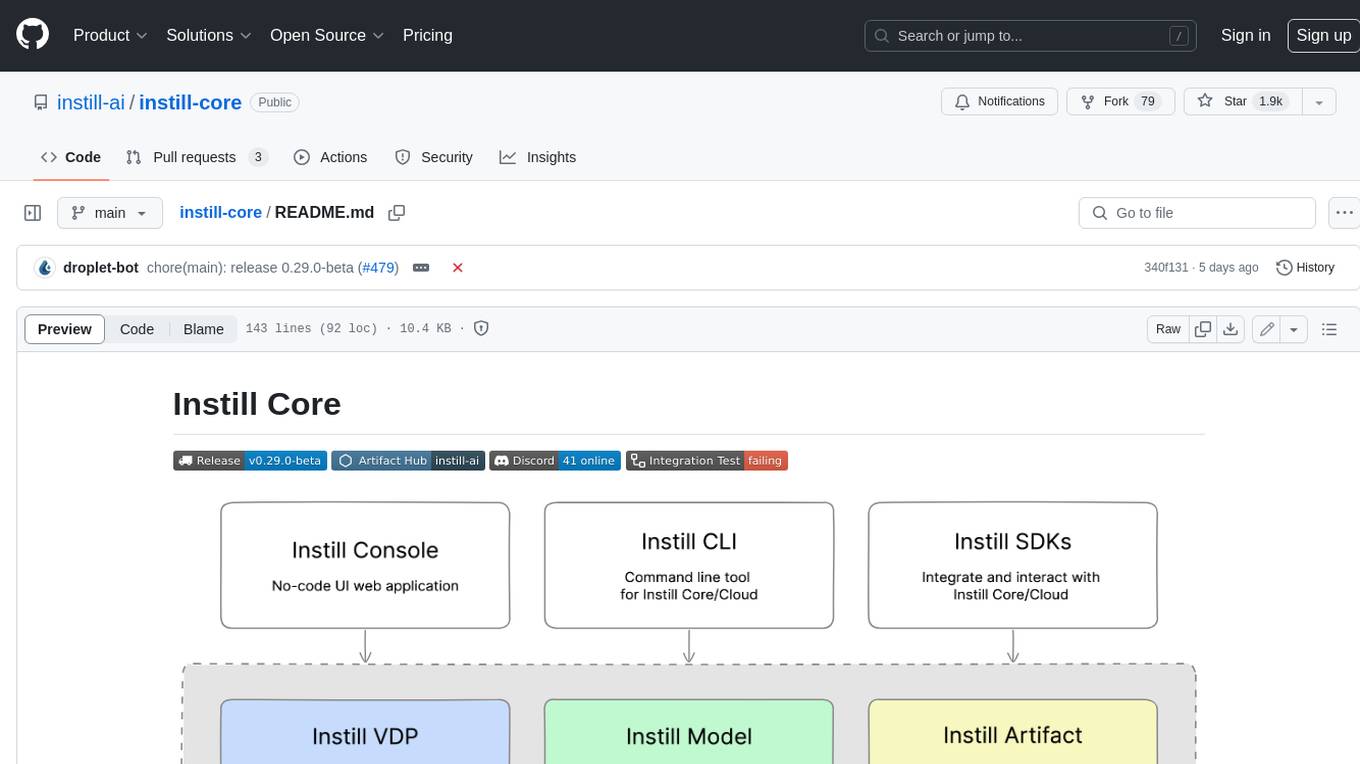

instill-core

Instill Core is an open-source orchestrator comprising a collection of source-available projects designed to streamline every aspect of building versatile AI features with unstructured data. It includes Instill VDP (Versatile Data Pipeline) for unstructured data, AI, and pipeline orchestration, Instill Model for scalable MLOps and LLMOps for open-source or custom AI models, and Instill Artifact for unified unstructured data management. Instill Core can be used for tasks such as building, testing, and sharing pipelines, importing, serving, fine-tuning, and monitoring ML models, and transforming documents, images, audio, and video into a unified AI-ready format.

pluto

Pluto is a development tool dedicated to helping developers build cloud and AI applications more conveniently, resolving issues such as the challenging deployment of AI applications and open-source models. Developers are able to write applications in familiar programming languages like Python and TypeScript, directly defining and utilizing the cloud resources necessary for the application within their code base, such as AWS SageMaker, DynamoDB, and more. Pluto automatically deduces the infrastructure resource needs of the app through static program analysis and proceeds to create these resources on the specified cloud platform, simplifying the resource creation and application deployment process.

ai-factory

AI Factory is a CLI tool and skill system that streamlines AI-powered development by handling context setup, skill installation, and workflow configuration. It supports multiple AI coding agents, offers spec-driven development, and integrates with popular tech stacks like Next.js, Laravel, Django, and Express. The tool ensures zero configuration, best practices adherence, community skills utilization, and multi-agent support. Users can create plans, tasks, and commits for structured feature development, bug fixes, and self-improvement. Security is a priority with mandatory two-level scans for external skills. The tool's learning loop generates patches from bug fixes to enhance future implementations.

For similar tasks

llm-zoomcamp

LLM Zoomcamp is a free online course focusing on real-life applications of Large Language Models (LLMs). Over 10 weeks, participants will learn to build an AI bot capable of answering questions based on a knowledge base. The course covers topics such as LLMs, RAG, open-source LLMs, vector databases, orchestration, monitoring, and advanced RAG systems. Pre-requisites include comfort with programming, Python, and the command line, with no prior exposure to AI or ML required. The course features a pre-course workshop and is led by instructors Alexey Grigorev and Magdalena Kuhn, with support from sponsors and partners.

emqx

EMQX is a highly scalable and reliable MQTT platform designed for IoT data infrastructure. It supports various protocols like MQTT 5.0, 3.1.1, and 3.1, as well as MQTT-SN, CoAP, LwM2M, and MQTT over QUIC. EMQX allows connecting millions of IoT devices, processing messages in real time, and integrating with backend data systems. It is suitable for applications in AI, IoT, IIoT, connected vehicles, smart cities, and more. The tool offers features like massive scalability, powerful rule engine, flow designer, AI processing, robust security, observability, management, extensibility, and a unified experience with the Business Source License (BSL) 1.1.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise-level infrastructure that can power any LLM production use case. Some use cases for BricksLLM: set LLM usage limits for users on different pricing tiers; track LLM usage on a per-user and per-organization basis; block or redact requests containing PII; improve LLM reliability with failovers, retries, and caching; distribute API keys with rate limits and cost limits for internal development/production use cases; distribute API keys with rate limits and cost limits for students.

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.