graphbit

GraphBit is the world’s first enterprise-grade Agentic AI framework, built on a Rust core with a Python wrapper for unmatched speed, security, and scalability. It enables reliable multi-agent workflows with minimal CPU and memory usage, making it production-ready for real-world enterprise environments.

Stars: 510

GraphBit is an industry-grade agentic AI framework built for developers and AI teams that demand stability, scalability, and low resource usage. It is written in Rust for maximum performance and safety, delivering significantly lower CPU usage and memory footprint compared to leading alternatives. The framework is designed to run multi-agent workflows in parallel, persist memory across steps, recover from failures, and ensure 100% task success under load. With lightweight architecture, observability, and concurrency support, GraphBit is suitable for deployment in high-scale enterprise environments and low-resource edge scenarios.

README:

Type-Safe AI Agent Workflows with Rust Performance

Read this in other languages: 🇨🇳 简体中文 | 🇨🇳 繁體中文 | 🇪🇸 Español | 🇫🇷 Français | 🇩🇪 Deutsch | 🇯🇵 日本語 | 🇰🇷 한국어 | 🇮🇳 हिन्दी | 🇸🇦 العربية | 🇮🇹 Italiano | 🇧🇷 Português | 🇷🇺 Русский | 🇧🇩 বাংলা

GraphBit is an open-source agentic AI framework for deterministic, concurrent, low-overhead execution.

Efficiency decides who scales. GraphBit is built for developers who need deterministic, concurrent, and ultra-efficient AI execution without the overhead.

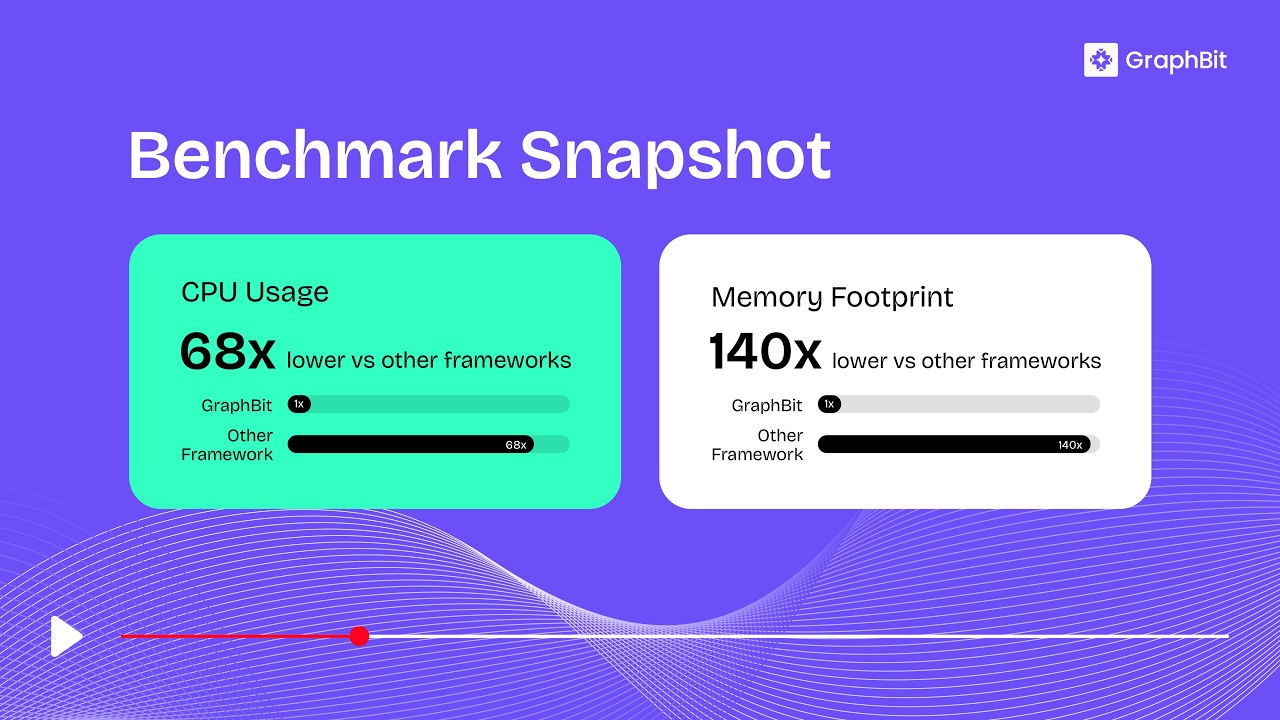

Built with a Rust core and a minimal Python layer, GraphBit delivers up to 68× lower CPU usage and 140× lower memory footprint than other frameworks, while maintaining equal or greater throughput.

It powers multi-agent workflows that run in parallel, persist memory across steps, self-recover from failures, and ensure 100% task reliability. GraphBit is built for production workloads, from enterprise AI systems to low-resource edge deployments.

Grant Thornton Germany adopted GraphBit to move AI from "permanent pilot" to production without regulatory risk as a core component of their tech stack.

- Tool Selection - LLMs intelligently choose tools based on descriptions

- Type Safety - Strong typing through every execution layer

- Reliability - Circuit breakers, retry policies, error handling, and fault recovery

- Multi-LLM Support - OpenAI, Azure OpenAI, Anthropic, OpenRouter, DeepSeek, Replicate, Ollama, TogetherAI and more

- Resource Management - Concurrency controls and memory optimization

- Observability - Built-in tracing, structured logs, and performance metrics
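To make the reliability bullet concrete, here is a minimal, framework-independent sketch of a retry policy with exponential backoff combined with a simple circuit breaker. It is not GraphBit's implementation or API: `CircuitBreaker`, `CircuitOpenError`, `retry`, and all of their parameters are hypothetical names chosen for illustration.

```python
import time


class CircuitOpenError(RuntimeError):
    """Raised when the breaker is open and calls are being rejected."""


class CircuitBreaker:
    """Hypothetical breaker: opens after `max_failures` consecutive failures."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after  # cool-down in seconds
        self.clock = clock              # injectable for testing
        self.failures = 0
        self.opened_at = None

    def call(self, fn, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("circuit is open; call rejected")
            # Cool-down elapsed: allow one trial call (half-open state).
            # A single further failure will reopen the circuit.
            self.opened_at = None
            self.failures = self.max_failures - 1
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            raise
        self.failures = 0
        return result


def retry(fn, attempts=3, base_delay=0.1, sleep=time.sleep):
    """Call `fn` up to `attempts` times, doubling the delay after each failure."""
    for attempt in range(attempts):
        try:
            return fn()
        except CircuitOpenError:
            raise  # do not keep hammering an open circuit
        except Exception:
            if attempt == attempts - 1:
                raise
            sleep(base_delay * 2 ** attempt)
```

A caller could combine the two as `retry(lambda: breaker.call(call_provider))`, where `call_provider` stands in for any function that may fail transiently.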

GraphBit was built for efficiency at scale, backed by measured results rather than theoretical claims.

Our internal benchmark suite compared GraphBit to leading Python-based agent frameworks across identical workloads.

| Metric | GraphBit | Other Frameworks | Gain |
|---|---|---|---|
| CPU Usage | 1.0× baseline | 68.3× higher | ~68× CPU |
| Memory Footprint | 1.0× baseline | 140× higher | ~140× Memory |
| Execution Speed | ≈ equal / faster | - | Consistent throughput |
| Determinism | 100% success | Variable | Guaranteed reliability |

GraphBit consistently delivers production-grade efficiency across LLM calls, tool invocations, and multi-agent chains.
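For readers who want to run a comparison of their own, the following is a rough sketch of how CPU time and peak memory for a single workload might be measured in Python, and how a ratio such as "68.3× higher" is formed from two measurements. It is not the benchmark suite referred to above; `measure` and `ratio` are hypothetical helpers. Note that `tracemalloc` only sees allocations made through Python's allocator, so memory used inside a native (for example, Rust) extension would need a process-level measurement instead.

```python
import time
import tracemalloc


def measure(workload):
    """Return (result, cpu_seconds, peak_bytes) for one call of `workload`."""
    tracemalloc.start()
    cpu_start = time.process_time()  # CPU time of this process, not wall time
    try:
        result = workload()
        cpu_seconds = time.process_time() - cpu_start
        _, peak_bytes = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
    return result, cpu_seconds, peak_bytes


def ratio(other, baseline):
    """How many times larger `other` is than `baseline`."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return other / baseline
```

Running `measure` on the same workload under two frameworks and passing the two CPU (or memory) figures to `ratio` yields the kind of multiplier shown in the table.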

Choose GraphBit if you need:

- Production-grade multi-agent systems that won't collapse under load

- Type-safe execution and reproducible outputs

- Real-time orchestration for hybrid or streaming AI applications

- Rust-level efficiency with Python-level ergonomics

If you're scaling beyond prototypes or care about runtime determinism, GraphBit is for you.

Using a virtual environment is recommended.

    pip install graphbit

Set up API keys you want to use in your project:

    # OpenAI (optional – required if using OpenAI models)
    export OPENAI_API_KEY=your_openai_api_key_here

    # Anthropic (optional – required if using Anthropic models)
    export ANTHROPIC_API_KEY=your_anthropic_api_key_here

Security Note: Never commit API keys to version control. Always use environment variables or secure secret management.
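Since `os.getenv` returns `None` when a variable is missing, it can help to fail fast with a clear message before any configuration object is built. The helper below is a small sketch using only the standard library; `require_env` is a hypothetical name, not part of GraphBit.

```python
import os


def require_env(name):
    """Return the value of environment variable `name`, or raise if it is unset or blank."""
    value = os.environ.get(name, "").strip()
    if not value:
        raise RuntimeError(f"{name} is not set; export it before running the workflow")
    return value
```

For example, `api_key = require_env("OPENAI_API_KEY")` either returns the key or stops the program with an explicit error instead of passing `None` further down.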

    import os

    from graphbit import LlmConfig, Executor, Workflow, Node, tool

    # Initialize and configure
    config = LlmConfig.openai(os.getenv("OPENAI_API_KEY"), "gpt-4o-mini")

    # Create executor
    executor = Executor(config)

    # Create tools with clear descriptions for LLM selection
    @tool(_description="Get current weather information for any city")
    def get_weather(location: str) -> dict:
        return {"location": location, "temperature": 22, "condition": "sunny"}

    @tool(_description="Perform mathematical calculations and return results")
    def calculate(expression: str) -> str:
        # eval() keeps the demo short; never pass untrusted input to it.
        return f"Result: {eval(expression)}"

    # Build workflow
    workflow = Workflow("Analysis Pipeline")

    # Create agent nodes
    smart_agent = Node.agent(
        name="Smart Agent",
        prompt="What's the weather in Paris and calculate 15 + 27?",
        system_prompt="You are an assistant skilled in weather lookup and math calculations. Use tools to answer queries accurately.",
        tools=[get_weather, calculate]
    )

    processor = Node.agent(
        name="Data Processor",
        prompt="Process the results obtained from Smart Agent.",
        system_prompt="""You process and organize results from other agents.
        - Summarize and clarify key points
        - Structure your output for easy reading
        - Focus on actionable insights
        """
    )

    # Connect and execute
    id1 = workflow.add_node(smart_agent)
    id2 = workflow.add_node(processor)
    workflow.connect(id1, id2)

    result = executor.execute(workflow)

    print(f"Workflow completed: {result.is_success()}")
    print("\nSmart Agent Output: \n", result.get_node_output("Smart Agent"))
    print("\nData Processor Output: \n", result.get_node_output("Data Processor"))

GraphBit Tracer captures and monitors LLM calls and AI workflows with minimal configuration. It wraps GraphBit LLM clients and workflow executors to trace prompts, responses, token usage, latency, and errors without changing your code.
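The general idea of tracing by wrapping can be sketched without any framework. The decorator below records the function name, response, error, and latency of each call while leaving the wrapped function's behavior unchanged. It illustrates the pattern only and is not the GraphBit Tracer API; `traced` and the record fields are hypothetical.

```python
import functools
import time


def traced(records):
    """Decorator factory: append one dict per call to the `records` list."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            entry = {"name": fn.__name__, "error": None}
            try:
                result = fn(*args, **kwargs)
                entry["response"] = result
                return result
            except Exception as exc:
                entry["error"] = repr(exc)
                raise  # tracing must not swallow the caller's exception
            finally:
                # Runs on both success and failure, so every call is recorded.
                entry["latency_s"] = time.perf_counter() - start
                records.append(entry)
        return wrapper
    return decorator
```

Decorating a function with `@traced(records)` leaves its call sites untouched, which is the property the paragraph above describes as tracing "without changing your code".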

Three-tier design for reliability and performance:

- Rust Core - Workflow engine, agents, and LLM providers

- Orchestration Layer - Project management and execution

- Python API - PyO3 bindings with async support

GraphBit provides a rich Python API for building and integrating agentic workflows:

- LLM Clients - Multi-provider LLM integrations (OpenAI, Anthropic, Azure, and more)

- Workflows - Define and manage multi-agent workflow graphs with state management

- Nodes - Agent nodes, tool nodes, and custom workflow components

- Executors - Workflow execution engine with configuration management

- Tool System - Function decorators, registry, and execution framework for agent tools

- Workflow Results - Execution results with metadata, timing, and output access

- Embeddings - Vector embeddings for semantic search and retrieval

- Workflow Context - Shared state and variables across workflow execution

- Document Loaders - Load and parse documents from multiple formats (PDF, DOCX, TXT, JSON, CSV, XML, HTML)

- Text Splitters - Split documents into chunks (character, token, sentence, recursive)

For the complete list of classes, methods, and usage examples, see the Python API Reference.
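As an illustration of what a character-based text splitter does, here is a plain-Python sketch that cuts a string into fixed-size chunks with an optional overlap between neighbors. It is not GraphBit's splitter class; `split_by_characters` and its parameters are hypothetical.

```python
def split_by_characters(text, chunk_size, overlap=0):
    """Split `text` into chunks of at most `chunk_size` characters.

    Consecutive chunks share `overlap` characters so that context spanning
    a chunk boundary is not lost.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must satisfy 0 <= overlap < chunk_size")

    step = chunk_size - overlap
    chunks = []
    start = 0
    while start < len(text):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the last chunk already reaches the end of the text
        start += step
    return chunks
```

Token, sentence, and recursive splitters follow the same shape but choose their boundaries from tokens, sentence ends, or a hierarchy of separators rather than raw character offsets.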

GraphBit's modular architecture supports external integrations:

| Category | Examples |
|---|---|
| LLM Providers | OpenAI, Anthropic, Azure OpenAI, DeepSeek, Together, Ollama, OpenRouter, Fireworks, Mistral AI, Replicate, Perplexity, HuggingFace, AI21, Bytedance, xAI, and more |
| Vector Stores | Pinecone, Qdrant, Chroma, Milvus, Weaviate, FAISS, Elasticsearch, AstraDB, Redis, and more |
| Databases | PostgreSQL (PGVector), MongoDB, MariaDB, IBM DB2, Redis, and more |
| Cloud Platforms | AWS (Boto3), Azure, Google Cloud Platform, and more |
| Search APIs | Serper, Google Search, GitHub Search, GitLab Search, and more |
| Embedding Models | OpenAI Embeddings, Voyage AI, and more |

Extensions are developed and maintained by the community.

We welcome contributions. To get started, please see the Contributing file for development setup and guidelines.

GraphBit is built by a wonderful community of researchers and engineers.

GraphBit is committed to maintaining security standards for our agentic framework. We recommend using environment variables for API keys, keeping GraphBit updated, and using proper secret management for production environments. If you discover a security vulnerability, please report it responsibly through GitHub Security or via email rather than creating a public issue.

For detailed reporting procedures and response timelines, see our Security Policy.

The GraphBit project is licensed under the Apache License, Version 2.0.

For complete terms and conditions, see the Full License.

Copyright © 2023–2026 InfinitiBit GmbH. All rights reserved.

Alternative AI tools for graphbit

Similar Open Source Tools

EvoAgentX

EvoAgentX is an open-source framework for building, evaluating, and evolving LLM-based agents or agentic workflows in an automated, modular, and goal-driven manner. It enables developers and researchers to move beyond static prompt chaining or manual workflow orchestration by introducing a self-evolving agent ecosystem. The framework includes features such as agent workflow autoconstruction, built-in evaluation, self-evolution engine, plug-and-play compatibility, comprehensive built-in tools, memory module support, and human-in-the-loop interactions.

superagentx

SuperAgentX is a lightweight open-source AI framework designed for multi-agent applications with Artificial General Intelligence (AGI) capabilities. It offers goal-oriented multi-agents with retry mechanisms, easy deployment through WebSocket, RESTful API, and IO console interfaces, streamlined architecture with no major dependencies, contextual memory using SQL + Vector databases, flexible LLM configuration supporting various Gen AI models, and extendable handlers for integration with diverse APIs and data sources. It aims to accelerate the development of AGI by providing a powerful platform for building autonomous AI agents capable of executing complex tasks with minimal human intervention.

flow-like

Flow-Like is an enterprise-grade workflow operating system built upon Rust for uncompromising performance, efficiency, and code safety. It offers a modular frontend for apps, a rich set of events, a node catalog, a powerful no-code workflow IDE, and tools to manage teams, templates, and projects within organizations. With typed workflows, users can create complex, large-scale workflows with clear data origins, transformations, and contracts. Flow-Like is designed to automate any process through seamless integration of LLM, ML-based, and deterministic decision-making instances.

clearml

ClearML is an auto-magical suite of tools designed to streamline AI workflows. It includes modules for experiment management, MLOps/LLMOps, data management, model serving, and more. ClearML offers features like experiment tracking, model serving, orchestration, and automation. It supports various ML/DL frameworks and integrates with Jupyter Notebook and PyCharm for remote debugging. ClearML aims to simplify collaboration, automate processes, and enhance visibility in AI projects.

agents-towards-production

Agents Towards Production is an open-source playbook for building production-ready GenAI agents that scale from prototype to enterprise. Tutorials cover stateful workflows, vector memory, real-time web search APIs, Docker deployment, FastAPI endpoints, security guardrails, GPU scaling, browser automation, fine-tuning, multi-agent coordination, observability, evaluation, and UI development.

clearml

ClearML is a suite of tools designed to streamline the machine learning workflow. It includes an experiment manager, MLOps/LLMOps, data management, and model serving capabilities. ClearML is open-source and offers a free tier hosting option. It supports various ML/DL frameworks and integrates with Jupyter Notebook and PyCharm. ClearML provides extensive logging capabilities, including source control info, execution environment, hyper-parameters, and experiment outputs. It also offers automation features, such as remote job execution and pipeline creation. ClearML is designed to be easy to integrate, requiring only two lines of code to add to existing scripts. It aims to improve collaboration, visibility, and data transparency within ML teams.

MaxKB

MaxKB is a knowledge base Q&A system based on large language models (LLMs). MaxKB = Max Knowledge Base, which aims to become the most powerful brain of the enterprise.

MemMachine

MemMachine is an open-source long-term memory layer designed for AI agents and LLM-powered applications. It enables AI to learn, store, and recall information from past sessions, transforming stateless chatbots into personalized, context-aware assistants. With capabilities like episodic memory, profile memory, working memory, and agent memory persistence, MemMachine offers a developer-friendly API, flexible storage options, and seamless integration with various AI frameworks. It is suitable for developers, researchers, and teams needing persistent, cross-session memory for their LLM applications.

EnvScaler

EnvScaler is an automated, scalable framework that creates tool-interactive environments for training LLM agents. It consists of SkelBuilder for environment description mining and quality inspection, ScenGenerator for synthesizing multiple environment scenarios, and modules for supervised fine-tuning and reinforcement learning. The tool provides data, models, and evaluation guides for users to build, generate scenarios, collect training data, train models, and evaluate performance. Users can interact with environments, build environments from scratch, and improve LLMs' task-solving abilities in complex environments.

Kiln

Kiln is an intuitive tool for fine-tuning LLM models, generating synthetic data, and collaborating on datasets. It offers desktop apps for Windows, MacOS, and Linux, zero-code fine-tuning for various models, interactive data generation, and Git-based version control. Users can easily collaborate with QA, PM, and subject matter experts, generate auto-prompts, and work with a wide range of models and providers. The tool is open-source, privacy-first, and supports structured data tasks in JSON format. Kiln is free to use and helps build high-quality AI products with datasets, facilitates collaboration between technical and non-technical teams, allows comparison of models and techniques without code, ensures structured data integrity, and prioritizes user privacy.

yournextstore

Your Next Store is an open-source Next.js e-commerce platform designed for AI development. It offers a Stripe-native integration, ultra-fast page loads, and typed APIs. The codebase follows consistent patterns, provides blazing fast performance with Next.js 16 and edge caching, and allows direct API integration without the need for plugins. With a focus on AI coding tools, Your Next Store offers familiar patterns, typed APIs for Commerce Kit SDK methods, and a well-defined domain for commerce data models, making it easier for AI models to generate accurate suggestions and write correct code.

skypilot

SkyPilot is a framework for running LLMs, AI, and batch jobs on any cloud, offering maximum cost savings, highest GPU availability, and managed execution.

SkyPilot abstracts away cloud infra burdens:

- Launch jobs & clusters on any cloud

- Easy scale-out: queue and run many jobs, automatically managed

- Easy access to object stores (S3, GCS, R2)

SkyPilot maximizes GPU availability for your jobs:

- Provision in all zones/regions/clouds you have access to (the _Sky_), with automatic failover

SkyPilot cuts your cloud costs:

- Managed Spot: 3-6x cost savings using spot VMs, with auto-recovery from preemptions

- Optimizer: 2x cost savings by auto-picking the cheapest VM/zone/region/cloud

- Autostop: hands-free cleanup of idle clusters

SkyPilot supports your existing GPU, TPU, and CPU workloads, with no code changes.

inference

Xorbits Inference (Xinference) is a powerful and versatile library designed to serve language, speech recognition, and multimodal models. With Xorbits Inference, you can effortlessly deploy and serve your own or state-of-the-art built-in models using just a single command. Whether you are a researcher, developer, or data scientist, Xorbits Inference empowers you to unleash the full potential of cutting-edge AI models.

kornia

Kornia is a differentiable computer vision library for PyTorch. It consists of a set of routines and differentiable modules to solve generic computer vision problems. At its core, the package uses PyTorch as its main backend both for efficiency and to take advantage of the reverse-mode auto-differentiation to define and compute the gradient of complex functions.

openrl

OpenRL is an open-source general reinforcement learning research framework that supports training for various tasks such as single-agent, multi-agent, offline RL, self-play, and natural language. Developed based on PyTorch, the goal of OpenRL is to provide a simple-to-use, flexible, efficient and sustainable platform for the reinforcement learning research community. It supports a universal interface for all tasks/environments, single-agent and multi-agent tasks, offline RL training with expert dataset, self-play training, reinforcement learning training for natural language tasks, DeepSpeed, Arena for evaluation, importing models and datasets from Hugging Face, user-defined environments, models, and datasets, gymnasium environments, callbacks, visualization tools, unit testing, and code coverage testing. It also supports various algorithms like PPO, DQN, SAC, and environments like Gymnasium, MuJoCo, Atari, and more.

For similar tasks

Azure-Analytics-and-AI-Engagement

The Azure-Analytics-and-AI-Engagement repository provides packaged Industry Scenario DREAM Demos with ARM templates (containing a demo web application, Power BI reports, Synapse resources, AML Notebooks, etc.) that can be deployed in a customer’s subscription using the CAPE tool within a few hours. Partners can also deploy DREAM Demos in their own subscriptions using DPoC.

sorrentum

Sorrentum is an open-source project that aims to combine open-source development, startups, and brilliant students to build machine learning, AI, and Web3 / DeFi protocols geared towards finance and economics. The project provides opportunities for internships, research assistantships, and development grants, as well as the chance to work on cutting-edge problems, learn about startups, write academic papers, and get internships and full-time positions at companies working on Sorrentum applications.

tidb

TiDB is an open-source distributed SQL database that supports Hybrid Transactional and Analytical Processing (HTAP) workloads. It is MySQL compatible and features horizontal scalability, strong consistency, and high availability.

zep-python

Zep is an open-source platform for building and deploying large language model (LLM) applications. It provides a suite of tools and services that make it easy to integrate LLMs into your applications, including chat history memory, embedding, vector search, and data enrichment. Zep is designed to be scalable, reliable, and easy to use, making it a great choice for developers who want to build LLM-powered applications quickly and easily.

telemetry-airflow

This repository codifies the Airflow cluster that is deployed at workflow.telemetry.mozilla.org (behind SSO) and commonly referred to as "WTMO" or simply "Airflow". Some links relevant to users and developers of WTMO:

- The `dags` directory in this repository contains some custom DAG definitions

- Many of the DAGs registered with WTMO don't live in this repository, but are instead generated from ETL task definitions in bigquery-etl

- The Data SRE team maintains a WTMO Developer Guide (behind SSO)

mojo

Mojo is a new programming language that bridges the gap between research and production by combining Python syntax and ecosystem with systems programming and metaprogramming features. Mojo is still young, but it is designed to become a superset of Python over time.

pandas-ai

PandasAI is a Python library that makes it easy to ask questions to your data in natural language. It helps you to explore, clean, and analyze your data using generative AI.

databend

Databend is an open-source cloud data warehouse that serves as a cost-effective alternative to Snowflake. With its focus on fast query execution and data ingestion, it's designed for complex analysis of the world's largest datasets.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers

- Track LLM usage on a per user and per organization basis

- Block or redact requests containing PIIs

- Improve LLM reliability with failovers, retries and caching

- Distribute API keys with rate limits and cost limits for internal development/production use cases

- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.