RAGMeUp

Generic RAG framework to apply the power of LLMs to any given dataset

Stars: 576

RAG Me Up is a generic framework that enables users to perform Retrieval-Augmented Generation (RAG) on their own dataset easily. It consists of a small server and UIs for communication. The tool can run on CPU but is optimized for GPUs with at least 16GB of vRAM. Users can combine RAG with fine-tuning using the LLaMa2Lang repository. The tool provides a configurable RAG pipeline without the need for coding, utilizing indexing and inference steps to accurately answer user queries.

README:

RAG Me Up is a generic framework (server + UIs) that enables you to do RAG on your own dataset easily. Its essence is a small and lightweight server and a couple of ways to run UIs to communicate with the server (or write your own).

RAG Me Up can run on CPU but is best run on any GPU with at least 16GB of vRAM when using the default instruct model.

Combine the power of RAG with the power of fine-tuning - check out our LLaMa2Lang repository on fine-tuning LLMs which can then be used in RAG Me Up.

- 2024-09-06 Implemented Re2

- 2024-09-04 Added an evaluation script that uses Ragas to evaluate your RAG pipeline

- 2024-08-30 Added Ollama compatibility

- 2024-08-27 Using cross encoders now so you can specify your own reranking model

- 2024-07-30 Added multiple provenance attribution methods

- 2024-06-26 Updated readme, added more file types, robust self-inflection

- 2024-06-05 Upgraded to Langchain v0.2

git clone https://github.com/UnderstandLingBV/RAGMeUp.git

cd server

pip install -r requirements.txt

Then run the server using python server.py from the server subfolder.

Make sure you have JDK 17+. Download and install SBT and run sbt run from the server/scala directory or alternatively download the compiled binary and run bin/ragemup(.bat)

RAG Me Up supports Postgres as a hybrid retrieval database with both pgvector and pg_search installed. To run Postgres instead of Milvus, follow these steps.

- In the postgres folder is a Dockerfile. Build it using:

docker build -t ragmeup-pgvector-pgsearch .

- Run the container using:

docker run --name ragmeup-pgvector-pgsearch -e POSTGRES_USER=langchain -e POSTGRES_PASSWORD=langchain -e POSTGRES_DB=langchain -p 6024:5432 -d ragmeup-pgvector-pgsearch

- Once in use, our custom PostgresBM25Retriever will automatically create the right indexes for you.

- pgvector, however, will not do this automatically, so you have to create them yourself (perhaps after loading the documents first so the right tables are created):

  - Make sure the vector column is an actual vector (it's not by default):

ALTER TABLE langchain_pg_embedding ALTER COLUMN embedding TYPE vector(384);

  - Create the index (may take a while with a lot of data):

CREATE INDEX ON langchain_pg_embedding USING hnsw (embedding vector_cosine_ops) WITH (m = 16, ef_construction = 64);
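If your embedding model or index settings differ, the two pgvector statements need to change with them: the dimension in vector(...) has to match the output size of your embedding model. The following is a minimal sketch that builds both statements as strings. The helper name pgvector_setup_statements is hypothetical and not part of RAG Me Up; the defaults are the values shown in the steps above.

```python
def pgvector_setup_statements(table="langchain_pg_embedding", column="embedding",
                              dim=384, m=16, ef_construction=64):
    """Build the ALTER TABLE and CREATE INDEX statements as plain strings.

    Hypothetical helper for illustration only. Identifiers are interpolated
    without escaping, so pass trusted values only.
    """
    # Turn the embedding column into a real pgvector column of the given size.
    alter = f"ALTER TABLE {table} ALTER COLUMN {column} TYPE vector({dim});"
    # Create an HNSW index that uses cosine distance.
    index = (
        f"CREATE INDEX ON {table} USING hnsw ({column} vector_cosine_ops) "
        f"WITH (m = {m}, ef_construction = {ef_construction});"
    )
    return [alter, index]


if __name__ == "__main__":
    # Example: an embedding model that produces 768-dimensional vectors.
    for statement in pgvector_setup_statements(dim=768):
        print(statement)
```

You can paste the printed statements into psql or run them through any Postgres client.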

RAG Me Up aims to provide a robust RAG pipeline that is configurable without necessarily writing any code. To achieve this, a couple of strategies are used to make sure that the user query can be accurately answered through the documents provided.

The RAG pipeline is visualized in the image below:

The following steps are executed. Take note that some steps are optional and can be turned off through configuring the .env file.

Top part - Indexing

- You collect and make your documents available to RAG Me Up.

- Using different file type loaders, RAG Me Up will read the contents of your documents. Note that for some document types like JSON and XML, you need to specify additional configuration to tell RAG Me Up what to extract.

- Your documents get chunked using a recursive splitter.

- The chunks get converted into document (chunk) embeddings using an embedding model. Note that this model is usually a different one than the LLM you intend to use for chat.

- RAG Me Up uses a hybrid search strategy, combining dense vectors in the vector database with sparse vectors using BM25. By default, RAG Me Up uses a local Milvus database.
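To make the hybrid search idea concrete, here is a minimal sketch of one common way to merge a dense ranking and a sparse (BM25) ranking: reciprocal rank fusion. This is an assumption for illustration; how RAG Me Up itself combines the two result lists is not described here, and the function name and the constant k=60 are not taken from the project.

```python
def reciprocal_rank_fusion(dense_ids, sparse_ids, k=60):
    """Merge two ranked lists of document ids into one ranking.

    Each document scores 1 / (k + rank) per list it appears in, so documents
    ranked highly by both retrievers end up on top.
    """
    scores = {}
    for ranking in (dense_ids, sparse_ids):
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Sort by descending score; ties are broken by document id for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


if __name__ == "__main__":
    dense = ["a", "b", "c"]   # ids ranked by vector similarity
    sparse = ["b", "d", "a"]  # ids ranked by BM25
    print(reciprocal_rank_fusion(dense, sparse))
```

Documents found by both retrievers ("a" and "b" in the example) outrank documents found by only one of them.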

Bottom part - Inference

1. Inference starts with a user asking a query. This query can either be an initial query or a follow-up query with an associated history and documents retrieved before. Note that both (chat history, documents) need to be passed on by a UI to properly handle follow-up querying.

2. A check is done if new documents need to be fetched. This can be due to one of two cases:

   - There is no history given, in which case we always need to fetch documents.

   - [OPTIONAL] The LLM itself will judge whether or not the question - in isolation - is phrased in such a way that new documents are fetched or whether it is a follow-up question on existing documents. A flag called fetch_new_documents is set to indicate whether or not new documents need to be fetched.

3. Documents are fetched from both the vector database (dense) and the BM25 index (sparse). Only executed if fetch_new_documents is set.

4. [OPTIONAL] Reranking is applied to extract the most relevant documents returned by the previous step. Only executed if fetch_new_documents is set.

5. [OPTIONAL] The LLM is asked to judge whether or not the documents retrieved contain an accurate answer to the user's query. Only executed if fetch_new_documents is set.

   - If this is not the case, the LLM is used to rewrite the query with the instruction to optimize for distance-based similarity search. This is then fed back into step 3, but only once to avoid lengthy or infinite loops.

6. The documents are injected into the prompt with the user query. The documents can come from:

   - The retrieval and reranking of the document databases, if fetch_new_documents is set.

   - The history passed on with the initial user query, if fetch_new_documents is not set.

7. The LLM is asked to answer the query with the given chat history and documents.

8. The answer, chat history and documents are returned.
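The control flow of the inference steps above can be sketched as follows. This is an illustration under assumptions, not the actual server code: every function name and parameter is hypothetical, the LLM and retriever are passed in as plain callables, the history is assumed to be a list of (query, answer) pairs, and the original (not the rewritten) query is assumed to be used for the final answer.

```python
def answer_query(query, history, docs, *, retrieve, generate,
                 should_fetch=None, rerank=None,
                 docs_answer_query=None, rewrite=None):
    """Run one inference turn and return (answer, new_history, docs)."""
    # Without history we always fetch; otherwise the optional LLM judge decides.
    # Assumption: with history but no judge, the existing documents are reused.
    if not history:
        fetch_new_documents = True
    else:
        fetch_new_documents = should_fetch(query) if should_fetch else False

    if fetch_new_documents:
        docs = retrieve(query)              # dense + sparse retrieval
        if rerank is not None:
            docs = rerank(query, docs)      # optional reranking
        # Optional rewrite loop: executed at most once.
        if (docs_answer_query is not None and rewrite is not None
                and not docs_answer_query(query, docs)):
            rewritten = rewrite(query)
            docs = retrieve(rewritten)
            if rerank is not None:
                docs = rerank(rewritten, docs)

    answer = generate(query, history, docs)
    return answer, history + [(query, answer)], docs


if __name__ == "__main__":
    answer, history, docs = answer_query(
        "What is RAG?", [], [],
        retrieve=lambda q: [f"document about: {q}"],
        generate=lambda q, h, d: f"Answer based on {len(d)} document(s)",
    )
    print(answer)
```

A UI would pass the returned history and documents back in on the next turn, which is what makes follow-up questions work without a new retrieval.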

RAG Me Up uses a .env file for configuration, see .env.template. The following fields can be configured:

- llm_model: This is the main LLM (instruct or chat) model to use that you will converse with. Default is LLaMa3-8B.

- llm_assistant_token: This should contain the unique query (sub)string that indicates where in a prompt template the assistant's answer starts.

- embedding_model: The model used to convert your documents' chunks into vectors that will be stored in the vector store.

- trust_remote_code: Set this to true if your LLM needs to execute remote code.

- force_cpu: When set to True, forces RAG Me Up to run fully on CPU (not recommended).

If you want to use OpenAI as LLM backend, make sure to set use_openai to True and make sure you (externally) set the environment variable OPENAI_API_KEY to be your OpenAI API Key.

If you want to use Gemini as LLM backend, make sure to set use_gemini to True and make sure you (externally) set the environment variable GOOGLE_API_KEY to be your Gemini API Key.

If you want to use Azure OpenAI as LLM backend, make sure to set use_azure to True and make sure you (externally) set the following environment variables:

- AZURE_OPENAI_API_KEY

- AZURE_OPENAI_API_VERSION

- AZURE_OPENAI_ENDPOINT

- AZURE_OPENAI_CHAT_DEPLOYMENT_NAME

If you want to use Ollama as LLM backend, make sure to install Ollama and set use_ollama to True. The model to use should be given in ollama_model.
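Since each backend needs its own settings, a quick pre-flight check can save a failed start. The sketch below maps each use_* flag to the variables named above and reports the ones that are missing. The helper names are hypothetical, and treating only the string "true" (case-insensitive) as enabled is an assumption about how the flags are parsed.

```python
import os

# Flag -> variables that must be set when the flag is enabled (from the text above).
REQUIRED_VARS = {
    "use_openai": ["OPENAI_API_KEY"],
    "use_gemini": ["GOOGLE_API_KEY"],
    "use_azure": [
        "AZURE_OPENAI_API_KEY",
        "AZURE_OPENAI_API_VERSION",
        "AZURE_OPENAI_ENDPOINT",
        "AZURE_OPENAI_CHAT_DEPLOYMENT_NAME",
    ],
    "use_ollama": ["ollama_model"],
}


def is_true(value):
    """Assumption: a flag counts as enabled when its value is 'true' in any casing."""
    return str(value).strip().lower() == "true"


def missing_backend_vars(env):
    """Return {flag: [missing variable names]} for every enabled backend."""
    missing = {}
    for flag, required in REQUIRED_VARS.items():
        if is_true(env.get(flag, "False")):
            absent = [name for name in required if not env.get(name)]
            if absent:
                missing[flag] = absent
    return missing


if __name__ == "__main__":
    # Check the current process environment.
    print(missing_backend_vars(os.environ))
```

An empty dictionary means every enabled backend has the settings it needs.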

One of the biggest, arguably unsolved, challenges of RAG is to do good provenance attribution: tracking which of the source documents retrieved from your database led to the LLM generating its answer (the most). RAG Me Up implements several ways of achieving this, each with its own pros and cons.

The following environment variables can be set for provenance attribution.

- provenance_method: Can be one of rerank, attention, similarity, llm. If rerank is False and the value of provenance_method is either rerank or none of the allowed values, provenance attribution is turned completely off.

- provenance_similarity_llm: If provenance_method is set to similarity, this model will be used to compute the similarity scores.

- provenance_include_query: Set to True or False to include the query itself when attributing provenance.

- provenance_llm_prompt: If provenance_method is set to llm, this prompt will be used to let the LLM attribute the provenance of each document in isolation.

The different provenance attribution metrics are described below.

rerank: This uses the reranker as the provenance method. While the reranking is already used when retrieving documents (if reranking is turned on), this only applies the reranker's cross-attention to the documents and the query. For provenance attribution, we use the same reranking to apply cross-attention to the answer (and potentially the query too).

attention: This is probably the most accurate way of tracking provenance, but it can only be used with open-source LLMs that can return the attention weights. The way we track provenance is by looking at the actual attention weights (of the last attention layer in the model) for each token from the answer to the document and vice versa. Optionally, we do the same for the query if provenance_include_query=True.

similarity: This method uses a sentence transformer (LM) to get dense vectors for each document as well as for the answer (and potentially the query). We then use cosine similarity to get the similarity of the document vectors to the answer (+ query).

llm: The LLM that is used to generate messages is now also used to attribute the provenance of each document in isolation. We use the provenance_llm_prompt as the prompt to ask the LLM to perform this task. Note that the outcome of this provenance method is highly influenced by the prompt and the strength of the model. As a good practice, make sure you force the LLM to return numbers on a relatively small scale (e.g. a score from 1 to 3). Using something like a percentage for each document will likely result in random outcomes.
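As an illustration of the similarity method, the sketch below scores each document by the cosine similarity of its vector to the answer vector. It assumes the vectors have already been produced by a sentence transformer, and it averages in the query similarity when a query vector is given. That averaging is an assumption; the way RAG Me Up combines answer and query scores is not specified here, and the function names are hypothetical.

```python
import math


def cosine(u, v):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def similarity_provenance(doc_vectors, answer_vector, query_vector=None):
    """Return one provenance score per document vector."""
    scores = []
    for vec in doc_vectors:
        score = cosine(vec, answer_vector)
        if query_vector is not None:
            # Assumption: answer and query similarity are weighted equally.
            score = (score + cosine(vec, query_vector)) / 2
        scores.append(score)
    return scores


if __name__ == "__main__":
    docs = [[1.0, 0.0], [0.0, 1.0]]
    print(similarity_provenance(docs, answer_vector=[1.0, 0.0]))
```

The document with the highest score is the one whose content is closest to the generated answer.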

- data_directory: The directory that contains your (initial) documents to load into the vector store.

- file_types: Comma-separated list of file types to load. Supported file types: PDF, JSON, DOCX, XSLX, PPTX, CSV, XML.

- json_schema: If you are loading JSON, this should be the schema (using jq_schema). For example, use . for the root of a JSON object if your data contains JSON objects only and .[0] for the first element in each JSON array if your data contains JSON arrays with one JSON object in them.

- json_text_content: Whether or not the JSON data should be loaded as textual content or as structured content (in case of a JSON object).

- xml_xpath: If you are loading XML, this should be the XPath of the documents to load (the tags that contain your text).

- vector_store_uri: RAG Me Up caches your vector store on disk if possible to make loading a next time faster. This is the location where the vector store is stored. Remove this file to force a reload of all your documents.

- vector_store_k: The number of documents to retrieve from the vector store.

- rerank: Set to either True or False to enable reranking.

- rerank_k: The number of documents to keep after reranking. Note that if you use reranking, this should be your final target for k and vector_store_k should be set (significantly) higher. For example, set vector_store_k to 10 and rerank_k to 3.

- rerank_model: The cross encoder reranking retrieval model to use. Sensible defaults are cross-encoder/ms-marco-TinyBERT-L-2-v2 for speed and colbert-ir/colbertv2.0 for accuracy (antoinelouis/colbert-xm for multilingual). Set this value to flashrank to use the FlashrankReranker.
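The relation between vector_store_k and rerank_k can be sketched as a two-stage funnel: retrieve a wider set, score it, keep only the best few. In the sketch below the relevance scores are passed in directly; in practice they would come from the cross encoder named in rerank_model. The function name keep_top_k is hypothetical.

```python
def keep_top_k(docs, scores, rerank_k):
    """Keep the rerank_k documents with the highest relevance scores.

    docs and scores are parallel lists; ties keep the original retrieval order.
    """
    order = sorted(range(len(docs)), key=lambda i: (-scores[i], i))
    return [docs[i] for i in order[:rerank_k]]


if __name__ == "__main__":
    # Five retrieved documents (a small vector_store_k) narrowed to rerank_k = 3.
    retrieved = ["d0", "d1", "d2", "d3", "d4"]
    relevance = [0.1, 0.9, 0.3, 0.8, 0.2]
    print(keep_top_k(retrieved, relevance, rerank_k=3))
```

Setting vector_store_k well above rerank_k gives the reranker enough candidates to choose from.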

- temperature: The chat LLM's temperature. Increase this to create more diverse answers.

- repetition_penalty: The penalty for repeating outputs in the chat answers. Some models are very sensitive to this parameter and need a value bigger than 1.0 (penalty) while others benefit from inverting it (lower than 1.0).

- max_new_tokens: This caps how many tokens the LLM can generate in its answer. More tokens mean slower throughput and more memory usage.

- rag_instruction: An instruction message for the LLM to let it know what to do. It should mention that the LLM is performing RAG and that documents will be given as input context to generate the answer from.

- rag_question_initial: The initial question prompt that will be given to the LLM only for the first question a user asks, that is, without chat history.

- rag_question_followup: This is a follow-up question the user is asking. While the context resulting from the prompt will be populated by RAG from the vector store, if chat history is present, this prompt will be used instead of rag_question_initial.

- rag_fetch_new_instruction: RAG Me Up automatically determines whether or not new documents should be fetched from the vector store or whether the user is asking a follow-up question on the already fetched documents by leveraging the same LLM that is used for chat. This environment variable determines the prompt to use to make this decision. Be very sure to instruct your LLM to answer with yes or no only and make sure your LLM is capable enough to follow this instruction.

- rag_fetch_new_question: The question prompt used in conjunction with rag_fetch_new_instruction to decide if new documents should be fetched or not.
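Because this decision relies on the LLM answering with yes or no only, it helps to parse the answer defensively. The sketch below shows one possible way to do so; the function name is hypothetical, and falling back to fetching new documents when the answer is unclear is an assumption rather than documented behavior.

```python
def parse_yes_no(llm_output, default=True):
    """Interpret an LLM answer as a boolean.

    Looks only at the first word, ignoring case and trailing punctuation.
    Returns default when the answer is neither yes nor no.
    """
    words = llm_output.strip().lower().split()
    first = words[0].strip(".,!:;\"'") if words else ""
    if first == "yes":
        return True
    if first == "no":
        return False
    return default


if __name__ == "__main__":
    print(parse_yes_no("Yes."))          # clear positive answer
    print(parse_yes_no(" no\n"))         # clear negative answer
    print(parse_yes_no("It depends."))   # unclear, falls back to the default
```

The same kind of parsing applies to any other prompt that forces a yes or no answer.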

- user_rewrite_loop: Set to either True or False to enable the rewriting of the initial query. Note that a rewrite will always occur at most once.

- rewrite_query_instruction: This is the instruction of the prompt that is used to ask the LLM to judge whether a rewrite is necessary or not. Make sure you force the LLM to answer with yes or no only.

- rewrite_query_question: This is the actual query part of the prompt that is used to ask the LLM to judge a rewrite.

- rewrite_query_prompt: If the rewrite loop is on and the LLM judges a rewrite is required, this is the instruction with question asked to the LLM to rewrite the user query into a phrasing more optimized for RAG. Make sure to instruct your model adequately.

- use_re2: Set to either True or False to enable Re2 (Re-reading) which repeats the question, generally improving the quality of the answer generated by the LLM.

- re2_prompt: The prompt used in between the question and the repeated question to signal that we are re-asking.
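The Re2 idea fits in a few lines: the question is stated, followed by the re2_prompt, followed by the question again. The sketch below assumes the three parts are joined with newlines; the exact separator RAG Me Up uses is not stated here, and the function name and example prompt text are hypothetical.

```python
def build_re2_question(question, re2_prompt):
    """Repeat the question with the re-reading prompt in between."""
    # Assumption: the parts are separated by single newlines.
    return f"{question}\n{re2_prompt}\n{question}"


if __name__ == "__main__":
    print(build_re2_question("What is RAG?", "Read the question again:"))
```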

- splitter: The Langchain document splitter to use. Supported splitters are RecursiveCharacterTextSplitter and SemanticChunker.

- chunk_size: The chunk size to use when splitting up documents for RecursiveCharacterTextSplitter.

- chunk_overlap: The chunk overlap for RecursiveCharacterTextSplitter.

- breakpoint_threshold_type: Sets the breakpoint threshold type when using the SemanticChunker. Can be one of: percentile, standard_deviation, interquartile, gradient.

- breakpoint_threshold_amount: The amount to use for the threshold type, in float. Set to None to leave default.

- number_of_chunks: The number of chunks to use for the threshold type, in int. Set to None to leave default.
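To show how chunk_size and chunk_overlap interact, here is a deliberately simplified sketch that cuts text into fixed-size character windows. It is not the RecursiveCharacterTextSplitter algorithm, which additionally prefers to break on separators such as paragraph and line breaks; the function name is hypothetical.

```python
def chunk_text(text, chunk_size, chunk_overlap):
    """Split text into windows of chunk_size characters.

    Consecutive windows share chunk_overlap characters.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be >= 0 and smaller than chunk_size")
    step = chunk_size - chunk_overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        # Stop once the current window reaches the end of the text.
        if start + chunk_size >= len(text):
            break
    return chunks


if __name__ == "__main__":
    print(chunk_text("abcdefghij", chunk_size=4, chunk_overlap=1))
```

A larger overlap keeps more context across chunk borders at the cost of storing more, partly redundant, chunks.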

While RAG evaluation is difficult and subjective to begin with, frameworks such as Ragas can give some metrics as to how well your RAG pipeline and its prompts are working, allowing us to benchmark one approach over the other quantitatively.

RAG Me Up uses Ragas to evaluate your pipeline. You can run an evaluation based on your .env using python Ragas_eval.py. The following configuration parameters can be set for evaluation:

- ragas_sample_size: The number of document chunks to use in evaluation. These are sampled from your data directory after chunking.

- ragas_qa_pairs: Ragas works on questions and ground truth answers. This parameter sets the number of such pairs to create based on the sampled document chunks.

- ragas_question_instruction: The instruction prompt used to generate the questions of the Ragas input pairs.

- ragas_question_query: The query prompt used to generate the questions of the Ragas input pairs.

- ragas_answer_instruction: The instruction prompt used to generate the answers of the Ragas input pairs.

- ragas_answer_query: The query prompt used to generate the answers of the Ragas input pairs.

We are actively looking for funding to democratize AI and advance its applications. Contact us at [email protected] if you want to invest.


Similar Open Source Tools


MultiPL-E

MultiPL-E is a system for translating unit test-driven neural code generation benchmarks to new languages. It is part of the BigCode Code Generation LM Harness and allows for evaluating Code LLMs using various benchmarks. The tool supports multiple versions with improvements and new language additions, providing a scalable and polyglot approach to benchmarking neural code generation. Users can access a tutorial for direct usage and explore the dataset of translated prompts on the Hugging Face Hub.

wdoc

wdoc is a powerful Retrieval-Augmented Generation (RAG) system designed to summarize, search, and query documents across various file types. It aims to handle large volumes of diverse document types, making it ideal for researchers, students, and professionals dealing with extensive information sources. wdoc uses LangChain to process and analyze documents, supporting tens of thousands of documents simultaneously. The system includes features like high recall and specificity, support for various Large Language Models (LLMs), advanced RAG capabilities, advanced document summaries, and support for multiple tasks. It offers markdown-formatted answers and summaries, customizable embeddings, extensive documentation, scriptability, and runtime type checking. wdoc is suitable for power users seeking document querying capabilities and AI-powered document summaries.

ai-rag-chat-evaluator

This repository contains scripts and tools for evaluating a chat app that uses the RAG architecture. It provides parameters to assess the quality and style of answers generated by the chat app, including system prompt, search parameters, and GPT model parameters. The tools facilitate running evaluations, with examples of evaluations on a sample chat app. The repo also offers guidance on cost estimation, setting up the project, deploying a GPT-4 model, generating ground truth data, running evaluations, and measuring the app's ability to say 'I don't know'. Users can customize evaluations, view results, and compare runs using provided tools.

ReasonablePlanningAI

Reasonable Planning AI is a robust design and data-driven AI solution for game developers. It provides an AI Editor that allows creating AI without Blueprints or C++. The AI can think for itself, plan actions, adapt to the game environment, and act dynamically. It consists of Core components like RpaiGoalBase, RpaiActionBase, RpaiPlannerBase, RpaiReasonerBase, and RpaiBrainComponent, as well as Composer components for easier integration by Game Designers. The tool is extensible, cross-compatible with Behavior Trees, and offers debugging features like visual logging and heuristics testing. It follows a simple path of execution and supports versioning for stability and compatibility with Unreal Engine versions.

Mapperatorinator

Mapperatorinator is a multi-model framework that uses spectrogram inputs to generate fully featured osu! beatmaps for all gamemodes and assist modding beatmaps. The project aims to automatically generate rankable quality osu! beatmaps from any song with a high degree of customizability. The tool is built upon osuT5 and osu-diffusion, utilizing GPU compute and instances on vast.ai for development. Users can responsibly use AI in their beatmaps with this tool, ensuring disclosure of AI usage. Installation instructions include cloning the repository, creating a virtual environment, and installing dependencies. The tool offers a Web GUI for user-friendly experience and a Command-Line Inference option for advanced configurations. Additionally, an Interactive CLI script is available for terminal-based workflow with guided setup. The tool provides generation tips and features MaiMod, an AI-driven modding tool for osu! beatmaps. Mapperatorinator tokenizes beatmaps, utilizes a model architecture based on HF Transformers Whisper model, and offers multitask training format for conditional generation. The tool ensures seamless long generation, refines coordinates with diffusion, and performs post-processing for improved beatmap quality. Super timing generator enhances timing accuracy, and LoRA fine-tuning allows adaptation to specific styles or gamemodes. The project acknowledges credits and related works in the osu! community.

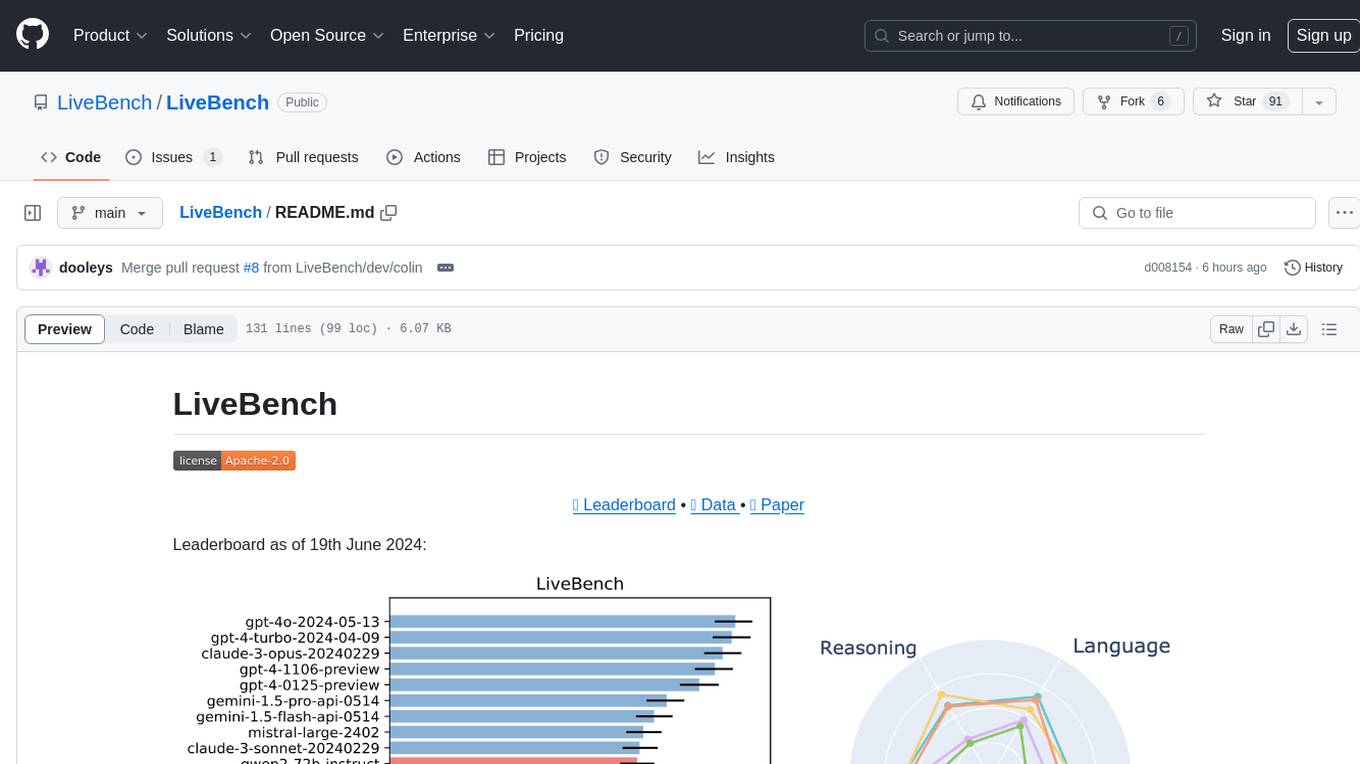

LiveBench

LiveBench is a benchmark tool designed for Large Language Models (LLMs) with a focus on limiting contamination through monthly new questions based on recent datasets, arXiv papers, news articles, and IMDb movie synopses. It provides verifiable, objective ground-truth answers for accurate scoring without an LLM judge. The tool offers 18 diverse tasks across 6 categories and promises to release more challenging tasks over time. LiveBench is built on FastChat's llm_judge module and incorporates code from LiveCodeBench and IFEval.

LLM-LieDetector

This repository contains code for reproducing experiments on lie detection in black-box LLMs by asking unrelated questions. It includes Q/A datasets, prompts, and fine-tuning datasets for generating lies with language models. The lie detectors rely on asking binary 'elicitation questions' to diagnose whether the model has lied. The code covers generating lies from language models, training and testing lie detectors, and generalization experiments. It requires access to GPUs and OpenAI API calls for running experiments with open-source models. Results are stored in the repository for reproducibility.

wtffmpeg

wtffmpeg is a command-line tool that uses a Large Language Model (LLM) to translate plain-English descriptions of video or audio tasks into actual, executable ffmpeg commands. It aims to streamline the process of generating ffmpeg commands by allowing users to describe what they want to do in natural language, review the generated command, optionally edit it, and then decide whether to run it. The tool provides an interactive REPL interface where users can input their commands, retain conversational context, and history, and control the level of interactivity. wtffmpeg is designed to assist users in efficiently working with ffmpeg commands, reducing the need to search for solutions, read lengthy explanations, and manually adjust commands.

ezkl

EZKL is a library and command-line tool for doing inference for deep learning models and other computational graphs in a zk-snark (ZKML). It enables the following workflow:

1. Define a computational graph, for instance a neural network (but really any arbitrary set of operations), as you would normally in pytorch or tensorflow.

2. Export the final graph of operations as an .onnx file and some sample inputs to a .json file.

3. Point ezkl to the .onnx and .json files to generate a ZK-SNARK circuit with which you can prove statements such as:

- "I ran this publicly available neural network on some private data and it produced this output"

- "I ran my private neural network on some public data and it produced this output"

- "I correctly ran this publicly available neural network on some public data and it produced this output"

In the backend we use the collaboratively-developed Halo2 as a proof system. The generated proofs can then be verified with much less computational resources, including on-chain (with the Ethereum Virtual Machine), in a browser, or on a device.

chronon

Chronon is a platform that simplifies and improves ML workflows by providing a central place to define features, ensuring point-in-time correctness for backfills, simplifying orchestration for batch and streaming pipelines, offering easy endpoints for feature fetching, and guaranteeing and measuring consistency. It offers benefits over other approaches by enabling the use of a broad set of data for training, handling large aggregations and other computationally intensive transformations, and abstracting away the infrastructure complexity of data plumbing.

curategpt

CurateGPT is a prototype web application and framework designed for general purpose AI-guided curation and curation-related operations over collections of objects. It provides functionalities for loading example data, building indexes, interacting with knowledge bases, and performing tasks such as chatting with a knowledge base, querying Pubmed, interacting with a GitHub issue tracker, term autocompletion, and all-by-all comparisons. The tool is built to work best with the OpenAI gpt-4 model and OpenAI ada-text-embedding-002 for embedding, but also supports alternative models through a plugin architecture.

eval-dev-quality

DevQualityEval is an evaluation benchmark and framework designed to compare and improve the quality of code generation of Large Language Models (LLMs). It provides developers with a standardized benchmark to enhance real-world usage in software development and offers users metrics and comparisons to assess the usefulness of LLMs for their tasks. The tool evaluates LLMs' performance in solving software development tasks and measures the quality of their results through a point-based system. Users can run specific tasks, such as test generation, across different programming languages to evaluate LLMs' language understanding and code generation capabilities.

REINVENT4

REINVENT is a molecular design tool for de novo design, scaffold hopping, R-group replacement, linker design, molecule optimization, and other small molecule design tasks. It uses a Reinforcement Learning (RL) algorithm to generate optimized molecules compliant with a user-defined property profile defined as a multi-component score. Transfer Learning (TL) can be used to create or pre-train a model that generates molecules closer to a set of input molecules.

For similar tasks


mindsdb

MindsDB is a platform for customizing AI from enterprise data. You can create, serve, and fine-tune models in real-time from your database, vector store, and application data. MindsDB "enhances" SQL syntax with AI capabilities to make it accessible for developers worldwide. With MindsDB’s nearly 200 integrations, any developer can create AI customized for their purpose, faster and more securely. Their AI systems will constantly improve themselves — using companies’ own data, in real-time.

training-operator

Kubeflow Training Operator is a Kubernetes-native project for fine-tuning and scalable distributed training of machine learning (ML) models created with various ML frameworks such as PyTorch, Tensorflow, XGBoost, MPI, Paddle and others. Training Operator allows you to use Kubernetes workloads to effectively train your large models via Kubernetes Custom Resources APIs or using Training Operator Python SDK.

Note: Before v1.2 release, Kubeflow Training Operator only supports TFJob on Kubernetes.

- For a complete reference of the custom resource definitions, please refer to the API Definition.

  - TensorFlow API Definition

  - PyTorch API Definition

  - Apache MXNet API Definition

  - XGBoost API Definition

  - MPI API Definition

  - PaddlePaddle API Definition

- For details of all-in-one operator design, please refer to the All-in-one Kubeflow Training Operator.

- For details on its observability, please refer to the monitoring design doc.

helix

HelixML is a private GenAI platform that allows users to deploy the best of open AI in their own data center or VPC while retaining complete data security and control. It includes support for fine-tuning models with drag-and-drop functionality. HelixML brings the best of open source AI to businesses in an ergonomic and scalable way, optimizing the tradeoff between GPU memory and latency.

nntrainer

NNtrainer is a software framework for training neural network models on devices with limited resources. It enables on-device fine-tuning of neural networks using user data for personalization. NNtrainer supports various machine learning algorithms and provides examples for tasks such as few-shot learning, ResNet, VGG, and product rating. It is optimized for embedded devices and utilizes CBLAS and CUBLAS for accelerated calculations. NNtrainer is open source and released under the Apache License version 2.0.

petals

Petals is a tool that allows users to run large language models at home in a BitTorrent-style manner. It enables fine-tuning and inference up to 10x faster than offloading. Users can generate text with distributed models like Llama 2, Falcon, and BLOOM, and fine-tune them for specific tasks directly from their desktop computer or Google Colab. Petals is a community-run system that relies on people sharing their GPUs to increase its capacity and offer a distributed network for hosting model layers.

LLaVA-pp

This repository, LLaVA++, extends the visual capabilities of the LLaVA 1.5 model by incorporating the latest LLMs, Phi-3 Mini Instruct 3.8B, and LLaMA-3 Instruct 8B. It provides various models for instruction-following LMMs and academic-task-oriented datasets, along with training scripts for Phi-3-V and LLaMA-3-V. The repository also includes installation instructions and acknowledgments to related open-source contributions.

KULLM

KULLM (구름) is a Korean Large Language Model developed by Korea University NLP & AI Lab and HIAI Research Institute. It is based on the upstage/SOLAR-10.7B-v1.0 model and has been fine-tuned for instruction. The model has been trained on 8×A100 GPUs and is capable of generating responses in Korean language. KULLM exhibits hallucination and repetition phenomena due to its decoding strategy. Users should be cautious as the model may produce inaccurate or harmful results. Performance may vary in benchmarks without a fixed system prompt.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models. From images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features:

- Self-contained, with no need for a DBMS or cloud service.

- OpenAPI interface, easy to integrate with existing infrastructure (e.g. Cloud IDE).

- Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.