LLMGA

This project is the official implementation of 'LLMGA: Multimodal Large Language Model based Generation Assistant', ECCV2024


LLMGA (Multimodal Large Language Model-based Generation Assistant) is a tool that leverages Large Language Models (LLMs) to assist users in image generation and editing. It provides detailed language generation prompts for precise control over Stable Diffusion (SD), resulting in more intricate and precise content in generated images. The tool curates a dataset for prompt refinement, similar image generation, inpainting & outpainting, and visual question answering. It offers a two-stage training scheme to optimize SD alignment and a reference-based restoration network to alleviate texture, brightness, and contrast disparities in image editing. LLMGA shows promising generative capabilities and enables wider applications in an interactive manner.

README:

Bin Xia, Shiyin Wang, Yingfan Tao, Yitong Wang, and Jiaya Jia

New Version (Accepted by ECCV2024):

- [x] [2024.07.06] The finetuned SD15 models have been released, including SD15-T2I and SD15-inpainting. Notably, our SD15-T2I model can also be used for instruction-based editing of LLMGA.

- [x] [2024.07.06] The finetuned SDXL models have been released, including SDXL-T2I and SDXL-inpainting.

- [x] [2024.07.06] The pre-trained models, which further support Chinese (obtained by further fine-tuning on mixed Chinese and English data), have been released, including llmga-cn-vicuna 7b, llmga-cn-llama3 8b, llmga-cn-gemma 2b, and llmga-cn-qwen2 0.5b.

- [x] [2024.07.06] We release the new version of LLMGA's training datasets, including texts and images.

- [x] [2024.07.05] The pre-trained models have been released, including llmga-vicuna 7b, llmga-mistral 7b, llmga-llama3 8b, llmga-qwen2 0.5b, llmga-qwen2 1.5b, llmga-qwen2 7b, llmga-phi3 3b, and llmga-gemma 2b.

- [x] [2024.07.05] The code has been updated.

- [x] [2024.07.04] I am organizing and uploading the new version of the LLMGA code, dataset, and models, and will post a status update when this is complete; please allow a few days. Notably, in this new version we build LLMGA on different base LLMs, such as Llama2 7b, Mistral 7b, Llama3 8b, Qwen2 0.5b, Qwen2 1.5b, Qwen2 7b, Phi3 3b, and Gemma 2b. They differ in performance, model size, and commercial license terms, so there should be one that fits your usage scenario.

Old Version:

- [x] [2023.12.20] We release LLMGA's [training datasets].

- [x] [2023.12.20] We release the Gradio code for LLMGA7b-SDXL-T2I.

- [x] [2023.12.08] We release LLMGA7b-SDXL-T2I [demo].

- [x] [2023.11.30] We have released the code for DiffRIR. It can effectively eliminate differences in brightness, contrast, and texture between generated and preserved regions in inpainting and outpainting. Considering its applicability to projects beyond LLMGA, we have open-sourced it on GitHub.

- [x] [2023.11.29] The models are released at [Huggingface].

- [x] [2023.11.29] The training and inference code is released.

- [x] [2023.11.29] We will upload all models, code, and data within a week and further refine this project.

- [x] [2023.11.28] GitHub repo is created.

Abstract: In this paper, we introduce a Multimodal Large Language Model-based Generation Assistant (LLMGA), leveraging the vast reservoir of knowledge and proficiency in reasoning, comprehension, and response inherent in Large Language Models (LLMs) to assist users in image generation and editing. Diverging from existing approaches where Multimodal Large Language Models (MLLMs) generate fixed-size embeddings to control Stable Diffusion (SD), our LLMGA provides a detailed language generation prompt for precise control over SD. This not only augments LLM context understanding but also reduces noise in generation prompts, yields images with more intricate and precise content, and elevates the interpretability of the network. To this end, we curate a comprehensive dataset comprising prompt refinement, similar image generation, inpainting & outpainting, and instruction-based editing. Moreover, we propose a two-stage training scheme. In the first stage, we train the MLLM to grasp the properties of image generation and editing, enabling it to generate detailed prompts. In the second stage, we optimize SD to align with the MLLM's generation prompts. Additionally, we propose a reference-based restoration network to alleviate texture, brightness, and contrast disparities between generated and preserved regions during inpainting and outpainting. Extensive results show that LLMGA has promising generation and editing capabilities and can enable more flexible and expansive applications in an interactive manner.

- [x] Generation Assistant. As a unified system, LLMGA can generate and edit images using methods such as Text-to-Image (T2I), inpainting, outpainting, and instruction-based editing through conversational interactions with users. By leveraging LLMGA's extensive knowledge and understanding of image design, users can easily produce and revise images until they are satisfied with the result.

- [x] Design Expert. LLMGA incorporates an extensive array of image design data, offering deep insights for a wide range of design tasks, including logo creation, game character design, poster design, T-shirt design, infographic design, and more.

- [x] Illustration Generation. LLMGA can interactively generate story illustrations based on user-input story snippets.

- [x] Picture Book Generation. With a single user instruction, LLMGA can generate an interwoven storybook of text and illustrations.

- [x] Multilingual Support. Through multilingual adaptation of LLMGA, the T2I and editing models can generate content from Chinese-language instructions.

- [x] Flexible Expansion. LLMGA offers enhanced flexibility by integrating with external plugins like ControlNet, enabling a wider range of functionalities.

- [x] To be continued ......

- [x] Support gradio demo.

- [ ] Support more generation models

Please follow the instructions below to install the required packages.

- Clone this repository

git clone https://github.com/dvlab-research/LLMGA.git

- Install Package

conda create -n llmga python=3.9 -y

conda activate llmga

cd LLMGA

pip install --upgrade pip # enable PEP 660 support

pip install -e .

cd ./llmga/diffusers

pip install .

- Install additional packages for training cases

pip install -e ".[train]"

pip install -r requirements.txt

pip install flash-attn --no-build-isolation

pip install datasets

pip install albumentations

pip install ninja

We provide the training data for LLMGA training.

Please download LLMGA datasets and LLaVA pretrain datasets.

In addition, download the LLaVA1.5 instruction tuning dataset llava_v1_5_mix665k.json, and download the images from the constituent datasets:

- COCO: train2017

- GQA: images

- OCR-VQA: download script; we save all files as .jpg

- TextVQA: train_val_images

- VisualGenome: part1, part2.

Please organize these downloaded data as in Structure.

We recommend downloading the pretrained MLP projector weights and placing them in ./checkpoints, following Structure.

Please download MLLM Models and SD models from the following links. For example, you can download LLMGA-MLLM7b and LLMGA-SDXL-T2I to realize LLMGA7b-T2I functionality. Please organize them as in Structure.

| MLLM Model (support English) | Pretrained Models |
|---|---|
| llmga-vicuna 7b | Download |
| llmga-mistral 7b | Download |
| llmga-llama3 8b | Download |
| llmga-qwen2 0.5b | Download |
| llmga-qwen2 1.5b | Download |
| llmga-qwen2 7b | Download |
| llmga-phi3 3b | Download |
| llmga-gemma 2b | Download |

| MLLM Model (further support Chinese and English) | Pretrained Models |
|---|---|
| llmga-cn-vicuna 7b | Download |
| llmga-cn-llama3 8b | Download |
| llmga-cn-gemma 2b | Download |
| llmga-cn-qwen2 0.5b | Download |

| SD Model | Pretrained Models |
|---|---|
| LLMGA-SD15-T2I | Download |
| LLMGA-SD15-Inpainting | Download |
| LLMGA-SDXL-T2I | Download |
| LLMGA-SDXL-Inpainting | Download |

The folder structure should be organized as follows before training.

LLMGA

├── llmga

├── scripts

├── work_dirs

├── checkpoints

│ ├── llmga-Phi-3-mini-128k-pretrain

│ ├── llmga-Qwen2-0.5B-pretrain

│ ├── llmga-llama3-8b-pretrain

│ ├── llmga-mistral-pretrain

│ ├── llmga-vicuna-7b-v1.5-pretrain

│ ├── llmga-Phi-3-mini-128k-full-finetune

│ ├── llmga-Qwen2-0.5B-full-finetune

│ ├── llmga-llama3-8b-it-full-finetune

│ ├── llmga-mistral_instruct-full-finetune

│ ├── llmga-vicuna-7b-v1.5-full-finetune

│ ├── llmga-cn-vicuna-7b-v1.5-full-finetune

│ ├── llmga-cn-Qwen2-0.5B-full-finetune

│ ├── llmga-sdxl-t2i

│ ├── llmga-sd15-inpainting-v2

│ ├── llmga-sd15-t2i-v2

├── data

│ ├── jsons

│ │ ├── llmga-data

│ │ │ ├── Edit/train.json

│ │ │ ├── inpainting/train.json

│ │ │ ├── SG/train.json

│ │ │ ├── T2I/train.json

│ │ ├── text-data

│ │ │ ├── alpaca_gpt4_sharegpt_en_clean2.json

│ │ │ ├── lima.json

│ │ │ ├── oasst2.json

│ │ ├── llava_v1_5_mix665k.json

│ ├── llmga-imgs

│ │ ├── COCO

│ │ ├── LAION

│ │ ├── JourneyDB

│ ├── llava_pretrain

│ │ ├── images

│ ├── llava-imgs

│ │ ├── coco

│ │ │ ├── train2017

│ │ ├── gqa

│ │ │ ├── images

│ │ ├── ocr_vqa

│ │ │ ├── images

│ │ ├── textvqa

│ │ │ ├── train_images

│ │ ├── vg

│ │ │ ├── VG_100K

│ │ │ ├── VG_100K_2
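
Before launching training, it can help to confirm that the data layout matches the tree above. The following is a minimal sketch and is not part of the LLMGA repository: the function names `llmga_expected_paths` and `check_llmga_layout` are hypothetical, and the path list is simply copied from the tree (checkpoints are left out because the ones you need depend on the model you train).

```shell
#!/usr/bin/env bash
# Sketch only: these helpers are illustrative and do not ship with LLMGA.

# Data paths copied from the folder tree, relative to the repository root.
llmga_expected_paths() {
  cat <<'EOF'
data/jsons/llmga-data/Edit/train.json
data/jsons/llmga-data/inpainting/train.json
data/jsons/llmga-data/SG/train.json
data/jsons/llmga-data/T2I/train.json
data/jsons/llava_v1_5_mix665k.json
data/llmga-imgs/COCO
data/llmga-imgs/LAION
data/llmga-imgs/JourneyDB
data/llava_pretrain/images
data/llava-imgs/coco/train2017
data/llava-imgs/gqa/images
data/llava-imgs/ocr_vqa/images
data/llava-imgs/textvqa/train_images
data/llava-imgs/vg/VG_100K
data/llava-imgs/vg/VG_100K_2
EOF
}

# Print one "missing: <path>" line per absent entry.
# Returns 0 only when every expected path exists under the given root.
check_llmga_layout() {
  root="${1:-.}"
  report="$(llmga_expected_paths | while IFS= read -r rel; do
    [ -e "$root/$rel" ] || echo "missing: $rel"
  done)"
  if [ -n "$report" ]; then
    echo "$report"
    return 1
  fi
  return 0
}
```

Run it from anywhere with `check_llmga_layout /path/to/LLMGA && echo "layout OK"`; any missing entries are listed one per line.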

LLMGA is trained on 8 A100 GPUs with 80GB memory. To train on fewer GPUs, you can reduce the per_device_train_batch_size and increase the gradient_accumulation_steps accordingly. Always keep the global batch size the same: per_device_train_batch_size x gradient_accumulation_steps x num_gpus.
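
As a worked illustration of the rule above, the sketch below computes the global batch size and the gradient_accumulation_steps needed to preserve it on a different number of GPUs. The function names and the example numbers (a per-device batch of 16 on 8 GPUs) are assumptions chosen for illustration; check the scripts in ./scripts for the values actually used.

```shell
#!/usr/bin/env bash
# Sketch only: helper names and example numbers are hypothetical.

# global batch = per_device_train_batch_size x gradient_accumulation_steps x num_gpus
global_batch_size() {
  # $1 = per_device_train_batch_size, $2 = gradient_accumulation_steps, $3 = num_gpus
  echo $(( $1 * $2 * $3 ))
}

# Print the gradient_accumulation_steps that keeps the target global batch
# for a given per-device batch and GPU count; fail if it is not an integer.
accumulation_steps_for() {
  target="$1"
  per_device="$2"
  gpus="$3"
  denom=$(( per_device * gpus ))
  if [ $(( target % denom )) -ne 0 ]; then
    echo "target $target is not divisible by $denom" >&2
    return 1
  fi
  echo $(( target / denom ))
}

# Assumed reference setup: 16 per device, 1 accumulation step, 8 GPUs.
reference=$(global_batch_size 16 1 8)
echo "reference global batch: $reference"
# Same global batch on 2 GPUs with a per-device batch of 8.
echo "steps on 2 GPUs: $(accumulation_steps_for "$reference" 8 2)"
```

With these assumed numbers the reference global batch is 128, and `accumulation_steps_for 128 8 2` prints 8: on 2 GPUs with a per-device batch of 8, eight accumulation steps keep the global batch at 128.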

Please make sure you download and organize the data following Preparation before training. Here, we just take training llmga vicuna 7b as an example. For more model training scripts, please check the ./scripts folder.

bash scripts/pretrain_vicuna_7b.sh

bash scripts/train_llmga_s1_7b_vicuna.sh

Train LLMGA based on SD1.5-T2I:

bash scripts/train_llmga_s2_sd15_t2i.sh

Train LLMGA based on SD1.5-Inpainting:

bash scripts/train_llmga_s2_sd15_inpaint.sh

Use LLMGA without the Gradio interface. It also supports multiple GPUs and 4-bit and 8-bit quantized inference. Here, we give some examples for T2I, inpainting, and instruction-based editing. For more model inference scripts, please check the ./scripts folder.

For the T2I generation task:

bash scripts/test-llmga-sdxl-t2i.sh

For the inpainting or outpainting task:

bash scripts/test-llmga-sd15-inpainting.sh

For the instruction-based editing task:

bash scripts/test-llmga-sd15-editing.sh

To launch the Gradio T2I demo:

bash scripts/run_gradio_t2i.sh

If you find this repo useful for your research, please consider citing the paper:

@article{xia2023llmga,

title={LLMGA: Multimodal Large Language Model based Generation Assistant},

author={Xia, Bin and Wang, Shiyin and Tao, Yingfan and Wang, Yitong and Jia, Jiaya},

journal={ECCV},

year={2024}

}

We would like to thank the following repos for their great work:

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for LLMGA

Similar Open Source Tools

LLMGA

LLMGA (Multimodal Large Language Model-based Generation Assistant) is a tool that leverages Large Language Models (LLMs) to assist users in image generation and editing. It provides detailed language generation prompts for precise control over Stable Diffusion (SD), resulting in more intricate and precise content in generated images. The tool curates a dataset for prompt refinement, similar image generation, inpainting & outpainting, and visual question answering. It offers a two-stage training scheme to optimize SD alignment and a reference-based restoration network to alleviate texture, brightness, and contrast disparities in image editing. LLMGA shows promising generative capabilities and enables wider applications in an interactive manner.

GPT4Point

GPT4Point is a unified framework for point-language understanding and generation. It aligns 3D point clouds with language, providing a comprehensive solution for tasks such as 3D captioning and controlled 3D generation. The project includes an automated point-language dataset annotation engine, a novel object-level point cloud benchmark, and a 3D multi-modality model. Users can train and evaluate models using the provided code and datasets, with a focus on improving models' understanding capabilities and facilitating the generation of 3D objects.

aiconfigurator

The `aiconfigurator` tool assists in finding a strong starting configuration for disaggregated serving in AI deployments. It helps optimize throughput at a given latency by evaluating thousands of configurations based on model, GPU count, and GPU type. The tool models LLM inference using collected data for a target machine and framework, running via CLI and web app. It generates configuration files for deployment with Dynamo, offering features like customized configuration, all-in-one automation, and tuning with advanced features. The tool estimates performance by breaking down LLM inference into operations, collecting operation execution times, and searching for strong configurations. Supported features include models like GPT and operations like attention, KV cache, GEMM, AllReduce, embedding, P2P, element-wise, MoE, MLA BMM, TRTLLM versions, and parallel modes like tensor-parallel and pipeline-parallel.

SoM-LLaVA

SoM-LLaVA is a new data source and learning paradigm for Multimodal LLMs, empowering open-source Multimodal LLMs with Set-of-Mark prompting and improved visual reasoning ability. The repository provides a new dataset that is complementary to existing training sources, enhancing multimodal LLMs with Set-of-Mark prompting and improved general capacity. By adding 30k SoM data to the visual instruction tuning stage of LLaVA, the tool achieves 1% to 6% relative improvements on all benchmarks. Users can train SoM-LLaVA via command line and utilize the implementation to annotate COCO images with SoM. Additionally, the tool can be loaded in Huggingface for further usage.

screenpipe

Screenpipe is an open source application that turns your computer into a personal AI, capturing screen and audio to create a searchable memory of your activities. It allows you to remember everything, search with AI, and keep your data 100% local. The tool is designed for knowledge workers, developers, researchers, people with ADHD, remote workers, and anyone looking for a private, local-first alternative to cloud-based AI memory tools.

zeus

Zeus is a library for measuring the energy consumption of Deep Learning workloads and optimizing their energy consumption. It provides functionalities for energy and power measurement, time and energy optimization, device abstraction, utility functions, and more. Zeus is part of The ML.ENERGY Initiative and has been recognized in various research papers and conferences. It offers a Docker image with all dependencies, working examples for integration, and ongoing research to enhance its capabilities.

NExT-GPT

NExT-GPT is an end-to-end multimodal large language model that can process input and generate output in various combinations of text, image, video, and audio. It leverages existing pre-trained models and diffusion models with end-to-end instruction tuning. The repository contains code, data, and model weights for NExT-GPT, allowing users to work with different modalities and perform tasks like encoding, understanding, reasoning, and generating multimodal content.

WebAI-to-API

This project implements a web API that offers a unified interface to Google Gemini and Claude 3. It provides a self-hosted, lightweight, and scalable solution for accessing these AI models through a streaming API. The API supports both Claude and Gemini models, allowing users to interact with them in real-time. The project includes a user-friendly web UI for configuration and documentation, making it easy to get started and explore the capabilities of the API.

astrsk

astrsk is a tool that pushes the boundaries of AI storytelling by offering advanced AI agents, customizable response formatting, and flexible prompt editing for immersive roleplaying experiences. It provides complete AI agent control, a visual flow editor for conversation flows, and ensures 100% local-first data storage. The tool is true cross-platform with support for various AI providers and modern technologies like React, TypeScript, and Tailwind CSS. Coming soon features include cross-device sync, enhanced session customization, and community features.

airunner

AI Runner is a multi-modal AI interface that allows users to run open-source large language models and AI image generators on their own hardware. The tool provides features such as voice-based chatbot conversations, text-to-speech, speech-to-text, vision-to-text, text generation with large language models, image generation capabilities, image manipulation tools, utility functions, and more. It aims to provide a stable and user-friendly experience with security updates, a new UI, and a streamlined installation process. The application is designed to run offline on users' hardware without relying on a web server, offering a smooth and responsive user experience.

X-AnyLabeling

X-AnyLabeling is a robust annotation tool that seamlessly incorporates an AI inference engine alongside an array of sophisticated features. Tailored for practical applications, it is committed to delivering comprehensive, industrial-grade solutions for image data engineers. This tool excels in swiftly and automatically executing annotations across diverse and intricate tasks.

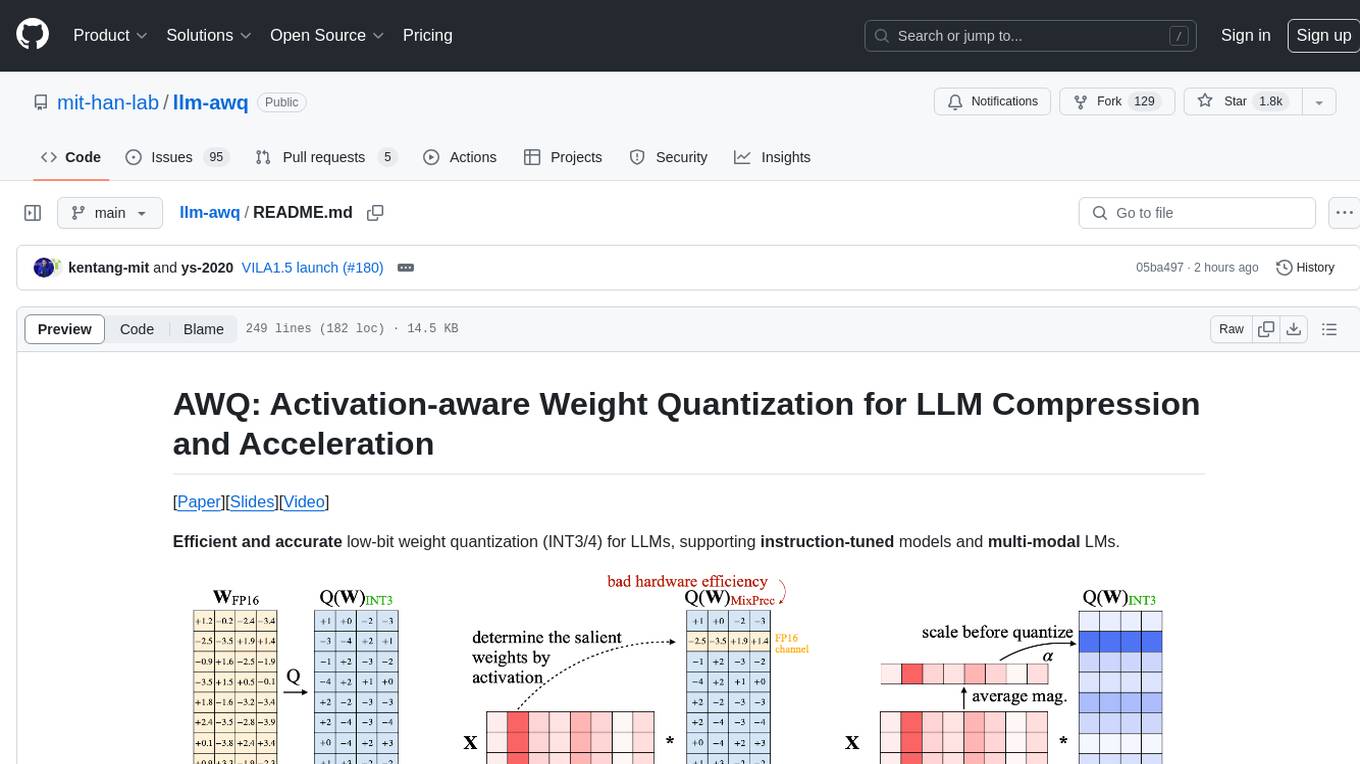

llm-awq

AWQ (Activation-aware Weight Quantization) is a tool designed for efficient and accurate low-bit weight quantization (INT3/4) for Large Language Models (LLMs). It supports instruction-tuned models and multi-modal LMs, providing features such as AWQ search for accurate quantization, pre-computed AWQ model zoo for various LLMs, memory-efficient 4-bit linear in PyTorch, and efficient CUDA kernel implementation for fast inference. The tool enables users to run large models on resource-constrained edge platforms, delivering more efficient responses with LLM/VLM chatbots through 4-bit inference.

univer

Univer is an isomorphic full-stack framework designed for creating and editing spreadsheets, documents, and slides across web and server. It is highly extensible, high-performance, and can be embedded into applications. Univer offers a wide range of features including formulas, conditional formatting, data validation, collaborative editing, printing, import & export, and more. It supports multiple languages and provides a distraction-free editing experience with a clean interface. Univer is suitable for data analysts, software developers, project managers, content creators, and educators.

roo-code-memory-bank

Roo Code Memory Bank is a tool designed for AI-assisted development to maintain project context across sessions. It provides a structured memory system integrated with VS Code, ensuring deep understanding of the project for the AI assistant. The tool includes key components such as Memory Bank for persistent storage, Mode Rules for behavior configuration, VS Code Integration for seamless development experience, and Real-time Updates for continuous context synchronization. Users can configure custom instructions, initialize the Memory Bank, and organize files within the project root directory. The Memory Bank structure includes files for tracking session state, technical decisions, project overview, progress tracking, and optional project brief and system patterns documentation. Features include persistent context, smart workflows for specialized tasks, knowledge management with structured documentation, and cross-referenced project knowledge. Pro tips include handling multiple projects, utilizing Debug mode for troubleshooting, and managing session updates for synchronization. The tool aims to enhance AI-assisted development by providing a comprehensive solution for maintaining project context and facilitating efficient workflows.

oreilly_live_training_agents

This repository provides resources and notebooks for O'Reilly Live Training on getting started with LLM Agents using LangChain & LangGraph. It includes setup instructions, core learning paths, additional topics, repository structure, and additional resources for learning and deploying LangGraph agents.

MiniAI-Face-Recognition-LivenessDetection-ServerSDK

The MiniAiLive Face Recognition LivenessDetection Server SDK provides system integrators with fast, flexible, and extremely precise facial recognition that can be deployed across various scenarios, including security, access control, public safety, fintech, smart retail, and home protection. The SDK is fully on-premise, meaning all processing happens on the hosting server, and no data leaves the server. The project structure includes bin, cpp, flask, model, python, test_image, and Dockerfile directories. To set up the project on Linux, download the repo, install system dependencies, and copy libraries into the system folder. For Windows, contact MiniAiLive via email. The C++ example involves replacing the license key in main.cpp, building the project, and running it. The Python example requires installing dependencies and running the project. The Python Flask example involves replacing the license key in app.py, installing dependencies, and running the project. The Docker Flask example includes building the docker image and running it. To request a license, contact MiniAiLive. Contributions to the project are welcome by following specific steps. An online demo is available at https://demo.miniai.live. Related products include MiniAI-Face-Recognition-LivenessDetection-AndroidSDK, MiniAI-Face-Recognition-LivenessDetection-iOS-SDK, MiniAI-Face-LivenessDetection-AndroidSDK, MiniAI-Face-LivenessDetection-iOS-SDK, MiniAI-Face-Matching-AndroidSDK, and MiniAI-Face-Matching-iOS-SDK. MiniAiLive is a leading AI solutions company specializing in computer vision and machine learning technologies.

For similar tasks

InternGPT

InternGPT (iGPT) is a pointing-language-driven visual interactive system that enhances communication between users and chatbots by incorporating pointing instructions. It improves chatbot accuracy in vision-centric tasks, especially in complex visual scenarios. The system includes an auxiliary control mechanism to enhance the control capability of the language model. InternGPT features a large vision-language model called Husky, fine-tuned for high-quality multi-modal dialogue. Users can interact with ChatGPT by clicking, dragging, and drawing using a pointing device, leading to efficient communication and improved chatbot performance in vision-related tasks.

LLMGA

LLMGA (Multimodal Large Language Model-based Generation Assistant) is a tool that leverages Large Language Models (LLMs) to assist users in image generation and editing. It provides detailed language generation prompts for precise control over Stable Diffusion (SD), resulting in more intricate and precise content in generated images. The tool curates a dataset for prompt refinement, similar image generation, inpainting & outpainting, and visual question answering. It offers a two-stage training scheme to optimize SD alignment and a reference-based restoration network to alleviate texture, brightness, and contrast disparities in image editing. LLMGA shows promising generative capabilities and enables wider applications in an interactive manner.

transformers

Transformers is a state-of-the-art pretrained models library that acts as the model-definition framework for machine learning models in text, computer vision, audio, video, and multimodal tasks. It centralizes model definition for compatibility across various training frameworks, inference engines, and modeling libraries. The library simplifies the usage of new models by providing simple, customizable, and efficient model definitions. With over 1M+ Transformers model checkpoints available, users can easily find and utilize models for their tasks.

InvokeAI

InvokeAI is a leading creative engine built to empower professionals and enthusiasts alike. Generate and create stunning visual media using the latest AI-driven technologies. InvokeAI offers an industry leading Web Interface, interactive Command Line Interface, and also serves as the foundation for multiple commercial products.

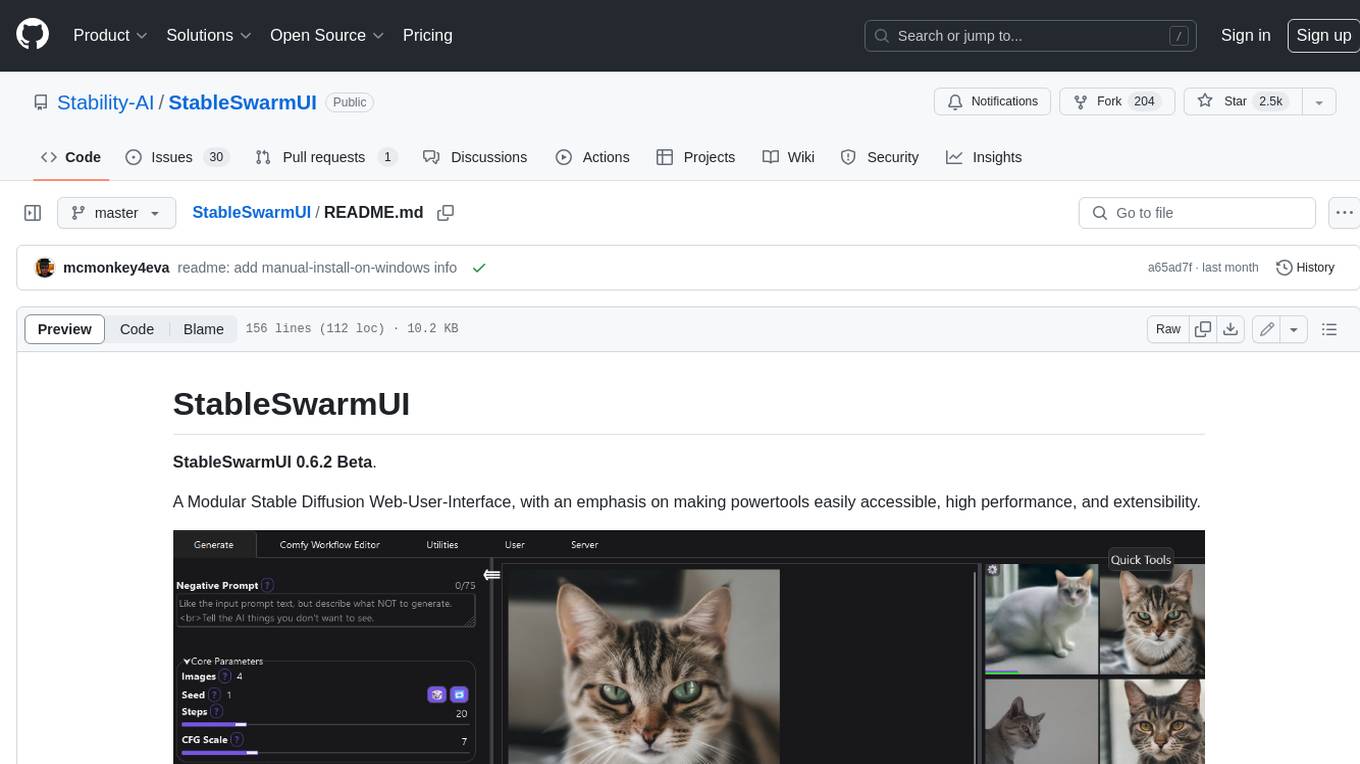

StableSwarmUI

StableSwarmUI is a modular Stable Diffusion web user interface that emphasizes making power tools easily accessible, high performance, and extensible. It is designed to be a one-stop-shop for all things Stable Diffusion, providing a wide range of features and capabilities to enhance the user experience.

civitai

Civitai is a platform where people can share their stable diffusion models (textual inversions, hypernetworks, aesthetic gradients, VAEs, and any other crazy stuff people do to customize their AI generations), collaborate with others to improve them, and learn from each other's work. The platform allows users to create an account, upload their models, and browse models that have been shared by others. Users can also leave comments and feedback on each other's models to facilitate collaboration and knowledge sharing.

ap-plugin

AP-PLUGIN is an AI drawing plugin for the Yunzai series robot framework, allowing you to have a convenient AI drawing experience in the input box. It uses the open source Stable Diffusion web UI as the backend, deploys it for free, and generates a variety of images with richer functions.

ComfyUI-IF_AI_tools

ComfyUI-IF_AI_tools is a set of custom nodes for ComfyUI that allows you to generate prompts using a local Large Language Model (LLM) via Ollama. This tool enables you to enhance your image generation workflow by leveraging the power of language models.

For similar jobs

Detection-and-Classification-of-Alzheimers-Disease

This tool is designed to detect and classify Alzheimer's Disease using Deep Learning and Machine Learning algorithms on an early basis, which is further optimized using the Crow Search Algorithm (CSA). Alzheimer's is a fatal disease, and early detection is crucial for patients to predetermine their condition and prevent its progression. By analyzing MRI scanned images using Artificial Intelligence technology, this tool can classify patients who may or may not develop AD in the future. The CSA algorithm, combined with ML algorithms, has proven to be the most effective approach for this purpose.

Co-LLM-Agents

This repository contains code for building cooperative embodied agents modularly with large language models. The agents are trained to perform tasks in two different environments: ThreeDWorld Multi-Agent Transport (TDW-MAT) and Communicative Watch-And-Help (C-WAH). TDW-MAT is a multi-agent environment where agents must transport objects to a goal position using containers. C-WAH is an extension of the Watch-And-Help challenge, which enables agents to send messages to each other. The code in this repository can be used to train agents to perform tasks in both of these environments.

awesome-synthetic-datasets

This repository focuses on organizing resources for building synthetic datasets using large language models. It covers important datasets, libraries, tools, tutorials, and papers related to synthetic data generation. The goal is to provide pragmatic and practical resources for individuals interested in creating synthetic datasets for machine learning applications.

ai-devices

AI Devices Template is a project that serves as an AI-powered voice assistant utilizing various AI models and services to provide intelligent responses to user queries. It supports voice input, transcription, text-to-speech, image processing, and function calling with conditionally rendered UI components. The project includes customizable UI settings, optional rate limiting using Upstash, and optional tracing with Langchain's LangSmith for function execution. Users can clone the repository, install dependencies, add API keys, start the development server, and deploy the application. Configuration settings can be modified in `app/config.tsx` to adjust settings and configurations for the AI-powered voice assistant.

ROSGPT_Vision

ROSGPT_Vision is a new robotic framework designed to command robots using only two prompts: a Visual Prompt for visual semantic features and an LLM Prompt to regulate robotic reactions. It is based on the Prompting Robotic Modalities (PRM) design pattern and is used to develop CarMate, a robotic application for monitoring driver distractions and providing real-time vocal notifications. The framework leverages state-of-the-art language models to facilitate advanced reasoning about image data and offers a unified platform for robots to perceive, interpret, and interact with visual data through natural language. LangChain is used for easy customization of prompts, and the implementation includes the CarMate application for driver monitoring and assistance.

AIBotPublic

AIBotPublic is an open-source version of AIBotPro, a comprehensive AI tool that provides various features such as knowledge base construction, AI drawing, API hosting, and more. It supports custom plugins and parallel processing of multiple files. The tool is built using bootstrap4 for the frontend, .NET6.0 for the backend, and utilizes technologies like SqlServer, Redis, and Milvus for database and vector database functionalities. It integrates third-party dependencies like Baidu AI OCR, Milvus C# SDK, Google Search, and more to enhance its capabilities.

LLMGA

LLMGA (Multimodal Large Language Model-based Generation Assistant) is a tool that leverages Large Language Models (LLMs) to assist users in image generation and editing. It provides detailed language generation prompts for precise control over Stable Diffusion (SD), resulting in more intricate and precise content in generated images. The tool curates a dataset for prompt refinement, similar image generation, inpainting & outpainting, and visual question answering. It offers a two-stage training scheme to optimize SD alignment and a reference-based restoration network to alleviate texture, brightness, and contrast disparities in image editing. LLMGA shows promising generative capabilities and enables wider applications in an interactive manner.

MetaAgent

MetaAgent is a multi-agent collaboration platform designed to build, manage, and deploy multi-modal AI agents without the need for coding. Users can easily create AI agents by editing a yml file or using the provided UI. The platform supports features such as building LLM-based AI agents, multi-modal interactions with users using texts, audios, images, and videos, creating a company of agents for complex tasks like drawing comics, vector database and knowledge embeddings, and upcoming features like UI for creating and using AI agents, fine-tuning, and RLHF. The tool simplifies the process of creating and deploying AI agents for various tasks.