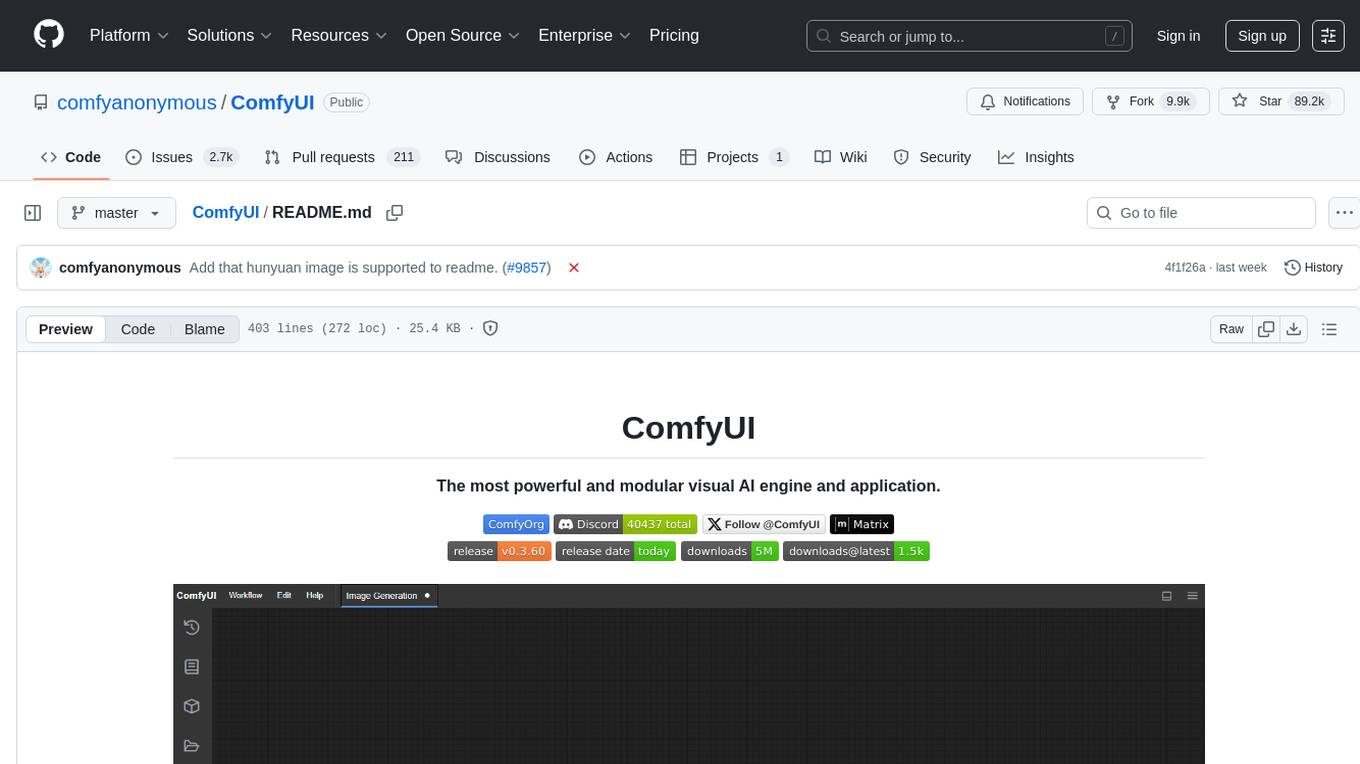

ComfyUI

The most powerful and modular diffusion model GUI, api and backend with a graph/nodes interface.

Stars: 89407

ComfyUI is a powerful and modular visual AI engine and application that allows users to design and execute advanced stable diffusion pipelines using a graph/nodes/flowchart based interface. It provides a user-friendly environment for creating complex Stable Diffusion workflows without the need for coding. ComfyUI supports various models for image editing, video processing, audio manipulation, 3D modeling, and more. It offers features like smart memory management, support for different GPU types, loading and saving workflows as JSON files, and offline functionality. Users can also use API nodes to access paid models from external providers through the online Comfy API.

README:

ComfyUI lets you design and execute advanced stable diffusion pipelines using a graph/nodes/flowchart based interface. Available on Windows, Linux, and macOS.

- The easiest way to get started.

- Available on Windows & macOS.

- Get the latest commits and completely portable.

- Available on Windows.

Supports all operating systems and GPU types (NVIDIA, AMD, Intel, Apple Silicon, Ascend).

See what ComfyUI can do with the example workflows.

- Nodes/graph/flowchart interface to experiment and create complex Stable Diffusion workflows without needing to code anything.

- Image Models

- SD1.x, SD2.x (unCLIP)

- SDXL, SDXL Turbo

- Stable Cascade

- SD3 and SD3.5

- Pixart Alpha and Sigma

- AuraFlow

- HunyuanDiT

- Flux

- Lumina Image 2.0

- HiDream

- Qwen Image

- Hunyuan Image 2.1

- Image Editing Models

- Video Models

- Audio Models

- 3D Models

- Asynchronous Queue system

- Many optimizations: only re-executes the parts of the workflow that change between executions.

- Smart memory management: can automatically run large models on GPUs with as little as 1GB of VRAM using smart offloading.

- Works even if you don't have a GPU with: --cpu (slow)

- Can load ckpt and safetensors: All in one checkpoints or standalone diffusion models, VAEs and CLIP models.

- Safe loading of ckpt, pt, pth, etc. files.

- Embeddings/Textual inversion

- Loras (regular, locon and loha)

- Hypernetworks

- Loading full workflows (with seeds) from generated PNG, WebP and FLAC files.

- Saving/Loading workflows as JSON files.

- Nodes interface can be used to create complex workflows like one for Hires fix or much more advanced ones.

- Area Composition

- Inpainting with both regular and inpainting models.

- ControlNet and T2I-Adapter

- Upscale Models (ESRGAN, ESRGAN variants, SwinIR, Swin2SR, etc...)

- GLIGEN

- Model Merging

- LCM models and Loras

- Latent previews with TAESD

- Works fully offline: core will never download anything unless you want to.

- Optional API nodes to use paid models from external providers through the online Comfy API.

- Config file to set the search paths for models.

Workflow examples can be found on the Examples page.

ComfyUI follows a weekly release cycle targeting Friday, but this regularly changes because of model releases or large changes to the codebase. There are three interconnected repositories:

- Core repository

  - Releases a new stable version (e.g., v0.7.0)

  - Serves as the foundation for the desktop release

- Desktop repository

  - Builds a new release using the latest stable core version

- Frontend repository

  - Weekly frontend updates are merged into the core repository

  - Features are frozen for the upcoming core release

  - Development continues for the next release cycle

| Keybind | Explanation |
|---|---|
| Ctrl + Enter | Queue up current graph for generation |
| Ctrl + Shift + Enter | Queue up current graph as first for generation |
| Ctrl + Alt + Enter | Cancel current generation |
| Ctrl + Z/Ctrl + Y | Undo/Redo |
| Ctrl + S | Save workflow |
| Ctrl + O | Load workflow |
| Ctrl + A | Select all nodes |
| Alt + C | Collapse/uncollapse selected nodes |
| Ctrl + M | Mute/unmute selected nodes |
| Ctrl + B | Bypass selected nodes (acts like the node was removed from the graph and the wires reconnected through) |
| Delete/Backspace | Delete selected nodes |
| Ctrl + Backspace | Delete the current graph |
| Space | Move the canvas around when held and moving the cursor |
| Ctrl/Shift + Click | Add clicked node to selection |
| Ctrl + C/Ctrl + V | Copy and paste selected nodes (without maintaining connections to outputs of unselected nodes) |
| Ctrl + C/Ctrl + Shift + V | Copy and paste selected nodes (maintaining connections from outputs of unselected nodes to inputs of pasted nodes) |
| Shift + Drag | Move multiple selected nodes at the same time |
| Ctrl + D | Load default graph |
| Alt + + | Canvas Zoom in |
| Alt + - | Canvas Zoom out |
| Ctrl + Shift + LMB + Vertical drag | Canvas Zoom in/out |
| P | Pin/Unpin selected nodes |
| Ctrl + G | Group selected nodes |
| Q | Toggle visibility of the queue |
| H | Toggle visibility of history |
| R | Refresh graph |
| F | Show/Hide menu |
| . | Fit view to selection (Whole graph when nothing is selected) |
| Double-Click LMB | Open node quick search palette |
| Shift + Drag | Move multiple wires at once |
| Ctrl + Alt + LMB | Disconnect all wires from clicked slot |

macOS users can use Cmd in place of Ctrl.

There is a portable standalone build for Windows on the releases page that should work for running on Nvidia GPUs or on your CPU only.

Simply download, extract with 7-Zip and run. Make sure you put your Stable Diffusion checkpoints/models (the huge ckpt/safetensors files) in: ComfyUI\models\checkpoints

If you have trouble extracting it, right click the file -> properties -> unblock

See the Config file to set the search paths for models. In the standalone windows build you can find this file in the ComfyUI directory. Rename this file to extra_model_paths.yaml and edit it with your favorite text editor.

You can install and start ComfyUI using comfy-cli:

pip install comfy-cli

comfy install

Python 3.13 is very well supported. If you have trouble with some custom node dependencies you can try 3.12.

Git clone this repo.

Put your SD checkpoints (the huge ckpt/safetensors files) in: models/checkpoints

Put your VAE in: models/vae

AMD users can install ROCm and PyTorch with pip if they are not already installed. This is the command to install the stable version:

pip install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/rocm6.4

This is the command to install the nightly with ROCm 6.4 which might have some performance improvements:

pip install --pre torch torchvision torchaudio --index-url https://download.pytorch.org/whl/nightly/rocm6.4

(Option 1) Intel Arc GPU users can install native PyTorch with torch.xpu support using pip. More information can be found here

- To install PyTorch xpu, use the following command:

pip install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/xpu

This is the command to install the Pytorch xpu nightly which might have some performance improvements:

pip install --pre torch torchvision torchaudio --index-url https://download.pytorch.org/whl/nightly/xpu

(Option 2) Alternatively, Intel GPUs supported by Intel Extension for PyTorch (IPEX) can leverage IPEX for improved performance.

- Visit the Installation page for more information.

Nvidia users should install stable pytorch using this command:

pip install torch torchvision torchaudio --extra-index-url https://download.pytorch.org/whl/cu129

This is the command to install pytorch nightly instead which might have performance improvements.

pip install --pre torch torchvision torchaudio --index-url https://download.pytorch.org/whl/nightly/cu129

If you get the "Torch not compiled with CUDA enabled" error, uninstall torch with:

pip uninstall torch

And install it again with the command above.
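Before reinstalling, it can help to confirm which kind of PyTorch build is currently installed. The following is a minimal sketch of such a check, not part of ComfyUI; the helper name `describe_torch_build` is made up for illustration. It relies only on `torch.version.cuda` and `torch.cuda.is_available()`.

```python
import importlib.util


def describe_torch_build() -> str:
    """Summarize whether the installed PyTorch build can use CUDA.

    Hypothetical helper for illustration; not a ComfyUI function.
    """
    if importlib.util.find_spec("torch") is None:
        return "torch is not installed"

    import torch

    # torch.version.cuda is None when the wheel was not built against CUDA
    # (for example CPU-only or ROCm builds).
    cuda_build = torch.version.cuda
    if cuda_build is None:
        return f"torch {torch.__version__} was built without CUDA"
    if not torch.cuda.is_available():
        return f"torch {torch.__version__} (CUDA {cuda_build}) found no usable GPU"
    return f"torch {torch.__version__} (CUDA {cuda_build}) is ready"


if __name__ == "__main__":
    print(describe_torch_build())
```

If the message says the build lacks CUDA, that matches the situation the error above describes, and reinstalling from the CUDA index is the likely fix.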

Install the dependencies by opening your terminal inside the ComfyUI folder and:

pip install -r requirements.txt

After this you should have everything installed and can proceed to running ComfyUI.

You can install ComfyUI on Apple silicon Macs (M1 or M2) with any recent macOS version.

- Install pytorch nightly. For instructions, read the Accelerated PyTorch training on Mac Apple Developer guide (make sure to install the latest pytorch nightly).

- Follow the ComfyUI manual installation instructions for Windows and Linux.

- Install the ComfyUI dependencies. If you have another Stable Diffusion UI you might be able to reuse the dependencies.

- Launch ComfyUI by running

python main.py

Note: Remember to add your models, VAE, LoRAs etc. to the corresponding Comfy folders, as discussed in ComfyUI manual installation.

This is very badly supported and is not recommended. There are some unofficial builds of pytorch ROCm on windows that exist that will give you a much better experience than this. This readme will be updated once official pytorch ROCm builds for windows come out.

pip install torch-directml

Then you can launch ComfyUI with:

python main.py --directml

For models compatible with Ascend Extension for PyTorch (torch_npu). To get started, ensure your environment meets the prerequisites outlined on the installation page. Here's a step-by-step guide tailored to your platform and installation method:

- Begin by installing the recommended or newer kernel version for Linux as specified in the Installation page of torch-npu, if necessary.

- Proceed with the installation of Ascend Basekit, which includes the driver, firmware, and CANN, following the instructions provided for your specific platform.

- Next, install the necessary packages for torch-npu by adhering to the platform-specific instructions on the Installation page.

- Finally, adhere to the ComfyUI manual installation guide for Linux. Once all components are installed, you can run ComfyUI as described earlier.

For models compatible with Cambricon Extension for PyTorch (torch_mlu). Here's a step-by-step guide tailored to your platform and installation method:

- Install the Cambricon CNToolkit by adhering to the platform-specific instructions on the Installation page.

- Next, install PyTorch (torch_mlu) following the instructions on the Installation page.

- Launch ComfyUI by running

python main.py

For models compatible with Iluvatar Extension for PyTorch. Here's a step-by-step guide tailored to your platform and installation method:

- Install the Iluvatar Corex Toolkit by adhering to the platform-specific instructions on the Installation page.

- Launch ComfyUI by running

python main.py

python main.py

Try running it with this command if you have issues:

For 6700, 6600 and maybe other RDNA2 or older: HSA_OVERRIDE_GFX_VERSION=10.3.0 python main.py

For AMD 7600 and maybe other RDNA3 cards: HSA_OVERRIDE_GFX_VERSION=11.0.0 python main.py

You can enable experimental memory efficient attention on recent pytorch in ComfyUI on some AMD GPUs using this command. It should already be enabled by default on RDNA3. If this improves speed for you on the latest pytorch on your GPU, please report it so that I can enable it by default.

TORCH_ROCM_AOTRITON_ENABLE_EXPERIMENTAL=1 python main.py --use-pytorch-cross-attention

You can also try setting this env variable PYTORCH_TUNABLEOP_ENABLED=1 which might speed things up at the cost of a very slow initial run.
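If you switch between these AMD options often, a small launcher script can assemble them for you. This is only a sketch: `rocm_launch` is a hypothetical helper, and it uses only the environment variables and the flag named above.

```python
import os
import subprocess


def rocm_launch(gfx_override=None, experimental_attention=False, tunable_op=False):
    """Return (command, env_overrides) for launching ComfyUI on an AMD GPU.

    Hypothetical helper; the variable names and flag come from the text above.
    """
    command = ["python", "main.py"]
    overrides = {}
    if gfx_override:
        # e.g. "10.3.0" for RDNA2 or older, "11.0.0" for RDNA3
        overrides["HSA_OVERRIDE_GFX_VERSION"] = gfx_override
    if experimental_attention:
        overrides["TORCH_ROCM_AOTRITON_ENABLE_EXPERIMENTAL"] = "1"
        command.append("--use-pytorch-cross-attention")
    if tunable_op:
        overrides["PYTORCH_TUNABLEOP_ENABLED"] = "1"
    return command, overrides


def run(command, overrides):
    """Start ComfyUI with the overrides layered on the current environment."""
    return subprocess.run(command, env={**os.environ, **overrides}, check=True)
```

For example, `run(*rocm_launch("10.3.0"))` corresponds to the RDNA2 command shown earlier, assuming it is executed from the ComfyUI folder.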

Only parts of the graph that have an output with all the correct inputs will be executed.

Only parts of the graph that change from each execution to the next will be executed. If you submit the same graph twice, only the first will be executed. If you change the last part of the graph, only the part you changed and the part that depends on it will be executed.
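The idea can be illustrated with a toy executor that caches each node's output together with a signature of its inputs. This is a simplified sketch and not ComfyUI's actual implementation; the `CachingExecutor` class, the graph layout and the node functions are all invented for the example.

```python
from typing import Any, Callable

# A node is (function, inputs). Each input is either a literal value or
# ("node", other_node_id), which wires in another node's output.
Graph = dict[str, tuple[Callable[..., Any], list[Any]]]


def _is_link(item: Any) -> bool:
    return isinstance(item, tuple) and len(item) == 2 and item[0] == "node"


class CachingExecutor:
    def __init__(self) -> None:
        self.cache: dict[str, tuple[Any, Any]] = {}  # node id -> (signature, output)
        self.executed: list[str] = []  # node ids actually run by the latest call

    def _signature(self, graph: Graph, node_id: str) -> Any:
        # A node's signature covers its function and, recursively, everything
        # upstream of it, so any upstream change invalidates the node.
        func, inputs = graph[node_id]
        parts = tuple(
            self._signature(graph, item[1]) if _is_link(item) else ("literal", repr(item))
            for item in inputs
        )
        return (func.__name__, parts)

    def _evaluate(self, graph: Graph, node_id: str) -> Any:
        signature = self._signature(graph, node_id)
        cached = self.cache.get(node_id)
        if cached is not None and cached[0] == signature:
            return cached[1]  # unchanged since last run: reuse the output
        func, inputs = graph[node_id]
        args = [self._evaluate(graph, i[1]) if _is_link(i) else i for i in inputs]
        output = func(*args)
        self.cache[node_id] = (signature, output)
        self.executed.append(node_id)
        return output

    def run(self, graph: Graph, output_id: str) -> Any:
        self.executed = []
        return self._evaluate(graph, output_id)


def load(name): return f"model:{name}"
def encode(text): return f"cond:{text}"
def sample(model, cond, seed): return f"image({model},{cond},{seed})"


graph: Graph = {
    "model": (load, ["sd15"]),
    "prompt": (encode, ["a cat"]),
    "image": (sample, [("node", "model"), ("node", "prompt"), 42]),
}
executor = CachingExecutor()
executor.run(graph, "image")
first = list(executor.executed)   # ["model", "prompt", "image"]
executor.run(graph, "image")
second = list(executor.executed)  # [] (same graph, nothing re-runs)
graph["prompt"] = (encode, ["a dog"])
executor.run(graph, "image")
third = list(executor.executed)   # ["prompt", "image"] (the model node is reused)
```

Only nodes reachable from the requested output are ever visited, which also mirrors the rule that parts of the graph without an output are not executed.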

Dragging a generated png on the webpage or loading one will give you the full workflow including seeds that were used to create it.
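To inspect that embedded data outside the browser, you can read the PNG's text chunks directly. The sketch below uses only the standard library and assumes the workflow is stored as JSON in a `tEXt` chunk keyed `workflow`. That key name is an assumption for illustration, so check what your own files contain. Both function names are hypothetical.

```python
import json
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def read_png_text_chunks(data: bytes) -> dict[str, str]:
    """Return all tEXt chunks of a PNG as {keyword: text}."""
    if not data.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    chunks: dict[str, str] = {}
    pos = len(PNG_SIGNATURE)
    while pos + 8 <= len(data):
        # Each chunk is: 4-byte big-endian length, 4-byte type, data, 4-byte CRC.
        length, chunk_type = struct.unpack(">I4s", data[pos:pos + 8])
        body = data[pos + 8:pos + 8 + length]
        if chunk_type == b"tEXt":
            keyword, _, text = body.partition(b"\x00")
            chunks[keyword.decode("latin-1")] = text.decode("latin-1")
        elif chunk_type == b"IEND":
            break
        pos += 12 + length
    return chunks


def extract_workflow(data: bytes, key: str = "workflow"):
    """Parse the JSON stored under `key` (assumed name), or return None."""
    text = read_png_text_chunks(data).get(key)
    return json.loads(text) if text is not None else None
```

A typical call would be `extract_workflow(open("output.png", "rb").read())`. The sketch does not verify chunk CRCs and ignores compressed `zTXt` and `iTXt` chunks.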

You can use () to change emphasis of a word or phrase like: (good code:1.2) or (bad code:0.8). The default emphasis for () is 1.1. To use () characters in your actual prompt escape them like \( or \).
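To make the syntax concrete, here is a small sketch that splits a prompt into (text, weight) pairs following the rules just described. It is an illustration only, not ComfyUI's own parser: `parse_emphasis` is a made-up name, nested parentheses are not handled, and any trailing `:number` inside parentheses is read as a weight.

```python
def parse_emphasis(prompt: str, default: float = 1.1) -> list[tuple[str, float]]:
    """Split a prompt into (text, weight) segments; plain text gets weight 1.0."""
    segments: list[tuple[str, float]] = []
    buffer: list[str] = []
    inside = False

    def flush(weight: float) -> None:
        text = "".join(buffer)
        buffer.clear()
        if text:
            segments.append((text, weight))

    i = 0
    while i < len(prompt):
        ch = prompt[i]
        if ch == "\\" and i + 1 < len(prompt) and prompt[i + 1] in "()":
            buffer.append(prompt[i + 1])  # escaped parenthesis stays literal
            i += 2
            continue
        if ch == "(" and not inside:
            flush(1.0)  # close the plain-text run before the group
            inside = True
        elif ch == ")" and inside:
            text = "".join(buffer)
            buffer.clear()
            body, sep, tail = text.rpartition(":")
            weight = default
            if sep:
                try:
                    weight = float(tail)
                    text = body
                except ValueError:
                    pass  # the colon was part of the text, keep default weight
            if text:
                segments.append((text, weight))
            inside = False
        else:
            buffer.append(ch)
        i += 1
    flush(1.0)
    return segments


print(parse_emphasis("a (good code:1.2) b"))
# [('a ', 1.0), ('good code', 1.2), (' b', 1.0)]
```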

You can use {day|night}, for wildcard/dynamic prompts. With this syntax "{wild|card|test}" will be randomly replaced by either "wild", "card" or "test" by the frontend every time you queue the prompt. To use {} characters in your actual prompt escape them like: \{ or \}.

Dynamic prompts also support C-style comments, like // comment or /* comment */.

To use textual inversion concepts/embeddings in a text prompt, put them in the models/embeddings directory and use them in the CLIPTextEncode node like this (you can omit the .pt extension):

embedding:embedding_filename.pt

Use --preview-method auto to enable previews.

The default installation includes a fast latent preview method that's low-resolution. To enable higher-quality previews with TAESD, download the taesd_decoder.pth, taesdxl_decoder.pth, taesd3_decoder.pth and taef1_decoder.pth and place them in the models/vae_approx folder. Once they're installed, restart ComfyUI and launch it with --preview-method taesd to enable high-quality previews.
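Before launching with --preview-method taesd, you may want to confirm that the decoder files are where ComfyUI expects them. The following sketch checks for the four file names listed above. The function name is hypothetical, and the ComfyUI root path is whatever directory you installed into.

```python
from pathlib import Path

# Decoder file names taken from the paragraph above.
TAESD_DECODERS = (
    "taesd_decoder.pth",
    "taesdxl_decoder.pth",
    "taesd3_decoder.pth",
    "taef1_decoder.pth",
)


def missing_taesd_decoders(comfyui_root: str) -> list[str]:
    """Return the decoder files not yet present in models/vae_approx."""
    folder = Path(comfyui_root) / "models" / "vae_approx"
    return [name for name in TAESD_DECODERS if not (folder / name).is_file()]
```

An empty list from `missing_taesd_decoders("path/to/ComfyUI")` means all four decoders are in place.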

Generate a self-signed certificate (not appropriate for shared/production use) and key by running the command: openssl req -x509 -newkey rsa:4096 -keyout key.pem -out cert.pem -sha256 -days 3650 -nodes -subj "/C=XX/ST=StateName/L=CityName/O=CompanyName/OU=CompanySectionName/CN=CommonNameOrHostname"

Use --tls-keyfile key.pem --tls-certfile cert.pem to enable TLS/SSL, the app will now be accessible with https://... instead of http://....

Note: Windows users can use alexisrolland/docker-openssl or one of the 3rd party binary distributions to run the command example above.

If you use a container, note that the volume mount -v can be a relative path, so ... -v ".\:/openssl-certs" ... would create the key & cert files in the current directory of your command prompt or PowerShell terminal.

Discord: Try the #help or #feedback channels.

Matrix space: #comfyui_space:matrix.org (it's like discord but open source).

See also: https://www.comfy.org/

As of August 15, 2024, we have transitioned to a new frontend, which is now hosted in a separate repository: ComfyUI Frontend. This repository now hosts the compiled JS (from TS/Vue) under the web/ directory.

For any bugs, issues, or feature requests related to the frontend, please use the ComfyUI Frontend repository. This will help us manage and address frontend-specific concerns more efficiently.

The new frontend is now the default for ComfyUI. However, please note:

- The frontend in the main ComfyUI repository is updated fortnightly.

- Daily releases are available in the separate frontend repository.

To use the most up-to-date frontend version:

- For the latest daily release, launch ComfyUI with this command line argument:

--front-end-version Comfy-Org/ComfyUI_frontend@latest

- For a specific version, replace latest with the desired version number:

--front-end-version Comfy-Org/ComfyUI_frontend@<version>

This approach allows you to easily switch between the stable fortnightly release and the cutting-edge daily updates, or even specific versions for testing purposes.

If you need to use the legacy frontend for any reason, you can access it using the following command line argument:

--front-end-version Comfy-Org/ComfyUI_legacy_frontend@latest

This will use a snapshot of the legacy frontend preserved in the ComfyUI Legacy Frontend repository.

Alternative AI tools for ComfyUI

Similar Open Source Tools

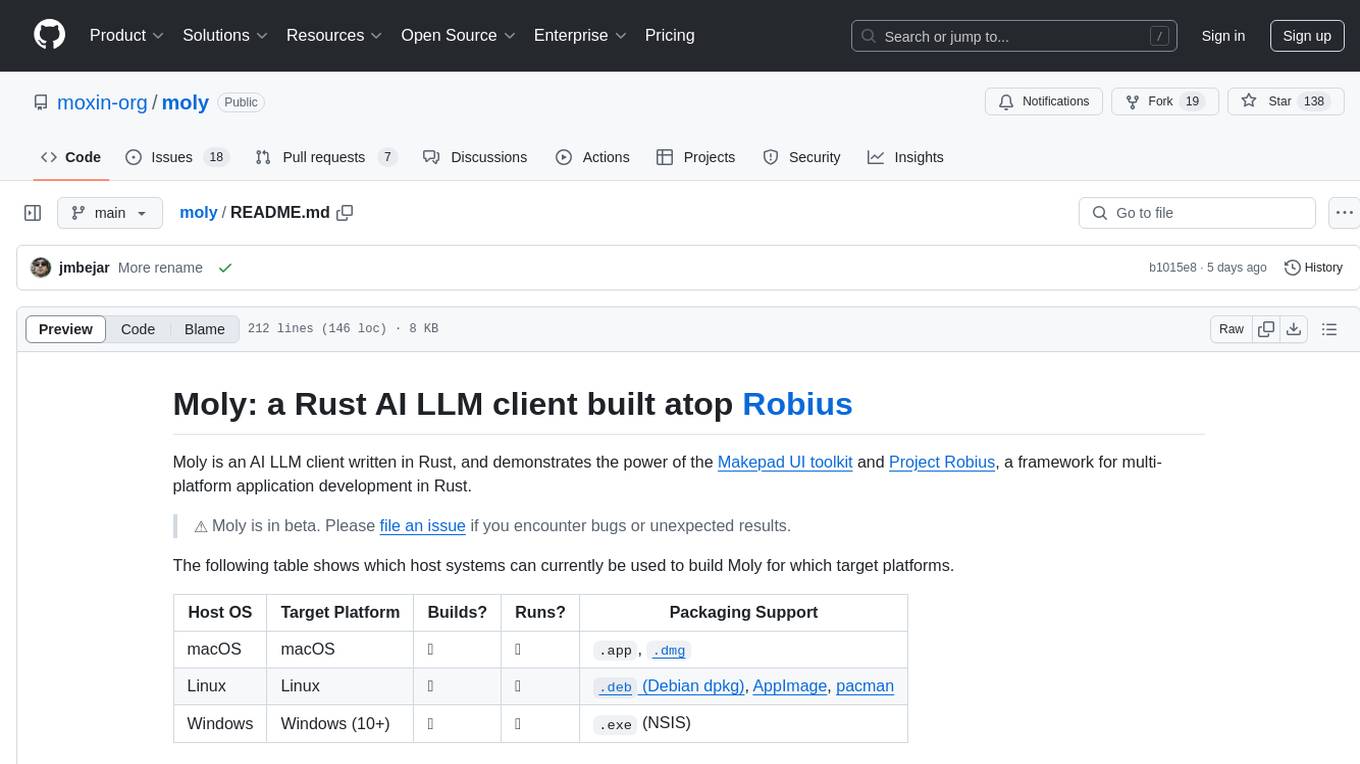

moly

Moly is an AI LLM client written in Rust, showcasing the capabilities of the Makepad UI toolkit and Project Robius, a framework for multi-platform application development in Rust. It is currently in beta, allowing users to build and run Moly on macOS, Linux, and Windows. The tool provides packaging support for different platforms, such as `.app`, `.dmg`, `.deb`, AppImage, pacman, and `.exe` (NSIS). Users can easily set up WasmEdge using `moly-runner` and leverage `cargo` commands to build and run Moly. Additionally, Moly offers pre-built releases for download and supports packaging for distribution on Linux, Windows, and macOS.

LlamaEdge

The LlamaEdge project makes it easy to run LLM inference apps and create OpenAI-compatible API services for the Llama2 series of LLMs locally. It provides a Rust+Wasm stack for fast, portable, and secure LLM inference on heterogeneous edge devices. The project includes source code for text generation, chatbot, and API server applications, supporting all LLMs based on the llama2 framework in the GGUF format. LlamaEdge is committed to continuously testing and validating new open-source models and offers a list of supported models with download links and startup commands. It is cross-platform, supporting various OSes, CPUs, and GPUs, and provides troubleshooting tips for common errors.

vim-ollama

The 'vim-ollama' plugin for Vim adds Copilot-like code completion support using Ollama as a backend, enabling intelligent AI-based code completion and integrated chat support for code reviews. It does not rely on cloud services, preserving user privacy. The plugin communicates with Ollama via Python scripts for code completion and interactive chat, supporting Vim only. Users can configure LLM models for code completion tasks and interactive conversations, with detailed installation and usage instructions provided in the README.

torchchat

torchchat is a codebase showcasing the ability to run large language models (LLMs) seamlessly. It allows running LLMs using Python in various environments such as desktop, server, iOS, and Android. The tool supports running models via PyTorch, chatting, generating text, running chat in the browser, and running models on desktop/server without Python. It also provides features like AOT Inductor for faster execution, running in C++ using the runner, and deploying and running on iOS and Android. The tool supports popular hardware and OS including Linux, Mac OS, Android, and iOS, with various data types and execution modes available.

depthai

This repository contains a demo application for DepthAI, a tool that can load different networks, create pipelines, record video, and more. It provides documentation for installation and usage, including running programs through Docker. Users can explore DepthAI features via command line arguments or a clickable QT interface. Supported models include various AI models for tasks like face detection, human pose estimation, and object detection. The tool collects anonymous usage statistics by default, which can be disabled. Users can report issues to the development team for support and troubleshooting.

LARS

LARS is an application that enables users to run Large Language Models (LLMs) locally on their devices, upload their own documents, and engage in conversations where the LLM grounds its responses with the uploaded content. The application focuses on Retrieval Augmented Generation (RAG) to increase accuracy and reduce AI-generated inaccuracies. LARS provides advanced citations, supports various file formats, allows follow-up questions, provides full chat history, and offers customization options for LLM settings. Users can force enable or disable RAG, change system prompts, and tweak advanced LLM settings. The application also supports GPU-accelerated inferencing, multiple embedding models, and text extraction methods. LARS is open-source and aims to be the ultimate RAG-centric LLM application.

desktop

ComfyUI Desktop is a packaged desktop application that allows users to easily use ComfyUI with bundled features like ComfyUI source code, ComfyUI-Manager, and uv. It automatically installs necessary Python dependencies and updates with stable releases. The app comes with Electron, Chromium binaries, and node modules. Users can store ComfyUI files in a specified location and manage model paths. The tool requires Python 3.12+ and Visual Studio with Desktop C++ workload for Windows. It uses nvm to manage node versions and yarn as the package manager. Users can install ComfyUI and dependencies using comfy-cli, download uv, and build/launch the code. Troubleshooting steps include rebuilding modules and installing missing libraries. The tool supports debugging in VSCode and provides utility scripts for cleanup. Crash reports can be sent to help debug issues, but no personal data is included.

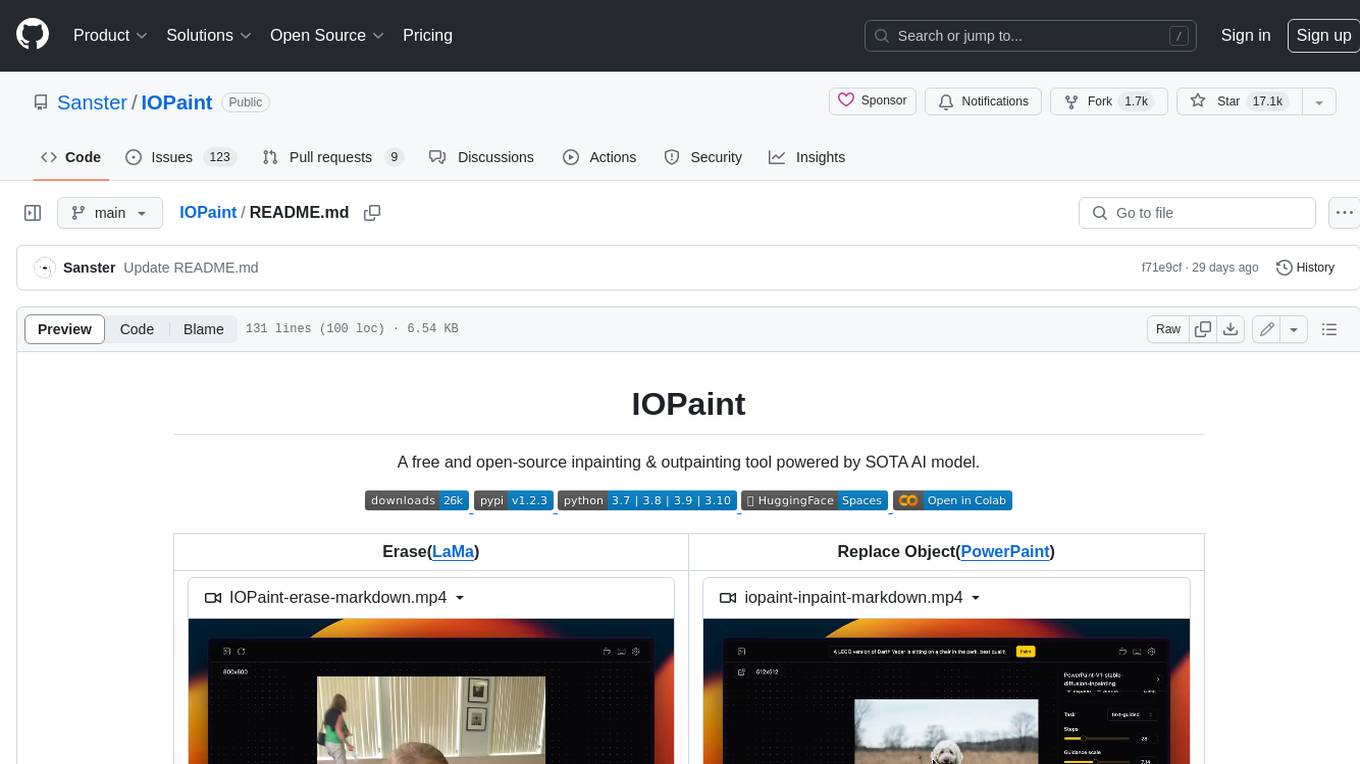

IOPaint

IOPaint is a free and open-source inpainting & outpainting tool powered by SOTA AI model. It supports various AI models to perform erase, inpainting, or outpainting tasks. Users can remove unwanted objects, defects, watermarks, or people from images using erase models. Additionally, diffusion models can replace objects or perform outpainting. The tool also offers plugins for interactive object segmentation, background removal, anime segmentation, super resolution, face restoration, and file management. IOPaint provides a web UI for easy access to the latest AI models and supports batch processing of images through the command line. Developers can contribute to the project by installing front-end dependencies, setting up the backend, and starting the development environment for both front-end and back-end components.

termax

Termax is an LLM agent in your terminal that converts natural language to commands. It is featured by: - Personalized Experience: Optimize the command generation with RAG. - Various LLMs Support: OpenAI GPT, Anthropic Claude, Google Gemini, Mistral AI, and more. - Shell Extensions: Plugin with popular shells like `zsh`, `bash` and `fish`. - Cross Platform: Able to run on Windows, macOS, and Linux.

lexido

Lexido is an innovative assistant for the Linux command line, designed to boost your productivity and efficiency. Powered by Gemini Pro 1.0 and utilizing the free API, Lexido offers smart suggestions for commands based on your prompts and importantly your current environment. Whether you're installing software, managing files, or configuring system settings, Lexido streamlines the process, making it faster and more intuitive.

gpustack

GPUStack is an open-source GPU cluster manager designed for running large language models (LLMs). It supports a wide variety of hardware, scales with GPU inventory, offers lightweight Python package with minimal dependencies, provides OpenAI-compatible APIs, simplifies user and API key management, enables GPU metrics monitoring, and facilitates token usage and rate metrics tracking. The tool is suitable for managing GPU clusters efficiently and effectively.

rclip

rclip is a command-line photo search tool powered by the OpenAI's CLIP neural network. It allows users to search for images using text queries, similar image search, and combining multiple queries. The tool extracts features from photos to enable searching and indexing, with options for previewing results in supported terminals or custom viewers. Users can install rclip on Linux, macOS, and Windows using different installation methods. The repository follows the Conventional Commits standard and welcomes contributions from the community.

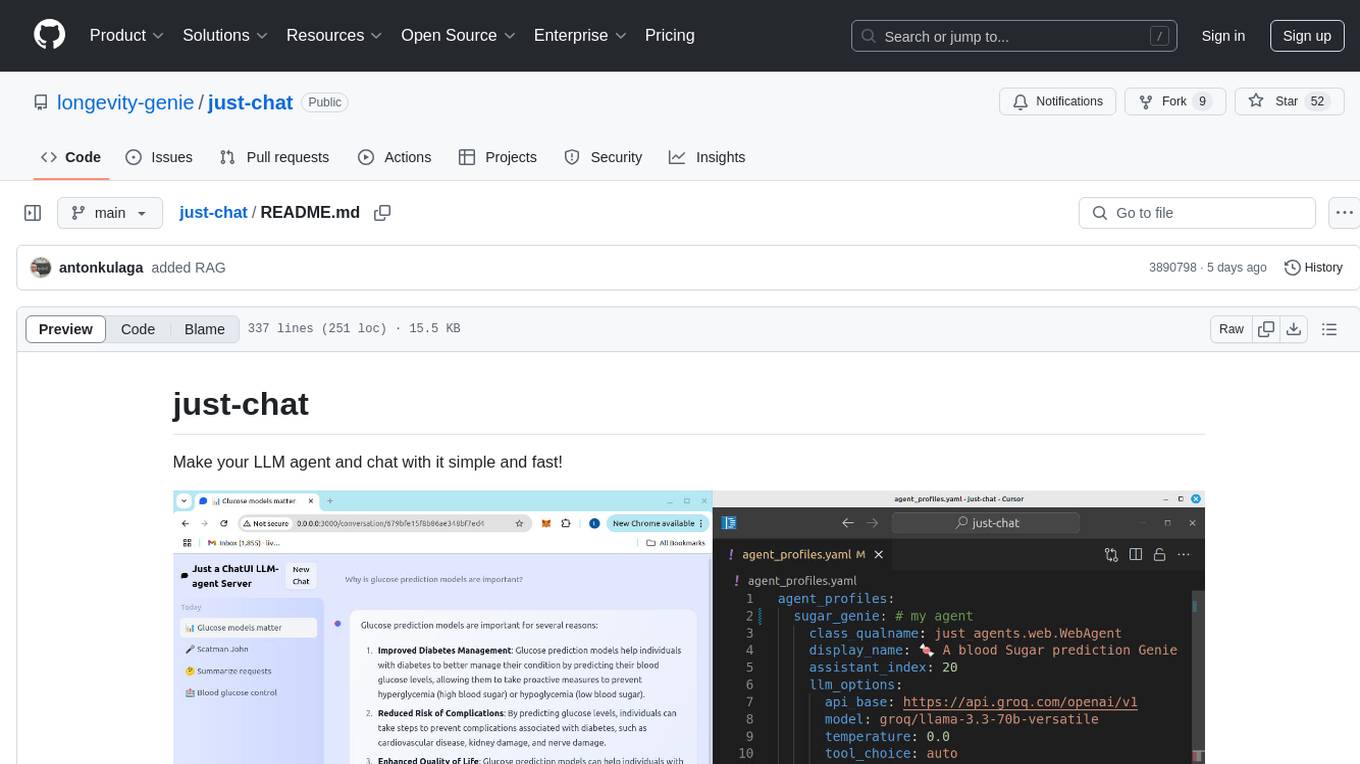

just-chat

Just-Chat is a containerized application that allows users to easily set up and chat with their AI agent. Users can customize their AI assistant using a YAML file, add new capabilities with Python tools, and interact with the agent through a chat web interface. The tool supports various modern models like DeepSeek Reasoner, ChatGPT, LLAMA3.3, etc. Users can also use semantic search capabilities with MeiliSearch to find and reference relevant information based on meaning. Just-Chat requires Docker or Podman for operation and provides detailed installation instructions for both Linux and Windows users.

ai-starter-kit

SambaNova AI Starter Kits is a collection of open-source examples and guides designed to facilitate the deployment of AI-driven use cases for developers and enterprises. The kits cover various categories such as Data Ingestion & Preparation, Model Development & Optimization, Intelligent Information Retrieval, and Advanced AI Capabilities. Users can obtain a free API key using SambaNova Cloud or deploy models using SambaStudio. Most examples are written in Python but can be applied to any programming language. The kits provide resources for tasks like text extraction, fine-tuning embeddings, prompt engineering, question-answering, image search, post-call analysis, and more.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM: * Set LLM usage limits for users on different pricing tiers * Track LLM usage on a per user and per organization basis * Block or redact requests containing PIIs * Improve LLM reliability with failovers, retries and caching * Distribute API keys with rate limits and cost limits for internal development/production use cases * Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.