comark

A high-performance markdown parser and renderer with Vue & React components support.

Stars: 207

Comark is a high-performance markdown parser and renderer that supports Vue & React components. It features a fast markdown-exit based parser, stream API for buffered parsing, Comark component syntax support, auto-close unclosed markdown syntax, frontmatter parsing (YAML), automatic table of contents generation, and full TypeScript support. It is designed to efficiently parse and render markdown content with support for Vue and React components.

README:

A high-performance markdown parser and renderer with Vue & React components support.

- 🚀 Fast markdown-exit based parser

- 📦 Stream API for buffered parsing

- 🔧 Comark component syntax support

- 🔒 Auto-close unclosed markdown syntax (perfect for streaming)

- 📝 Frontmatter parsing (YAML)

- 📑 Automatic table of contents generation

- 🎯 Full TypeScript support

npm install comark

# or

pnpm add comark<script setup lang="ts">

import { Comark } from 'comark/vue'

import cjk from '@comark/cjk'

import math from '@comark/math'

import { Math } from '@comark/math/vue'

const chatMessage = ...

</script>

<template>

<Comark :components="{ Math }" :plugins="[cjk(), math()]">{{ chatMessage }}</Comark>

</template>import { Comark } from 'comark/react'

import cjk from '@comark/cjk'

import math from '@comark/math'

import { Math } from '@comark/math/react'

function App() {

const chatMessage = ...

return <Comark components={{ Math }} plugins={[cjk(), math()]}>{chatMessage}</Comark>

}Made with ❤️

Published under MIT License.

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for comark

Similar Open Source Tools

comark

Comark is a high-performance markdown parser and renderer that supports Vue & React components. It features a fast markdown-exit based parser, stream API for buffered parsing, Comark component syntax support, auto-close unclosed markdown syntax, frontmatter parsing (YAML), automatic table of contents generation, and full TypeScript support. It is designed to efficiently parse and render markdown content with support for Vue and React components.

mdream

Mdream is a lightweight and user-friendly markdown editor designed for developers and writers. It provides a simple and intuitive interface for creating and editing markdown files with real-time preview. The tool offers syntax highlighting, markdown formatting options, and the ability to export files in various formats. Mdream aims to streamline the writing process and enhance productivity for individuals working with markdown documents.

markdrop

Markdrop is a Python package that facilitates the conversion of PDFs to markdown format while extracting images and tables. It also generates descriptive text descriptions for extracted tables and images using various LLM clients. The tool offers additional functionalities such as PDF URL support, AI-powered image and table descriptions, interactive HTML output with downloadable Excel tables, customizable image resolution and UI elements, and a comprehensive logging system. Markdrop aims to simplify the process of handling PDF documents and enhancing their content with AI-generated descriptions.

ollama4j

Ollama4j is a Java library that serves as a wrapper or binding for the Ollama server. It facilitates communication with the Ollama server and provides models for deployment. The tool requires Java 11 or higher and can be installed locally or via Docker. Users can integrate Ollama4j into Maven projects by adding the specified dependency. The tool offers API specifications and supports various development tasks such as building, running unit tests, and integration tests. Releases are automated through GitHub Actions CI workflow. Areas of improvement include adhering to Java naming conventions, updating deprecated code, implementing logging, using lombok, and enhancing request body creation. Contributions to the project are encouraged, whether reporting bugs, suggesting enhancements, or contributing code.

daytona

Daytona is a secure and elastic infrastructure tool designed for running AI-generated code. It offers lightning-fast infrastructure with sub-90ms sandbox creation, separated and isolated runtime for executing AI code with zero risk, massive parallelization for concurrent AI workflows, programmatic control through various APIs, unlimited sandbox persistence, and OCI/Docker compatibility. Users can create sandboxes using Python or TypeScript SDKs, run code securely inside the sandbox, and clean up the sandbox after execution. Daytona is open source under the GNU Affero General Public License and welcomes contributions from developers.

client-ts

Mistral Typescript Client is an SDK for Mistral AI API, providing Chat Completion and Embeddings APIs. It allows users to create chat completions, upload files, create agent completions, create embedding requests, and more. The SDK supports various JavaScript runtimes and provides detailed documentation on installation, requirements, API key setup, example usage, error handling, server selection, custom HTTP client, authentication, providers support, standalone functions, debugging, and contributions.

mcp-ui

mcp-ui is a collection of SDKs that bring interactive web components to the Model Context Protocol (MCP). It allows servers to define reusable UI snippets, render them securely in the client, and react to their actions in the MCP host environment. The SDKs include @mcp-ui/server (TypeScript) for generating UI resources on the server, @mcp-ui/client (TypeScript) for rendering UI components on the client, and mcp_ui_server (Ruby) for generating UI resources in a Ruby environment. The project is an experimental community playground for MCP UI ideas, with rapid iteration and enhancements.

llm-metadata

LLM Metadata is a lightweight static API designed for discovering and integrating LLM metadata. It provides a high-throughput friendly, static-by-default interface that serves static JSON via GitHub Pages. The sources for the metadata include models.dev/api.json and contributions from the basellm community. The tool allows for easy rebuilding on change and offers various scripts for compiling TypeScript, building the API, and managing the project. It also supports internationalization for both documentation and API, enabling users to add new languages and localize capability labels and descriptions. The tool follows an auto-update policy based on a configuration file and allows for directory-based overrides for providers and models, facilitating customization and localization of metadata.

json-io

json-io is a powerful and lightweight Java library that simplifies JSON5, JSON, and TOON serialization and deserialization while handling complex object graphs with ease. It preserves object references, handles polymorphic types, and maintains cyclic relationships in data structures. It offers full JSON5 support, TOON read/write capabilities, and is compatible with JDK 1.8 through JDK 24. The library is built with a focus on correctness over speed, providing extensive configuration options and two modes for data representation. json-io is designed for developers who require advanced serialization features and support for various Java types without external dependencies.

lingo.dev

Replexica AI automates software localization end-to-end, producing authentic translations instantly across 60+ languages. Teams can do localization 100x faster with state-of-the-art quality, reaching more paying customers worldwide. The tool offers a GitHub Action for CI/CD automation and supports various formats like JSON, YAML, CSV, and Markdown. With lightning-fast AI localization, auto-updates, native quality translations, developer-friendly CLI, and scalability for startups and enterprise teams, Replexica is a top choice for efficient and effective software localization.

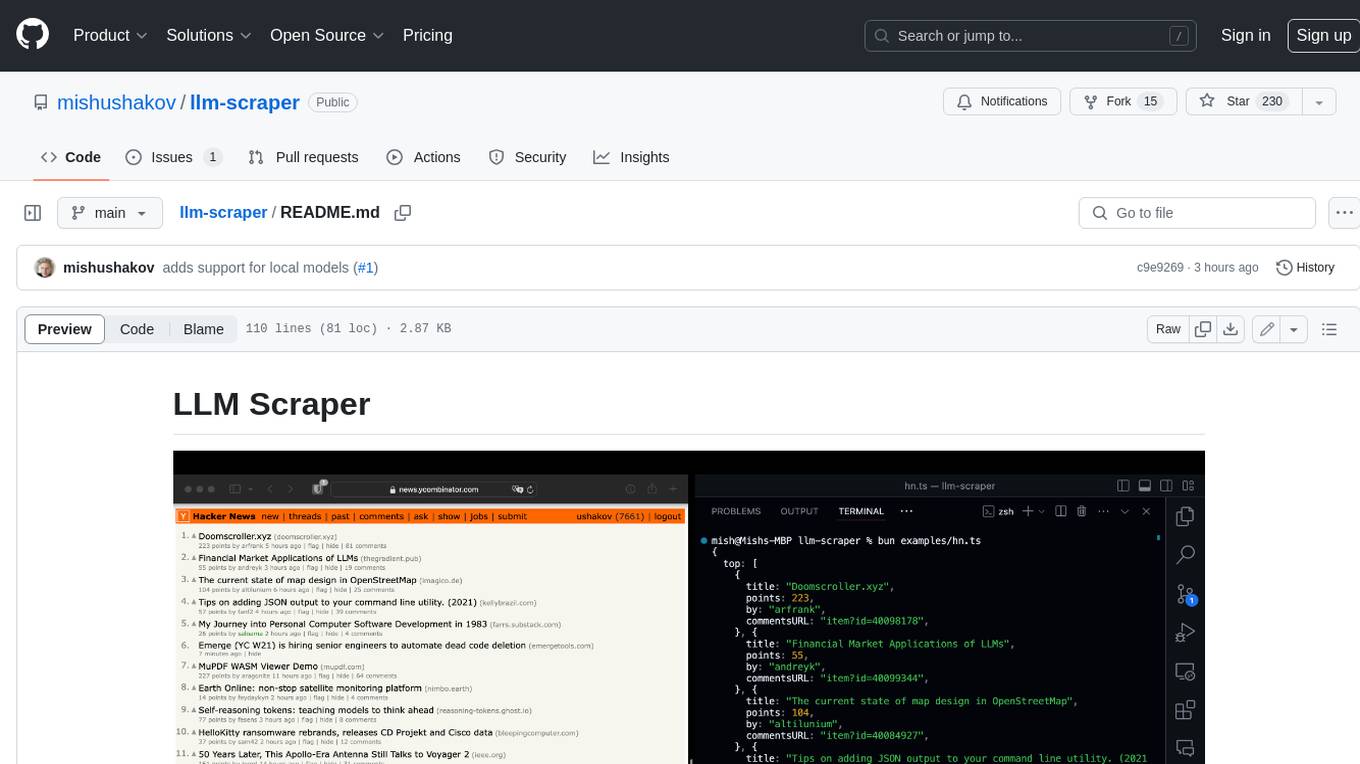

llm-scraper

LLM Scraper is a TypeScript library that allows you to convert any webpages into structured data using LLMs. It supports Local (GGUF), OpenAI, Groq chat models, and schemas defined with Zod. With full type-safety in TypeScript and based on the Playwright framework, it offers streaming when crawling multiple pages and supports four input modes: html, markdown, text, and image.

laravel-mcp-server

Laravel MCP Server is a tool that allows users to build a route-first MCP server in Laravel and Lumen. It provides route-based MCP endpoint registration, streamable HTTP transport, and supports tool, resource, resource template, and prompt registration per endpoint. The server metadata is compatible with route cache, and it requires PHP version 8.2 or higher along with Laravel (Illuminate) version 9.x or Lumen version 9.x. Users can quickly install the tool, register endpoints, and verify functionality. Additionally, the tool offers advanced features like creating tools, resources, resource templates, prompts, notifications, and generating tools from OpenAPI specs.

browser4

Browser4 is a lightning-fast, coroutine-safe browser designed for AI integration with large language models. It offers ultra-fast automation, deep web understanding, and powerful data extraction APIs. Users can automate the browser, extract data at scale, and perform tasks like summarizing products, extracting product details, and finding specific links. The tool is developer-friendly, supports AI-powered automation, and provides advanced features like X-SQL for precise data extraction. It also offers RPA capabilities, browser control, and complex data extraction with X-SQL. Browser4 is suitable for web scraping, data extraction, automation, and AI integration tasks.

agent-tool-protocol

Agent Tool Protocol (ATP) is a production-ready, code-first protocol for AI agents to interact with external systems through secure sandboxed code execution. It enables AI agents to write and execute TypeScript/JavaScript code in a secure, sandboxed environment, allowing for parallel operations, data filtering and transformation, and familiar programming patterns. ATP provides a complete ecosystem for building production-ready AI agents with secure code execution, runtime SDK, stateless architecture, client tools for integration, provenance tracking, and compatibility with OpenAPI and MCP. It solves limitations of traditional protocols like MCP by offering open API integration, parallel execution, data processing, code flexibility, universal compatibility, reduced token usage, type safety, and being production-ready. ATP allows LLMs to write code that executes in a secure sandbox, providing the full power of a programming language while maintaining strict security boundaries.

VimLM

VimLM is an AI-powered coding assistant for Vim that integrates AI for code generation, refactoring, and documentation directly into your Vim workflow. It offers native Vim integration with split-window responses and intuitive keybindings, offline first execution with MLX-compatible models, contextual awareness with seamless integration with codebase and external resources, conversational workflow for iterating on responses, project scaffolding for generating and deploying code blocks, and extensibility for creating custom LLM workflows with command chains.

llama-api-server

This project aims to create a RESTful API server compatible with the OpenAI API using open-source backends like llama/llama2. With this project, various GPT tools/frameworks can be compatible with your own model. Key features include: - **Compatibility with OpenAI API**: The API server follows the OpenAI API structure, allowing seamless integration with existing tools and frameworks. - **Support for Multiple Backends**: The server supports both llama.cpp and pyllama backends, providing flexibility in model selection. - **Customization Options**: Users can configure model parameters such as temperature, top_p, and top_k to fine-tune the model's behavior. - **Batch Processing**: The API supports batch processing for embeddings, enabling efficient handling of multiple inputs. - **Token Authentication**: The server utilizes token authentication to secure access to the API. This tool is particularly useful for developers and researchers who want to integrate large language models into their applications or explore custom models without relying on proprietary APIs.

For similar tasks

comark

Comark is a high-performance markdown parser and renderer that supports Vue & React components. It features a fast markdown-exit based parser, stream API for buffered parsing, Comark component syntax support, auto-close unclosed markdown syntax, frontmatter parsing (YAML), automatic table of contents generation, and full TypeScript support. It is designed to efficiently parse and render markdown content with support for Vue and React components.

hollama

Hollama is a minimal web-UI tool designed for interacting with Ollama servers. It features large prompt fields, streams completions, ability to copy completions as raw text, Markdown parsing with syntax highlighting, and saves sessions/context in the browser's localStorage. Users can access the latest version of Hollama at https://hollama.fernando.is without sign up, and data is stored locally on the browser. The tool can also be run as a Docker image by executing a specific command. Developers can connect to an Ollama server by updating the ORIGIN settings. Hollama facilitates easy development by providing instructions to set up the environment, install dependencies, and start a development server. Building a production version of the app is straightforward with a single command, and deployment may require installing an adapter for the target environment.

pro-chat

ProChat is a components library focused on quickly building large language model chat interfaces. It empowers developers to create rich, dynamic, and intuitive chat interfaces with features like automatic chat caching, streamlined conversations, message editing tools, auto-rendered Markdown, and programmatic controls. The tool also includes design evolution plans such as customized dialogue rendering, enhanced request parameters, personalized error handling, expanded documentation, and atomic component design.

ChatGPT-Telegram-Bot

The ChatGPT Telegram Bot is a powerful Telegram bot that utilizes various GPT models, including GPT3.5, GPT4, GPT4 Turbo, GPT4 Vision, DALL·E 3, Groq Mixtral-8x7b/LLaMA2-70b, and Claude2.1/Claude3 opus/sonnet API. It enables users to engage in efficient conversations and information searches on Telegram. The bot supports multiple AI models, online search with DuckDuckGo and Google, user-friendly interface, efficient message processing, document interaction, Markdown rendering, and convenient deployment options like Zeabur, Replit, and Docker. Users can set environment variables for configuration and deployment. The bot also provides Q&A functionality, supports model switching, and can be deployed in group chats with whitelisting. The project is open source under GPLv3 license.

PureChat

PureChat is a chat application integrated with ChatGPT, featuring efficient application building with Vite5, screenshot generation and copy support for chat records, IM instant messaging SDK for sessions, automatic light and dark mode switching based on system theme, Markdown rendering, code highlighting, and link recognition support, seamless social experience with GitHub quick login, integration of large language models like ChatGPT Ollama for streaming output, preset prompts, and context, Electron desktop app versions for macOS and Windows, ongoing development of more features. Environment setup requires Node.js 18.20+. Clone code with 'git clone https://github.com/Hyk260/PureChat.git', install dependencies with 'pnpm install', start project with 'pnpm dev', and build with 'pnpm build'.

Sidekick

Sidekick is a native LLM application for macOS that allows users to chat with a local language model to retrieve information from files, folders, and websites without the need for additional software installation. It operates offline, ensuring data privacy and security. Sidekick offers features such as resource access, image generation, inline writing assistance, advanced markdown rendering, fast generation speeds, and more. The tool aims to provide a simple and powerful solution for accessing local, private models with context awareness of user files and content on the web.

Code-Atlas

Code Atlas is a lightweight interpreter developed in C++ that supports the execution of multi-language code snippets and partial Markdown rendering. It consumes significantly lower resources compared to similar tools, making it suitable for resource-limited devices. It leverages llama.cpp for local large-model inference and supports cloud-based large-model APIs. The tool provides features for code execution, Markdown rendering, local AI inference, and resource efficiency.

deepchat

DeepChat is a versatile chat tool that supports multiple model cloud services and local model deployment. It offers multi-channel chat concurrency support, platform compatibility, complete Markdown rendering, and easy usability with a comprehensive guide. The tool aims to enhance chat experiences by leveraging various AI models and ensuring efficient conversation management.

For similar jobs

Protofy

Protofy is a full-stack, batteries-included low-code enabled web/app and IoT system with an API system and real-time messaging. It is based on Protofy (protoflow + visualui + protolib + protodevices) + Expo + Next.js + Tamagui + Solito + Express + Aedes + Redbird + Many other amazing packages. Protofy can be used to fast prototype Apps, webs, IoT systems, automations, or APIs. It is a ultra-extensible CMS with supercharged capabilities, mobile support, and IoT support (esp32 thanks to esphome).

react-native-vision-camera

VisionCamera is a powerful, high-performance Camera library for React Native. It features Photo and Video capture, QR/Barcode scanner, Customizable devices and multi-cameras ("fish-eye" zoom), Customizable resolutions and aspect-ratios (4k/8k images), Customizable FPS (30..240 FPS), Frame Processors (JS worklets to run facial recognition, AI object detection, realtime video chats, ...), Smooth zooming (Reanimated), Fast pause and resume, HDR & Night modes, Custom C++/GPU accelerated video pipeline (OpenGL).

dev-conf-replay

This repository contains information about various IT seminars and developer conferences in South Korea, allowing users to watch replays of past events. It covers a wide range of topics such as AI, big data, cloud, infrastructure, devops, blockchain, mobility, games, security, mobile development, frontend, programming languages, open source, education, and community events. Users can explore upcoming and past events, view related YouTube channels, and access additional resources like free programming ebooks and data structures and algorithms tutorials.

OpenDevin

OpenDevin is an open-source project aiming to replicate Devin, an autonomous AI software engineer capable of executing complex engineering tasks and collaborating actively with users on software development projects. The project aspires to enhance and innovate upon Devin through the power of the open-source community. Users can contribute to the project by developing core functionalities, frontend interface, or sandboxing solutions, participating in research and evaluation of LLMs in software engineering, and providing feedback and testing on the OpenDevin toolset.

polyfire-js

Polyfire is an all-in-one managed backend for AI apps that allows users to build AI applications directly from the frontend, eliminating the need for a separate backend. It simplifies the process by providing most backend services in just a few lines of code. With Polyfire, users can easily create chatbots, transcribe audio files, generate simple text, manage long-term memory, and generate images. The tool also offers starter guides and tutorials to help users get started quickly and efficiently.

sdfx

SDFX is the ultimate no-code platform for building and sharing AI apps with beautiful UI. It enables the creation of user-friendly interfaces for complex workflows by combining Comfy workflow with a UI. The tool is designed to merge the benefits of form-based UI and graph-node based UI, allowing users to create intricate graphs with a high-level UI overlay. SDFX is fully compatible with ComfyUI, abstracting the need for installing ComfyUI. It offers features like animated graph navigation, node bookmarks, UI debugger, custom nodes manager, app and template export, image and mask editor, and more. The tool compiles as a native app or web app, making it easy to maintain and add new features.

aimeos-laravel

Aimeos Laravel is a professional, full-featured, and ultra-fast Laravel ecommerce package that can be easily integrated into existing Laravel applications. It offers a wide range of features including multi-vendor, multi-channel, and multi-warehouse support, fast performance, support for various product types, subscriptions with recurring payments, multiple payment gateways, full RTL support, flexible pricing options, admin backend, REST and GraphQL APIs, modular structure, SEO optimization, multi-language support, AI-based text translation, mobile optimization, and high-quality source code. The package is highly configurable and extensible, making it suitable for e-commerce SaaS solutions, marketplaces, and online shops with millions of vendors.

llm-ui

llm-ui is a React library designed for LLMs, providing features such as removing broken markdown syntax, adding custom components to LLM output, smoothing out pauses in streamed output, rendering at native frame rate, supporting code blocks for every language with Shiki, and being headless to allow for custom styles. The library aims to enhance the user experience and flexibility when working with LLMs.