agent-tool-protocol

Agent Tool Protocol

Stars: 87

Agent Tool Protocol (ATP) is a production-ready, code-first protocol for AI agents to interact with external systems through secure sandboxed code execution. It enables AI agents to write and execute TypeScript/JavaScript code in a secure, sandboxed environment, allowing for parallel operations, data filtering and transformation, and familiar programming patterns. ATP provides a complete ecosystem for building production-ready AI agents with secure code execution, runtime SDK, stateless architecture, client tools for integration, provenance tracking, and compatibility with OpenAPI and MCP. It addresses limitations of traditional protocols like MCP by offering OpenAPI integration, parallel execution, data processing, code flexibility, universal compatibility, reduced token usage, and type safety. ATP allows LLMs to write code that executes in a secure sandbox, providing the full power of a programming language while maintaining strict security boundaries.

README:

A production-ready, code-first protocol for AI agents to interact with external systems through secure sandboxed code execution.

Agent Tool Protocol (ATP) is a next-generation protocol that enables AI agents to interact with external systems by generating and executing TypeScript/JavaScript code in a secure, sandboxed environment. Unlike traditional function-calling protocols, ATP allows LLMs to write code that can execute multiple operations in parallel, filter and transform data, chain operations together, and use familiar programming patterns.

ATP provides a complete ecosystem for building production-ready AI agents with:

- Secure code execution in isolated V8 VMs with memory limits and timeouts

- Runtime SDK (atp.*) for LLM calls, embeddings, approvals, caching, and logging

- Stateless architecture with optional caching for scalability

- Client tools for seamless integration with LangChain, LangGraph, and other frameworks

- Provenance tracking to defend against prompt injection attacks

- OpenAPI and MCP compatibility for connecting to any API or MCP server

Traditional function-calling protocols like Model Context Protocol (MCP) have fundamental limitations:

- Context Bloat: Large schemas consume significant tokens in every request

- Sequential Execution: Only one tool can be called at a time

- No Data Processing: Can't filter, transform, or combine results within the protocol

- Limited Model Support: Not all LLMs support function calling well

- Schema Overhead: Complex nested schemas are verbose and token-expensive

- ✅ OpenAPI Integration: Built-in OpenAPI integration that lets a single server connect to multiple MCP servers and OpenAPI specs

- ✅ Parallel Execution: Execute multiple operations simultaneously

- ✅ Data Processing: Filter, map, reduce, and transform data inline

- ✅ Code Flexibility: Use familiar programming patterns (loops, conditionals, async/await)

- ✅ Universal Compatibility: Works with any LLM that can generate code

- ✅ Reduced Token Usage: Code is more concise than verbose JSON schemas

- ✅ Type Safety: Full TypeScript support with generated type definitions

- ✅ Production Ready: Built-in security, caching, state management, and observability

ATP solves these problems by letting LLMs write code that executes in a secure sandbox, giving agents the full power of a programming language while maintaining strict security boundaries.
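To make the contrast concrete, here is a minimal sketch of the kind of script an agent might generate: several calls issued in parallel, then filtered and aggregated in one pass. The `findPetsByStatus` stub and its in-memory data are hypothetical stand-ins so the snippet runs on its own; under ATP the equivalent calls would go through the generated API bindings.

```typescript
// Hypothetical stand-in for a generated API binding; not part of ATP itself.
type Pet = { name: string; status: string; category?: { name: string } };

const fakePetstore: Record<string, Pet[]> = {
  available: [
    { name: 'Rex', status: 'available', category: { name: 'dog' } },
    { name: 'Tom', status: 'available', category: { name: 'cat' } },
  ],
  pending: [{ name: 'Bubbles', status: 'pending', category: { name: 'fish' } }],
  sold: [{ name: 'Max', status: 'sold' }],
};

async function findPetsByStatus(status: string): Promise<Pet[]> {
  return fakePetstore[status] || [];
}

// One script: three calls in parallel, then inline filtering and aggregation.
async function summarizePets(): Promise<{ total: number; categories: string[] }> {
  const statuses = ['available', 'pending', 'sold'];
  const batches = await Promise.all(statuses.map((s) => findPetsByStatus(s)));
  const pets = batches.reduce<Pet[]>((all, batch) => all.concat(batch), []);
  const categories = pets
    .filter((p) => p.category !== undefined)
    .map((p) => (p.category as { name: string }).name)
    .filter((name, i, all) => all.indexOf(name) === i); // de-duplicate
  return { total: pets.length, categories };
}
```

With a one-tool-at-a-time protocol, the same result would take three separate tool calls, with every intermediate payload passing through the model's context.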

graph TB

LLM[LLM/Agent] --> Client[ATP Client]

Client --> Server[ATP Server]

Server --> Validator[Code Validator<br/>AST Analysis]

Server --> Executor[Sandbox Executor<br/>Isolated VM]

Server --> Aggregator[API Aggregator<br/>OpenAPI/MCP/Custom]

Server --> Search[Search Engine<br/>Semantic/Keyword]

Server --> State[State Manager<br/>Pause/Resume]

Executor --> Runtime[Runtime APIs]

Runtime --> LLMAPI[atp.llm.*]

Runtime --> EmbedAPI[atp.embedding.*]

Runtime --> ApprovalAPI[atp.approval.*]

Runtime --> CacheAPI[atp.cache.*]

Runtime --> LogAPI[atp.log.*]

LLMAPI -.Pause.-> Client

EmbedAPI -.Pause.-> Client

ApprovalAPI -.Pause.-> Client

Aggregator --> OpenAPI[OpenAPI Loader]

Aggregator --> MCP[MCP Connector]

Aggregator --> Custom[Custom Functions]

Agents executing code have access to a powerful runtime SDK that provides:

- atp.llm.*: Client-side LLM execution with call, extract, and classify methods

- atp.embedding.*: Semantic search with embedding storage and similarity search

- atp.approval.*: Human-in-the-loop approvals with pause/resume support

- atp.cache.*: Key-value caching with TTL support

- atp.log.*: Structured logging for debugging and observability

- atp.progress.*: Progress reporting for long-running operations

- atp.api.*: Dynamic APIs from OpenAPI specs, MCP servers, or custom functions

The runtime SDK enables agents to perform complex workflows that require LLM reasoning, data persistence, human approval, and more—all within the secure sandbox.
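As an illustration of what "key-value caching with TTL" means in practice, the following is a minimal in-memory sketch. It is not the implementation behind atp.cache.*; the `TtlCache` class, its method names, and the injectable clock are assumptions made so the behavior can be shown in isolation.

```typescript
// Illustrative only: a tiny in-memory TTL cache, not ATP's atp.cache implementation.
class TtlCache<V> {
  private entries = new Map<string, { value: V; expiresAt: number }>();
  private now: () => number;

  // The clock is injectable so expiry can be demonstrated without waiting.
  constructor(now: () => number = () => Date.now()) {
    this.now = now;
  }

  set(key: string, value: V, ttlSeconds: number): void {
    this.entries.set(key, { value, expiresAt: this.now() + ttlSeconds * 1000 });
  }

  get(key: string): V | undefined {
    const entry = this.entries.get(key);
    if (!entry) return undefined;
    if (this.now() >= entry.expiresAt) {
      this.entries.delete(key); // lazily evict expired entries on read
      return undefined;
    }
    return entry.value;
  }
}
```

Expired entries are evicted lazily on read here; a shared cache such as Redis would typically handle expiry itself.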

ATP is designed as a stateless system for horizontal scalability:

- Stateless Server: Can run completely stateless when paired with a distributed cache such as Redis

- Execution State: Long-running executions can pause and resume via state management

- State TTL: Configurable time-to-live for execution state

This architecture allows ATP servers to scale horizontally while maintaining execution continuity for complex workflows.
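The pause/resume idea can be sketched as a small state store: a paused execution is written out with a timestamp, and whichever server instance receives the resume request reads it back, provided the TTL has not elapsed. The `ExecutionStateStore` class and its field names are hypothetical; in a real deployment the Map would be replaced by a shared store so that instances hold no state of their own.

```typescript
// Illustrative sketch; the field names are assumptions, not ATP's state schema.
interface PausedExecution {
  executionId: string;
  waitingFor: 'llm' | 'approval' | 'embedding';
  pausedAt: number; // epoch milliseconds
}

class ExecutionStateStore {
  private states = new Map<string, PausedExecution>();
  private ttlMs: number;

  constructor(ttlSeconds: number) {
    this.ttlMs = ttlSeconds * 1000;
  }

  // Persist the paused execution so that any server instance can pick it up.
  pause(executionId: string, waitingFor: PausedExecution['waitingFor'], now: number): void {
    this.states.set(executionId, { executionId, waitingFor, pausedAt: now });
  }

  // Return the saved state at most once; expired or unknown ids yield undefined.
  resume(executionId: string, now: number): PausedExecution | undefined {
    const state = this.states.get(executionId);
    if (!state) return undefined;
    this.states.delete(executionId);
    return now - state.pausedAt <= this.ttlMs ? state : undefined;
  }
}
```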

ATP can delegate parts of an execution to the client side:

- LLM Callbacks: Client-side LLM execution with automatic pause/resume

- Approval Workflows: Human-in-the-loop approvals handled by the client

- Client Tools: Execute tools defined on the client side

- Embedding Capabilities: Execute embedding requests using the client's embedding model

ATP includes advanced security features to defend against prompt injection and data exfiltration:

- Provenance Tracking: Tracks the origin of all data (user, LLM, API, etc.)

- Security Policies: Configurable policies like preventDataExfiltration and requireUserOrigin

- AST Analysis: Code validation to detect forbidden patterns

- Proxy Mode: Runtime interception of all external calls

- Audit Logging: Complete audit trail of all executions

Provenance security is inspired by Google Research's CAMEL paper and provides defense-in-depth against adversarial inputs.
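The core idea of provenance tracking can be shown with a short sketch: every value carries the set of origins it was derived from, and a policy inspects those origins before a sensitive action is allowed. The `Tagged` type and the `tag`, `derive`, and `isUserOnly` helpers below are hypothetical names for illustration and do not reflect ATP's actual data structures or policy signatures.

```typescript
type Origin = 'user' | 'llm' | 'api';

interface Tagged<T> {
  value: T;
  origins: Origin[];
}

function tag<T>(value: T, origin: Origin): Tagged<T> {
  return { value, origins: [origin] };
}

// A derived value inherits the origins of everything it was computed from.
function derive<T>(value: T, sources: Tagged<unknown>[]): Tagged<T> {
  const origins: Origin[] = [];
  for (const source of sources) {
    for (const origin of source.origins) {
      if (origins.indexOf(origin) === -1) origins.push(origin);
    }
  }
  return { value, origins };
}

// Hypothetical policy check: accept an argument only if it is purely user-originated.
function isUserOnly(arg: Tagged<unknown>): boolean {
  return arg.origins.length > 0 && arg.origins.every((o) => o === 'user');
}
```

Under this scheme, an email address typed by the user passes the check, while one extracted from an API response (or a string mixing both) does not, which is the property that blunts injected instructions hidden in tool output.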

# Using Yarn (recommended)

yarn add @mondaydotcomorg/atp-server @mondaydotcomorg/atp-client

# Using npm

npm install @mondaydotcomorg/atp-server @mondaydotcomorg/atp-client

# Using pnpm

pnpm add @mondaydotcomorg/atp-server @mondaydotcomorg/atp-client

# Using bun

bun add @mondaydotcomorg/atp-server @mondaydotcomorg/atp-client

📝 Note: The --no-node-snapshot flag is required for Node.js 20+

A single script that integrates OpenAPI (Petstore) and MCP (Playwright):

import { createServer, loadOpenAPI } from '@mondaydotcomorg/atp-server';

import { AgentToolProtocolClient } from '@mondaydotcomorg/atp-client';

import { MCPConnector } from '@mondaydotcomorg/atp-mcp-adapter';

process.env.ATP_JWT_SECRET = process.env.ATP_JWT_SECRET || 'test-secret-key';

async function main() {

const server = createServer({});

// Load OpenAPI spec (supports OpenAPI 3.0+ and Swagger 2.0)

const petstore = await loadOpenAPI('https://petstore.swagger.io/v2/swagger.json', {

name: 'petstore',

filter: { methods: ['GET'] },

});

// Connect to MCP server

const mcpConnector = new MCPConnector();

const playwright = await mcpConnector.connectToMCPServer({

name: 'playwright',

command: 'npx',

args: ['@playwright/mcp@latest'],

});

server.use([petstore, playwright]);

await server.listen(3333);

// Create client and execute code

const client = new AgentToolProtocolClient({

baseUrl: 'http://localhost:3333',

});

await client.init({ name: 'quickstart', version: '1.0.0' });

// Execute code that filters, maps, and transforms API data

const result = await client.execute(`

const pets = await api.petstore.findPetsByStatus({ status: 'available' });

const categories = pets

.filter(p => p.category?.name)

.map(p => p.category.name)

.filter((v, i, a) => a.indexOf(v) === i);

return {

totalPets: pets.length,

categories: categories.slice(0, 5),

sample: pets.slice(0, 3).map(p => ({

name: p.name,

status: p.status

}))

};

`);

console.log('Result:', JSON.stringify(result.result, null, 2));

}

main().catch(console.error);

Run it:

cd examples/quickstart

NODE_OPTIONS='--no-node-snapshot' npm start

Use ATP with LangChain/LangGraph for autonomous agents:

import { createServer, loadOpenAPI } from '@mondaydotcomorg/atp-server';

import { ChatOpenAI } from '@langchain/openai';

import { createReactAgent } from '@langchain/langgraph/prebuilt';

import { createATPTools } from '@mondaydotcomorg/atp-langchain';

async function main() {

// Start ATP server with OpenAPI

const server = createServer({});

const petstore = await loadOpenAPI('https://petstore.swagger.io/v2/swagger.json', {

name: 'petstore',

filter: { methods: ['GET'] },

});

server.use([petstore]);

await server.listen(3333);

// Create LangChain agent with ATP tools

const llm = new ChatOpenAI({ modelName: 'gpt-4o-mini', temperature: 0 });

const { tools } = await createATPTools({

serverUrl: 'http://localhost:3333',

llm,

});

const agent = createReactAgent({ llm, tools });

// Agent autonomously uses ATP to call APIs

const result = await agent.invoke({

messages: [

{

role: 'user',

content:

'Use ATP to fetch available pets from the petstore API, then tell me how many pets are available and list 3 example pet names.',

},

],

});

console.log('Agent response:', result.messages[result.messages.length - 1].content);

}

main().catch(console.error);

Run it:

cd examples/langchain-quickstart

export OPENAI_API_KEY=sk-...

NODE_OPTIONS='--no-node-snapshot' npm start

📝 Note: The --no-node-snapshot flag is required for Node.js 20+ and is already configured in the package.json.

ATP provides powerful LangChain/LangGraph integration with LLM callbacks and approval workflows:

import { MemorySaver } from '@langchain/langgraph';

import { createReactAgent } from '@langchain/langgraph/prebuilt';

import { createATPTools } from '@mondaydotcomorg/atp-langchain';

import { ChatOpenAI } from '@langchain/openai';

const llm = new ChatOpenAI({ modelName: 'gpt-4.1' });

// Create ATP tools with LLM support and LangGraph interrupt-based approvals

const { tools, isApprovalRequired, resumeWithApproval } = await createATPTools({

serverUrl: 'http://localhost:3333',

llm,

// useLangGraphInterrupts: true (default) - use LangGraph checkpoints for async approvals

});

const checkpointer = new MemorySaver();

const agent = createReactAgent({ llm, tools, checkpointSaver: checkpointer });

try {

await agent.invoke({ messages: [...] }, { configurable: { thread_id: 'thread-1' } });

} catch (error) {

if (isApprovalRequired(error)) {

const { executionId, message } = error.approvalRequest;

// Notify user (Slack, email, etc.)

await notifyUser(message);

// Wait for approval (async - can take hours/days)

const approved = await waitForApproval(executionId);

// Resume execution

const result = await resumeWithApproval(executionId, approved);

}

}

// Alternative: Simple synchronous approval handler

const { tools } = await createATPTools({

serverUrl: 'http://localhost:3333',

llm,

useLangGraphInterrupts: false, // Required for approvalHandler

approvalHandler: async (message, context) => {

console.log('Approval requested:', message);

return true; // or false to deny

},

});

The atp.* runtime SDK provides a comprehensive set of APIs for agents executing code:

- atp.llm.*: Client-side LLM execution for reasoning, extraction, and classification (requires client.provideLLM())

- atp.embedding.*: Semantic search with embedding storage and similarity search (requires client.provideEmbedding())

- atp.approval.*: Human-in-the-loop approvals with pause/resume support (requires client.provideApproval())

- atp.cache.*: Key-value caching with TTL for performance optimization

- atp.log.*: Structured logging for debugging and observability

- atp.progress.*: Progress reporting for long-running operations

- atp.api.*: Dynamic APIs from OpenAPI specs, MCP servers, or custom functions

All runtime APIs are available within the secure sandbox and automatically handle pause/resume for operations that require client-side interaction (LLM, embeddings, approvals).

- Isolated VM: Code runs in true V8 isolates with separate heaps

- No Node.js Access: Zero access to fs, net, child_process, etc.

- Memory Limits: Hard memory limits enforced at VM level

- Timeout Protection: Automatic termination after timeout

- Code Validation: AST analysis and forbidden pattern detection
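Forbidden pattern detection can be pictured as a pre-execution scan that rejects code before it ever reaches the sandbox. The sketch below uses plain regular expressions, which is a much cruder technique than the AST analysis the list above describes, and the rule set is an assumption chosen for illustration rather than ATP's actual rules.

```typescript
// Hypothetical rule set; a regex scan, not real AST analysis.
const FORBIDDEN_PATTERNS: { name: string; pattern: RegExp }[] = [
  { name: 'require', pattern: /\brequire\s*\(/ },
  { name: 'dynamic import', pattern: /\bimport\s*\(/ },
  { name: 'eval', pattern: /\beval\s*\(/ },
  { name: 'process', pattern: /\bprocess\s*\./ },
];

// Returns the names of every rule the code violates; an empty array means it passed.
function findForbiddenPatterns(code: string): string[] {
  return FORBIDDEN_PATTERNS.filter((rule) => rule.pattern.test(code)).map((rule) => rule.name);
}
```

A regex scan can be fooled by string concatenation or comments, which is one reason to pair validation with runtime isolation rather than rely on it alone.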

Defend against prompt injection with provenance tracking:

import { createServer, ProvenanceMode } from '@mondaydotcomorg/atp-server';

import { preventDataExfiltration, requireUserOrigin } from '@mondaydotcomorg/atp-server';

const server = createServer({

execution: {

provenanceMode: ProvenanceMode.PROXY, // or AST

securityPolicies: [

preventDataExfiltration, // Block data exfiltration

requireUserOrigin, // Require user-originated data

],

},

});

- LLM Call Limits: Configurable max LLM calls per execution

- Rate Limiting: Requests per minute and executions per hour

- API Key Authentication: Optional API key requirement

- Audit Logging: All executions logged for compliance
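A "requests per minute" limit is commonly implemented as a sliding window over recent request timestamps. The following sketch shows that approach under assumed names (`SlidingWindowLimiter`, `tryAcquire`); it is not ATP's rate limiter, and the source does not say which algorithm ATP uses.

```typescript
// Illustrative sliding-window limiter; class and method names are assumptions.
class SlidingWindowLimiter {
  private hits = new Map<string, number[]>();
  private limit: number;
  private windowMs: number;

  constructor(limit: number, windowMs: number) {
    this.limit = limit;
    this.windowMs = windowMs;
  }

  // Records the request and returns true if the client is still under its limit.
  tryAcquire(clientId: string, now: number): boolean {
    const cutoff = now - this.windowMs;
    const recent = (this.hits.get(clientId) || []).filter((t) => t > cutoff);
    const allowed = recent.length < this.limit;
    if (allowed) recent.push(now);
    this.hits.set(clientId, recent);
    return allowed;
  }
}
```

For example, `new SlidingWindowLimiter(60, 60000)` would allow each client at most 60 requests in any 60-second window.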

ATP provides intelligent API discovery to help agents find the right tools:

- Semantic Search: Embedding-based search for natural language queries (requires embeddings)

- Keyword Search: Fast keyword-based search across API names and descriptions

- Type Definitions: Generated TypeScript definitions for all available APIs

- Schema Exploration: Full API schema exploration via client.explore()
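Keyword search over API names and descriptions can be sketched as a simple scoring pass. The `ApiEntry` shape, the `keywordSearch` function, and the weighting (a name match counts double) are assumptions for illustration and say nothing about how ATP's search engine actually ranks results.

```typescript
interface ApiEntry {
  name: string;
  description: string;
}

// Scores each API by how many query terms appear in its name (weight 2) or description (weight 1).
function keywordSearch(apis: ApiEntry[], query: string): ApiEntry[] {
  const terms = query.toLowerCase().split(/\s+/).filter((t) => t.length > 0);
  return apis
    .map((api) => {
      const name = api.name.toLowerCase();
      const description = api.description.toLowerCase();
      let score = 0;
      for (const term of terms) {
        if (name.indexOf(term) !== -1) score += 2;
        if (description.indexOf(term) !== -1) score += 1;
      }
      return { api, score };
    })
    .filter((r) => r.score > 0)
    .sort((a, b) => b.score - a.score)
    .map((r) => r.api);
}
```

Semantic search would replace the substring test with a similarity comparison between embeddings of the query and of each API description.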

Full server configuration:

import { createServer } from '@mondaydotcomorg/atp-server';

import { RedisCache, JSONLAuditSink } from '@mondaydotcomorg/atp-providers';

const server = createServer({

execution: {

timeout: 30000, // 30 seconds

memory: 128 * 1024 * 1024, // 128 MB

llmCalls: 10, // Max LLM calls per execution

provenanceMode: 'proxy', // Provenance tracking mode

securityPolicies: [...], // Security policies

},

clientInit: {

tokenTTL: 3600, // 1 hour

tokenRotation: 1800, // 30 minutes

},

executionState: {

ttl: 3600, // State TTL in seconds

maxPauseDuration: 3600, // Max pause duration

},

discovery: {

embeddings: embeddingsModel, // Enable semantic search

},

audit: {

enabled: true,

sinks: [

new JSONLAuditSink({ path: './logs', rotateDaily: true }),

],

},

otel: {

enabled: true,

serviceName: 'atp-server',

traceEndpoint: 'http://localhost:4318/v1/traces',

},

});

// Add providers

server.setCacheProvider(new RedisCache({ redis }));

server.setAuthProvider(authProvider);

await server.start(3333);

import { RedisCache } from '@mondaydotcomorg/atp-providers';

import Redis from 'ioredis';

const redis = new Redis(process.env.REDIS_URL);

server.setCacheProvider(new RedisCache({ redis }));

import { JSONLAuditSink } from '@mondaydotcomorg/atp-providers';

const server = createServer({

audit: {

enabled: true,

sinks: [new JSONLAuditSink({ path: './audit-logs', rotateDaily: true })],

},

});

const server = createServer({

otel: {

enabled: true,

serviceName: 'atp-server',

traceEndpoint: 'http://localhost:4318/v1/traces',

metricsEndpoint: 'http://localhost:4318/v1/metrics',

},

});

import { GoogleOAuthProvider } from '@mondaydotcomorg/atp-providers';

const oauthProvider = new GoogleOAuthProvider({

clientId: process.env.GOOGLE_CLIENT_ID,

clientSecret: process.env.GOOGLE_CLIENT_SECRET,

redirectUri: 'http://localhost:3333/oauth/callback',

scopes: ['https://www.googleapis.com/auth/calendar'],

});

server.addAPIGroup({

name: 'calendar',

type: 'oauth',

oauthProvider,

functions: [...],

});

@agent-tool-protocol/

├── protocol # Core types and interfaces

├── server # ATP server implementation

├── client # Client SDK

├── runtime # Runtime APIs (atp.*)

├── mcp-adapter # MCP integration

├── langchain # LangChain/LangGraph integration

├── atp-compiler # Loop transformation and optimization

├── providers # Cache, auth, OAuth, audit providers

└── provenance # Provenance security (CAMEL-inspired)

All examples are self-contained and work end-to-end without external servers.

📝 Note: Node.js 20+ requires the --no-node-snapshot flag. This is already configured in each example's package.json scripts, so just run npm start.

Complete example with OpenAPI (Petstore) and MCP (Playwright) integration.

cd examples/quickstart

NODE_OPTIONS='--no-node-snapshot' npm start

Environment variables:

- ATP_JWT_SECRET - Optional (defaults to test-secret-key in code)

Autonomous LangChain agent using ATP to interact with APIs.

cd examples/langchain-quickstart

export OPENAI_API_KEY=sk-...

NODE_OPTIONS='--no-node-snapshot' npm start

Environment variables:

- OPENAI_API_KEY - Required: Your OpenAI API key

- ATP_JWT_SECRET - Optional (defaults to test-secret-key in code)

Advanced LangChain agent with the test server.

# Start test server

cd examples/test-server

npx tsx server.ts

# Run agent

cd examples/langchain-react-agent

export OPENAI_API_KEY=sk-...

npm start

Environment variables:

- OPENAI_API_KEY - Required: Your OpenAI API key

Other examples in the examples/ directory:

- openapi-example - OpenAPI integration examples

- oauth-example - OAuth flow examples

- production-example - Production configuration examples

# Clone repository

git clone https://github.com/yourusername/agent-tool-protocol.git

cd agent-tool-protocol

# Install dependencies (Node.js 18+)

yarn install

# Build all packages

yarn build

# Run tests

yarn test

# Run E2E tests

yarn test:e2e

# Lint

yarn lint

See docs/publishing.md for publishing releases and prereleases.

Node.js 18+ is required. Node.js 20+ requires the --no-node-snapshot flag.

isolated-vm requires native compilation:

- macOS: xcode-select --install

- Ubuntu/Debian: sudo apt-get install python3 g++ build-essential

- Windows: See node-gyp Windows instructions

This project is licensed under the MIT License - see the License file for details.

- Inspired by Model Context Protocol (MCP)

- Provenance security based on Google Research's CAMEL paper

- Sandboxing powered by isolated-vm

- LangChain integration via @langchain/core

Built with ❤️ for the AI community


Alternative AI tools for agent-tool-protocol

Similar Open Source Tools


sdk-typescript

Strands Agents - TypeScript SDK is a lightweight and flexible SDK that takes a model-driven approach to building and running AI agents in TypeScript/JavaScript. It brings key features from the Python Strands framework to Node.js environments, enabling type-safe agent development for various applications. The SDK supports model agnostic development with first-class support for Amazon Bedrock and OpenAI, along with extensible architecture for custom providers. It also offers built-in MCP support, real-time response streaming, extensible hooks, and conversation management features. With tools for interaction with external systems and seamless integration with MCP servers, the SDK provides a comprehensive solution for developing AI agents.

polyfire-js

Polyfire is an all-in-one managed backend for AI apps that allows users to build AI apps directly from the frontend, eliminating the need for a separate backend. It simplifies the process by providing most backend services in just a few lines of code. With Polyfire, users can easily create chatbots, transcribe audio files to text, generate simple text, create a long-term memory, and generate images with Dall-E. The tool also offers starter guides and tutorials to help users get started quickly and efficiently.

alphora

Alphora is a full-stack framework for building production AI agents, providing agent orchestration, prompt engineering, tool execution, memory management, streaming, and deployment with an async-first, OpenAI-compatible design. It offers features like agent derivation, reasoning-action loop, async streaming, visual debugger, OpenAI compatibility, multimodal support, tool system with zero-config tools and type safety, prompt engine with dynamic prompts, memory and storage management, sandbox for secure execution, deployment as API, and more. Alphora allows users to build sophisticated AI agents easily and efficiently.

MCP-Nest

A NestJS module to effortlessly expose tools, resources, and prompts for AI using the Model Context Protocol (MCP). It allows defining tools, resources, and prompts in a familiar NestJS way, supporting multi-transport, tool validation, interactive tool calls, request context access, fine-grained authorization, resource serving, dynamic resources, prompt templates, guard-based authentication, dependency injection, server mutation, and instrumentation. It provides features for building ChatGPT widgets and MCP apps.

flyte-sdk

Flyte 2 SDK is a pure Python tool for type-safe, distributed orchestration of agents, ML pipelines, and more. It allows users to write data pipelines, ML training jobs, and distributed compute in Python without any DSL constraints. With features like async-first parallelism and fine-grained observability, Flyte 2 offers a seamless workflow experience. Users can leverage core concepts like TaskEnvironments for container configuration, pure Python workflows for flexibility, and async parallelism for distributed execution. Advanced features include sub-task observability with tracing and remote task execution. The tool also provides native Jupyter integration for running and monitoring workflows directly from notebooks. Configuration and deployment are made easy with configuration files and commands for deploying and running workflows. Flyte 2 is licensed under the Apache 2.0 License.

botserver

General Bots is a self-hosted AI automation platform and LLM conversational platform focused on convention over configuration and code-less approaches. It serves as the core API server handling LLM orchestration, business logic, database operations, and multi-channel communication. The platform offers features like multi-vendor LLM API, MCP + LLM Tools Generation, Semantic Caching, Web Automation Engine, Enterprise Data Connectors, and Git-like Version Control. It enforces a ZERO TOLERANCE POLICY for code quality and security, with strict guidelines for error handling, performance optimization, and code patterns. The project structure includes modules for core functionalities like Rhai BASIC interpreter, security, shared types, tasks, auto task system, file operations, learning system, and LLM assistance.

OpenMemory

OpenMemory is a cognitive memory engine for AI agents, providing real long-term memory capabilities beyond simple embeddings. It is self-hosted and supports Python + Node SDKs, with integrations for various tools like LangChain, CrewAI, AutoGen, and more. Users can ingest data from sources like GitHub, Notion, Google Drive, and others directly into memory. OpenMemory offers explainable traces for recalled information and supports multi-sector memory, temporal reasoning, decay engine, waypoint graph, and more. It aims to provide a true memory system rather than just a vector database with marketing copy, enabling users to build agents, copilots, journaling systems, and coding assistants that can remember and reason effectively.

nanolang

NanoLang is a minimal, LLM-friendly programming language that transpiles to C for native performance. It features mandatory testing, unambiguous syntax, automatic memory management, LLM-powered autonomous optimization, dual notation for operators, static typing, C interop, and native performance. The language supports variables, functions with mandatory tests, control flow, structs, enums, generic types, and provides a clean, modern syntax optimized for both human readability and AI code generation.

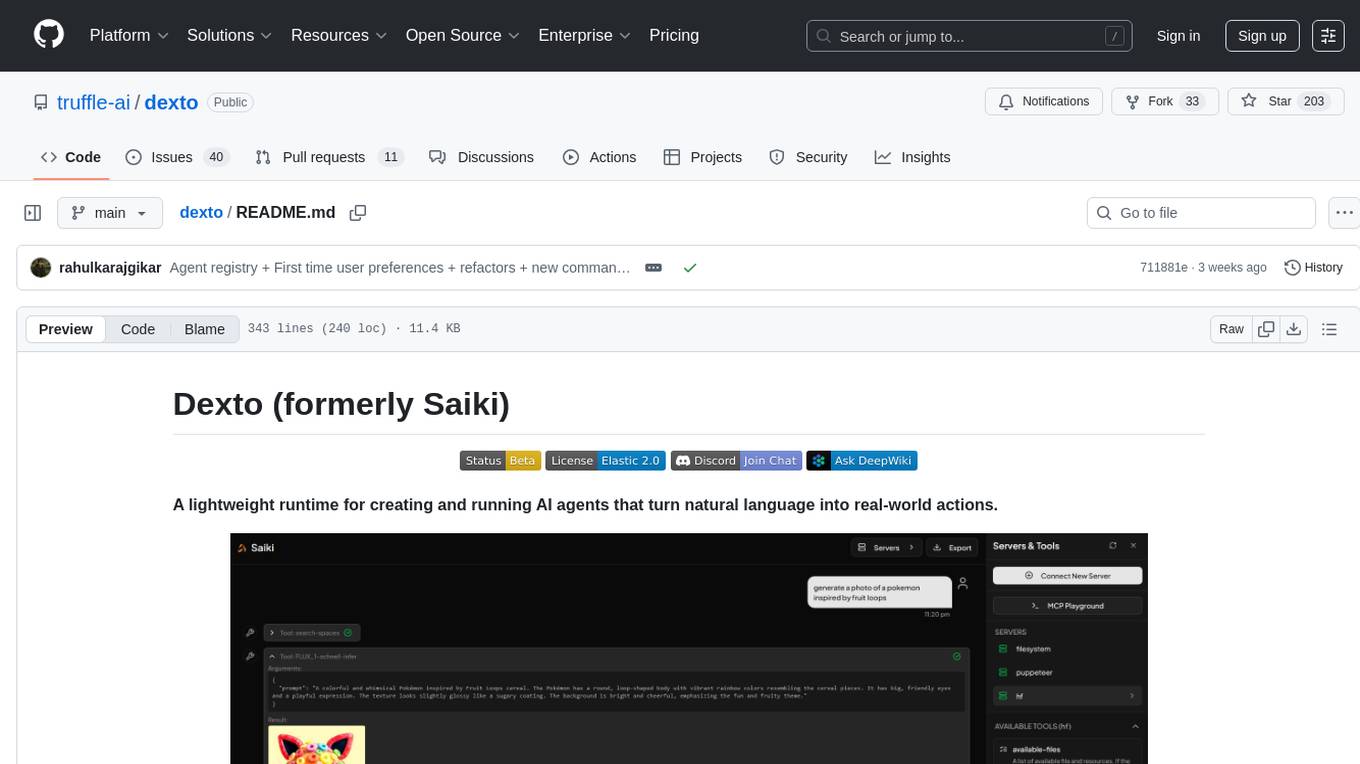

dexto

Dexto is a lightweight runtime for creating and running AI agents that turn natural language into real-world actions. It serves as the missing intelligence layer for building AI applications, standalone chatbots, or as the reasoning engine inside larger products. Dexto features a powerful CLI and Web UI for running AI agents, supports multiple interfaces, allows hot-swapping of LLMs from various providers, connects to remote tool servers via the Model Context Protocol, is config-driven with version-controlled YAML, offers production-ready core features, extensibility for custom services, and enables multi-agent collaboration via MCP and A2A.

ai-counsel

AI Counsel is a true deliberative consensus MCP server where AI models engage in actual debate, refine positions across multiple rounds, and converge with voting and confidence levels. It features two modes (quick and conference), mixed adapters (CLI tools and HTTP services), auto-convergence, structured voting, semantic grouping, model-controlled stopping, evidence-based deliberation, local model support, data privacy, context injection, semantic search, fault tolerance, and full transcripts. Users can run local and cloud models to deliberate on various questions, ground decisions in reality by querying code and files, and query past decisions for analysis. The tool is designed for critical technical decisions requiring multi-model deliberation and consensus building.

mcpproxy-go

MCPProxy is an open-source desktop application that enhances AI agents by enabling intelligent tool discovery, reducing token usage, and providing security against malicious servers. It allows users to federate multiple servers, save tokens, and improve accuracy. The tool works offline and is compatible with various platforms. It offers features like Docker isolation, secrets management, OAuth authentication support, and HTTPS setup. Users can easily install, run, and manage servers using CLI commands. The tool provides detailed documentation and supports various tasks like adding servers, connecting to IDEs, and managing secrets.

routilux

Routilux is a powerful event-driven workflow orchestration framework designed for building complex data pipelines and workflows effortlessly. It offers features like event queue architecture, flexible connections, built-in state management, robust error handling, concurrent execution, persistence & recovery, and simplified API. Perfect for tasks such as data pipelines, API orchestration, event processing, workflow automation, microservices coordination, and LLM agent workflows.

sagents

Sagents is a framework designed for Elixir developers to build interactive AI applications. It combines the wisdom of a Sage with the power of LLM-based Agents, offering features like Human-In-The-Loop (HITL) for sensitive operations, SubAgents for efficient task delegation, GenServer Architecture for supervised OTP processes, Phoenix.Presence Integration for resource management, PubSub Real-Time Events for streaming agent state, Middleware System for extensible capabilities, Cluster-Aware Distribution for running agents across nodes, State Persistence for saving and restoring agent conversations, and Virtual Filesystem for isolated file operations with optional persistence. Sagents is suitable for developers working on interactive AI applications requiring real-time conversations, human oversight, isolated agent processes, persistent agent state, and real-time UI updates.

mcp-context-forge

MCP Context Forge is a powerful tool for generating context-aware data for machine learning models. It provides functionalities to create diverse datasets with contextual information, enhancing the performance of AI algorithms. The tool supports various data formats and allows users to customize the context generation process easily. With MCP Context Forge, users can efficiently prepare training data for tasks requiring contextual understanding, such as sentiment analysis, recommendation systems, and natural language processing.

For similar tasks

AutoGPT

AutoGPT is a revolutionary tool that empowers everyone to harness the power of AI. With AutoGPT, you can effortlessly build, test, and delegate tasks to AI agents, unlocking a world of possibilities. Our mission is to provide the tools you need to focus on what truly matters: innovation and creativity.

agent-os

The Agent OS is an experimental framework and runtime to build sophisticated, long running, and self-coding AI agents. We believe that the most important super-power of AI agents is to write and execute their own code to interact with the world. But for that to work, they need to run in a suitable environment—a place designed to be inhabited by agents. The Agent OS is designed from the ground up to function as a long-term computing substrate for these kinds of self-evolving agents.

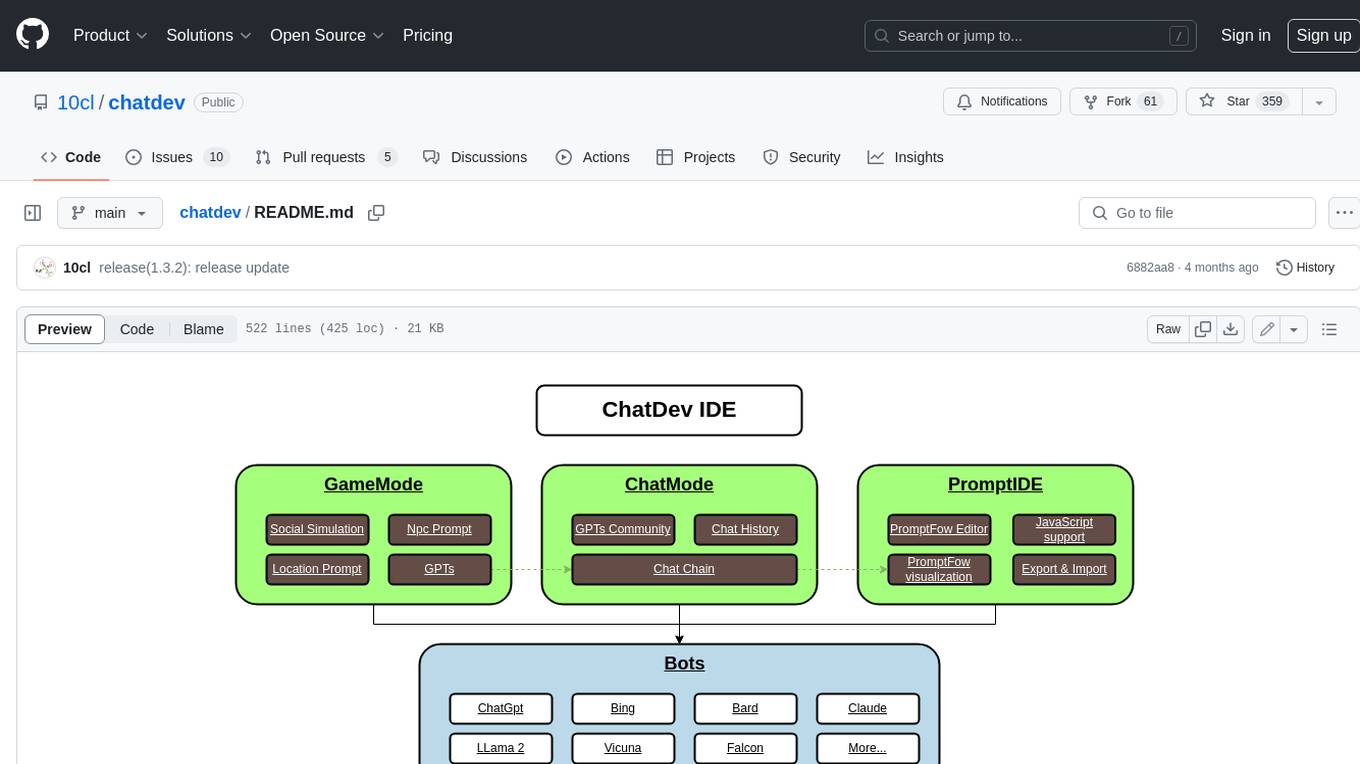

chatdev

ChatDev IDE is a tool for building your AI agent. Whether it's NPCs in games or powerful agent tools, you can design what you want on this platform. It accelerates prompt engineering through **JavaScript Support** that allows implementing complex prompting techniques.

module-ballerinax-ai.agent

This library provides functionality required to build ReAct Agent using Large Language Models (LLMs).

npi

NPi is an open-source platform providing Tool-use APIs to empower AI agents with the ability to take action in the virtual world. It is currently under active development, and the APIs are subject to change in future releases. NPi offers a command line tool for installation and setup, along with a GitHub app for easy access to repositories. The platform also includes a Python SDK and examples like Calendar Negotiator and Twitter Crawler. Join the NPi community on Discord to contribute to the development and explore the roadmap for future enhancements.

ai-agents

The 'ai-agents' repository is a collection of books and resources focused on developing AI agents, including topics such as GPT models, building AI agents from scratch, machine learning theory and practice, and basic methods and tools for data analysis. The repository provides detailed explanations and guidance for individuals interested in learning about and working with AI agents.

llms

The 'llms' repository is a comprehensive guide on Large Language Models (LLMs), covering topics such as language modeling, applications of LLMs, statistical language modeling, neural language models, conditional language models, evaluation methods, transformer-based language models, practical LLMs like GPT and BERT, prompt engineering, fine-tuning LLMs, retrieval augmented generation, AI agents, and LLMs for computer vision. The repository provides detailed explanations, examples, and tools for working with LLMs.

ai-app

The 'ai-app' repository is a comprehensive collection of tools and resources related to artificial intelligence, focusing on topics such as server environment setup, PyCharm and Anaconda installation, large model deployment and training, Transformer principles, RAG technology, vector databases, AI image, voice, and music generation, and AI Agent frameworks. It also includes practical guides and tutorials on implementing various AI applications. The repository serves as a valuable resource for individuals interested in exploring different aspects of AI technology.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers

- Track LLM usage on a per user and per organization basis

- Block or redact requests containing PIIs

- Improve LLM reliability with failovers, retries and caching

- Distribute API keys with rate limits and cost limits for internal development/production use cases

- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.