aiortc

WebRTC and ORTC implementation for Python using asyncio

Stars: 4570

aiortc is a Python library for Web Real-Time Communication (WebRTC) and Object Real-Time Communication (ORTC). It provides a simple and readable implementation for programmers to understand and tinker with WebRTC internals. The library allows for exchanging audio, video, and data channels, supports SDP generation/parsing, ICE, DTLS, SRTP, SCTP, and various audio/video codecs. It also enables creating innovative products by leveraging Python ecosystem modules, such as computer vision algorithms with OpenCV. Extensive testing ensures high code quality.

README:

.. image:: docs/_static/aiortc.svg :width: 120px :alt: aiortc

.. image:: https://img.shields.io/pypi/l/aiortc.svg :target: https://pypi.python.org/pypi/aiortc :alt: License

.. image:: https://img.shields.io/pypi/v/aiortc.svg :target: https://pypi.python.org/pypi/aiortc :alt: Version

.. image:: https://img.shields.io/pypi/pyversions/aiortc.svg :target: https://pypi.python.org/pypi/aiortc :alt: Python versions

.. image:: https://github.com/aiortc/aiortc/workflows/tests/badge.svg :target: https://github.com/aiortc/aiortc/actions :alt: Tests

.. image:: https://img.shields.io/codecov/c/github/aiortc/aiortc.svg :target: https://codecov.io/gh/aiortc/aiortc :alt: Coverage

.. image:: https://readthedocs.org/projects/aiortc/badge/?version=latest :target: https://aiortc.readthedocs.io/ :alt: Documentation

aiortc is a library for Web Real-Time Communication (WebRTC)_ and

Object Real-Time Communication (ORTC)_ in Python. It is built on top of

asyncio, Python's standard asynchronous I/O framework.

The API closely follows its Javascript counterpart while using pythonic constructs:

- promises are replaced by coroutines

- events are emitted using

pyee.EventEmitter

To learn more about aiortc please read the documentation_.

.. _Web Real-Time Communication (WebRTC): https://webrtc.org/ .. _Object Real-Time Communication (ORTC): https://ortc.org/ .. _read the documentation: https://aiortc.readthedocs.io/en/latest/

The main WebRTC and ORTC implementations are either built into web browsers, or come in the form of native code. While they are extensively battle tested, their internals are complex and they do not provide Python bindings. Furthermore they are tightly coupled to a media stack, making it hard to plug in audio or video processing algorithms.

In contrast, the aiortc implementation is fairly simple and readable. As

such it is a good starting point for programmers wishing to understand how

WebRTC works or tinker with its internals. It is also easy to create innovative

products by leveraging the extensive modules available in the Python ecosystem.

For instance you can build a full server handling both signaling and data

channels or apply computer vision algorithms to video frames using OpenCV.

Furthermore, a lot of effort has gone into writing an extensive test suite for

the aiortc code to ensure best-in-class code quality.

aiortc allows you to exchange audio, video and data channels and

interoperability is regularly tested against both Chrome and Firefox. Here are

some of its features:

- SDP generation / parsing

- Interactive Connectivity Establishment, with half-trickle and mDNS support

- DTLS key and certificate generation

- DTLS handshake, encryption / decryption (for SCTP)

- SRTP keying, encryption and decryption for RTP and RTCP

- Pure Python SCTP implementation

- Data Channels

- Sending and receiving audio (Opus / PCMU / PCMA)

- Sending and receiving video (VP8 / H.264)

- Bundling audio / video / data channels

- RTCP reports, including NACK / PLI to recover from packet loss

The easiest way to install aiortc is to run:

.. code:: bash

pip install aiortc

aiortc is released under the BSD license_.

.. _BSD license: https://aiortc.readthedocs.io/en/latest/license.html

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for aiortc

Similar Open Source Tools

aiortc

aiortc is a Python library for Web Real-Time Communication (WebRTC) and Object Real-Time Communication (ORTC). It provides a simple and readable implementation for programmers to understand and tinker with WebRTC internals. The library allows for exchanging audio, video, and data channels, supports SDP generation/parsing, ICE, DTLS, SRTP, SCTP, and various audio/video codecs. It also enables creating innovative products by leveraging Python ecosystem modules, such as computer vision algorithms with OpenCV. Extensive testing ensures high code quality.

eShopSupport

eShopSupport is a sample .NET application showcasing common use cases and development practices for building AI solutions in .NET, specifically Generative AI. It demonstrates a customer support application for an e-commerce website using a services-based architecture with .NET Aspire. The application includes support for text classification, sentiment analysis, text summarization, synthetic data generation, and chat bot interactions. It also showcases development practices such as developing solutions locally, evaluating AI responses, leveraging Python projects, and deploying applications to the Cloud.

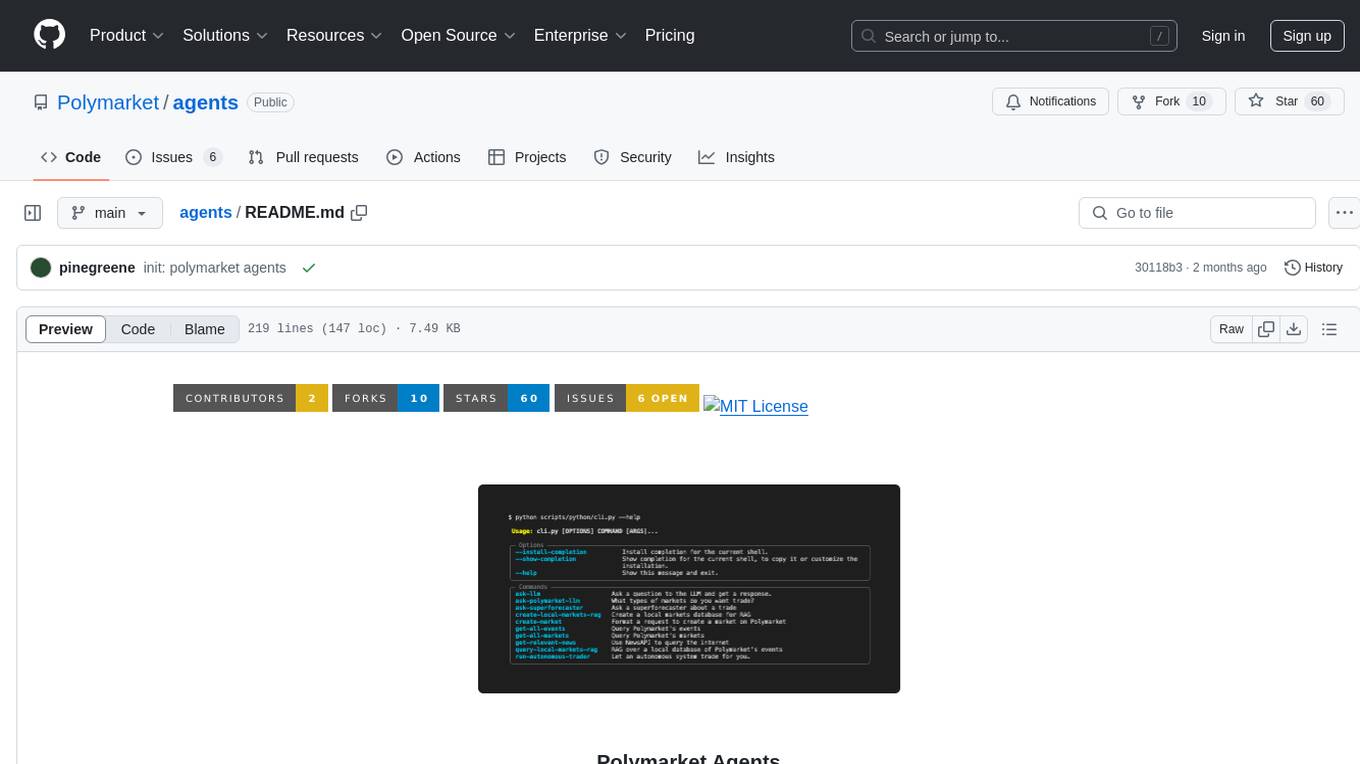

agents

Polymarket Agents is a developer framework and set of utilities for building AI agents to trade autonomously on Polymarket. It integrates with Polymarket API, provides AI agent utilities for prediction markets, supports local and remote RAG, sources data from various services, and offers comprehensive LLM tools for prompt engineering. The architecture features modular components like APIs and scripts for managing local environments, server set-up, and CLI for end-user commands.

poml

POML (Prompt Orchestration Markup Language) is a novel markup language designed to bring structure, maintainability, and versatility to advanced prompt engineering for Large Language Models (LLMs). It addresses common challenges in prompt development, such as lack of structure, complex data integration, format sensitivity, and inadequate tooling. POML provides a systematic way to organize prompt components, integrate diverse data types seamlessly, and manage presentation variations, empowering developers to create more sophisticated and reliable LLM applications.

ChatLab

ChatLab is a free, open-source, and local-first application dedicated to analyzing chat records. Through an AI Agent and a flexible SQL engine, you can freely dissect, query, and even reconstruct your social data. It provides ultimate performance, privacy protection, an intelligent AI Agent, multi-dimensional data visualization, and format standardization. The tool supports chat record analysis for various platforms like LINE, WeChat, QQ, WhatsApp, Instagram, and Discord. Users can export chat records, troubleshoot, and access standardized format specifications. The system architecture includes Electron Main Process, Worker and Data Pipeline, and Rendering Process. Local development setup steps are provided for Node.js environment. Contributions are welcome following specific guidelines. Users are advised to read the Privacy Policy & User Agreement before using the software. The tool is licensed under AGPL-3.0 License.

OllamaKit

OllamaKit is a Swift library designed to simplify interactions with the Ollama API. It handles network communication and data processing, offering an efficient interface for Swift applications to communicate with the Ollama API. The library is optimized for use within Ollamac, a macOS app for interacting with Ollama models.

litlytics

LitLytics is an affordable analytics platform leveraging LLMs for automated data analysis. It simplifies analytics for teams without data scientists, generates custom pipelines, and allows customization. Cost-efficient with low data processing costs. Scalable and flexible, works with CSV, PDF, and plain text data formats.

awsome_kali_MCPServers

awsome-kali-MCPServers is a repository containing Model Context Protocol (MCP) servers tailored for Kali Linux environments. It aims to optimize reverse engineering, security testing, and automation tasks by incorporating powerful tools and flexible features. The collection includes network analysis tools, support for binary understanding, and automation scripts to streamline repetitive tasks. The repository is continuously evolving with new features and integrations based on the FastMCP framework, such as network scanning, symbol analysis, binary analysis, string extraction, network traffic analysis, and sandbox support using Docker containers.

hashbrown

Hashbrown is a lightweight and efficient hashing library for Python, designed to provide easy-to-use cryptographic hashing functions for secure data storage and transmission. It supports a variety of hashing algorithms, including MD5, SHA-1, SHA-256, and SHA-512, allowing users to generate hash values for strings, files, and other data types. With Hashbrown, developers can quickly implement data integrity checks, password hashing, digital signatures, and other security features in their Python applications.

AgentIQ

AgentIQ is a flexible library designed to seamlessly integrate enterprise agents with various data sources and tools. It enables true composability by treating agents, tools, and workflows as simple function calls. With features like framework agnosticism, reusability, rapid development, profiling, observability, evaluation system, user interface, and MCP compatibility, AgentIQ empowers developers to move quickly, experiment freely, and ensure reliability across agent-driven projects.

open-assistant-api

Open Assistant API is an open-source, self-hosted AI intelligent assistant API compatible with the official OpenAI interface. It supports integration with more commercial and private models, R2R RAG engine, internet search, custom functions, built-in tools, code interpreter, multimodal support, LLM support, and message streaming output. Users can deploy the service locally and expand existing features. The API provides user isolation based on tokens for SaaS deployment requirements and allows integration of various tools to enhance its capability to connect with the external world.

minimal-llm-ui

This minimalistic UI serves as a simple interface for Ollama models, enabling real-time interaction with Local Language Models (LLMs). Users can chat with models, switch between different LLMs, save conversations, and create parameter-driven prompt templates. The tool is built using React, Next.js, and Tailwind CSS, with seamless integration with LangchainJs and Ollama for efficient model switching and context storage.

multimodal-chat

Yet Another Chatbot is a sophisticated multimodal chat interface powered by advanced AI models and equipped with a variety of tools. This chatbot can search and browse the web in real-time, query Wikipedia for information, perform news and map searches, execute Python code, compose long-form articles mixing text and images, generate, search, and compare images, analyze documents and images, search and download arXiv papers, save conversations as text and audio files, manage checklists, and track personal improvements. It offers tools for web interaction, Wikipedia search, Python scripting, content management, image handling, arXiv integration, conversation generation, file management, personal improvement, and checklist management.

lmstudio-js

LM Studio Client SDK lmstudio-ts is LM Studio's official JavaScript/TypeScript client SDK. It allows you to use LLMs to respond in chats or predict text completions, define functions as tools, and turn LLMs into autonomous agents that run completely locally, load, configure, and unload models from memory, supports both browser and any Node-compatible environments, generate embeddings for text, and more! Why use `lmstudio-js` over `openai` sdk? Open AI's SDK is designed to use with Open AI's proprietary models. As such, it is missing many features that are essential for using LLMs in a local environment, such as managing loading and unloading models from memory, configuring load parameters (context length, gpu offload settings, etc.), speculative decoding, getting information (such as context length, model size, etc.) about a model, and more. In addition, while `openai` sdk is automatically generated, `lmstudio-js` is designed from ground-up to be clean and easy to use for TypeScript/JavaScript developers.

modus

Modus is an open-source, serverless framework for building APIs powered by WebAssembly. It simplifies integrating AI models, data, and business logic with sandboxed execution. Modus extracts metadata, compiles code with optimizations, caches compiled modules, prepares invocation plans, generates API schema, and activates endpoints. Users query the endpoint, and Modus loads compiled code into a sandboxed environment, runs code securely, queries data and AI models, and responds via API. It provides a production-ready scalable endpoint for AI-enabled apps, optimized for sub-second response times. Modus supports programming languages like AssemblyScript and Go, and can be hosted on Hypermode or any platform. Developed by Hypermode as an open-source project, Modus welcomes external contributions.

GraphRAG-Local-UI

GraphRAG Local with Interactive UI is an adaptation of Microsoft's GraphRAG, tailored to support local models and featuring a comprehensive interactive user interface. It allows users to leverage local models for LLM and embeddings, visualize knowledge graphs in 2D or 3D, manage files, settings, and queries, and explore indexing outputs. The tool aims to be cost-effective by eliminating dependency on costly cloud-based models and offers flexible querying options for global, local, and direct chat queries.

For similar tasks

aiortc

aiortc is a Python library for Web Real-Time Communication (WebRTC) and Object Real-Time Communication (ORTC). It provides a simple and readable implementation for programmers to understand and tinker with WebRTC internals. The library allows for exchanging audio, video, and data channels, supports SDP generation/parsing, ICE, DTLS, SRTP, SCTP, and various audio/video codecs. It also enables creating innovative products by leveraging Python ecosystem modules, such as computer vision algorithms with OpenCV. Extensive testing ensures high code quality.

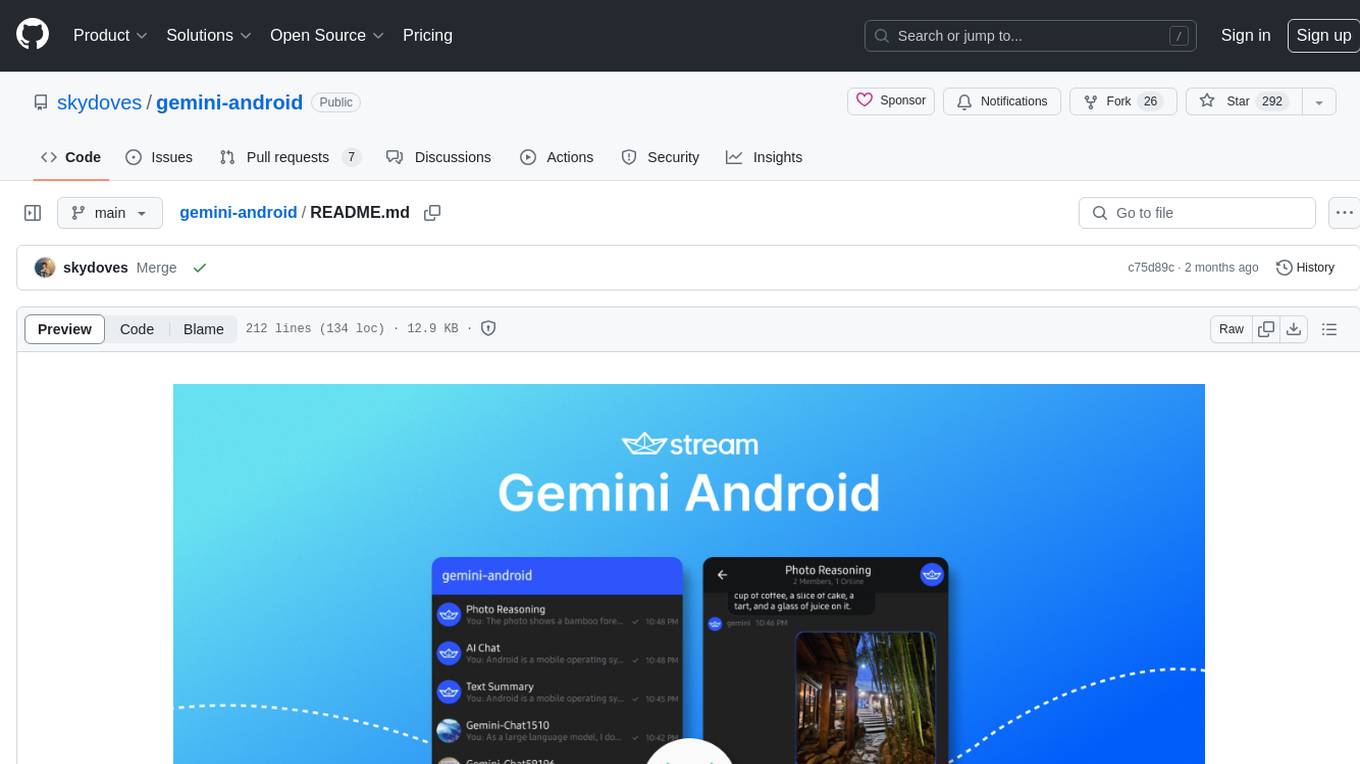

gemini-android

Gemini Android is a repository showcasing Google's Generative AI on Android using Stream Chat SDK for Compose. It demonstrates the Gemini API for Android, implements UI elements with Jetpack Compose, utilizes Android architecture components like Hilt and AppStartup, performs background tasks with Kotlin Coroutines, and integrates chat systems with Stream Chat Compose SDK for real-time event handling. The project also provides technical content, instructions on building the project, tech stack details, architecture overview, modularization strategies, and a contribution guideline. It follows Google's official architecture guidance and offers a real-world example of app architecture implementation.

For similar jobs

aiogram_bot_template

Aiogram bot template is a boilerplate for creating Telegram bots using Aiogram framework. It provides a solid foundation for building robust and scalable bots with a focus on code organization, database integration, and localization.

aiohttp-pydantic

Aiohttp pydantic is an aiohttp view to easily parse and validate requests. You define using function annotations what your methods for handling HTTP verbs expect, and Aiohttp pydantic parses the HTTP request for you, validates the data, and injects the parameters you want. It provides features like query string, request body, URL path, and HTTP headers validation, as well as Open API Specification generation.

Gemini-API

Gemini-API is a reverse-engineered asynchronous Python wrapper for Google Gemini web app (formerly Bard). It provides features like persistent cookies, ImageFx support, extension support, classified outputs, official flavor, and asynchronous operation. The tool allows users to generate contents from text or images, have conversations across multiple turns, retrieve images in response, generate images with ImageFx, save images to local files, use Gemini extensions, check and switch reply candidates, and control log level.

Building-AI-Applications-with-ChatGPT-APIs

This repository is for the book 'Building AI Applications with ChatGPT APIs' published by Packt. It provides code examples and instructions for mastering ChatGPT, Whisper, and DALL-E APIs through building innovative AI projects. Readers will learn to develop AI applications using ChatGPT APIs, integrate them with frameworks like Flask and Django, create AI-generated art with DALL-E APIs, and optimize ChatGPT models through fine-tuning.

gcloud-aio

This repository contains shared codebase for two projects: gcloud-aio and gcloud-rest. gcloud-aio is built for Python 3's asyncio, while gcloud-rest is a threadsafe requests-based implementation. It provides clients for Google Cloud services like Auth, BigQuery, Datastore, KMS, PubSub, Storage, and Task Queue. Users can install the library using pip and refer to the documentation for usage details. Developers can contribute to the project by following the contribution guide.

robocorp

Robocorp is a platform that allows users to create, deploy, and operate Python automations and AI actions. It provides an easy way to extend the capabilities of AI agents, assistants, and copilots with custom actions written in Python. Users can create and deploy tools, skills, loaders, and plugins that securely connect any AI Assistant platform to their data and applications. The Robocorp Action Server makes Python scripts compatible with ChatGPT and LangChain by automatically creating and exposing an API based on function declaration, type hints, and docstrings. It simplifies the process of developing and deploying AI actions, enabling users to interact with AI frameworks effortlessly.

aioconsole

aioconsole is a Python package that provides asynchronous console and interfaces for asyncio. It offers asynchronous equivalents to input, print, exec, and code.interact, an interactive loop running the asynchronous Python console, customization and running of command line interfaces using argparse, stream support to serve interfaces instead of using standard streams, and the apython script to access asyncio code at runtime without modifying the sources. The package requires Python version 3.8 or higher and can be installed from PyPI or GitHub. It allows users to run Python files or modules with a modified asyncio policy, replacing the default event loop with an interactive loop. aioconsole is useful for scenarios where users need to interact with asyncio code in a console environment.

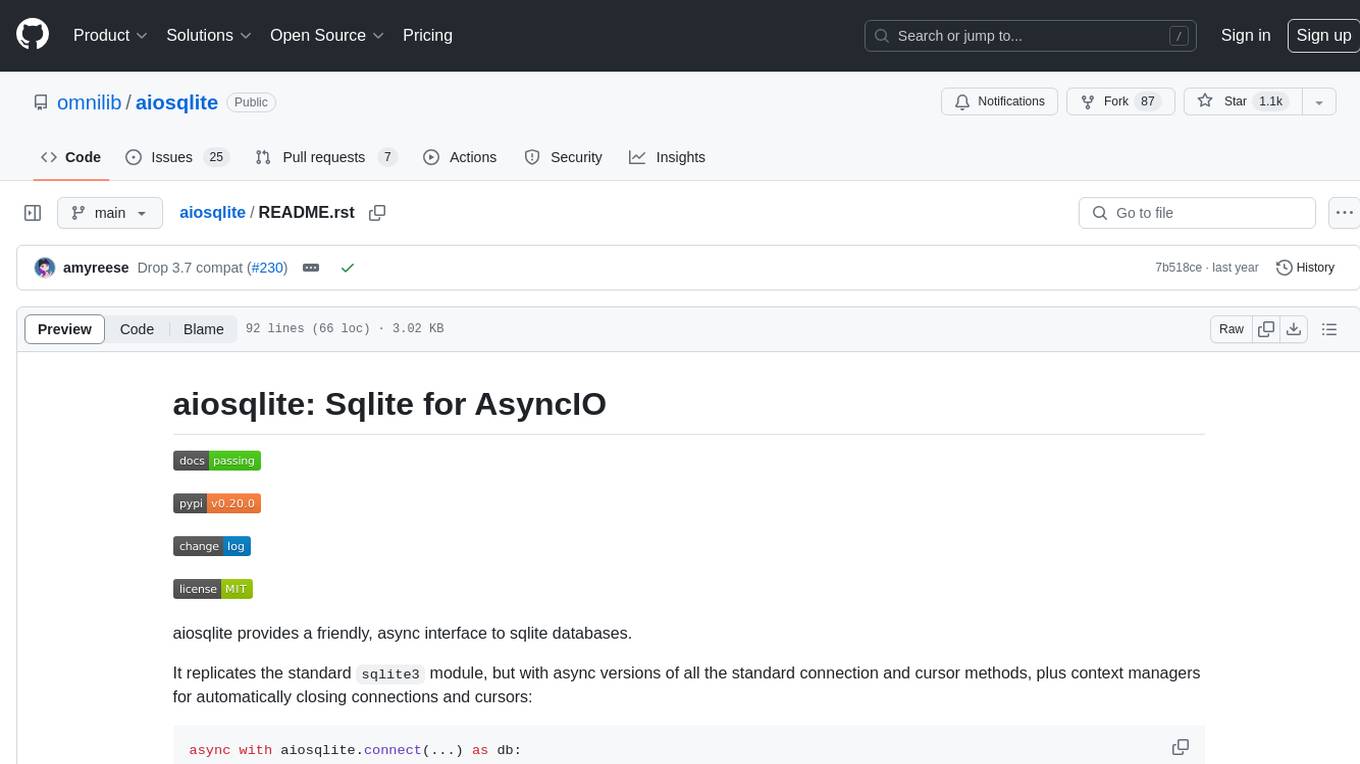

aiosqlite

aiosqlite is a Python library that provides a friendly, async interface to SQLite databases. It replicates the standard sqlite3 module but with async versions of all the standard connection and cursor methods, along with context managers for automatically closing connections and cursors. It allows interaction with SQLite databases on the main AsyncIO event loop without blocking execution of other coroutines while waiting for queries or data fetches. The library also replicates most of the advanced features of sqlite3, such as row factories and total changes tracking.