aioquic

QUIC and HTTP/3 implementation in Python

Stars: 1759

aioquic is a Python library for the QUIC network protocol, featuring a minimal TLS 1.3 implementation, a QUIC stack, and an HTTP/3 stack. It is designed to be embedded into Python client and server libraries supporting QUIC and HTTP/3, with IPv4 and IPv6 support, connection migration, NAT rebinding, logging TLS traffic secrets and QUIC events, server push, WebSocket bootstrapping, and datagram support. The library follows the 'bring your own I/O' pattern for QUIC and HTTP/3 APIs, making it testable and integrable with different concurrency models.

README:

.. image:: https://img.shields.io/pypi/l/aioquic.svg
   :target: https://pypi.python.org/pypi/aioquic
   :alt: License

.. image:: https://img.shields.io/pypi/v/aioquic.svg
   :target: https://pypi.python.org/pypi/aioquic
   :alt: Version

.. image:: https://img.shields.io/pypi/pyversions/aioquic.svg
   :target: https://pypi.python.org/pypi/aioquic
   :alt: Python versions

.. image:: https://github.com/aiortc/aioquic/workflows/tests/badge.svg
   :target: https://github.com/aiortc/aioquic/actions
   :alt: Tests

.. image:: https://img.shields.io/codecov/c/github/aiortc/aioquic.svg
   :target: https://codecov.io/gh/aiortc/aioquic
   :alt: Coverage

.. image:: https://readthedocs.org/projects/aioquic/badge/?version=latest
   :target: https://aioquic.readthedocs.io/
   :alt: Documentation

aioquic is a library for the QUIC network protocol in Python. It features
a minimal TLS 1.3 implementation, a QUIC stack and an HTTP/3 stack.

aioquic is used by Python open-source projects such as `dnspython`_,
`hypercorn`_, `mitmproxy`_ and the `Web Platform Tests`_ cross-browser test
suite. It has also been used extensively in research papers about QUIC.

To learn more about aioquic please `read the documentation`_.

aioquic has been designed to be embedded into Python client and server
libraries wishing to support QUIC and/or HTTP/3. The goal is to provide a
common codebase for Python libraries in the hope of avoiding duplicated effort.

Both the QUIC and the HTTP/3 APIs follow the "bring your own I/O" pattern, leaving actual I/O operations to the API user. This approach has a number of advantages including making the code testable and allowing integration with different concurrency models.
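
To make the "bring your own I/O" idea concrete, here is a minimal sketch of the pattern using a toy newline-delimited echo protocol. The class and method names (``EchoProtocol``, ``receive_data``, ``next_event``, ``data_to_send``) are hypothetical and are not aioquic's API. The sketch only shows how a protocol object can consume bytes, emit events and queue outgoing bytes without ever touching a socket.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class MessageReceived:
    """Event emitted once a complete newline-terminated message has arrived."""

    text: str


class EchoProtocol:
    """Toy sans-I/O protocol (hypothetical, not part of aioquic).

    The object never reads from or writes to a socket. The caller feeds it
    bytes and later collects whatever bytes it wants transmitted.
    """

    def __init__(self) -> None:
        self._inbound = bytearray()
        self._outbound: List[bytes] = []
        self._events: List[MessageReceived] = []

    def receive_data(self, data: bytes) -> None:
        """Feed bytes that the caller obtained from its own transport."""
        self._inbound += data
        while b"\n" in self._inbound:
            line, _, rest = bytes(self._inbound).partition(b"\n")
            self._inbound = bytearray(rest)
            self._events.append(MessageReceived(line.decode()))
            self._outbound.append(b"echo: " + line + b"\n")

    def next_event(self) -> Optional[MessageReceived]:
        """Return the oldest pending event, or None if there is none."""
        return self._events.pop(0) if self._events else None

    def data_to_send(self) -> List[bytes]:
        """Return and clear the bytes the caller should now transmit."""
        pending, self._outbound = self._outbound, []
        return pending


if __name__ == "__main__":
    proto = EchoProtocol()
    # In a real program these chunks would come from a socket, an asyncio
    # transport, or a test fixture; the protocol object does not care which.
    for chunk in (b"hel", b"lo\nwor", b"ld\n"):
        proto.receive_data(chunk)
    event = proto.next_event()
    while event is not None:
        print("event:", event)
        event = proto.next_event()
    for payload in proto.data_to_send():
        print("would send:", payload)
```

Because the protocol object is driven purely by method calls, a unit test can feed it byte strings directly, and the same object could be driven by a blocking socket loop or an event loop without changes.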

A lot of effort has gone into writing an extensive test suite for the
aioquic code to ensure best-in-class code quality, and it is regularly
`tested for interoperability`_ against other `QUIC implementations`_.

- minimal TLS 1.3 implementation conforming with `RFC 8446`_
- QUIC stack conforming with `RFC 9000`_ (QUIC v1) and `RFC 9369`_ (QUIC v2)

  * IPv4 and IPv6 support
  * connection migration and NAT rebinding
  * logging TLS traffic secrets
  * logging QUIC events in QLOG format
  * version negotiation conforming with `RFC 9368`_

- HTTP/3 stack conforming with `RFC 9114`_

  * server push support
  * WebSocket bootstrapping conforming with `RFC 9220`_
  * datagram support conforming with `RFC 9297`_

The easiest way to install aioquic is to run:

.. code:: bash

    pip install aioquic
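
After installing, one quick way to confirm that the package is visible to the current interpreter is to query its distribution metadata. The sketch below uses only the standard library; the helper name ``installed_version`` is an illustrative assumption, not something aioquic provides.

```python
from importlib import metadata
from typing import Optional


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of a distribution, or None if absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


if __name__ == "__main__":
    version = installed_version("aioquic")
    if version is None:
        print("aioquic is not installed in this environment")
    else:
        print(f"aioquic {version} is installed")
```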

If there are no wheels for your system or if you wish to build aioquic
from source you will need the OpenSSL development headers.

Linux
.....

On Debian/Ubuntu run:

.. code-block:: console

   sudo apt install libssl-dev python3-dev

On Alpine Linux run:

.. code-block:: console

   sudo apk add openssl-dev python3-dev bsd-compat-headers libffi-dev

OS X
....

On OS X run:

.. code-block:: console

   brew install openssl

You will need to set some environment variables to link against OpenSSL:

.. code-block:: console

   export CFLAGS=-I$(brew --prefix openssl)/include
   export LDFLAGS=-L$(brew --prefix openssl)/lib

Windows
.......

On Windows the easiest way to install OpenSSL is to use `Chocolatey`_.

.. code-block:: console

   choco install openssl

You will need to set some environment variables to link against OpenSSL:

.. code-block:: console

  $Env:INCLUDE = "C:\Progra~1\OpenSSL\include"
  $Env:LIB = "C:\Progra~1\OpenSSL\lib"

aioquic comes with a number of examples illustrating various QUIC use cases.

You can browse these examples here: https://github.com/aiortc/aioquic/tree/main/examples

aioquic is released under the `BSD license`_.

.. _read the documentation: https://aioquic.readthedocs.io/en/latest/
.. _dnspython: https://github.com/rthalley/dnspython
.. _hypercorn: https://github.com/pgjones/hypercorn
.. _mitmproxy: https://github.com/mitmproxy/mitmproxy
.. _Web Platform Tests: https://github.com/web-platform-tests/wpt
.. _tested for interoperability: https://interop.seemann.io/
.. _QUIC implementations: https://github.com/quicwg/base-drafts/wiki/Implementations
.. _cryptography: https://cryptography.io/
.. _Chocolatey: https://chocolatey.org/
.. _BSD license: https://aioquic.readthedocs.io/en/latest/license.html
.. _RFC 8446: https://datatracker.ietf.org/doc/html/rfc8446
.. _RFC 9000: https://datatracker.ietf.org/doc/html/rfc9000
.. _RFC 9114: https://datatracker.ietf.org/doc/html/rfc9114
.. _RFC 9220: https://datatracker.ietf.org/doc/html/rfc9220
.. _RFC 9297: https://datatracker.ietf.org/doc/html/rfc9297
.. _RFC 9368: https://datatracker.ietf.org/doc/html/rfc9368
.. _RFC 9369: https://datatracker.ietf.org/doc/html/rfc9369

Alternative AI tools for aioquic

Similar Open Source Tools

aioconsole

aioconsole is a Python package that provides asynchronous console and interfaces for asyncio. It offers asynchronous equivalents to input, print, exec, and code.interact, an interactive loop running the asynchronous Python console, customization and running of command line interfaces using argparse, stream support to serve interfaces instead of using standard streams, and the apython script to access asyncio code at runtime without modifying the sources. The package requires Python version 3.8 or higher and can be installed from PyPI or GitHub. It allows users to run Python files or modules with a modified asyncio policy, replacing the default event loop with an interactive loop. aioconsole is useful for scenarios where users need to interact with asyncio code in a console environment.

go-embeddings

This project provides API clients for fetching embeddings from various LLM providers. It includes implementations for OpenAI, Cohere, Google Vertex, VoyageAI, Ollama, and AWS Bedrock. Sample programs demonstrate how to use the client packages. The 'document' package offers text splitters inspired by Langchain framework. Environment variables are used to initialize API clients for each provider. Contributions are welcome.

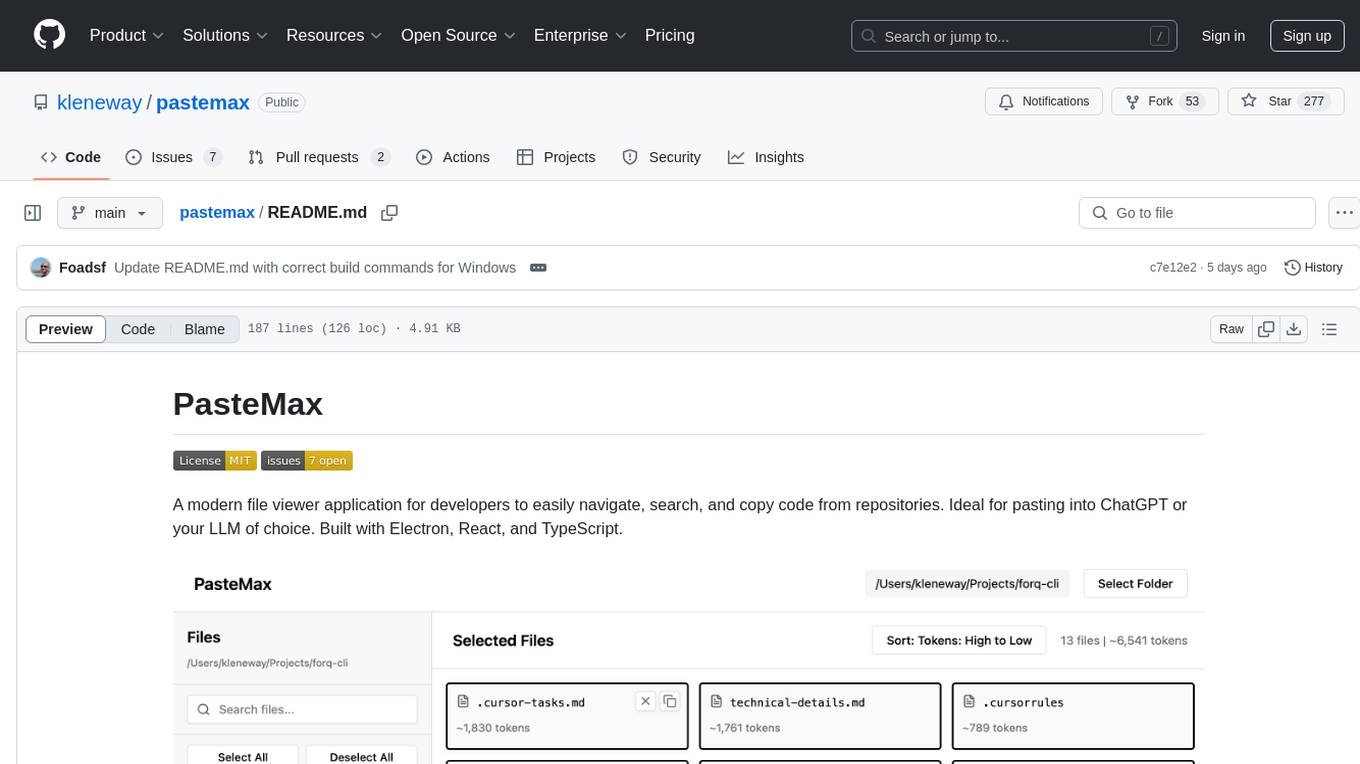

pastemax

PasteMax is a modern file viewer application designed for developers to easily navigate, search, and copy code from repositories. It provides features such as file tree navigation, token counting, search capabilities, selection management, sorting options, dark mode, binary file detection, and smart file exclusion. Built with Electron, React, and TypeScript, PasteMax is ideal for pasting code into ChatGPT or other language models. Users can download the application or build it from source, and customize file exclusions. Troubleshooting steps are provided for common issues, and contributions to the project are welcome under the MIT License.

backend.ai-webui

Backend.AI Web UI is a user-friendly web and app interface designed to make AI accessible for end-users, DevOps, and SysAdmins. It provides features for session management, inference service management, pipeline management, storage management, node management, statistics, configurations, license checking, plugins, help & manuals, kernel management, user management, keypair management, manager settings, proxy mode support, service information, and integration with the Backend.AI Web Server. The tool supports various devices, offers a built-in websocket proxy feature, and allows for versatile usage across different platforms. Users can easily manage resources, run environment-supported apps, access a web-based terminal, use Visual Studio Code editor, manage experiments, set up autoscaling, manage pipelines, handle storage, monitor nodes, view statistics, configure settings, and more.

sandvault

SandVault is a tool that manages a limited user account to sandbox shell commands and AI agents on macOS, providing a lightweight alternative to application isolation using virtual machines. It allows for running Claude Code, OpenAI Codex, Google Gemini, and shell commands safely within a sandboxed environment. SandVault offers features like fast context switching, passwordless account switching, shared workspace access, and clean uninstallation. The tool operates with limited access to the user's computer, ensuring security by restricting access to certain directories and system files.

langstream

LangStream is a tool for natural language processing tasks, providing a CLI for easy installation and usage. Users can try sample applications like Chat Completions and create their own applications using the developer documentation. It supports running on Kubernetes for production-ready deployment, with support for various Kubernetes distributions and external components like Apache Kafka or Apache Pulsar cluster. Users can deploy LangStream locally using minikube and manage the cluster with mini-langstream. Development requirements include Docker, Java 17, Git, Python 3.11+, and PIP, with the option to test local code changes using mini-langstream.

mcpd

mcpd is a tool developed by Mozilla AI to declaratively manage Model Context Protocol (MCP) servers, enabling consistent interface for defining and running tools across different environments. It bridges the gap between local development and enterprise deployment by providing secure secrets management, declarative configuration, and seamless environment promotion. mcpd simplifies the developer experience by offering zero-config tool setup, language-agnostic tooling, version-controlled configuration files, enterprise-ready secrets management, and smooth transition from local to production environments.

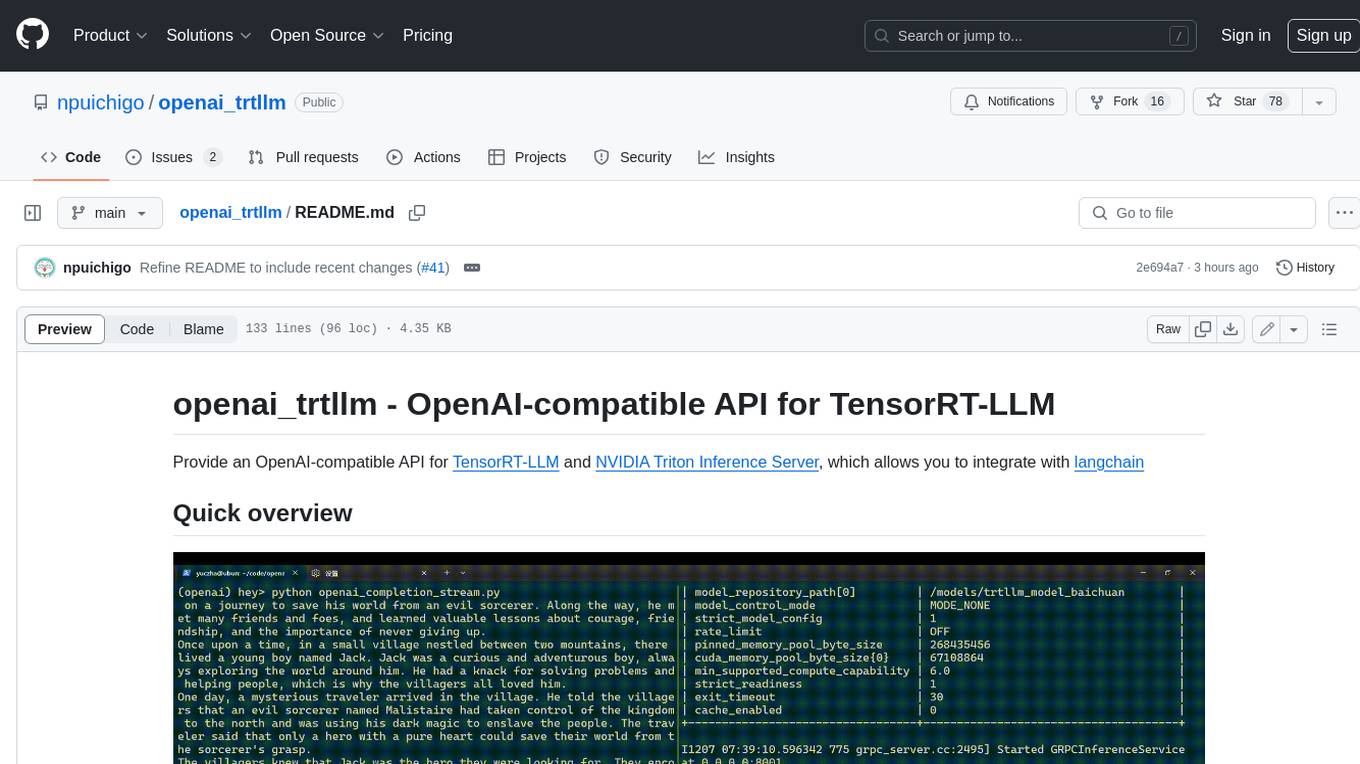

openai_trtllm

OpenAI-compatible API for TensorRT-LLM and NVIDIA Triton Inference Server, which allows you to integrate with langchain

desktop

ComfyUI Desktop is a packaged desktop application that allows users to easily use ComfyUI with bundled features like ComfyUI source code, ComfyUI-Manager, and uv. It automatically installs necessary Python dependencies and updates with stable releases. The app comes with Electron, Chromium binaries, and node modules. Users can store ComfyUI files in a specified location and manage model paths. The tool requires Python 3.12+ and Visual Studio with Desktop C++ workload for Windows. It uses nvm to manage node versions and yarn as the package manager. Users can install ComfyUI and dependencies using comfy-cli, download uv, and build/launch the code. Troubleshooting steps include rebuilding modules and installing missing libraries. The tool supports debugging in VSCode and provides utility scripts for cleanup. Crash reports can be sent to help debug issues, but no personal data is included.

loz

Loz is a command-line tool that integrates AI capabilities with Unix tools, enabling users to execute system commands and utilize Unix pipes. It supports multiple LLM services like OpenAI API, Microsoft Copilot, and Ollama. Users can run Linux commands based on natural language prompts, enhance Git commit formatting, and interact with the tool in safe mode. Loz can process input from other command-line tools through Unix pipes and automatically generate Git commit messages. It provides features like chat history access, configurable LLM settings, and contribution opportunities.

aimeos-symfony

Aimeos Symfony bundle is a professional, full-featured, and ultra-fast e-commerce package for Symfony. It can be easily installed and customized within an existing Symfony application. The bundle provides comprehensive features for setting up an e-commerce platform, including authentication, routing configuration, database setup, and administration interface setup. It offers flexibility for adapting, extending, overwriting, and customizing various aspects to meet specific business needs. The bundle is designed to streamline the development process and provide a robust foundation for building e-commerce applications with Symfony.

swarmauri-sdk

Swarmauri SDK is a repository containing core interfaces, standard ABCs, and standard concrete references of the SwarmaURI Framework. It provides a set of tools and functionalities for developers to work with the SwarmaURI ecosystem. The SDK aims to streamline the development process and enhance the interoperability of applications within the framework. Developers can easily integrate SwarmaURI features into their projects by leveraging the resources available in this repository.

AirCasting

AirCasting is a platform for gathering, visualizing, and sharing environmental data. It aims to provide a central hub for environmental data, making it easier for people to access and use this information to make informed decisions about their environment.

alcless

Alcoholless is a lightweight security sandbox for macOS programs, originally designed for securing Homebrew but can be used for any CLI programs. It allows AI agents to run shell commands with reduced risk of breaking the host OS. The tool creates a separate environment for executing commands, syncing changes back to the host directory upon command exit. It uses utilities like sudo, su, pam_launchd, and rsync, with potential future integration of FSKit for file syncing. The tool also generates a sudo configuration for user-specific sandbox access, enabling users to run commands as the sandbox user without a password.

For similar tasks

resonance

Resonance is a framework designed to facilitate interoperability and messaging between services in your infrastructure and beyond. It provides AI capabilities and takes full advantage of asynchronous PHP, built on top of Swoole. With Resonance, you can: * Chat with Open-Source LLMs: Create prompt controllers to directly answer user's prompts. LLM takes care of determining user's intention, so you can focus on taking appropriate action. * Asynchronous Where it Matters: Respond asynchronously to incoming RPC or WebSocket messages (or both combined) with little overhead. You can set up all the asynchronous features using attributes. No elaborate configuration is needed. * Simple Things Remain Simple: Writing HTTP controllers is similar to how it's done in the synchronous code. Controllers have new exciting features that take advantage of the asynchronous environment. * Consistency is Key: You can keep the same approach to writing software no matter the size of your project. There are no growing central configuration files or service dependencies registries. Every relation between code modules is local to those modules. * Promises in PHP: Resonance provides a partial implementation of Promise/A+ spec to handle various asynchronous tasks. * GraphQL Out of the Box: You can build elaborate GraphQL schemas by using just the PHP attributes. Resonance takes care of reusing SQL queries and optimizing the resources' usage. All fields can be resolved asynchronously.

aiohttp

aiohttp is an async http client/server framework that supports both client and server side of HTTP protocol. It also supports both client and server Web-Sockets out-of-the-box and avoids Callback Hell. aiohttp provides a Web-server with middleware and pluggable routing.

nexent

Nexent is a powerful tool for analyzing and visualizing network traffic data. It provides comprehensive insights into network behavior, helping users to identify patterns, anomalies, and potential security threats. With its user-friendly interface and advanced features, Nexent is suitable for network administrators, cybersecurity professionals, and anyone looking to gain a deeper understanding of their network infrastructure.

For similar jobs

aio-proxy

This script automates setting up TUIC, hysteria and other proxy-related tools in Linux. It features setting domains, getting SSL certification, setting up a simple web page, SmartSNI by Bepass, Chisel Tunnel, Hysteria V2, Tuic, Hiddify Reality Scanner, SSH, Telegram Proxy, Reverse TLS Tunnel, different panels, installing, disabling, and enabling Warp, Sing Box 4-in-1 script, showing ports in use and their corresponding processes, and an Android script to use Chisel tunnel.

aiohttp

aiohttp is an async http client/server framework that supports both client and server side of HTTP protocol. It also supports both client and server Web-Sockets out-of-the-box and avoids Callback Hell. aiohttp provides a Web-server with middleware and pluggable routing.

OpsPilot

OpsPilot is an AI-powered operations navigator developed by the WeOps team. It leverages deep learning and LLM technologies to make operations plans interactive and generalize and reason about local operations knowledge. OpsPilot can be integrated with web applications in the form of a chatbot and primarily provides the following capabilities: 1. Operations capability precipitation: By depositing operations knowledge, operations skills, and troubleshooting actions, when solving problems, it acts as a navigator and guides users to solve operations problems through dialogue. 2. Local knowledge Q&A: By indexing local knowledge and Internet knowledge and combining the capabilities of LLM, it answers users' various operations questions. 3. LLM chat: When the problem is beyond the scope of OpsPilot's ability to handle, it uses LLM's capabilities to solve various long-tail problems.

aiocoap

aiocoap is a Python library that implements the Constrained Application Protocol (CoAP) using native asyncio methods in Python 3. It supports various CoAP standards such as RFC7252, RFC7641, RFC7959, RFC8323, RFC7967, RFC8132, RFC9176, RFC8613, and draft-ietf-core-oscore-groupcomm-17. The library provides features for clients and servers, including multicast support, blockwise transfer, CoAP over TCP, TLS, and WebSockets, No-Response, PATCH/FETCH, OSCORE, and Group OSCORE. It offers an easy-to-use interface for concurrent operations and is suitable for IoT applications.

aiounifi

Aiounifi is a Python library that provides a simple interface for interacting with the Unifi Controller API. It allows users to easily manage their Unifi network devices, such as access points, switches, and gateways, through automated scripts or applications. With Aiounifi, users can retrieve device information, perform configuration changes, monitor network performance, and more, all through a convenient and efficient API wrapper. This library simplifies the process of integrating Unifi network management into custom solutions, making it ideal for network administrators, developers, and enthusiasts looking to automate and streamline their network operations.

AirConnect-Synology

AirConnect-Synology is a minimal Synology package that allows users to use AirPlay to stream to UPnP/Sonos & Chromecast devices that do not natively support AirPlay. It is compatible with DSM 7.0 and DSM 7.1, and provides detailed information on installation, configuration, supported devices, troubleshooting, and more. The package automates the installation and usage of AirConnect on Synology devices, ensuring compatibility with various architectures and firmware versions. Users can customize the configuration using the airconnect.conf file and adjust settings for specific speakers like Sonos, Bose SoundTouch, and Pioneer/Phorus/Play-Fi.

axoned

Axone is a public dPoS layer 1 designed for connecting, sharing, and monetizing resources in the AI stack. It is an open network for collaborative AI workflow management compatible with any data, model, or infrastructure, allowing sharing of data, algorithms, storage, compute, APIs, both on-chain and off-chain. The 'axoned' node of the AXONE network is built on Cosmos SDK & Tendermint consensus, enabling companies & individuals to define on-chain rules, share off-chain resources, and create new applications. Validators secure the network by maintaining uptime and staking $AXONE for rewards. The blockchain supports various platforms and follows Semantic Versioning 2.0.0. A docker image is available for quick start, with documentation on querying networks, creating wallets, starting nodes, and joining networks. Development involves Go and Cosmos SDK, with smart contracts deployed on the AXONE blockchain. The project provides a Makefile for building, installing, linting, and testing. Community involvement is encouraged through Discord, open issues, and pull requests.

paddler

Paddler is an open-source load balancer and reverse proxy designed specifically for optimizing servers running llama.cpp. It overcomes typical load balancing challenges by maintaining a stateful load balancer that is aware of each server's available slots, ensuring efficient request distribution. Paddler also supports dynamic addition or removal of servers, enabling integration with autoscaling tools.