ClawX

ClawX is a desktop app that provides a graphical interface for OpenClaw AI agents. It turns CLI-based AI orchestration into a desktop experience without using the terminal. We’ve moved from clawx.dev to claw-x.com.

Stars: 1527

ClawX bridges the gap between powerful AI agents and everyday users by providing a desktop interface for OpenClaw AI agents. It offers an accessible, beautiful desktop experience for automating workflows, managing AI-powered channels, and scheduling intelligent tasks. ClawX comes pre-configured with best-practice model providers, supports multi-language settings, and allows fine-tuning of advanced configurations via Settings → Advanced → Developer Mode.

README:

The Desktop Interface for OpenClaw AI Agents


ClawX bridges the gap between powerful AI agents and everyday users. Built on top of OpenClaw, it transforms command-line AI orchestration into an accessible, beautiful desktop experience—no terminal required.

Whether you're automating workflows, managing AI-powered channels, or scheduling intelligent tasks, ClawX provides the interface you need to harness AI agents effectively.

ClawX comes pre-configured with best-practice model providers and natively supports Windows as well as multi-language settings. Of course, you can also fine-tune advanced configurations via Settings → Advanced → Developer Mode.

Building AI agents shouldn't require mastering the command line. ClawX was designed with a simple philosophy: powerful technology deserves an interface that respects your time.

| Challenge | ClawX Solution |
|---|---|
| Complex CLI setup | One-click installation with guided setup wizard |
| Configuration files | Visual settings with real-time validation |
| Process management | Automatic gateway lifecycle management |
| Multiple AI providers | Unified provider configuration panel |
| Skill/plugin installation | Built-in skill marketplace and management |

ClawX is built directly upon the official OpenClaw core. Instead of requiring a separate installation, we embed the runtime within the application to provide a seamless "batteries-included" experience.

We are committed to maintaining strict alignment with the upstream OpenClaw project, ensuring that you always have access to the latest capabilities, stability improvements, and ecosystem compatibility provided by the official releases.

Complete the entire setup—from installation to your first AI interaction—through an intuitive graphical interface. No terminal commands, no YAML files, no environment variable hunting.

Communicate with AI agents through a modern chat experience, with support for multiple conversation contexts, message history, and rich content rendering with Markdown.

Configure and monitor multiple AI channels simultaneously. Each channel operates independently, allowing you to run specialized agents for different tasks.

Schedule AI tasks to run automatically. Define triggers, set intervals, and let your AI agents work around the clock without manual intervention.

Extend your AI agents with pre-built skills. Browse, install, and manage skills through the integrated skill panel—no package managers required.

Connect to multiple AI providers (OpenAI, Anthropic, and more) with credentials stored securely in your system's native keychain.

Light mode, dark mode, or system-synchronized themes. ClawX adapts to your preferences automatically.

- Operating System: macOS 11+, Windows 10+, or Linux (Ubuntu 20.04+)

- Memory: 4GB RAM minimum (8GB recommended)

- Storage: 1GB available disk space

Download the latest release for your platform from the Releases page.

    # Clone the repository
    git clone https://github.com/ValueCell-ai/ClawX.git
    cd ClawX

    # Initialize the project
    pnpm run init

    # Start in development mode
    pnpm dev

When you launch ClawX for the first time, the Setup Wizard will guide you through:

- Language & Region – Configure your preferred locale

- AI Provider – Enter your API keys for supported providers

- Skill Bundles – Select pre-configured skills for common use cases

- Verification – Test your configuration before entering the main interface

ClawX employs a dual-process architecture that separates UI concerns from AI runtime operations:

    ┌────────────────────────────────────────────────────────────────┐
    │                       ClawX Desktop App                        │
    │                                                                │
    │  ┌──────────────────────────────────────────────────────────┐  │
    │  │                  Electron Main Process                   │  │
    │  │  • Window & application lifecycle management             │  │
    │  │  • Gateway process supervision                           │  │
    │  │  • System integration (tray, notifications, keychain)    │  │
    │  │  • Auto-update orchestration                             │  │
    │  └──────────────────────────────────────────────────────────┘  │
    │                                │                               │
    │                                │ IPC                           │
    │                                ▼                               │
    │  ┌──────────────────────────────────────────────────────────┐  │
    │  │                  React Renderer Process                  │  │
    │  │  • Modern component-based UI (React 19)                  │  │
    │  │  • State management with Zustand                         │  │
    │  │  • Real-time WebSocket communication                     │  │
    │  │  • Rich Markdown rendering                               │  │
    │  └──────────────────────────────────────────────────────────┘  │
    └────────────────────────────────┬───────────────────────────────┘
                                     │
                                     │ WebSocket (JSON-RPC)
                                     ▼
    ┌────────────────────────────────────────────────────────────────┐
    │                        OpenClaw Gateway                        │
    │                                                                │
    │  • AI agent runtime and orchestration                          │
    │  • Message channel management                                  │
    │  • Skill/plugin execution environment                          │
    │  • Provider abstraction layer                                  │
    └────────────────────────────────────────────────────────────────┘
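The `WebSocket (JSON-RPC)` link between the desktop app and the gateway can be pictured with a small sketch. It assumes the JSON-RPC 2.0 envelope (the README does not state a protocol version), and the method name `chat.send`, its parameters, and all helper names are hypothetical, not the gateway's documented API.

```typescript
// Sketch of JSON-RPC 2.0 envelopes. Method names and params are hypothetical.
interface JsonRpcRequest {
  jsonrpc: "2.0";
  id: number;
  method: string;
  params?: unknown;
}

interface JsonRpcResponse {
  jsonrpc: "2.0";
  id: number;
  result?: unknown;
  error?: { code: number; message: string };
}

let nextId = 1;

// Build a request with a fresh numeric id so the reply can be matched later.
function buildRequest(method: string, params?: unknown): JsonRpcRequest {
  return { jsonrpc: "2.0", id: nextId++, method, params };
}

// Callbacks waiting for a response, keyed by request id.
const pending = new Map<number, (res: JsonRpcResponse) => void>();

// Serialize a request and remember who is waiting for the answer.
function send(
  req: JsonRpcRequest,
  onReply: (res: JsonRpcResponse) => void,
  transmit: (raw: string) => void, // e.g. (raw) => socket.send(raw)
): void {
  pending.set(req.id, onReply);
  transmit(JSON.stringify(req));
}

// Route an incoming frame to the matching callback; returns false if unmatched.
function handleFrame(raw: string): boolean {
  const res = JSON.parse(raw) as JsonRpcResponse;
  const cb = pending.get(res.id);
  if (!cb) return false;
  pending.delete(res.id);
  cb(res);
  return true;
}
```

Passing `transmit` as a parameter keeps the sketch independent of any particular WebSocket client. In a renderer it would typically wrap the socket's `send` method, with `handleFrame` called from the socket's message handler.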

- Process Isolation: The AI runtime operates in a separate process, ensuring UI responsiveness even during heavy computation

- Graceful Recovery: Built-in reconnection logic with exponential backoff handles transient failures automatically

- Secure Storage: API keys and sensitive data leverage the operating system's native secure storage mechanisms

- Hot Reload: Development mode supports instant UI updates without restarting the gateway
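The "Graceful Recovery" point above mentions reconnection with exponential backoff. A minimal sketch of how such delays could be computed and applied follows; the base delay, cap, attempt limit, and function names are illustrative assumptions, not the values ClawX actually uses.

```typescript
// Hypothetical helper: delay before reconnect attempt `attempt` (0-based).
// The delay doubles on each attempt and is capped at maxMs.
function backoffDelayMs(attempt: number, baseMs = 1000, maxMs = 30000): number {
  return Math.min(maxMs, baseMs * 2 ** attempt);
}

// Retry an async connect function until it succeeds or attempts run out.
// `sleep` is injectable so the loop can be exercised without real waiting.
async function connectWithBackoff<T>(
  connect: () => Promise<T>,
  maxAttempts = 5,
  sleep: (ms: number) => Promise<void> = (ms) =>
    new Promise((resolve) => setTimeout(resolve, ms)),
): Promise<T> {
  let lastError: unknown;
  for (let attempt = 0; attempt < maxAttempts; attempt++) {
    try {
      return await connect();
    } catch (err) {
      lastError = err;
      // Wait before the next attempt, but not after the final failure.
      if (attempt < maxAttempts - 1) await sleep(backoffDelayMs(attempt));
    }
  }
  throw lastError;
}
```

With the defaults, the waits between attempts are 1 s, 2 s, 4 s, and 8 s. Production implementations often add random jitter so that many clients do not retry in lockstep; that is omitted here for brevity.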

Configure a general-purpose AI agent that can answer questions, draft emails, summarize documents, and help with everyday tasks—all from a clean desktop interface.

Set up scheduled agents to monitor news feeds, track prices, or watch for specific events. Results are delivered to your preferred notification channel.

Integrate AI into your development workflow. Use agents to review code, generate documentation, or automate repetitive coding tasks.

Chain multiple skills together to create sophisticated automation pipelines. Process data, transform content, and trigger actions—all orchestrated visually.

- Node.js: 22+ (LTS recommended)

- Package Manager: pnpm 9+ (recommended) or npm

Project structure:

    ClawX/
    ├── electron/           # Electron Main Process
    │   ├── main/           # Application entry, window management
    │   ├── gateway/        # OpenClaw Gateway process manager
    │   ├── preload/        # Secure IPC bridge scripts
    │   └── utils/          # Utilities (storage, auth, paths)
    ├── src/                # React Renderer Process
    │   ├── components/     # Reusable UI components
    │   │   ├── ui/         # Base components (shadcn/ui)
    │   │   ├── layout/     # Layout components (sidebar, header)
    │   │   └── common/     # Shared components
    │   ├── pages/          # Application pages
    │   │   ├── Setup/      # Initial setup wizard
    │   │   ├── Dashboard/  # Home dashboard
    │   │   ├── Chat/       # AI chat interface
    │   │   ├── Channels/   # Channel management
    │   │   ├── Skills/     # Skill browser & manager
    │   │   ├── Cron/       # Scheduled tasks
    │   │   └── Settings/   # Configuration panels
    │   ├── stores/         # Zustand state stores
    │   ├── lib/            # Frontend utilities
    │   └── types/          # TypeScript type definitions
    ├── resources/          # Static assets (icons, images)
    ├── scripts/            # Build & utility scripts
    └── tests/              # Test suites

Available commands:

    # Development
    pnpm dev              # Start with hot reload
    pnpm dev:electron     # Launch Electron directly

    # Quality
    pnpm lint             # Run ESLint
    pnpm lint:fix         # Auto-fix issues
    pnpm typecheck        # TypeScript validation

    # Testing
    pnpm test             # Run unit tests
    pnpm test:watch       # Watch mode
    pnpm test:coverage    # Generate coverage report
    pnpm test:e2e         # Run Playwright E2E tests

    # Build & Package
    pnpm build            # Full production build
    pnpm package          # Package for current platform
    pnpm package:mac      # Package for macOS
    pnpm package:win      # Package for Windows
    pnpm package:linux    # Package for Linux

| Layer | Technology |
|---|---|
| Runtime | Electron 40+ |
| UI Framework | React 19 + TypeScript |
| Styling | Tailwind CSS + shadcn/ui |
| State | Zustand |
| Build | Vite + electron-builder |
| Testing | Vitest + Playwright |
| Animation | Framer Motion |
| Icons | Lucide React |

We welcome contributions from the community! Whether it's bug fixes, new features, documentation improvements, or translations—every contribution helps make ClawX better.

- Fork the repository

- Create a feature branch (`git checkout -b feature/amazing-feature`)

- Commit your changes with clear messages

- Push to your branch

- Open a Pull Request

- Follow the existing code style (ESLint + Prettier)

- Write tests for new functionality

- Update documentation as needed

- Keep commits atomic and descriptive

ClawX is built on the shoulders of excellent open-source projects:

- OpenClaw – The AI agent runtime

- Electron – Cross-platform desktop framework

- React – UI component library

- shadcn/ui – Beautifully designed components

- Zustand – Lightweight state management

Join our community to connect with other users, get support, and share your experiences.

Community channels: Enterprise WeChat, Feishu Group, and Discord.

ClawX is released under the MIT License. You're free to use, modify, and distribute this software.

Built with ❤️ by the ValueCell Team


Alternative AI tools for ClawX

Similar Open Source Tools


CoWork-OS

CoWork-OS is an open-source AI assistant platform designed for security-hardened, local-first runtime. It offers 30+ LLM providers, 14 messaging channels, and over 100 built-in skills. Users can create tasks, choose execution modes, monitor real-time execution, and approve actions. The platform emphasizes security, extensibility, and privacy by keeping data and API keys on the user's machine. CoWork-OS is suitable for individuals and teams looking for an independent AI assistant solution with advanced features and customizable workflows.

QodeAssist

QodeAssist is an AI-powered coding assistant plugin for Qt Creator, offering intelligent code completion and suggestions for C++ and QML. It leverages large language models like Ollama to enhance coding productivity with context-aware AI assistance directly in the Qt development environment. The plugin supports multiple LLM providers, extensive model-specific templates, and easy configuration for enhanced coding experience.

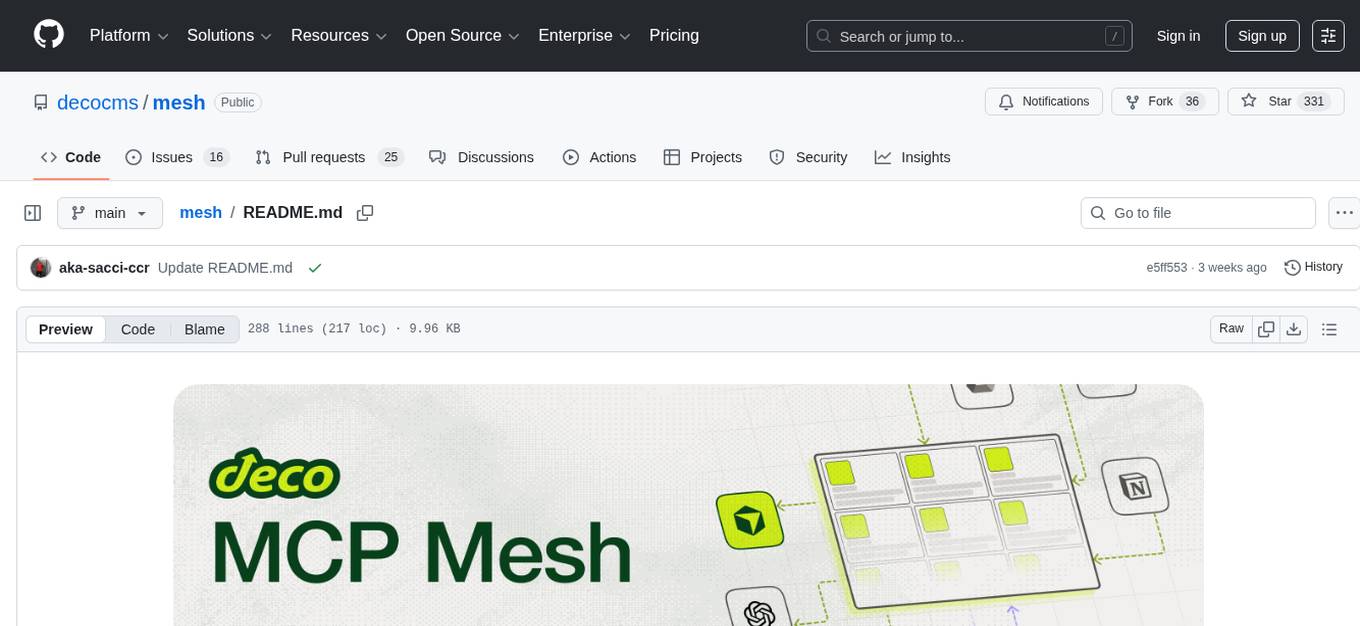

mesh

MCP Mesh is an open-source control plane for MCP traffic that provides a unified layer for authentication, routing, and observability. It replaces multiple integrations with a single production endpoint, simplifying configuration management. Built for multi-tenant organizations, it offers workspace/project scoping for policies, credentials, and logs. With core capabilities like MeshContext, AccessControl, and OpenTelemetry, it ensures fine-grained RBAC, full tracing, and metrics for tools and workflows. Users can define tools with input/output validation, access control checks, audit logging, and OpenTelemetry traces. The project structure includes apps for full-stack MCP Mesh, encryption, observability, and more, with deployment options ranging from Docker to Kubernetes. The tech stack includes Bun/Node runtime, TypeScript, Hono API, React, Kysely ORM, and Better Auth for OAuth and API keys.

Shannon

Shannon is a battle-tested infrastructure for AI agents that solves problems at scale, such as runaway costs, non-deterministic failures, and security concerns. It offers features like intelligent caching, deterministic replay of workflows, time-travel debugging, WASI sandboxing, and hot-swapping between LLM providers. Shannon allows users to ship faster with zero configuration multi-agent setup, multiple AI patterns, time-travel debugging, and hot configuration changes. It is production-ready with features like WASI sandbox, token budget control, policy engine (OPA), and multi-tenancy. Shannon helps scale without breaking by reducing costs, being provider agnostic, observable by default, and designed for horizontal scaling with Temporal workflow orchestration.

helix

HelixML is a private GenAI platform that allows users to deploy the best of open AI in their own data center or VPC while retaining complete data security and control. It includes support for fine-tuning models with drag-and-drop functionality. HelixML brings the best of open source AI to businesses in an ergonomic and scalable way, optimizing the tradeoff between GPU memory and latency.

open-computer-use

Open Computer Use is an open-source platform that enables AI agents to control computers through browser automation, terminal access, and desktop interaction. It is designed for developers to create autonomous AI workflows. The platform allows agents to browse the web, run terminal commands, control desktop applications, orchestrate multi-agents, stream execution, and is 100% open-source and self-hostable. It provides capabilities similar to Anthropic's Claude Computer Use but is fully open-source and extensible.

groundup-toolkit

GroundUp Toolkit is an open-source automation toolkit designed for venture capital teams to streamline deal flow, meeting management, CRM updates, and team communication through an AI assistant connected via WhatsApp. It offers various skills such as meeting reminders, meeting bot, deal automation, deck analyzer, VC automation, ping teammate, Google Workspace operations, LinkedIn profile research, keep on radar feature, and deal logger. Additionally, it includes operational scripts for health check, WhatsApp watchdog, and Shabbat-aware scheduler to ensure smooth automation processes. The toolkit's architecture involves WhatsApp, OpenClaw, and various skills and scripts for seamless automation. It requires Ubuntu 22.04+ server, Node.js 18+, and Python 3.10+ for installation and operation.

forge-orchestrator

Forge Orchestrator is a Rust CLI tool designed to coordinate and manage multiple AI tools seamlessly. It acts as a senior tech lead, preventing conflicts, capturing knowledge, and ensuring work aligns with specifications. With features like file locking, knowledge capture, and unified state management, Forge enhances collaboration and efficiency among AI tools. The tool offers a pluggable brain for intelligent decision-making and includes a Model Context Protocol server for real-time integration with AI tools. Forge is not a replacement for AI tools but a facilitator for making them work together effectively.

aiohomematic

AIO Homematic (hahomematic) is a lightweight Python 3 library for controlling and monitoring HomeMatic and HomematicIP devices, with support for third-party devices/gateways. It automatically creates entities for device parameters, offers custom entity classes for complex behavior, and includes features like caching paramsets for faster restarts. Designed to integrate with Home Assistant, it requires specific firmware versions for HomematicIP devices. The public API is defined in modules like central, client, model, exceptions, and const, with example usage provided. Useful links include changelog, data point definitions, troubleshooting, and developer resources for architecture, data flow, model extension, and Home Assistant lifecycle.

giztoy

Giztoy is a multi-language framework designed for building AI toys and intelligent applications. It provides a unified abstraction layer that spans from resource-constrained embedded systems to powerful cloud services. With features like native support for ESP32 and other MCUs, cross-platform app development, a unified build system with Bazel, an agent framework for AI agents, audio processing capabilities, support for various Large Language Models, real-time models with WebSocket streaming, secure transport protocols, and multi-language implementations in Go, Rust, Zig, and C/C++, Giztoy serves as a versatile tool for developing AI-powered applications across different platforms and devices.

osmedeus

Osmedeus is a security-focused declarative orchestration engine that simplifies complex workflow automation into auditable YAML definitions. It provides powerful automation capabilities without compromising infrastructure integrity and safety. With features like declarative YAML workflows, multiple runners, event-driven triggers, template engine, utility functions, REST API server, distributed execution, notifications, cloud storage, AI integration, SAST integration, language detection, and preset installations, Osmedeus offers a comprehensive solution for security automation tasks.

gpt-all-star

GPT-All-Star is an AI-powered code generation tool designed for scratch development of web applications with team collaboration of autonomous AI agents. The primary focus of this research project is to explore the potential of autonomous AI agents in software development. Users can organize their team, choose leaders for each step, create action plans, and work together to complete tasks. The tool supports various endpoints like OpenAI, Azure, and Anthropic, and provides functionalities for project management, code generation, and team collaboration.

huf

HUF is an AI-native engine designed to centralize intelligence and execution into a single engine, enabling AI to operate inside real business systems. It offers multi-provider AI connectivity, intelligent tools, knowledge grounding, event-driven execution, visual workflow builder, full auditability, and cost control. HUF can be used as AI infrastructure for products, internal intelligence platform, automation & orchestration engine, embedded AI layer for SaaS, and enterprise AI control plane. Core capabilities include agent system, knowledge management, trigger system, visual flow builder, and observability. The tech stack includes Frappe Framework, Python 3.10+, LiteLLM, SQLite FTS5, React 18, TypeScript, Tailwind CSS, and MariaDB.

mimiclaw

MimiClaw is a pocket AI assistant that runs on a $5 chip, specifically designed for the ESP32-S3 board. It operates without Linux or Node.js, using pure C language. Users can interact with MimiClaw through Telegram, enabling it to handle various tasks and learn from local memory. The tool is energy-efficient, running on USB power 24/7. With MimiClaw, users can have a personal AI assistant on a chip the size of a thumb, making it convenient and accessible for everyday use.

solo-server

Solo Server is a lightweight server designed for managing hardware-aware inference. It provides seamless setup through a simple CLI and HTTP servers, an open model registry for pulling models from platforms like Ollama and Hugging Face, cross-platform compatibility for effortless deployment of AI models on hardware, and a configurable framework that auto-detects hardware components (CPU, GPU, RAM) and sets optimal configurations.

For similar tasks

autogen

AutoGen is a framework that enables the development of LLM applications using multiple agents that can converse with each other to solve tasks. AutoGen agents are customizable, conversable, and seamlessly allow human participation. They can operate in various modes that employ combinations of LLMs, human inputs, and tools.

tracecat

Tracecat is an open-source automation platform for security teams. It's designed to be simple but powerful, with a focus on AI features and a practitioner-obsessed UI/UX. Tracecat can be used to automate a variety of tasks, including phishing email investigation, evidence collection, and remediation plan generation.

ciso-assistant-community

CISO Assistant is a tool that helps organizations manage their cybersecurity posture and compliance. It provides a centralized platform for managing security controls, threats, and risks. CISO Assistant also includes a library of pre-built frameworks and tools to help organizations quickly and easily implement best practices.

ck

Collective Mind (CM) is a collection of portable, extensible, technology-agnostic and ready-to-use automation recipes with a human-friendly interface (aka CM scripts) to unify and automate all the manual steps required to compose, run, benchmark and optimize complex ML/AI applications on any platform with any software and hardware. CM scripts require Python 3.7+ with minimal dependencies and are continuously extended by the community and MLCommons members to run natively on Ubuntu, macOS, Windows, RHEL, Debian, Amazon Linux and any other operating system, in a cloud or inside automatically generated containers, while keeping backward compatibility.

CM scripts were originally developed based on the following requirements from the MLCommons members, to help them automatically compose and optimize complex MLPerf benchmarks, applications and systems across diverse and continuously changing models, data sets, software and hardware from Nvidia, Intel, AMD, Google, Qualcomm, Amazon and other vendors:

- must work out of the box with the default options and without the need to edit paths, environment variables and configuration files;

- must be non-intrusive, easy to debug and must reuse existing user scripts and automation tools (such as cmake, make, ML workflows, python poetry and containers) rather than substituting them;

- must have a very simple and human-friendly command line with a Python API and minimal dependencies;

- must require minimal or zero learning curve by using plain Python, native scripts, environment variables and simple JSON/YAML descriptions instead of inventing new workflow languages;

- must have the same interface to run all automations natively, in a cloud or inside containers.

CM scripts were successfully validated by MLCommons to modularize MLPerf inference benchmarks and help the community automate more than 95% of all performance and power submissions in the v3.1 round across more than 120 system configurations (models, frameworks, hardware) while reducing development and maintenance costs.

zenml

ZenML is an extensible, open-source MLOps framework for creating portable, production-ready machine learning pipelines. By decoupling infrastructure from code, ZenML enables developers across your organization to collaborate more effectively as they develop to production.

clearml

ClearML is a suite of tools designed to streamline the machine learning workflow. It includes an experiment manager, MLOps/LLMOps, data management, and model serving capabilities. ClearML is open-source and offers a free tier hosting option. It supports various ML/DL frameworks and integrates with Jupyter Notebook and PyCharm. ClearML provides extensive logging capabilities, including source control info, execution environment, hyper-parameters, and experiment outputs. It also offers automation features, such as remote job execution and pipeline creation. ClearML is designed to be easy to integrate, requiring only two lines of code to add to existing scripts. It aims to improve collaboration, visibility, and data transparency within ML teams.

devchat

DevChat is an open-source workflow engine that enables developers to create intelligent, automated workflows for engaging with users through a chat panel within their IDEs. It combines script writing flexibility, latest AI models, and an intuitive chat GUI to enhance user experience and productivity. DevChat simplifies the integration of AI in software development, unlocking new possibilities for developers.

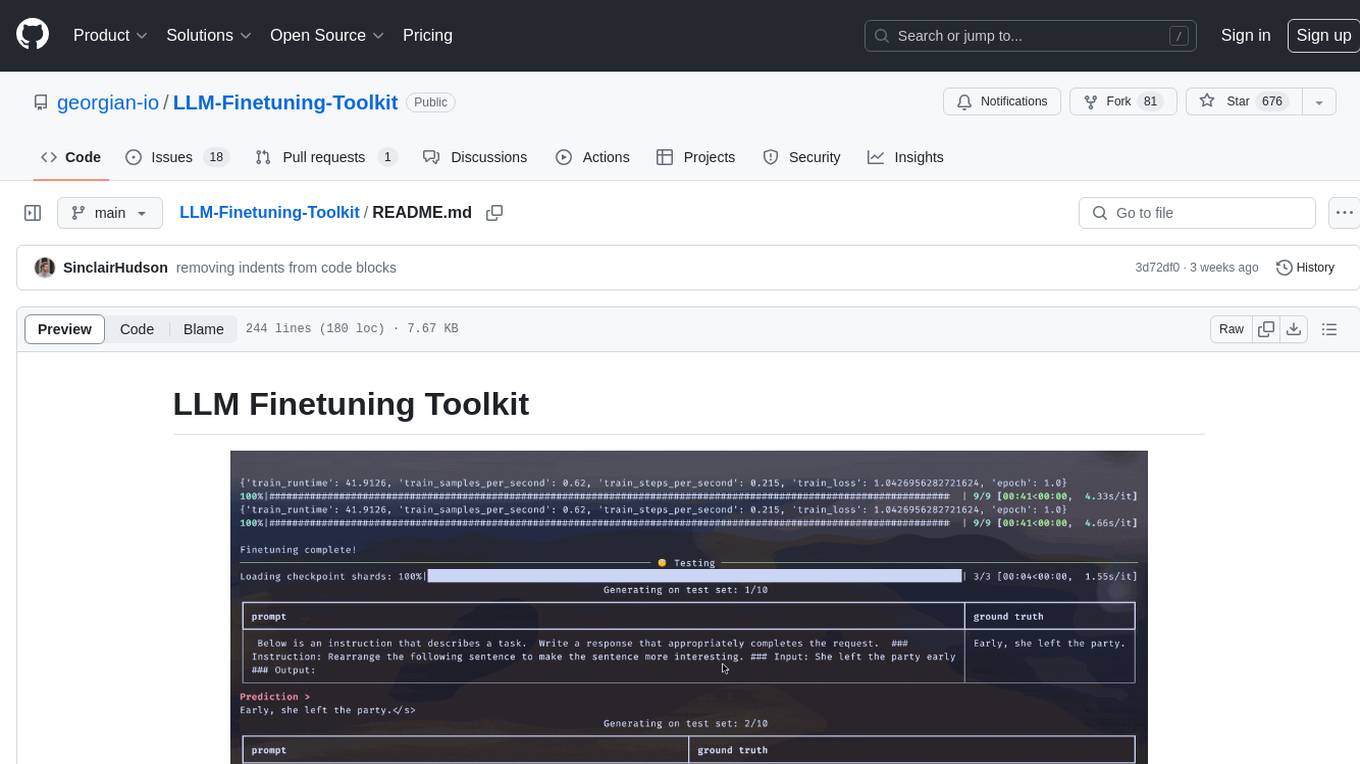

LLM-Finetuning-Toolkit

LLM Finetuning toolkit is a config-based CLI tool for launching a series of LLM fine-tuning experiments on your data and gathering their results. It allows users to control all elements of a typical experimentation pipeline - prompts, open-source LLMs, optimization strategy, and LLM testing - through a single YAML configuration file. The toolkit supports basic, intermediate, and advanced usage scenarios, enabling users to run custom experiments, conduct ablation studies, and automate fine-tuning workflows. It provides features for data ingestion, model definition, training, inference, quality assurance, and artifact outputs, making it a comprehensive tool for fine-tuning large language models.

For similar jobs

promptflow

**Prompt flow** is a suite of development tools designed to streamline the end-to-end development cycle of LLM-based AI applications, from ideation, prototyping, testing, evaluation to production deployment and monitoring. It makes prompt engineering much easier and enables you to build LLM apps with production quality.

deepeval

DeepEval is a simple-to-use, open-source LLM evaluation framework specialized for unit testing LLM outputs. It incorporates various metrics such as G-Eval, hallucination, answer relevancy, RAGAS, etc., and runs locally on your machine for evaluation. It provides a wide range of ready-to-use evaluation metrics, allows for creating custom metrics, integrates with any CI/CD environment, and enables benchmarking LLMs on popular benchmarks. DeepEval is designed for evaluating RAG and fine-tuning applications, helping users optimize hyperparameters, prevent prompt drifting, and transition from OpenAI to hosting their own Llama2 with confidence.

MegaDetector

MegaDetector is an AI model that identifies animals, people, and vehicles in camera trap images (which also makes it useful for eliminating blank images). This model is trained on several million images from a variety of ecosystems. MegaDetector is just one of many tools that aim to make conservation biologists more efficient with AI. If you want to learn about other ways to use AI to accelerate camera trap workflows, check out our survey of the field, affectionately titled "Everything I know about machine learning and camera traps".

leapfrogai

LeapfrogAI is a self-hosted AI platform designed to be deployed in air-gapped resource-constrained environments. It brings sophisticated AI solutions to these environments by hosting all the necessary components of an AI stack, including vector databases, model backends, API, and UI. LeapfrogAI's API closely matches that of OpenAI, allowing tools built for OpenAI/ChatGPT to function seamlessly with a LeapfrogAI backend. It provides several backends for various use cases, including llama-cpp-python, whisper, text-embeddings, and vllm. LeapfrogAI leverages Chainguard's apko to harden base python images, ensuring the latest supported Python versions are used by the other components of the stack. The LeapfrogAI SDK provides a standard set of protobuffs and python utilities for implementing backends and gRPC. LeapfrogAI offers UI options for common use-cases like chat, summarization, and transcription. It can be deployed and run locally via UDS and Kubernetes, built out using Zarf packages. LeapfrogAI is supported by a community of users and contributors, including Defense Unicorns, Beast Code, Chainguard, Exovera, Hypergiant, Pulze, SOSi, United States Navy, United States Air Force, and United States Space Force.

llava-docker

This Docker image for LLaVA (Large Language and Vision Assistant) provides a convenient way to run LLaVA locally or on RunPod. LLaVA is a powerful AI tool that combines natural language processing and computer vision capabilities. With this Docker image, you can easily access LLaVA's functionalities for various tasks, including image captioning, visual question answering, text summarization, and more. The image comes pre-installed with LLaVA v1.2.0, Torch 2.1.2, xformers 0.0.23.post1, and other necessary dependencies. You can customize the model used by setting the MODEL environment variable. The image also includes a Jupyter Lab environment for interactive development and exploration. Overall, this Docker image offers a comprehensive and user-friendly platform for leveraging LLaVA's capabilities.

carrot

The 'carrot' repository on GitHub provides a list of free and user-friendly ChatGPT mirror sites for easy access. The repository includes sponsored sites offering various GPT models and services. Users can find and share sites, report errors, and access stable and recommended sites for ChatGPT usage. The repository also includes a detailed list of ChatGPT sites, their features, and accessibility options, making it a valuable resource for ChatGPT users seeking free and unlimited GPT services.

TrustLLM

TrustLLM is a comprehensive study of trustworthiness in LLMs, including principles for different dimensions of trustworthiness, established benchmark, evaluation, and analysis of trustworthiness for mainstream LLMs, and discussion of open challenges and future directions. Specifically, we first propose a set of principles for trustworthy LLMs that span eight different dimensions. Based on these principles, we further establish a benchmark across six dimensions including truthfulness, safety, fairness, robustness, privacy, and machine ethics. We then present a study evaluating 16 mainstream LLMs in TrustLLM, consisting of over 30 datasets. The document explains how to use the trustllm python package to help you assess the performance of your LLM in trustworthiness more quickly. For more details about TrustLLM, please refer to project website.

AI-YinMei

AI-YinMei is a development tool for AI virtual anchors (Vtubers), in a version built for NVIDIA GPUs. It supports knowledge-base chat through fastgpt, using a complete LLM stack of [fastgpt] + [one-api] + [Xinference]. It can reply to bilibili live-stream bullet comments and greet viewers as they enter the stream. Speech synthesis is available through Microsoft edge-tts, Bert-VITS2, and GPT-SoVITS. It supports expression control through Vtuber Studio, image generation with stable-diffusion-webui output to the OBS live room, and NSFW detection for generated images through public-NSFW-y-distinguish. Web and image search run through duckduckgo (requires a proxy) or Baidu image search (no proxy needed). Other features include an AI reply chat box [html plug-in], AI singing through Auto-Convert-Music, a playlist [html plug-in], dancing, expression video playback, head-patting and gift-smashing actions, automatic dancing when singing starts, a looping sway animation during chat and singing, switching between multiple scenes and background music, and automatic day and night scene changes. Singing and drawing requests can be open-ended, with the AI deciding the content.