aws-mcp

Talk with your AWS using Claude. Model Context Protocol (MCP) server for AWS. A better alternative to Amazon Q.

Stars: 118

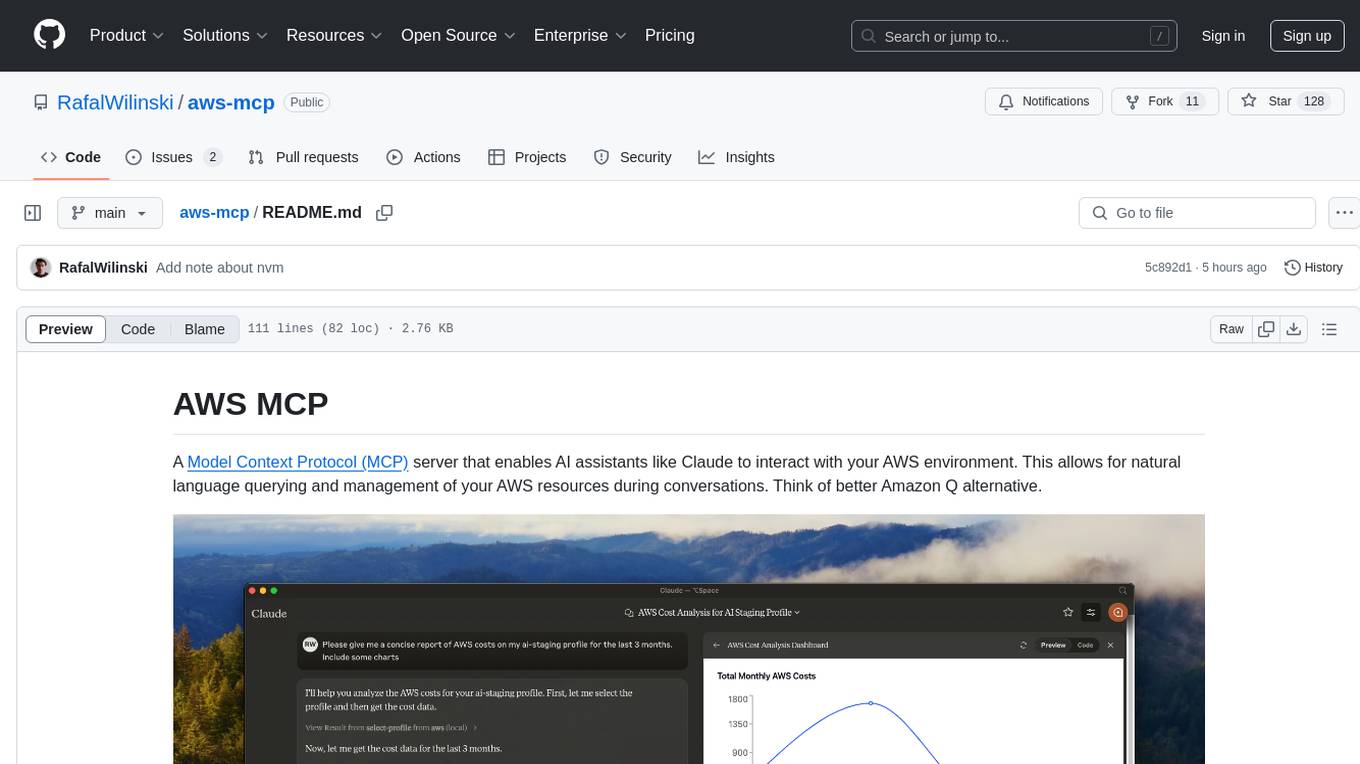

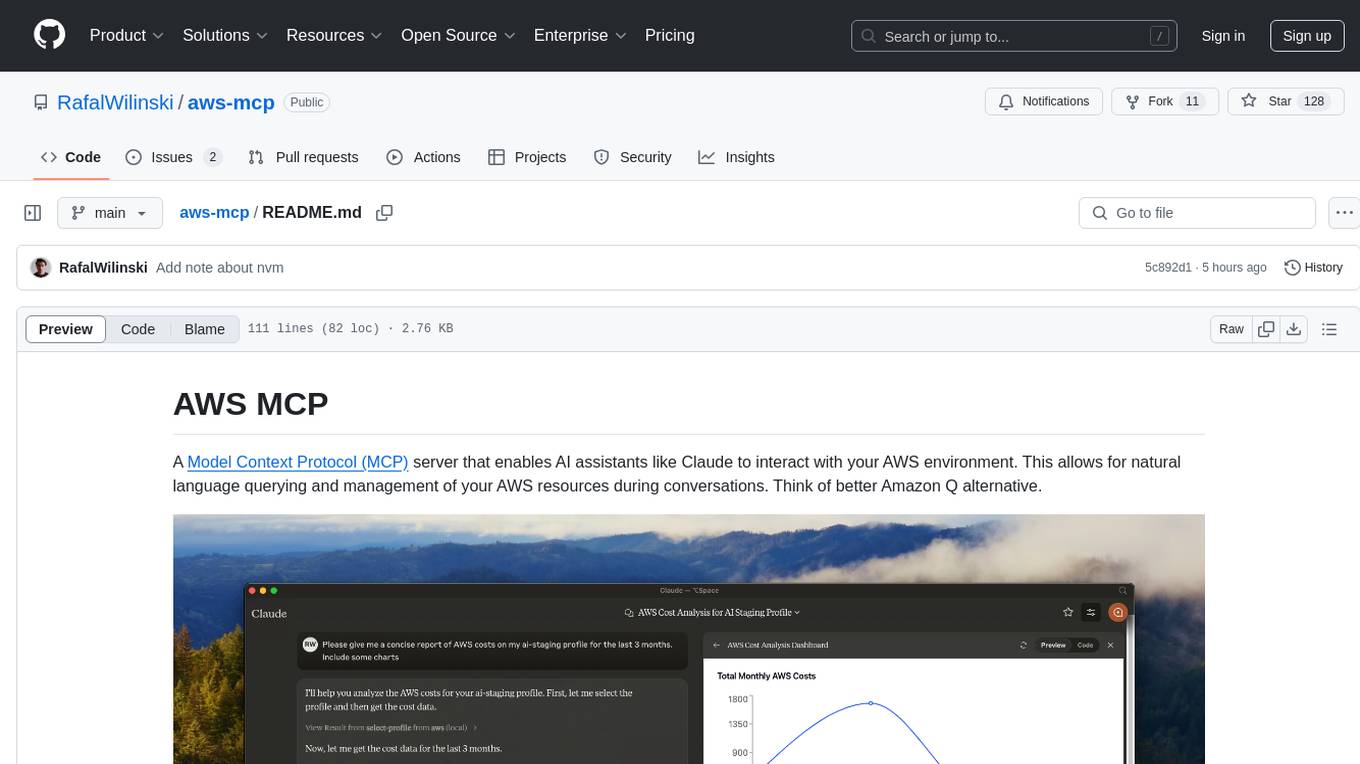

AWS MCP is a Model Context Protocol (MCP) server that facilitates interactions between AI assistants and AWS environments. It allows for natural language querying and management of AWS resources during conversations. The server supports multiple AWS profiles, SSO authentication, multi-region operations, and secure credential handling. Users can locally execute commands with their AWS credentials, enhancing the conversational experience with AWS resources.

README:

A Model Context Protocol (MCP) server that enables AI assistants like Claude to interact with your AWS environment. This allows for natural language querying and management of your AWS resources during conversations. Think of it as a better alternative to Amazon Q.

- 🔍 Query and modify AWS resources using natural language

- ☁️ Support for multiple AWS profiles and SSO authentication

- 🌐 Multi-region support

- 🔐 Secure credential handling (no credentials are exposed to external services, your local credentials are used)

- 🏃 Local execution with your AWS credentials

- Node.js

- Claude Desktop

- AWS credentials configured locally (`~/.aws/` directory)
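The server relies on credentials that are already configured under `~/.aws/`. As a rough illustration of what an "available profile" is, the sketch below extracts profile names from the text of an AWS shared config file. `listProfiles` and the sample config are hypothetical and are not taken from the aws-mcp source; the only assumption about the file format is the standard `[default]` and `[profile NAME]` section headers.

```typescript
// Hypothetical helper, not part of aws-mcp: extract profile names from the
// text of an AWS shared config file (the format used by ~/.aws/config).
function listProfiles(configText: string): string[] {
  const found: string[] = [];
  for (const rawLine of configText.split(/\r?\n/)) {
    const line = rawLine.trim();
    // Section headers look like [default] or [profile my-name].
    const match = /^\[(.+)\]$/.exec(line);
    if (!match) continue;
    const section = (match[1] ?? "").trim();
    if (section === "default") {
      found.push("default");
    } else if (section.startsWith("profile ")) {
      found.push(section.slice("profile ".length).trim());
    }
    // Other sections, such as [sso-session ...], are not profiles and are skipped.
  }
  return found;
}

// Made-up sample config for illustration only.
const sampleConfig = `
[default]
region = us-east-1

[profile dev-sso]
sso_session = my-sso
sso_account_id = 111122223333
sso_role_name = ReadOnly
region = eu-west-1

[sso-session my-sso]
sso_start_url = https://example.awsapps.com/start
sso_region = us-east-1
`;

const sampleProfiles = listProfiles(sampleConfig); // ["default", "dev-sso"]
```

The `[sso-session ...]` section is deliberately ignored: it holds shared SSO settings that profiles refer to, and is not itself a profile.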

- Clone the repository:

      git clone https://github.com/RafalWilinski/aws-mcp
      cd aws-mcp

- Install dependencies:

      pnpm install
      # or
      npm install

- Open Claude desktop app and go to Settings -> Developer -> Edit Config

- Add the following entry to your `claude_desktop_config.json` (set `"command"` to `"pnpm"` instead if you use pnpm; JSON does not allow inline comments):

      {
        "mcpServers": {
          "aws": {
            "command": "npm",
            "args": [
              "--silent",
              "--prefix",
              "/Users/<YOUR USERNAME>/aws-mcp",
              "start"
            ]
          }
        }
      }

  Important: Replace `/Users/<YOUR USERNAME>/aws-mcp` with the actual path to your project directory.

- Restart Claude desktop app. You should see this:

- Start by selecting an AWS profile or jump to action by asking:

  - "List available AWS profiles"

  - "List all EC2 instances in my account"

  - "Show me S3 buckets with their sizes"

  - "What Lambda functions are deployed in us-east-1?"

  - "List all ECS clusters and their services"
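The `claude_desktop_config.json` entry from the setup steps can also be generated programmatically, which avoids hand-editing mistakes such as leaving a comment in the JSON. The following is a minimal sketch, not part of aws-mcp: `withAwsServer`, `McpServerEntry`, and `ClaudeDesktopConfig` are hypothetical names, and the project path is the placeholder from the README.

```typescript
// Assumed shape of the relevant part of claude_desktop_config.json.
interface McpServerEntry {
  command: string;
  args: string[];
}

interface ClaudeDesktopConfig {
  mcpServers?: Record<string, McpServerEntry>;
  [key: string]: unknown;
}

// Hypothetical helper: return a copy of `config` with the "aws" server entry
// added, leaving every other setting and server untouched.
function withAwsServer(
  config: ClaudeDesktopConfig,
  projectDir: string,
  runner: "npm" | "pnpm" = "npm",
): ClaudeDesktopConfig {
  return {
    ...config,
    mcpServers: {
      ...(config.mcpServers ?? {}),
      aws: {
        command: runner,
        args: ["--silent", "--prefix", projectDir, "start"],
      },
    },
  };
}

// Example: an existing config that already has another (made-up) server.
const existingConfig: ClaudeDesktopConfig = {
  mcpServers: { other: { command: "node", args: ["server.js"] } },
};

// The path below is the README placeholder; replace it with your real path.
const updatedConfig = withAwsServer(existingConfig, "/Users/<YOUR USERNAME>/aws-mcp");

// Strict JSON text, ready to be written to claude_desktop_config.json.
const updatedConfigJson = JSON.stringify(updatedConfig, null, 2);
```

Returning a new object instead of mutating the input keeps the original config available for comparison or rollback before anything is written to disk.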

If you use nvm, build from source first and add the following config:

    {
      "mcpServers": {
        "aws": {
          "command": "/Users/<USERNAME>/.nvm/versions/node/v20.10.0/bin/node",
          "args": [
            "<WORKSPACE_PATH>/aws-mcp/node_modules/tsx/dist/cli.mjs",
            "<WORKSPACE_PATH>/aws-mcp/index.ts",
            "--prefix",
            "<WORKSPACE_PATH>/aws-mcp",
            "start"
          ]
        }
      }
    }

To see logs:

    tail -n 50 -f ~/Library/Logs/Claude/mcp-server-aws.log
    # or
    tail -n 50 -f ~/Library/Logs/Claude/mcp.log

- [ ] MFA support

- [ ] Cache SSO credentials to avoid refreshing them too eagerly


Similar Open Source Tools

mcp-redis

The Redis MCP Server is a natural language interface designed for agentic applications to efficiently manage and search data in Redis. It integrates seamlessly with MCP (Model Context Protocol) clients, enabling AI-driven workflows to interact with structured and unstructured data in Redis. The server supports natural language queries, seamless MCP integration, full Redis support for various data types, search and filtering capabilities, scalability, and lightweight design. It provides tools for managing data stored in Redis, such as string, hash, list, set, sorted set, pub/sub, streams, JSON, query engine, and server management. Installation can be done from PyPI or GitHub, with options for testing, development, and Docker deployment. Configuration can be via command line arguments or environment variables. Integrations include OpenAI Agents SDK, Augment, Claude Desktop, and VS Code with GitHub Copilot. Use cases include AI assistants, chatbots, data search & analytics, and event processing. Contributions are welcome under the MIT License.

mcp

Model Context Protocol (MCP) server providing Vuetify component information and documentation to any MCP-compatible client or IDE. The Vuetify MCP server bridges the gap between Vuetify's component library and AI-assisted development environments, enabling seamless access to Vuetify's extensive component ecosystem directly within your development workflow. Gain AI-powered assistance that understands Vuetify's component structure, styling conventions, and implementation details.

context7

Context7 is a powerful tool for analyzing and visualizing data in various formats. It provides a user-friendly interface for exploring datasets, generating insights, and creating interactive visualizations. With advanced features such as data filtering, aggregation, and customization, Context7 is suitable for both beginners and experienced data analysts. The tool supports a wide range of data sources and formats, making it versatile for different use cases. Whether you are working on exploratory data analysis, data visualization, or data storytelling, Context7 can help you uncover valuable insights and communicate your findings effectively.

mcphub.nvim

MCPHub.nvim is a powerful Neovim plugin that integrates MCP (Model Context Protocol) servers into your workflow. It offers a centralized config file for managing servers and tools, with an intuitive UI for testing resources. Ideal for LLM integration, it provides programmatic API access and interactive testing through the `:MCPHub` command.

mcp

Semgrep MCP Server is a beta server under active development for using Semgrep to scan code for security vulnerabilities. It exposes Semgrep through the Model Context Protocol (MCP) so that coding tools can get specialized help with their tasks. Users can connect to Semgrep AppSec Platform, scan code for vulnerabilities, customize Semgrep rules, analyze and filter scan results, and compare results. The tool is published on PyPI as semgrep-mcp and can be installed using pip, pipx, uv, poetry, or other methods. It supports CLI and Docker environments for running the server. Integration with VS Code is also available for quick installation. The project welcomes contributions and is inspired by core technologies like Semgrep and MCP, as well as related community projects and tools.

mcp-server-mysql

The MCP Server for MySQL based on NodeJS is a Model Context Protocol server that provides access to MySQL databases. It enables users to inspect database schemas and execute SQL queries. The server offers tools for executing SQL queries, providing comprehensive database information, security features like SQL injection prevention, performance optimizations, monitoring, and debugging capabilities. Users can configure the server using environment variables and advanced options. The server supports multi-DB mode, schema-specific permissions, and includes troubleshooting guidelines for common issues. Contributions are welcome, and the project roadmap includes enhancing query capabilities, security features, performance optimizations, monitoring, and expanding schema information.

ruby_llm-mcp

RubyLLM::MCP is a Ruby client for the Model Context Protocol (MCP), designed to seamlessly integrate with RubyLLM. It provides a Ruby-first API for using MCP tools, resources, and prompts directly in RubyLLM chat workflows. The tool supports the stable MCP spec `2025-06-18` and offers draft spec `2026-01-26` compatibility. It includes features like notification and response handlers, OAuth 2.1 authentication support, integration paths for Rails apps and CLI flows, and straightforward integration for any Ruby app or Rails project using RubyLLM. The tool allows users to work with MCP tools, resources, and prompts over `stdio`, streamable HTTP, or SSE transports.

Windows-MCP

Windows-MCP is a lightweight, open-source project that enables seamless integration between AI agents and the Windows operating system. Acting as an MCP server, it bridges the gap between LLMs and the Windows operating system, allowing agents to perform tasks such as file navigation, application control, UI interaction, QA testing, and more. It provides seamless Windows integration, supports any LLM without traditional computer vision techniques, offers a rich toolset for UI automation, is lightweight and open-source, customizable and extendable, offers real-time interaction with low latency, includes a DOM mode for browser automation, and supports various tools for interacting with Windows applications and system components.

matchlock

Matchlock is a CLI tool designed for running AI agents in isolated and disposable microVMs with network allowlisting and secret injection capabilities. It ensures that your secrets never enter the VM, providing a secure environment for AI agents to execute code without risking access to your machine. The tool offers features such as sealing the network to only allow traffic to specified hosts, injecting real credentials in-flight by the host, and providing a full Linux environment for the agent's operations while maintaining isolation from the host machine. Matchlock supports quick booting of Linux environments, sandbox lifecycle management, image building, and SDKs for Go and Python for embedding sandboxes in applications.

yutu

Yutu is a fully functional MCP server and CLI for YouTube designed to automate YouTube workflows. It allows users to manipulate various YouTube resources such as videos, playlists, channels, comments, captions, and more. The tool requires a Google Cloud Platform account with specific APIs enabled and OAuth credentials set up. Users can install Yutu using various methods like GitHub Actions, Docker, Gopher, Linux, macOS, or Windows. Yutu can also be used as an MCP server in tools like VS Code or Cursor, providing a chat-like interface to interact with YouTube resources.

postman-mcp-server

The Postman MCP Server connects Postman to AI tools, enabling AI agents and assistants to access workspaces, manage collections and environments, evaluate APIs, and automate workflows through natural language interactions. It supports various tool configurations like Minimal, Full, and Code, catering to users with different needs. The server offers authentication via OAuth for the best developer experience and fastest setup. Use cases include API testing, code synchronization, collection management, workspace and environment management, automatic spec creation, and client code generation. It is designed for developers integrating AI tools with Postman's context and features, and supports everything from quick natural language queries to advanced agent workflows.

Gmail-MCP-Server

Gmail AutoAuth MCP Server is a Model Context Protocol (MCP) server designed for Gmail integration in Claude Desktop. It supports auto authentication and enables AI assistants to manage Gmail through natural language interactions. The server provides comprehensive features for sending emails, reading messages, managing labels, searching emails, and batch operations. It offers full support for international characters, email attachments, and Gmail API integration. Users can install and authenticate the server via Smithery or manually with Google Cloud Project credentials. The server supports both Desktop and Web application credentials, with global credential storage for convenience. It also includes Docker support and instructions for cloud server authentication.

kindly-web-search-mcp-server

Kindly Web Search MCP Server is a tool designed to enhance web search and content retrieval for AI coding assistants. It integrates with APIs for StackExchange, GitHub Issues, arXiv, and Wikipedia to present content in optimized formats. It returns full conversations in a single call, parses webpages in real-time using a headless browser, and passes useful content to AI immediately. The tool supports multiple search providers and aims to deliver content in a structured and useful manner for AI coding assistants.

open-edison

OpenEdison is a secure MCP control panel that connects AI to data/software with additional security controls to reduce data exfiltration risks. It helps address the lethal trifecta problem by providing visibility, monitoring potential threats, and alerting on data interactions. The tool offers features like data leak monitoring, controlled execution, easy configuration, visibility into agent interactions, a simple API, and Docker support. It integrates with LangGraph, LangChain, and plain Python agents for observability and policy enforcement. OpenEdison helps gain observability, control, and policy enforcement for AI interactions with systems of records, existing company software, and data to reduce risks of AI-caused data leakage.

datagouv-mcp

datagouv-mcp is a Model Context Protocol (MCP) server designed to help AI chatbots (such as Claude, ChatGPT, Gemini) search, explore, and analyze datasets from data.gouv.fr, the French national Open Data platform, through conversation. Users can ask questions like 'Quels jeux de données sont disponibles sur les prix de l'immobilier?' ("Which datasets are available on real-estate prices?") or 'Montre-moi les dernières données de population pour Paris' ("Show me the latest population data for Paris") to get instant answers without manually browsing the website. The server provides tools to interact with datasets and dataservices, supporting features like searching datasets, getting dataset information, listing resources, querying resource data, and more. It also offers support for various chatbots like ChatGPT, Claude Desktop, Claude Code, Gemini CLI, Mistral Vibe CLI, AnythingLLM, VS Code, Cursor, Windsurf, and provides detailed instructions for connecting chatbots to the server.

For similar jobs

promptflow

**Prompt flow** is a suite of development tools designed to streamline the end-to-end development cycle of LLM-based AI applications, from ideation, prototyping, testing, evaluation to production deployment and monitoring. It makes prompt engineering much easier and enables you to build LLM apps with production quality.

deepeval

DeepEval is a simple-to-use, open-source LLM evaluation framework specialized for unit testing LLM outputs. It incorporates various metrics such as G-Eval, hallucination, answer relevancy, RAGAS, etc., and runs locally on your machine for evaluation. It provides a wide range of ready-to-use evaluation metrics, allows for creating custom metrics, integrates with any CI/CD environment, and enables benchmarking LLMs on popular benchmarks. DeepEval is designed for evaluating RAG and fine-tuning applications, helping users optimize hyperparameters, prevent prompt drifting, and transition from OpenAI to hosting their own Llama2 with confidence.

MegaDetector

MegaDetector is an AI model that identifies animals, people, and vehicles in camera trap images (which also makes it useful for eliminating blank images). This model is trained on several million images from a variety of ecosystems. MegaDetector is just one of many tools that aim to make conservation biologists more efficient with AI. If you want to learn about other ways to use AI to accelerate camera trap workflows, check out our survey of the field, affectionately titled "Everything I know about machine learning and camera traps".

leapfrogai

LeapfrogAI is a self-hosted AI platform designed to be deployed in air-gapped resource-constrained environments. It brings sophisticated AI solutions to these environments by hosting all the necessary components of an AI stack, including vector databases, model backends, API, and UI. LeapfrogAI's API closely matches that of OpenAI, allowing tools built for OpenAI/ChatGPT to function seamlessly with a LeapfrogAI backend. It provides several backends for various use cases, including llama-cpp-python, whisper, text-embeddings, and vllm. LeapfrogAI leverages Chainguard's apko to harden base python images, ensuring the latest supported Python versions are used by the other components of the stack. The LeapfrogAI SDK provides a standard set of protobuffs and python utilities for implementing backends and gRPC. LeapfrogAI offers UI options for common use-cases like chat, summarization, and transcription. It can be deployed and run locally via UDS and Kubernetes, built out using Zarf packages. LeapfrogAI is supported by a community of users and contributors, including Defense Unicorns, Beast Code, Chainguard, Exovera, Hypergiant, Pulze, SOSi, United States Navy, United States Air Force, and United States Space Force.

llava-docker

This Docker image for LLaVA (Large Language and Vision Assistant) provides a convenient way to run LLaVA locally or on RunPod. LLaVA is a powerful AI tool that combines natural language processing and computer vision capabilities. With this Docker image, you can easily access LLaVA's functionalities for various tasks, including image captioning, visual question answering, text summarization, and more. The image comes pre-installed with LLaVA v1.2.0, Torch 2.1.2, xformers 0.0.23.post1, and other necessary dependencies. You can customize the model used by setting the MODEL environment variable. The image also includes a Jupyter Lab environment for interactive development and exploration. Overall, this Docker image offers a comprehensive and user-friendly platform for leveraging LLaVA's capabilities.

carrot

The 'carrot' repository on GitHub provides a list of free and user-friendly ChatGPT mirror sites for easy access. The repository includes sponsored sites offering various GPT models and services. Users can find and share sites, report errors, and access stable and recommended sites for ChatGPT usage. The repository also includes a detailed list of ChatGPT sites, their features, and accessibility options, making it a valuable resource for ChatGPT users seeking free and unlimited GPT services.

TrustLLM

TrustLLM is a comprehensive study of trustworthiness in LLMs, including principles for different dimensions of trustworthiness, an established benchmark, evaluation and analysis of trustworthiness for mainstream LLMs, and discussion of open challenges and future directions. Specifically, we first propose a set of principles for trustworthy LLMs that span eight different dimensions. Based on these principles, we further establish a benchmark across six dimensions including truthfulness, safety, fairness, robustness, privacy, and machine ethics. We then present a study evaluating 16 mainstream LLMs in TrustLLM, consisting of over 30 datasets. The document explains how to use the trustllm python package to help you assess the performance of your LLM in trustworthiness more quickly. For more details about TrustLLM, please refer to the project website.

AI-YinMei

AI-YinMei is a development toolkit for AI virtual streamers (VTubers), in a version for NVIDIA GPUs. It supports knowledge-base chat through fastgpt as part of a complete LLM stack: [fastgpt] + [one-api] + [Xinference]. For live streaming, it supports replying to bilibili bullet comments and greeting viewers who enter the stream. Speech synthesis is available through Microsoft edge-tts, Bert-VITS2, and GPT-SoVITS, and expression control through Vtuber Studio. It supports image generation with stable-diffusion-webui, output to the OBS live room, and NSFW screening of generated images with public-NSFW-y-distinguish. Search and image search are available through duckduckgo (requires a proxy or VPN) and Baidu image search (no proxy required). It also supports an AI reply chat box [html plug-in], AI singing with Auto-Convert-Music, a playlist [html plug-in], dancing, expression video playback, head-patting and gift-smashing actions, automatic dancing when singing starts, an automatic swaying loop during chat and singing, multi-scene switching, background music switching, and automatic day and night scene switching. Singing and drawing requests can be left open-ended, with the AI judging the content automatically.