obsidian-github-copilot

A bridge between Obsidian and Github Copilot

Stars: 422

Obsidian Github Copilot Plugin is a tool that enables users to utilize Github Copilot within the Obsidian editor. It acts as a bridge between Obsidian and the Github Copilot service, allowing for enhanced code completion and suggestion features. Users can configure various settings such as suggestion generation delay, key bindings, and visibility of suggestions. The plugin requires a Github Copilot subscription, Node.js 18 or later, and a network connection to interact with the Copilot service. It simplifies the process of writing code by providing helpful completions and suggestions directly within the Obsidian editor.

README:

Use GitHub Copilot in the Obsidian editor. This plugin is a bridge between the Obsidian editor and the GitHub Copilot service.

- 🤖 Get inline suggestion as you type using GitHub Copilot service

- 💬 Use the GitHub Copilot Chat service to ask questions about your notes

- A GitHub Copilot subscription (https://copilot.github.com/)

- Network connection to send and receive data from the GitHub Copilot service

- Install the plugin via the Obsidian community plugins browser.

- Activate the plugin in the settings. Make sure to configure any necessary options.

- Node.js 22 or later

- Once installed, go to the plugin settings and enter the path to the Node +22 binary. You can find it by running

which nodein your terminal. - Either

- A modal will appear asking you to sign in to Copilot. Follow the instructions to sign in.

- Or, you will receive a notice saying that Copilot is ready to use. (This will happen if you have already signed in to Copilot in the past in IDEs)

[!NOTE]

If you install the plugin by cloning it or downloading the release files from GitHub, you will need to name the plugin foldergithub-copilotfor the plugin to work.

- Open a note in Obsidian.

- Write something in the editor.

- After a small pause, Copilot will suggest completions for your text.

- Press

Tabto accept a suggestion orEscto dismiss it.

- [x] Use GitHub Copilot in the Obsidian editor

- [x] Configure the suggestion generation delay

- [x] Configure your bindings to accept, dismiss, trigger or partially accept suggestions

- [x] Configure if you want to see automatic suggestions or only trigger them manually

- [x] Configure if you want to see suggestion only in code blocks or in the whole note

- [x] Exclude folders and files from the suggestion generation

- If you installed Obsidian with Flatpak, you might need to use NVM to handle Node.js versions as the default binary path is not accessible in the Flatpak sandbox. See this issue for more information.

- "Tab" completion can be buggy in some cases (e.g. in bullet points in LateX Math mode for example). It depends on plugin priority over the keybindings. See this issue for more information.

https://github.com/user-attachments/assets/89089920-45de-47c3-80b1-f2d58d1ba55e

- Once installed, you can find the GitHub Copilot Chat in the right sidebar of Obsidian.

- It should ask you to sign in to GitHub Copilot. Follow the instructions to sign in.

- Open the GitHub Copilot Chat in the right sidebar.

- Choose a model to use (e.g.

gpt-4orclaude 3.7). - Ask a question and like a note like you would do in the obsidian editor (e.g.

Can you summarize this note [[my note]]?).

- [x] Use GitHub Copilot Chat in the Obsidian editor

- [x] Choose the model to use between all models available in the GitHub Copilot Chat service

- [x] Implement a chat history

- [x] Link notes with [[double bracket syntax]] in the chat

- [x] Configure a custom prompt as a system message in the settings

- [x] Let the user choose the default behavior of the enter key (send message or add a new line)

- [x] Mermaid diagram support - Render Mermaid diagrams in chat responses

- [ ] Stream the response from the API

- Waiting for requestUrl to implement Streaming : https://forum.obsidian.md/t/support-streaming-the-request-and-requesturl-response-body/87381

The chat supports rendering Mermaid diagrams when GitHub Copilot responds with Mermaid code blocks. Simply ask Copilot to create a diagram and it will render visually in the chat.

Example usage:

You: Can you create a flowchart showing the software development lifecycle?

Copilot: Here's a flowchart of the software development lifecycle:

```mermaid

flowchart TD

A[Requirements Analysis] --> B[Design]

B --> C[Implementation]

C --> D[Testing]

D --> E[Deployment]

E --> F[Maintenance]

F --> A

Supported diagram types:

- Flowcharts

- Sequence diagrams

- Class diagrams

- State diagrams

- Gantt charts

- Pie charts

- And more...

The diagrams automatically adapt to your Obsidian theme (light/dark mode) and are fully integrated into the chat interface.

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for obsidian-github-copilot

Similar Open Source Tools

obsidian-github-copilot

Obsidian Github Copilot Plugin is a tool that enables users to utilize Github Copilot within the Obsidian editor. It acts as a bridge between Obsidian and the Github Copilot service, allowing for enhanced code completion and suggestion features. Users can configure various settings such as suggestion generation delay, key bindings, and visibility of suggestions. The plugin requires a Github Copilot subscription, Node.js 18 or later, and a network connection to interact with the Copilot service. It simplifies the process of writing code by providing helpful completions and suggestions directly within the Obsidian editor.

Kuebiko

Kuebiko is a Twitch Chat Bot that reads twitch chat and generates text-to-speech responses using Google Cloud API and OpenAI's GPT-3 text completion model. It allows users to set up their own VTuber AI similar to 'Neuro-Sama'. The project is built with Python and requires setting up various API keys and configurations to enable the bot functionality. Users can customize the voice of their VTuber and route audio using VBAudio Cable. Kuebiko provides a unique way to interact with viewers through chat responses and captions in OBS.

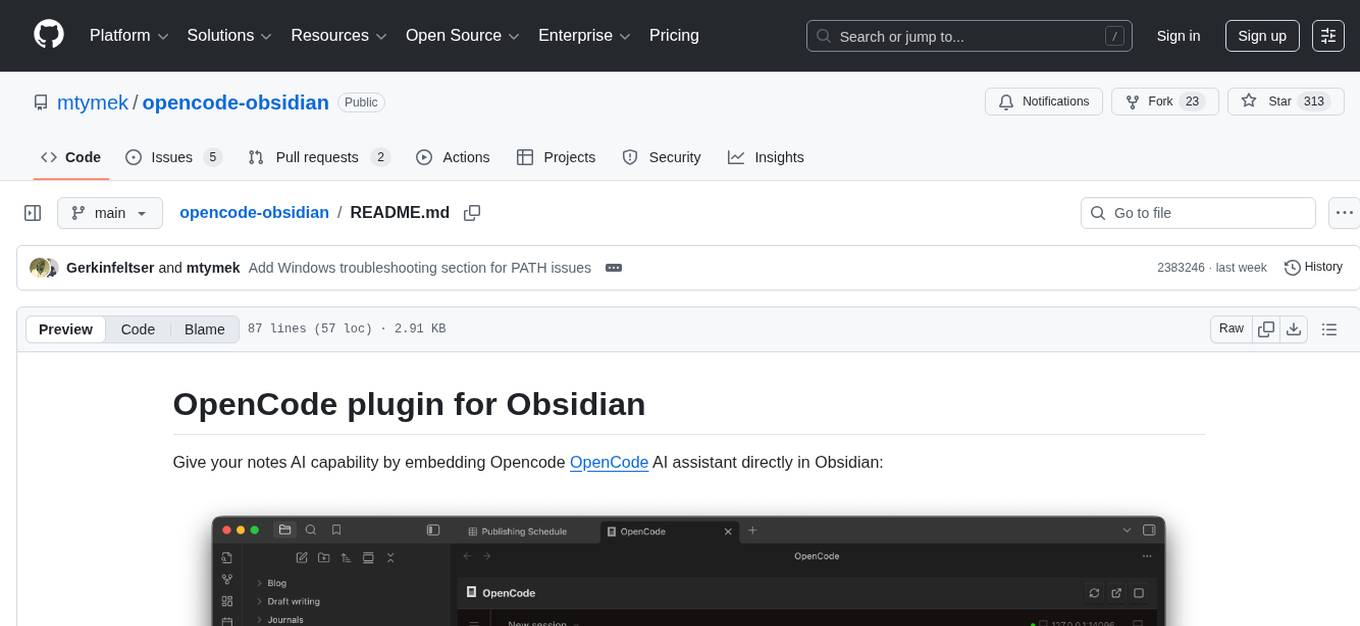

opencode-obsidian

The Opencode plugin for Obsidian allows users to give their notes AI capability by embedding Opencode OpenCode AI assistant directly in Obsidian. Users can summarize and distill long-form content, draft, edit, and refine writing, query and explore their knowledge base, and generate outlines and structured notes. The plugin uses OpenCode's web view embedded directly into the Obsidian window, providing full power without the need for a custom chat UI. It is a third-party software and requires Node.js child processes, OpenCode CLI, and Bun for installation and usage.

superflows

Superflows is an open-source alternative to OpenAI's Assistant API. It allows developers to easily add an AI assistant to their software products, enabling users to ask questions in natural language and receive answers or have tasks completed by making API calls. Superflows can analyze data, create plots, answer questions based on static knowledge, and even write code. It features a developer dashboard for configuration and testing, stateful streaming API, UI components, and support for multiple LLMs. Superflows can be set up in the cloud or self-hosted, and it provides comprehensive documentation and support.

Delphi-AI-Developer

Delphi AI Developer is a plugin that enhances the Delphi IDE with AI capabilities from OpenAI, Gemini, and Groq APIs. It assists in code generation, refactoring, and speeding up development by providing code suggestions and predefined questions. Users can interact with AI chat and databases within the IDE, customize settings, and access documentation. The plugin is open-source and under the MIT License.

extensionOS

Extension | OS is an open-source browser extension that brings AI directly to users' web browsers, allowing them to access powerful models like LLMs seamlessly. Users can create prompts, fix grammar, and access intelligent assistance without switching tabs. The extension aims to revolutionize online information interaction by integrating AI into everyday browsing experiences. It offers features like Prompt Factory for tailored prompts, seamless LLM model access, secure API key storage, and a Mixture of Agents feature. The extension was developed to empower users to unleash their creativity with custom prompts and enhance their browsing experience with intelligent assistance.

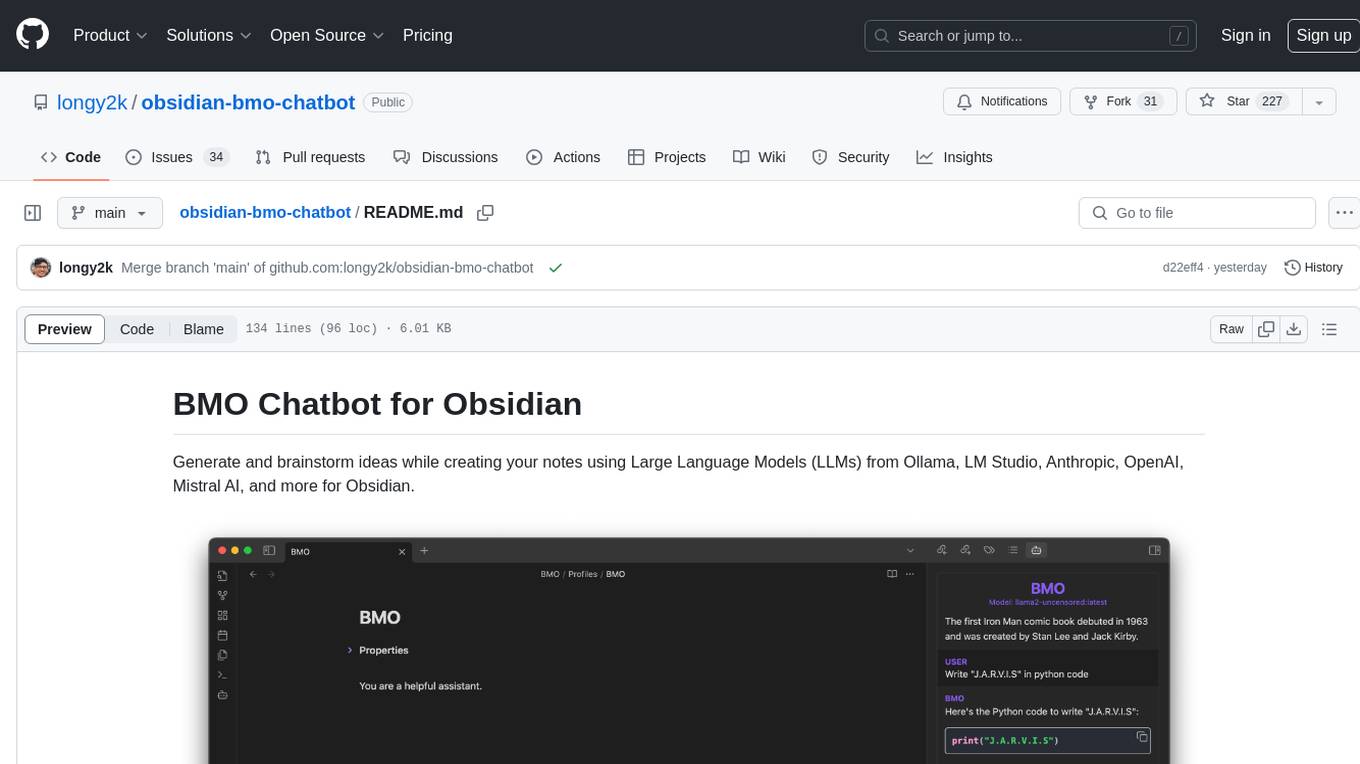

obsidian-bmo-chatbot

Obsidian BMO Chatbot is a plugin that allows users to generate and brainstorm ideas while creating notes using Large Language Models (LLMs) from various providers like Ollama, LM Studio, Anthropic, OpenAI, Mistral AI, and more. Users can interact with self-hosted LLMs, create chatbots with specific knowledge and personalities, chat from anywhere within Obsidian, and receive formatted responses in Obsidian Markdown. The plugin also offers features like customizable bot name, prompt selection, saving chat history as markdown, and more. Users can activate the plugin through Obsidian Community plugins or by installing it manually. Supported models include Ollama, LM Studio, Anthropic, Mistral AI, Google Gemini Pro, OpenAI, and Openrouter provided models.

github-pr-summary

github-pr-summary is a bot designed to summarize GitHub Pull Requests, helping open source contributors make faster decisions. It automatically summarizes commits and changed files in PRs, triggered by new commits or a magic trigger phrase. Users can deploy their own code review bot in 3 steps: create a bot from their GitHub repo, configure it to review PRs, and connect to GitHub for access to the target repo. The bot runs on flows.network using Rust and WasmEdge Runtimes. It utilizes ChatGPT/4 to review and summarize PR content, posting the result back as a comment on the PR. The bot can be used on multiple repos by creating new flows and importing the source code repo, specifying the target repo using flow config. Users can also change the magic phrase to trigger a review from a PR comment.

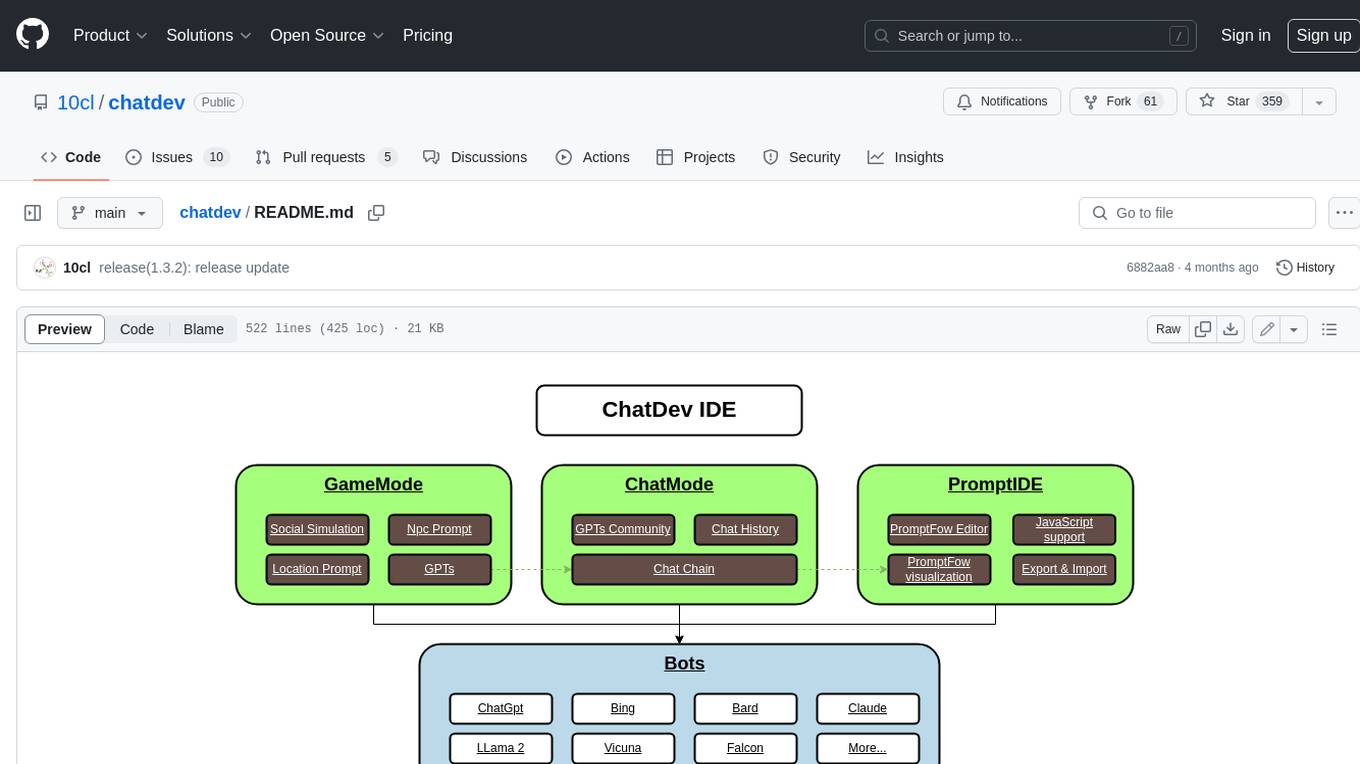

chatdev

ChatDev IDE is a tool for building your AI agent, Whether it's NPCs in games or powerful agent tools, you can design what you want for this platform. It accelerates prompt engineering through **JavaScript Support** that allows implementing complex prompting techniques.

ChatterUI

ChatterUI is a mobile app that allows users to manage chat files and character cards, and to interact with Large Language Models (LLMs). It supports multiple backends, including local, koboldcpp, text-generation-webui, Generic Text Completions, AI Horde, Mancer, Open Router, and OpenAI. ChatterUI provides a mobile-friendly interface for interacting with LLMs, making it easy to use them for a variety of tasks, such as generating text, translating languages, writing code, and answering questions.

serverless-chat-langchainjs

This sample shows how to build a serverless chat experience with Retrieval-Augmented Generation using LangChain.js and Azure. The application is hosted on Azure Static Web Apps and Azure Functions, with Azure Cosmos DB for MongoDB vCore as the vector database. You can use it as a starting point for building more complex AI applications.

TerminalGPT

TerminalGPT is a terminal-based ChatGPT personal assistant app that allows users to interact with OpenAI GPT-3.5 and GPT-4 language models. It offers advantages over browser-based apps, such as continuous availability, faster replies, and tailored answers. Users can use TerminalGPT in their IDE terminal, ensuring seamless integration with their workflow. The tool prioritizes user privacy by not using conversation data for model training and storing conversations locally on the user's machine.

parllama

PAR LLAMA is a Text UI application for managing and using LLMs, designed with Textual and Rich and PAR AI Core. It runs on major OS's including Windows, Windows WSL, Mac, and Linux. Supports Dark and Light mode, custom themes, and various workflows like Ollama chat, image chat, and OpenAI provider chat. Offers features like custom prompts, themes, environment variables configuration, and remote instance connection. Suitable for managing and using LLMs efficiently.

OpenCopilot

OpenCopilot allows you to have your own product's AI copilot. It integrates with your underlying APIs and can execute API calls whenever needed. It uses LLMs to determine if the user's request requires calling an API endpoint. Then, it decides which endpoint to call and passes the appropriate payload based on the given API definition.

nobodywho

NobodyWho is a plugin for the Godot game engine that enables interaction with local LLMs for interactive storytelling. Users can install it from Godot editor or GitHub releases page, providing their own LLM in GGUF format. The plugin consists of `NobodyWhoModel` node for model file, `NobodyWhoChat` node for chat interaction, and `NobodyWhoEmbedding` node for generating embeddings. It offers a programming interface for sending text to LLM, receiving responses, and starting the LLM worker.

SunoApi

SunoAPI is an unofficial client for Suno AI, built on Python and Streamlit. It supports functions like generating music and obtaining music information. Users can set up multiple account information to be saved for use. The tool also features built-in maintenance and activation functions for tokens, eliminating concerns about token expiration. It supports multiple languages and allows users to upload pictures for generating songs based on image content analysis.

For similar tasks

obsidian-github-copilot

Obsidian Github Copilot Plugin is a tool that enables users to utilize Github Copilot within the Obsidian editor. It acts as a bridge between Obsidian and the Github Copilot service, allowing for enhanced code completion and suggestion features. Users can configure various settings such as suggestion generation delay, key bindings, and visibility of suggestions. The plugin requires a Github Copilot subscription, Node.js 18 or later, and a network connection to interact with the Copilot service. It simplifies the process of writing code by providing helpful completions and suggestions directly within the Obsidian editor.

devchat

DevChat is an open-source workflow engine that enables developers to create intelligent, automated workflows for engaging with users through a chat panel within their IDEs. It combines script writing flexibility, latest AI models, and an intuitive chat GUI to enhance user experience and productivity. DevChat simplifies the integration of AI in software development, unlocking new possibilities for developers.

lowcode-vscode

This repository is a low-code tool that supports ChatGPT and other LLM models. It provides functionalities such as OCR translation, generating specified format JSON, translating Chinese to camel case, translating current directory to English, and quickly creating code templates. Users can also generate CURD operations for managing backend list pages. The tool allows users to select templates, initialize query form configurations using OCR, initialize table configurations using OCR, translate Chinese fields using ChatGPT, and generate code without writing a single line. It aims to enhance productivity by simplifying code generation and development processes.

AI-Prompt-Genius

AI Prompt Genius is a Chrome extension that allows you to curate a custom library of AI prompts. It is built using React web app and Tailwind CSS with DaisyUI components. The extension enables users to create and manage AI prompts for various purposes. It provides a user-friendly interface for organizing and accessing AI prompts efficiently. AI Prompt Genius is designed to enhance productivity and creativity by offering a personalized collection of prompts tailored to individual needs. Users can easily install the extension from the Chrome Web Store and start using it to generate AI prompts for different tasks.

second-brain-agent

The Second Brain AI Agent Project is a tool designed to empower personal knowledge management by automatically indexing markdown files and links, providing a smart search engine powered by OpenAI, integrating seamlessly with different note-taking methods, and enhancing productivity by accessing information efficiently. The system is built on LangChain framework and ChromaDB vector store, utilizing a pipeline to process markdown files and extract text and links for indexing. It employs a Retrieval-augmented generation (RAG) process to provide context for asking questions to the large language model. The tool is beneficial for professionals, students, researchers, and creatives looking to streamline workflows, improve study sessions, delve deep into research, and organize thoughts and ideas effortlessly.

AI-scripts

AI-scripts is a repository containing various AI scripts used for daily tasks. It includes tools like 'holefill' for filling code snippets in VIM, 'aiemu' for emulation purposes, and 'chatsh [model]' for terminal-based ChatGPT functionality. The repository aims to streamline AI-related workflows and enhance productivity by providing convenient scripts for common tasks.

magic-cli

Magic CLI is a command line utility that leverages Large Language Models (LLMs) to enhance command line efficiency. It is inspired by projects like Amazon Q and GitHub Copilot for CLI. The tool allows users to suggest commands, search across command history, and generate commands for specific tasks using local or remote LLM providers. Magic CLI also provides configuration options for LLM selection and response generation. The project is still in early development, so users should expect breaking changes and bugs.

readme-ai

README-AI is a developer tool that auto-generates README.md files using a combination of data extraction and generative AI. It streamlines documentation creation and maintenance, enhancing developer productivity. This project aims to enable all skill levels, across all domains, to better understand, use, and contribute to open-source software. It offers flexible README generation, supports multiple large language models (LLMs), provides customizable output options, works with various programming languages and project types, and includes an offline mode for generating boilerplate README files without external API calls.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM: * Set LLM usage limits for users on different pricing tiers * Track LLM usage on a per user and per organization basis * Block or redact requests containing PIIs * Improve LLM reliability with failovers, retries and caching * Distribute API keys with rate limits and cost limits for internal development/production use cases * Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.