hume-python-sdk

Python client for Hume AI

Stars: 158

The Hume AI Python SDK allows users to integrate Hume APIs directly into their Python applications. Users can access complete documentation, quickstart guides, and example notebooks to get started. The SDK is designed to provide support for Hume's expressive communication platform built on scientific research. Users are encouraged to create an account at beta.hume.ai and stay updated on changes through Discord. The SDK may undergo breaking changes to improve tooling and ensure reliable releases in the future.

README:

There were major breaking changes in version 0.7.0 of the SDK. If upgrading from a previous version, please view the Migration Guide. That release deprecated several interfaces and moved them to the hume[legacy] package extra. The legacy extra was removed in 0.9.0. The last version to include legacy was 0.8.6.

API reference documentation is available here.

The Hume Python SDK is compatible across several Python versions and operating systems.

- For the Empathic Voice Interface, Python versions 3.9 through 3.13 are supported on macOS and Linux.
- For Text-to-speech (TTS), Python versions 3.9 through 3.13 are supported on macOS, Linux, and Windows.
- For Expression Measurement, Python versions 3.9 through 3.13 are supported on macOS, Linux, and Windows.

Below is a table which shows the version and operating system compatibilities by product:

| Product | Python Version | Operating System |
|---|---|---|
| Empathic Voice Interface | 3.9, 3.10, 3.11, 3.12, 3.13 | macOS, Linux |
| Text-to-speech (TTS) | 3.9, 3.10, 3.11, 3.12, 3.13 | macOS, Linux, Windows |
| Expression Measurement | 3.9, 3.10, 3.11, 3.12, 3.13 | macOS, Linux, Windows |
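If you want to guard against unsupported environments at startup, the table above can be encoded as data. The following is a minimal sketch and not part of the SDK: `SUPPORTED` and `is_supported` are hypothetical names, and the mapping of macOS to `"Darwin"` follows what `platform.system()` reports.

```python
import platform
import sys

# Supported Python 3 minor versions and operating systems per product,
# transcribed from the compatibility table. platform.system() reports
# macOS as "Darwin".
SUPPORTED = {
    "Empathic Voice Interface": {"python": range(9, 14), "os": {"Darwin", "Linux"}},
    "Text-to-speech (TTS)": {"python": range(9, 14), "os": {"Darwin", "Linux", "Windows"}},
    "Expression Measurement": {"python": range(9, 14), "os": {"Darwin", "Linux", "Windows"}},
}


def is_supported(product, version=None, system=None):
    """Return True if the product is listed as supported for this environment."""
    version = sys.version_info[:2] if version is None else version
    system = platform.system() if system is None else system
    entry = SUPPORTED[product]
    major, minor = version
    return major == 3 and minor in entry["python"] and system in entry["os"]


if __name__ == "__main__":
    # Report support for the interpreter and OS running this script.
    for product in SUPPORTED:
        print(product, is_supported(product))
```

Passing `version` and `system` explicitly makes the check easy to test without changing interpreters.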

    pip install hume
    # or
    poetry add hume
    # or
    uv add hume

Then create a client and make a request:

    from hume.client import HumeClient

    client = HumeClient(api_key="YOUR_API_KEY")
    client.empathic_voice.configs.list_configs()

The SDK also exports an async client so that you can make non-blocking calls to our API.

    import asyncio

    from hume.client import AsyncHumeClient

    client = AsyncHumeClient(api_key="YOUR_API_KEY")


    async def main() -> None:
        await client.empathic_voice.configs.list_configs()


    asyncio.run(main())

Writing files with an async stream of bytes can be tricky in Python! aiofiles can simplify this somewhat. For example, you can download your job artifacts like so:

    import asyncio

    import aiofiles

    from hume import AsyncHumeClient

    client = AsyncHumeClient()


    async def main() -> None:
        # Stream the artifact archive to disk chunk by chunk
        async with aiofiles.open("artifacts.zip", mode="wb") as file:
            async for chunk in client.expression_measurement.batch.get_job_artifacts(id="my-job-id"):
                await file.write(chunk)


    asyncio.run(main())

This SDK contains the APIs for Empathic Voice, TTS, and Expression Measurement. Even if you do not plan on using more than one API to start, the SDK provides easy access in case you would like to use additional APIs in the future.

Each API is namespaced accordingly:

    from hume.client import HumeClient

    client = HumeClient(api_key="YOUR_API_KEY")

    client.empathic_voice  # APIs specific to Empathic Voice
    client.tts  # APIs specific to Text-to-speech
    client.expression_measurement  # APIs specific to Expression Measurement

All errors thrown by the SDK will be subclasses of ApiError.
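The practical consequence is that a single `except` clause on the base class catches every more specific error. The sketch below shows this with stand-in classes so it runs without the SDK or network access: this local `ApiError` only mimics the `status_code` and `body` attributes used in this README, and `NotFoundError` and `fetch_predictions` are hypothetical names invented for the illustration.

```python
class ApiError(Exception):
    """Stand-in for the SDK's base error; carries an HTTP status and body."""

    def __init__(self, status_code=None, body=None):
        super().__init__(f"status_code: {status_code}, body: {body}")
        self.status_code = status_code
        self.body = body


class NotFoundError(ApiError):
    """Hypothetical subclass, for illustration only."""


def fetch_predictions(job_id):
    """Hypothetical call that always fails, to exercise the handler."""
    raise NotFoundError(status_code=404, body={"message": f"job {job_id} not found"})


def describe_failure(job_id):
    """Return (status_code, body) if the call fails, else None."""
    try:
        fetch_predictions(job_id)
    except ApiError as e:  # also catches NotFoundError and any other subclass
        return e.status_code, e.body
    return None


if __name__ == "__main__":
    print(describe_failure("my-job-id"))
```

With the SDK itself, the same pattern uses the real ApiError: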

    from hume.client import HumeClient
    from hume.core import ApiError

    client = HumeClient(api_key="YOUR_API_KEY")

    try:
        client.expression_measurement.batch.get_job_predictions(...)
    except ApiError as e:  # Handle all errors
        print(e.status_code)
        print(e.body)

Paginated requests return a SyncPager or AsyncPager, which can be used as generators for the underlying object. For example, list_tools returns a generator over ReturnUserDefinedTool and handles the pagination behind the scenes:

    from hume.client import HumeClient

    client = HumeClient(api_key="YOUR_API_KEY")

    for tool in client.empathic_voice.tools.list_tools():
        print(tool)

You could also iterate page-by-page:

    for page in client.empathic_voice.tools.list_tools().iter_pages():
        print(page.items)

Or manually:

    pager = client.empathic_voice.tools.list_tools()

    # First page
    print(pager.items)

    # Second page
    pager = pager.next_page()
    print(pager.items)

We expose a websocket client for interacting with the EVI API as well as Expression Measurement.

When interacting with these clients, you can use them very similarly to how you'd use the common websockets library:

    import asyncio
    import os

    from hume import AsyncHumeClient, StreamDataModels

    client = AsyncHumeClient(api_key=os.getenv("HUME_API_KEY"))


    async def main() -> None:
        async with client.expression_measurement.stream.connect(
            options={"config": StreamDataModels(...)}
        ) as hume_socket:
            print(await hume_socket.get_job_details())


    asyncio.run(main())

The underlying connection, in this case hume_socket, will support intellisense/autocomplete for the different functions that are available on the socket!

The Hume SDK is instrumented with automatic retries with exponential backoff. A request will be retried as long as the request is deemed retriable and the number of retry attempts has not grown larger than the configured retry limit.

A request is deemed retriable when any of the following HTTP status codes is returned:

Use the max_retries request option to configure this behavior.
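To make the retry policy concrete, here is a rough sketch of a retry loop with exponential backoff. It is not the SDK's implementation: `call_with_retries`, its default values, and the contents of `RETRIABLE_STATUS_CODES` are assumptions chosen for illustration, since the actual list of retriable status codes is not reproduced here.

```python
import random
import time

# Assumed for illustration; consult the SDK documentation for the actual list.
RETRIABLE_STATUS_CODES = {408, 429, 500, 502, 503, 504}


def call_with_retries(send, max_retries=2, base_delay=0.5, sleep=time.sleep):
    """Call send() until it returns a non-retriable status or retries run out.

    send is any zero-argument callable returning an HTTP status code.
    """
    attempt = 0
    while True:
        status = send()
        if status not in RETRIABLE_STATUS_CODES or attempt >= max_retries:
            return status
        # Exponential backoff: base_delay * 2^attempt, plus a little jitter.
        delay = base_delay * (2 ** attempt) + random.uniform(0, 0.1)
        sleep(delay)
        attempt += 1


if __name__ == "__main__":
    # Two retriable failures followed by a success; sleeping is stubbed out.
    responses = iter([503, 429, 200])
    print(call_with_retries(lambda: next(responses), sleep=lambda s: None))
```

With the SDK, none of this needs to be written by hand; only the retry limit is set per request: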

    from hume.client import HumeClient
    from hume.core import RequestOptions

    client = HumeClient(...)

    # Override retries for a specific method
    client.expression_measurement.batch.get_job_predictions(
        ...,
        request_options=RequestOptions(max_retries=5),
    )

By default, requests time out after 60 seconds. You can configure this with a timeout option at the client or request level.

    from hume.client import HumeClient
    from hume.core import RequestOptions

    client = HumeClient(
        # All timeouts are 20 seconds
        timeout=20.0,
    )

    # Override timeout for a specific method
    client.expression_measurement.batch.get_job_predictions(
        ...,
        request_options=RequestOptions(timeout_in_seconds=20),
    )

You can override the httpx client to customize it for your use-case. Some common use-cases include support for proxies and transports.

    import httpx

    from hume.client import HumeClient

    client = HumeClient(
        http_client=httpx.Client(
            # httpx 0.28 removed `proxies=`; on those versions pass `proxy=` instead
            proxies="http://my.test.proxy.example.com",
            transport=httpx.HTTPTransport(local_address="0.0.0.0"),
        ),
    )

While we value open-source contributions to this SDK, this library is generated programmatically.

Additions made directly to this library would have to be moved over to our generation code, otherwise they would be overwritten upon the next generated release. Feel free to open a PR as a proof of concept, but know that we will not be able to merge it as-is. We suggest opening an issue first to discuss with us!

On the other hand, contributions to the README are always very welcome!

Alternative AI tools for hume-python-sdk

Similar Open Source Tools

hash

HASH is a self-building, open-source database which grows, structures and checks itself. With it, we're creating a platform for decision-making, which helps you integrate, understand and use data in a variety of different ways.

DeepPavlov

DeepPavlov is an open-source conversational AI library built on PyTorch. It is designed for the development of production-ready chatbots and complex conversational systems, as well as for research in the area of NLP and dialog systems. The library offers a wide range of models for tasks such as Named Entity Recognition, Intent/Sentence Classification, Question Answering, Sentence Similarity/Ranking, Syntactic Parsing, and more. DeepPavlov also provides embeddings like BERT, ELMo, and FastText for various languages, along with AutoML capabilities and integrations with REST API, Socket API, and Amazon AWS.

chat-ui

A chat interface using open source models, e.g., OpenAssistant or Llama. It is a SvelteKit app and it powers the HuggingChat app on hf.co/chat.

debug-gym

debug-gym is a text-based interactive debugging framework designed for debugging Python programs. It provides an environment where agents can interact with code repositories, use various tools like pdb and grep to investigate and fix bugs, and propose code patches. The framework supports different LLM backends such as OpenAI, Azure OpenAI, and Anthropic. Users can customize tools, manage environment states, and run agents to debug code effectively. debug-gym is modular, extensible, and suitable for interactive debugging tasks in a text-based environment.

langserve

LangServe helps developers deploy `LangChain` runnables and chains as a REST API. This library is integrated with FastAPI and uses pydantic for data validation. In addition, it provides a client that can be used to call into runnables deployed on a server. A JavaScript client is available in LangChain.js.

hordelib

horde-engine is a wrapper around ComfyUI designed to run inference pipelines visually designed in the ComfyUI GUI. It enables users to design inference pipelines in ComfyUI and then call them programmatically, maintaining compatibility with the existing horde implementation. The library provides features for processing Horde payloads, initializing the library, downloading and validating models, and generating images based on input data. It also includes custom nodes for preprocessing and tasks such as face restoration and QR code generation. The project depends on various open source projects and bundles some dependencies within the library itself. Users can design ComfyUI pipelines, convert them to the backend format, and run them using the run_image_pipeline() method in hordelib.comfy.Comfy(). The project is actively developed and tested using git, tox, and a specific model directory structure.

safety-tooling

This repository, safety-tooling, is designed to be shared across various AI Safety projects. It provides an LLM API with a common interface for OpenAI, Anthropic, and Google models. The aim is to facilitate collaboration among AI Safety researchers, especially those with limited software engineering backgrounds, by offering a platform for contributing to a larger codebase. The repo can be used as a git submodule for easy collaboration and updates. It also supports pip installation for convenience. The repository includes features for installation, secrets management, linting, formatting, Redis configuration, testing, dependency management, inference, finetuning, API usage tracking, and various utilities for data processing and experimentation.

paxml

Pax is a framework to configure and run machine learning experiments on top of Jax.

chatgpt-cli

ChatGPT CLI provides a powerful command-line interface for seamless interaction with ChatGPT models via OpenAI and Azure. It features streaming capabilities, extensive configuration options, and supports various modes like streaming, query, and interactive mode. Users can manage thread-based context, sliding window history, and provide custom context from any source. The CLI also offers model and thread listing, advanced configuration options, and supports GPT-4, GPT-3.5-turbo, and Perplexity's models. Installation is available via Homebrew or direct download, and users can configure settings through default values, a config.yaml file, or environment variables.

Hurley-AI

Hurley AI is a next-gen framework for developing intelligent agents through Retrieval-Augmented Generation. It enables easy creation of custom AI assistants and agents, supports various agent types, and includes pre-built tools for domains like finance and legal. Hurley AI integrates with LLM inference services and provides observability with Arize Phoenix. Users can create Hurley RAG tools with a single line of code and customize agents with specific instructions. The tool also offers various helper functions to connect with Hurley RAG and search tools, along with pre-built tools for tasks like summarizing text, rephrasing text, understanding memecoins, and querying databases.

verifiers

Verifiers is a library of modular components for creating RL environments and training LLM agents. It includes an async GRPO implementation built around the `transformers` Trainer, is supported by `prime-rl` for large-scale FSDP training, and can easily be integrated into any RL framework which exposes an OpenAI-compatible inference client. The library provides tools for creating and evaluating RL environments, training LLM agents, and leveraging OpenAI-compatible models for various tasks. Verifiers aims to be a reliable toolkit for building on top of, minimizing fork proliferation in the RL infrastructure ecosystem.

agenticSeek

AgenticSeek is a voice-enabled AI assistant powered by DeepSeek R1 agents, offering a fully local alternative to cloud-based AI services. It allows users to interact with their filesystem, code in multiple languages, and perform various tasks autonomously. The tool is equipped with memory to remember user preferences and past conversations, and it can divide tasks among multiple agents for efficient execution. AgenticSeek prioritizes privacy by running entirely on the user's hardware without sending data to the cloud.

mods

AI for the command line, built for pipelines. LLM-based AI is really good at interpreting the output of commands and returning the results in CLI-friendly text formats like Markdown. Mods is a simple tool that makes it super easy to use AI on the command line and in your pipelines. Mods works with OpenAI, Groq, Azure OpenAI, and LocalAI. To get started, install Mods and check out some of the examples below. Since Mods has built-in Markdown formatting, you may also want to grab Glow to give the output some _pizzazz_.

php-ai-client

A provider agnostic PHP AI client SDK to communicate with any generative AI models of various capabilities using a uniform API. It is a PHP SDK that can be installed as a Composer package and used in any PHP project, not limited to WordPress. The project aims to bridge the gap between AI models and PHP applications, providing flexibility and ease of communication with AI providers.

magentic

Easily integrate Large Language Models into your Python code. Simply use the `@prompt` and `@chatprompt` decorators to create functions that return structured output from the LLM. Mix LLM queries and function calling with regular Python code to create complex logic.

For similar tasks

Fay

Fay is an open-source digital human framework that offers different versions for various purposes. The full sales edition ('带货完整版') is suitable for online and offline salespersons. The full assistant edition ('助理完整版') serves as a human-machine interactive digital assistant that can also control devices upon command. The agent edition ('agent版') is designed to be an autonomous agent capable of making decisions and contacting its owner. The framework provides updates and improvements across its different versions, including features like emotion analysis integration, model optimizations, and compatibility enhancements. Users can access detailed documentation for each version through the provided links.

deid-examples

This repository contains examples demonstrating how to use the Private AI REST API for identifying and replacing Personally Identifiable Information (PII) in text. The API supports over 50 entity types, such as Credit Card information and Social Security numbers, across 50 languages. Users can access documentation and the API reference on Private AI's website. The examples include common API call scenarios and use cases in both Python and JavaScript, with additional content related to PrivateGPT for secure work with Language Models (LLMs).

web-ui

WebUI is a user-friendly tool built on Gradio that enhances website accessibility for AI agents. It supports various Large Language Models (LLMs) and allows custom browser integration for seamless interaction. The tool eliminates the need for re-login and authentication challenges, offering high-definition screen recording capabilities.

git-mcp

GitMCP is a free, open-source service that transforms any GitHub project into a remote Model Context Protocol (MCP) endpoint, allowing AI assistants to access project documentation effortlessly. It empowers AI with semantic search capabilities, requires zero setup, is completely free and private, and serves as a bridge between GitHub repositories and AI assistants.

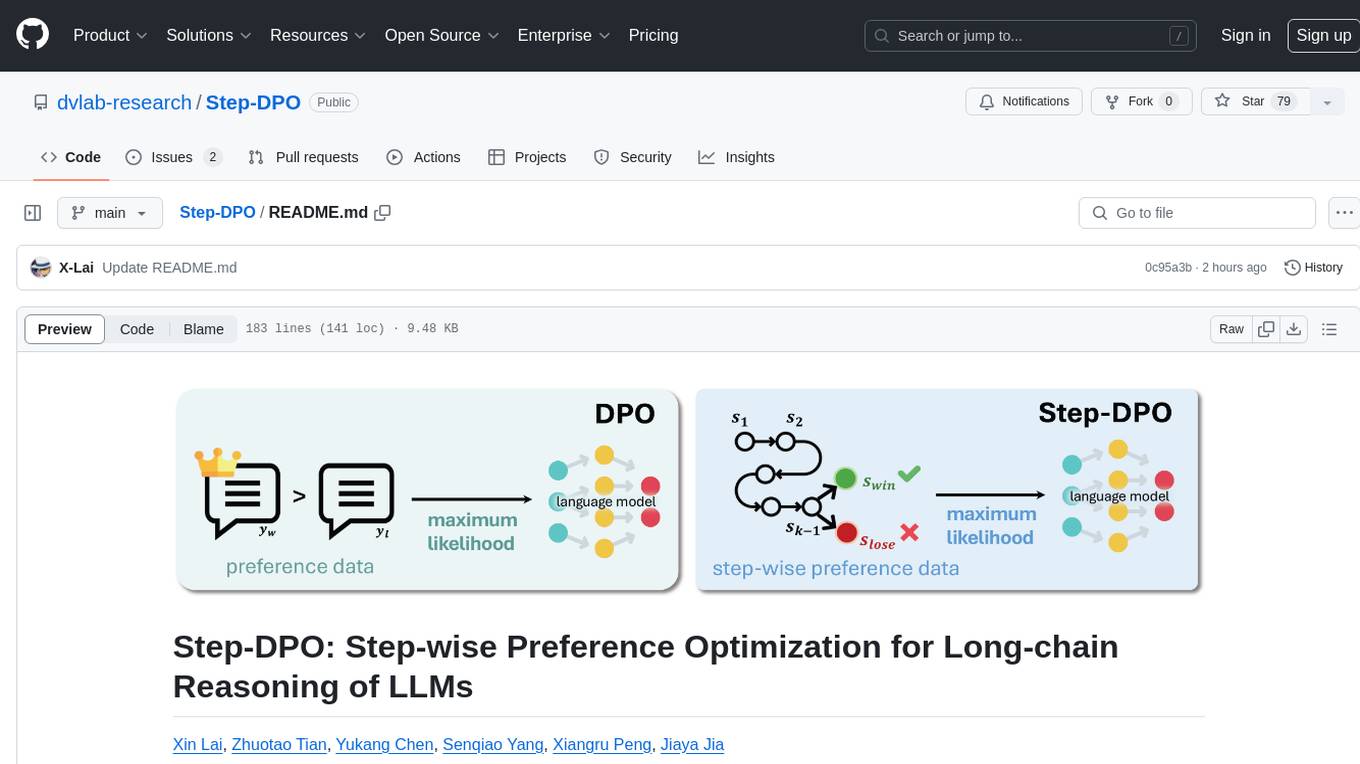

Step-DPO

Step-DPO is a method for enhancing long-chain reasoning ability of LLMs with a data construction pipeline creating a high-quality dataset. It significantly improves performance on math and GSM8K tasks with minimal data and training steps. The tool fine-tunes pre-trained models like Qwen2-7B-Instruct with Step-DPO, achieving superior results compared to other models. It provides scripts for training, evaluation, and deployment, along with examples and acknowledgements.

mimir

MIMIR is a Python package designed for measuring memorization in Large Language Models (LLMs). It provides functionalities for conducting experiments related to membership inference attacks on LLMs. The package includes implementations of various attacks such as Likelihood, Reference-based, Zlib Entropy, Neighborhood, Min-K% Prob, Min-K%++, Gradient Norm, and allows users to extend it by adding their own datasets and attacks.

TriForce

TriForce is a training-free tool designed to accelerate long sequence generation. It supports long-context Llama models and offers both on-chip and offloading capabilities. Users can achieve a 2.2x speedup on a single A100 GPU. TriForce also provides options for offloading with tensor parallelism or without it, catering to different hardware configurations. The tool includes a baseline for comparison and is optimized for performance on RTX 4090 GPUs. Users can cite the associated paper if they find TriForce useful for their projects.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models, from images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features:

- Self-contained, with no need for a DBMS or cloud service.
- OpenAPI interface, easy to integrate with existing infrastructure (e.g., Cloud IDE).
- Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.