we0

we0 is an AI code editor for developers and product managers, similar to v0, bolt.new, and Lovable.

Stars: 391

We0 is a web project generation tool that offers browser-based debugging, high-fidelity design restoration, importing historical projects, integration with WeChat Mini Program Developer Tools, and multi-platform support. It supports code generation, design-to-code conversion, open-source projects, WeChat Mini Program Tools preview, existing projects, and Deepseek. The tool uses pnpm as the package management tool and requires Node.js version 18.20. Users can install and configure the tool for web development and utilize quick start methods for building the web editor. Additionally, instructions are provided for installing and using the client version on Mac, along with troubleshooting tips. For any questions or support, users can contact [email protected] or join the WeChat group chat.

README:

Currently, Cursor, v0, and Bolt.new have impressive performance in web project generation. The We0 project has the following features:

Supports browser-based debugging: Built-in WebContainer environment allows you to run a terminal in the browser, install and run npm and tool libraries.

High-fidelity design restoration: Utilizes cutting-edge D2C technology to achieve 90% design restoration.

Supports importing historical projects: Unlike Bolt.new, which runs in a browser environment, We0 can directly open existing historical projects for secondary editing and debugging.

Integrates with WeChat Mini Program Developer Tools: Allows direct preview and debugging by clicking to launch the WeChat Developer Tools.

Multi-platform support: Client downloads are available for Windows and Mac, and a web container version is also offered, so you can choose the client that fits your usage scenario.

| Feature | we0 | v0 | bolt.new |
|---|---|---|---|
| Code generation and preview | ✅ | ✅ | ✅ |
| Design-to-code conversion | ✅ | ✅ | ❌ |
| Open-source | ✅ | ❌ | ✅ |
| Supports WeChat Mini Program Tools preview | ✅ | ❌ | ❌ |
| Supports existing projects | ✅ | ❌ | ❌ |
| Supports Deepseek | ✅ | ❌ | ❌ |

This project uses pnpm as its package manager. Ensure your Node.js version is 18.20.
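The requirement above can be checked before installing anything. The following is a minimal sketch, not part of the project: the function names `node_version_ok` and `preflight` are hypothetical, and reading "18.20" as "any 18.20.x patch release" is an assumption.

```shell
#!/bin/sh
# Sketch: succeed when a version string such as "v18.20.4" is on the 18.20 line.
# Assumption: "18.20" means any 18.20.x patch release.
node_version_ok() {
  case "$1" in
    v18.20|v18.20.*) return 0 ;;
    *) return 1 ;;
  esac
}

# Hypothetical preflight: report the first problem found, or print "ok".
preflight() {
  if ! command -v node >/dev/null 2>&1; then
    echo "node not found"
    return 1
  fi
  if ! node_version_ok "$(node --version)"; then
    echo "expected Node.js 18.20.x, found $(node --version)"
    return 1
  fi
  if ! command -v pnpm >/dev/null 2>&1; then
    echo "pnpm not found; install it with: npm install pnpm -g"
    return 1
  fi
  echo "ok"
}
```

After sourcing the snippet, running `preflight` from the repository root prints `ok` when both tools are in place.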

- Install pnpm

npm install pnpm -g

- Install dependencies

# Client

cd apps/we-dev-client

pnpm install

# Server

cd apps/we-dev-next

pnpm install

- Configure environment variables

Rename .env.example to .env and fill in the corresponding content.

Client apps/we-dev-client/.env

# SERVER_ADDRESS [MUST*] (eg: http://localhost:3000)

APP_BASE_URL=

# JWT_SECRET [Optional]

JWT_SECRET=

Server apps/we-dev-next/.env

# Third-Party Model URL [MUST*] (eg: https://api.openai.com/v1)

THIRD_API_URL=

# Third-Party Model API-Key [MUST*] (eg:sk-xxxx)

THIRD_API_KEY=

# JWT_SECRET [Optional]

JWT_SECRET=
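Since several of the variables above are marked [MUST*], it can help to confirm they are filled in before starting either app. The sketch below is illustrative only: `missing_env_keys` is a hypothetical helper, and it assumes plain `KEY=value` lines without quoting or `export` prefixes.

```shell
#!/bin/sh
# Sketch: print every required key that is absent or has an empty value in an env file.
# Usage: missing_env_keys FILE KEY [KEY...]
missing_env_keys() {
  env_file=$1
  shift
  for key in "$@"; do
    # Take the last assignment of the key and keep everything after the first "=".
    value=$(grep -E "^${key}=" "$env_file" 2>/dev/null | tail -n 1 | cut -d= -f2- || true)
    if [ -z "$value" ]; then
      echo "$key"
    fi
  done
}
```

For example, `missing_env_keys apps/we-dev-client/.env APP_BASE_URL` and `missing_env_keys apps/we-dev-next/.env THIRD_API_URL THIRD_API_KEY` print nothing when the required values are set.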

chmod +x scripts/wedev-build.sh

./scripts/wedev-build.sh

Quick Start Method

Quick start is supported from the root directory.

"dev:next": "cd apps/we-dev-next && pnpm install && pnpm dev",

"dev:client": "cd apps/we-dev-client && pnpm dev",

How to Use the Client Version?

- Mac version

- Go to https://we0.ai/.

- Select Download for Mac to download the installer.

- You might encounter an issue when opening the installer. If so, open Launchpad, select Terminal, and enter:

sudo spctl --master-disable

then press Enter, enter your password (the password input is invisible), and press Enter again.

Next, open System Preferences, select Security & Privacy, then General, and you will see Anywhere selected. Then open the file to install.

- If it still shows "Damaged and cannot be opened. You should move it to the Trash", don't worry. Use the following method:

Copy and paste the command in the terminal (note the space at the end):

sudo xattr -r -d com.apple.quarantine

Do not press Enter yet!

Then open Finder, go to the Applications directory, find the software icon, and drag it into the terminal window. You will get a combination like this (as shown in the image):

sudo xattr -r -d com.apple.quarantine /Applications/WebStrom.app

Return to the terminal window, press Enter, and enter your system password to proceed.
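As an alternative to dragging the icon into the terminal, the full command can be assembled from a path you type yourself. This is only a sketch: `quarantine_cmd` is a hypothetical helper that prints the command rather than running it, and it single-quotes the path so that names containing spaces or apostrophes survive copy and paste.

```shell
#!/bin/sh
# Sketch: print (do not run) the quarantine-removal command for a given app path.
quarantine_cmd() {
  app_path=$1
  # Escape embedded single quotes as '\'' so the quoted path stays one argument.
  escaped=$(printf '%s' "$app_path" | sed "s/'/'\\\\''/g")
  printf "sudo xattr -r -d com.apple.quarantine '%s'\n" "$escaped"
}
```

Calling `quarantine_cmd "/Applications/My App.app"` prints a command with the path wrapped in single quotes; review it, then paste it into the terminal and continue with the password prompt as described above.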

- If Electron reports an error on the second run, delete the client workspace.

- If Electron shows no preview on startup, run pnpm run electron:dev.

Send email to [email protected]

If you cannot join the WeChat group, you can add


Similar Open Source Tools


ChatGPT-desktop

ChatGPT Desktop Application is a multi-platform tool that provides a powerful AI wrapper for generating text. It offers features like text-to-speech, exporting chat history in various formats, automatic application upgrades, system tray hover window, support for slash commands, customization of global shortcuts, and pop-up search. The application is built using Tauri and aims to enhance user experience by simplifying text generation tasks. It is available for Mac, Windows, and Linux, and is designed for personal learning and research purposes.

vibe-kanban

Vibe Kanban is a tool designed to streamline the process of planning, reviewing, and orchestrating tasks for human engineers working with AI coding agents. It allows users to easily switch between different coding agents, orchestrate their execution, review work, start dev servers, and track task statuses. The tool centralizes the configuration of coding agent MCP configs, providing a comprehensive solution for managing coding tasks efficiently.

glide

Glide is a cloud-native LLM gateway that provides a unified REST API for accessing various large language models (LLMs) from different providers. It handles LLMOps tasks such as model failover, caching, key management, and more, making it easy to integrate LLMs into applications. Glide supports popular LLM providers like OpenAI, Anthropic, Azure OpenAI, AWS Bedrock (Titan), Cohere, Google Gemini, OctoML, and Ollama. It offers high availability, performance, and observability, and provides SDKs for Python and NodeJS to simplify integration.

verifywise

VerifyWise is an open-source AI governance platform designed to help businesses harness the power of AI safely and responsibly. The platform ensures compliance and robust AI management without compromising on security. It offers additional products like MaskWise for data redaction, EvalWise for AI model evaluation, and FlagWise for security threat monitoring. VerifyWise simplifies AI governance for organizations, aiding in risk management, regulatory compliance, and promoting responsible AI practices. It features options for on-premises or private cloud hosting, open-source with AGPLv3 license, AI-generated answers for compliance audits, source code transparency, Docker deployment, user registration, role-based access control, and various AI governance tools like risk management, bias & fairness checks, evidence center, AI trust center, and more.

mcp

Enable AI agents to operate reliably within real workflows. This MCP is monday.com's open framework for connecting agents into your work OS - giving them secure access to structured data, tools to take action, and the context needed to make smart decisions. The repository provides a comprehensive set of tools for AI agent developers who want to integrate with monday.com, including a plug-and-play server implementation for the Model Context Protocol (MCP) and a powerful set of tools for building AI agents that interact with the monday.com API. Users can choose between a hosted MCP service for fast and reliable connection or run the MCP locally for customization and offline development. The repository also offers advanced tools like Dynamic API Tools for full access to the monday.com GraphQL API, enabling complex reports, batch operations, and deep integration with monday.com's features.

tts-generation-webui

TTS Generation WebUI is a comprehensive tool that provides a user-friendly interface for text-to-speech and voice cloning tasks. It integrates various AI models such as Bark, MusicGen, AudioGen, Tortoise, RVC, Vocos, Demucs, SeamlessM4T, and MAGNeT. The tool offers one-click installers, Google Colab demo, videos for guidance, and extra voices for Bark. Users can generate audio outputs, manage models, caches, and system space for AI projects. The project is open-source and emphasizes ethical and responsible use of AI technology.

keras-llm-robot

The Keras-llm-robot Web UI project is an open-source tool designed for offline deployment and testing of various open-source models from the Hugging Face website. It allows users to combine multiple models through configuration to achieve functionalities like multimodal, RAG, Agent, and more. The project consists of three main interfaces: chat interface for language models, configuration interface for loading models, and tools & agent interface for auxiliary models. Users can interact with the language model through text, voice, and image inputs, and the tool supports features like model loading, quantization, fine-tuning, role-playing, code interpretation, speech recognition, image recognition, network search engine, and function calling.

chatgpt-lite

ChatGPT Lite is a lightweight web interface developed using Next.js and the OpenAI Chat API. It allows users to deploy a custom ChatGPT interface supporting markdown, prompt storage, and multi-person chats. Users can create private web-based ChatGPT instances for friends without sharing API keys. The codebase is clear and expandable, making it an ideal starting point for AI projects.

RWKV-Runner

RWKV Runner is a project designed to simplify the usage of large language models by automating various processes. It provides a lightweight executable program and is compatible with the OpenAI API. Users can deploy the backend on a server and use the program as a client. The project offers features like model management, VRAM configurations, user-friendly chat interface, WebUI option, parameter configuration, model conversion tool, download management, LoRA Finetune, and multilingual localization. It can be used for various tasks such as chat, completion, composition, and model inspection.

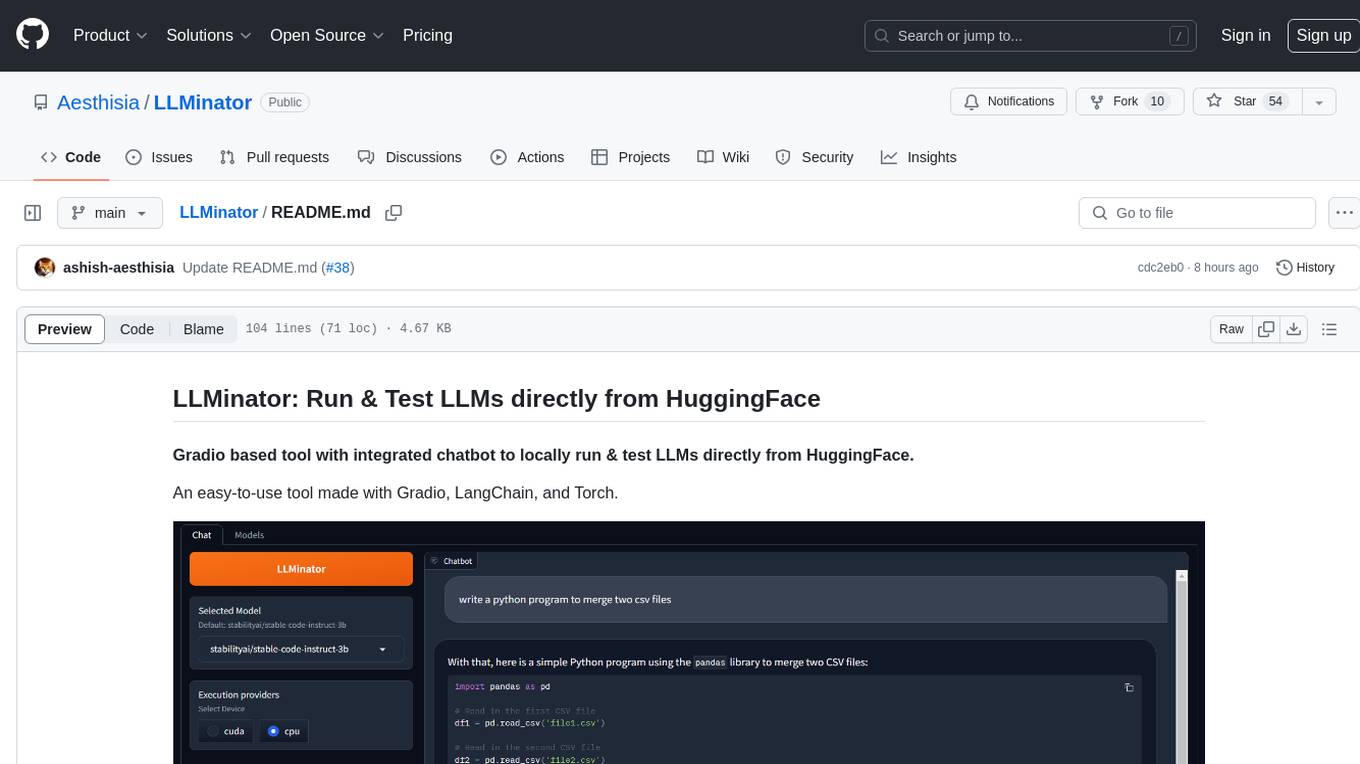

LLMinator

LLMinator is a Gradio-based tool with an integrated chatbot designed to locally run and test Large Language Models (LLMs) directly from HuggingFace. It provides an easy-to-use interface made with Gradio, LangChain, and Torch, offering features such as a context-aware streaming chatbot, built-in code syntax highlighting, loading any LLM repo from HuggingFace, support for both CPU and CUDA modes, LLM inference with llama.cpp, and model conversion capabilities.

flowgen

FlowGen is a tool built for AutoGen, a great agent framework from Microsoft and a lot of contributors. It provides intuitive visual tools that streamline the construction and oversight of complex agent-based workflows, simplifying the process for creators and developers. Users can create Autoflows, chat with agents, and share flow templates. The tool is fully dockerized and supports deployment on Railway.app. Contributions to the project are welcome, and the platform uses semantic-release for versioning and releases.

page-assist

Page Assist is an open-source Chrome Extension that provides a Sidebar and Web UI for your Local AI model. It allows you to interact with your model from any webpage.

dev3000

dev3000 captures your web app's complete development timeline including server logs, browser events, console messages, network requests, and automatic screenshots in a unified, timestamped feed for AI debugging. It creates a comprehensive log of your development session that AI assistants can easily understand, monitoring your app in a real browser and capturing server logs, console output, browser console messages and errors, network requests and responses, and automatic screenshots on navigation, errors, and key events. Logs are saved with timestamps and rotated to keep the 10 most recent per project, with the current session symlinked for easy access. The tool integrates with AI assistants for instant debugging and provides advanced querying options through the MCP server.

mobile-use

Mobile-use is an open-source AI agent that controls Android or iOS devices using natural language. It understands commands to perform tasks like sending messages and navigating apps. Features include natural language control, UI-aware automation, data scraping, and extensibility. Users can automate their mobile experience by setting up environment variables, customizing LLM configurations, and launching the tool via Docker or manually for development. The tool supports physical Android phones, Android simulators, and iOS simulators. Contributions are welcome, and the project is licensed under MIT.

openlit

OpenLIT is an OpenTelemetry-native GenAI and LLM Application Observability tool. It's designed to make the integration process of observability into GenAI projects as easy as pie – literally, with just **a single line of code**. Whether you're working with popular LLM Libraries such as OpenAI and HuggingFace or leveraging vector databases like ChromaDB, OpenLIT ensures your applications are monitored seamlessly, providing critical insights to improve performance and reliability.

For similar tasks

sdxl-lightning-demo-app

This repository contains a demo application showcasing the usage of the SDXL Lightning API by fal.ai. The application also demonstrates the functionality of the fal.realtime client. To get started, users need to have a Fal AI API key for model access. The setup involves adding the API key to the .env.local file, installing dependencies using 'npm install', and running the application with 'npm run dev'.


AgentConnect

AgentConnect is an open-source implementation of the Agent Network Protocol (ANP) aiming to define how agents connect with each other and build an open, secure, and efficient collaboration network for billions of agents. It addresses challenges like interconnectivity, native interfaces, and efficient collaboration. The architecture includes authentication, end-to-end encryption modules, meta-protocol module, and application layer protocol integration framework. AgentConnect focuses on performance and multi-platform support, with plans to rewrite core components in Rust and support mobile platforms and browsers. The project aims to establish ANP as an industry standard and form an ANP Standardization Committee. Installation is done via 'pip install agent-connect' and demos can be run after cloning the repository. Features include decentralized authentication based on did:wba and HTTP, and meta-protocol negotiation examples.

AgentConnect

AgentConnect is an open-source implementation of the Agent Network Protocol (ANP) aiming to define how agents connect with each other and build an open, secure, and efficient collaboration network for billions of agents. It addresses challenges like interconnectivity, native interfaces, and efficient collaboration by providing authentication, end-to-end encryption, meta-protocol handling, and application layer protocol integration. The project focuses on performance and multi-platform support, with plans to rewrite core components in Rust and support Mac, Linux, Windows, mobile platforms, and browsers. AgentConnect aims to establish ANP as an industry standard through protocol development and forming a standardization committee.

For similar jobs

air-light

Air-light is a minimalist WordPress starter theme designed to be an ultra minimal starting point for a WordPress project. It is built to be very straightforward, backwards compatible, front-end developer friendly and modular by its structure. Air-light is free of weird "app-like" folder structures or odd syntaxes that nobody else uses. It loves WordPress as it was and as it is.

AirPower4T

AirPower4T is a development base library based on Vue3 TypeScript Element Plus Vite, using decorators, object-oriented, Hook and other front-end development methods. It provides many common components and some feedback components commonly used in background management systems, and provides a lot of enums and decorators.

Juggle

Juggle is a low-code tool for interface orchestration, which can quickly orchestrate simple APIs into a complex interface. The orchestrated interface can be directly used by the front end, greatly improving development efficiency and reducing development costs.

enterprise-commerce

Enterprise Commerce is a Next.js commerce starter that helps you launch your high-performance Shopify storefront in minutes, not weeks. It leverages the power of Vector Search and AI to deliver a superior online shopping experience without the development headaches.

ai2html

ai2html is an open-source script for Adobe Illustrator that converts your Illustrator documents into html and css.

nlux

nlux is an open-source Javascript and React JS library that makes it super simple to integrate powerful large language models (LLMs) like ChatGPT into your web app or website. With just a few lines of code, you can add conversational AI capabilities and interact with your favourite LLM.

ui

Leafer UI is a colorful UI drawing framework developed based on Leafer, which can be used to combine AI drawing and generate interfaces. It provides commonly used UI drawing components and out-of-the-box functions, which is convenient for data exchange with products such as Figma and Sketch, and provides unified and rich interactive events for cross-platform development, such as drag, rotate, and zoom gestures. 1.0.0-rc.21 has been released 🎉🎉🎉, check the changelog. At present, the product has gradually stabilized, and the official version is coming soon. Thanks to all the friends who participated~ If you want to start using it right away, please check the quick installation. If you have any questions or suggestions, you can submit them here or join the technical exchange group. If you only need drawing functions, the lighter leafer-draw (46KB min+gzip) is recommended. 🌟 Remember to go to GitHub / Gitee to light up your little stars ✨ ✨ ✨