moly-ai

Moly AI: A local + cloud AI LLM multi-platform GUI app in pure Rust

Stars: 397

Moly is an AI LLM client written in Rust that showcases the capabilities of the Makepad UI toolkit and Project Robius. It supports various AI providers, including OpenAI-compatible providers, Moly Server for local LLM exploration, and MoFa Servers for building AI agents. Users can download pre-built releases for different platforms and contribute to extending the list of supported providers. Moly can be used for tasks like chat completions, AI agent interactions, and custom client creation.

README:

Moly: a Rust AI LLM client built atop Robius

Moly is an AI LLM client written in Rust that demonstrates the power of the Makepad UI toolkit and Project Robius, a framework for multi-platform application development in Rust.

⚠️ Moly is in early development. Please file an issue if you encounter bugs or unexpected results.

https://github.com/user-attachments/assets/bc50f75d-c82a-49c4-8faa-363afff198a1

Want to try Moly without building it from source? You can download the latest stable pre-built releases of Moly.

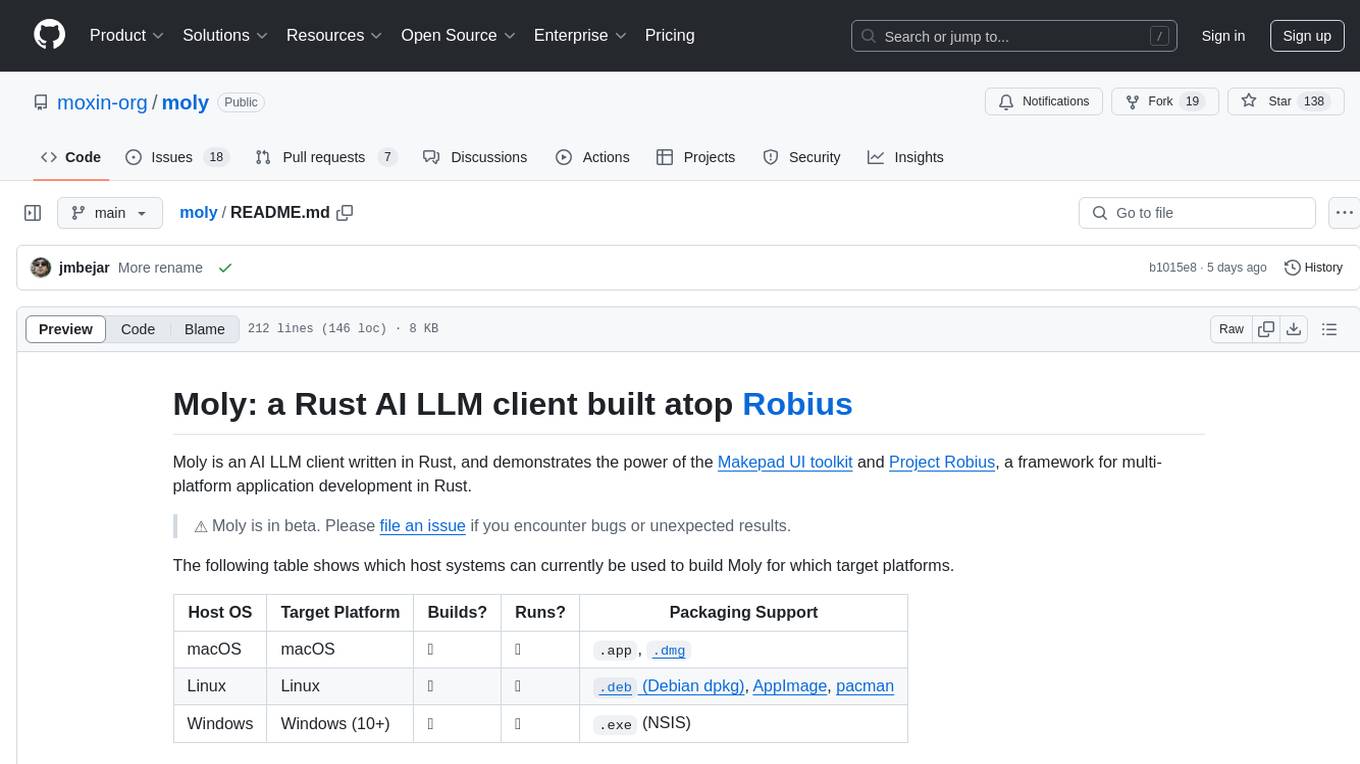

The following table shows which host systems can currently be used to build Moly for which target platforms.

| Host OS | Target Platform | Builds? | Runs? | Packaging Support |
|---|---|---|---|---|
| macOS | macOS | ✅ | ✅ | .app, .dmg |
| Linux | Linux | ✅ | ✅ | .deb (Debian dpkg), AppImage, pacman |
| Windows | Windows (10+) | ✅ | ✅ | .exe (NSIS) |
| Any | Web | ✅ | ✅ | N/A |
| Any | Android | ✅ | ✅ | .apk |
| macOS | iOS | ✅ | ✅ | Beta testing via TestFlight |

The Moly app supports different types of AI providers:

- OpenAI-compatible AI providers: configured through the Providers Dashboard.
  - Support for other clients will be added to MolyKit. To create your own custom clients, check out the MolyKit documentation.
  - If you want to contribute providers, or extend the list of supported models for a given provider, see instructions here.
- Moly Server: a local LLM backend that allows exploring, downloading, and running OSS LLMs locally. For usage and installation, see instructions here.
- MoFa Servers: MoFa is a framework for building AI agents. Using MoFa, AI agents can be constructed via templates and then exposed via a Dora server that is OpenAI-compatible. MoFa servers can be added to the application through the Providers Dashboard. See instructions here.

Moly Server is a local HTTP server which provides capabilities for searching, downloading, and running local LLMs over an OpenAI-compatible API. While not required in order to use Moly, it can be run alongside the main Moly application for an integrated, local experience.
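Because the server speaks an OpenAI-compatible API, a client can in principle discover the available models with a plain HTTP request. The following is a minimal sketch, not Moly's own client code: it assumes the server exposes the conventional OpenAI-style `models` listing, and the `BASE_URL` host and port are hypothetical placeholders that you would replace with the address your server reports.

```python
import json
import urllib.request

# Hypothetical address (assumption); use the host/port your Moly Server reports.
BASE_URL = "http://localhost:8765/v1"


def models_url(base_url: str) -> str:
    """Join the base URL and the OpenAI-style `models` path without doubling slashes."""
    return base_url.rstrip("/") + "/models"


def model_ids(response_body: str) -> list[str]:
    """Extract model ids from an OpenAI-style `{"data": [{"id": ...}]}` listing."""
    payload = json.loads(response_body)
    return [entry["id"] for entry in payload.get("data", [])]


def fetch_model_ids(base_url: str = BASE_URL, timeout: float = 5.0) -> list[str]:
    """Query the server; raises urllib.error.URLError if nothing is listening."""
    with urllib.request.urlopen(models_url(base_url), timeout=timeout) as resp:
        return model_ids(resp.read().decode("utf-8"))
```

Calling `fetch_model_ids()` while the server is running should return the ids it advertises, provided the listing endpoint is implemented as assumed here.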

To get started, simply download and extract the latest version for your platform from the server releases page and run the executable in a command line from inside the directory.

Alternatively, to compile it from source, follow the setup guide and then run:

cd moly-local/

cargo run -p moly-local

- Obtain the source code for this repository:

git clone https://github.com/moly-ai/moly-ai.git

- Run:

cargo run --release

[!IMPORTANT] If your CPU does not support AVX512, then you should append the `--noavx` option onto the above command.

To build Moly on Linux, you must install the following dependencies: `openssl`, `clang`/`libclang`, `binfmt`, `Xcursor`/`X11`, `asound`/`pulse`. On a Debian-like Linux distro (e.g., Ubuntu), run the following:

sudo apt-get update

sudo apt-get install libssl-dev pkg-config llvm clang libclang-dev binfmt-support libxcursor-dev libx11-dev libasound2-dev libpulse-dev libwayland-dev libxkbcommon-dev

Then use cargo to build and run Moly:

cd moly

cargo run --release

To build and run Moly for the web:

- Install Rust and cargo-makepad.

- Obtain the source code for this repository:

git clone https://github.com/moly-ai/moly-ai.git

- Run and serve the Moly app:

cargo makepad wasm --bindgen run -p moly --release

[!NOTE] If you want to deploy it, we recommend optimizing for size by building it like this:

cargo makepad wasm --strip --brotli --bindgen build -p moly --profile=small

Note: we already have pre-built releases of Moly available for download.

Install cargo-packager:

rustup update stable ## Rust version 1.79 or higher is required

cargo +stable install --force --locked cargo-packager

For posterity, these instructions have been tested on cargo-packager version 0.10.1, which requires Rust v1.79.
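The minimum Rust version stated above can be checked before installing cargo-packager. This is a small sketch under the assumption that `rustc --version` prints a banner starting with `rustc <major>.<minor>.<patch>`; the helper names are illustrative and not part of Moly or cargo-packager.

```python
import re

MIN_RUST = (1, 79)  # minimum (major, minor) stated in the instructions above


def parse_rustc_version(output: str) -> tuple[int, int, int]:
    """Parse a `rustc --version` banner such as 'rustc 1.79.0 (...)'."""
    match = re.search(r"rustc (\d+)\.(\d+)\.(\d+)", output)
    if match is None:
        raise ValueError(f"unrecognized rustc version output: {output!r}")
    major, minor, patch = (int(part) for part in match.groups())
    return major, minor, patch


def meets_minimum(output: str, minimum: tuple[int, int] = MIN_RUST) -> bool:
    """True when the parsed (major, minor) is at least the required minimum."""
    return parse_rustc_version(output)[:2] >= minimum
```

In practice the banner would come from something like `subprocess.run(["rustc", "--version"], capture_output=True, text=True).stdout`, passed to `meets_minimum`.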

On a Debian-based Linux distribution (e.g., Ubuntu), you can generate a .deb Debian package, an AppImage, and a pacman installation package.

[!IMPORTANT] You can only generate a `.deb` Debian package on a Debian-based Linux distribution, as `dpkg` is needed.

[!NOTE] The `pacman` package has not yet been tested.

Ensure you are in the root moly directory, and then you can use cargo packager to generate all three package types at once:

cargo packager --release --verbose ## --verbose is optional

To install the Moly app from the `.deb` package on a Debian-based Linux distribution (e.g., Ubuntu), run:

cd dist/

sudo apt install ./moly_*.deb ## Replace * with version/arch. The leading "./" part is required

We recommend using `apt install` to install the `.deb` file instead of `dpkg -i`, because `apt` will auto-install all of Moly's required dependencies, whereas `dpkg` will require you to install them manually.
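If you script the installation, the `*` in the file name has to be resolved to the actual package first. The sketch below shows one way to do that; the helper names are hypothetical, and it assumes the package sits directly in `dist/` with a name matching `moly_*.deb` as described above.

```python
from pathlib import Path


def find_deb(dist_dir: Path) -> Path:
    """Return the most recently modified `moly_*.deb` in `dist_dir`."""
    candidates = sorted(dist_dir.glob("moly_*.deb"), key=lambda p: p.stat().st_mtime)
    if not candidates:
        raise FileNotFoundError(f"no moly_*.deb found in {dist_dir}")
    return candidates[-1]


def apt_install_command(deb: Path) -> list[str]:
    """Build the install command, keeping the required leading './' on the file name."""
    return ["sudo", "apt", "install", f"./{deb.name}"]
```

The resulting command is meant to be run with `dist/` as the working directory, for example via `subprocess.run(cmd, cwd="dist", check=True)`.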

To run the AppImage bundle, simply set the file as executable and then run it:

cd dist/

chmod +x moly_*.AppImage ## Replace * with version/arch

./moly_*.AppImage ## Replace * with version/arch

Packaging for Windows can only be run on an actual Windows machine, due to platform restrictions.

First, install the necessary build tools if you haven't already (e.g., Visual Studio Build Tools, LLVM as mentioned in some setups).

Ensure you are in the root moly directory, and then you can use cargo packager to generate a setup.exe file using NSIS:

cargo packager --release --formats nsis --verbose ## --verbose is optional

After the command completes, you should see a Windows installer called `moly_*_x64-setup.exe` (replace * with version) in the `dist/` directory.

Double-click that file to install Moly on your machine, and then run it as you would a regular application.

Packaging for macOS can only be run on an actual macOS machine, due to platform restrictions.

Ensure you are in the root moly directory, and then you can use cargo packager to generate an .app bundle and a .dmg disk image:

cargo packager --release --verbose ## --verbose is optional

[!IMPORTANT] You will see a .dmg window pop up. Please leave it alone; it will auto-close once the packaging procedure has completed.

[!TIP] If you receive the following error:

ERROR cargo_packager::cli: Error running create-dmg script: File exists (os error 17)

then open Finder and unmount any Moly-related disk images, then try the above `cargo packager` command again.

[!TIP] If you receive an error like so:

Creating disk image...
hdiutil: create failed - Operation not permitted
could not access /Volumes/Moly/Moly.app - Operation not permitted

then you need to grant "App Management" permissions to the app in which you ran the `cargo packager` command, e.g., Terminal, Visual Studio Code, etc. To do this, open System Preferences → Privacy & Security → App Management, and then click the toggle switch next to the relevant app to enable that permission. Then, try the above `cargo packager` command again.

After the command completes, you should see both the Moly.app and the .dmg in the dist/ directory.

You can immediately double-click the Moly.app bundle to run it, or you can double-click the .dmg file to install.

Note that the `.dmg` is what should be distributed for installation on other machines, not the `.app`.

If you'd like to modify the .dmg background, here is the Google Drawings file used to generate the macOS .dmg background image.

MoFa is a software framework for building AI agents. Moly supports connecting to MoFa servers to interact with AI agents in the same way it does with local or remote LLMs.

To run Moly with a local MoFa server, you can follow these steps:

https://github.com/dora-rs/dora?tab=readme-ov-file#installation

Requires python ^3.10

git clone https://github.com/moly-ai/mofa.git

Install the required Python libraries, mainly the mofa library itself:

cd python && pip install -r requirements.txt && pip install -e .

pip install dora-rs

Navigate to the folder of the Dora node that implements the HTTP server:

cd examples/moly_client

Run MoFa with:

dora up

dora build dataflow.yml

dora start dataflow.yml

If `dora start` reports an error, you can restart Dora:

dora destroy && dora up

At this point the server should be up. You can verify it with a chat completion request:

curl http://localhost:8000/v1/chat/completions \

-v -H "Content-Type: application/json" \

-d '{

"model": "moly-chat",

"messages": [

{ "role": "system", "content": "Use positive language and offer helpful solutions to their problems." },

{ "role": "user", "content": "What is the currency used in Spain?" }

],

"temperature": 0.7,

"stream": true

}'

This should return a JSON response with the completion.
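Since the request sets `"stream": true`, an OpenAI-compatible server will typically deliver the completion as a sequence of `data:` event lines rather than one JSON document. The sketch below shows how such a stream could be reassembled into text. It assumes the conventional OpenAI chunk shape (`choices[].delta.content`, terminated by `data: [DONE]`); whether the MoFa server emits exactly this shape is an assumption, and the sample lines are illustrative rather than captured output.

```python
import json
from typing import Iterable


def collect_streamed_text(lines: Iterable[str]) -> str:
    """Concatenate `delta.content` pieces from OpenAI-style `data:` event lines."""
    pieces = []
    for raw in lines:
        line = raw.strip()
        if not line.startswith("data:"):
            continue  # skip blank separators and any non-data fields
        data = line[len("data:"):].strip()
        if data == "[DONE]":
            break  # conventional end-of-stream marker
        chunk = json.loads(data)
        for choice in chunk.get("choices", []):
            content = (choice.get("delta") or {}).get("content")
            if content:
                pieces.append(content)
    return "".join(pieces)


# Illustrative sample only (assumption); not captured from a real MoFa server.
SAMPLE = [
    'data: {"choices": [{"delta": {"role": "assistant"}}]}',
    "",
    'data: {"choices": [{"delta": {"content": "The euro"}}]}',
    'data: {"choices": [{"delta": {"content": "."}}]}',
    "data: [DONE]",
]
```

Feeding the lines of the curl output into `collect_streamed_text` gives the assembled reply; setting `"stream": false` in the request avoids the need for this step.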

Go to the Providers Dashboard and enable the MoFa entry (or add new ones if needed).

One of the easiest ways to contribute to Moly is by extending the list of predefined supported providers and their models.

- Add the provider information to supported_providers.json:
  - `name`: The name to display in the UI.
  - `url`: The full API endpoint for this provider, including versioning, e.g. "https://api.openai.com/v1".
  - `provider_type`: The type of API format that the provider uses, e.g. `"provider_type": "OpenAi"` will use the `OpenAiClient` from MolyKit. In Moly, the mapping between supported provider types and MolyKit clients can be found in src/chat/chat_screen.rs (if you were to add a custom MolyKit client and default supported provider, you would need to extend the mapping here).
  - `supported_models`: A list of model ids to be used as the whitelist of allowed/supported models in Moly for this provider.
- Add a new icon for the provider under /resources/images/providers (in PNG format), using the same name as the provider you registered in the previous step.
- Update the providers view, importing the new image and referencing the import:
  - At the top of the live_design!{} block, add your import, e.g.:

    ICON_GEMINI = dep("crate://self/resources/images/providers/gemini.png")

  - Add the icon to the list of provider_icons:

    provider_icons: [
        ...
        (ICON_GEMINI), // Add this line to reference the imported file.
    ]
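Before opening a pull request, it can help to sanity-check a new provider entry against the four fields described above. The following is a minimal sketch rather than a validator shipped with Moly: the field names come from the list above, but the expected value types, the `entry_problems` helper, and the example model id are assumptions made for illustration.

```python
# Field names are taken from the list above; the value types are assumptions.
REQUIRED_FIELDS = {
    "name": str,
    "url": str,
    "provider_type": str,
    "supported_models": list,
}


def entry_problems(entry: dict) -> list[str]:
    """Return human-readable problems found in one provider entry (empty if none)."""
    problems = []
    for field, expected in REQUIRED_FIELDS.items():
        if field not in entry:
            problems.append(f"missing field: {field}")
        elif not isinstance(entry[field], expected):
            problems.append(f"{field} should be a {expected.__name__}")
    url = entry.get("url")
    if isinstance(url, str) and not url.startswith(("http://", "https://")):
        problems.append("url should be a full http(s) endpoint")
    return problems


# Hypothetical entry; the model id is a placeholder, not a real whitelist.
EXAMPLE = {
    "name": "OpenAI",
    "url": "https://api.openai.com/v1",
    "provider_type": "OpenAi",
    "supported_models": ["example-model-id"],
}
```

An empty result from `entry_problems(EXAMPLE)` means the entry has all four fields in the assumed shape; it does not confirm that `provider_type` maps to an existing MolyKit client.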


Alternative AI tools for moly-ai

Similar Open Source Tools

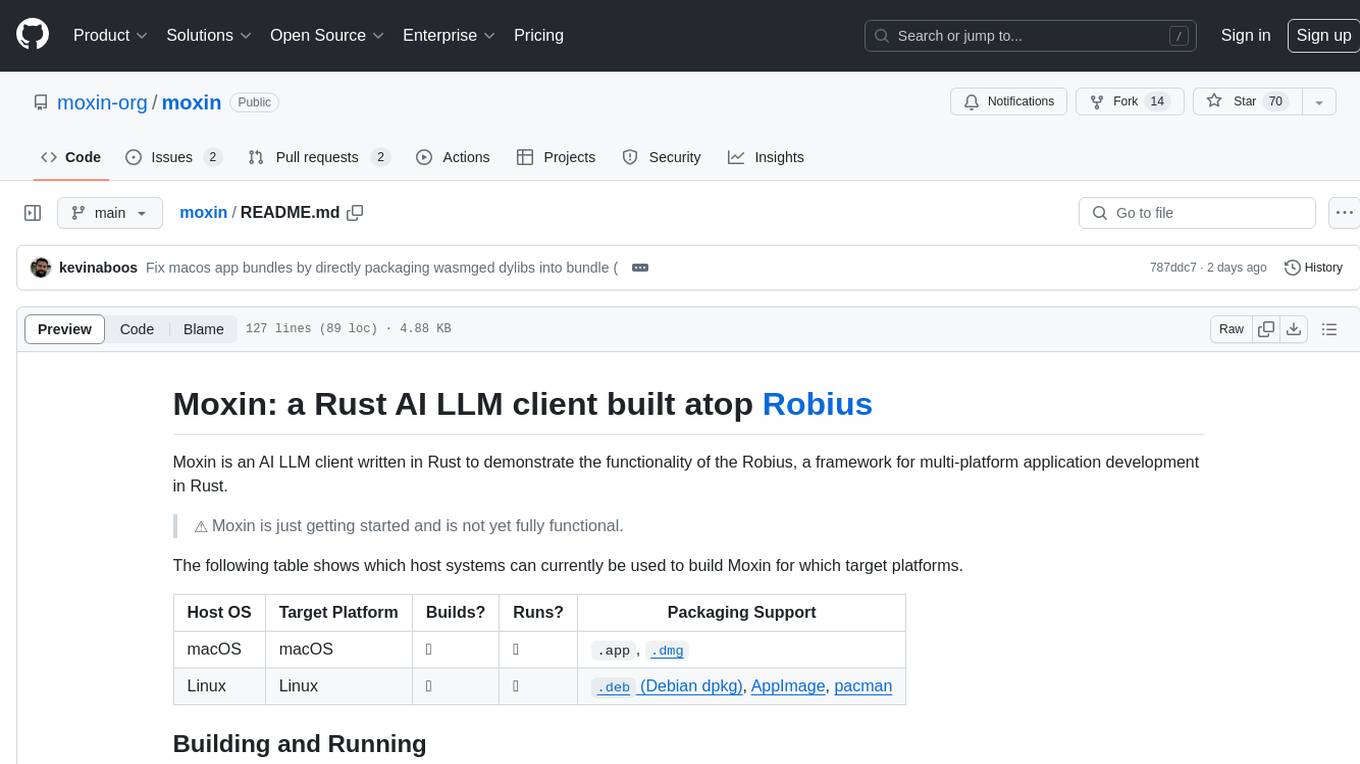

moly

Moly is an AI LLM client written in Rust, showcasing the capabilities of the Makepad UI toolkit and Project Robius, a framework for multi-platform application development in Rust. It is currently in beta, allowing users to build and run Moly on macOS, Linux, and Windows. The tool provides packaging support for different platforms, such as `.app`, `.dmg`, `.deb`, AppImage, pacman, and `.exe` (NSIS). Users can easily set up WasmEdge using `moly-runner` and leverage `cargo` commands to build and run Moly. Additionally, Moly offers pre-built releases for download and supports packaging for distribution on Linux, Windows, and macOS.

moxin

Moxin is an AI LLM client written in Rust to demonstrate the functionality of the Robius framework for multi-platform application development. It is currently in early stages of development and not fully functional. The tool supports building and running on macOS and Linux systems, with packaging options available for distribution. Users can install the required WasmEdge WASM runtime and dependencies to build and run Moxin. Packaging for distribution includes generating `.deb` Debian packages, AppImage, and pacman installation packages for Linux, as well as `.app` bundles and `.dmg` disk images for macOS. The macOS app is not signed, leading to a warning on installation, which can be resolved by removing the quarantine attribute from the installed app.

ComfyUI

ComfyUI is a powerful and modular visual AI engine and application that allows users to design and execute advanced stable diffusion pipelines using a graph/nodes/flowchart based interface. It provides a user-friendly environment for creating complex Stable Diffusion workflows without the need for coding. ComfyUI supports various models for image editing, video processing, audio manipulation, 3D modeling, and more. It offers features like smart memory management, support for different GPU types, loading and saving workflows as JSON files, and offline functionality. Users can also use API nodes to access paid models from external providers through the online Comfy API.

ComfyUI

ComfyUI is a powerful and modular visual AI engine and application that allows users to design and execute advanced stable diffusion pipelines using a graph/nodes/flowchart based interface. It provides a user-friendly environment for creating complex Stable Diffusion workflows without the need for coding. ComfyUI supports various models for image, video, audio, and 3D processing, along with features like smart memory management, model loading, embeddings/textual inversion, and offline usage. Users can experiment with different models, create complex workflows, and optimize their processes efficiently.

WindowsAgentArena

Windows Agent Arena (WAA) is a scalable Windows AI agent platform designed for testing and benchmarking multi-modal, desktop AI agents. It provides researchers and developers with a reproducible and realistic Windows OS environment for AI research, enabling testing of agentic AI workflows across various tasks. WAA supports deploying agents at scale using Azure ML cloud infrastructure, allowing parallel running of multiple agents and delivering quick benchmark results for hundreds of tasks in minutes.

vim-ollama

The 'vim-ollama' plugin for Vim adds Copilot-like code completion support using Ollama as a backend, enabling intelligent AI-based code completion and integrated chat support for code reviews. It does not rely on cloud services, preserving user privacy. The plugin communicates with Ollama via Python scripts for code completion and interactive chat, supporting Vim only. Users can configure LLM models for code completion tasks and interactive conversations, with detailed installation and usage instructions provided in the README.

torchchat

torchchat is a codebase showcasing the ability to run large language models (LLMs) seamlessly. It allows running LLMs using Python in various environments such as desktop, server, iOS, and Android. The tool supports running models via PyTorch, chatting, generating text, running chat in the browser, and running models on desktop/server without Python. It also provides features like AOT Inductor for faster execution, running in C++ using the runner, and deploying and running on iOS and Android. The tool supports popular hardware and OS including Linux, Mac OS, Android, and iOS, with various data types and execution modes available.

llm-foundry

LLM Foundry is a codebase for training, finetuning, evaluating, and deploying LLMs for inference with Composer and the MosaicML platform. It is designed to be easy-to-use, efficient _and_ flexible, enabling rapid experimentation with the latest techniques. You'll find in this repo:

* `llmfoundry/` - source code for models, datasets, callbacks, utilities, etc.
* `scripts/` - scripts to run LLM workloads
* `data_prep/` - convert text data from original sources to StreamingDataset format
* `train/` - train or finetune HuggingFace and MPT models from 125M - 70B parameters
* `train/benchmarking` - profile training throughput and MFU
* `inference/` - convert models to HuggingFace or ONNX format, and generate responses
* `inference/benchmarking` - profile inference latency and throughput
* `eval/` - evaluate LLMs on academic (or custom) in-context-learning tasks
* `mcli/` - launch any of these workloads using MCLI and the MosaicML platform
* `TUTORIAL.md` - a deeper dive into the repo, example workflows, and FAQs

termax

Termax is an LLM agent in your terminal that converts natural language to commands. Its features include:

- Personalized Experience: Optimize the command generation with RAG.
- Various LLMs Support: OpenAI GPT, Anthropic Claude, Google Gemini, Mistral AI, and more.
- Shell Extensions: Plugin with popular shells like `zsh`, `bash` and `fish`.
- Cross Platform: Able to run on Windows, macOS, and Linux.

nosia

Nosia is a platform that allows users to run an AI model on their own data. It is designed to be easy to install and use. Users can follow the provided guides for quickstart, API usage, upgrading, starting, stopping, and troubleshooting. The platform supports custom installations with options for remote Ollama instances, custom completion models, and custom embeddings models. Advanced installation instructions are also available for macOS with a Debian or Ubuntu VM setup. Users can access the platform at 'https://nosia.localhost' and troubleshoot any issues by checking logs and job statuses.

lexido

Lexido is an innovative assistant for the Linux command line, designed to boost your productivity and efficiency. Powered by Gemini Pro 1.0 and utilizing the free API, Lexido offers smart suggestions for commands based on your prompts and importantly your current environment. Whether you're installing software, managing files, or configuring system settings, Lexido streamlines the process, making it faster and more intuitive.

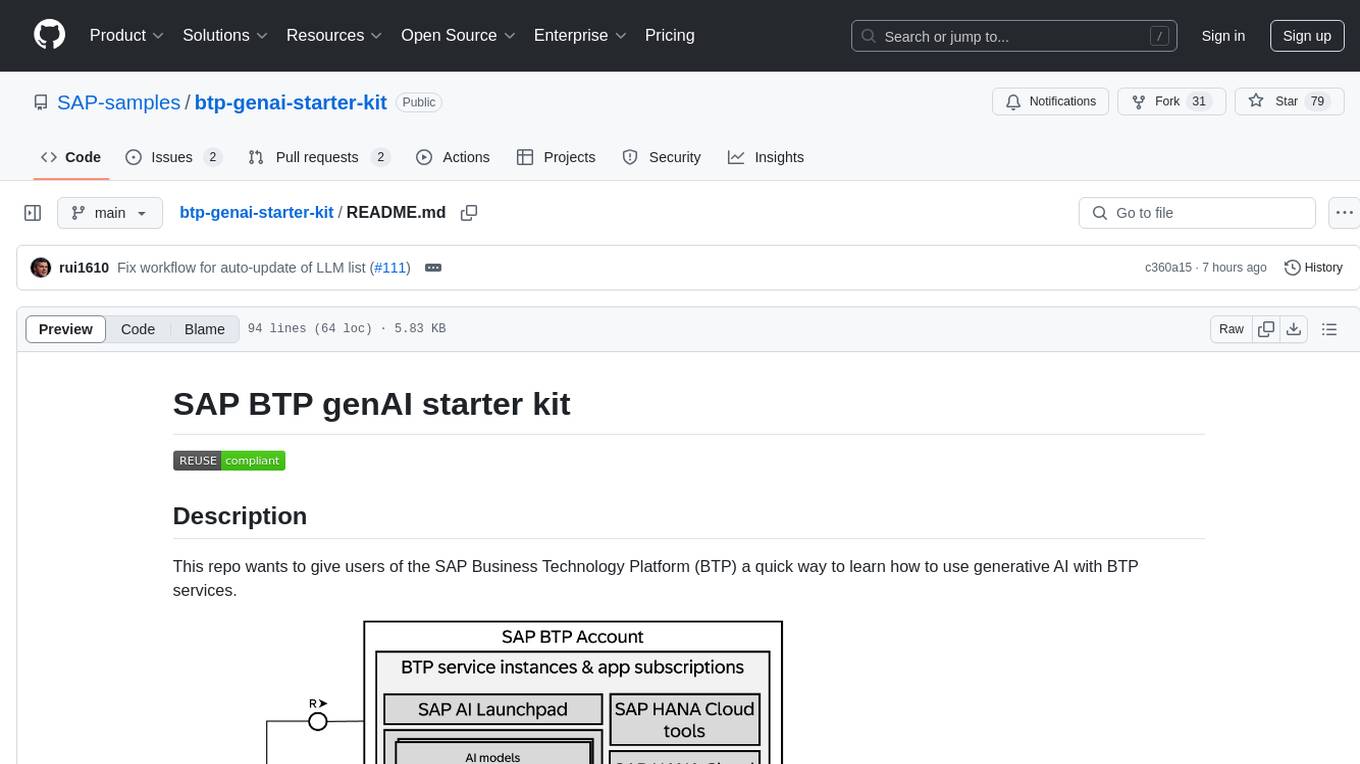

btp-genai-starter-kit

This repository provides a quick way for users of the SAP Business Technology Platform (BTP) to learn how to use generative AI with BTP services. It guides users through setting up the necessary infrastructure, deploying AI models, and running genAI experiments on SAP BTP. The repository includes scripts, examples, and instructions to help users get started with generative AI on the SAP BTP platform.

Upscaler

Holloway's Upscaler is a consolidation of various compiled open-source AI image/video upscaling products for a CLI-friendly image and video upscaling program. It provides low-cost AI upscaling software that can run locally on a laptop, programmable for albums and videos, reliable for large video files, and works without GUI overheads. The repository supports hardware testing on various systems and provides important notes on GPU compatibility, video types, and image decoding bugs. Dependencies include ffmpeg and ffprobe for video processing. The user manual covers installation, setup pathing, calling for help, upscaling images and videos, and contributing back to the project. Benchmarks are provided for performance evaluation on different hardware setups.

screeps-starter-rust

screeps-starter-rust is a Rust AI starter kit for Screeps: World, a JavaScript-based MMO game. It utilizes the screeps-game-api bindings from the rustyscreeps organization and wasm-pack for building Rust code to WebAssembly. The example includes Rollup for bundling javascript, Babel for transpiling code, and screeps-api Node.js package for deployment. Users can refer to the Rust version of game APIs documentation at https://docs.rs/screeps-game-api/. The tool supports most crates on crates.io, except those interacting with OS APIs.

LlamaEdge

The LlamaEdge project makes it easy to run LLM inference apps and create OpenAI-compatible API services for the Llama2 series of LLMs locally. It provides a Rust+Wasm stack for fast, portable, and secure LLM inference on heterogeneous edge devices. The project includes source code for text generation, chatbot, and API server applications, supporting all LLMs based on the llama2 framework in the GGUF format. LlamaEdge is committed to continuously testing and validating new open-source models and offers a list of supported models with download links and startup commands. It is cross-platform, supporting various OSes, CPUs, and GPUs, and provides troubleshooting tips for common errors.

For similar tasks

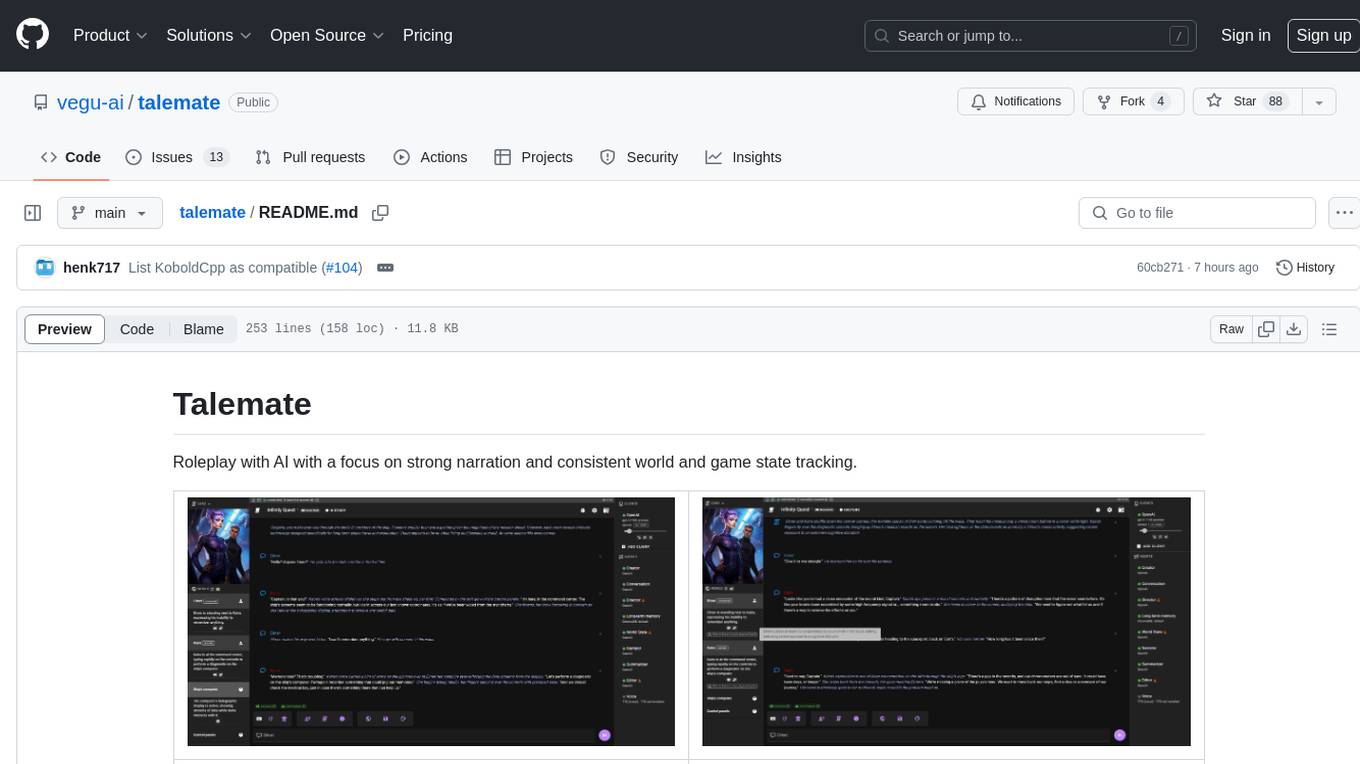

talemate

Talemate is a roleplay tool that allows users to interact with AI agents for dialogue, narration, summarization, direction, editing, world state management, character/scenario creation, text-to-speech, and visual generation. It supports multiple AI clients and APIs, offers long-term memory using ChromaDB, and provides tools for managing NPCs, AI-assisted character creation, and scenario creation. Users can customize prompts using Jinja2 templates and benefit from a modern, responsive UI. The tool also integrates with Runpod for enhanced functionality.

codesandbox-sdk

CodeSandbox SDK enables users to programmatically spin up development environments and run untrusted code securely. It provides a programmatic API for creating and running sandboxes quickly. The SDK uses the microVM infrastructure of CodeSandbox, supporting features like snapshotting/restoring VMs, cloning VMs & Snapshots, source control integration, and running any Dockerfile. Users can authenticate with an API token, create sandboxes, run code in various languages, interact with the filesystem, clone sandboxes, get metrics, hibernate sandboxes, and more. The sandboxes are created inside the user's workspace in CodeSandbox, allowing for controlled environments and resource billing. Example use cases include code interpretation, creating development environments, running AI agents, and CI/CD testing.

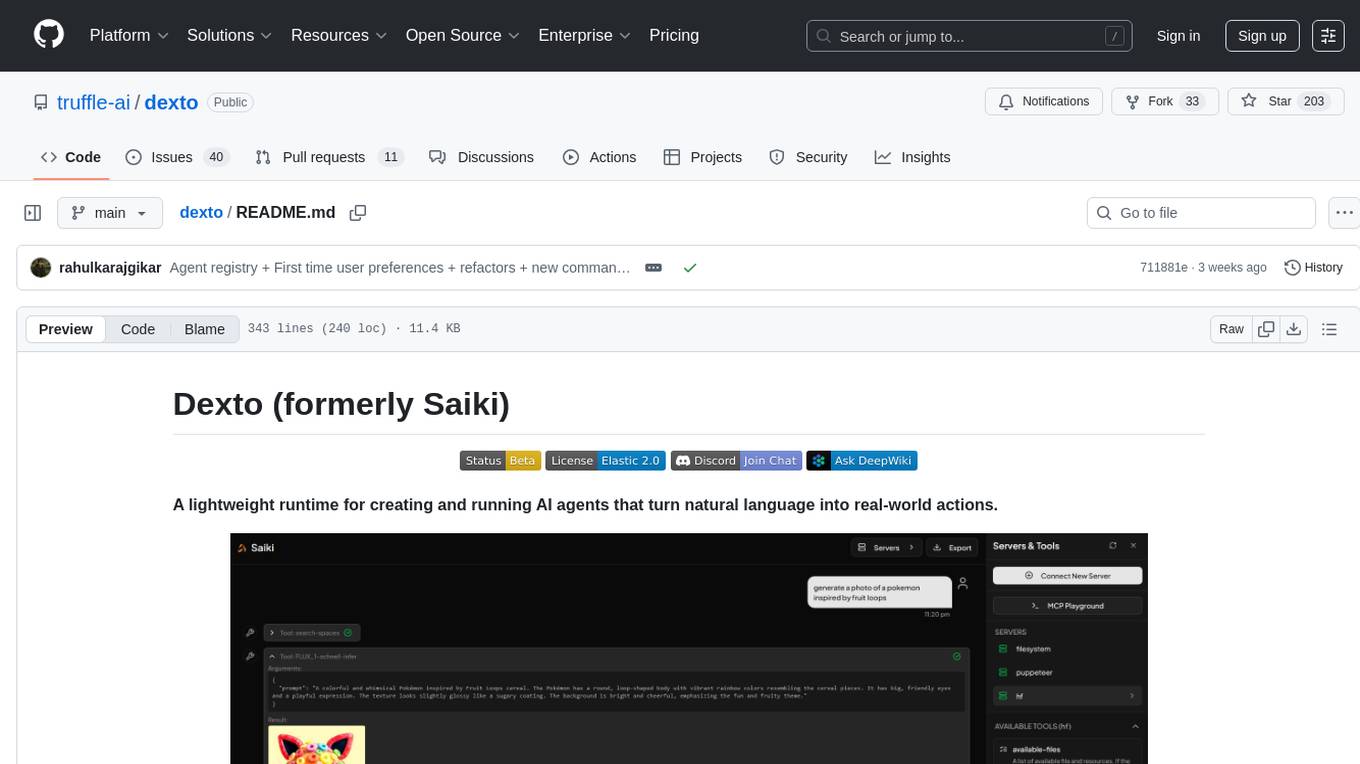

dexto

Dexto is a lightweight runtime for creating and running AI agents that turn natural language into real-world actions. It serves as the missing intelligence layer for building AI applications, standalone chatbots, or as the reasoning engine inside larger products. Dexto features a powerful CLI and Web UI for running AI agents, supports multiple interfaces, allows hot-swapping of LLMs from various providers, connects to remote tool servers via the Model Context Protocol, is config-driven with version-controlled YAML, offers production-ready core features, extensibility for custom services, and enables multi-agent collaboration via MCP and A2A.

claudex

Claudex is an open-source, self-hosted Claude Code UI that runs entirely on your machine. It provides multiple sandboxes, allows users to use their own plans, offers a full IDE experience with VS Code in the browser, and is extensible with skills, agents, slash commands, and MCP servers. Users can run AI agents in isolated environments, view and interact with a browser via VNC, switch between multiple AI providers, automate tasks with Celery workers, and enjoy various chat features and preview capabilities. Claudex also supports marketplace plugins, secrets management, integrations like Gmail, and custom instructions. The tool is configured through providers and supports various providers like Anthropic, OpenAI, OpenRouter, and Custom. It has a tech stack consisting of React, FastAPI, Python, PostgreSQL, Celery, Redis, and more.

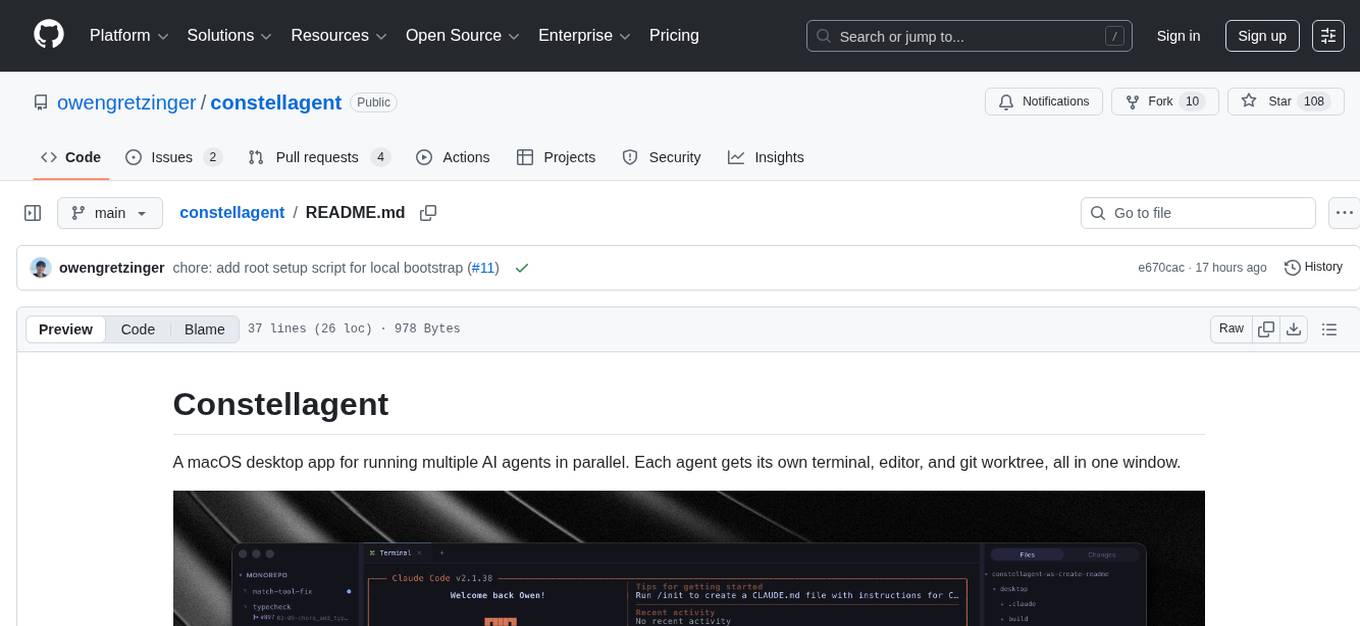

constellagent

Constellagent is a macOS desktop app designed for running multiple AI agents in parallel. It provides a seamless environment for each agent with its own terminal, editor, and git worktree, all within a single window. The app offers features such as separate agent sessions, full terminal emulator, Monaco code editor, git management tools, file tree navigation, automation scheduling, and keyboard-driven functionalities. Constellagent is a versatile tool that streamlines the workflow for developers and AI practitioners working on multiple projects simultaneously.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM:

* Set LLM usage limits for users on different pricing tiers
* Track LLM usage on a per user and per organization basis
* Block or redact requests containing PIIs
* Improve LLM reliability with failovers, retries and caching
* Distribute API keys with rate limits and cost limits for internal development/production use cases
* Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.