json_repair

A python module to repair invalid JSON from LLMs

Stars: 1653

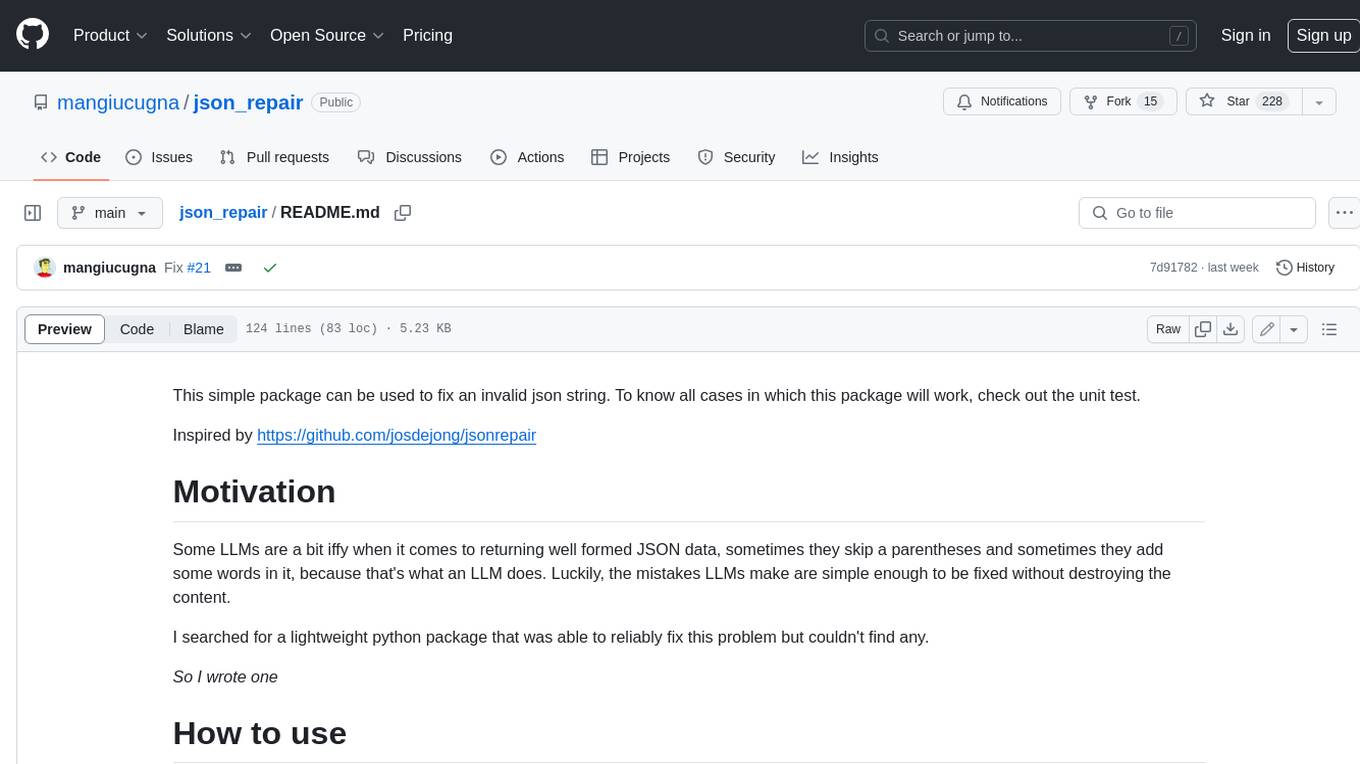

This simple package can be used to fix an invalid json string. To know all cases in which this package will work, check out the unit test. Inspired by https://github.com/josdejong/jsonrepair Motivation Some LLMs are a bit iffy when it comes to returning well formed JSON data, sometimes they skip a parentheses and sometimes they add some words in it, because that's what an LLM does. Luckily, the mistakes LLMs make are simple enough to be fixed without destroying the content. I searched for a lightweight python package that was able to reliably fix this problem but couldn't find any. So I wrote one How to use from json_repair import repair_json good_json_string = repair_json(bad_json_string) # If the string was super broken this will return an empty string You can use this library to completely replace `json.loads()`: import json_repair decoded_object = json_repair.loads(json_string) or just import json_repair decoded_object = json_repair.repair_json(json_string, return_objects=True) Read json from a file or file descriptor JSON repair provides also a drop-in replacement for `json.load()`: import json_repair try: file_descriptor = open(fname, 'rb') except OSError: ... with file_descriptor: decoded_object = json_repair.load(file_descriptor) and another method to read from a file: import json_repair try: decoded_object = json_repair.from_file(json_file) except OSError: ... except IOError: ... Keep in mind that the library will not catch any IO-related exception and those will need to be managed by you Performance considerations If you find this library too slow because is using `json.loads()` you can skip that by passing `skip_json_loads=True` to `repair_json`. Like: from json_repair import repair_json good_json_string = repair_json(bad_json_string, skip_json_loads=True) I made a choice of not using any fast json library to avoid having any external dependency, so that anybody can use it regardless of their stack. Some rules of thumb to use: - Setting `return_objects=True` will always be faster because the parser returns an object already and it doesn't have serialize that object to JSON - `skip_json_loads` is faster only if you 100% know that the string is not a valid JSON - If you are having issues with escaping pass the string as **raw** string like: `r"string with escaping\"" Adding to requirements Please pin this library only on the major version! We use TDD and strict semantic versioning, there will be frequent updates and no breaking changes in minor and patch versions. To ensure that you only pin the major version of this library in your `requirements.txt`, specify the package name followed by the major version and a wildcard for minor and patch versions. For example: json_repair==0.* In this example, any version that starts with `0.` will be acceptable, allowing for updates on minor and patch versions. How it works This module will parse the JSON file following the BNF definition:

README:

This simple package can be used to fix an invalid json string. To know all cases in which this package will work, check out the unit test.

If you find this library useful, you can help me by donating toward my monthly beer budget here: https://github.com/sponsors/mangiucugna

If you are unsure if this library will fix your specific problem, or simply want your json validated online, you can visit the demo site on GitHub pages: https://mangiucugna.github.io/json_repair/

Or hear an audio deepdive generate by Google's NotebookLM for an introduction to the module

Some LLMs are a bit iffy when it comes to returning well formed JSON data, sometimes they skip a parentheses and sometimes they add some words in it, because that's what an LLM does. Luckily, the mistakes LLMs make are simple enough to be fixed without destroying the content.

I searched for a lightweight python package that was able to reliably fix this problem but couldn't find any.

So I wrote one

As part of my job we use OpenAI APIs and we noticed that even with structured output sometimes the result isn't a fully valid json. So we still use this library to cover those outliers.

- Missing quotes, misplaced commas, unescaped characters, and incomplete key-value pairs.

- Missing quotation marks, improperly formatted values (true, false, null), and repairs corrupted key-value structures.

- Incomplete or broken arrays/objects by adding necessary elements (e.g., commas, brackets) or default values (null, "").

- The library can process JSON that includes extra non-JSON characters like comments or improperly placed characters, cleaning them up while maintaining valid structure.

- Automatically completes missing values in JSON fields with reasonable defaults (like empty strings or null), ensuring validity.

Install the library with pip

pip install json-repair

then you can use use it in your code like this

from json_repair import repair_json

good_json_string = repair_json(bad_json_string)

# If the string was super broken this will return an empty string

You can use this library to completely replace json.loads():

import json_repair

decoded_object = json_repair.loads(json_string)

or just

import json_repair

decoded_object = json_repair.repair_json(json_string, return_objects=True)

Some users of this library adopt the following pattern:

obj = {}

try:

obj = json.loads(string)

except json.JSONDecodeError as e:

obj = json_repair.loads(string)

...

This is wasteful because json_repair will already verify for you if the JSON is valid, if you still want to do that then add skip_json_loads=True to the call as explained the section below.

JSON repair provides also a drop-in replacement for json.load():

import json_repair

try:

file_descriptor = open(fname, 'rb')

except OSError:

...

with file_descriptor:

decoded_object = json_repair.load(file_descriptor)

and another method to read from a file:

import json_repair

try:

decoded_object = json_repair.from_file(json_file)

except OSError:

...

except IOError:

...

Keep in mind that the library will not catch any IO-related exception and those will need to be managed by you

When working with non-Latin characters (such as Chinese, Japanese, or Korean), you need to pass ensure_ascii=False to repair_json() in order to preserve the non-Latin characters in the output.

Here's an example using Chinese characters:

repair_json("{'test_chinese_ascii':'统一码'}")

will return

{"test_chinese_ascii": "\u7edf\u4e00\u7801"}

Instead passing ensure_ascii=False:

repair_json("{'test_chinese_ascii':'统一码'}", ensure_ascii=False)

will return

{"test_chinese_ascii": "统一码"}

If you find this library too slow because is using json.loads() you can skip that by passing skip_json_loads=True to repair_json. Like:

from json_repair import repair_json

good_json_string = repair_json(bad_json_string, skip_json_loads=True)

I made a choice of not using any fast json library to avoid having any external dependency, so that anybody can use it regardless of their stack.

Some rules of thumb to use:

- Setting

return_objects=Truewill always be faster because the parser returns an object already and it doesn't have serialize that object to JSON -

skip_json_loadsis faster only if you 100% know that the string is not a valid JSON - If you are having issues with escaping pass the string as raw string like:

r"string with escaping\""

Install the library for command-line with:

pipx install json-repair

to know all options available:

$ json_repair -h

usage: json_repair [-h] [-i] [-o TARGET] [--ensure_ascii] [--indent INDENT] [filename]

Repair and parse JSON files.

positional arguments:

filename The JSON file to repair (if omitted, reads from stdin)

options:

-h, --help show this help message and exit

-i, --inline Replace the file inline instead of returning the output to stdout

-o TARGET, --output TARGET

If specified, the output will be written to TARGET filename instead of stdout

--ensure_ascii Pass ensure_ascii=True to json.dumps()

--indent INDENT Number of spaces for indentation (Default 2)

Please pin this library only on the major version!

We use TDD and strict semantic versioning, there will be frequent updates and no breaking changes in minor and patch versions.

To ensure that you only pin the major version of this library in your requirements.txt, specify the package name followed by the major version and a wildcard for minor and patch versions. For example:

json_repair==0.*

In this example, any version that starts with 0. will be acceptable, allowing for updates on minor and patch versions.

If you are using this library in your academic work (as I know many folks are) please find the BibTex here:

@software{Baccianella_JSON_Repair_-_2025,

author = "Stefano {Baccianella}",

month = "feb",

title = "JSON Repair - A python module to repair invalid JSON, commonly used to parse the output of LLMs",

url = "https://github.com/mangiucugna/json_repair",

version = "0.39.1",

year = 2025

}

Thank you for citing my work and please send me a link to the paper if you can!

This module will parse the JSON file following the BNF definition:

<json> ::= <primitive> | <container>

<primitive> ::= <number> | <string> | <boolean>

; Where:

; <number> is a valid real number expressed in one of a number of given formats

; <string> is a string of valid characters enclosed in quotes

; <boolean> is one of the literal strings 'true', 'false', or 'null' (unquoted)

<container> ::= <object> | <array>

<array> ::= '[' [ <json> *(', ' <json>) ] ']' ; A sequence of JSON values separated by commas

<object> ::= '{' [ <member> *(', ' <member>) ] '}' ; A sequence of 'members'

<member> ::= <string> ': ' <json> ; A pair consisting of a name, and a JSON value

If something is wrong (a missing parentheses or quotes for example) it will use a few simple heuristics to fix the JSON string:

- Add the missing parentheses if the parser believes that the array or object should be closed

- Quote strings or add missing single quotes

- Adjust whitespaces and remove line breaks

I am sure some corner cases will be missing, if you have examples please open an issue or even better push a PR

Just create a virtual environment with requirements.txt, the setup uses pre-commit to make sure all tests are run.

Make sure that the Github Actions running after pushing a new commit don't fail as well.

You will need owner access to this repository

- Edit

pyproject.tomland update the version number appropriately usingsemvernotation - Commit and push all changes to the repository before continuing or the next steps will fail

- Run

python -m build - Create a new release in Github, making sure to tag all the issues solved and contributors. Create the new tag, same as the one in the build configuration

- Once the release is created, a new Github Actions workflow will start to publish on Pypi, make sure it didn't fail

- Typescript: https://github.com/josdejong/jsonrepair

- Go: https://github.com/RealAlexandreAI/json-repair

- Ruby: https://github.com/sashazykov/json-repair-rb

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for json_repair

Similar Open Source Tools

json_repair

This simple package can be used to fix an invalid json string. To know all cases in which this package will work, check out the unit test. Inspired by https://github.com/josdejong/jsonrepair Motivation Some LLMs are a bit iffy when it comes to returning well formed JSON data, sometimes they skip a parentheses and sometimes they add some words in it, because that's what an LLM does. Luckily, the mistakes LLMs make are simple enough to be fixed without destroying the content. I searched for a lightweight python package that was able to reliably fix this problem but couldn't find any. So I wrote one How to use from json_repair import repair_json good_json_string = repair_json(bad_json_string) # If the string was super broken this will return an empty string You can use this library to completely replace `json.loads()`: import json_repair decoded_object = json_repair.loads(json_string) or just import json_repair decoded_object = json_repair.repair_json(json_string, return_objects=True) Read json from a file or file descriptor JSON repair provides also a drop-in replacement for `json.load()`: import json_repair try: file_descriptor = open(fname, 'rb') except OSError: ... with file_descriptor: decoded_object = json_repair.load(file_descriptor) and another method to read from a file: import json_repair try: decoded_object = json_repair.from_file(json_file) except OSError: ... except IOError: ... Keep in mind that the library will not catch any IO-related exception and those will need to be managed by you Performance considerations If you find this library too slow because is using `json.loads()` you can skip that by passing `skip_json_loads=True` to `repair_json`. Like: from json_repair import repair_json good_json_string = repair_json(bad_json_string, skip_json_loads=True) I made a choice of not using any fast json library to avoid having any external dependency, so that anybody can use it regardless of their stack. Some rules of thumb to use: - Setting `return_objects=True` will always be faster because the parser returns an object already and it doesn't have serialize that object to JSON - `skip_json_loads` is faster only if you 100% know that the string is not a valid JSON - If you are having issues with escaping pass the string as **raw** string like: `r"string with escaping\"" Adding to requirements Please pin this library only on the major version! We use TDD and strict semantic versioning, there will be frequent updates and no breaking changes in minor and patch versions. To ensure that you only pin the major version of this library in your `requirements.txt`, specify the package name followed by the major version and a wildcard for minor and patch versions. For example: json_repair==0.* In this example, any version that starts with `0.` will be acceptable, allowing for updates on minor and patch versions. How it works This module will parse the JSON file following the BNF definition:

smartcat

Smartcat is a CLI interface that brings language models into the Unix ecosystem, allowing power users to leverage the capabilities of LLMs in their daily workflows. It features a minimalist design, seamless integration with terminal and editor workflows, and customizable prompts for specific tasks. Smartcat currently supports OpenAI, Mistral AI, and Anthropic APIs, providing access to a range of language models. With its ability to manipulate file and text streams, integrate with editors, and offer configurable settings, Smartcat empowers users to automate tasks, enhance code quality, and explore creative possibilities.

ain

Ain is a terminal HTTP API client designed for scripting input and processing output via pipes. It allows flexible organization of APIs using files and folders, supports shell-scripts and executables for common tasks, handles url-encoding, and enables sharing the resulting curl, wget, or httpie command-line. Users can put things that change in environment variables or .env-files, and pipe the API output for further processing. Ain targets users who work with many APIs using a simple file format and uses curl, wget, or httpie to make the actual calls.

node_characterai

Node.js client for the unofficial Character AI API, an awesome website which brings characters to life with AI! This repository is inspired by RichardDorian's unofficial node API. Though, I found it hard to use and it was not really stable and archived. So I remade it in javascript. This project is not affiliated with Character AI in any way! It is a community project. The purpose of this project is to bring and build projects powered by Character AI. If you like this project, please check their website.

aiomisc

aiomisc is a Python library that provides a collection of utility functions and classes for working with asynchronous I/O in a more intuitive and efficient way. It offers features like worker pools, connection pools, circuit breaker pattern, and retry mechanisms to make asyncio code more robust and easier to maintain. The library simplifies the architecture of software using asynchronous I/O, making it easier for developers to write reliable and scalable asynchronous code.

aiorun

aiorun is a Python package that provides a `run()` function as the starting point of your `asyncio`-based application. The `run()` function handles everything needed during the shutdown sequence of the application, such as creating a `Task` for the given coroutine, running the event loop, adding signal handlers for `SIGINT` and `SIGTERM`, cancelling tasks, waiting for the executor to complete shutdown, and closing the loop. It automates standard actions for asyncio apps, eliminating the need to write boilerplate code. The package also offers error handling options and tools for specific scenarios like TCP server startup and smart shield for shutdown.

paper-qa

PaperQA is a minimal package for question and answering from PDFs or text files, providing very good answers with in-text citations. It uses OpenAI Embeddings to embed and search documents, and includes a process of embedding docs, queries, searching for top passages, creating summaries, using an LLM to re-score and select relevant summaries, putting summaries into prompt, and generating answers. The tool can be used to answer specific questions related to scientific research by leveraging citations and relevant passages from documents.

vectorflow

VectorFlow is an open source, high throughput, fault tolerant vector embedding pipeline. It provides a simple API endpoint for ingesting large volumes of raw data, processing, and storing or returning the vectors quickly and reliably. The tool supports text-based files like TXT, PDF, HTML, and DOCX, and can be run locally with Kubernetes in production. VectorFlow offers functionalities like embedding documents, running chunking schemas, custom chunking, and integrating with vector databases like Pinecone, Qdrant, and Weaviate. It enforces a standardized schema for uploading data to a vector store and supports features like raw embeddings webhook, chunk validation webhook, S3 endpoint, and telemetry. The tool can be used with the Python client and provides detailed instructions for running and testing the functionalities.

algernon

Algernon is a web server with built-in support for QUIC, HTTP/2, Lua, Teal, Markdown, Pongo2, HyperApp, Amber, Sass(SCSS), GCSS, JSX, Ollama (LLMs), BoltDB, Redis, PostgreSQL, MariaDB/MySQL, MSSQL, rate limiting, graceful shutdown, plugins, users, and permissions. It is a small self-contained executable that supports various technologies and features for web development.

neo4j-graphrag-python

The Neo4j GraphRAG package for Python is an official repository that provides features for creating and managing vector indexes in Neo4j databases. It aims to offer developers a reliable package with long-term commitment, maintenance, and fast feature updates. The package supports various Python versions and includes functionalities for creating vector indexes, populating them, and performing similarity searches. It also provides guidelines for installation, examples, and development processes such as installing dependencies, making changes, and running tests.

paper-qa

PaperQA is a minimal package for question and answering from PDFs or text files, providing very good answers with in-text citations. It uses OpenAI Embeddings to embed and search documents, and follows a process of embedding docs and queries, searching for top passages, creating summaries, scoring and selecting relevant summaries, putting summaries into prompt, and generating answers. Users can customize prompts and use various models for embeddings and LLMs. The tool can be used asynchronously and supports adding documents from paths, files, or URLs.

Bard-API

The Bard API is a Python package that returns responses from Google Bard through the value of a cookie. It is an unofficial API that operates through reverse-engineering, utilizing cookie values to interact with Google Bard for users struggling with frequent authentication problems or unable to authenticate via Google Authentication. The Bard API is not a free service, but rather a tool provided to assist developers with testing certain functionalities due to the delayed development and release of Google Bard's API. It has been designed with a lightweight structure that can easily adapt to the emergence of an official API. Therefore, using it for any other purposes is strongly discouraged. If you have access to a reliable official PaLM-2 API or Google Generative AI API, replace the provided response with the corresponding official code. Check out https://github.com/dsdanielpark/Bard-API/issues/262.

MultiPL-E

MultiPL-E is a system for translating unit test-driven neural code generation benchmarks to new languages. It is part of the BigCode Code Generation LM Harness and allows for evaluating Code LLMs using various benchmarks. The tool supports multiple versions with improvements and new language additions, providing a scalable and polyglot approach to benchmarking neural code generation. Users can access a tutorial for direct usage and explore the dataset of translated prompts on the Hugging Face Hub.

fabric

Fabric is an open-source framework for augmenting humans using AI. It provides a structured approach to breaking down problems into individual components and applying AI to them one at a time. Fabric includes a collection of pre-defined Patterns (prompts) that can be used for a variety of tasks, such as extracting the most interesting parts of YouTube videos and podcasts, writing essays, summarizing academic papers, creating AI art prompts, and more. Users can also create their own custom Patterns. Fabric is designed to be easy to use, with a command-line interface and a variety of helper apps. It is also extensible, allowing users to integrate it with their own AI applications and infrastructure.

LLPhant

LLPhant is a comprehensive PHP Generative AI Framework designed to be simple yet powerful, compatible with Symfony and Laravel. It supports various LLMs like OpenAI, Anthropic, Mistral, Ollama, and services compatible with OpenAI API. The framework enables tasks such as semantic search, chatbots, personalized content creation, text summarization, personal shopper creation, autonomous AI agents, and coding tool assistance. It provides tools for generating text, images, speech-to-text transcription, and customizing system messages for question answering. LLPhant also offers features for embeddings, vector stores, document stores, and question answering with various query transformations and reranking techniques.

autoscraper

AutoScraper is a smart, automatic, fast, and lightweight web scraping tool for Python. It simplifies the process of web scraping by learning scraping rules based on sample data provided by the user. The tool can extract text, URLs, or HTML tag values from web pages and return similar elements. Users can utilize the learned object to scrape similar content or exact elements from new pages. AutoScraper is compatible with Python 3 and offers easy installation from various sources. It provides functionalities for fetching similar and exact results from web pages, such as extracting post titles from Stack Overflow or live stock prices from Yahoo Finance. The tool allows customization with custom requests module parameters like proxies or headers. Users can save and load models for future use and explore advanced usages through tutorials and examples.

For similar tasks

json_repair

This simple package can be used to fix an invalid json string. To know all cases in which this package will work, check out the unit test. Inspired by https://github.com/josdejong/jsonrepair Motivation Some LLMs are a bit iffy when it comes to returning well formed JSON data, sometimes they skip a parentheses and sometimes they add some words in it, because that's what an LLM does. Luckily, the mistakes LLMs make are simple enough to be fixed without destroying the content. I searched for a lightweight python package that was able to reliably fix this problem but couldn't find any. So I wrote one How to use from json_repair import repair_json good_json_string = repair_json(bad_json_string) # If the string was super broken this will return an empty string You can use this library to completely replace `json.loads()`: import json_repair decoded_object = json_repair.loads(json_string) or just import json_repair decoded_object = json_repair.repair_json(json_string, return_objects=True) Read json from a file or file descriptor JSON repair provides also a drop-in replacement for `json.load()`: import json_repair try: file_descriptor = open(fname, 'rb') except OSError: ... with file_descriptor: decoded_object = json_repair.load(file_descriptor) and another method to read from a file: import json_repair try: decoded_object = json_repair.from_file(json_file) except OSError: ... except IOError: ... Keep in mind that the library will not catch any IO-related exception and those will need to be managed by you Performance considerations If you find this library too slow because is using `json.loads()` you can skip that by passing `skip_json_loads=True` to `repair_json`. Like: from json_repair import repair_json good_json_string = repair_json(bad_json_string, skip_json_loads=True) I made a choice of not using any fast json library to avoid having any external dependency, so that anybody can use it regardless of their stack. Some rules of thumb to use: - Setting `return_objects=True` will always be faster because the parser returns an object already and it doesn't have serialize that object to JSON - `skip_json_loads` is faster only if you 100% know that the string is not a valid JSON - If you are having issues with escaping pass the string as **raw** string like: `r"string with escaping\"" Adding to requirements Please pin this library only on the major version! We use TDD and strict semantic versioning, there will be frequent updates and no breaking changes in minor and patch versions. To ensure that you only pin the major version of this library in your `requirements.txt`, specify the package name followed by the major version and a wildcard for minor and patch versions. For example: json_repair==0.* In this example, any version that starts with `0.` will be acceptable, allowing for updates on minor and patch versions. How it works This module will parse the JSON file following the BNF definition:

json-repair

JSON Repair is a toolkit designed to address JSON anomalies that can arise from Large Language Models (LLMs). It offers a comprehensive solution for repairing JSON strings, ensuring accuracy and reliability in your data processing. With its user-friendly interface and extensive capabilities, JSON Repair empowers developers to seamlessly integrate JSON repair into their workflows.

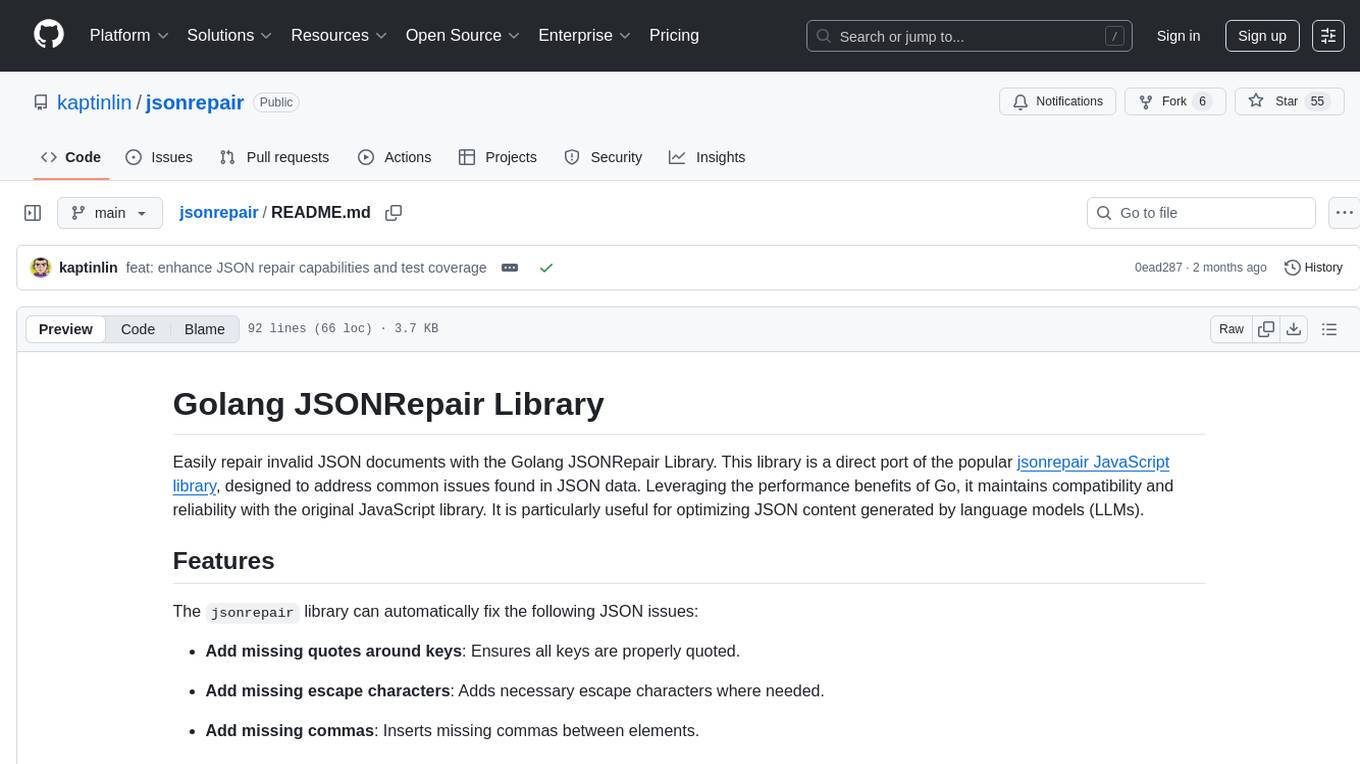

jsonrepair

Jsonrepair is a Python library that provides functionalities to repair and validate JSON files. It helps users to fix common issues in JSON data such as missing commas, incorrect data types, and structural errors. With jsonrepair, users can easily clean up and standardize their JSON files, ensuring they are well-formed and error-free.

ai

Jetify's AI SDK for Go is a unified interface for interacting with multiple AI providers including OpenAI, Anthropic, and more. It addresses the challenges of fragmented ecosystems, vendor lock-in, poor Go developer experience, and complex multi-modal handling by providing a unified interface, Go-first design, production-ready features, multi-modal support, and extensible architecture. The SDK supports language models, embeddings, image generation, multi-provider support, multi-modal inputs, tool calling, and structured outputs.

For similar jobs

lollms-webui

LoLLMs WebUI (Lord of Large Language Multimodal Systems: One tool to rule them all) is a user-friendly interface to access and utilize various LLM (Large Language Models) and other AI models for a wide range of tasks. With over 500 AI expert conditionings across diverse domains and more than 2500 fine tuned models over multiple domains, LoLLMs WebUI provides an immediate resource for any problem, from car repair to coding assistance, legal matters, medical diagnosis, entertainment, and more. The easy-to-use UI with light and dark mode options, integration with GitHub repository, support for different personalities, and features like thumb up/down rating, copy, edit, and remove messages, local database storage, search, export, and delete multiple discussions, make LoLLMs WebUI a powerful and versatile tool.

Azure-Analytics-and-AI-Engagement

The Azure-Analytics-and-AI-Engagement repository provides packaged Industry Scenario DREAM Demos with ARM templates (Containing a demo web application, Power BI reports, Synapse resources, AML Notebooks etc.) that can be deployed in a customer’s subscription using the CAPE tool within a matter of few hours. Partners can also deploy DREAM Demos in their own subscriptions using DPoC.

minio

MinIO is a High Performance Object Storage released under GNU Affero General Public License v3.0. It is API compatible with Amazon S3 cloud storage service. Use MinIO to build high performance infrastructure for machine learning, analytics and application data workloads.

mage-ai

Mage is an open-source data pipeline tool for transforming and integrating data. It offers an easy developer experience, engineering best practices built-in, and data as a first-class citizen. Mage makes it easy to build, preview, and launch data pipelines, and provides observability and scaling capabilities. It supports data integrations, streaming pipelines, and dbt integration.

AiTreasureBox

AiTreasureBox is a versatile AI tool that provides a collection of pre-trained models and algorithms for various machine learning tasks. It simplifies the process of implementing AI solutions by offering ready-to-use components that can be easily integrated into projects. With AiTreasureBox, users can quickly prototype and deploy AI applications without the need for extensive knowledge in machine learning or deep learning. The tool covers a wide range of tasks such as image classification, text generation, sentiment analysis, object detection, and more. It is designed to be user-friendly and accessible to both beginners and experienced developers, making AI development more efficient and accessible to a wider audience.

tidb

TiDB is an open-source distributed SQL database that supports Hybrid Transactional and Analytical Processing (HTAP) workloads. It is MySQL compatible and features horizontal scalability, strong consistency, and high availability.

airbyte

Airbyte is an open-source data integration platform that makes it easy to move data from any source to any destination. With Airbyte, you can build and manage data pipelines without writing any code. Airbyte provides a library of pre-built connectors that make it easy to connect to popular data sources and destinations. You can also create your own connectors using Airbyte's no-code Connector Builder or low-code CDK. Airbyte is used by data engineers and analysts at companies of all sizes to build and manage their data pipelines.

labelbox-python

Labelbox is a data-centric AI platform for enterprises to develop, optimize, and use AI to solve problems and power new products and services. Enterprises use Labelbox to curate data, generate high-quality human feedback data for computer vision and LLMs, evaluate model performance, and automate tasks by combining AI and human-centric workflows. The academic & research community uses Labelbox for cutting-edge AI research.