paper-qa

High accuracy RAG for answering questions from scientific documents with citations

Stars: 6627

PaperQA is a minimal package for question and answering from PDFs or text files, providing very good answers with in-text citations. It uses OpenAI Embeddings to embed and search documents, and includes a process of embedding docs, queries, searching for top passages, creating summaries, using an LLM to re-score and select relevant summaries, putting summaries into prompt, and generating answers. The tool can be used to answer specific questions related to scientific research by leveraging citations and relevant passages from documents.

README:

PaperQA2 is a package for doing high-accuracy retrieval augmented generation (RAG) on PDFs or text files, with a focus on the scientific literature. See our recent 2024 paper to see examples of PaperQA2's superhuman performance in scientific tasks like question answering, summarization, and contradiction detection.

- Quickstart

- What is PaperQA2

- Installation

- CLI Usage

- Library Usage

- Where do I get papers?

- Callbacks

- Customizing Prompts

- FAQ

- Reproduction

- Citation

In this example we take a folder of research paper PDFs, magically get their metadata - including citation counts with a retraction check, then parse and cache PDFs into a full-text search index, and finally answer the user question with an LLM agent.

pip install paper-qa

cd my_papers

pqa ask 'How can carbon nanotubes be manufactured at a large scale?'

Question: Has anyone designed neural networks that compute with proteins or DNA?

The claim that neural networks have been designed to compute with DNA is supported by multiple sources. The work by Qian, Winfree, and Bruck demonstrates the use of DNA strand displacement cascades to construct neural network components, such as artificial neurons and associative memories, using a DNA-based system (Qian2011Neural pages 1-2, Qian2011Neural pages 15-16, Qian2011Neural pages 54-56). This research includes the implementation of a 3-bit XOR gate and a four-neuron Hopfield associative memory, showcasing the potential of DNA for neural network computation. Additionally, the application of deep learning techniques to genomics, which involves computing with DNA sequences, is well-documented. Studies have applied convolutional neural networks (CNNs) to predict genomic features such as transcription factor binding and DNA accessibility (Eraslan2019Deep pages 4-5, Eraslan2019Deep pages 5-6). These models leverage DNA sequences as input data, effectively using neural networks to compute with DNA. While the provided excerpts do not explicitly mention protein-based neural network computation, they do highlight the use of neural networks in tasks related to protein sequences, such as predicting DNA-protein binding (Zeng2016Convolutional pages 1-2). However, the primary focus remains on DNA-based computation.

PaperQA2 is engineered to be the best agentic RAG model for working with scientific papers. Here are some features:

- A simple interface to get good answers with grounded responses containing in-text citations.

- State-of-the-art implementation including document metadata-awareness in embeddings and LLM-based re-ranking and contextual summarization (RCS).

- Support for agentic RAG, where a language agent can iteratively refine queries and answers.

- Automatic redundant fetching of paper metadata, including citation and journal quality data from multiple providers.

- A usable full-text search engine for a local repository of PDF/text files.

- A robust interface for customization, with default support for all LiteLLM models.

By default, it uses OpenAI embeddings and models with a Numpy vector DB to embed and search documents. However, you can easily use other closed-source, open-source models or embeddings (see details below).

PaperQA2 depends on some awesome libraries/APIs that make our repo possible. Here are some in no particular order:

We've been working hard on fundamental upgrades for a while and have mostly followed SemVer, meaning we've incremented the major version number on each breaking change. This brings us to the current major version number, v5. So why is the repo now called PaperQA2? We wanted to remark on the fact that we've exceeded human performance on many important metrics. So we arbitrarily call version 5 and onward PaperQA2, and versions before it PaperQA1, to denote the significant change in performance. We recognize that we are challenged at naming and counting at FutureHouse, so we reserve the right at any time to arbitrarily change the name to PaperCrow.

Version 5 added:

- A CLI, pqa

- Agentic workflows invoking tools for paper search, gathering evidence, and generating an answer

- Removed much of the statefulness from the Docs object

- A migration to LiteLLM for compatibility with many LLM providers as well as centralized rate limits and cost tracking

- A bundled set of configurations (read here) containing known-good hyperparameters

Note that Docs objects pickled from prior versions of PaperQA are incompatible with version 5,

and will need to be rebuilt.

Also, our minimum Python version was increased to Python 3.11.

To understand PaperQA2, let's start with the pieces of the underlying algorithm. The default workflow of PaperQA2 is as follows:

| Phase | PaperQA2 Actions |

|---|---|

| 1. Paper Search | - Get candidate papers from LLM-generated keyword query |

| | - Chunk, embed, and add candidate papers to state |

| 2. Gather Evidence | - Embed query into vector |

| | - Rank top k document chunks in current state |

| | - Create scored summary of each chunk in the context of the current query |

| | - Use LLM to re-score and select most relevant summaries |

| 3. Generate Answer | - Put best summaries into prompt with context |

| | - Generate answer with prompt |

The tools can be invoked in any order by a language agent. For example, an LLM agent might do a narrow and a broad search, or use different phrasing for the gather evidence step than for the generate answer step.
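To make the three phases concrete, here is a toy sketch in plain Python. It is not how PaperQA2 is implemented: keyword matching and word overlap stand in for the real search index, embeddings, and LLM scoring, and every name in it (paper_search, gather_evidence, generate_answer, top_k, max_sources) is hypothetical.

```python
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase words with surrounding punctuation stripped."""
    return [t.strip(".,?!").lower() for t in text.split() if t.strip(".,?!")]


def paper_search(papers: dict[str, str], keywords: list[str]) -> list[tuple[str, str]]:
    """Phase 1: keep papers matching a keyword, then chunk them (here: by sentence)."""
    chunks = []
    for name, text in papers.items():
        if any(k in text.lower() for k in keywords):
            chunks += [(name, s.strip()) for s in text.split(".") if s.strip()]
    return chunks


def gather_evidence(chunks, query: str, top_k: int = 3):
    """Phase 2: score chunks by word overlap with the query and keep the top k."""
    q = Counter(tokenize(query))
    scored = [(sum((Counter(tokenize(c)) & q).values()), name, c) for name, c in chunks]
    scored.sort(key=lambda item: item[0], reverse=True)
    return [item for item in scored[:top_k] if item[0] > 0]


def generate_answer(evidence, query: str, max_sources: int = 2) -> str:
    """Phase 3: put the best evidence into a prompt (a real system would call an LLM)."""
    context = "\n".join(f"({name}) {chunk}" for _, name, chunk in evidence[:max_sources])
    return f"Question: {query}\nContext:\n{context}"


papers = {
    "Smith2020": "Carbon nanotubes can be grown by chemical vapor deposition. Yields depend on the catalyst.",
    "Lee2019": "Bispecific antibodies are hard to purify. Chain mispairing lowers yield.",
}
query = "How are carbon nanotubes grown?"
chunks = paper_search(papers, keywords=["nanotubes"])
evidence = gather_evidence(chunks, query)
prompt = generate_answer(evidence, query)
print(prompt)
```

Because each phase only reads and returns plain data, an agent is free to call them in a different order or more than once, which is the flexibility described above.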

For a non-development setup, install PaperQA2 (aka version 5) from PyPI. Note version 5 requires Python 3.11+.

pip install 'paper-qa>=5'

For development setup, please refer to the CONTRIBUTING.md file.

PaperQA2 uses an LLM to operate,

so you'll need to either set an appropriate API key environment variable (e.g. export OPENAI_API_KEY=sk-...)

or set up an open source LLM server (e.g. using llamafile).

Any LiteLLM compatible model can be configured to use with PaperQA2.

If you need to index a large set of papers (100+),

you will likely want an API key for both Crossref and Semantic Scholar,

which will allow you to avoid hitting public rate limits using these metadata services.

Those can be exported as CROSSREF_API_KEY and SEMANTIC_SCHOLAR_API_KEY variables.

The fastest way to test PaperQA2 is via the CLI. First navigate to a directory with some papers and use the pqa cli:

$ pqa ask 'What manufacturing challenges are unique to bispecific antibodies?'

You will see PaperQA2 index your local PDF files, gathering the necessary metadata for each of them (using Crossref and Semantic Scholar), search over that index, then break the files into chunked evidence contexts, rank them, and ultimately generate an answer. The next time this directory is queried, your index will already be built (save for any differences detected, like new added papers), so it will skip the indexing and chunking steps.

All prior answers will be indexed and stored. You can view them by querying via the search subcommand, or access them yourself in your PQA_HOME directory, which defaults to ~/.pqa/.

$ pqa search -i 'answers' 'antibodies'

PaperQA2 is highly configurable. When running from the command line, pqa --help shows all options and short descriptions. For example, to run with a higher temperature:

$ pqa --temperature 0.5 ask 'What manufacturing challenges are unique to bispecific antibodies?'

You can view all settings with pqa view. Another useful thing is to change to other templated settings. For example, fast is a setting that answers more quickly, and you can see it with pqa -s fast view.

Maybe you have some new settings you want to save? You can do that with

pqa -s my_new_settings --temperature 0.5 --llm foo-bar-5 save

and then you can use it with

pqa -s my_new_settings ask 'What manufacturing challenges are unique to bispecific antibodies?'

If you run pqa with a command which requires a new indexing, say if you change the default chunk_size, a new index will automatically be created for you.

pqa --parsing.chunk_size 5000 ask 'What manufacturing challenges are unique to bispecific antibodies?'

You can also use pqa to do full-text search with use of LLMs via the search command. For example, let's save the index from a directory and give it a name:

pqa -i nanomaterials index

Now I can search for papers about thermoelectrics:

pqa -i nanomaterials search thermoelectrics

or I can use the normal ask

pqa -i nanomaterials ask 'Are there nm scale features in thermoelectric materials?'

Both the CLI and module have pre-configured settings based on prior performance and our publications. They can be invoked as follows:

pqa --settings <setting name> ask 'Are there nm scale features in thermoelectric materials?'

Inside paperqa/configs we bundle known useful settings:

| Setting Name | Description |

|---|---|

| high_quality | Highly performant, relatively expensive (due to having evidence_k = 15) query using a ToolSelector agent. |

| fast | Setting to get answers cheaply and quickly. |

| wikicrow | Setting to emulate the Wikipedia article writing used in our WikiCrow publication. |

| contracrow | Setting to find contradictions in papers, your query should be a claim that needs to be flagged as a contradiction (or not). |

| debug | Setting useful solely for debugging, but not in any actual application beyond debugging. |

| tier1_limits | Settings that match OpenAI rate limits for each tier, you can use tier<1-5>_limits to specify the tier. |

If you are hitting rate limits, say with the OpenAI Tier 1 plan, you can add them into PaperQA2. For each OpenAI tier, a pre-built setting exists to limit usage.

pqa --settings 'tier1_limits' ask 'Are there nm scale features in thermoelectric materials?'

This will limit your system to use the tier1_limits, and slow down your queries to accommodate.

You can also specify them manually with any rate limit string that matches the specification in the limits module:

pqa --summary_llm_config '{"rate_limit": {"gpt-4o-2024-08-06": "30000 per 1 minute"}}' ask 'Are there nm scale features in thermoelectric materials?'

Or by adding into a Settings object, if calling imperatively:

from paperqa import Settings, ask

answer = ask(

"What manufacturing challenges are unique to bispecific antibodies?",

settings=Settings(

llm_config={"rate_limit": {"gpt-4o-2024-08-06": "30000 per 1 minute"}},

summary_llm_config={"rate_limit": {"gpt-4o-2024-08-06": "30000 per 1 minute"}},

),

)

PaperQA2's full workflow can be accessed via Python directly:

from paperqa import Settings, ask

answer = ask(

"What manufacturing challenges are unique to bispecific antibodies?",

settings=Settings(temperature=0.5, paper_directory="my_papers"),

)

Please see our installation docs for how to install the package from PyPI.

The answer object has the following attributes: formatted_answer, answer (answer alone), question, and context (the summaries of passages found for answer).

ask will use the SearchPapers tool, which will query a local index of files. You can specify this location via the Settings object:

from paperqa import Settings, ask

answer = ask(

"What manufacturing challenges are unique to bispecific antibodies?",

settings=Settings(temperature=0.5, paper_directory="my_papers"),

)

ask is just a convenience wrapper around the real entrypoint, which can be accessed if you'd like to run concurrent asynchronous workloads:

from paperqa import Settings, agent_query, QueryRequest

answer = await agent_query(

QueryRequest(

query="What manufacturing challenges are unique to bispecific antibodies?",

settings=Settings(temperature=0.5, paper_directory="my_papers"),

)

)

The default agent will use an LLM based agent,

but you can also specify a "fake" agent to use a hard coded call path of search -> gather evidence -> answer to reduce token usage.

Normally via agent execution, the agent invokes the search tool,

which adds documents to the Docs object for you behind the scenes.

However, if you prefer fine-grained control,

you can directly interact with the Docs object.

Note that manually adding and querying Docs does not impact performance.

It just removes the automation associated with an agent picking the documents to add.

from paperqa import Docs, Settings

# valid extensions include .pdf, .txt, and .html
doc_paths = ("myfile.pdf", "myotherfile.pdf")

# Prepare the Docs object by adding a bunch of documents
docs = Docs()
for doc_path in doc_paths:
    docs.add(doc_path)

# Set up how we want to query the Docs object
settings = Settings()
settings.llm = "claude-3-5-sonnet-20240620"
settings.answer.answer_max_sources = 3

# Query the Docs object to get an answer
session = docs.query(
    "What manufacturing challenges are unique to bispecific antibodies?",
    settings=settings,
)
print(session)

PaperQA2 is written to be used asynchronously.

The synchronous API is just a wrapper around the async.

Here are the methods and their async equivalents:

| Sync | Async |

|---|---|

| Docs.add | Docs.aadd |

| Docs.add_file | Docs.aadd_file |

| Docs.add_url | Docs.aadd_url |

| Docs.get_evidence | Docs.aget_evidence |

| Docs.query | Docs.aquery |

The synchronous version just calls the async version in a loop.
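The wrapper pattern described here can be sketched in a few lines. This is a generic illustration, not PaperQA2's code: ToyDocs is a hypothetical class, and asyncio.sleep(0) stands in for real asynchronous work such as parsing or embedding.

```python
import asyncio


class ToyDocs:
    """Hypothetical stand-in for a Docs-like class; not the paperqa implementation."""

    def __init__(self) -> None:
        self.names: list[str] = []

    async def aadd(self, path: str) -> str:
        """Async method that does the actual work."""
        await asyncio.sleep(0)  # placeholder for real async I/O
        self.names.append(path)
        return path

    def add(self, path: str) -> str:
        """Sync wrapper: run the coroutine to completion on an event loop."""
        return asyncio.run(self.aadd(path))


docs = ToyDocs()
docs.add("myfile.pdf")
print(docs.names)
```

A wrapper written this way with asyncio.run cannot be called while an event loop is already running, so in that situation the async method should be awaited directly.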

Most modern python environments support async natively (including Jupyter notebooks!).

So you can do this in a Jupyter Notebook:

import asyncio

from paperqa import Docs


async def main() -> None:
    docs = Docs()
    # valid extensions include .pdf, .txt, and .html
    for doc in ("myfile.pdf", "myotherfile.pdf"):
        await docs.aadd(doc)
    session = await docs.aquery(
        "What manufacturing challenges are unique to bispecific antibodies?"
    )
    print(session)


# In a notebook, where an event loop is already running, use `await main()` instead
asyncio.run(main())

By default, PaperQA2 uses OpenAI's gpt-4o-2024-08-06 for both the re-ranking and summary step (the summary_llm setting) and the answering step (the llm setting). You can adjust this easily:

from paperqa import Settings, ask

answer = ask(

"What manufacturing challenges are unique to bispecific antibodies?",

settings=Settings(

llm="gpt-4o-mini", summary_llm="gpt-4o-mini", paper_directory="my_papers"

),

)

You can use Anthropic or any other model supported by litellm:

from paperqa import Settings, ask

answer = ask(

"What manufacturing challenges are unique to bispecific antibodies?",

settings=Settings(

llm="claude-3-5-sonnet-20240620", summary_llm="claude-3-5-sonnet-20240620"

),

)

You can use llama.cpp to be the LLM. Note that you should be using relatively large models, because PaperQA2 requires following a lot of instructions. You won't get good performance with 7B models.

The easiest way to get set-up is to download a llama file and execute it with -cb -np 4 -a my-llm-model --embedding which will enable continuous batching and embeddings.

from paperqa import Settings, ask

local_llm_config = dict(

model_list=[

dict(

model_name="my-llm-model",

litellm_params=dict(

model="my-llm-model",

api_base="http://localhost:8080/v1",

api_key="sk-no-key-required",

temperature=0.1,

frequency_penalty=1.5,

max_tokens=512,

),

)

]

)

answer = ask(

"What manufacturing challenges are unique to bispecific antibodies?",

settings=Settings(

llm="my-llm-model",

llm_config=local_llm_config,

summary_llm="my-llm-model",

summary_llm_config=local_llm_config,

),

)

Models hosted with ollama are also supported.

To run the example below make sure you have downloaded llama3.2 and mxbai-embed-large via ollama.

from paperqa import Settings, ask

local_llm_config = {

"model_list": [

{

"model_name": "ollama/llama3.2",

"litellm_params": {

"model": "ollama/llama3.2",

"api_base": "http://localhost:11434",

},

}

]

}

answer = ask(

"What manufacturing challenges are unique to bispecific antibodies?",

settings=Settings(

llm="ollama/llama3.2",

llm_config=local_llm_config,

summary_llm="ollama/llama3.2",

summary_llm_config=local_llm_config,

embedding="ollama/mxbai-embed-large",

),

)

PaperQA2 defaults to using OpenAI (text-embedding-3-small) embeddings, but has flexible options for both vector stores and embedding choices. The simplest way to change an embedding is via the embedding argument to the Settings object constructor:

from paperqa import Settings, ask

answer = ask(

"What manufacturing challenges are unique to bispecific antibodies?",

settings=Settings(embedding="text-embedding-3-large"),

)

embedding accepts any embedding model name supported by litellm. PaperQA2 also supports an embedding input of "hybrid-<model_name>", e.g. "hybrid-text-embedding-3-small", to use a hybrid of a sparse keyword embedding (based on a token modulo embedding) and a dense vector embedding, where any litellm model can be used in the dense model name. "sparse" can be used to use a sparse keyword embedding only.
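To give a feel for what a "token modulo" sparse embedding means, here is a minimal sketch. It rests on assumptions: tokens are whitespace-split words and token ids come from zlib.crc32, whereas the library's own tokenizer and id scheme may differ. The function names sparse_embed and cosine are hypothetical.

```python
import zlib


def sparse_embed(text: str, ndim: int = 256) -> list[float]:
    """Count tokens into ndim buckets, bucket index = token id modulo ndim."""
    vec = [0.0] * ndim
    for token in text.lower().split():
        token_id = zlib.crc32(token.encode("utf-8"))  # stable integer id (assumption)
        vec[token_id % ndim] += 1.0
    return vec


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity, returning 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = sum(x * x for x in a) ** 0.5
    norm_b = sum(y * y for y in b) ** 0.5
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


a = sparse_embed("bispecific antibodies manufacturing")
b = sparse_embed("manufacturing bispecific antibodies at scale")
print(len(a), sum(a), cosine(a, b) > 0)
```

Texts that share keywords land in the same buckets and therefore score a positive similarity, regardless of word order. A smaller ndim makes unrelated words collide more often, which is the trade-off behind choosing the number of dimensions.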

Embedding models are used to create PaperQA2's index of the full-text embedding vectors (texts_index argument). The embedding model can be specified as a setting when you are adding new papers to the Docs object:

from paperqa import Docs, Settings

docs = Docs()
for doc in ("myfile.pdf", "myotherfile.pdf"):
    docs.add(doc, settings=Settings(embedding="text-embedding-3-large"))

Note that PaperQA2 uses Numpy as a dense vector store.

Its design of using a keyword search initially reduces the number of chunks needed for each answer to a relatively small number (< 1k).

Therefore, NumpyVectorStore is a good place to start: it's a simple in-memory store, without an index.

However, if a larger-than-memory vector store is needed, you can use an external vector database like Qdrant via the QdrantVectorStore class.

The hybrid embeddings can be customized:

from paperqa import (
    Docs,
    HybridEmbeddingModel,
    SparseEmbeddingModel,
    LiteLLMEmbeddingModel,
)

model = HybridEmbeddingModel(
    models=[LiteLLMEmbeddingModel(), SparseEmbeddingModel(ndim=1024)]
)
docs = Docs()
for doc in ("myfile.pdf", "myotherfile.pdf"):
    docs.add(doc, embedding_model=model)

The sparse embedding (keyword) models default to having 256 dimensions, but this can be specified via the ndim argument.

You can use a SentenceTransformerEmbeddingModel model if you install sentence-transformers, which is a local embedding library with support for HuggingFace models and more. You can install it by adding the local extras.

pip install paper-qa[local]

and then prefix embedding model names with st-:

from paperqa import Settings, ask

answer = ask(

"What manufacturing challenges are unique to bispecific antibodies?",

settings=Settings(embedding="st-multi-qa-MiniLM-L6-cos-v1"),

)

or with a hybrid model

from paperqa import Settings, ask

answer = ask(

"What manufacturing challenges are unique to bispecific antibodies?",

settings=Settings(embedding="hybrid-st-multi-qa-MiniLM-L6-cos-v1"),

)

You can adjust the number of sources (passages of text) to reduce token usage or add more context. k refers to the top k most relevant and diverse (possibly from different sources) passages. Each passage is sent to the LLM to summarize, or determine if it is irrelevant. After this step, a limit of max_sources is applied so that the final answer can fit into the LLM context window. Thus, k > max_sources and max_sources is the number of sources used in the final answer.

from paperqa import Settings

settings = Settings()

settings.answer.answer_max_sources = 3

settings.answer.k = 5

docs.query(

"What manufacturing challenges are unique to bispecific antibodies?",

settings=settings,

)

You do not need to use papers -- you can use code or raw HTML. Note that this tool is focused on answering questions, so it won't do well at writing code. One note is that the tool cannot infer citations from code, so you will need to provide them yourself.

import glob
import os

from paperqa import Docs

# recursive=True is needed for "**" to match nested directories
source_files = glob.glob("**/*.js", recursive=True)

docs = Docs()
for f in source_files:
    # this assumes the file names are unique in code
    docs.add(f, citation="File " + os.path.basename(f), docname=os.path.basename(f))
session = docs.query("Where is the search bar in the header defined?")
print(session)

You may want to cache parsed texts and embeddings in an external database or file. You can then build a Docs object from those directly:

from paperqa import Docs, Doc, Text

docs = Docs()
for ... in my_docs:
    doc = Doc(docname=..., citation=..., dockey=...)
    texts = [Text(text=..., name=..., doc=doc) for ... in my_texts]
    docs.add_texts(texts, doc)

Indexes will be placed in the home directory by default.

This can be controlled via the PQA_HOME environment variable.

Indexes are made by reading files in the Settings.paper_directory.

By default, we recursively read from subdirectories of the paper directory,

unless disabled using Settings.index_recursively.

The paper directory is not modified in any way, it's just read from.

The indexing process attempts to infer paper metadata like title and DOI using LLM-powered text processing. You can avoid this point of uncertainty using a "manifest" file, which is a CSV containing three columns (order doesn't matter):

- file_location: relative path to the paper's PDF within the index directory

- doi: DOI of the paper

- title: title of the paper

By providing this information, we ensure queries to metadata providers like Crossref are accurate.
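As an illustration, a manifest with those three columns can be written with Python's csv module. This is only a sketch: the file name bran2024.pdf and the temporary output directory are hypothetical, and how the manifest path is handed to the indexer is not covered here.

```python
import csv
import tempfile
from pathlib import Path

# One row per paper; the PDF file name is a made-up example
rows = [
    {
        "file_location": "bran2024.pdf",
        "doi": "10.1038/s42256-024-00832-8",
        "title": "Augmenting large language models with chemistry tools",
    },
]

manifest_path = Path(tempfile.mkdtemp()) / "manifest.csv"
with manifest_path.open("w", newline="", encoding="utf-8") as f:
    writer = csv.DictWriter(f, fieldnames=["file_location", "doi", "title"])
    writer.writeheader()
    writer.writerows(rows)

# Read the manifest back to confirm it round-trips
with manifest_path.open(newline="", encoding="utf-8") as f:
    loaded = list(csv.DictReader(f))
print(loaded[0]["doi"])
```

Using DictWriter keeps the header and the rows in sync, and since column order doesn't matter, the fieldnames list can be in any order.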

The local search indexes are built based on a hash of the current Settings object.

So make sure you properly specify the paper_directory to your Settings object.
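The consequence of naming an index after a hash of the settings can be seen in a small sketch. The function toy_index_name and the pqa_index_ prefix are hypothetical, and the library may hash different fields in a different way. The point is only that any change to a hashed setting yields a different index name, and so a new index.

```python
import hashlib
import json


def toy_index_name(settings: dict) -> str:
    """Derive a deterministic name from settings (illustrative, not paperqa's scheme)."""
    canonical = json.dumps(settings, sort_keys=True)  # key order must not matter
    digest = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return f"pqa_index_{digest[:16]}"


base = {"paper_directory": "my_papers", "chunk_size": 3000}
same = {"chunk_size": 3000, "paper_directory": "my_papers"}
changed = {"paper_directory": "my_papers", "chunk_size": 5000}

print(toy_index_name(base) == toy_index_name(same))     # same settings, same index
print(toy_index_name(base) == toy_index_name(changed))  # changed setting, new index
```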

In general, it's advisable to:

- Pre-build an index given a folder of papers (can take several minutes)

- Reuse the index to perform many queries

import os

from paperqa import Settings
from paperqa.agents.main import agent_query
from paperqa.agents.models import QueryRequest
from paperqa.agents.search import get_directory_index


async def amain(folder_of_papers: str | os.PathLike) -> None:
    settings = Settings(paper_directory=folder_of_papers)

    # 1. Build the index. Note an index name is autogenerated when unspecified
    built_index = await get_directory_index(settings=settings)
    print(settings.get_index_name())  # Display the autogenerated index name
    print(await built_index.index_files)  # Display the index contents

    # 2. Use the settings as many times as you want with ask
    answer_response_1 = await agent_query(
        query=QueryRequest(
            query="What is the best way to make a vaccine?", settings=settings
        )
    )
    answer_response_2 = await agent_query(
        query=QueryRequest(
            query="What manufacturing challenges are unique to bispecific antibodies?",
            settings=settings,
        )
    )

In paperqa/agents/task.py, you will find:

- GradablePaperQAEnvironment: an environment that can grade answers given an evaluation function.

- LitQAv2TaskDataset: a task dataset designed to pull LitQA v2 from Hugging Face, and create one GradablePaperQAEnvironment per question

Here is an example of how to use them:

import os

from aviary.env import TaskDataset
from ldp.agent import SimpleAgent
from ldp.alg.callbacks import MeanMetricsCallback
from ldp.alg.runners import Evaluator, EvaluatorConfig

from paperqa import QueryRequest, Settings
from paperqa.agents.task import TASK_DATASET_NAME


async def evaluate(folder_of_litqa_v2_papers: str | os.PathLike) -> None:
    base_query = QueryRequest(
        settings=Settings(paper_directory=folder_of_litqa_v2_papers)
    )
    dataset = TaskDataset.from_name(TASK_DATASET_NAME, base_query=base_query)
    metrics_callback = MeanMetricsCallback(eval_dataset=dataset)

    evaluator = Evaluator(
        config=EvaluatorConfig(batch_size=3),
        agent=SimpleAgent(),
        dataset=dataset,
        callbacks=[metrics_callback],
    )
    await evaluator.evaluate()

    print(metrics_callback.eval_means)

One of the most powerful features of PaperQA2 is its ability to combine data from multiple metadata sources. For example, Unpaywall can provide open access status/direct links to PDFs, Crossref can provide bibtex, and Semantic Scholar can provide citation licenses. Here's a short demo of how to do this:

from paperqa.clients import DocMetadataClient, ALL_CLIENTS

client = DocMetadataClient(clients=ALL_CLIENTS)

details = await client.query(title="Augmenting language models with chemistry tools")

print(details.formatted_citation)

# Andres M. Bran, Sam Cox, Oliver Schilter, Carlo Baldassari, Andrew D. White, and Philippe Schwaller.

# Augmenting large language models with chemistry tools. Nature Machine Intelligence,

# 6:525-535, May 2024. URL: https://doi.org/10.1038/s42256-024-00832-8,

# doi:10.1038/s42256-024-00832-8.

# This article has 243 citations and is from a domain leading peer-reviewed journal.

print(details.citation_count)

# 243

print(details.license)

# cc-by

print(details.pdf_url)

# https://www.nature.com/articles/s42256-024-00832-8.pdf

The client.query is meant to check for exact matches of title. It is somewhat robust (e.g. to casing or a missing word). There are duplicates for titles though, so you can also add authors to disambiguate. Or you can provide a doi directly: client.query(doi="10.1038/s42256-024-00832-8").

If you're doing this at a large scale, you may not want to use ALL_CLIENTS (just omit the argument) and you can specify which specific fields you want to speed up queries. For example:

details = await client.query(

title="Augmenting large language models with chemistry tools",

authors=["Andres M. Bran", "Sam Cox"],

fields=["title", "doi"],

)

will return much faster than the first query and we'll be certain the authors match.
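The idea of combining several metadata sources can be pictured as filling in missing fields from one provider with values from another. The sketch below is not the DocMetadataClient logic: merge_metadata is a hypothetical helper, and which provider supplies which field is only an illustrative assumption, using values from the demo output above.

```python
def merge_metadata(*provider_results: dict) -> dict:
    """Merge provider records; earlier providers win, later ones fill gaps."""
    merged: dict = {}
    for result in provider_results:
        for field, value in result.items():
            if value is not None and merged.get(field) is None:
                merged[field] = value
    return merged


# Illustrative records only; real provider responses have a different shape
crossref = {
    "title": "Augmenting large language models with chemistry tools",
    "doi": "10.1038/s42256-024-00832-8",
    "license": None,
}
unpaywall = {
    "doi": "10.1038/s42256-024-00832-8",
    "pdf_url": "https://www.nature.com/articles/s42256-024-00832-8.pdf",
}
semantic_scholar = {"citation_count": 243, "license": "cc-by"}

details = merge_metadata(crossref, unpaywall, semantic_scholar)
print(sorted(details))
```

Querying fewer providers or fewer fields means fewer records to fetch and merge, which is why restricting fields speeds up large-scale lookups.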

Well that's a really good question! It's probably best to just download PDFs of papers you think will help answer your question and start from there.

It's been a while since we've tested this - so let us know if it runs into issues!

If you use Zotero to organize your personal bibliography,

you can use the paperqa.contrib.ZoteroDB to query papers from your library,

which relies on pyzotero.

Install pyzotero via the zotero extra for this feature:

pip install paper-qa[zotero]

First, note that PaperQA2 parses the PDFs of papers to store in the database, so all relevant papers should have PDFs stored inside your database. You can get Zotero to automatically do this by highlighting the references you wish to retrieve, right clicking, and selecting "Find Available PDFs". You can also manually drag-and-drop PDFs onto each reference.

To download papers, you need to get an API key for your account.

- Get your library ID, and set it as the environment variable ZOTERO_USER_ID.

  - For personal libraries, this ID is given here at the part "Your userID for use in API calls is XXXXXX".

  - For group libraries, go to your group page https://www.zotero.org/groups/groupname, and hover over the settings link. The ID is the integer after /groups/. (h/t pyzotero!)

- Create a new API key here and set it as the environment variable ZOTERO_API_KEY.

  - The key will need read access to the library.

With this, we can download papers from our library and add them to PaperQA2:

from paperqa import Docs
from paperqa.contrib import ZoteroDB

docs = Docs()
zotero = ZoteroDB(library_type="user")  # "group" if group library

for item in zotero.iterate(limit=20):
    if item.num_pages > 30:
        continue  # skip long papers
    docs.add(item.pdf, docname=item.key)

which will download the first 20 papers in your Zotero database and add them to the Docs object, skipping any that are longer than 30 pages.

We can also do specific queries of our Zotero library and iterate over the results:

for item in zotero.iterate(
    q="large language models",
    qmode="everything",
    sort="date",
    direction="desc",
    limit=100,
):
    print("Adding", item.title)
    docs.add(item.pdf, docname=item.key)

You can read more about the search syntax by typing zotero.iterate? in IPython.

If you want to search for papers outside of your own collection, I've found an unrelated project called paper-scraper that looks like it might help. But beware, this project looks like it uses some scraping tools that may violate publisher's rights or be in a gray area of legality.

import paperscraper

from paperqa import Docs

keyword_search = "bispecific antibody manufacture"
papers = paperscraper.search_papers(keyword_search)

docs = Docs()
for path, data in papers.items():
    try:
        docs.add(path)
    except ValueError as e:
        # sometimes this happens if PDFs aren't downloaded or readable
        print("Could not read", path, e)

session = docs.query(
    "What manufacturing challenges are unique to bispecific antibodies?"
)
print(session)

To execute a function on each chunk of LLM completions, you need to provide a function that can be executed on each chunk. For example, to get a typewriter view of the completions, you can do:

from paperqa import Docs


def typewriter(chunk: str) -> None:
    print(chunk, end="")


docs = Docs()
# add some docs...

docs.query(
    "What manufacturing challenges are unique to bispecific antibodies?",
    callbacks=[typewriter],
)

In general, embeddings are cached when you pickle a Docs regardless of what vector store you use. So as long as you save your underlying Docs object, you should be able to avoid re-embedding your documents.

You can customize any of the prompts using settings.

from paperqa import Docs, Settings

my_qa_prompt = (

"Answer the question '{question}'\n"

"Use the context below if helpful. "

"You can cite the context using the key like (Example2012). "

"If there is insufficient context, write a poem "

"about how you cannot answer.\n\n"

"Context: {context}"

)

docs = Docs()

settings = Settings()

settings.prompts.qa = my_qa_prompt

docs.query("Are covid-19 vaccines effective?", settings=settings)

Following the syntax above, you can also include prompts that are executed after the query and before the query. For example, you can use this to critique the answer.

Internally at FutureHouse, we have a slightly different set of tools. We're trying to get some of them, like citation traversal, into this repo. However, we have APIs and licenses to access research papers that we cannot share openly. Similarly, in our research papers' results we do not start with the known relevant PDFs. Our agent has to identify them using keyword search over all papers, rather than just a subset. We're gradually aligning these two versions of PaperQA, but until there is an open-source way to freely access papers (even just open source papers) you will need to provide PDFs yourself.

LangChain and LlamaIndex are both frameworks for working with LLM applications, with abstractions made for agentic workflows and retrieval augmented generation.

Over time, the PaperQA team chose to become framework-agnostic, instead outsourcing LLM drivers to LiteLLM and using no framework besides Pydantic for its tools. PaperQA focuses on scientific papers and their metadata.

PaperQA can be reimplemented using either LlamaIndex or LangChain.

For example, our GatherEvidence tool can be reimplemented

as a retriever with an LLM-based re-ranking and contextual summary.

There is similar work with the tree response method in LlamaIndex.

The Docs class can be pickled and unpickled. This is useful if you want to save the embeddings of the documents and then load them later.

import pickle

# save
with open("my_docs.pkl", "wb") as f:
    pickle.dump(docs, f)

# load
with open("my_docs.pkl", "rb") as f:
    docs = pickle.load(f)

Contained in docs/2024-10-16_litqa2-splits.json5 are the question IDs (correspond with LAB-Bench's LitQA2 question IDs) used in the train and evaluation splits, as well as paper DOIs used to build the train and evaluation splits' indexes. The test split remains held out.

Please read and cite the following papers if you use this software:

@article{skarlinski2024language,
    title = {Language agents achieve superhuman synthesis of scientific knowledge},
    author = {
    Michael D. Skarlinski and
    Sam Cox and
    Jon M. Laurent and
    James D. Braza and
    Michaela Hinks and
    Michael J. Hammerling and
    Manvitha Ponnapati and
    Samuel G. Rodriques and
    Andrew D. White},
    year = {2024},
    journal = {arXiv preprint arXiv:2409.13740},
    url = {https://doi.org/10.48550/arXiv.2409.13740}
}

@article{lala2023paperqa,
    title = {PaperQA: Retrieval-Augmented Generative Agent for Scientific Research},
    author = {
    Jakub Lála and
    Odhran O'Donoghue and
    Aleksandar Shtedritski and
    Sam Cox and
    Samuel G. Rodriques and
    Andrew D. White},
    journal = {arXiv preprint arXiv:2312.07559},
    year = {2023}
}

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for paper-qa

Similar Open Source Tools

paper-qa

PaperQA is a minimal package for question and answering from PDFs or text files, providing very good answers with in-text citations. It uses OpenAI Embeddings to embed and search documents, and includes a process of embedding docs, queries, searching for top passages, creating summaries, using an LLM to re-score and select relevant summaries, putting summaries into prompt, and generating answers. The tool can be used to answer specific questions related to scientific research by leveraging citations and relevant passages from documents.

paper-qa

PaperQA is a minimal package for question and answering from PDFs or text files, providing very good answers with in-text citations. It uses OpenAI Embeddings to embed and search documents, and follows a process of embedding docs and queries, searching for top passages, creating summaries, scoring and selecting relevant summaries, putting summaries into prompt, and generating answers. Users can customize prompts and use various models for embeddings and LLMs. The tool can be used asynchronously and supports adding documents from paths, files, or URLs.

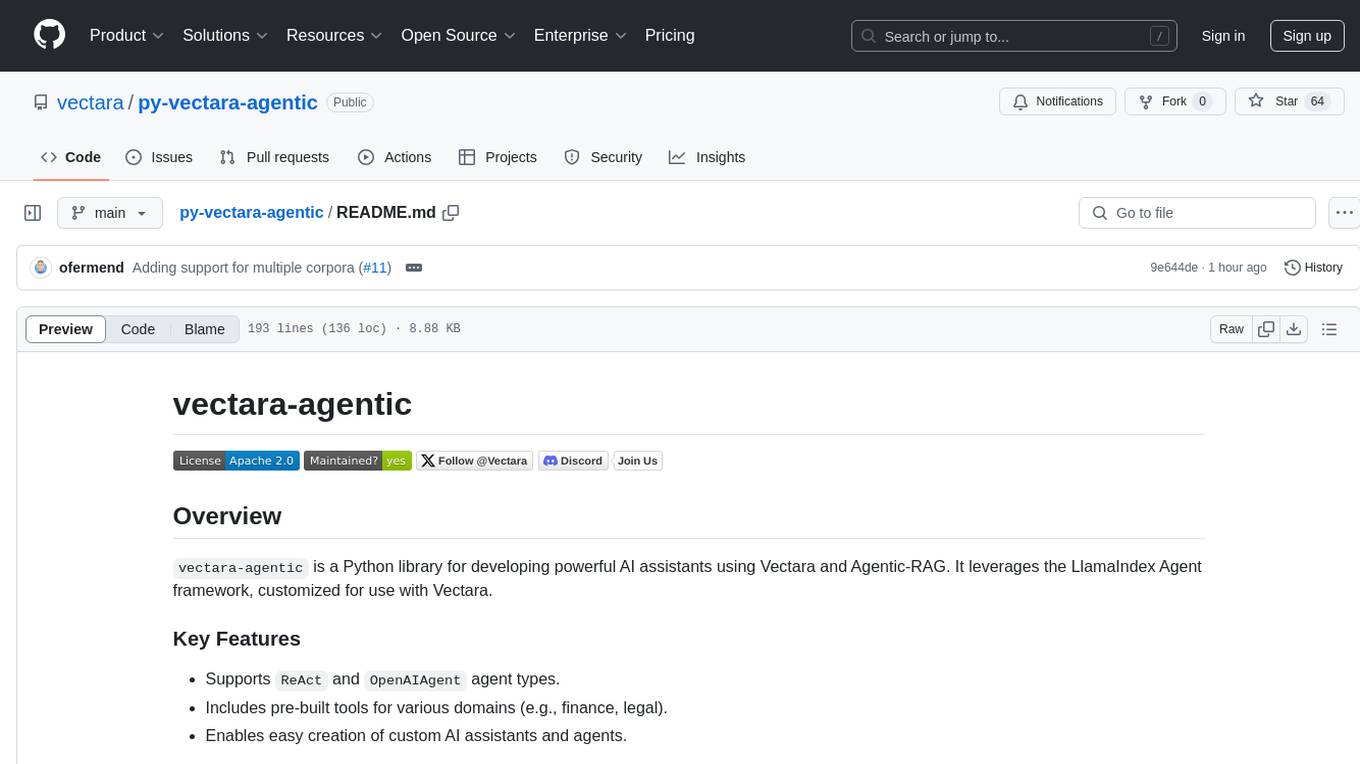

py-vectara-agentic

The `vectara-agentic` Python library is designed for developing powerful AI assistants using Vectara and Agentic-RAG. It supports various agent types, includes pre-built tools for domains like finance and legal, and enables easy creation of custom AI assistants and agents. The library provides tools for summarizing text, rephrasing text, legal tasks like summarizing legal text and critiquing as a judge, financial tasks like analyzing balance sheets and income statements, and database tools for inspecting and querying databases. It also supports observability via LlamaIndex and Arize Phoenix integration.

MultiPL-E

MultiPL-E is a system for translating unit test-driven neural code generation benchmarks to new languages. It is part of the BigCode Code Generation LM Harness and allows for evaluating Code LLMs using various benchmarks. The tool supports multiple versions with improvements and new language additions, providing a scalable and polyglot approach to benchmarking neural code generation. Users can access a tutorial for direct usage and explore the dataset of translated prompts on the Hugging Face Hub.

Trace

Trace is a new AutoDiff-like tool for training AI systems end-to-end with general feedback. It generalizes the back-propagation algorithm by capturing and propagating an AI system's execution trace. Implemented as a PyTorch-like Python library, users can write Python code directly and use Trace primitives to optimize certain parts, similar to training neural networks.

garak

Garak is a free tool that checks if a Large Language Model (LLM) can be made to fail in a way that is undesirable. It probes for hallucination, data leakage, prompt injection, misinformation, toxicity generation, jailbreaks, and many other weaknesses. Garak's a free tool. We love developing it and are always interested in adding functionality to support applications.

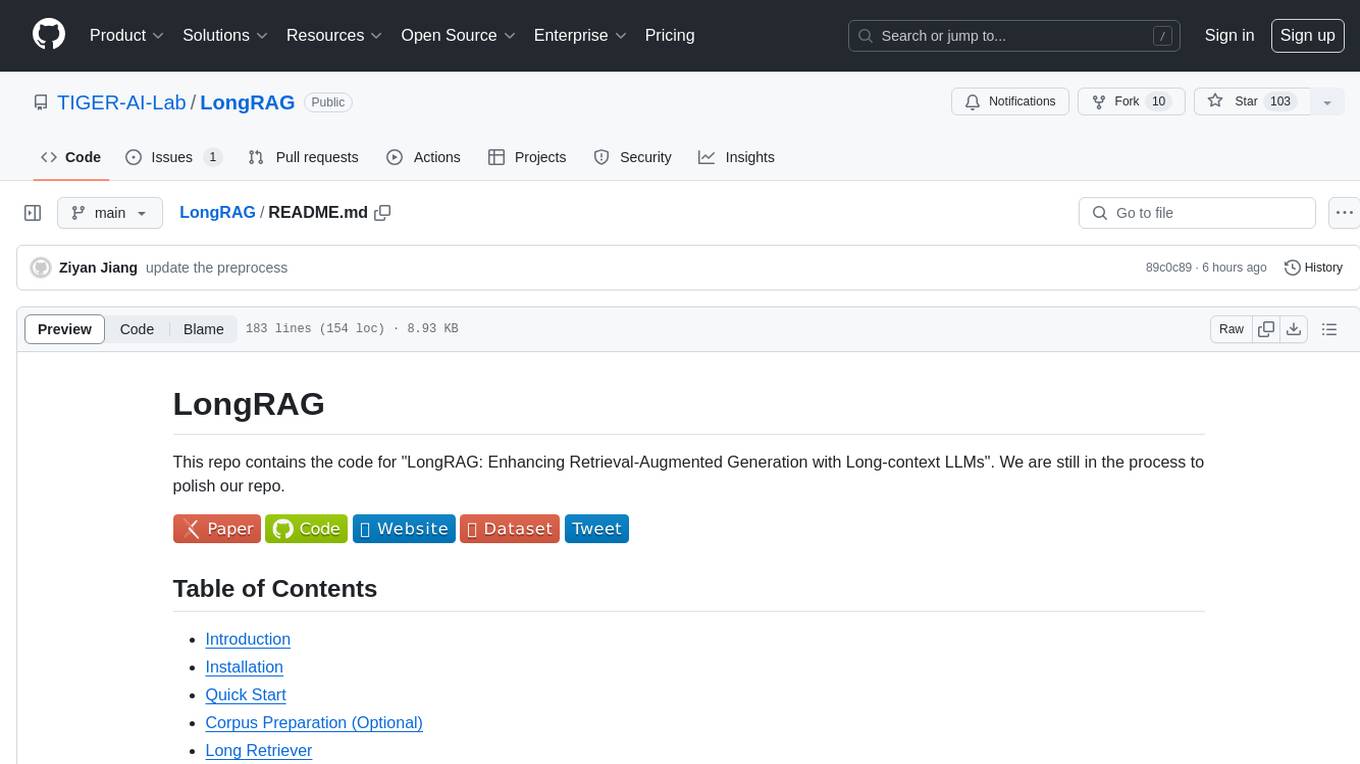

LongRAG

This repository contains the code for LongRAG, a framework that enhances retrieval-augmented generation with long-context LLMs. LongRAG introduces a 'long retriever' and a 'long reader' to improve performance by using a 4K-token retrieval unit, offering insights into combining RAG with long-context LLMs. The repo provides instructions for installation, quick start, corpus preparation, long retriever, and long reader.

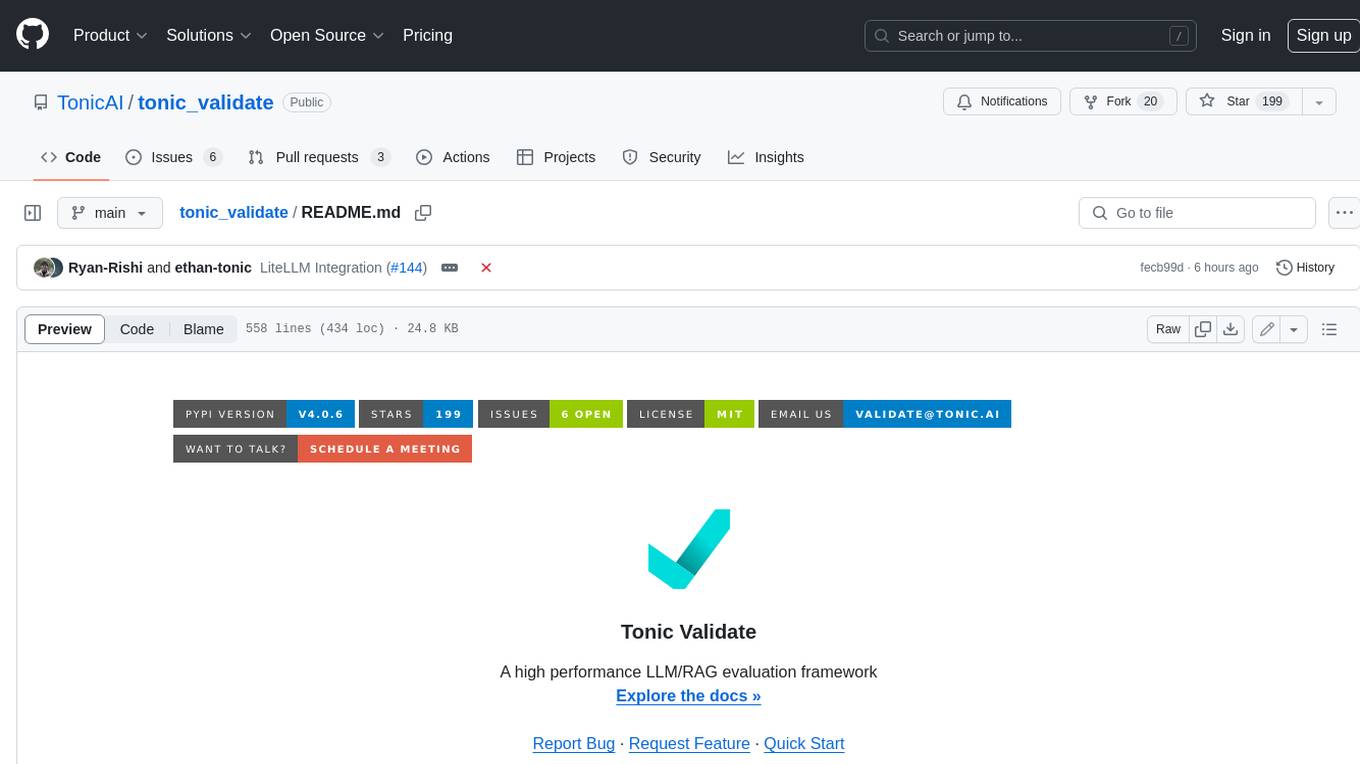

tonic_validate

Tonic Validate is a framework for the evaluation of LLM outputs, such as Retrieval Augmented Generation (RAG) pipelines. Validate makes it easy to evaluate, track, and monitor your LLM and RAG applications. Validate allows you to evaluate your LLM outputs through the use of our provided metrics which measure everything from answer correctness to LLM hallucination. Additionally, Validate has an optional UI to visualize your evaluation results for easy tracking and monitoring.

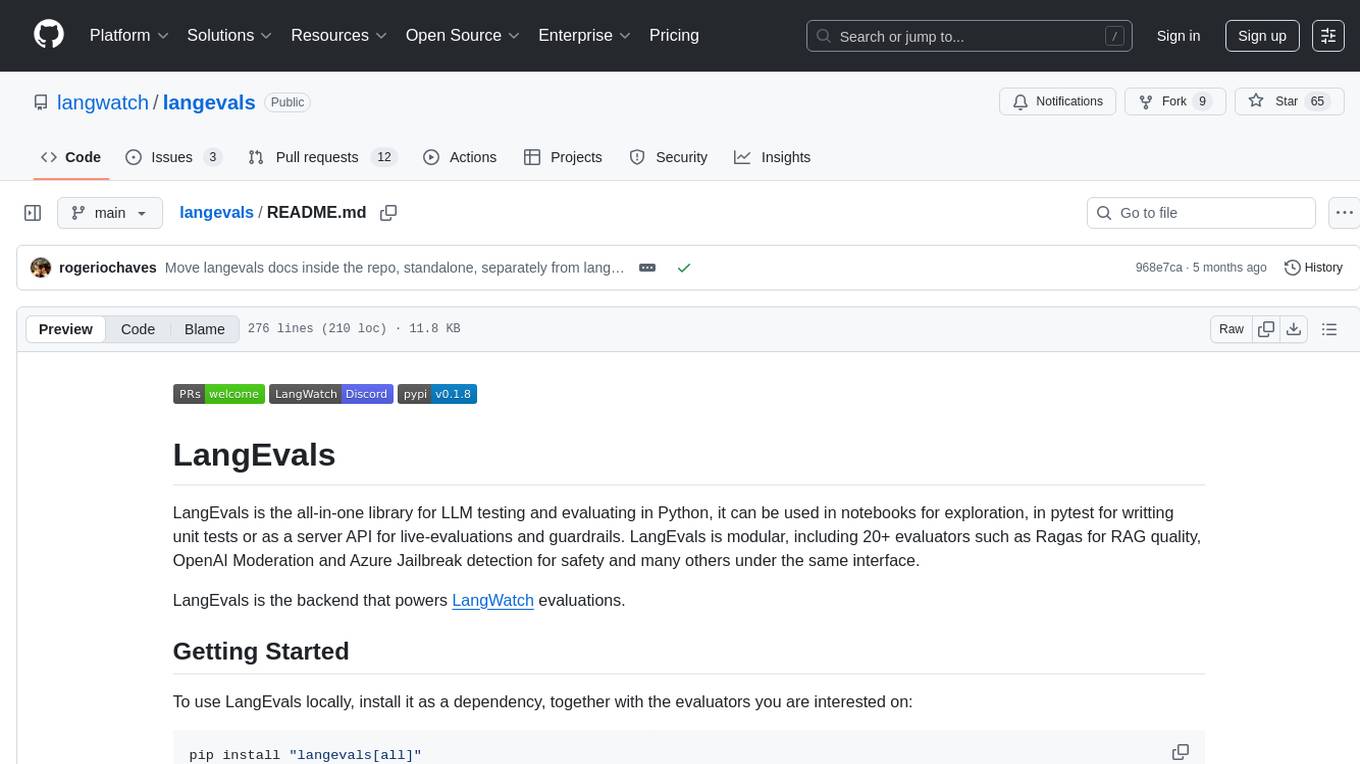

langevals

LangEvals is an all-in-one Python library for testing and evaluating LLM models. It can be used in notebooks for exploration, in pytest for writing unit tests, or as a server API for live evaluations and guardrails. The library is modular, with 20+ evaluators including Ragas for RAG quality, OpenAI Moderation, and Azure Jailbreak detection. LangEvals powers LangWatch evaluations and provides tools for batch evaluations on notebooks and unit test evaluations with PyTest. It also offers LangEvals evaluators for LLM-as-a-Judge scenarios and out-of-the-box evaluators for language detection and answer relevancy checks.

LeanCopilot

Lean Copilot is a tool that enables the use of large language models (LLMs) in Lean for proof automation. It provides features such as suggesting tactics/premises, searching for proofs, and running inference of LLMs. Users can utilize built-in models from LeanDojo or bring their own models to run locally or on the cloud. The tool supports platforms like Linux, macOS, and Windows WSL, with optional CUDA and cuDNN for GPU acceleration. Advanced users can customize behavior using Tactic APIs and Model APIs. Lean Copilot also allows users to bring their own models through ExternalGenerator or ExternalEncoder. The tool comes with caveats such as occasional crashes and issues with premise selection and proof search. Users can get in touch through GitHub Discussions for questions, bug reports, feature requests, and suggestions. The tool is designed to enhance theorem proving in Lean using LLMs.

OlympicArena

OlympicArena is a comprehensive benchmark designed to evaluate advanced AI capabilities across various disciplines. It aims to push AI towards superintelligence by tackling complex challenges in science and beyond. The repository provides detailed data for different disciplines, allows users to run inference and evaluation locally, and offers a submission platform for testing models on the test set. Additionally, it includes an annotation interface and encourages users to cite their paper if they find the code or dataset helpful.

LayerSkip

LayerSkip is an implementation enabling early exit inference and self-speculative decoding. It provides a code base for running models trained using the LayerSkip recipe, offering speedup through self-speculative decoding. The tool integrates with Hugging Face transformers and provides checkpoints for various LLMs. Users can generate tokens, benchmark on datasets, evaluate tasks, and sweep over hyperparameters to optimize inference speed. The tool also includes correctness verification scripts and Docker setup instructions. Additionally, other implementations like gpt-fast and Native HuggingFace are available. Training implementation is a work-in-progress, and contributions are welcome under the CC BY-NC license.

vectorflow

VectorFlow is an open source, high throughput, fault tolerant vector embedding pipeline. It provides a simple API endpoint for ingesting large volumes of raw data, processing, and storing or returning the vectors quickly and reliably. The tool supports text-based files like TXT, PDF, HTML, and DOCX, and can be run locally with Kubernetes in production. VectorFlow offers functionalities like embedding documents, running chunking schemas, custom chunking, and integrating with vector databases like Pinecone, Qdrant, and Weaviate. It enforces a standardized schema for uploading data to a vector store and supports features like raw embeddings webhook, chunk validation webhook, S3 endpoint, and telemetry. The tool can be used with the Python client and provides detailed instructions for running and testing the functionalities.

airflow-ai-sdk

This repository contains an SDK for working with LLMs from Apache Airflow, based on Pydantic AI. It allows users to call LLMs and orchestrate agent calls directly within their Airflow pipelines using decorator-based tasks. The SDK leverages the familiar Airflow `@task` syntax with extensions like `@task.llm`, `@task.llm_branch`, and `@task.agent`. Users can define tasks that call language models, orchestrate multi-step AI reasoning, change the control flow of a DAG based on LLM output, and support various models in the Pydantic AI library. The SDK is designed to integrate LLM workflows into Airflow pipelines, from simple LLM calls to complex agentic workflows.

kvpress

This repository implements multiple key-value cache pruning methods and benchmarks using transformers, aiming to simplify the development of new methods for researchers and developers in the field of long-context language models. It provides a set of 'presses' that compress the cache during the pre-filling phase, with each press having a compression ratio attribute. The repository includes various training-free presses, special presses, and supports KV cache quantization. Users can contribute new presses and evaluate the performance of different presses on long-context datasets.

Hurley-AI

Hurley AI is a next-gen framework for developing intelligent agents through Retrieval-Augmented Generation. It enables easy creation of custom AI assistants and agents, supports various agent types, and includes pre-built tools for domains like finance and legal. Hurley AI integrates with LLM inference services and provides observability with Arize Phoenix. Users can create Hurley RAG tools with a single line of code and customize agents with specific instructions. The tool also offers various helper functions to connect with Hurley RAG and search tools, along with pre-built tools for tasks like summarizing text, rephrasing text, understanding memecoins, and querying databases.

For similar tasks

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

onnxruntime-genai

ONNX Runtime Generative AI is a library that provides the generative AI loop for ONNX models, including inference with ONNX Runtime, logits processing, search and sampling, and KV cache management. Users can call a high level `generate()` method, or run each iteration of the model in a loop. It supports greedy/beam search and TopP, TopK sampling to generate token sequences, has built in logits processing like repetition penalties, and allows for easy custom scoring.

jupyter-ai

Jupyter AI connects generative AI with Jupyter notebooks. It provides a user-friendly and powerful way to explore generative AI models in notebooks and improve your productivity in JupyterLab and the Jupyter Notebook. Specifically, Jupyter AI offers: * An `%%ai` magic that turns the Jupyter notebook into a reproducible generative AI playground. This works anywhere the IPython kernel runs (JupyterLab, Jupyter Notebook, Google Colab, Kaggle, VSCode, etc.). * A native chat UI in JupyterLab that enables you to work with generative AI as a conversational assistant. * Support for a wide range of generative model providers, including AI21, Anthropic, AWS, Cohere, Gemini, Hugging Face, NVIDIA, and OpenAI. * Local model support through GPT4All, enabling use of generative AI models on consumer grade machines with ease and privacy.

khoj

Khoj is an open-source, personal AI assistant that extends your capabilities by creating always-available AI agents. You can share your notes and documents to extend your digital brain, and your AI agents have access to the internet, allowing you to incorporate real-time information. Khoj is accessible on Desktop, Emacs, Obsidian, Web, and Whatsapp, and you can share PDF, markdown, org-mode, notion files, and GitHub repositories. You'll get fast, accurate semantic search on top of your docs, and your agents can create deeply personal images and understand your speech. Khoj is self-hostable and always will be.

langchain_dart

LangChain.dart is a Dart port of the popular LangChain Python framework created by Harrison Chase. LangChain provides a set of ready-to-use components for working with language models and a standard interface for chaining them together to formulate more advanced use cases (e.g. chatbots, Q&A with RAG, agents, summarization, extraction, etc.). The components can be grouped into a few core modules: * **Model I/O:** LangChain offers a unified API for interacting with various LLM providers (e.g. OpenAI, Google, Mistral, Ollama, etc.), allowing developers to switch between them with ease. Additionally, it provides tools for managing model inputs (prompt templates and example selectors) and parsing the resulting model outputs (output parsers). * **Retrieval:** assists in loading user data (via document loaders), transforming it (with text splitters), extracting its meaning (using embedding models), storing (in vector stores) and retrieving it (through retrievers) so that it can be used to ground the model's responses (i.e. Retrieval-Augmented Generation or RAG). * **Agents:** "bots" that leverage LLMs to make informed decisions about which available tools (such as web search, calculators, database lookup, etc.) to use to accomplish the designated task. The different components can be composed together using the LangChain Expression Language (LCEL).

danswer

Danswer is an open-source Gen-AI Chat and Unified Search tool that connects to your company's docs, apps, and people. It provides a Chat interface and plugs into any LLM of your choice. Danswer can be deployed anywhere and for any scale - on a laptop, on-premise, or to cloud. Since you own the deployment, your user data and chats are fully in your own control. Danswer is MIT licensed and designed to be modular and easily extensible. The system also comes fully ready for production usage with user authentication, role management (admin/basic users), chat persistence, and a UI for configuring Personas (AI Assistants) and their Prompts. Danswer also serves as a Unified Search across all common workplace tools such as Slack, Google Drive, Confluence, etc. By combining LLMs and team specific knowledge, Danswer becomes a subject matter expert for the team. Imagine ChatGPT if it had access to your team's unique knowledge! It enables questions such as "A customer wants feature X, is this already supported?" or "Where's the pull request for feature Y?"

infinity

Infinity is an AI-native database designed for LLM applications, providing incredibly fast full-text and vector search capabilities. It supports a wide range of data types, including vectors, full-text, and structured data, and offers a fused search feature that combines multiple embeddings and full text. Infinity is easy to use, with an intuitive Python API and a single-binary architecture that simplifies deployment. It achieves high performance, with 0.1 milliseconds query latency on million-scale vector datasets and up to 15K QPS.

For similar jobs

SLR-FC

This repository provides a comprehensive collection of AI tools and resources to enhance literature reviews. It includes a curated list of AI tools for various tasks, such as identifying research gaps, discovering relevant papers, visualizing paper content, and summarizing text. Additionally, the repository offers materials on generative AI, effective prompts, copywriting, image creation, and showcases of AI capabilities. By leveraging these tools and resources, researchers can streamline their literature review process, gain deeper insights from scholarly literature, and improve the quality of their research outputs.

paper-ai

Paper-ai is a tool that helps you write papers using artificial intelligence. It provides features such as AI writing assistance, reference searching, and editing and formatting tools. With Paper-ai, you can quickly and easily create high-quality papers.

paper-qa

PaperQA is a minimal package for question and answering from PDFs or text files, providing very good answers with in-text citations. It uses OpenAI Embeddings to embed and search documents, and follows a process of embedding docs and queries, searching for top passages, creating summaries, scoring and selecting relevant summaries, putting summaries into prompt, and generating answers. Users can customize prompts and use various models for embeddings and LLMs. The tool can be used asynchronously and supports adding documents from paths, files, or URLs.

ChatData

ChatData is a robust chat-with-documents application designed to extract information and provide answers by querying the MyScale free knowledge base or uploaded documents. It leverages the Retrieval Augmented Generation (RAG) framework, millions of Wikipedia pages, and arXiv papers. Features include self-querying retriever, VectorSQL, session management, and building a personalized knowledge base. Users can effortlessly navigate vast data, explore academic papers, and research documents. ChatData empowers researchers, students, and knowledge enthusiasts to unlock the true potential of information retrieval.

noScribe

noScribe is an AI-based software designed for automated audio transcription, specifically tailored for transcribing interviews for qualitative social research or journalistic purposes. It is a free and open-source tool that runs locally on the user's computer, ensuring data privacy. The software can differentiate between speakers and supports transcription in 99 languages. It includes a user-friendly editor for reviewing and correcting transcripts. Developed by Kai Dröge, a PhD in sociology with a background in computer science, noScribe aims to streamline the transcription process and enhance the efficiency of qualitative analysis.

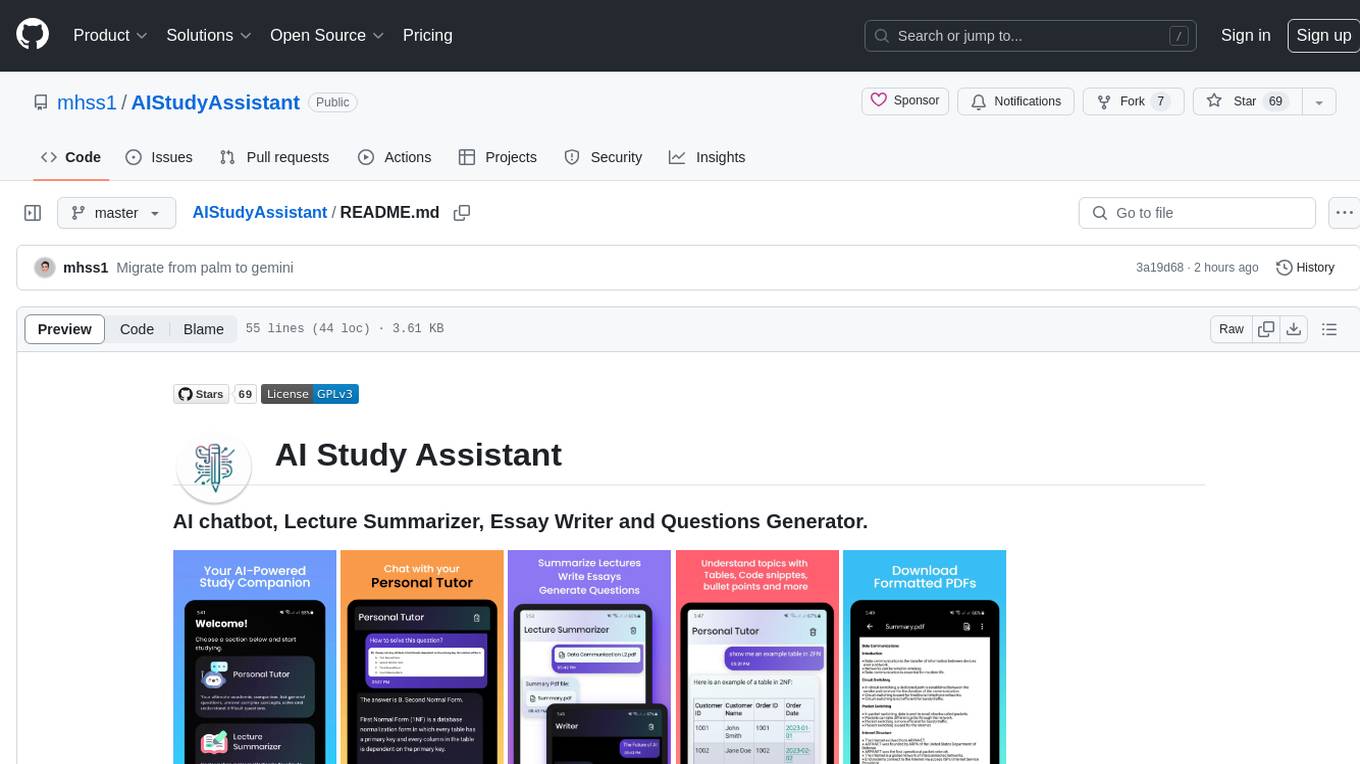

AIStudyAssistant

AI Study Assistant is an app designed to enhance learning experience and boost academic performance. It serves as a personal tutor, lecture summarizer, writer, and question generator powered by Google PaLM 2. Features include interacting with an AI chatbot, summarizing lectures, generating essays, and creating practice questions. The app is built using 100% Kotlin, Jetpack Compose, Clean Architecture, and MVVM design pattern, with technologies like Ktor, Room DB, Hilt, and Kotlin coroutines. AI Study Assistant aims to provide comprehensive AI-powered assistance for students in various academic tasks.

data-to-paper

Data-to-paper is an AI-driven framework designed to guide users through the process of conducting end-to-end scientific research, starting from raw data to the creation of comprehensive and human-verifiable research papers. The framework leverages a combination of LLM and rule-based agents to assist in tasks such as hypothesis generation, literature search, data analysis, result interpretation, and paper writing. It aims to accelerate research while maintaining key scientific values like transparency, traceability, and verifiability. The framework is field-agnostic, supports both open-goal and fixed-goal research, creates data-chained manuscripts, involves human-in-the-loop interaction, and allows for transparent replay of the research process.

k2

K2 (GeoLLaMA) is a large language model for geoscience, trained on geoscience literature and fine-tuned with knowledge-intensive instruction data. It outperforms baseline models on objective and subjective tasks. The repository provides K2 weights, core data of GeoSignal, GeoBench benchmark, and code for further pretraining and instruction tuning. The model is available on Hugging Face for use. The project aims to create larger and more powerful geoscience language models in the future.