proxyless-llm-websearch


Stars: 122

Proxyless-LLM-WebSearch is a tool that enables users to perform large language model-based web search without the need for proxies. It leverages state-of-the-art language models to provide accurate and efficient web search results. The tool is designed to be user-friendly and accessible for individuals looking to conduct web searches at scale. With Proxyless-LLM-WebSearch, users can easily search the web using natural language queries and receive relevant results in a timely manner. This tool is particularly useful for researchers, data analysts, content creators, and anyone interested in leveraging advanced language models for web search tasks.

README:

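A proxy-free, multi-search-engine web retrieval tool for LLMs. It supports URL content parsing and web crawling, and combines LangGraph with LangGraph-MCP to build modular agent pipelines. It is designed for scenarios where a large language model needs to call external knowledge: pages are fetched and parsed with Playwright + Crawl4AI, with support for asynchronous concurrency, content chunking, and reranking/filtering.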

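- 🔥 2025-09-05: Added langgraph-mcp support.
- 🔥 2025-09-03: Added Docker deployment and a built-in intelligent reranker; custom text splitters and rerankers are now supported.

- 🌐 No proxy required: Playwright is configured to support browsers available in mainland China, so web search works without a proxy.
- 🔍 Multiple search engines: supports Bing, Quark, Baidu, Sogou, and other mainstream search engines, diversifying the information sources.
- 🤖 Intent recognition: the system decides automatically, based on the user's input, whether to run a web search or parse a URL.
- 🔄 Query decomposition: based on the user's search intent, the query is automatically broken into several subtasks that are executed in sequence, improving relevance and efficiency.
- ⚙️ Agent architecture: **"web_search"** and **"link_parser"** agents built on LangGraph.
- 🏃‍♂️ Asynchronous concurrency: search tasks are processed asynchronously and concurrently, so multiple searches can be handled efficiently.
- 📝 Content processing:
  - ✂️ Chunking: long page content is split into segments.
  - 🔄 Reranking: results are reordered intelligently to improve relevance.
  - 🚫 Filtering: irrelevant or duplicate content is removed automatically.
- 🌐 Multi-platform support:
  - 🐳 Docker deployment: one-command startup for quickly building the backend service.
  - 🖥️ FastAPI backend that can be integrated into any system.
  - 🌍 Gradio Web UI that can be deployed quickly as a visual application.
  - 🧩 Browser extension: supports Edge and provides a smart URL-parsing plugin, so page parsing and content extraction requests can be issued directly from the browser.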
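The intent-recognition step described above can be pictured with a very small routing function. This is only a sketch under stated assumptions: the README does not say how the project makes this decision (it may well use the LLM), so a plain URL regex is swapped in here, and `detect_intent` and `URL_PATTERN` are hypothetical names. Only the two agent names, `web_search` and `link_parser`, come from the feature list.

```python
import re

# Hypothetical heuristic: the project's real intent recognition is not shown
# in the README; a URL regex is used here purely for illustration.
URL_PATTERN = re.compile(r"https?://\S+", re.IGNORECASE)


def detect_intent(user_input: str) -> dict:
    """Route the input to 'link_parser' if it contains a URL, else to 'web_search'."""
    urls = URL_PATTERN.findall(user_input)
    if urls:
        return {"intent": "link_parser", "urls": urls}
    return {"intent": "web_search", "urls": []}


if __name__ == "__main__":
    print(detect_intent("Summarize https://example.com/post"))
    print(detect_intent("Guangzhou weather today"))
```

A rule like this is cheap and deterministic, but it cannot handle inputs where a URL is mentioned only as context for a search, which is one reason a model-based classifier may be preferable.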

git clone https://github.com/itshyao/proxyless-llm-websearch.git

cd proxyless-llm-websearch

pip install -r requirements.txt

python -m playwright install

# Bailian LLM
OPENAI_BASE_URL=https://dashscope.aliyuncs.com/compatible-mode/v1
OPENAI_API_KEY=sk-xxx
MODEL_NAME=qwen-plus-latest

# Bailian embedding
EMBEDDING_BASE_URL=https://dashscope.aliyuncs.com/compatible-mode/v1
EMBEDDING_API_KEY=sk-xxx
EMBEDDING_MODEL_NAME=text-embedding-v4

# Bailian reranker
RERANK_BASE_URL=https://dashscope.aliyuncs.com/compatible-mode/v1
RERANK_API_KEY=sk-xxx
RERANK_MODEL=gte-rerank-v2
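As a rough illustration of how the settings above might be consumed, the sketch below reads one group of variables and fails early if any is missing. It assumes the values have been exported as environment variables; `EndpointConfig` and `load_endpoint` are hypothetical helpers, not part of the project. Only the variable names are taken from the configuration.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional


@dataclass(frozen=True)
class EndpointConfig:
    """One OpenAI-compatible endpoint: base URL, API key, and model name."""
    base_url: str
    api_key: str
    model: str


def load_endpoint(
    prefix: str, model_var: str, env: Optional[Mapping[str, str]] = None
) -> EndpointConfig:
    """Read <prefix>_BASE_URL, <prefix>_API_KEY and model_var from the environment."""
    env = os.environ if env is None else env
    names = [f"{prefix}_BASE_URL", f"{prefix}_API_KEY", model_var]
    missing = [name for name in names if not env.get(name)]
    if missing:
        raise KeyError(f"missing environment variables: {', '.join(missing)}")
    return EndpointConfig(env[names[0]], env[names[1]], env[names[2]])


if __name__ == "__main__":
    # The model variable is named differently in each group, so it is passed explicitly.
    for prefix, model_var in [
        ("OPENAI", "MODEL_NAME"),
        ("EMBEDDING", "EMBEDDING_MODEL_NAME"),
        ("RERANK", "RERANK_MODEL"),
    ]:
        try:
            print(prefix, load_endpoint(prefix, model_var).model)
        except KeyError as exc:
            print(prefix, "not configured:", exc)
```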

python agent/demo.py

python agent/api_serve.py

import requests

url = "http://localhost:8800/search"

data = {
    "question": "广州今日天气",  # "Guangzhou weather today"
    "engine": "bing",
    "split": {
        "chunk_size": 512,
        "chunk_overlap": 128
    },
    "rerank": {
        "top_k": 5
    }
}

try:
    response = requests.post(
        url,
        json=data
    )
    if response.status_code == 200:
        print("✅ Request succeeded!")
        print("Response:", response.json())
    else:
        print(f"❌ Request failed, status code: {response.status_code}")
        print("Error message:", response.text)
except requests.exceptions.RequestException as e:
    print(f"⚠️ Request error: {str(e)}")

python agent/gradio_demo.py

docker-compose -f docker-compose-ag.yml up -d --build

python mcp/websearch.py

python mcp/demo.py

python mcp/api_serve.py

import requests

url = "http://localhost:8800/search"

data = {
    "question": "广州今日天气"  # "Guangzhou weather today"
}

try:
    response = requests.post(
        url,
        json=data
    )
    if response.status_code == 200:
        print("✅ Request succeeded!")
        print("Response:", response.json())
    else:
        print(f"❌ Request failed, status code: {response.status_code}")
        print("Error message:", response.text)
except requests.exceptions.RequestException as e:
    print(f"⚠️ Request error: {str(e)}")

docker-compose -f docker-compose-mcp.yml up -d --build

from typing import Optional, List

class YourSplitter:
    def __init__(self, text: str, chunk_size: int = 512, chunk_overlap: int = 128):
        self.text = text
        self.chunk_size = chunk_size
        self.chunk_overlap = chunk_overlap

    def split_text(self, text: Optional[str] = None) -> List:
        # TODO: implement splitting logic
        return ["your chunk"]
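The splitter template above leaves `split_text` as a TODO. One possible way to fill it in is a character-based sliding window, sketched below. The class name `SlidingWindowSplitter` and the validation rules are assumptions for illustration; the project's built-in splitter may work differently (for example by sentences or tokens). Only the constructor and method signatures follow the template.

```python
from typing import List, Optional


class SlidingWindowSplitter:
    """Character-based splitter with the same interface as the YourSplitter template."""

    def __init__(self, text: str, chunk_size: int = 512, chunk_overlap: int = 128):
        if chunk_size <= 0:
            raise ValueError("chunk_size must be positive")
        if not 0 <= chunk_overlap < chunk_size:
            raise ValueError("chunk_overlap must be in [0, chunk_size)")
        self.text = text
        self.chunk_size = chunk_size
        self.chunk_overlap = chunk_overlap

    def split_text(self, text: Optional[str] = None) -> List[str]:
        source = self.text if text is None else text
        # Each window starts chunk_size - chunk_overlap characters after the previous one.
        step = self.chunk_size - self.chunk_overlap
        chunks: List[str] = []
        for start in range(0, len(source), step):
            chunks.append(source[start:start + self.chunk_size])
            if start + self.chunk_size >= len(source):
                break  # the last window already reaches the end of the text
        return chunks


if __name__ == "__main__":
    print(SlidingWindowSplitter("abcdefghij", chunk_size=4, chunk_overlap=2).split_text())
```

Counting characters rather than words keeps the sketch usable for both Chinese and English pages, at the cost of occasionally cutting a word or sentence in half.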

from typing import List, Union, Tuple

class YourReranker:
    async def get_reranked_documents(
        self,
        query: Union[str, List[str]],
        documents: List[str],
    ) -> Tuple[List[str], List[int]]:
        # TODO: implement reranking logic
        # Returns the reranked chunks and their indices in `documents`.
        return ["your chunk"], [0]
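To make the reranker template concrete, here is a minimal sketch that scores each document by how many query terms it shares and returns the top results with their original indices. This is a stand-in technique, not the project's built-in reranker (the configuration points at a hosted rerank model). The class name `KeywordOverlapReranker` and the `top_k` constructor argument are assumptions.

```python
import asyncio
from typing import List, Tuple, Union


class KeywordOverlapReranker:
    """Toy reranker with the same method signature as the YourReranker template."""

    def __init__(self, top_k: int = 5):
        self.top_k = top_k

    async def get_reranked_documents(
        self,
        query: Union[str, List[str]],
        documents: List[str],
    ) -> Tuple[List[str], List[int]]:
        query_text = query if isinstance(query, str) else " ".join(query)
        query_terms = set(query_text.lower().split())
        scored = []
        for index, document in enumerate(documents):
            overlap = len(query_terms & set(document.lower().split()))
            scored.append((overlap, index))
        # Highest overlap first; ties keep the original document order.
        scored.sort(key=lambda pair: (-pair[0], pair[1]))
        top = scored[: self.top_k]
        return [documents[i] for _, i in top], [i for _, i in top]


if __name__ == "__main__":
    docs = ["weather in guangzhou today", "stock prices", "guangzhou food"]
    reranker = KeywordOverlapReranker(top_k=2)
    print(asyncio.run(reranker.get_reranked_documents("guangzhou weather", docs)))
```

Whitespace tokenization does not segment Chinese text, so a real implementation would need a proper tokenizer or an embedding-based score.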

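We compared the project against several mainstream online APIs and evaluated how each performs on complex questions.

- The dataset is WebWalkerQA, released by Alibaba. It contains 680 difficult questions covering education, academic conferences, games, and other domains.
- The dataset includes questions in both Chinese and English.

| Search engine / system | ✅ Correct | ❌ Incorrect | |
|---|---|---|---|
| Volcengine Ark (火山方舟) | 5.00% | 72.21% | 22.79% |
| Bailian (百炼) | 9.85% | 62.79% | 27.35% |
| Ours | 19.85% | 47.94% | 32.06% |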
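Because the benchmark has a fixed size of 680 questions, the percentages in the table can be turned back into approximate question counts. The sketch below does that arithmetic. The names `RESULTS` and `to_counts` are illustrative, the counts are estimates derived from rounded percentages, and the third value in each row belongs to the column whose header is missing in the source.

```python
TOTAL_QUESTIONS = 680  # size of WebWalkerQA as stated above

# Percentages copied from the table: (correct, incorrect, unlabeled third column).
RESULTS = {
    "Volcengine Ark (火山方舟)": (5.00, 72.21, 22.79),
    "Bailian (百炼)": (9.85, 62.79, 27.35),
    "Ours": (19.85, 47.94, 32.06),
}


def to_counts(percentages, total=TOTAL_QUESTIONS):
    """Convert percentages of the benchmark into rounded question counts."""
    return tuple(round(p / 100 * total) for p in percentages)


if __name__ == "__main__":
    for system, percentages in RESULTS.items():
        correct, incorrect, other = to_counts(percentages)
        print(f"{system}: ~{correct} correct, ~{incorrect} incorrect, ~{other} other")
```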

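Parts of this project were made possible or inspired by the following open-source projects, with thanks:

- 🧠 LangGraph: a framework for building modular agent pipelines, which helps assemble complex agent systems quickly.
- 🕷 Crawl4AI: a powerful web content parsing tool for efficient page crawling and data extraction.
- 🌐 Playwright: a modern browser automation tool that supports cross-browser page scraping and test automation.
- 🔌 Langchain MCP Adapters: used to build the MCP multi-chain processing.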


Similar Open Source Tools

LLM_Web_search

LLM_Web_search project gives local LLMs the ability to search the web by outputting a specific command. It uses regular expressions to extract search queries from model output and then utilizes duckduckgo-search to search the web. LangChain's Contextual compression and Okapi BM25 or SPLADE are used to extract relevant parts of web pages in search results. The extracted results are appended to the model's output.

RAG-To-Know

RAG-To-Know is a versatile tool for knowledge extraction and summarization. It leverages the RAG (Retrieval-Augmented Generation) framework to provide a seamless way to retrieve and summarize information from various sources. With RAG-To-Know, users can easily extract key insights and generate concise summaries from large volumes of text data. The tool is designed to streamline the process of information retrieval and summarization, making it ideal for researchers, students, journalists, and anyone looking to quickly grasp the essence of complex information.

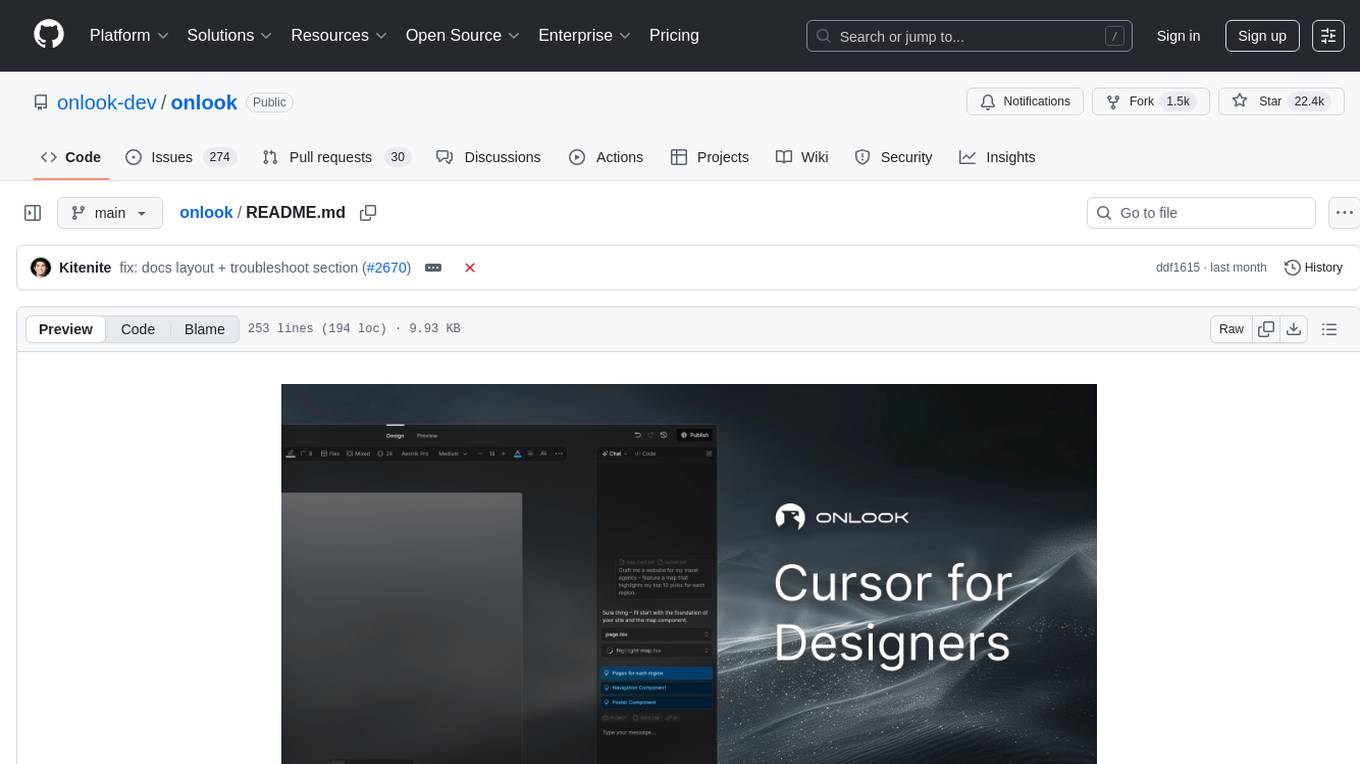

onlook

Onlook is a web scraping tool that allows users to extract data from websites easily and efficiently. It provides a user-friendly interface for creating web scraping scripts and supports various data formats for exporting the extracted data. With Onlook, users can automate the process of collecting information from multiple websites, saving time and effort. The tool is designed to be flexible and customizable, making it suitable for a wide range of web scraping tasks.

Website-Crawler

Website-Crawler is a tool designed to extract data from websites in an automated manner. It allows users to scrape information such as text, images, links, and more from web pages. The tool provides functionalities to navigate through websites, handle different types of content, and store extracted data for further analysis. Website-Crawler is useful for tasks like web scraping, data collection, content aggregation, and competitive analysis. It can be customized to extract specific data elements based on user requirements, making it a versatile tool for various web data extraction needs.

waidrin

Waidrin is a powerful web scraping tool that allows users to easily extract data from websites. It provides a user-friendly interface for creating custom web scraping scripts and supports various data formats for exporting the extracted data. With Waidrin, users can automate the process of collecting information from multiple websites, saving time and effort. The tool is designed to be flexible and scalable, making it suitable for both beginners and advanced users in the field of web scraping.

oramacore

OramaCore is a database designed for AI projects, answer engines, copilots, and search functionalities. It offers features such as a full-text search engine, vector database, LLM interface, and various utilities. The tool is currently under active development and not recommended for production use due to potential API changes. OramaCore aims to provide a comprehensive solution for managing data and enabling advanced search capabilities in AI applications.

free-llm-api-resources

The 'Free LLM API resources' repository provides a comprehensive list of services offering free access or credits for API-based LLM usage. It includes various providers with details on model names, limits, and notes. Users can find information on legitimate services and their respective usage restrictions to leverage LLM capabilities without incurring costs. The repository aims to assist developers and researchers in accessing AI models for experimentation, development, and learning purposes.

enterprise-h2ogpte

Enterprise h2oGPTe - GenAI RAG is a repository containing code examples, notebooks, and benchmarks for the enterprise version of h2oGPTe, a powerful AI tool for generating text based on the RAG (Retrieval-Augmented Generation) architecture. The repository provides resources for leveraging h2oGPTe in enterprise settings, including implementation guides, performance evaluations, and best practices. Users can explore various applications of h2oGPTe in natural language processing tasks, such as text generation, content creation, and conversational AI.

upgini

Upgini is an intelligent data search engine with a Python library that helps users find and add relevant features to their ML pipeline from various public, community, and premium external data sources. It automates the optimization of connected data sources by generating an optimal set of machine learning features using large language models, GraphNNs, and recurrent neural networks. The tool aims to simplify feature search and enrichment for external data to make it a standard approach in machine learning pipelines. It democratizes access to data sources for the data science community.

awesome-LLM-resources

This repository is a curated list of resources for learning and working with Large Language Models (LLMs). It includes a collection of articles, tutorials, tools, datasets, and research papers related to LLMs such as GPT-3, BERT, and Transformer models. Whether you are a researcher, developer, or enthusiast interested in natural language processing and artificial intelligence, this repository provides valuable resources to help you understand, implement, and experiment with LLMs.

HyperAgent

HyperAgent is a powerful tool for automating repetitive tasks in web scraping and data extraction. It provides a user-friendly interface to create custom web scraping scripts without the need for extensive coding knowledge. With HyperAgent, users can easily extract data from websites, transform it into structured formats, and save it for further analysis. The tool supports various data formats and offers scheduling options for automated data extraction at regular intervals. HyperAgent is suitable for individuals and businesses looking to streamline their data collection processes and improve efficiency in extracting information from the web.

context-portal

Context-portal is a versatile tool for managing and visualizing data in a collaborative environment. It provides a user-friendly interface for organizing and sharing information, making it easy for teams to work together on projects. With features such as customizable dashboards, real-time updates, and seamless integration with popular data sources, Context-portal streamlines the data management process and enhances productivity. Whether you are a data analyst, project manager, or team leader, Context-portal offers a comprehensive solution for optimizing workflows and driving better decision-making.

BrowserGym

BrowserGym is an open, easy-to-use, and extensible framework designed to accelerate web agent research. It provides benchmarks like MiniWoB, WebArena, VisualWebArena, WorkArena, AssistantBench, and WebLINX. Users can design new web benchmarks by inheriting the AbstractBrowserTask class. The tool allows users to install different packages for core functionalities, experiments, and specific benchmarks. It supports the development setup and offers boilerplate code for running agents on various tasks. BrowserGym is not a consumer product and should be used with caution.

open-webui-tools

Open WebUI Tools Collection is a set of tools for structured planning, arXiv paper search, Hugging Face text-to-image generation, prompt enhancement, and multi-model conversations. It enhances LLM interactions with academic research, image generation, and conversation management. Tools include arXiv Search Tool and Hugging Face Image Generator. Function Pipes like Planner Agent offer autonomous plan generation and execution. Filters like Prompt Enhancer improve prompt quality. Installation and configuration instructions are provided for each tool and pipe.

vizra-adk

Vizra-ADK is a data visualization tool that allows users to create interactive and customizable visualizations for their data. With a user-friendly interface and a wide range of customization options, Vizra-ADK makes it easy for users to explore and analyze their data in a visually appealing way. Whether you're a data scientist looking to create informative charts and graphs, or a business analyst wanting to present your findings in a compelling way, Vizra-ADK has you covered. The tool supports various data formats and provides features like filtering, sorting, and grouping to help users make sense of their data quickly and efficiently.

For similar tasks

Awesome-Segment-Anything

Awesome-Segment-Anything is a powerful tool for segmenting and extracting information from various types of data. It provides a user-friendly interface to easily define segmentation rules and apply them to text, images, and other data formats. The tool supports both supervised and unsupervised segmentation methods, allowing users to customize the segmentation process based on their specific needs. With its versatile functionality and intuitive design, Awesome-Segment-Anything is ideal for data analysts, researchers, content creators, and anyone looking to efficiently extract valuable insights from complex datasets.

Time-LLM

Time-LLM is a reprogramming framework that repurposes large language models (LLMs) for time series forecasting. It allows users to treat time series analysis as a 'language task' and effectively leverage pre-trained LLMs for forecasting. The framework involves reprogramming time series data into text representations and providing declarative prompts to guide the LLM reasoning process. Time-LLM supports various backbone models such as Llama-7B, GPT-2, and BERT, offering flexibility in model selection. The tool provides a general framework for repurposing language models for time series forecasting tasks.

crewAI

CrewAI is a cutting-edge framework designed to orchestrate role-playing autonomous AI agents. By fostering collaborative intelligence, CrewAI empowers agents to work together seamlessly, tackling complex tasks. It enables AI agents to assume roles, share goals, and operate in a cohesive unit, much like a well-oiled crew. Whether you're building a smart assistant platform, an automated customer service ensemble, or a multi-agent research team, CrewAI provides the backbone for sophisticated multi-agent interactions. With features like role-based agent design, autonomous inter-agent delegation, flexible task management, and support for various LLMs, CrewAI offers a dynamic and adaptable solution for both development and production workflows.

Transformers_And_LLM_Are_What_You_Dont_Need

Transformers_And_LLM_Are_What_You_Dont_Need is a repository that explores the limitations of transformers in time series forecasting. It contains a collection of papers, articles, and theses discussing the effectiveness of transformers and LLMs in this domain. The repository aims to provide insights into why transformers may not be the best choice for time series forecasting tasks.

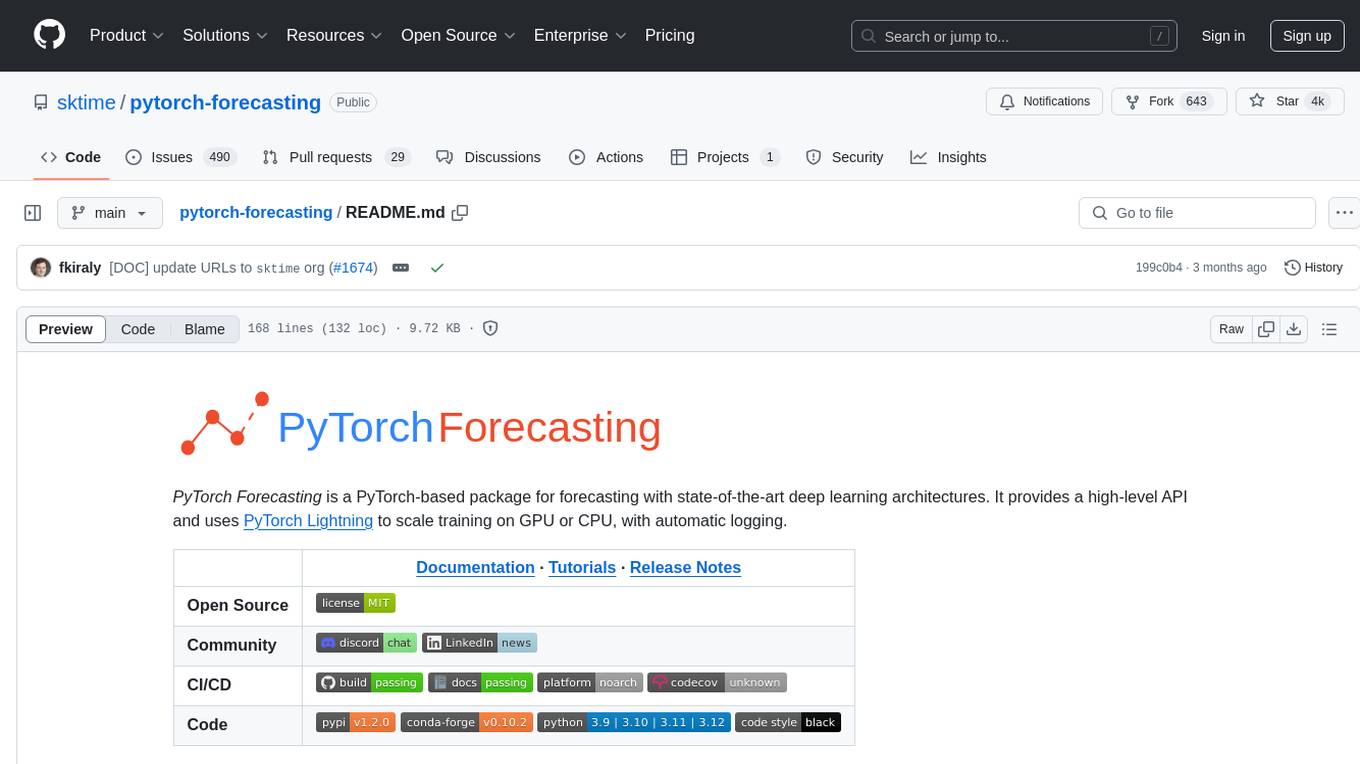

pytorch-forecasting

PyTorch Forecasting is a PyTorch-based package for time series forecasting with state-of-the-art network architectures. It offers a high-level API for training networks on pandas data frames and utilizes PyTorch Lightning for scalable training on GPUs and CPUs. The package aims to simplify time series forecasting with neural networks by providing a flexible API for professionals and default settings for beginners. It includes a timeseries dataset class, base model class, multiple neural network architectures, multi-horizon timeseries metrics, and hyperparameter tuning with optuna. PyTorch Forecasting is built on pytorch-lightning for easy training on various hardware configurations.

spider

Spider is a high-performance web crawler and indexer designed to handle data curation workloads efficiently. It offers features such as concurrency, streaming, decentralization, headless Chrome rendering, HTTP proxies, cron jobs, subscriptions, smart mode, blacklisting, whitelisting, budgeting depth, dynamic AI prompt scripting, CSS scraping, and more. Users can easily get started with the Spider Cloud hosted service or set up local installations with spider-cli. The tool supports integration with Node.js and Python for additional flexibility. With a focus on speed and scalability, Spider is ideal for extracting and organizing data from the web.

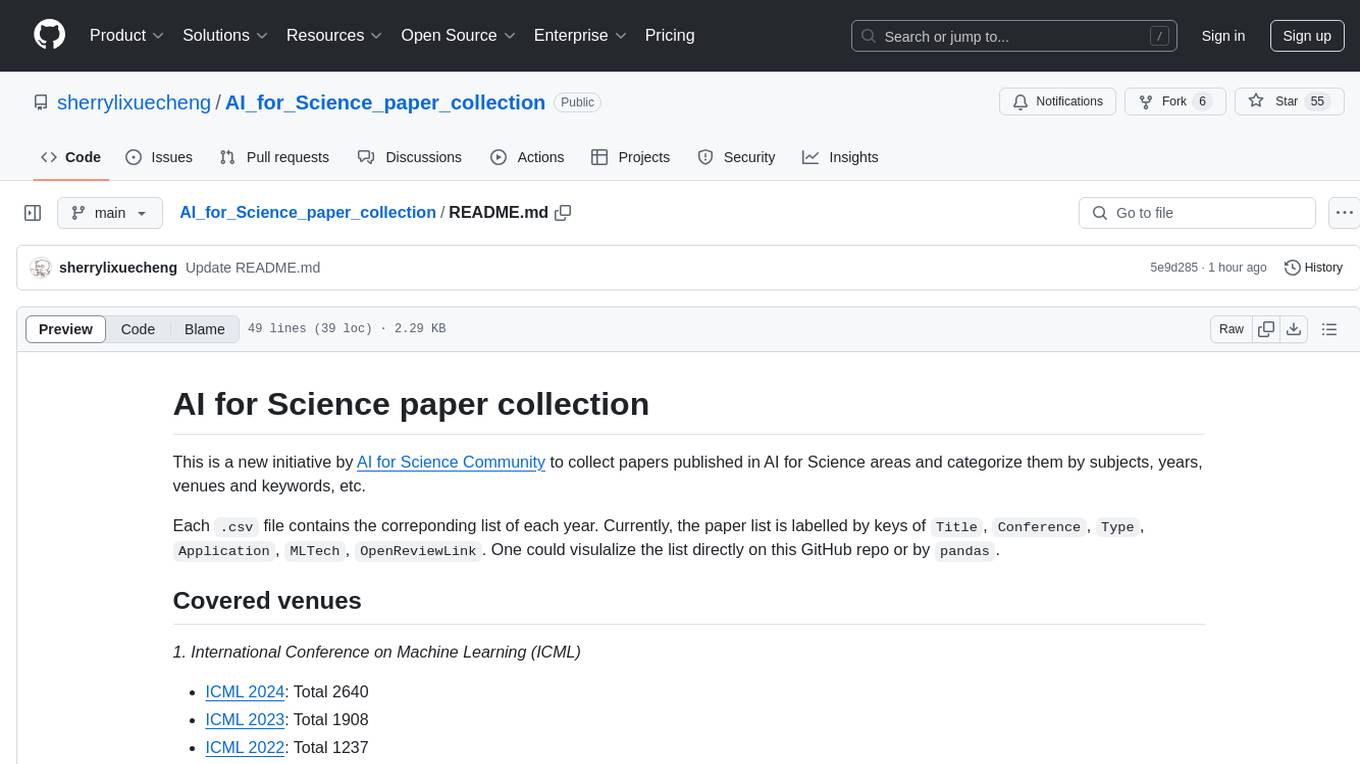

AI_for_Science_paper_collection

AI for Science paper collection is an initiative by AI for Science Community to collect and categorize papers in AI for Science areas by subjects, years, venues, and keywords. The repository contains `.csv` files with paper lists labeled by keys such as `Title`, `Conference`, `Type`, `Application`, `MLTech`, `OpenReviewLink`. It covers top conferences like ICML, NeurIPS, and ICLR. Volunteers can contribute by updating existing `.csv` files or adding new ones for uncovered conferences/years. The initiative aims to track the increasing trend of AI for Science papers and analyze trends in different applications.

pytorch-forecasting

PyTorch Forecasting is a PyTorch-based package designed for state-of-the-art timeseries forecasting using deep learning architectures. It offers a high-level API and leverages PyTorch Lightning for efficient training on GPU or CPU with automatic logging. The package aims to simplify timeseries forecasting tasks by providing a flexible API for professionals and user-friendly defaults for beginners. It includes features such as a timeseries dataset class for handling data transformations, missing values, and subsampling, various neural network architectures optimized for real-world deployment, multi-horizon timeseries metrics, and hyperparameter tuning with optuna. Built on pytorch-lightning, it supports training on CPUs, single GPUs, and multiple GPUs out-of-the-box.

For similar jobs

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

daily-poetry-image

Daily Chinese ancient poetry and AI-generated images powered by Bing DALL-E-3. GitHub Action triggers the process automatically. Poetry is provided by Today's Poem API. The website is built with Astro.

exif-photo-blog

EXIF Photo Blog is a full-stack photo blog application built with Next.js, Vercel, and Postgres. It features built-in authentication, photo upload with EXIF extraction, photo organization by tag, infinite scroll, light/dark mode, automatic OG image generation, a CMD-K menu with photo search, experimental support for AI-generated descriptions, and support for Fujifilm simulations. The application is easy to deploy to Vercel with just a few clicks and can be customized with a variety of environment variables.

SillyTavern

SillyTavern is a user interface you can install on your computer (and Android phones) that allows you to interact with text generation AIs and chat/roleplay with characters you or the community create. SillyTavern is a fork of TavernAI 1.2.8 which is under more active development and has added many major features. At this point, they can be thought of as completely independent programs.

Twitter-Insight-LLM

This project enables you to fetch liked tweets from Twitter (using Selenium), save it to JSON and Excel files, and perform initial data analysis and image captions. This is part of the initial steps for a larger personal project involving Large Language Models (LLMs).

AISuperDomain

Aila Desktop Application is a powerful tool that integrates multiple leading AI models into a single desktop application. It allows users to interact with various AI models simultaneously, providing diverse responses and insights to their inquiries. With its user-friendly interface and customizable features, Aila empowers users to engage with AI seamlessly and efficiently. Whether you're a researcher, student, or professional, Aila can enhance your AI interactions and streamline your workflow.

ChatGPT-On-CS

This project is an intelligent dialogue customer service tool based on a large model, which supports access to platforms such as WeChat, Qianniu, Bilibili, Douyin Enterprise, Douyin, Doudian, Weibo chat, Xiaohongshu professional account operation, Xiaohongshu, Zhihu, etc. You can choose GPT3.5/GPT4.0/ Lazy Treasure Box (more platforms will be supported in the future), which can process text, voice and pictures, and access external resources such as operating systems and the Internet through plug-ins, and support enterprise AI applications customized based on their own knowledge base.

obs-localvocal

LocalVocal is a live-streaming AI assistant plugin for OBS that allows you to transcribe audio speech into text and perform various language processing functions on the text using AI / LLMs (Large Language Models). It's privacy-first, with all data staying on your machine, and requires no GPU, cloud costs, network, or downtime.