text-embeddings-inference

A blazing fast inference solution for text embeddings models

Stars: 4500

Text Embeddings Inference (TEI) is a toolkit for deploying and serving open source text embeddings and sequence classification models. TEI enables high-performance extraction for popular models like FlagEmbedding, Ember, GTE, and E5. It implements features such as no model graph compilation step, Metal support for local execution on Macs, small docker images with fast boot times, token-based dynamic batching, optimized transformers code for inference using Flash Attention, Candle, and cuBLASLt, Safetensors weight loading, and production-ready features like distributed tracing with Open Telemetry and Prometheus metrics.

README:


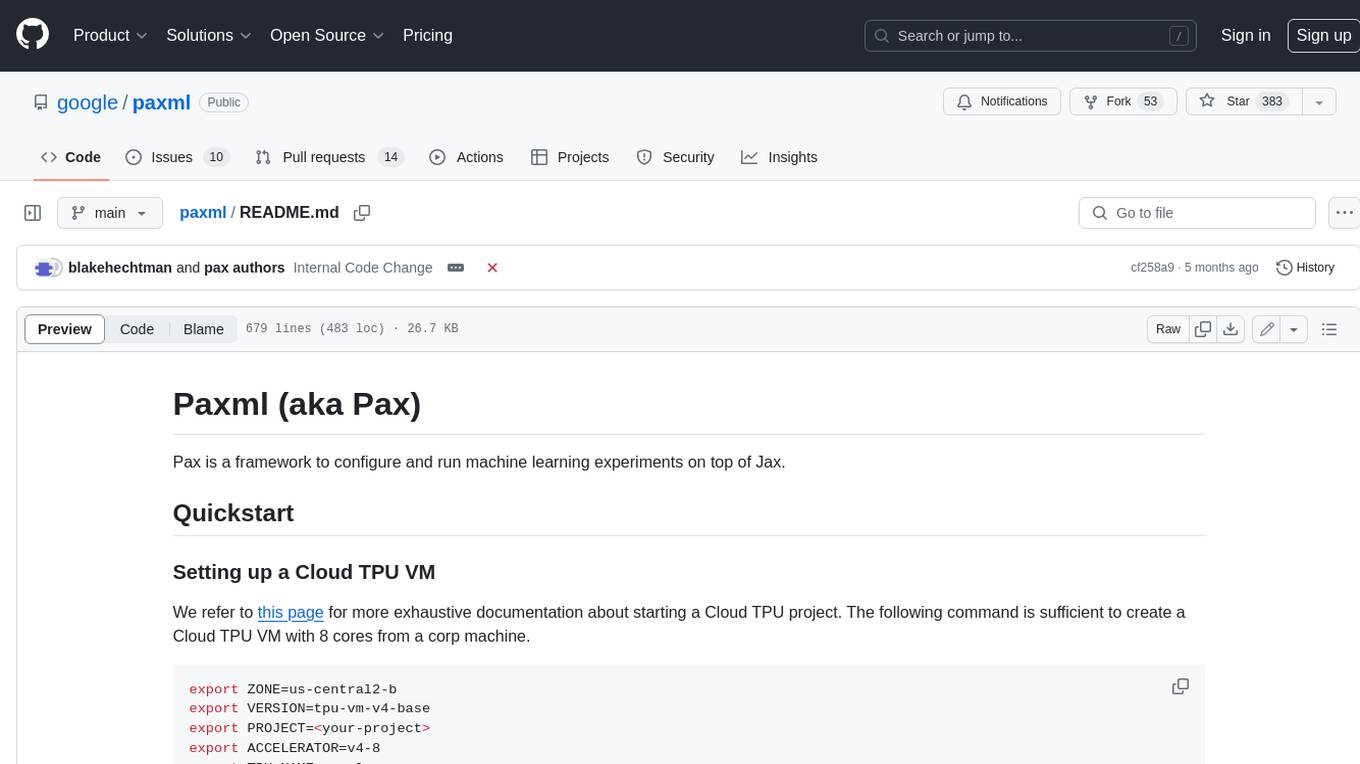

Benchmark for BAAI/bge-base-en-v1.5 on an NVIDIA A10 with a sequence length of 512 tokens:

Text Embeddings Inference (TEI) is a toolkit for deploying and serving open source text embeddings and sequence classification models. TEI enables high-performance extraction for the most popular models, including FlagEmbedding, Ember, GTE and E5. TEI implements many features such as:

- No model graph compilation step

- Metal support for local execution on Macs

- Small docker images and fast boot times. Get ready for true serverless!

- Token based dynamic batching

- Optimized transformers code for inference using Flash Attention, Candle and cuBLASLt

- Safetensors weight loading

- ONNX weight loading

- Production ready (distributed tracing with Open Telemetry, Prometheus metrics)

Text Embeddings Inference currently supports Nomic, BERT, CamemBERT and XLM-RoBERTa models with absolute positions; the JinaBERT model with Alibi positions; Mistral, Alibaba GTE and Qwen2 models with Rope positions; and MPNet, ModernBERT, Qwen3 and Gemma3.

Below are some examples of the currently supported models:

To explore the list of best performing text embeddings models, visit the Massive Text Embedding Benchmark (MTEB) Leaderboard.

Text Embeddings Inference currently supports CamemBERT and XLM-RoBERTa Sequence Classification models with absolute positions.

Below are some examples of the currently supported models:

| Task | Model Type | Model ID |

|---|---|---|

| Re-Ranking | XLM-RoBERTa | BAAI/bge-reranker-large |

| Re-Ranking | XLM-RoBERTa | BAAI/bge-reranker-base |

| Re-Ranking | GTE | Alibaba-NLP/gte-multilingual-reranker-base |

| Re-Ranking | ModernBert | Alibaba-NLP/gte-reranker-modernbert-base |

| Sentiment Analysis | RoBERTa | SamLowe/roberta-base-go_emotions |

model=Qwen/Qwen3-Embedding-0.6B

volume=$PWD/data # share a volume with the Docker container to avoid downloading weights every run

docker run --gpus all -p 8080:80 -v $volume:/data --pull always ghcr.io/huggingface/text-embeddings-inference:cuda-1.9 --model-id $model

And then you can make requests like:

curl 127.0.0.1:8080/embed \

-X POST \

-d '{"inputs":"What is Deep Learning?"}' \

-H 'Content-Type: application/json'

Note: To use GPUs, you need to install the NVIDIA Container Toolkit. NVIDIA drivers on your machine need to be compatible with CUDA version 12.2 or higher.
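The same request can be issued from a program. The sketch below uses only the Python standard library and assumes the server started above is listening on `127.0.0.1:8080`. The helper names `build_embed_request` and `embed` are illustrative and not part of TEI. The optional `api_key` argument corresponds to the `--api-key` server option, which expects the key as a Bearer token in the `Authorization` header.

```python
import json
import urllib.request


def build_embed_request(inputs, base_url="http://127.0.0.1:8080", api_key=None):
    """Build (but do not send) a POST request for the /embed route."""
    headers = {"Content-Type": "application/json"}
    if api_key is not None:
        # Only needed when the server was started with --api-key.
        headers["Authorization"] = f"Bearer {api_key}"
    body = json.dumps({"inputs": inputs}).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/embed", data=body, headers=headers, method="POST"
    )


def embed(inputs, **kwargs):
    """Send the request and decode the JSON response (requires a running server)."""
    with urllib.request.urlopen(build_embed_request(inputs, **kwargs)) as resp:
        return json.loads(resp.read())
```

With a server running, `embed("What is Deep Learning?")` returns the decoded JSON body; the exact response schema is documented by the server's `/docs` route.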

To see all options to serve your models:

$ text-embeddings-router --help

Text Embedding Webserver

Usage: text-embeddings-router [OPTIONS] --model-id <MODEL_ID>

Options:

--model-id <MODEL_ID>

The Hugging Face model ID, can be any model listed on <https://huggingface.co/models> with the `text-embeddings-inference` tag (meaning it's compatible with Text Embeddings Inference).

Alternatively, the specified ID can also be a path to a local directory containing the necessary model files saved by the `save_pretrained(...)` methods of either Transformers or Sentence Transformers.

[env: MODEL_ID=]

--revision <REVISION>

The actual revision of the model if you're referring to a model on the hub. You can use a specific commit id or a branch like `refs/pr/2`

[env: REVISION=]

--tokenization-workers <TOKENIZATION_WORKERS>

Optionally control the number of tokenizer workers used for payload tokenization, validation and truncation. Defaults to the number of CPU cores on the machine

[env: TOKENIZATION_WORKERS=]

--dtype <DTYPE>

The dtype to be forced upon the model

[env: DTYPE=]

[possible values: float16, float32]

--served-model-name <SERVED_MODEL_NAME>

The name of the model that is being served. If not specified, defaults to `--model-id`. It is only used for the OpenAI-compatible endpoints via HTTP

[env: SERVED_MODEL_NAME=]

--pooling <POOLING>

Optionally control the pooling method for embedding models.

If `pooling` is not set, the pooling configuration will be parsed from the model `1_Pooling/config.json` configuration.

If `pooling` is set, it will override the model pooling configuration

[env: POOLING=]

Possible values:

- cls: Select the CLS token as embedding

- mean: Apply Mean pooling to the model embeddings

- splade: Apply SPLADE (Sparse Lexical and Expansion) to the model embeddings. This option is only available if the loaded model is a `ForMaskedLM` Transformer model

- last-token: Select the last token as embedding
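To make the pooling options concrete, here is a minimal sketch of `cls`, `mean` and `last-token` pooling over a list of per-token vectors, in plain Python. It illustrates the general techniques only and is not TEI's implementation: the function `pool` and its inputs are hypothetical, padding is ignored, and SPLADE is left out.

```python
def pool(token_embeddings, method):
    """Reduce a sequence of per-token vectors to a single vector."""
    if not token_embeddings:
        raise ValueError("need at least one token embedding")
    if method == "cls":
        # Assumes the first position holds the CLS token.
        return list(token_embeddings[0])
    if method == "last-token":
        return list(token_embeddings[-1])
    if method == "mean":
        n = len(token_embeddings)
        # Average each dimension across all tokens.
        return [sum(dim) / n for dim in zip(*token_embeddings)]
    raise ValueError(f"unsupported pooling method: {method}")


tokens = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
print(pool(tokens, "cls"))         # [1.0, 2.0]
print(pool(tokens, "mean"))        # [3.0, 4.0]
print(pool(tokens, "last-token"))  # [5.0, 6.0]
```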

--max-concurrent-requests <MAX_CONCURRENT_REQUESTS>

The maximum amount of concurrent requests for this particular deployment. Having a low limit will refuse client requests instead of having them wait for too long and is usually good to handle backpressure correctly

[env: MAX_CONCURRENT_REQUESTS=]

[default: 512]

--max-batch-tokens <MAX_BATCH_TOKENS>

**IMPORTANT** This is one critical control to allow maximum usage of the available hardware.

This represents the total amount of potential tokens within a batch.

For `max_batch_tokens=1000`, you could fit `10` queries of `total_tokens=100` or a single query of `1000` tokens.

Overall this number should be the largest possible until the model is compute bound. Since the actual memory overhead depends on the model implementation, text-embeddings-inference cannot infer this number automatically.

[env: MAX_BATCH_TOKENS=]

[default: 16384]
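The arithmetic in the `--max-batch-tokens` description can be sketched as a greedy packer that closes a batch once the token budget would be exceeded. This only illustrates the idea of a token budget and is not TEI's scheduler; `pack_batches` is a hypothetical helper.

```python
def pack_batches(token_counts, max_batch_tokens):
    """Greedily group requests so each batch stays within the token budget."""
    batches, current, used = [], [], 0
    for count in token_counts:
        if count > max_batch_tokens:
            raise ValueError("a single request exceeds the token budget")
        if used + count > max_batch_tokens:
            # Budget would overflow: close the current batch and start a new one.
            batches.append(current)
            current, used = [], 0
        current.append(count)
        used += count
    if current:
        batches.append(current)
    return batches


# Ten 100-token queries fit in one batch of 1000 tokens...
print(len(pack_batches([100] * 10, 1000)))  # 1
# ...and so does a single 1000-token query.
print(len(pack_batches([1000], 1000)))      # 1
# An eleventh 100-token query spills into a second batch.
print(len(pack_batches([100] * 11, 1000)))  # 2
```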

--max-batch-requests <MAX_BATCH_REQUESTS>

Optionally control the maximum number of individual requests in a batch

[env: MAX_BATCH_REQUESTS=]

--max-client-batch-size <MAX_CLIENT_BATCH_SIZE>

Control the maximum number of inputs that a client can send in a single request

[env: MAX_CLIENT_BATCH_SIZE=]

[default: 32]

--auto-truncate

Control automatic truncation of inputs that exceed the model's maximum supported size. Defaults to `true` (truncation enabled). Set to `false` to disable truncation; when disabled and the model's maximum input length exceeds `--max-batch-tokens`, the server will refuse to start with an error instead of silently truncating sequences.

Unused for gRPC servers

[env: AUTO_TRUNCATE=]

--default-prompt-name <DEFAULT_PROMPT_NAME>

The name of the prompt that should be used by default for encoding. If not set, no prompt will be applied.

Must be a key in the `sentence-transformers` configuration `prompts` dictionary.

For example if ``default_prompt_name`` is "query" and the ``prompts`` is {"query": "query: ", ...}, then the sentence "What is the capital of France?" will be encoded as "query: What is the capital of France?" because the prompt text will be prepended before any text to encode.

The argument '--default-prompt-name <DEFAULT_PROMPT_NAME>' cannot be used with '--default-prompt <DEFAULT_PROMPT>'

[env: DEFAULT_PROMPT_NAME=]

--default-prompt <DEFAULT_PROMPT>

The prompt that should be used by default for encoding. If not set, no prompt will be applied.

For example if ``default_prompt`` is "query: " then the sentence "What is the capital of France?" will be encoded as "query: What is the capital of France?" because the prompt text will be prepended before any text to encode.

The argument '--default-prompt <DEFAULT_PROMPT>' cannot be used with '--default-prompt-name <DEFAULT_PROMPT_NAME>'

[env: DEFAULT_PROMPT=]
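The two prompt options amount to prepending a string to the input. A sketch, where `apply_prompt` and the `prompts` dictionary are hypothetical stand-ins for the behaviour described above:

```python
def apply_prompt(text, prompts=None, default_prompt_name=None, default_prompt=None):
    """Prepend the configured prompt, mirroring the two mutually exclusive options."""
    if default_prompt_name is not None and default_prompt is not None:
        raise ValueError("default_prompt_name cannot be used with default_prompt")
    if default_prompt_name is not None:
        # The name must be a key of the `prompts` dictionary.
        return (prompts or {})[default_prompt_name] + text
    if default_prompt is not None:
        return default_prompt + text
    return text  # no prompt configured


prompts = {"query": "query: "}
print(apply_prompt("What is the capital of France?", prompts, default_prompt_name="query"))
# query: What is the capital of France?
```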

--dense-path <DENSE_PATH>

Optionally, define the path to the Dense module required for some embedding models.

Some embedding models require an extra `Dense` module which contains a single Linear layer and an activation function. By default, those `Dense` modules are stored under the `2_Dense` directory, but there might be cases where different `Dense` modules are provided, to convert the pooled embeddings into different dimensions, available as `2_Dense_<dims>` e.g. https://huggingface.co/NovaSearch/stella_en_400M_v5.

Note that this argument is optional, only required to be set if there is no `modules.json` file or when you want to override a single Dense module path, only when running with the `candle` backend.

[env: DENSE_PATH=]

--hf-token <HF_TOKEN>

Your Hugging Face Hub token. If neither `--hf-token` nor `HF_TOKEN` is set, the token will be read from the `$HF_HOME/token` path, if it exists. This ensures access to private or gated models, and allows for a more permissive rate limiting

[env: HF_TOKEN=]

--hostname <HOSTNAME>

The IP address to listen on

[env: HOSTNAME=]

[default: 0.0.0.0]

-p, --port <PORT>

The port to listen on

[env: PORT=]

[default: 3000]

--uds-path <UDS_PATH>

The name of the unix socket some text-embeddings-inference backends will use as they communicate internally with gRPC

[env: UDS_PATH=]

[default: /tmp/text-embeddings-inference-server]

--huggingface-hub-cache <HUGGINGFACE_HUB_CACHE>

The location of the huggingface hub cache. Used to override the location if you want to provide a mounted disk for instance

[env: HUGGINGFACE_HUB_CACHE=]

--payload-limit <PAYLOAD_LIMIT>

Payload size limit in bytes

Default is 2MB

[env: PAYLOAD_LIMIT=]

[default: 2000000]

--api-key <API_KEY>

Set an api key for request authorization.

By default the server responds to every request. With an api key set, the requests must have the Authorization header set with the api key as Bearer token.

[env: API_KEY=]

--json-output

Outputs the logs in JSON format (useful for telemetry)

[env: JSON_OUTPUT=]

--disable-spans

Whether or not to include the log trace through spans

[env: DISABLE_SPANS=]

--otlp-endpoint <OTLP_ENDPOINT>

The grpc endpoint for opentelemetry. Telemetry is sent to this endpoint as OTLP over gRPC. e.g. `http://localhost:4317`

[env: OTLP_ENDPOINT=]

--otlp-service-name <OTLP_SERVICE_NAME>

The service name for opentelemetry. e.g. `text-embeddings-inference.server`

[env: OTLP_SERVICE_NAME=]

[default: text-embeddings-inference.server]

--prometheus-port <PROMETHEUS_PORT>

The Prometheus port to listen on

[env: PROMETHEUS_PORT=]

[default: 9000]

--cors-allow-origin <CORS_ALLOW_ORIGIN>

Unused for gRPC servers

[env: CORS_ALLOW_ORIGIN=]

-h, --help

Print help (see a summary with '-h')

-V, --version

Print version

Text Embeddings Inference ships with multiple Docker images that you can use to target a specific backend:

| Architecture | Image |

|---|---|

| CPU | ghcr.io/huggingface/text-embeddings-inference:cpu-1.9 |

| Volta | NOT SUPPORTED |

| Turing (T4, RTX 2000 series, ...) | ghcr.io/huggingface/text-embeddings-inference:turing-1.9 (experimental) |

| Ampere 8.0 (A100, A30) | ghcr.io/huggingface/text-embeddings-inference:1.9 |

| Ampere 8.6 (A10, A40, ...) | ghcr.io/huggingface/text-embeddings-inference:86-1.9 |

| Ada Lovelace (RTX 4000 series, ...) | ghcr.io/huggingface/text-embeddings-inference:89-1.9 |

| Hopper (H100) | ghcr.io/huggingface/text-embeddings-inference:hopper-1.9 |

| Blackwell 10.0 (B200, GB200, ...) | ghcr.io/huggingface/text-embeddings-inference:100-1.9 (experimental) |

| Blackwell 12.0 (GeForce RTX 50X0, ...) | ghcr.io/huggingface/text-embeddings-inference:120-1.9 (experimental) |

Warning: Flash Attention is turned off by default for the Turing image as it suffers from precision issues.

You can turn Flash Attention v1 ON by using the USE_FLASH_ATTENTION=True environment variable.
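Picking an image from the table above can be automated. The sketch below maps a CUDA compute capability to the image tags listed in the table. The function name `tei_image` is an assumption for illustration, and the pairing of architectures with capability numbers (75, 80, 86, 89, 90, 100, 120) follows the build instructions later in this document.

```python
REPO = "ghcr.io/huggingface/text-embeddings-inference"

# Compute capability -> image tag, copied from the table above.
TAGS = {
    75: "turing-1.9",   # experimental
    80: "1.9",
    86: "86-1.9",
    89: "89-1.9",
    90: "hopper-1.9",
    100: "100-1.9",     # experimental
    120: "120-1.9",     # experimental
}


def tei_image(compute_cap=None):
    """Return the image for a compute capability, or the CPU image if None."""
    if compute_cap is None:
        return f"{REPO}:cpu-1.9"
    if compute_cap not in TAGS:
        raise ValueError(f"no image listed for compute capability {compute_cap}")
    return f"{REPO}:{TAGS[compute_cap]}"


print(tei_image(86))  # ghcr.io/huggingface/text-embeddings-inference:86-1.9
print(tei_image())    # ghcr.io/huggingface/text-embeddings-inference:cpu-1.9
```

A capability with no entry in the table, such as Volta, raises an error instead of silently falling back to another image.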

You can consult the OpenAPI documentation of the text-embeddings-inference REST API using the /docs route.

The Swagger UI is also available at: https://huggingface.github.io/text-embeddings-inference.

You can use the HF_TOKEN environment variable to configure the token used by text-embeddings-inference. This gives you access to protected resources.

For example:

- Go to https://huggingface.co/settings/tokens

- Copy your CLI READ token

- Export `HF_TOKEN=<your CLI READ token>`

or with Docker:

model=<your private model>

volume=$PWD/data # share a volume with the Docker container to avoid downloading weights every run

token=<your CLI READ token>

docker run --gpus all -e HF_TOKEN=$token -p 8080:80 -v $volume:/data --pull always ghcr.io/huggingface/text-embeddings-inference:cuda-1.9 --model-id $model

To deploy Text Embeddings Inference in an air-gapped environment, first download the weights and then mount them inside the container using a volume.

For example:

# (Optional) create a `models` directory

mkdir models

cd models

# Make sure you have git-lfs installed (https://git-lfs.com)

git lfs install

git clone https://huggingface.co/Qwen/Qwen3-Embedding-0.6B

# Set the models directory as the volume path

volume=$PWD

# Mount the models directory inside the container with a volume and set the model ID

docker run --gpus all -p 8080:80 -v $volume:/data --pull always ghcr.io/huggingface/text-embeddings-inference:cuda-1.9 --model-id /data/Qwen3-Embedding-0.6B

text-embeddings-inference v0.4.0 added support for CamemBERT, RoBERTa, XLM-RoBERTa, and GTE Sequence Classification models.

Re-ranker models are Sequence Classification cross-encoder models with a single class that scores the similarity between a query and a text.

See this blogpost by the LlamaIndex team to understand how you can use re-ranker models in your RAG pipeline to improve downstream performance.
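To show how the scores are typically consumed once a re-ranker is being served, the sketch below builds a `/rerank` payload and orders texts by score. It assumes the response is a JSON list of objects with `index` and `score` fields. That shape is an assumption here and should be checked against the `/docs` route. The helper names are illustrative.

```python
import json


def rerank_payload(query, texts):
    """JSON body for the /rerank route."""
    return json.dumps({"query": query, "texts": texts})


def order_by_score(texts, results):
    """Pair each text with its score, best match first.

    `results` is assumed to look like [{"index": 0, "score": 0.1}, ...].
    """
    ranked = sorted(results, key=lambda r: r["score"], reverse=True)
    return [(texts[r["index"]], r["score"]) for r in ranked]


texts = ["Deep Learning is not...", "Deep learning is..."]
# Made-up scores standing in for a real server response.
fake_results = [{"index": 0, "score": 0.02}, {"index": 1, "score": 0.97}]
print(order_by_score(texts, fake_results)[0][0])  # Deep learning is...
```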

model=BAAI/bge-reranker-large

volume=$PWD/data # share a volume with the Docker container to avoid downloading weights every run

docker run --gpus all -p 8080:80 -v $volume:/data --pull always ghcr.io/huggingface/text-embeddings-inference:cuda-1.9 --model-id $model

And then you can rank the similarity between a query and a list of texts with:

curl 127.0.0.1:8080/rerank \

-X POST \

-d '{"query": "What is Deep Learning?", "texts": ["Deep Learning is not...", "Deep learning is..."]}' \

-H 'Content-Type: application/json'

You can also use classic Sequence Classification models like SamLowe/roberta-base-go_emotions:

model=SamLowe/roberta-base-go_emotions

volume=$PWD/data # share a volume with the Docker container to avoid downloading weights every run

docker run --gpus all -p 8080:80 -v $volume:/data --pull always ghcr.io/huggingface/text-embeddings-inference:cuda-1.9 --model-id $model

Once you have deployed the model you can use the predict endpoint to get the emotions most associated with an input:

curl 127.0.0.1:8080/predict \

-X POST \

-d '{"inputs":"I like you."}' \

-H 'Content-Type: application/json'

You can choose to activate SPLADE pooling for Bert and Distilbert MaskedLM architectures:

model=naver/efficient-splade-VI-BT-large-query

volume=$PWD/data # share a volume with the Docker container to avoid downloading weights every run

docker run --gpus all -p 8080:80 -v $volume:/data --pull always ghcr.io/huggingface/text-embeddings-inference:cuda-1.9 --model-id $model --pooling splade

Once you have deployed the model you can use the /embed_sparse endpoint to get the sparse embedding:

curl 127.0.0.1:8080/embed_sparse \

-X POST \

-d '{"inputs":"I like you."}' \

-H 'Content-Type: application/json'

text-embeddings-inference is instrumented with distributed tracing using OpenTelemetry. You can use this feature by setting the address to an OTLP collector with the --otlp-endpoint argument.

text-embeddings-inference offers a gRPC API as an alternative to the default HTTP API for high performance deployments. The API protobuf definition can be found here.

You can use the gRPC API by adding the -grpc tag to any TEI Docker image. For example:

model=Qwen/Qwen3-Embedding-0.6B

volume=$PWD/data # share a volume with the Docker container to avoid downloading weights every run

docker run --gpus all -p 8080:80 -v $volume:/data --pull always ghcr.io/huggingface/text-embeddings-inference:cuda-1.9-grpc --model-id $model

grpcurl -d '{"inputs": "What is Deep Learning"}' -plaintext 0.0.0.0:8080 tei.v1.Embed/Embed

You can also opt to install text-embeddings-inference locally.

First install Rust:

curl --proto '=https' --tlsv1.2 -sSf https://sh.rustup.rs | sh

Then run:

# On x86 with ONNX backend (recommended)

cargo install --path router -F ort

# On x86 with Intel backend

cargo install --path router -F mkl

# On M1 or M2

cargo install --path router -F metal

You can now launch Text Embeddings Inference on CPU with:

model=Qwen/Qwen3-Embedding-0.6B

text-embeddings-router --model-id $model --port 8080

Note: on some machines, you may also need the OpenSSL libraries and gcc. On Linux machines, run:

sudo apt-get install libssl-dev gcc -y

GPUs with CUDA compute capabilities < 7.5 are not supported (V100, Titan V, GTX 1000 series, ...).
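A quick pre-flight check can encode this rule. The sketch below converts a capability string such as `8.6` into integer form (`86`) and picks the Cargo feature named in the build commands that follow. `cargo_feature` is a hypothetical helper, and how you obtain the capability string (for example from your GPU's specification) is left open.

```python
def cargo_feature(compute_cap):
    """Map a capability string like "8.6" to a Cargo feature, or None if unsupported."""
    major, _, minor = compute_cap.partition(".")
    cap = int(major) * 10 + int(minor or 0)
    if cap < 75:
        return None  # e.g. V100 (7.0): not supported
    if cap == 75:
        return "candle-cuda-turing"
    # Ampere, Ada Lovelace, Hopper and Blackwell
    return "candle-cuda"


print(cargo_feature("7.0"))  # None
print(cargo_feature("7.5"))  # candle-cuda-turing
print(cargo_feature("8.6"))  # candle-cuda
```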

Make sure you have CUDA and the NVIDIA drivers installed. NVIDIA drivers on your device need to be compatible with CUDA version 12.2 or higher. You also need to add the NVIDIA binaries to your path:

export PATH=$PATH:/usr/local/cuda/bin

Then run the following (might take a while as it needs to compile the CUDA kernels):

# On Turing GPUs (T4, RTX 2000 series ... )

cargo install --path router -F candle-cuda-turing

# On Ampere, Ada Lovelace, Hopper and Blackwell

cargo install --path router -F candle-cuda

You can now launch Text Embeddings Inference on GPU as follows:

model=Qwen/Qwen3-Embedding-0.6B

text-embeddings-router --model-id $model --port 8080

You can build the CPU container with Docker as:

docker build -f Dockerfile .

To build the CUDA containers, you need to know the compute cap of the GPU you will be using at runtime, to build the image accordingly:

# Get submodule dependencies

git submodule update --init

# Example for Turing (T4, RTX 2000 series, ...)

runtime_compute_cap=75

# Example for Ampere (A100, ...)

runtime_compute_cap=80

# Example for Ampere (A10, ...)

runtime_compute_cap=86

# Example for Ada Lovelace (RTX 4000 series, ...)

runtime_compute_cap=89

# Example for Hopper (H100, ...)

runtime_compute_cap=90

# Example for Blackwell (B200, GB200, ...)

runtime_compute_cap=100

# Example for Blackwell (GeForce RTX 50X0, RTX PRO 6000, ...)

runtime_compute_cap=120

docker build . -f Dockerfile-cuda --build-arg CUDA_COMPUTE_CAP=$runtime_compute_cap

As explained in "MPS-Ready, ARM64 Docker Image", Metal / MPS is not supported via Docker. As such, inference will be CPU bound and most likely pretty slow when using this Docker image on an M1/M2 ARM CPU.

docker build . -f Dockerfile --platform=linux/arm64


Similar Open Source Tools

text-embeddings-inference

Text Embeddings Inference (TEI) is a toolkit for deploying and serving open source text embeddings and sequence classification models. TEI enables high-performance extraction for popular models like FlagEmbedding, Ember, GTE, and E5. It implements features such as no model graph compilation step, Metal support for local execution on Macs, small docker images with fast boot times, token-based dynamic batching, optimized transformers code for inference using Flash Attention, Candle, and cuBLASLt, Safetensors weight loading, and production-ready features like distributed tracing with Open Telemetry and Prometheus metrics.

r2ai

r2ai is a tool designed to run a language model locally without internet access. It can be used to entertain users or assist in answering questions related to radare2 or reverse engineering. The tool allows users to prompt the language model, index large codebases, slurp file contents, embed the output of an r2 command, define different system-level assistant roles, set environment variables, and more. It is accessible as an r2lang-python plugin and can be scripted from various languages. Users can use different models, adjust query templates dynamically, load multiple models, and make them communicate with each other.

moatless-tools

Moatless Tools is a hobby project focused on experimenting with using Large Language Models (LLMs) to edit code in large existing codebases. The project aims to build tools that insert the right context into prompts and handle responses effectively. It utilizes an agentic loop functioning as a finite state machine to transition between states like Search, Identify, PlanToCode, ClarifyChange, and EditCode for code editing tasks.

mLoRA

mLoRA (Multi-LoRA Fine-Tune) is an open-source framework for efficient fine-tuning of multiple Large Language Models (LLMs) using LoRA and its variants. It allows concurrent fine-tuning of multiple LoRA adapters with a shared base model, efficient pipeline parallelism algorithm, support for various LoRA variant algorithms, and reinforcement learning preference alignment algorithms. mLoRA helps save computational and memory resources when training multiple adapters simultaneously, achieving high performance on consumer hardware.

litserve

LitServe is a high-throughput serving engine for deploying AI models at scale. It generates an API endpoint for a model, handles batching, streaming, autoscaling across CPU/GPUs, and more. Built for enterprise scale, it supports every framework like PyTorch, JAX, Tensorflow, and more. LitServe is designed to let users focus on model performance, not the serving boilerplate. It is like PyTorch Lightning for model serving but with broader framework support and scalability.

AQLM

AQLM is the official PyTorch implementation for Extreme Compression of Large Language Models via Additive Quantization. It includes prequantized AQLM models without PV-Tuning and PV-Tuned models for LLaMA, Mistral, and Mixtral families. The repository provides inference examples, model details, and quantization setups. Users can run prequantized models using Google Colab examples, work with different model families, and install the necessary inference library. The repository also offers detailed instructions for quantization, fine-tuning, and model evaluation. AQLM quantization involves calibrating models for compression, and users can improve model accuracy through finetuning. Additionally, the repository includes information on preparing models for inference and contributing guidelines.

paxml

Pax is a framework to configure and run machine learning experiments on top of Jax.

FlexFlow

FlexFlow Serve is an open-source compiler and distributed system for **low latency**, **high performance** LLM serving. FlexFlow Serve outperforms existing systems by 1.3-2.0x for single-node, multi-GPU inference and by 1.4-2.4x for multi-node, multi-GPU inference.

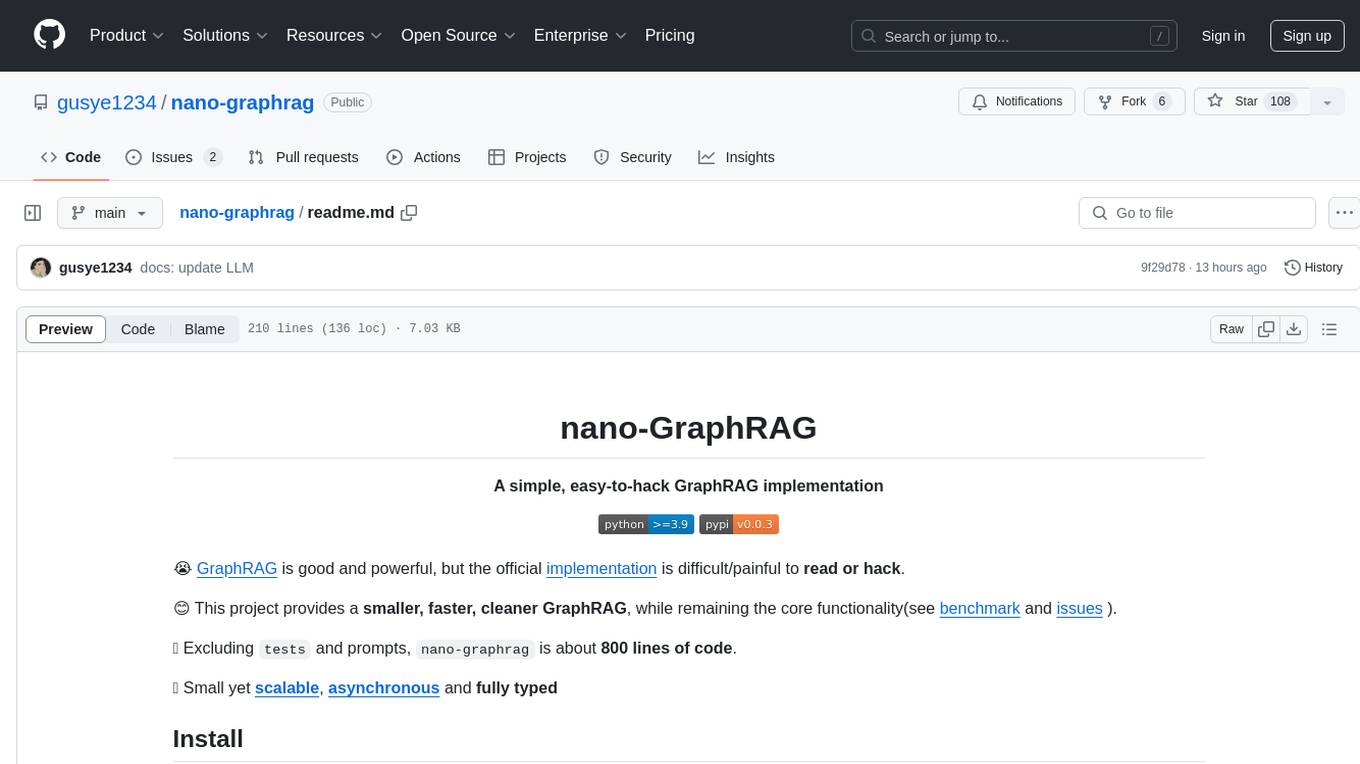

nano-graphrag

nano-GraphRAG is a simple, easy-to-hack implementation of GraphRAG that provides a smaller, faster, and cleaner version of the official implementation. It is about 800 lines of code, small yet scalable, asynchronous, and fully typed. The tool supports incremental insert, async methods, and various parameters for customization. Users can replace storage components and LLM functions as needed. It also allows for embedding function replacement and comes with pre-defined prompts for entity extraction and community reports. However, some features like covariates and global search implementation differ from the original GraphRAG. Future versions aim to address issues related to data source ID, community description truncation, and add new components.

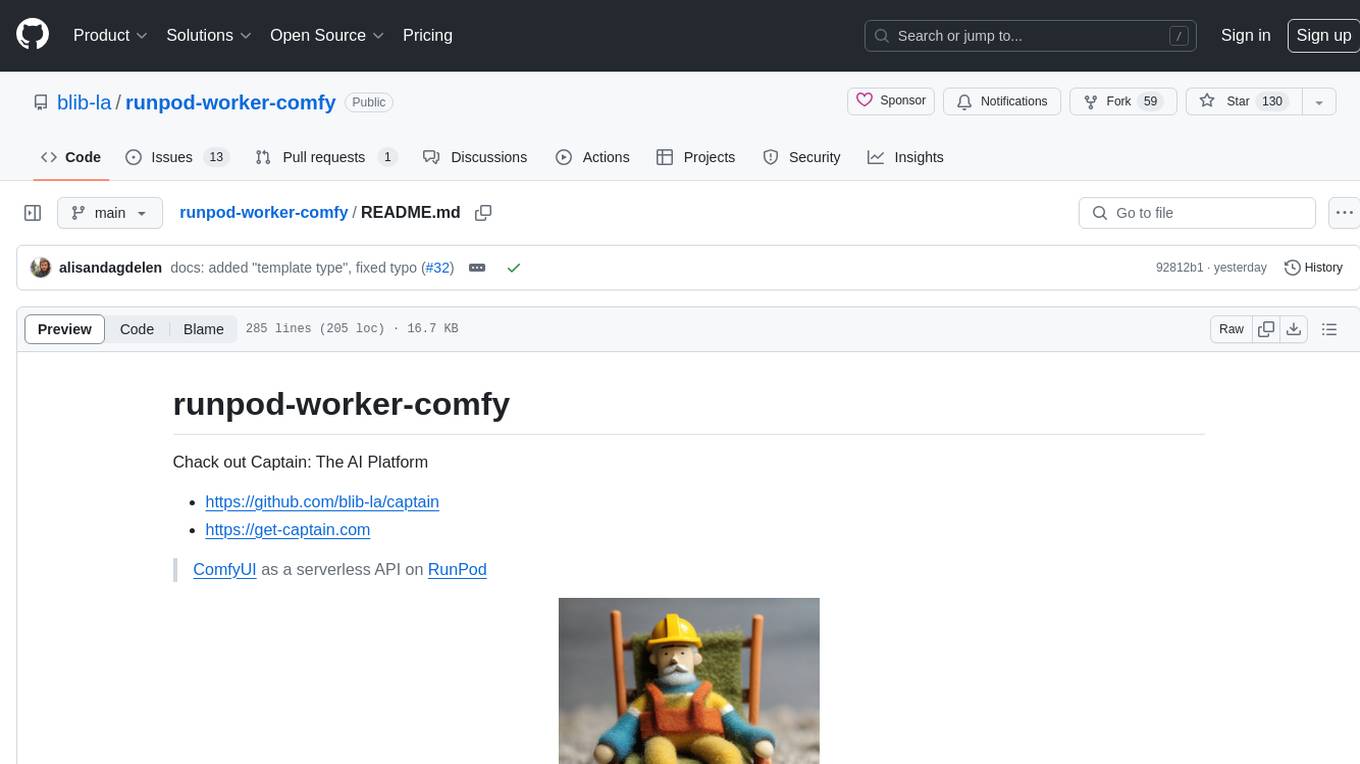

runpod-worker-comfy

runpod-worker-comfy is a serverless API tool that allows users to run any ComfyUI workflow to generate an image. Users can provide input images as base64-encoded strings, and the generated image can be returned as a base64-encoded string or uploaded to AWS S3. The tool is built on Ubuntu + NVIDIA CUDA and provides features like built-in checkpoints and VAE models. Users can configure environment variables to upload images to AWS S3 and interact with the RunPod API to generate images. The tool also supports local testing and deployment to Docker hub using Github Actions.

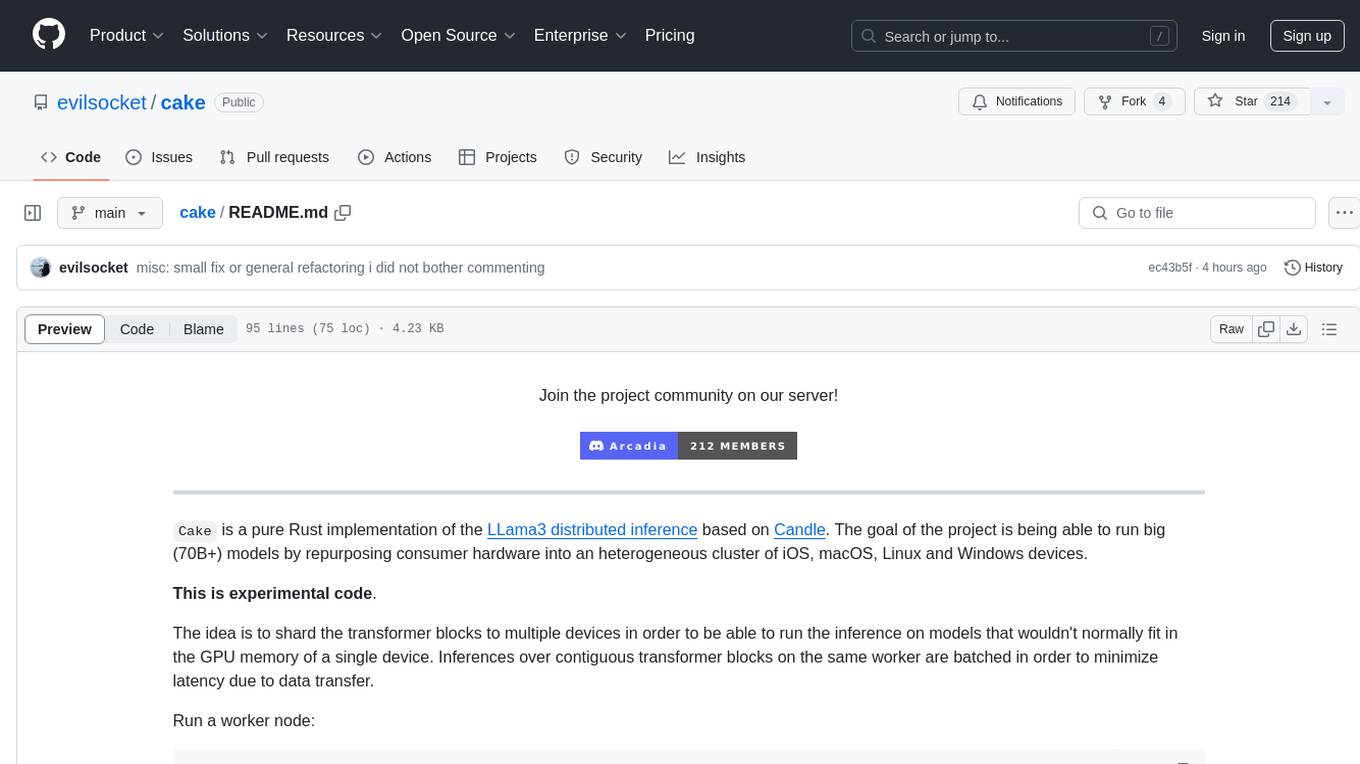

cake

cake is a pure Rust implementation of the llama3 LLM distributed inference based on Candle. The project aims to enable running large models on consumer hardware clusters of iOS, macOS, Linux, and Windows devices by sharding transformer blocks. It allows running inferences on models that wouldn't fit in a single device's GPU memory by batching contiguous transformer blocks on the same worker to minimize latency. The tool provides a way to optimize memory and disk space by splitting the model into smaller bundles for workers, ensuring they only have the necessary data. cake supports various OS, architectures, and accelerations, with different statuses for each configuration.

HuixiangDou

HuixiangDou is a **group chat** assistant based on LLM (Large Language Model). Advantages: 1. Design a two-stage pipeline of rejection and response to cope with group chat scenario, answer user questions without message flooding, see arxiv2401.08772 2. Low cost, requiring only 1.5GB memory and no need for training 3. Offers a complete suite of Web, Android, and pipeline source code, which is industrial-grade and commercially viable Check out the scenes in which HuixiangDou are running and join WeChat Group to try AI assistant inside. If this helps you, please give it a star ⭐

chatgpt-cli

ChatGPT CLI provides a powerful command-line interface for seamless interaction with ChatGPT models via OpenAI and Azure. It features streaming capabilities, extensive configuration options, and supports various modes like streaming, query, and interactive mode. Users can manage thread-based context, sliding window history, and provide custom context from any source. The CLI also offers model and thread listing, advanced configuration options, and supports GPT-4, GPT-3.5-turbo, and Perplexity's models. Installation is available via Homebrew or direct download, and users can configure settings through default values, a config.yaml file, or environment variables.

ragflow

RAGFlow is an open-source Retrieval-Augmented Generation (RAG) engine that combines deep document understanding with Large Language Models (LLMs) to provide accurate question-answering capabilities. It offers a streamlined RAG workflow for businesses of all sizes, enabling them to extract knowledge from unstructured data in various formats, including Word documents, slides, Excel files, images, and more. RAGFlow's key features include deep document understanding, template-based chunking, grounded citations with reduced hallucinations, compatibility with heterogeneous data sources, and an automated and effortless RAG workflow. It supports multiple recall paired with fused re-ranking, configurable LLMs and embedding models, and intuitive APIs for seamless integration with business applications.

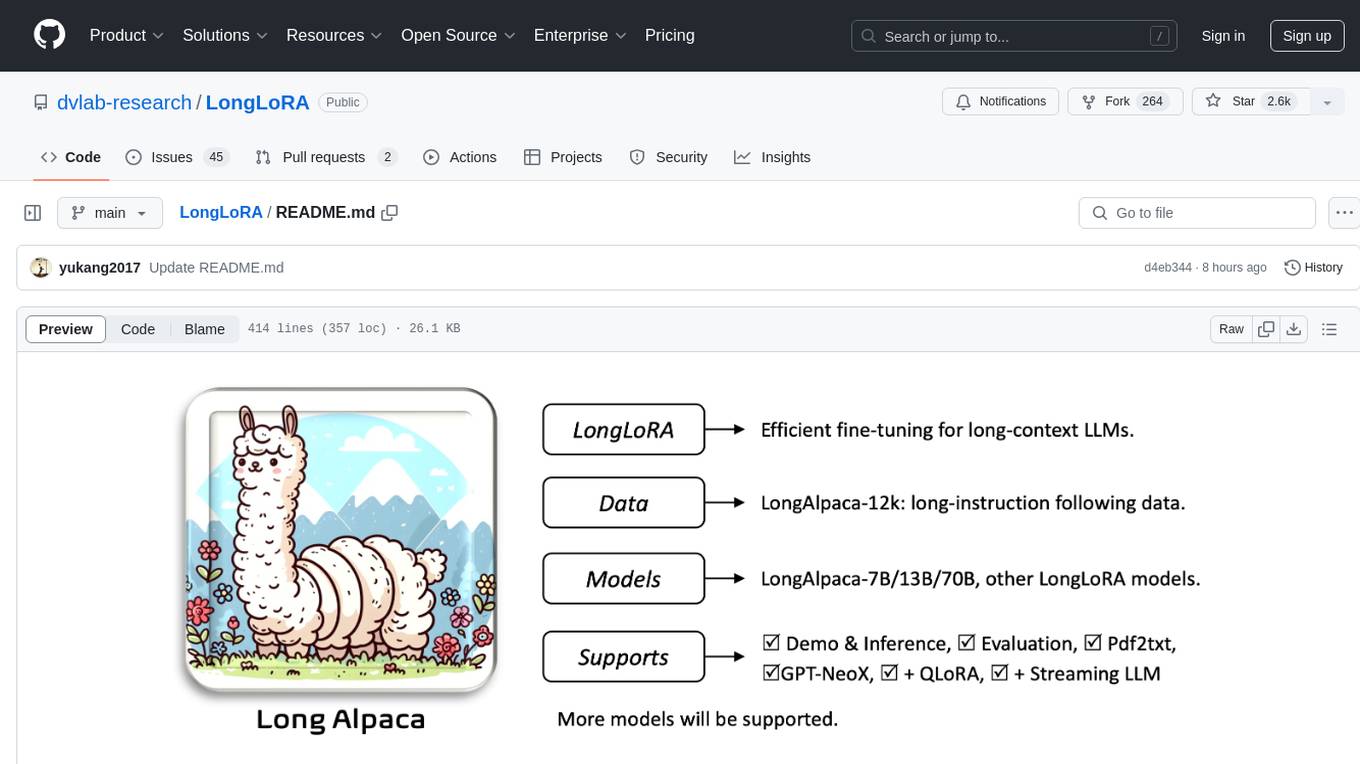

LongLoRA

LongLoRA is a tool for efficient fine-tuning of long-context large language models. It includes LongAlpaca data with long QA data collected and short QA sampled, models from 7B to 70B with context length from 8k to 100k, and support for GPTNeoX models. The tool supports supervised fine-tuning, context extension, and improved LoRA fine-tuning. It provides pre-trained weights, fine-tuning instructions, evaluation methods, local and online demos, streaming inference, and data generation via Pdf2text. LongLoRA is licensed under Apache License 2.0, while data and weights are under CC-BY-NC 4.0 License for research use only.

xFasterTransformer

xFasterTransformer is an optimized solution for Large Language Models (LLMs) on the X86 platform, providing high performance and scalability for inference on mainstream LLM models. It offers C++ and Python APIs for easy integration, along with example codes and benchmark scripts. Users can prepare models in a different format, convert them, and use the APIs for tasks like encoding input prompts, generating token ids, and serving inference requests. The tool supports various data types and models, and can run in single or multi-rank modes using MPI. A web demo based on Gradio is available for popular LLM models like ChatGLM and Llama2. Benchmark scripts help evaluate model inference performance quickly, and MLServer enables serving with REST and gRPC interfaces.

For similar tasks

nlp-llms-resources

The 'nlp-llms-resources' repository is a comprehensive resource list for Natural Language Processing (NLP) and Large Language Models (LLMs). It covers a wide range of topics including traditional NLP datasets, data acquisition, libraries for NLP, neural networks, sentiment analysis, optical character recognition, information extraction, semantics, topic modeling, multilingual NLP, domain-specific LLMs, vector databases, ethics, costing, books, courses, surveys, aggregators, newsletters, papers, conferences, and societies. The repository provides valuable information and resources for individuals interested in NLP and LLMs.

adata

AData is a free and open-source A-share database that focuses on transaction-related data. It provides comprehensive data on stocks, including basic information, market data, and sentiment analysis. AData is designed to be easy to use and integrate with other applications, making it a valuable tool for quantitative trading and AI training.

PIXIU

PIXIU is a project designed to support the development, fine-tuning, and evaluation of Large Language Models (LLMs) in the financial domain. It includes components like FinBen, a Financial Language Understanding and Prediction Evaluation Benchmark, FIT, a Financial Instruction Dataset, and FinMA, a Financial Large Language Model. The project provides open resources, multi-task and multi-modal financial data, and diverse financial tasks for training and evaluation. It aims to encourage open research and transparency in the financial NLP field.

hezar

Hezar is an all-in-one AI library designed specifically for the Persian community. It brings together various AI models and tools, making it easy to use AI with just a few lines of code. The library seamlessly integrates with Hugging Face Hub, offering a developer-friendly interface and task-based model interface. In addition to models, Hezar provides tools like word embeddings, tokenizers, feature extractors, and more. It also includes supplementary ML tools for deployment, benchmarking, and optimization.

text-embeddings-inference

Text Embeddings Inference (TEI) is a toolkit for deploying and serving open source text embeddings and sequence classification models. TEI enables high-performance extraction for popular models like FlagEmbedding, Ember, GTE, and E5. It implements features such as no model graph compilation step, Metal support for local execution on Macs, small docker images with fast boot times, token-based dynamic batching, optimized transformers code for inference using Flash Attention, Candle, and cuBLASLt, Safetensors weight loading, and production-ready features like distributed tracing with Open Telemetry and Prometheus metrics.

CodeProject.AI-Server

CodeProject.AI Server is a standalone, self-hosted, fast, free, and open-source Artificial Intelligence microserver designed for any platform and language. It can be installed locally without the need for off-device or out-of-network data transfer, providing an easy-to-use solution for developers interested in AI programming. The server includes a HTTP REST API server, backend analysis services, and the source code, enabling users to perform various AI tasks locally without relying on external services or cloud computing. Current capabilities include object detection, face detection, scene recognition, sentiment analysis, and more, with ongoing feature expansions planned. The project aims to promote AI development, simplify AI implementation, focus on core use-cases, and leverage the expertise of the developer community.

spark-nlp

Spark NLP is a state-of-the-art Natural Language Processing library built on top of Apache Spark. It provides simple, performant, and accurate NLP annotations for machine learning pipelines that scale easily in a distributed environment. Spark NLP comes with 36000+ pretrained pipelines and models in more than 200 languages. It offers tasks such as Tokenization, Word Segmentation, Part-of-Speech Tagging, Named Entity Recognition, Dependency Parsing, Spell Checking, Text Classification, Sentiment Analysis, Token Classification, Machine Translation, Summarization, Question Answering, Table Question Answering, Text Generation, Image Classification, Image to Text (captioning), Automatic Speech Recognition, Zero-Shot Learning, and many more NLP tasks. Spark NLP is the only open-source NLP library in production that offers state-of-the-art transformers such as BERT, CamemBERT, ALBERT, ELECTRA, XLNet, DistilBERT, RoBERTa, DeBERTa, XLM-RoBERTa, Longformer, ELMo, Universal Sentence Encoder, Llama-2, M2M100, BART, Instructor, E5, Google T5, MarianMT, OpenAI GPT2, Vision Transformers (ViT), OpenAI Whisper, and many more, not only to Python and R but also to the JVM ecosystem (Java, Scala, and Kotlin), at scale by extending Apache Spark natively.

scikit-llm

Scikit-LLM is a tool that seamlessly integrates powerful language models like ChatGPT into scikit-learn for enhanced text analysis tasks. It allows users to leverage large language models for various text analysis applications within the familiar scikit-learn framework. The tool simplifies the process of incorporating advanced language processing capabilities into machine learning pipelines, enabling users to benefit from the latest advancements in natural language processing.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models, from images to sound.

kaito

Kaito is an operator that automates AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Kaito largely simplifies the workflow of onboarding large AI inference models in Kubernetes.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks so that operators can focus on more complicated and time-consuming tasks, and it can also identify security harms such as misuse (e.g., malware generation, jailbreaking) and privacy harms (e.g., identity theft). The goal is to give researchers a baseline of how well their model and entire inference pipeline are doing against different harm categories and to let them compare that baseline to future iterations of their model. This gives them empirical data on how well their model is doing today and lets them detect any degradation of performance in future iterations.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features:

- Self-contained, with no need for a DBMS or cloud service.

- OpenAPI interface, easy to integrate with existing infrastructure (e.g., Cloud IDE).

- Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.