redisvl

Redis Vector Library (RedisVL) enables Redis to serve as a real-time vector database for LLM applications.

Stars: 158

Redis Vector Library (RedisVL) is a Python client library for building AI applications on top of Redis. It provides a high-level interface for managing vector indexes, performing vector search, and integrating with popular embedding models and providers. RedisVL is designed to make it easy for developers to build and deploy AI applications that leverage the speed, flexibility, and reliability of Redis.

README:

The Python Redis Vector Library (RedisVL) is a tailor-made client for AI applications leveraging Redis.

It's specifically designed for:

- Information retrieval & vector similarity search

- Real-time RAG pipelines

- Recommendation engines

Enhance your applications with Redis' speed, flexibility, and reliability, incorporating capabilities like vector-based semantic search, full-text search, and geo-spatial search.

The modern GenAI stack, including vector databases and LLMs, has become increasingly popular due to accelerated innovation and research in information retrieval, the ubiquity of tools and frameworks (e.g. LangChain, LlamaIndex, EmbedChain), and the never-ending stream of business problems addressable by AI.

However, organizations still struggle with delivering reliable solutions quickly (time to value) at scale (beyond a demo).

Redis has been a staple in the NoSQL world for over a decade, and boasts a number of flexible data structures and processing engines to handle real-time application workloads like caching, session management, and search. Most notably, Redis has been used as a vector database for RAG, as an LLM cache, and as a chat session memory store for conversational AI applications.

The vector library bridges the gap between the emerging AI-native developer ecosystem and the capabilities of Redis by providing a lightweight, elegant, and intuitive interface. Built on the back of the popular Python client, redis-py, it abstracts the features of Redis into a grammar that is more aligned to the needs of today's AI/ML engineers and data scientists.

Install redisvl into your Python (>=3.8) environment using pip:

```bash
pip install redisvl
```

For more instructions, visit the `redisvl` installation guide.

Choose from multiple Redis deployment options:

- Redis Cloud: Managed cloud database (free tier available)

- Redis Stack: Docker image for development

```bash
docker run -d --name redis-stack -p 6379:6379 -p 8001:8001 redis/redis-stack:latest
```

- Redis Enterprise: Commercial, self-hosted database

- Azure Cache for Redis Enterprise: Fully managed Redis Enterprise on Azure

Enhance your experience and observability with the free Redis Insight GUI.

- Design an `IndexSchema` that models your dataset with built-in Redis data structures (Hash or JSON) and indexable fields (e.g. text, tags, numerics, geo, and vectors). Load a schema from a YAML file:

```yaml
index:
  name: user-index-v1
  prefix: user
  storage_type: json

fields:
  - name: user
    type: tag
  - name: credit_score
    type: tag
  - name: embedding
    type: vector
    attrs:
      algorithm: flat
      dims: 4
      distance_metric: cosine
      datatype: float32
```

```python
from redisvl.schema import IndexSchema

schema = IndexSchema.from_yaml("schemas/schema.yaml")
```

Or load directly from a Python dictionary:

```python
schema = IndexSchema.from_dict({
    "index": {
        "name": "user-index-v1",
        "prefix": "user",
        "storage_type": "json"
    },
    "fields": [
        {"name": "user", "type": "tag"},
        {"name": "credit_score", "type": "tag"},
        {
            "name": "embedding",
            "type": "vector",
            "attrs": {
                "algorithm": "flat",
                "datatype": "float32",
                "dims": 4,
                "distance_metric": "cosine"
            }
        }
    ]
})
```

- Create a `SearchIndex` with an input schema and client connection to perform admin and search operations on your index in Redis:

```python
from redis import Redis
from redisvl.index import SearchIndex

# Establish Redis connection and define index
client = Redis.from_url("redis://localhost:6379")
index = SearchIndex(schema, client)

# Create the index in Redis
index.create()
```

An async-compliant search index class is also available: `AsyncSearchIndex`.

- Load and fetch data to/from your Redis instance:

```python
data = {"user": "john", "credit_score": "high", "embedding": [0.23, 0.49, -0.18, 0.95]}

# load list of dictionaries, specify the "id" field
index.load([data], id_field="user")

# fetch by "id"
john = index.fetch("john")
```
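To make the steps above more concrete, here is a pure-Python sketch of what they roughly correspond to at the Redis level. It is an approximation under stated assumptions, not RedisVL's implementation: `ft_create_args`, `record_key`, and `check_dims` are hypothetical helpers; the argument layout follows the RediSearch `FT.CREATE ... ON JSON ... SCHEMA` command syntax; and the `<prefix>:<id>` key layout with a `:` separator is an assumption about how loaded records are keyed. `check_dims` also shows why a vector field's `dims` must match the length of the embeddings you load (4 in the examples above).

```python
from typing import List

# Mirrors the dictionary schema above
SCHEMA = {
    "index": {"name": "user-index-v1", "prefix": "user", "storage_type": "json"},
    "fields": [
        {"name": "user", "type": "tag"},
        {"name": "credit_score", "type": "tag"},
        {
            "name": "embedding",
            "type": "vector",
            "attrs": {"algorithm": "flat", "datatype": "float32",
                      "dims": 4, "distance_metric": "cosine"},
        },
    ],
}


def ft_create_args(schema: dict) -> List[str]:
    """Hypothetical: build a simplified FT.CREATE argument list for a JSON index."""
    idx = schema["index"]
    args = ["FT.CREATE", idx["name"], "ON", idx["storage_type"].upper(),
            "PREFIX", "1", idx["prefix"], "SCHEMA"]
    for field in schema["fields"]:
        # JSON fields are addressed by JSONPath and aliased to their plain name
        args += [f"$.{field['name']}", "AS", field["name"], field["type"].upper()]
        if field["type"] == "vector":
            attrs = field["attrs"]
            vec_args = ["TYPE", attrs["datatype"].upper(),
                        "DIM", str(attrs["dims"]),
                        "DISTANCE_METRIC", attrs["distance_metric"].upper()]
            # Algorithm name, then the number of attribute tokens that follow
            args += [attrs["algorithm"].upper(), str(len(vec_args))] + vec_args
    return args


def record_key(prefix: str, record_id: str, separator: str = ":") -> str:
    """Hypothetical key layout (an assumption): <prefix><separator><id>."""
    return f"{prefix}{separator}{record_id}"


def check_dims(schema: dict, record: dict) -> None:
    """Raise ValueError if a record's vector length differs from the schema's dims."""
    for field in schema["fields"]:
        if field["type"] == "vector":
            expected = field["attrs"]["dims"]
            actual = len(record[field["name"]])
            if actual != expected:
                raise ValueError(f"{field['name']}: expected {expected} dims, got {actual}")
```

For example, `" ".join(ft_create_args(SCHEMA))` yields `FT.CREATE user-index-v1 ON JSON PREFIX 1 user SCHEMA $.user AS user TAG $.credit_score AS credit_score TAG $.embedding AS embedding VECTOR FLAT 6 TYPE FLOAT32 DIM 4 DISTANCE_METRIC COSINE`, and `record_key("user", "john")` yields `user:john`.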

Define queries and perform advanced searches over your indices, including the combination of vectors, metadata filters, and more.

- `VectorQuery`: Flexible vector queries with customizable filters enabling semantic search:

```python
from redisvl.query import VectorQuery

query = VectorQuery(
    vector=[0.16, -0.34, 0.98, 0.23],
    vector_field_name="embedding",
    num_results=3
)
# run the vector search query against the embedding field
results = index.query(query)
```

Incorporate complex metadata filters on your queries:

```python
from redisvl.query.filter import Tag

# define a tag match filter
tag_filter = Tag("user") == "john"

# update query definition
query.set_filter(tag_filter)

# execute query
results = index.query(query)
```

- `RangeQuery`: Vector search within a defined range paired with customizable filters

- `FilterQuery`: Standard search using filters and full-text search

- `CountQuery`: Count the number of indexed records given attributes
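The query types listed above differ mainly in how candidates are selected and scored. The following in-memory sketch illustrates those semantics with plain Python; it is not how Redis executes queries. It assumes cosine distance is 1 minus cosine similarity (matching the `cosine` metric in the schema), uses brute-force comparison in the spirit of the `flat` algorithm, and models filters as simple predicates. All names (`RECORDS`, `tag_match`, `vector_query`, `range_query`, `filter_query`, `count_query`) and the extra records `mary` and `joe` are hypothetical.

```python
import math
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical in-memory records keyed by id; "john" mirrors the load example above
RECORDS: Dict[str, dict] = {
    "john": {"user": "john", "credit_score": "high", "embedding": [0.23, 0.49, -0.18, 0.95]},
    "mary": {"user": "mary", "credit_score": "low", "embedding": [0.10, -0.30, 0.90, 0.20]},
    "joe": {"user": "joe", "credit_score": "medium", "embedding": [-0.50, 0.10, 0.40, -0.70]},
}

Predicate = Callable[[dict], bool]


def cosine_distance(a: List[float], b: List[float]) -> float:
    """1 - cosine similarity: 0 for identical directions, up to 2 for opposite ones."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norm


def tag_match(field: str, value: str) -> Predicate:
    """Predicate loosely resembling Tag(field) == value (exact match)."""
    return lambda rec: rec.get(field) == value


def filter_query(filter_fn: Predicate) -> List[str]:
    """Ids of records matching the filter; no vector scoring involved."""
    return [rid for rid, rec in RECORDS.items() if filter_fn(rec)]


def count_query(filter_fn: Predicate) -> int:
    """Number of records matching the filter."""
    return len(filter_query(filter_fn))


def vector_query(vector: List[float], num_results: int = 3,
                 filter_fn: Optional[Predicate] = None) -> List[Tuple[str, float]]:
    """Brute-force KNN over (optionally filtered) records, nearest first."""
    hits = [
        (rid, cosine_distance(vector, rec["embedding"]))
        for rid, rec in RECORDS.items()
        if filter_fn is None or filter_fn(rec)
    ]
    return sorted(hits, key=lambda hit: hit[1])[:num_results]


def range_query(vector: List[float], distance_threshold: float,
                filter_fn: Optional[Predicate] = None) -> List[Tuple[str, float]]:
    """All matches within distance_threshold, rather than a fixed top-k."""
    every_hit = vector_query(vector, len(RECORDS), filter_fn)
    return [hit for hit in every_hit if hit[1] <= distance_threshold]
```

With the query vector from the `VectorQuery` example, `vector_query([0.16, -0.34, 0.98, 0.23])` ranks `mary` first, adding `filter_fn=tag_match("user", "john")` narrows the candidates to `john`, and `range_query(..., 0.2)` keeps only `mary`.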

Read more about building advanced Redis queries here.

Create, destroy, and manage Redis index configurations from a purpose-built CLI: `rvl`.

```bash
$ rvl -h

usage: rvl <command> [<args>]

Commands:
        index       Index manipulation (create, delete, etc.)
        version     Obtain the version of RedisVL
        stats       Obtain statistics about an index
```

Read more about using the `redisvl` CLI here.

Integrate with popular embedding models and providers to greatly simplify the process of vectorizing unstructured data for your index and queries:

```python
from redisvl.utils.vectorize import CohereTextVectorizer

# set COHERE_API_KEY in your environment
co = CohereTextVectorizer()

embedding = co.embed(
    text="What is the capital city of France?",
    input_type="search_query"
)

embeddings = co.embed_many(
    texts=["my document chunk content", "my other document chunk content"],
    input_type="search_document"
)
```

Learn more about using `redisvl` Vectorizers in your workflows here.
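Provider vectorizers such as the Cohere one above expose `embed` for a single text and `embed_many` for a list. The sketch below mimics that interface with a hypothetical `ToyHashVectorizer` that needs no API key: it uses feature hashing over whitespace tokens instead of a learned model, so its vectors carry no real semantics. The batching in `embed_many` illustrates why a `batch_size` parameter is useful when each batch maps to one provider request; treat the parameter names as assumptions rather than RedisVL's exact signature.

```python
import hashlib
import math
from typing import Iterator, List


class ToyHashVectorizer:
    """Hypothetical stand-in for a provider vectorizer: maps text to a fixed-size,
    L2-normalized bag-of-words vector via feature hashing (no learned semantics)."""

    def __init__(self, dims: int = 8):
        self.dims = dims

    def embed(self, text: str) -> List[float]:
        vec = [0.0] * self.dims
        for token in text.lower().split():
            # md5 gives a hash that is stable across runs (unlike built-in hash())
            digest = hashlib.md5(token.encode("utf-8")).digest()
            vec[int.from_bytes(digest[:4], "little") % self.dims] += 1.0
        norm = math.sqrt(sum(v * v for v in vec)) or 1.0  # avoid dividing by zero
        return [v / norm for v in vec]

    @staticmethod
    def _batches(items: List[str], size: int) -> Iterator[List[str]]:
        for start in range(0, len(items), size):
            yield items[start:start + size]

    def embed_many(self, texts: List[str], batch_size: int = 10) -> List[List[float]]:
        out: List[List[float]] = []
        for batch in self._batches(texts, batch_size):
            # A real provider client would send one API request per batch here
            out.extend(self.embed(text) for text in batch)
        return out
```

Swapping this toy class for a real vectorizer changes only where the vectors come from; the output shape (one list of floats per input text) is what the index and queries consume.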

In order to perform well in production, modern GenAI applications require much more than vector search for retrieval. `redisvl` provides some common extensions that aim to improve applications working with LLMs:

- LLM Semantic Caching is designed to increase application throughput and reduce the cost of using LLMs in production by leveraging previously generated knowledge.

```python
from redisvl.extensions.llmcache import SemanticCache

# init cache with TTL (expiration) policy and semantic distance threshold
llmcache = SemanticCache(
    name="llmcache",
    ttl=360,
    redis_url="redis://localhost:6379"
)
llmcache.set_threshold(0.2)  # can be changed on-demand

# store user queries and LLM responses in the semantic cache
llmcache.store(
    prompt="What is the capital city of France?",
    response="Paris",
    metadata={}
)

# quickly check the cache with a slightly different prompt (before invoking an LLM)
response = llmcache.check(prompt="What is France's capital city?")
print(response[0]["response"])
```

```
>>> "Paris"
```

Learn more about Semantic Caching here.

- LLM Session Management (COMING SOON) aims to improve personalization and accuracy of the LLM application by providing user chat session information and conversational memory.

- LLM Contextual Access Control (COMING SOON) aims to address security concerns by preventing malicious, irrelevant, or problematic user input from reaching LLMs and infrastructure.
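The idea behind LLM Semantic Caching can be illustrated without Redis. The sketch below is a hypothetical, in-memory `ToySemanticCache`, not the `SemanticCache` implementation: it embeds each prompt with a caller-supplied function, returns cached responses whose cosine distance to the new prompt is at or below `distance_threshold`, and expires entries after `ttl` seconds (an injectable `clock` makes expiration testable). Hits are returned as dicts with a `response` key, mirroring the `response[0]["response"]` access in the caching example.

```python
import math
import time
from typing import Callable, List, Optional, Tuple

Vector = List[float]


def cosine_distance(a: Vector, b: Vector) -> float:
    """1 - cosine similarity; treats a zero vector as unrelated (distance 1.0)."""
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    if norm == 0.0:
        return 1.0
    return 1.0 - sum(x * y for x, y in zip(a, b)) / norm


class ToySemanticCache:
    """Hypothetical in-memory semantic cache (illustration only)."""

    def __init__(self, embed: Callable[[str], Vector], distance_threshold: float = 0.2,
                 ttl: Optional[float] = None, clock: Callable[[], float] = time.monotonic):
        self.embed = embed
        self.distance_threshold = distance_threshold
        self.ttl = ttl
        self.clock = clock
        # Each entry: (prompt vector, prompt, response, expiry time or None)
        self._entries: List[Tuple[Vector, str, str, Optional[float]]] = []

    def store(self, prompt: str, response: str) -> None:
        expires_at = None if self.ttl is None else self.clock() + self.ttl
        self._entries.append((self.embed(prompt), prompt, response, expires_at))

    def check(self, prompt: str) -> List[dict]:
        now = self.clock()
        # Evict expired entries first, similar in spirit to Redis key expiration
        self._entries = [e for e in self._entries if e[3] is None or e[3] > now]
        query_vec = self.embed(prompt)
        hits = []
        for vec, cached_prompt, response, _ in self._entries:
            distance = cosine_distance(query_vec, vec)
            if distance <= self.distance_threshold:
                hits.append({"prompt": cached_prompt, "response": response,
                             "vector_distance": distance})
        # Closest cached prompt first
        return sorted(hits, key=lambda hit: hit["vector_distance"])
```

In practice `embed` would be a real vectorizer; whether two paraphrases land within the threshold depends entirely on that embedding, which is why the threshold is worth tuning on your own prompts.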

To get started, check out the following guides:

Please help us by contributing PRs, opening GitHub issues for bugs or new feature ideas, improving documentation, or increasing test coverage. Read more about how to contribute!

This project is supported by Redis, Inc. on a good-faith effort basis. To report bugs, request features, or receive assistance, please file an issue.


Alternative AI tools for redisvl

Similar Open Source Tools


SimplerLLM

SimplerLLM is an open-source Python library that simplifies interactions with Large Language Models (LLMs) for researchers and beginners. It provides a unified interface for different LLM providers, tools for enhancing language model capabilities, and easy development of AI-powered tools and apps. The library offers features like unified LLM interface, generic text loader, RapidAPI connector, SERP integration, prompt template builder, and more. Users can easily set up environment variables, create LLM instances, use tools like SERP, generic text loader, calling RapidAPI APIs, and prompt template builder. Additionally, the library includes chunking functions to split texts into manageable chunks based on different criteria. Future updates will bring more tools, interactions with local LLMs, prompt optimization, response evaluation, GPT Trainer, document chunker, advanced document loader, integration with more providers, Simple RAG with SimplerVectors, integration with vector databases, agent builder, and LLM server.

lionagi

LionAGI is a robust framework for orchestrating multi-step AI operations with precise control. It allows users to bring together multiple models, advanced reasoning, tool integrations, and custom validations in a single coherent pipeline. The framework is structured, expandable, controlled, and transparent, offering features like real-time logging, message introspection, and tool usage tracking. LionAGI supports advanced multi-step reasoning with ReAct, integrates with Anthropic's Model Context Protocol, and provides observability and debugging tools. Users can seamlessly orchestrate multiple models, integrate with Claude Code CLI SDK, and leverage a fan-out fan-in pattern for orchestration. The framework also offers optional dependencies for additional functionalities like reader tools, local inference support, rich output formatting, database support, and graph visualization.

req_llm

ReqLLM is a Req-based library for LLM interactions, offering a unified interface to AI providers through a plugin-based architecture. It brings composability and middleware advantages to LLM interactions, with features like auto-synced providers/models, typed data structures, ergonomic helpers, streaming capabilities, usage & cost extraction, and a plugin-based provider system. Users can easily generate text, structured data, embeddings, and track usage costs. The tool supports various AI providers like Anthropic, OpenAI, Groq, Google, and xAI, and allows for easy addition of new providers. ReqLLM also provides API key management, detailed documentation, and a roadmap for future enhancements.

agentpress

AgentPress is a collection of simple but powerful utilities that serve as building blocks for creating AI agents. It includes core components for managing threads, registering tools, processing responses, state management, and utilizing LLMs. The tool provides a modular architecture for handling messages, LLM API calls, response processing, tool execution, and results management. Users can easily set up the environment, create custom tools with OpenAPI or XML schema, and manage conversation threads with real-time interaction. AgentPress aims to be agnostic, simple, and flexible, allowing users to customize and extend functionalities as needed.

semantic-cache

Semantic Cache is a tool for caching natural text based on semantic similarity. It allows for classifying text into categories, caching AI responses, and reducing API latency by responding to similar queries with cached values. The tool stores cache entries by meaning, handles synonyms, supports multiple languages, understands complex queries, and offers easy integration with Node.js applications. Users can set a custom proximity threshold for filtering results. The tool is ideal for tasks involving querying or retrieving information based on meaning, such as natural language classification or caching AI responses.

GraphRAG-SDK

Build fast and accurate GenAI applications with GraphRAG SDK, a specialized toolkit for building Graph Retrieval-Augmented Generation (GraphRAG) systems. It integrates knowledge graphs, ontology management, and state-of-the-art LLMs to deliver accurate, efficient, and customizable RAG workflows. The SDK simplifies the development process by automating ontology creation, knowledge graph agent creation, and query handling, enabling users to interact and query their knowledge graphs effectively. It supports multi-agent systems and orchestrates agents specialized in different domains. The SDK is optimized for FalkorDB, ensuring high performance and scalability for large-scale applications. By leveraging knowledge graphs, it enables semantic relationships and ontology-driven queries that go beyond standard vector similarity, enhancing retrieval-augmented generation capabilities.

notte

Notte is a web browser designed specifically for LLM agents, providing a language-first web navigation experience without the need for DOM/HTML parsing. It transforms websites into structured, navigable maps described in natural language, enabling users to interact with the web using natural language commands. By simplifying browser complexity, Notte allows LLM policies to focus on conversational reasoning and planning, reducing token usage, costs, and latency. The tool supports various language model providers and offers a reinforcement learning style action space and controls for full navigation control.

datachain

DataChain is an open-source Python library for processing and curating unstructured data at scale. It supports AI-driven data curation using local ML models and LLM APIs, handles large datasets, and is Python-friendly with Pydantic objects. It excels at optimizing batch operations and is designed for offline data processing, curation, and ETL. Typical use cases include Computer Vision data curation, LLM analytics, and validation.

curator

Bespoke Curator is an open-source tool for data curation and structured data extraction. It provides a Python library for generating synthetic data at scale, with features like programmability, performance optimization, caching, and integration with HuggingFace Datasets. The tool includes a Curator Viewer for dataset visualization and offers a rich set of functionalities for creating and refining data generation strategies.

langserve

LangServe helps developers deploy `LangChain` runnables and chains as a REST API. This library is integrated with FastAPI and uses pydantic for data validation. In addition, it provides a client that can be used to call into runnables deployed on a server. A JavaScript client is available in LangChain.js.

mcp-omnisearch

mcp-omnisearch is a Model Context Protocol (MCP) server that acts as a unified gateway to multiple search providers and AI tools. It integrates Tavily, Perplexity, Kagi, Jina AI, Brave, Exa AI, and Firecrawl to offer a wide range of search, AI response, content processing, and enhancement features through a single interface. The server provides powerful search capabilities, AI response generation, content extraction, summarization, web scraping, structured data extraction, and more. It is designed to work flexibly with the API keys available, enabling users to activate only the providers they have keys for and easily add more as needed.

IntelliNode

IntelliNode is a JavaScript module that integrates cutting-edge AI models like ChatGPT, LLaMA, WaveNet, Gemini, and Stable Diffusion into projects. It offers functions for generating text, speech, and images, as well as semantic search, multi-model evaluation, and chatbot capabilities. The module provides a wrapper layer for low-level model access, a controller layer for unified input handling, and a function layer for abstract functionality tailored to various use cases.

datachain

DataChain is a Python-based AI-data warehouse for transforming and analyzing unstructured data like images, audio, videos, text, and PDFs. It integrates with external storage to process data efficiently without duplication and manages metadata for easy querying. Use cases include ETL, analytics, versioning, and incremental processing. Key features include multimodal dataset versioning, Python-friendly operations, data enrichment, and processing. The tool allows for generating metadata using AI models, filtering, joining, and grouping datasets, and performing high-performance vectorized operations.

LightRAG

LightRAG is a repository hosting the code for LightRAG, a system that supports seamless integration of custom knowledge graphs, Oracle Database 23ai, Neo4J for storage, and multiple file types. It includes features like entity deletion, batch insert, incremental insert, and graph visualization. LightRAG provides an API server implementation for RESTful API access to RAG operations, allowing users to interact with it through HTTP requests. The repository also includes evaluation scripts, code for reproducing results, and a comprehensive code structure.

continuous-eval

Open-Source Evaluation for LLM Applications. `continuous-eval` is an open-source package created for granular and holistic evaluation of GenAI application pipelines. It offers modularized evaluation, a comprehensive metric library covering various LLM use cases, the ability to leverage user feedback in evaluation, and synthetic dataset generation for testing pipelines. Users can define their own metrics by extending the Metric class. The tool allows running evaluation on a pipeline defined with modules and corresponding metrics. Additionally, it provides synthetic data generation capabilities to create user interaction data for evaluation or training purposes.

For similar tasks


kernel-memory

Kernel Memory (KM) is a multi-modal AI Service specialized in the efficient indexing of datasets through custom continuous data hybrid pipelines, with support for Retrieval Augmented Generation (RAG), synthetic memory, prompt engineering, and custom semantic memory processing. KM is available as a Web Service, as a Docker container, a Plugin for ChatGPT/Copilot/Semantic Kernel, and as a .NET library for embedded applications. Utilizing advanced embeddings and LLMs, the system enables Natural Language querying for obtaining answers from the indexed data, complete with citations and links to the original sources. Designed for seamless integration as a Plugin with Semantic Kernel, Microsoft Copilot and ChatGPT, Kernel Memory enhances data-driven features in applications built for most popular AI platforms.

nucliadb

NucliaDB is a robust database that allows storing and searching on unstructured data. It is an out-of-the-box hybrid search database, utilizing vector, full-text and graph indexes. NucliaDB is written in Rust and Python. We designed it to index large datasets and provide multi-tenant support. When utilizing NucliaDB with Nuclia cloud, you can harness the power of an NLP database without the hassle of data extraction, enrichment and inference. We do all the hard work for you.

ocular

Ocular is a set of modules and tools that allow you to build rich, reliable, and performant Generative AI-Powered Search Platforms without the need to reinvent Search Architecture. We help you spin up customized internal search in days, not months.

genkit

Firebase Genkit (beta) is a framework with powerful tooling to help app developers build, test, deploy, and monitor AI-powered features with confidence. Genkit is cloud optimized and code-centric, integrating with many services that have free tiers to get started. It provides unified API for generation, context-aware AI features, evaluation of AI workflow, extensibility with plugins, easy deployment to Firebase or Google Cloud, observability and monitoring with OpenTelemetry, and a developer UI for prototyping and testing AI features locally. Genkit works seamlessly with Firebase or Google Cloud projects through official plugins and templates.

swiftide

Swiftide is a fast, streaming indexing and query library tailored for Retrieval Augmented Generation (RAG) in AI applications. It is built in Rust, utilizing parallel, asynchronous streams for blazingly fast performance. With Swiftide, users can easily build AI applications from idea to production in just a few lines of code. The tool addresses frustrations around performance, stability, and ease of use encountered while working with Python-based tooling. It offers features like fast streaming indexing pipeline, experimental query pipeline, integrations with various platforms, loaders, transformers, chunkers, embedders, and more. Swiftide aims to provide a platform for data indexing and querying to advance the development of automated Large Language Model (LLM) applications.

oramacore

OramaCore is a database designed for AI projects, answer engines, copilots, and search functionalities. It offers features such as a full-text search engine, vector database, LLM interface, and various utilities. The tool is currently under active development and not recommended for production use due to potential API changes. OramaCore aims to provide a comprehensive solution for managing data and enabling advanced search capabilities in AI applications.

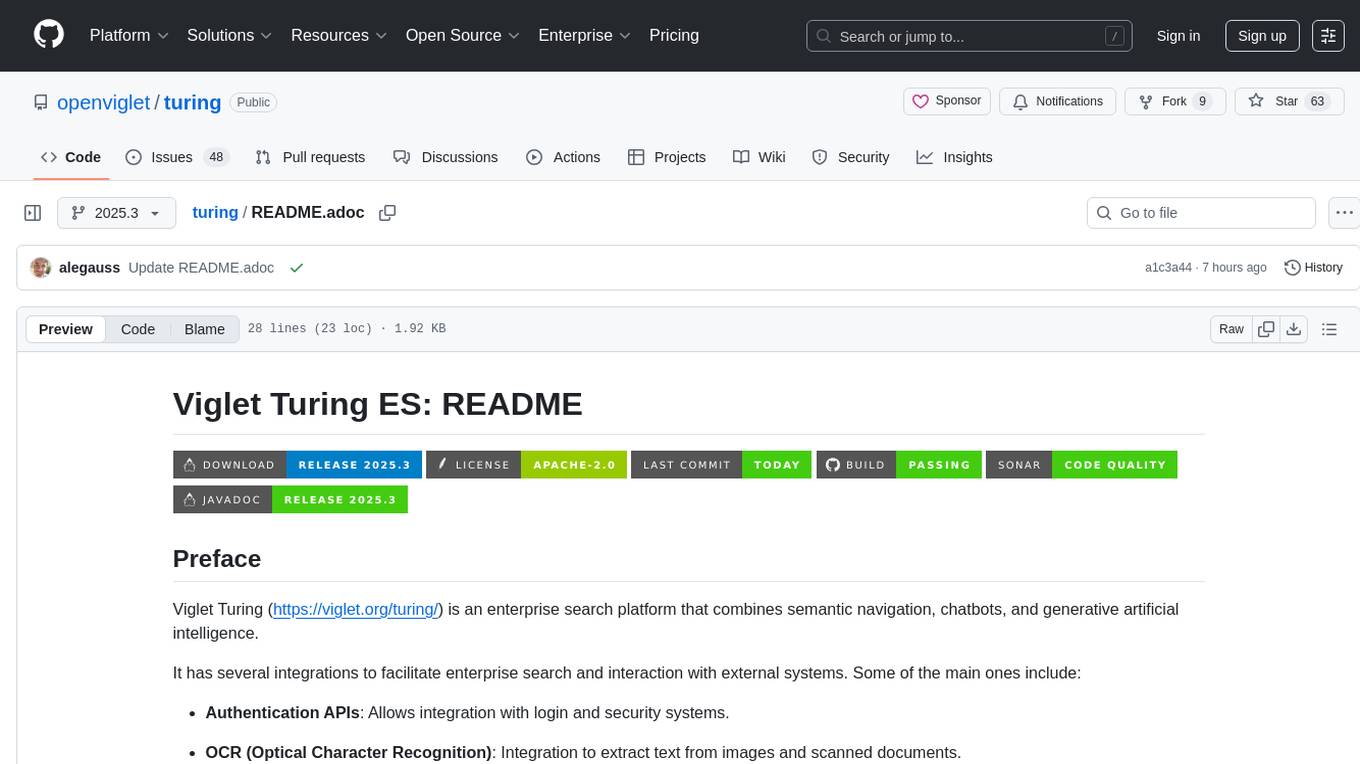

turing

Viglet Turing is an enterprise search platform that combines semantic navigation, chatbots, and generative artificial intelligence. It offers integrations for authentication APIs, OCR, content indexing, CMS connectors, web crawling, database connectors, and file system indexing.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud-native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise-level infrastructure that can power any LLM production use case. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers
- Track LLM usage on a per-user and per-organization basis
- Block or redact requests containing PII
- Improve LLM reliability with failovers, retries and caching
- Distribute API keys with rate limits and cost limits for internal development/production use cases
- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.