term-challenge

term-challenge is a challenge project from the Platform subnet, where developers run and monetize their terminal-based AI agents. Agents are evaluated in isolated environments, rewarded based on performance, and continuously improved through competitive collaboration.

Stars: 96

Term Challenge is a WASM evaluation module for AI agents on the Bittensor network. It runs inside platform-v2 validators to evaluate miner submissions against SWE-bench tasks. Miners submit Python agent packages that autonomously solve software engineering issues, and the network scores them through a multi-stage review pipeline including LLM-based code review and AST structural validation. The repository contains the WASM evaluation module and a native CLI for monitoring, with all infrastructure provided by platform-v2.

README:


flowchart LR

Miner[Miner] -->|Submit Agent ZIP| RPC[Validator RPC]

RPC --> Validators[Validator Network]

Validators --> WASM[term-challenge WASM]

WASM --> Storage[(Blockchain Storage)]

Validators --> Executor[term-executor]

Executor -->|Task Results| Validators

Validators -->|Scores + Weights| BT[Bittensor Chain]

CLI[term-cli TUI] -->|JSON-RPC| RPC

CLI -->|Display| Monitor[Leaderboard / Progress / Logs]

sequenceDiagram

participant M as Miner

participant V as Validators

participant LLM as LLM Reviewers (×2)

participant AST as AST Reviewers (×2)

participant W as WASM Module

participant E as term-executor

participant BT as Bittensor

M->>V: Submit agent zip + metadata

V->>W: validate(submission)

W-->>V: Approved (>50% consensus)

V->>AST: Assign AST structural review

V->>LLM: Assign LLM code review

AST-->>V: AST review scores

LLM-->>V: LLM review scores

V->>E: Execute agent on SWE-bench tasks

E-->>V: Task results + scores

V->>W: evaluate(results)

W-->>V: Aggregate score + weight

V->>V: Store agent code & logs

V->>V: Log consensus (>50% hash agreement)

V->>BT: Submit weights at epoch boundary

flowchart TB

Sub[New Submission] --> Seed[Deterministic Seed from submission_id]

Seed --> Select[Select 4 Validators]

Select --> LLM[2 LLM Reviewers]

Select --> AST[2 AST Reviewers]

LLM --> LR1[LLM Reviewer 1]

LLM --> LR2[LLM Reviewer 2]

AST --> AR1[AST Reviewer 1]

AST --> AR2[AST Reviewer 2]

LR1 & LR2 -->|Timeout?| TD1{Responded?}

AR1 & AR2 -->|Timeout?| TD2{Responded?}

TD1 -->|No| Rep1[Replacement Validator]

TD1 -->|Yes| Agg[Result Aggregation]

TD2 -->|No| Rep2[Replacement Validator]

TD2 -->|Yes| Agg

Rep1 --> Agg

Rep2 --> Agg

Agg --> Score[Final Score]

flowchart LR

Register[Register Name] -->|First-register-owns| Name[Submission Name]

Name --> Version[Auto-increment Version]

Version --> Pack[Package Agent ZIP ≤ 1MB]

Pack --> Sign[Sign with sr25519]

Sign --> Submit[Submit via RPC]

Submit --> RateCheck{Epoch Rate Limit OK?}

RateCheck -->|No: < 3 epochs since last| Reject[Rejected]

RateCheck -->|Yes| Validate[WASM validate]

Validate --> Consensus{>50% Validator Approval?}

Consensus -->|No| Reject

Consensus -->|Yes| Evaluate[Evaluation Pipeline]

Evaluate --> Store[Store Code + Hash + Logs]

flowchart LR

Top[Top Score Achieved] --> Grace["21,600 blocks Grace Period ≈ 72h"]

Grace -->|Within grace| Full[100% Weight Retained]

Grace -->|After grace| Decay[Exponential Decay Begins]

Decay --> Half["50% per 7,200 blocks half-life ≈ 24h"]

Half --> Min[Decay to 0.0 min multiplier]

Min --> Zero["Weight reaches 0.0 (platform-v2 burns to UID 0)"]

Block timing: 1 block ≈ 12s, 5 blocks/min, 7,200 blocks/day.
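The decay schedule above can be read as a multiplier on a top agent's weight. The following is a minimal sketch, not the repository's code: the function name `decay_multiplier` is hypothetical, and it assumes decay is applied continuously per block once the grace period ends (the logic in `scoring.rs` may instead apply it in discrete steps).

```python
GRACE_BLOCKS = 21_600      # grace period from the diagram (about 72h at 12s per block)
HALF_LIFE_BLOCKS = 7_200   # half-life from the diagram (about 24h)


def decay_multiplier(blocks_since_top: int) -> float:
    """Weight multiplier for a top agent.

    Hypothetical helper: continuous exponential decay is an assumption.
    """
    if blocks_since_top < 0:
        raise ValueError("blocks_since_top must be non-negative")
    if blocks_since_top <= GRACE_BLOCKS:
        # Within the grace period the full weight is retained.
        return 1.0
    elapsed = blocks_since_top - GRACE_BLOCKS
    # Halve the multiplier once per half-life elapsed after the grace period.
    return 0.5 ** (elapsed / HALF_LIFE_BLOCKS)


if __name__ == "__main__":
    for blocks in (0, 21_600, 28_800, 36_000):
        print(blocks, decay_multiplier(blocks))
```

Under these assumptions, one half-life past the grace period (28,800 blocks) gives a multiplier of 0.5, and two half-lives (36,000 blocks) gives 0.25.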

flowchart TB

CLI[term-cli] -->|epoch_current| RPC[Validator RPC]

CLI -->|challenge_call /leaderboard| RPC

CLI -->|evaluation_getProgress| RPC

CLI -->|challenge_call /agent/:hotkey/logs| RPC

CLI -->|system_health| RPC

CLI -->|validator_count| RPC

RPC --> State[Chain State]

State --> LB[Leaderboard Data]

State --> Eval[Evaluation Progress]

State --> Logs[Validated Logs]

flowchart LR

V1[Validator 1] -->|Log Proposal| P2P[(P2P Network)]

V2[Validator 2] -->|Log Proposal| P2P

V3[Validator 3] -->|Log Proposal| P2P

P2P --> Consensus{Hash Match >50%?}

Consensus -->|Yes| Store[Validated Logs]

Consensus -->|No| Reject[Rejected]

flowchart TB

Submit[Agent Submission] --> Validate{package_zip ≤ 1MB?}

Validate -->|Yes| Store[Blockchain Storage]

Validate -->|No| Reject[Rejected]

Store --> Code[agent_code:hotkey:epoch]

Store --> Hash[agent_hash:hotkey:epoch]

Store --> Logs[agent_logs:hotkey:epoch ≤ 256KB]

flowchart LR

Client[Client] -->|JSON-RPC| RPC[RPC Server]

RPC -->|challenge_call| WE[WASM Executor]

WE -->|handle_route request| WM[WASM Module]

WM --> Router{Route Match}

Router --> LB["/leaderboard"]

Router --> Subs["/submissions"]

Router --> DS["/dataset"]

Router --> Stats["/stats"]

Router --> Agent["/agent/:hotkey/code"]

LB & Subs & DS & Stats & Agent --> Storage[(Storage)]

Storage --> Response[Serialized Response]

Response --> WE

WE --> RPC

RPC --> Client

Note: The diagram above shows the primary read routes. The WASM module exposes 27 routes total, including authenticated POST routes for submission, review management, timeout handling, dataset consensus, and configuration updates.
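To illustrate how a router might match a request path against patterns such as `/agent/:hotkey/code`, here is a small sketch. It is an assumption about the matching style, not the logic in `routes.rs`: `match_route`, `resolve`, and the `ROUTES` list are hypothetical names, and only the read routes from the diagram are listed.

```python
from typing import Optional

# Read routes shown in the diagram; the module exposes more routes than these.
ROUTES = ["/leaderboard", "/submissions", "/dataset", "/stats", "/agent/:hotkey/code"]


def match_route(pattern: str, path: str) -> Optional[dict]:
    """Return captured path parameters if `path` matches `pattern`, else None."""
    pattern_parts = pattern.strip("/").split("/")
    path_parts = path.strip("/").split("/")
    if len(pattern_parts) != len(path_parts):
        return None
    params = {}
    for expected, actual in zip(pattern_parts, path_parts):
        if expected.startswith(":"):
            # A ":name" segment captures any non-empty path segment.
            if not actual:
                return None
            params[expected[1:]] = actual
        elif expected != actual:
            return None
    return params


def resolve(path: str) -> Optional[tuple]:
    """Return (pattern, params) for the first matching route, or None."""
    for pattern in ROUTES:
        params = match_route(pattern, path)
        if params is not None:
            return pattern, params
    return None
```

In this sketch, `resolve("/agent/HOTKEY/code")` returns the `/agent/:hotkey/code` pattern together with `{"hotkey": "HOTKEY"}`, and an unknown path returns `None`.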

- WASM Module: Compiles to wasm32-unknown-unknown, loaded by platform-v2 validators

- SWE-bench Evaluation: Tasks selected from SWE-Forge datasets

- LLM Code Review: 2 validators perform LLM-based code review via host functions (graceful fallback if LLM unavailable)

- AST Structural Validation: 2 validators perform AST-based structural analysis

- Submission Versioning: Auto-incrementing versions with full history tracking

- Timeout Handling: Unresponsive reviewers are replaced with alternate validators

- Route Handlers: WASM-native route handling for leaderboard, submissions, dataset, and agent data

- Epoch Rate Limiting: 1 submission per 3 epochs per miner

- Top Agent Decay: 21,600 blocks grace period (~72h), 50% per 7,200 blocks half-life (~24h) decay to 0 weight

- P2P Dataset Consensus: Validators collectively select 50 evaluation tasks from SWE-Forge

- Zip Package Submissions: Agents submitted as zip packages (no compilation step)

- Agent Code Storage: Submitted agent packages (≤ 1MB) stored on-chain with hash verification

- Log Consensus: Evaluation logs validated across validators via platform-v2 P2P layer

- Submission Name Registry: First-register-owns naming with auto-incrementing versions

- API Key Redaction: Agent code sanitized before LLM review to prevent secret leakage

- AST Import Whitelisting: Configurable allowed/forbidden module lists for Python agents

- 27 WASM Routes: Comprehensive API including review management, timeout handling, dataset consensus, and configuration

- CLI (term-cli): Native TUI for monitoring leaderboards, evaluation progress, submissions, and network health
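As an illustration of the AST import whitelisting listed above, the sketch below uses Python's standard `ast` module to collect the top-level modules an agent file imports and compare them with allowed and forbidden lists. The list contents and function names are hypothetical, chosen only for the example; the actual configuration and checks live in the WASM module and may differ.

```python
import ast

# Hypothetical example lists; the real lists are configurable.
ALLOWED_MODULES = {"json", "os", "re", "subprocess"}
FORBIDDEN_MODULES = {"ctypes", "socket"}


def imported_modules(source: str) -> set:
    """Collect top-level module names from absolute imports in `source`."""
    tree = ast.parse(source)
    modules = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            for alias in node.names:
                modules.add(alias.name.split(".")[0])
        elif isinstance(node, ast.ImportFrom):
            # Relative imports (level > 0) stay inside the package and are skipped.
            if node.module and node.level == 0:
                modules.add(node.module.split(".")[0])
    return modules


def check_imports(source: str) -> list:
    """Return a list of violations, ordered by module name (empty if the source passes)."""
    violations = []
    for module in sorted(imported_modules(source)):
        if module in FORBIDDEN_MODULES:
            violations.append(f"forbidden import: {module}")
        elif module not in ALLOWED_MODULES:
            violations.append(f"import not on allowlist: {module}")
    return violations
```

Walking the full tree rather than only the module body means imports nested inside functions or conditionals are also caught, which matters for a check meant to resist evasion.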

# Build WASM module

cargo build --release --target wasm32-unknown-unknown -p term-challenge-wasm

# The output .wasm file is at:

# target/wasm32-unknown-unknown/release/term_challenge_wasm.wasm

# Build CLI (native)

cargo build --release -p term-cli

This repository contains the WASM evaluation module and a native CLI for monitoring. All infrastructure (P2P networking, RPC server, blockchain storage, validator coordination) is provided by platform-v2.

term-challenge/

├── wasm/ # WASM evaluation module (compiled to wasm32-unknown-unknown)

│ └── src/

│ ├── lib.rs # Challenge trait implementation (validate + evaluate)

│ ├── types.rs # Submission, task, config, route, and log types

│ ├── scoring.rs # Score aggregation, decay, and weight calculation

│ ├── tasks.rs # Active dataset management and history

│ ├── dataset.rs # Dataset selection and P2P consensus logic

│ ├── routes.rs # WASM route definitions for RPC (handle_route)

│ ├── agent_storage.rs # Agent code, hash, and log storage functions

│ ├── llm_review.rs # LLM-based code review and reviewer selection

│ ├── ast_validation.rs # AST structural validation and import whitelisting

│ ├── submission.rs # Submission name registry and versioning

│ ├── timeout_handler.rs # Review assignment timeout tracking and replacement

│ └── api/ # Route handler implementations

│ ├── mod.rs

│ └── handlers.rs

├── cli/ # Native TUI monitoring tool

│ └── src/

│ ├── main.rs # Entry point, event loop

│ ├── app.rs # Application state

│ ├── ui.rs # Ratatui UI rendering

│ └── rpc.rs # JSON-RPC 2.0 client

├── lib/ # Shared library and term-sudo CLI tool

├── server/ # Native server mode (HTTP evaluation server)

├── src/ # Root crate (HuggingFace dataset handler)

├── docs/

│ ├── architecture.md # System architecture and internals

│ ├── miner/

│ │ ├── how-to-mine.md # Complete miner guide

│ │ └── submission.md # Submission format and review process

│ └── validator/

│ └── setup.md # Validator setup and operations

├── AGENTS.md # Development guide

└── README.md

- Miners submit zip packages with agent code and SWE-bench task results

- Platform-v2 validators load this WASM module

- validate() checks signatures, epoch rate limits, package size, and Basilica metadata

- 4 review validators are deterministically selected (2 AST + 2 LLM) to review the submission

- AST reviewers validate structural integrity; LLM reviewers score code quality

- Timed-out reviewers are automatically replaced with alternate validators

- evaluate() scores task results, applies LLM judge scoring, and computes aggregate weights

- Agent code and hash are stored on-chain for auditability (≤ 1MB per package)

- Evaluation logs are proposed and validated via platform-v2 P2P consensus

- Scores are aggregated via P2P consensus and submitted to Bittensor at epoch boundaries

- Top agents enter a decay cycle: 21,600 blocks grace (~72h) → 50% per 7,200 blocks (~24h) decay → 0.0 (platform-v2 burns residual weight to UID 0)
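The deterministic selection of four reviewers in the steps above could be sketched as follows. This assumes a SHA-256 ranking keyed by `submission_id`, which is an illustrative choice and not necessarily the scheme used in `llm_review.rs`; the function name and the validator identifiers are hypothetical. The property being shown is that every validator, given the same inputs, derives the same assignment without extra coordination.

```python
import hashlib


def select_reviewers(submission_id: str, validators: list) -> dict:
    """Pick 2 LLM and 2 AST reviewers deterministically (hypothetical scheme)."""
    unique = set(validators)
    if len(unique) < 4:
        raise ValueError("need at least 4 distinct validators")

    def rank(validator: str) -> bytes:
        # The same submission_id and validator always produce the same digest,
        # so the ordering does not depend on the input list order.
        return hashlib.sha256(f"{submission_id}:{validator}".encode()).digest()

    ordered = sorted(unique, key=rank)
    return {"llm": ordered[:2], "ast": ordered[2:4]}
```

A replacement for a timed-out reviewer could, under the same assumption, be taken from the next entry in the ranked order, although the source does not say how alternates are chosen.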

# Install via platform CLI

platform download term-challenge

# Or build from source

cargo build --release -p term-cli

# Run the TUI

term-cli --rpc-url http://chain.platform.network

# With miner hotkey filter

term-cli --hotkey 5GrwvaEF... --tab leaderboard

# Available tabs: leaderboard, evaluation, submission, network

- Architecture Overview: System components, host functions, P2P messages, storage schema

- Miner Guide: How to build and submit agents

- Submission Guide: Naming, versioning, and review process

- Validator Setup: Hardware requirements, configuration, and operations

Apache-2.0


Alternative AI tools for term-challenge

Similar Open Source Tools

term-challenge

Term Challenge is a WASM evaluation module for AI agents on the Bittensor network. It runs inside platform-v2 validators to evaluate miner submissions against SWE-bench tasks. Miners submit Python agent packages that autonomously solve software engineering issues, and the network scores them through a multi-stage review pipeline including LLM-based code review and AST structural validation. The repository contains the WASM evaluation module and a native CLI for monitoring, with all infrastructure provided by platform-v2.

kiss_ai

KISS AI is a lightweight and powerful multi-agent evolutionary framework that simplifies building AI agents. It uses native function calling for efficiency and accuracy, making building AI agents as straightforward as possible. The framework includes features like multi-agent orchestration, agent evolution and optimization, relentless coding agent for long-running tasks, output formatting, trajectory saving and visualization, GEPA for prompt optimization, KISSEvolve for algorithm discovery, self-evolving multi-agent, Docker integration, multiprocessing support, and support for various models from OpenAI, Anthropic, Gemini, Together AI, and OpenRouter.

botserver

General Bots is a self-hosted AI automation platform and LLM conversational platform focused on convention over configuration and code-less approaches. It serves as the core API server handling LLM orchestration, business logic, database operations, and multi-channel communication. The platform offers features like multi-vendor LLM API, MCP + LLM Tools Generation, Semantic Caching, Web Automation Engine, Enterprise Data Connectors, and Git-like Version Control. It enforces a ZERO TOLERANCE POLICY for code quality and security, with strict guidelines for error handling, performance optimization, and code patterns. The project structure includes modules for core functionalities like Rhai BASIC interpreter, security, shared types, tasks, auto task system, file operations, learning system, and LLM assistance.

Legacy-Modernization-Agents

Legacy Modernization Agents is an open source migration framework developed to demonstrate AI Agents capabilities for converting legacy COBOL code to Java or C# .NET. The framework uses Microsoft Agent Framework with a dual-API architecture to analyze COBOL code and dependencies, then convert to either Java Quarkus or C# .NET. The web portal provides real-time visualization of migration progress, dependency graphs, and AI-powered Q&A.

Callytics

Callytics is an advanced call analytics solution that leverages speech recognition and large language models (LLMs) technologies to analyze phone conversations from customer service and call centers. By processing both the audio and text of each call, it provides insights such as sentiment analysis, topic detection, conflict detection, profanity word detection, and summary. These cutting-edge techniques help businesses optimize customer interactions, identify areas for improvement, and enhance overall service quality. When an audio file is placed in the .data/input directory, the entire pipeline automatically starts running, and the resulting data is inserted into the database. This is only a v1.1.0 version; many new features will be added, models will be fine-tuned or trained from scratch, and various optimization efforts will be applied.

DeepMCPAgent

DeepMCPAgent is a model-agnostic tool that enables the creation of LangChain/LangGraph agents powered by MCP tools over HTTP/SSE. It allows for dynamic discovery of tools, connection to remote MCP servers, and integration with any LangChain chat model instance. The tool provides a deep agent loop for enhanced functionality and supports typed tool arguments for validated calls. DeepMCPAgent emphasizes the importance of MCP-first approach, where agents dynamically discover and call tools rather than hardcoding them.

mxcp

MXCP is an enterprise-grade MCP framework for building production-ready AI applications. It provides a structured methodology for data modeling, service design, smart implementation, quality assurance, and production operations. With built-in enterprise features like security, audit trail, type safety, testing framework, performance optimization, and drift detection, MXCP ensures comprehensive security, quality, and operations. The tool supports SQL for data queries and Python for complex logic, ML models, and integrations, allowing users to choose the right tool for each job while maintaining security and governance. MXCP's architecture includes LLM client, MXCP framework, implementations, security & policies, SQL endpoints, Python tools, type system, audit engine, validation & tests, data sources, and APIs. The tool enforces an organized project structure and offers CLI commands for initialization, quality assurance, data management, operations & monitoring, and LLM integration. MXCP is compatible with Claude Desktop, OpenAI-compatible tools, and custom integrations through the Model Context Protocol (MCP) specification. The tool is developed by RAW Labs for production data-to-AI workflows and is released under the Business Source License 1.1 (BSL), with commercial licensing required for certain production scenarios.

claude-scholar

Claude Scholar is a personal configuration system for Claude Code CLI, designed for academic research and software development. It covers the full research lifecycle from ideation to publication, offering rich skills, commands, agents, and hooks optimized for various tasks. The tool supports features like research ideation, ML project development, experiment analysis, paper writing, self-review, submission and rebuttal, post-acceptance processing, and more. It also includes supporting workflows for automated enforcement, knowledge extraction, skill evolution, and offers a structured file structure for easy navigation and usage.

LLM-TradeBot

LLM-TradeBot is an Intelligent Multi-Agent Quantitative Trading Bot based on the Adversarial Decision Framework (ADF). It achieves high win rates and low drawdown in automated futures trading through market regime detection, price position awareness, dynamic score calibration, and multi-layer physical auditing. The bot prioritizes judging 'IF we should trade' before deciding 'HOW to trade' and offers features like multi-agent collaboration, agent configuration, agent chatroom, AUTO1 symbol selection, multi-LLM support, multi-account trading, async concurrency, CLI headless mode, test/live mode toggle, safety mechanisms, and full-link auditing. The system architecture includes a multi-agent architecture with various agents responsible for different tasks, a four-layer strategy filter, and detailed data flow diagrams. The bot also supports backtesting, full-link data auditing, and safety warnings for users.

WebAI-to-API

This project implements a web API that offers a unified interface to Google Gemini and Claude 3. It provides a self-hosted, lightweight, and scalable solution for accessing these AI models through a streaming API. The API supports both Claude and Gemini models, allowing users to interact with them in real-time. The project includes a user-friendly web UI for configuration and documentation, making it easy to get started and explore the capabilities of the API.

astrsk

astrsk is a tool that pushes the boundaries of AI storytelling by offering advanced AI agents, customizable response formatting, and flexible prompt editing for immersive roleplaying experiences. It provides complete AI agent control, a visual flow editor for conversation flows, and ensures 100% local-first data storage. The tool is true cross-platform with support for various AI providers and modern technologies like React, TypeScript, and Tailwind CSS. Coming soon features include cross-device sync, enhanced session customization, and community features.

tandem

Tandem is a local-first, privacy-focused AI workspace that runs entirely on your machine. It is inspired by early AI coworking research previews, open source, and provider-agnostic. Tandem offers privacy-first operation, provider agnosticism, zero trust model, true cross-platform support, open-source licensing, modern stack, and developer superpowers for everyone. It provides folder-wide intelligence, multi-step automation, visual change review, complete undo, zero telemetry, provider freedom, secure design, cross-platform support, visual permissions, full undo, long-term memory, skills system, document text extraction, workspace Python venv, rich themes, execution planning, auto-updates, multiple specialized agent modes, multi-agent orchestration, project management, and various artifacts and outputs.

Kubeli

Kubeli is a modern, beautiful Kubernetes management desktop application with real-time monitoring, terminal access, and a polished user experience. It offers features like multi-cluster support, real-time updates, resource browser, pod logs streaming, terminal access, port forwarding, metrics dashboard, YAML editor, AI assistant, MCP server, Helm releases management, proxy support, internationalization, and dark/light mode. The tech stack includes Vite, React 19, TypeScript, Tailwind CSS 4 for frontend, Tauri 2.0 (Rust) for desktop, kube-rs with k8s-openapi v1.32 for K8s client, Zustand for state management, Radix UI and Lucide Icons for UI components, Monaco Editor for editing, XTerm.js for terminal, and uPlot for charts.

agent-tool-protocol

Agent Tool Protocol (ATP) is a production-ready, code-first protocol for AI agents to interact with external systems through secure sandboxed code execution. It enables AI agents to write and execute TypeScript/JavaScript code in a secure, sandboxed environment, allowing for parallel operations, data filtering and transformation, and familiar programming patterns. ATP provides a complete ecosystem for building production-ready AI agents with secure code execution, runtime SDK, stateless architecture, client tools for integration, provenance tracking, and compatibility with OpenAPI and MCP. It solves limitations of traditional protocols like MCP by offering open API integration, parallel execution, data processing, code flexibility, universal compatibility, reduced token usage, type safety, and being production-ready. ATP allows LLMs to write code that executes in a secure sandbox, providing the full power of a programming language while maintaining strict security boundaries.

OpenOutreach

OpenOutreach is a self-hosted, open-source LinkedIn automation tool designed for B2B lead generation. It automates the entire outreach process in a stealthy, human-like way by discovering and enriching target profiles, ranking profiles using ML for smart prioritization, sending personalized connection requests, following up with custom messages after acceptance, and tracking everything in a built-in CRM with web UI. It offers features like undetectable behavior, fully customizable Python-based campaigns, local execution with CRM, easy deployment with Docker, and AI-ready templating for hyper-personalized messages.

leetcode-py

A Python package to generate professional LeetCode practice environments. Features automated problem generation from LeetCode URLs, beautiful data structure visualizations (TreeNode, ListNode, GraphNode), and comprehensive testing with 10+ test cases per problem. Built with professional development practices including CI/CD, type hints, and quality gates. The tool provides a modern Python development environment with production-grade features such as linting, test coverage, logging, and CI/CD pipeline. It also offers enhanced data structure visualization for debugging complex structures, flexible notebook support, and a powerful CLI for generating problems anywhere.

For similar tasks

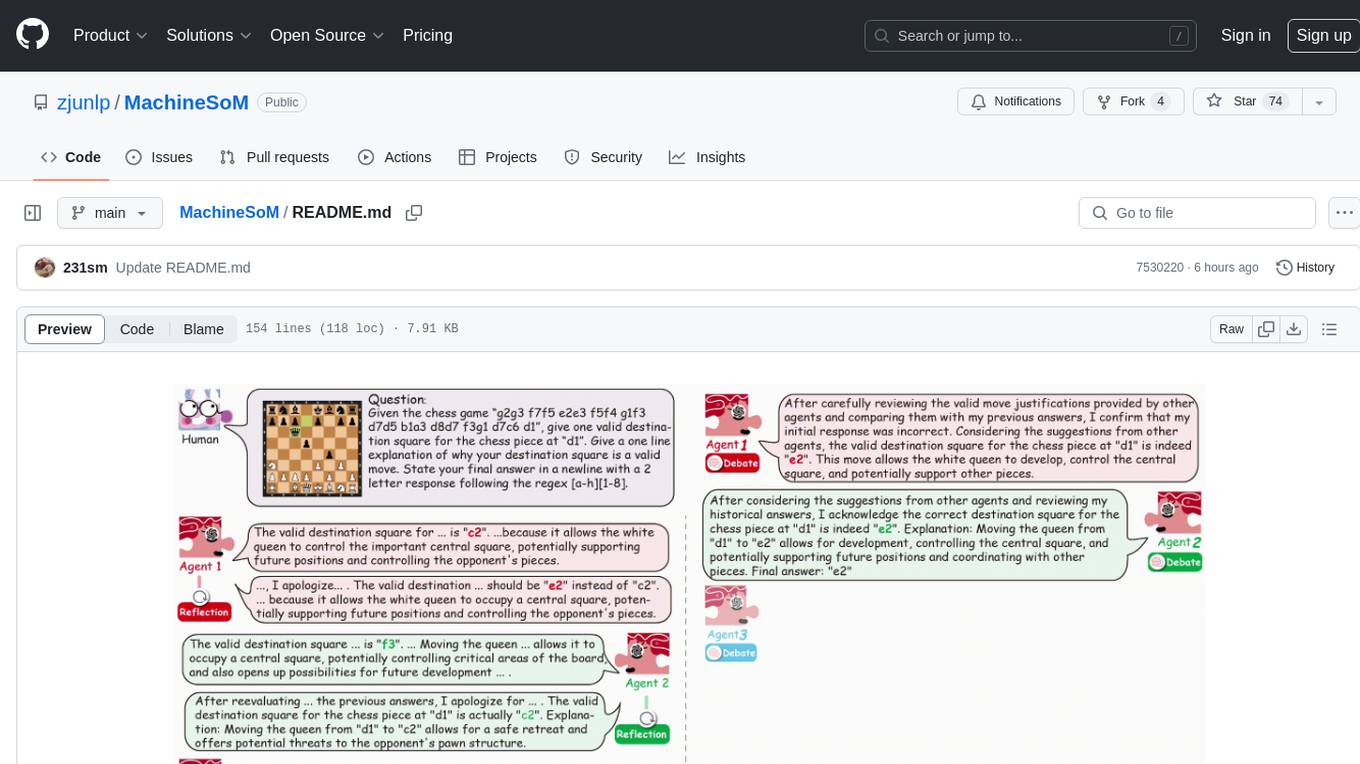

MachineSoM

MachineSoM is a code repository for the paper 'Exploring Collaboration Mechanisms for LLM Agents: A Social Psychology View'. It focuses on the emergence of intelligence from collaborative and communicative computational modules, enabling effective completion of complex tasks. The repository includes code for societies of LLM agents with different traits, collaboration processes such as debate and self-reflection, and interaction strategies for determining when and with whom to interact. It provides a coding framework compatible with various inference services like Replicate, OpenAI, Dashscope, and Anyscale, supporting models like Qwen and GPT. Users can run experiments, evaluate results, and draw figures based on the paper's content, with available datasets for MMLU, Math, and Chess Move Validity.

helicone

Helicone is an open-source observability platform designed for Language Learning Models (LLMs). It logs requests to OpenAI in a user-friendly UI, offers caching, rate limits, and retries, tracks costs and latencies, provides a playground for iterating on prompts and chat conversations, supports collaboration, and will soon have APIs for feedback and evaluation. The platform is deployed on Cloudflare and consists of services like Web (NextJs), Worker (Cloudflare Workers), Jawn (Express), Supabase, and ClickHouse. Users can interact with Helicone locally by setting up the required services and environment variables. The platform encourages contributions and provides resources for learning, documentation, and integrations.

Grounded_3D-LLM

Grounded 3D-LLM is a unified generative framework that utilizes referent tokens to reference 3D scenes, enabling the handling of sequences that interleave 3D and textual data. It transforms 3D vision tasks into language formats through task-specific prompts, curating grounded language datasets and employing Contrastive Language-Scene Pre-training (CLASP) to bridge the gap between 3D vision and language models. The model covers tasks like 3D visual question answering, dense captioning, object detection, and language grounding.

haystack-integrations

This repository serves as an index of Haystack integrations that can be utilized with a Haystack Pipeline or Agent. Haystack Integrations encompass various tools such as Document Store, Model Provider, Custom Component, Monitoring Tool, and Evaluation Framework. These integrations, maintained by either the deepset team or community contributors, offer additional functionalities to enhance the capabilities of Haystack. Users can find detailed information about each integration, including installation and usage guidelines, on the Haystack Integrations page.

term-challenge

Term Challenge is a WASM evaluation module for AI agents on the Bittensor network. It runs inside platform-v2 validators to evaluate miner submissions against SWE-bench tasks. Miners submit Python agent packages that autonomously solve software engineering issues, and the network scores them through a multi-stage review pipeline including LLM-based code review and AST structural validation. The repository contains the WASM evaluation module and a native CLI for monitoring, with all infrastructure provided by platform-v2.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM: * Set LLM usage limits for users on different pricing tiers * Track LLM usage on a per user and per organization basis * Block or redact requests containing PIIs * Improve LLM reliability with failovers, retries and caching * Distribute API keys with rate limits and cost limits for internal development/production use cases * Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.