MaskLLM

[NeurIPS 24 Spotlight] MaskLLM: Learnable Semi-structured Sparsity for Large Language Models

MaskLLM is a learnable pruning method that establishes Semi-structured Sparsity in Large Language Models (LLMs) to reduce computational overhead during inference. It is scalable and benefits from larger training datasets. The tool provides examples for running MaskLLM with Megatron-LM, preparing LLaMA checkpoints, pre-tokenizing C4 data for Megatron, generating prior masks, training MaskLLM, and evaluating the model. It also includes instructions for exporting sparse models to Huggingface.

Gongfan Fang, Hongxu Yin, Saurav Muralidharan, Greg Heinrich

Jeff Pool, Jan Kautz, Pavlo Molchanov, Xinchao Wang

NVIDIA Research, National University of Singapore

📄 [ArXiv] | 🎯 [Project Page] | 📎 [License] | 🤗 [Hugging Face] | 👓 [MaskLLM-4Vision]

This work introduces MaskLLM, a learnable pruning method that establishes Semi-structured (or "N:M") Sparsity in LLMs, aimed at reducing computational overhead during inference. The proposed method is scalable and stands to benefit from larger training datasets.
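To make the "N:M" pattern concrete, here is a minimal NumPy sketch of a 2:4 mask: in every group of 4 consecutive weights, at most 2 are kept. It picks the kept weights by magnitude, which is only a simple stand-in and not MaskLLM's learnable selection; the function name `magnitude_nm_mask` is hypothetical.

```python
import numpy as np

def magnitude_nm_mask(weight: np.ndarray, n: int = 2, m: int = 4) -> np.ndarray:
    """Keep the n largest-magnitude entries in every group of m along the last axis."""
    rows, cols = weight.shape
    if cols % m != 0:
        raise ValueError("the last dimension must be divisible by m")
    groups = np.abs(weight).reshape(rows, cols // m, m)
    # Indices of the n largest magnitudes inside each group of m.
    keep = np.argsort(groups, axis=-1)[..., m - n:]
    mask = np.zeros_like(groups, dtype=bool)
    np.put_along_axis(mask, keep, True, axis=-1)
    return mask.reshape(rows, cols)

w = np.array([[0.1, -0.9, 0.3, 0.05, 2.0, -0.2, 0.0, 1.5]])
mask = magnitude_nm_mask(w)
print(mask.astype(int))  # → [[0 1 1 0 1 0 0 1]]
print(w * mask)          # pruned weights: two non-zeros per group of four
```

Each group of four keeps exactly two entries, so the overall sparsity is 50%.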

We provide pre-computed masks for Hugging Face models such as LLaMA-2 7B and LLaMA-3 8B. This path has minimal requirements: it does not involve Docker, Megatron, or data preprocessing.

pip install transformers accelerate datasets SentencePiece

The following masks were trained and provided by @VainF. We use huggingface_hub to automatically download those masks and apply them to official LLMs for evaluation. Those mask files were compressed using numpy.savez_compressed. More results for baselines (SparseGPT, Wanda) can be found in the appendix.

| Model | Pattern | Training Data | Training/Eval SeqLen | PPL (Dense) | PPL (SparseGPT) | PPL (MaskLLM) | Link |
|---|---|---|---|---|---|---|---|
| LLaMA-2 7B | 2:4 | C4 (2B Tokens) | 4096 | 5.12 | 10.42 | 6.78 | HuggingFace |
| LLaMA-3 8B | 2:4 | C4 (2B Tokens) | 4096 | 5.75 | 17.64 | 8.49 | HuggingFace |
| LLaMA-3.1 8B | 2:4 | C4 (2B Tokens) | 4096 | 5.89 | 18.65 | 8.58 | HuggingFace |
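As a rough illustration of how masks stored with numpy.savez_compressed can be applied to weights, the sketch below round-trips a tiny mask through a compressed archive and multiplies it into a matching weight. The parameter name, shapes, and the one-array-per-parameter layout are assumptions for illustration; the layout of the released mask files may differ. The download step is left out.

```python
import io
import numpy as np

# Hypothetical parameter name and tiny shapes, for illustration only.
weights = {"layers.0.mlp.up_proj.weight": np.arange(1, 9, dtype=np.float32).reshape(2, 4)}
masks = {"layers.0.mlp.up_proj.weight": np.array([[1, 0, 1, 0], [0, 1, 1, 0]], dtype=bool)}

# Save the masks in compressed form; an in-memory buffer stands in for a file on disk.
buffer = io.BytesIO()
np.savez_compressed(buffer, **masks)
buffer.seek(0)

# Load the archive back into a plain dict of arrays.
with np.load(buffer) as archive:
    loaded = {name: archive[name] for name in archive.files}

# Apply each mask to the weight with the same name (element-wise multiply).
pruned = {name: w * loaded[name] for name, w in weights.items() if name in loaded}
print(pruned["layers.0.mlp.up_proj.weight"])
```

Boolean masks compress well because they carry one bit of information per weight.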

# LLaMA-2 7B, Wikitext-2 PPL=6.78

python eval_llama_ppl.py --model meta-llama/Llama-2-7b-hf --mask Vinnnf/LLaMA-2-7B-MaskLLM-C4

# LLaMA-3 8B, Wikitext-2 PPL=8.49

python eval_llama_ppl.py --model meta-llama/Meta-Llama-3-8B --mask Vinnnf/LLaMA-3-8B-MaskLLM-C4

# LLaMA-3.1 8B, Wikitext-2 PPL=8.58

python eval_llama_ppl.py --model meta-llama/Meta-Llama-3.1-8B --mask Vinnnf/LLaMA-3.1-8B-MaskLLM-C4

Output (LLaMA-3.1 8B):

torch 2.2.0a0+81ea7a4

transformers 4.47.0

accelerate 1.2.0

# of gpus: 8

loading llm model meta-llama/Meta-Llama-3.1-8B

Loading checkpoint shards: 100%|█████████| 4/4 [00:06<00:00, 1.74s/it]

mask_compressed.npz: 100%|█████████| 591M/591M [00:51<00:00, 11.6MB/s]

...

model.layers.31.mlp.up_proj.weight - sparsity 0.5000

model.layers.31.mlp.down_proj.weight - sparsity 0.5000

model.layers.31.input_layernorm.weight - sparsity 0.0000

model.layers.31.post_attention_layernorm.weight - sparsity 0.0000

model.norm.weight - sparsity 0.0000

lm_head.weight - sparsity 0.0000

use device cuda:0

evaluating on wikitext2

nsamples 70

sample 0

sample 50

wikitext perplexity 8.578034400939941

More masks learned on public datasets will be released in the future.
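The per-parameter sparsity lines in the log above can be reproduced in spirit with a few lines of NumPy. This is a minimal sketch with hypothetical parameter names and toy tensors; it also checks that a 2-D weight satisfies the 2:4 constraint, which the evaluation script may or may not do.

```python
import numpy as np

def sparsity(tensor: np.ndarray) -> float:
    """Fraction of entries that are exactly zero."""
    return float((tensor == 0).mean())

def satisfies_nm(tensor: np.ndarray, n: int = 2, m: int = 4) -> bool:
    """True if every group of m consecutive entries in a row has at most n non-zeros."""
    if tensor.ndim != 2 or tensor.shape[1] % m != 0:
        return False
    nonzeros = (tensor.reshape(tensor.shape[0], -1, m) != 0).sum(axis=-1)
    return bool((nonzeros <= n).all())

# Toy stand-ins for a masked linear weight and an unmasked norm weight.
params = {
    "mlp.up_proj.weight": np.array([[0.5, 0.0, -1.2, 0.0], [0.0, 0.3, 0.0, 0.7]]),
    "input_layernorm.weight": np.ones(4),
}

for name, tensor in params.items():
    print(f"{name} - sparsity {sparsity(tensor):.4f}")
# → mlp.up_proj.weight - sparsity 0.5000
# → input_layernorm.weight - sparsity 0.0000
```

As in the log, only the linear weights are sparse; normalization weights stay dense.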

The following section provides an example of MaskLLM-LLaMA-2/3 on a single node with 8 GPUs. The LLaMA model will be sharded across 8 GPUs with tensor parallelism, taking ~40GB per GPU for end-to-end training.
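For readers new to tensor parallelism, the following NumPy sketch shows the basic idea on one linear layer: the weight is split across 8 "ranks", each rank computes a partial result, and the pieces are recombined. It simulates the ranks in a single process with toy shapes; Megatron-LM's actual implementation (communication, fused kernels, layer pairing) is considerably more involved.

```python
import numpy as np

rng = np.random.default_rng(0)
tp = 8                                   # tensor-parallel size, as in the text
x = rng.standard_normal((3, 16))         # (tokens, hidden)
w = rng.standard_normal((16, 32))        # (hidden, output)

# Column-parallel: each rank owns a slice of the output columns.
col_shards = np.split(w, tp, axis=1)
col_partials = [x @ shard for shard in col_shards]
y_col = np.concatenate(col_partials, axis=1)      # stands in for an all-gather

# Row-parallel: each rank owns a slice of the input rows and of the activations.
row_shards = np.split(w, tp, axis=0)
x_shards = np.split(x, tp, axis=1)
y_row = sum(xs @ ws for xs, ws in zip(x_shards, row_shards))  # stands in for an all-reduce

print(np.allclose(y_col, x @ w), np.allclose(y_row, x @ w))  # → True True
```

Each rank stores only 1/8 of the layer's weights, which is what keeps per-GPU memory manageable.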

Docker is required for Megatron-LM. Please install docker with sudo apt install docker.io and NVIDIA Container Toolkit following the official instructions. We use the docker image pytorch:24.01-py3 from NVIDIA NGC as the base image.

docker run --gpus all --ipc=host --ulimit memlock=-1 --ulimit stack=67108864 -v $HOME:$HOME -it --rm nvcr.io/nvidia/pytorch:24.01-py3

In the container, we need to download the LLaMA checkpoints and convert them to Megatron format.

Install basic dependencies.

pip install transformers accelerate datasets SentencePiece wandb tqdm ninja tensorboardx==2.6 pulp timm einops nltk

The following scripts download and save all HF checkpoints at ./assets/checkpoints.

python scripts/tools/download_llama2_7b_hf.py

python scripts/tools/download_llama2_13b_hf.py

python scripts/tools/download_llama3_8b_hf.py

python scripts/tools/download_llama3.1_8b_hf.py

assets
├── checkpoints
│   ├── llama2_13b_hf
│   ├── llama2_7b_hf
│   ├── llama3_8b_hf
│   └── llama3.1_8b_hf

Tips: If you would like to use the Huggingface cache, link the "~/.cache/huggingface/hub" to "assets/checkpoints":

ln -s $HOME/.cache/huggingface/hub assets/cache

Convert the downloaded HF checkpoint to Megatron format, with tp=8 for tensor parallelism.

bash scripts/tools/convert_llama2_7b_hf_to_megatron.sh

bash scripts/tools/convert_llama2_13b_hf_to_megatron.sh

bash scripts/tools/convert_llama3_8b_hf_to_megatron.sh

bash scripts/tools/convert_llama3.1_8b_hf_to_megatron.sh

assets/
├── checkpoints
│   ├── llama2_13b_hf
│   ├── llama2_13b_megatron_tp8 # <= Megatron format
│   ├── llama2_7b_hf
│   ├── llama2_7b_megatron_tp8
│   ├── llama3_8b_hf
│   ├── llama3_8b_megatron_tp8
│   ├── llama3.1_8b_hf
│   └── llama3.1_8b_megatron_tp8

Evaluate the dense model. The script arguments are the checkpoint path, the model size (7b/8b/13b), the tensor-parallel size (8), and the sparsity mode (dense or sparse).

bash scripts/ppl/evaluate_llama2_wikitext2.sh assets/checkpoints/llama2_7b_megatron_tp8 7b 8 dense

bash scripts/ppl/evaluate_llama2_wikitext2.sh assets/checkpoints/llama2_13b_megatron_tp8 13b 8 dense

bash scripts/ppl/evaluate_llama3_wikitext2.sh assets/checkpoints/llama3_8b_megatron_tp8 8b 8 dense

bash scripts/ppl/evaluate_llama3.1_wikitext2.sh assets/checkpoints/llama3.1_8b_megatron_tp8 8b 8 dense

# Outputs for LLaMA-2 7B:

validation results on WIKITEXT2 | avg loss: 1.6323E+00 | ppl: 5.1155E+00 | adjusted ppl: 5.1155E+00 | token ratio: 1.0 |

# Outputs for LLaMA-2 13B:

validation results on WIKITEXT2 | avg loss: 1.5202E+00 | ppl: 4.5730E+00 | adjusted ppl: 4.5730E+00 | token ratio: 1.0 |

# Outputs for LLaMA-3 8B:

validation results on WIKITEXT2 | avg loss: 1.7512E+00 | ppl: 5.7615E+00 | adjusted ppl: 5.7615E+00 | token ratio: 1.0 |

# Outputs for LLaMA-3.1 8B

validation results on WIKITEXT2 | avg loss: 1.7730E+00 | ppl: 5.8887E+00 | adjusted ppl: 5.8887E+00 | token ratio: 1.0 |

Our paper uses a blended internal dataset for training. For reproducibility, we provide an example of learning masks on a subset of the public allenai/c4 dataset. The corresponding results can be found in Appendix D of our paper. Please see docs/preprocess_c4.md for instructions.
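The dense evaluation logs above report both an average loss and a perplexity. As a quick sanity check, perplexity is the exponential of the average per-token loss, so the two columns can be cross-checked with the numbers copied from those logs. The small differences come from the loss being printed with only four decimals.

```python
import math

# (avg loss, ppl) pairs copied from the Megatron evaluation output above.
reported = {
    "LLaMA-2 7B": (1.6323, 5.1155),
    "LLaMA-2 13B": (1.5202, 4.5730),
    "LLaMA-3 8B": (1.7512, 5.7615),
    "LLaMA-3.1 8B": (1.7730, 5.8887),
}

for name, (avg_loss, ppl) in reported.items():
    recomputed = math.exp(avg_loss)  # ppl = exp(average negative log-likelihood)
    print(f"{name}: exp({avg_loss}) = {recomputed:.4f} (reported {ppl})")

max_gap = max(abs(math.exp(loss) - ppl) for loss, ppl in reported.values())
print(max_gap < 1e-3)  # → True
```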

We encourage starting training from a prior mask generated by SparseGPT, Wanda, or magnitude pruning. The following scripts prune a LLaMA-2 7B model with the 2:4 pattern. For SparseGPT, weight update is disabled by default; add the argument --update-weight if necessary. Similar scripts for LLaMA-2 13B, LLaMA-3 8B, and LLaMA-3.1 8B are available in scripts/oneshot.

# <= SparseGPT mask

bash scripts/oneshot/run_llama2_7b_prune_tp8.sh hessian # --update-weight

# <= Magnitude mask

bash scripts/oneshot/run_llama2_7b_prune_tp8.sh magnitude # --update-weight

# <= Wanda mask

bash scripts/oneshot/run_llama2_7b_prune_tp8.sh wanda # --update-weight

The pruned Llama model will contain additional .mask parameters in sparse linears, such as module.language_model.encoder.layers.31.mlp.dense_h_to_4h.mask.

output/
├── oneshot_pruning
│   ├── checkpoint
│   │   ├── llama2-7b-tp8.sparse.nmprune.sp0.5hessian.ex0
│   │   ├── llama2-7b-tp8.sparse.nmprune.sp0.5magnitude.ex0
│   │   └── llama2-7b-tp8.sparse.nmprune.sp0.5wanda.ex0
│   ├── llama2-7b-tp8.sparse.nmprune.sp0.5hessian.ex0.log
│   ├── llama2-7b-tp8.sparse.nmprune.sp0.5magnitude.ex0.log
│   └── llama2-7b-tp8.sparse.nmprune.sp0.5wanda.ex0.log
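To give a feel for how two of the one-shot priors above differ, here is a minimal sketch comparing a magnitude-based 2:4 mask with a Wanda-style one, where each weight's score is its magnitude multiplied by the L2 norm of the corresponding input activation. The helper name and the toy calibration activations are hypothetical, and this is not the code in scripts/oneshot. The SparseGPT (hessian) variant needs second-order statistics and is not sketched here.

```python
import numpy as np

def nm_mask_from_scores(scores: np.ndarray, n: int = 2, m: int = 4) -> np.ndarray:
    """Keep the n highest-scoring entries in every group of m along the input axis."""
    rows, cols = scores.shape
    groups = scores.reshape(rows, cols // m, m)
    keep = np.argsort(groups, axis=-1)[..., m - n:]
    mask = np.zeros_like(groups, dtype=bool)
    np.put_along_axis(mask, keep, True, axis=-1)
    return mask.reshape(rows, cols)

w = np.array([[1.0, 0.9, 0.2, 0.1]])       # (out_features, in_features)
x = np.array([[0.1, 0.1, 3.0, 2.0],
              [0.1, 0.1, 4.0, 2.0]])        # (tokens, in_features), toy calibration data

# Magnitude prior: score is |W|.
magnitude_mask = nm_mask_from_scores(np.abs(w))

# Wanda-style prior: score is |W| scaled by the per-input-feature activation norm.
act_norm = np.linalg.norm(x, axis=0)
wanda_mask = nm_mask_from_scores(np.abs(w) * act_norm)

print(magnitude_mask.astype(int))  # → [[1 1 0 0]]
print(wanda_mask.astype(int))      # → [[0 0 1 1]]
```

The two priors can disagree when small weights sit on inputs with large activations, which is why the choice of prior matters as a starting point.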

To evaluate the pruned model:

bash scripts/ppl/evaluate_llama2_wikitext2.sh output/oneshot_pruning/checkpoint/llama2-7b-tp8.sparse.nmprune.sp0.5hessian.ex0 7b 8 sparse

[Figures: Mask Sampling | Visualization]
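Mask sampling can be sketched as follows, assuming each group of 4 weights holds learnable logits over the 6 possible 2:4 patterns and that sampling is made differentiable with a Gumbel-softmax relaxation. This is a NumPy illustration with hypothetical names and random logits, not the training code in this repository. At inference only the highest-probability ("winner") pattern per group is kept.

```python
import itertools
import numpy as np

def candidate_masks(n: int = 2, m: int = 4) -> np.ndarray:
    """Enumerate all binary patterns with n ones among m positions (6 for 2:4)."""
    patterns = []
    for idx in itertools.combinations(range(m), n):
        row = np.zeros(m)
        row[list(idx)] = 1.0
        patterns.append(row)
    return np.stack(patterns)

def gumbel_softmax(logits: np.ndarray, tau: float, rng: np.random.Generator) -> np.ndarray:
    """Relaxed one-hot sample: softmax((logits + Gumbel noise) / tau)."""
    u = rng.uniform(low=1e-9, high=1.0, size=logits.shape)
    z = (logits - np.log(-np.log(u))) / tau
    z = z - z.max(axis=-1, keepdims=True)   # numerical stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

rng = np.random.default_rng(0)
cands = candidate_masks()                        # shape (6, 4)
logits = rng.standard_normal((3, len(cands)))    # toy logits for 3 weight groups

soft = gumbel_softmax(logits, tau=1.0, rng=rng)  # (3, 6), each row sums to 1
soft_mask = soft @ cands                         # (3, 4) relaxed masks used while training
winner = cands[logits.argmax(axis=-1)]           # (3, 4) hard 2:4 masks for inference

print(cands.shape, soft_mask.sum(axis=-1), winner.sum(axis=-1))
```

Because every candidate has exactly two ones, the relaxed mask always sums to 2 per group, and the winner mask is an exact 2:4 pattern.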

By default, the script loads the SparseGPT prior; modify the path in the script to load other masks. The trailing argument selects the mode: 0 starts training from the prior mask, and 1 continues training from the latest checkpoint.

# Initial training with a prior mask.

# By default, the script will load output/oneshot_pruning/checkpoint/llama2-7b-tp8.sparse.nmprune.sp0.5hessian.ex0 as the mask prior

bash scripts/learnable_sparsity/llama2_7b_mask_only_tp8_c4.sh 0

# Pass the argument 1 to continue the training from the latest checkpoint

bash scripts/learnable_sparsity/llama2_7b_mask_only_tp8_c4.sh 1

For inference, we only need those winner masks with the highest probability. The following command will trim the checkpoint and remove unnecessary components.

python tool_trim_learnable_sparsity.py --ckpt_dir output/checkpoints/llama2-7b-tp8-mask-only-c4-singlenode/train_iters_2000/ckpt/iter_0002000

The script will create a new checkpoint named release and update the pointer to the latest checkpoint in latest_checkpointed_iteration.txt.
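The pointer file mentioned above can be read with a few lines of Python. This sketch assumes the common Megatron-style convention that latest_checkpointed_iteration.txt holds either an iteration number (mapped to a directory such as iter_0002000) or the word release; the helper name is hypothetical, and a temporary directory stands in for the real ckpt/ folder.

```python
import tempfile
from pathlib import Path

def resolve_checkpoint_dir(ckpt_root: Path) -> Path:
    """Return the checkpoint sub-directory named by the pointer file."""
    tag = (ckpt_root / "latest_checkpointed_iteration.txt").read_text().strip()
    if tag == "release":
        return ckpt_root / "release"
    # Numeric tags map to zero-padded iteration directories, e.g. 2000 -> iter_0002000.
    return ckpt_root / f"iter_{int(tag):07d}"

with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    pointer = root / "latest_checkpointed_iteration.txt"

    pointer.write_text("2000")       # state after training
    before = resolve_checkpoint_dir(root).name

    pointer.write_text("release")    # state after trimming
    after = resolve_checkpoint_dir(root).name

print(before, after)  # → iter_0002000 release
```

This is why the evaluation commands can pass the ckpt/ directory itself rather than a specific iteration.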

# Llama-2 7b & 13b

bash scripts/ppl/evaluate_llama2_wikitext2.sh output/checkpoints/llama2-7b-tp8-mask-only-c4-singlenode/train_iters_2000/ckpt/ 7b 8 sparse

bash scripts/ppl/evaluate_llama2_wikitext2.sh output/checkpoints/llama2-13b-tp8-mask-only-c4-singlenode/train_iters_2000/ckpt/ 13b 8 sparse

# Llama-3 8b

bash scripts/ppl/evaluate_llama3_wikitext2.sh output/checkpoints/llama3-8b-tp8-mask-only-c4-singlenode/train_iters_2000/ckpt/ 8b 8 sparse

# Llama-3.1 8b

bash scripts/ppl/evaluate_llama3.1_wikitext2.sh output/checkpoints/llama3.1-8b-tp8-mask-only-c4-singlenode/train_iters_2000/ckpt/ 8b 8 sparse

Please see docs/export_hf.md for instructions on exporting sparse models to Huggingface.

@article{fang2024maskllm,

title={Maskllm: Learnable semi-structured sparsity for large language models},

author={Fang, Gongfan and Yin, Hongxu and Muralidharan, Saurav and Heinrich, Greg and Pool, Jeff and Kautz, Jan and Molchanov, Pavlo and Wang, Xinchao},

journal={arXiv preprint arXiv:2409.17481},

year={2024}

}

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for MaskLLM

Similar Open Source Tools

MaskLLM

MaskLLM is a learnable pruning method that establishes Semi-structured Sparsity in Large Language Models (LLMs) to reduce computational overhead during inference. It is scalable and benefits from larger training datasets. The tool provides examples for running MaskLLM with Megatron-LM, preparing LLaMA checkpoints, pre-tokenizing C4 data for Megatron, generating prior masks, training MaskLLM, and evaluating the model. It also includes instructions for exporting sparse models to Huggingface.

GraphGen

GraphGen is a framework for synthetic data generation guided by knowledge graphs. It enhances supervised fine-tuning for large language models (LLMs) by generating synthetic data based on a fine-grained knowledge graph. The tool identifies knowledge gaps in LLMs, prioritizes generating QA pairs targeting high-value knowledge, incorporates multi-hop neighborhood sampling, and employs style-controlled generation to diversify QA data. Users can use LLaMA-Factory and xtuner for fine-tuning LLMs after data generation.

Automodel

Automodel is a Python library for automating the process of building and evaluating machine learning models. It provides a set of tools and utilities to streamline the model development workflow, from data preprocessing to model selection and evaluation. With Automodel, users can easily experiment with different algorithms, hyperparameters, and feature engineering techniques to find the best model for their dataset. The library is designed to be user-friendly and customizable, allowing users to define their own pipelines and workflows. Automodel is suitable for data scientists, machine learning engineers, and anyone looking to quickly build and test machine learning models without the need for manual intervention.

aiconfigurator

The `aiconfigurator` tool assists in finding a strong starting configuration for disaggregated serving in AI deployments. It helps optimize throughput at a given latency by evaluating thousands of configurations based on model, GPU count, and GPU type. The tool models LLM inference using collected data for a target machine and framework, running via CLI and web app. It generates configuration files for deployment with Dynamo, offering features like customized configuration, all-in-one automation, and tuning with advanced features. The tool estimates performance by breaking down LLM inference into operations, collecting operation execution times, and searching for strong configurations. Supported features include models like GPT and operations like attention, KV cache, GEMM, AllReduce, embedding, P2P, element-wise, MoE, MLA BMM, TRTLLM versions, and parallel modes like tensor-parallel and pipeline-parallel.

GPULlama3.java

GPULlama3.java powered by TornadoVM is a Java-native implementation of Llama3 that automatically compiles and executes Java code on GPUs via TornadoVM. It supports Llama3, Mistral, Qwen2.5, Qwen3, and Phi3 models in the GGUF format. The repository aims to provide GPU acceleration for Java code, enabling faster execution and high-performance access to off-heap memory. It offers features like interactive and instruction modes, flexible backend switching between OpenCL and PTX, and cross-platform compatibility with NVIDIA, Intel, and Apple GPUs.

vnc-lm

vnc-lm is a Discord bot designed for messaging with language models. Users can configure model parameters, branch conversations, and edit prompts to enhance responses. The bot supports various providers like OpenAI, Huggingface, and Cloudflare Workers AI. It integrates with ollama and LiteLLM, allowing users to access a wide range of language model APIs through a single interface. Users can manage models, switch between models, split long messages, and create conversation branches. LiteLLM integration enables support for OpenAI-compatible APIs and local LLM services. The bot requires Docker for installation and can be configured through environment variables. Troubleshooting tips are provided for common issues like context window problems, Discord API errors, and LiteLLM issues.

paperbanana

PaperBanana is an automated academic illustration tool designed for AI scientists. It implements an agentic framework for generating publication-quality academic diagrams and statistical plots from text descriptions. The tool utilizes a two-phase multi-agent pipeline with iterative refinement, Gemini-based VLM planning, and image generation. It offers a CLI, Python API, and MCP server for IDE integration, along with Claude Code skills for generating diagrams, plots, and evaluating diagrams. PaperBanana is not affiliated with or endorsed by the original authors or Google Research, and it may differ from the original system described in the paper.

Edit-Banana

Edit Banana is a universal content re-editor that allows users to transform fixed content into fully manipulatable assets. Powered by SAM 3 and multimodal large models, it enables high-fidelity reconstruction while preserving original diagram details and logical relationships. The platform offers advanced segmentation, fixed multi-round VLM scanning, high-quality OCR, user system with credits, multi-user concurrency, and a web interface. Users can upload images or PDFs to get editable DrawIO (XML) or PPTX files in seconds. The project structure includes components for segmentation, text extraction, frontend, models, and scripts, with detailed installation and setup instructions provided. The tool is open-source under the Apache License 2.0, allowing commercial use and secondary development.

readme-ai

README-AI is a developer tool that auto-generates README.md files using a combination of data extraction and generative AI. It streamlines documentation creation and maintenance, enhancing developer productivity. This project aims to enable all skill levels, across all domains, to better understand, use, and contribute to open-source software. It offers flexible README generation, supports multiple large language models (LLMs), provides customizable output options, works with various programming languages and project types, and includes an offline mode for generating boilerplate README files without external API calls.

smart-ralph

Smart Ralph is a Claude Code plugin designed for spec-driven development. It helps users turn vague feature ideas into structured specs and executes them task-by-task. The tool operates within a self-contained execution loop without external dependencies, providing a seamless workflow for feature development. Named after the Ralph agentic loop pattern, Smart Ralph simplifies the development process by focusing on the next task at hand, akin to the simplicity of the Springfield student, Ralph.

AIGC_text_detector

AIGC_text_detector is a repository containing the official codes for the paper 'Multiscale Positive-Unlabeled Detection of AI-Generated Texts'. It includes detector models for both English and Chinese texts, along with stronger detectors developed with enhanced training strategies. The repository provides links to download the detector models, datasets, and necessary preprocessing tools. Users can train RoBERTa and BERT models on the HC3-English dataset using the provided scripts.

dora

Dataflow-oriented robotic application (dora-rs) is a framework that makes creation of robotic applications fast and simple. Building a robotic application can be summed up as bringing together hardwares, algorithms, and AI models, and make them communicate with each others. At dora-rs, we try to: make integration of hardware and software easy by supporting Python, C, C++, and also ROS2. make communication low latency by using zero-copy Arrow messages. dora-rs is still experimental and you might experience bugs, but we're working very hard to make it stable as possible.

FalkorDB

FalkorDB is the first queryable Property Graph database to use sparse matrices to represent the adjacency matrix in graphs and linear algebra to query the graph. Primary features: * Adopting the Property Graph Model * Nodes (vertices) and Relationships (edges) that may have attributes * Nodes can have multiple labels * Relationships have a relationship type * Graphs represented as sparse adjacency matrices * OpenCypher with proprietary extensions as a query language * Queries are translated into linear algebra expressions

Legacy-Modernization-Agents

Legacy Modernization Agents is an open source migration framework developed to demonstrate AI Agents capabilities for converting legacy COBOL code to Java or C# .NET. The framework uses Microsoft Agent Framework with a dual-API architecture to analyze COBOL code and dependencies, then convert to either Java Quarkus or C# .NET. The web portal provides real-time visualization of migration progress, dependency graphs, and AI-powered Q&A.

rwkv-qualcomm

This repository provides support for inference RWKV models on Qualcomm HTP (Hexagon Tensor Processor) using QNN SDK. It supports RWKV v5, v6, and experimentally v7 models, inference using Qualcomm CPU, GPU, or HTP as the backend, whole-model float16 inference, activation INT16 and weights INT8 quantized inference, and activation INT16 and weights INT4/INT8 mixed quantized inference. Users can convert model weights to QNN model library files, generate HTP context cache, and run inference on Qualcomm Snapdragon SM8650 with HTP v75. The project requires QNN SDK, AIMET toolkit, and specific hardware for verification.

bmalph

bmalph is a tool that bundles and installs two AI development systems, BMAD-METHOD for planning agents and workflows (Phases 1-3) and Ralph for autonomous implementation loop (Phase 4). It provides commands like `bmalph init` to install both systems, `bmalph upgrade` to update to the latest versions, `bmalph doctor` to check installation health, and `/bmalph-implement` to transition from BMAD to Ralph. Users can work through BMAD phases 1-3 with commands like BP, MR, DR, CP, VP, CA, etc., and then transition to Ralph for implementation.

For similar tasks

lighteval

LightEval is a lightweight LLM evaluation suite that Hugging Face has been using internally with the recently released LLM data processing library datatrove and LLM training library nanotron. We're releasing it with the community in the spirit of building in the open. Note that it is still very much early so don't expect 100% stability ^^' In case of problems or question, feel free to open an issue!

Firefly

Firefly is an open-source large model training project that supports pre-training, fine-tuning, and DPO of mainstream large models. It includes models like Llama3, Gemma, Qwen1.5, MiniCPM, Llama, InternLM, Baichuan, ChatGLM, Yi, Deepseek, Qwen, Orion, Ziya, Xverse, Mistral, Mixtral-8x7B, Zephyr, Vicuna, Bloom, etc. The project supports full-parameter training, LoRA, QLoRA efficient training, and various tasks such as pre-training, SFT, and DPO. Suitable for users with limited training resources, QLoRA is recommended for fine-tuning instructions. The project has achieved good results on the Open LLM Leaderboard with QLoRA training process validation. The latest version has significant updates and adaptations for different chat model templates.

Awesome-Text2SQL

Awesome Text2SQL is a curated repository containing tutorials and resources for Large Language Models, Text2SQL, Text2DSL, Text2API, Text2Vis, and more. It provides guidelines on converting natural language questions into structured SQL queries, with a focus on NL2SQL. The repository includes information on various models, datasets, evaluation metrics, fine-tuning methods, libraries, and practice projects related to Text2SQL. It serves as a comprehensive resource for individuals interested in working with Text2SQL and related technologies.

create-million-parameter-llm-from-scratch

The 'create-million-parameter-llm-from-scratch' repository provides a detailed guide on creating a Large Language Model (LLM) with 2.3 million parameters from scratch. The blog replicates the LLaMA approach, incorporating concepts like RMSNorm for pre-normalization, SwiGLU activation function, and Rotary Embeddings. The model is trained on a basic dataset to demonstrate the ease of creating a million-parameter LLM without the need for a high-end GPU.

StableToolBench

StableToolBench is a new benchmark developed to address the instability of Tool Learning benchmarks. It aims to balance stability and reality by introducing features such as a Virtual API System with caching and API simulators, a new set of solvable queries determined by LLMs, and a Stable Evaluation System using GPT-4. The Virtual API Server can be set up either by building from source or using a prebuilt Docker image. Users can test the server using provided scripts and evaluate models with Solvable Pass Rate and Solvable Win Rate metrics. The tool also includes model experiments results comparing different models' performance.

BetaML.jl

The Beta Machine Learning Toolkit is a package containing various algorithms and utilities for implementing machine learning workflows in multiple languages, including Julia, Python, and R. It offers a range of supervised and unsupervised models, data transformers, and assessment tools. The models are implemented entirely in Julia and are not wrappers for third-party models. Users can easily contribute new models or request implementations. The focus is on user-friendliness rather than computational efficiency, making it suitable for educational and research purposes.

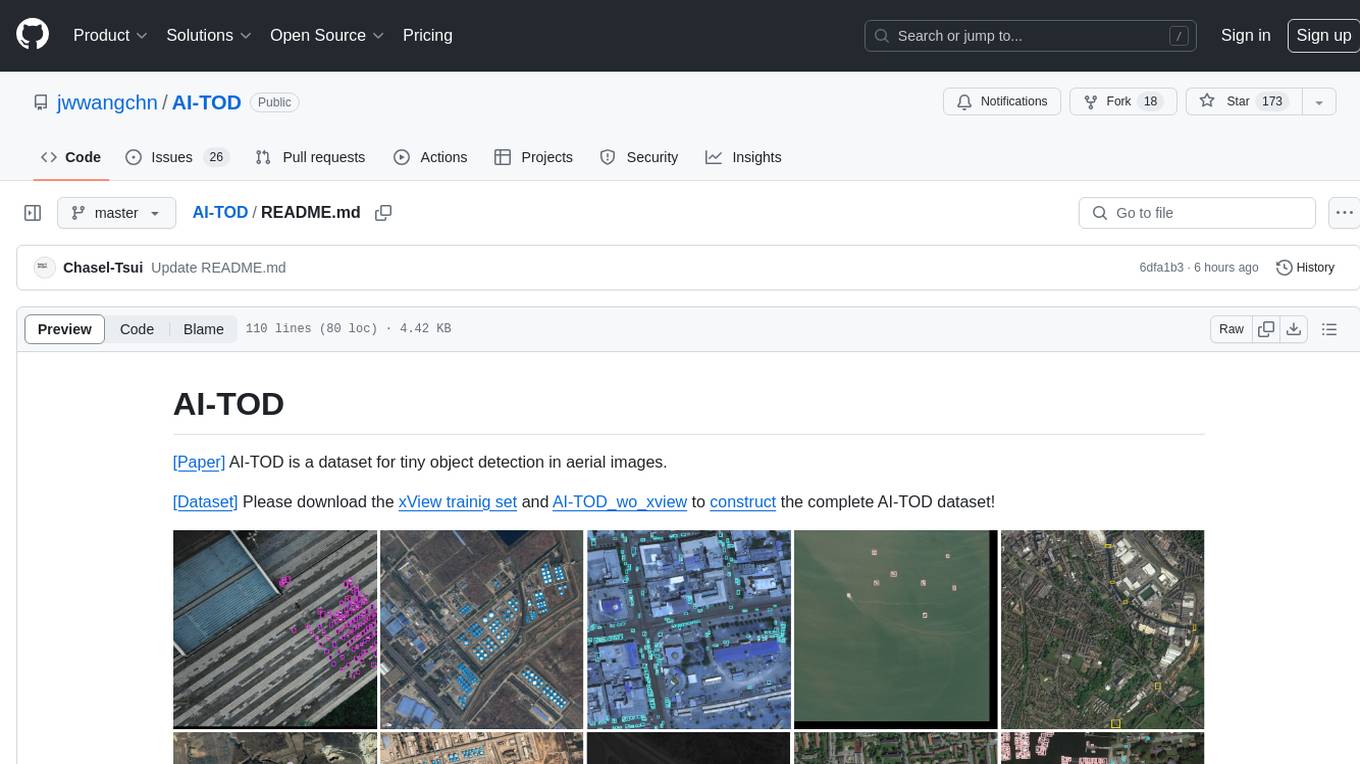

AI-TOD

AI-TOD is a dataset for tiny object detection in aerial images, containing 700,621 object instances across 28,036 images. Objects in AI-TOD are smaller with a mean size of 12.8 pixels compared to other aerial image datasets. To use AI-TOD, download xView training set and AI-TOD_wo_xview, then generate the complete dataset using the provided synthesis tool. The dataset is publicly available for academic and research purposes under CC BY-NC-SA 4.0 license.

UMOE-Scaling-Unified-Multimodal-LLMs

Uni-MoE is a MoE-based unified multimodal model that can handle diverse modalities including audio, speech, image, text, and video. The project focuses on scaling Unified Multimodal LLMs with a Mixture of Experts framework. It offers enhanced functionality for training across multiple nodes and GPUs, as well as parallel processing at both the expert and modality levels. The model architecture involves three training stages: building connectors for multimodal understanding, developing modality-specific experts, and incorporating multiple trained experts into LLMs using the LoRA technique on mixed multimodal data. The tool provides instructions for installation, weights organization, inference, training, and evaluation on various datasets.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM: * Set LLM usage limits for users on different pricing tiers * Track LLM usage on a per user and per organization basis * Block or redact requests containing PIIs * Improve LLM reliability with failovers, retries and caching * Distribute API keys with rate limits and cost limits for internal development/production use cases * Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.