Awesome-LM-SSP

A reading list for large models safety, security, and privacy (including Awesome LLM Security, Safety, etc.).

Stars: 1865

Awesome-LM-SSP is a repository that focuses on resources related to the trustworthiness of large models (LMs) across multiple dimensions such as safety, security, and privacy, with a special emphasis on multi-modal LMs like vision-language models and diffusion models. The repository is a work in progress, manually collected, and includes badges for different types of models and comments. It provides resources related to various venues, encourages community contributions, and offers guidelines for updating and adding information about papers. The repository also celebrates milestones and includes collections of books, competitions, leaderboards, toolkits, surveys, and papers categorized under safety, security, and privacy.

README:

The resources related to the trustworthiness of large models (LMs) across multiple dimensions (e.g., safety, security, and privacy), with a special focus on multi-modal LMs (e.g., vision-language models and diffusion models).

-

This repo is in progress 🌱 (manually collected).

-

Badges:

-

🔥🔥🔥 Help us update the list! 🔥🔥🔥

- First, check papers through our database: Metadata of LM-SSP.

- If you want to update the information of a paper (e.g., an arXiv paper has been accepted by a venue), search the paper title in our metadata table and then leave a message in the corresponding cell of the table.

- If you would like to add some paper, please fill in the following table through

ISSUE:

| Title | Link | Code | Venue | Classification | Model | Comment |

|---|---|---|---|---|---|---|

| This is a title | paper.com | github | bb'23 | A1. Jailbreak | LLM | Agent |

- [2026.01.09] 🎂🎂 Happy 2nd Birthday to Awesome-LM-SSP! Keep Going! 💪

- [2025.01.09] 🎂 Happy 1st Birthday to Awesome-LM-SSP! Keep Going! 💪

- [2024.01.09] 🚀 LM-SSP is released!

- Book (3)

- Competition (5)

- Leaderboard (5)

- Toolkit (15)

- Survey (40)

- Paper (2375)

- A. Safety (1191)

- A0. General (30)

- A1. Jailbreak (532)

- A2. Alignment (147)

- A3. Deepfake (94)

- A4. Ethics (8)

- A5. Fairness (60)

- A6. Hallucination (116)

- A7. Prompt Injection (118)

- A8. Toxicity (86)

- B. Security (465)

- B0. General (16)

- B1. Adversarial Examples (105)

- B2. Agent (136)

- B3. Poison & Backdoor (182)

- B4. Side-Channel (2)

- B5. System (24)

- C. Privacy (719)

- C0. General (54)

- C1. Contamination (17)

- C2. Data Reconstruction (63)

- C3. Membership Inference Attacks (68)

- C4. Model Extraction (14)

- C5. Privacy-Preserving Computation (133)

- C6. Property Inference Attacks (8)

- C7. Side-Channel (10)

- C8. Unlearning (70)

- C9. Watermark & Copyright (282)

- A. Safety (1191)

-

Organizers: Tianshuo Cong (丛天硕), Xinlei He (何新磊), Zhengyu Zhao (赵正宇), Yugeng Liu (刘禹更), Delong Ran (冉德龙)

-

This project is inspired by LLM Security, Awesome LLM Security, LLM Security & Privacy, UR2-LLMs, PLMpapers, EvaluationPapers4ChatGPT

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for Awesome-LM-SSP

Similar Open Source Tools

Awesome-LM-SSP

Awesome-LM-SSP is a repository that focuses on resources related to the trustworthiness of large models (LMs) across multiple dimensions such as safety, security, and privacy, with a special emphasis on multi-modal LMs like vision-language models and diffusion models. The repository is a work in progress, manually collected, and includes badges for different types of models and comments. It provides resources related to various venues, encourages community contributions, and offers guidelines for updating and adding information about papers. The repository also celebrates milestones and includes collections of books, competitions, leaderboards, toolkits, surveys, and papers categorized under safety, security, and privacy.

Awesome-LM-SSP

The Awesome-LM-SSP repository is a collection of resources related to the trustworthiness of large models (LMs) across multiple dimensions, with a special focus on multi-modal LMs. It includes papers, surveys, toolkits, competitions, and leaderboards. The resources are categorized into three main dimensions: safety, security, and privacy. Within each dimension, there are several subcategories. For example, the safety dimension includes subcategories such as jailbreak, alignment, deepfake, ethics, fairness, hallucination, prompt injection, and toxicity. The security dimension includes subcategories such as adversarial examples, poisoning, and system security. The privacy dimension includes subcategories such as contamination, copyright, data reconstruction, membership inference attacks, model extraction, privacy-preserving computation, and unlearning.

Awesome-LLM-Ensemble

Awesome-LLM-Ensemble is a collection of papers on LLM Ensemble, focusing on the comprehensive use of multiple large language models to benefit from their individual strengths. It provides a systematic review of recent developments in LLM Ensemble, including taxonomy, methods for ensemble before, during, and after inference, benchmarks, applications, and related surveys.

lmdeploy

LMDeploy is a toolkit for compressing, deploying, and serving LLM, developed by the MMRazor and MMDeploy teams. It has the following core features: * **Efficient Inference** : LMDeploy delivers up to 1.8x higher request throughput than vLLM, by introducing key features like persistent batch(a.k.a. continuous batching), blocked KV cache, dynamic split&fuse, tensor parallelism, high-performance CUDA kernels and so on. * **Effective Quantization** : LMDeploy supports weight-only and k/v quantization, and the 4-bit inference performance is 2.4x higher than FP16. The quantization quality has been confirmed via OpenCompass evaluation. * **Effortless Distribution Server** : Leveraging the request distribution service, LMDeploy facilitates an easy and efficient deployment of multi-model services across multiple machines and cards. * **Interactive Inference Mode** : By caching the k/v of attention during multi-round dialogue processes, the engine remembers dialogue history, thus avoiding repetitive processing of historical sessions.

EvalAI

EvalAI is an open-source platform for evaluating and comparing machine learning (ML) and artificial intelligence (AI) algorithms at scale. It provides a central leaderboard and submission interface, making it easier for researchers to reproduce results mentioned in papers and perform reliable & accurate quantitative analysis. EvalAI also offers features such as custom evaluation protocols and phases, remote evaluation, evaluation inside environments, CLI support, portability, and faster evaluation.

Jarvis

Jarvis is a powerful virtual AI assistant designed to simplify daily tasks through voice command integration. It features automation, device management, and personalized interactions, transforming technology engagement. Built using Python and AI models, it serves personal and administrative needs efficiently, making processes seamless and productive.

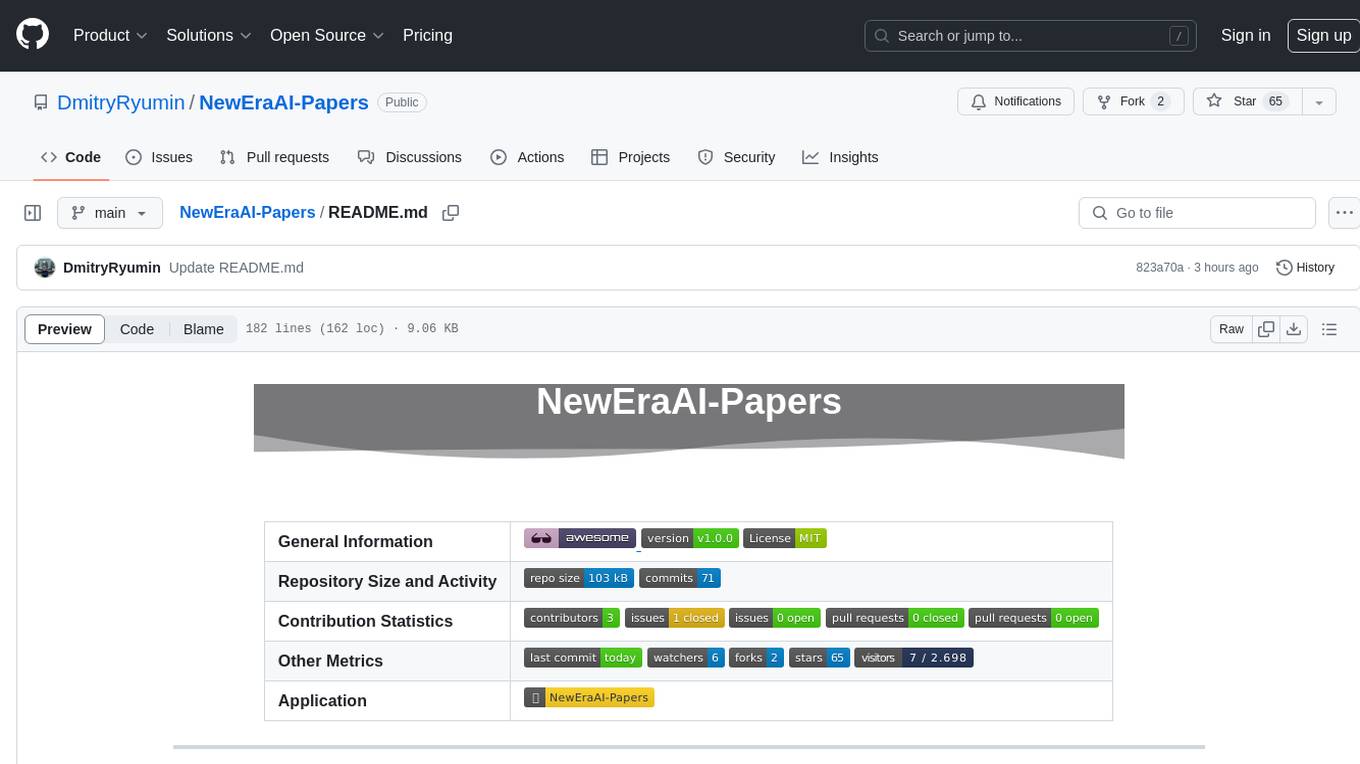

NewEraAI-Papers

The NewEraAI-Papers repository provides links to collections of influential and interesting research papers from top AI conferences, along with open-source code to promote reproducibility and provide detailed implementation insights beyond the scope of the article. Users can stay up to date with the latest advances in AI research by exploring this repository. Contributions to improve the completeness of the list are welcomed, and users can create pull requests, open issues, or contact the repository owner via email to enhance the repository further.

ExplainableAI.jl

ExplainableAI.jl is a Julia package that implements interpretability methods for black-box classifiers, focusing on local explanations and attribution maps in input space. The package requires models to be differentiable with Zygote.jl. It is similar to Captum and Zennit for PyTorch and iNNvestigate for Keras models. Users can analyze and visualize explanations for model predictions, with support for different XAI methods and customization. The package aims to provide transparency and insights into model decision-making processes, making it a valuable tool for understanding and validating machine learning models.

latentbox

Latent Box is a curated collection of resources for AI, creativity, and art. It aims to bridge the information gap with high-quality content, promote diversity and interdisciplinary collaboration, and maintain updates through community co-creation. The website features a wide range of resources, including articles, tutorials, tools, and datasets, covering various topics such as machine learning, computer vision, natural language processing, generative art, and creative coding.

ST-LLM

ST-LLM is a temporal-sensitive video large language model that incorporates joint spatial-temporal modeling, dynamic masking strategy, and global-local input module for effective video understanding. It has achieved state-of-the-art results on various video benchmarks. The repository provides code and weights for the model, along with demo scripts for easy usage. Users can train, validate, and use the model for tasks like video description, action identification, and reasoning.

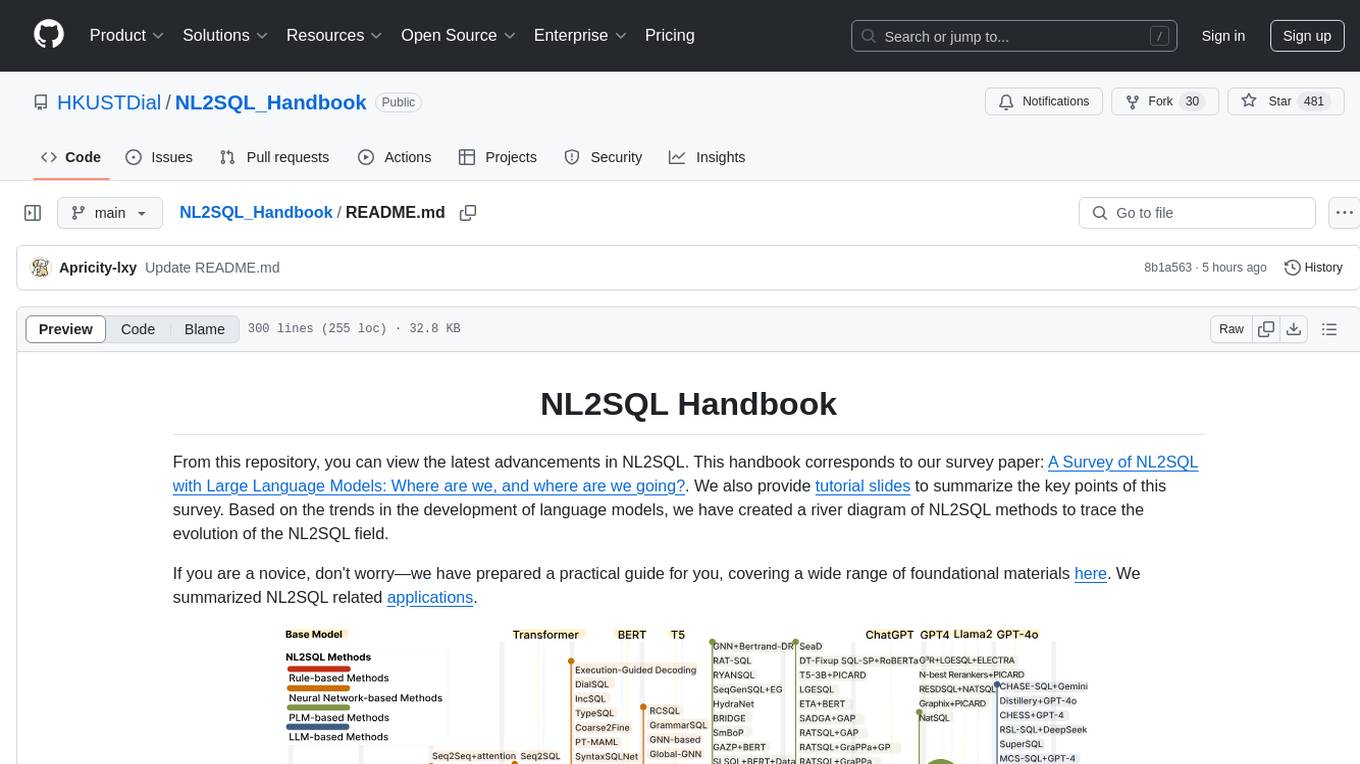

NL2SQL_Handbook

NL2SQL Handbook provides a comprehensive overview of Natural Language to SQL (NL2SQL) advancements, including survey papers, tutorial slides, and a river diagram of NL2SQL methods. It covers the evolution of NL2SQL solutions, module-based methods, benchmark development, and future directions. The repository also offers practical guides for beginners, access to high-performance language models, and evaluation metrics for NL2SQL models.

Eridanus

Eridanus is a powerful data visualization tool designed to help users create interactive and insightful visualizations from their datasets. With a user-friendly interface and a wide range of customization options, Eridanus makes it easy for users to explore and analyze their data in a meaningful way. Whether you are a data scientist, business analyst, or student, Eridanus provides the tools you need to communicate your findings effectively and make data-driven decisions.

big-AGI

big-AGI is an AI suite designed for professionals seeking function, form, simplicity, and speed. It offers best-in-class Chats, Beams, and Calls with AI personas, visualizations, coding, drawing, side-by-side chatting, and more, all wrapped in a polished UX. The tool is powered by the latest models from 12 vendors and open-source servers, providing users with advanced AI capabilities and a seamless user experience. With continuous updates and enhancements, big-AGI aims to stay ahead of the curve in the AI landscape, catering to the needs of both developers and AI enthusiasts.

nacos

Nacos is an easy-to-use platform designed for dynamic service discovery and configuration and service management. It helps build cloud native applications and microservices platform easily. Nacos provides functions like service discovery, health check, dynamic configuration management, dynamic DNS service, and service metadata management.

univer

Univer is an isomorphic full-stack framework designed for creating and editing spreadsheets, documents, and slides across web and server. It is highly extensible, high-performance, and can be embedded into applications. Univer offers a wide range of features including formulas, conditional formatting, data validation, collaborative editing, printing, import & export, and more. It supports multiple languages and provides a distraction-free editing experience with a clean interface. Univer is suitable for data analysts, software developers, project managers, content creators, and educators.

For similar tasks

Awesome-LM-SSP

Awesome-LM-SSP is a repository that focuses on resources related to the trustworthiness of large models (LMs) across multiple dimensions such as safety, security, and privacy, with a special emphasis on multi-modal LMs like vision-language models and diffusion models. The repository is a work in progress, manually collected, and includes badges for different types of models and comments. It provides resources related to various venues, encourages community contributions, and offers guidelines for updating and adding information about papers. The repository also celebrates milestones and includes collections of books, competitions, leaderboards, toolkits, surveys, and papers categorized under safety, security, and privacy.

opensourceAI

This repository is a collection of various open source AI projects and topics, each focusing on specific areas such as language models, security, and deepfake technology. It includes projects like privateGPT for building a private version of the GPT language model, AutoGPT for automating training GPT models, and DeepFaceLab for deepfake creation. Explore these repositories to find projects that interest you.

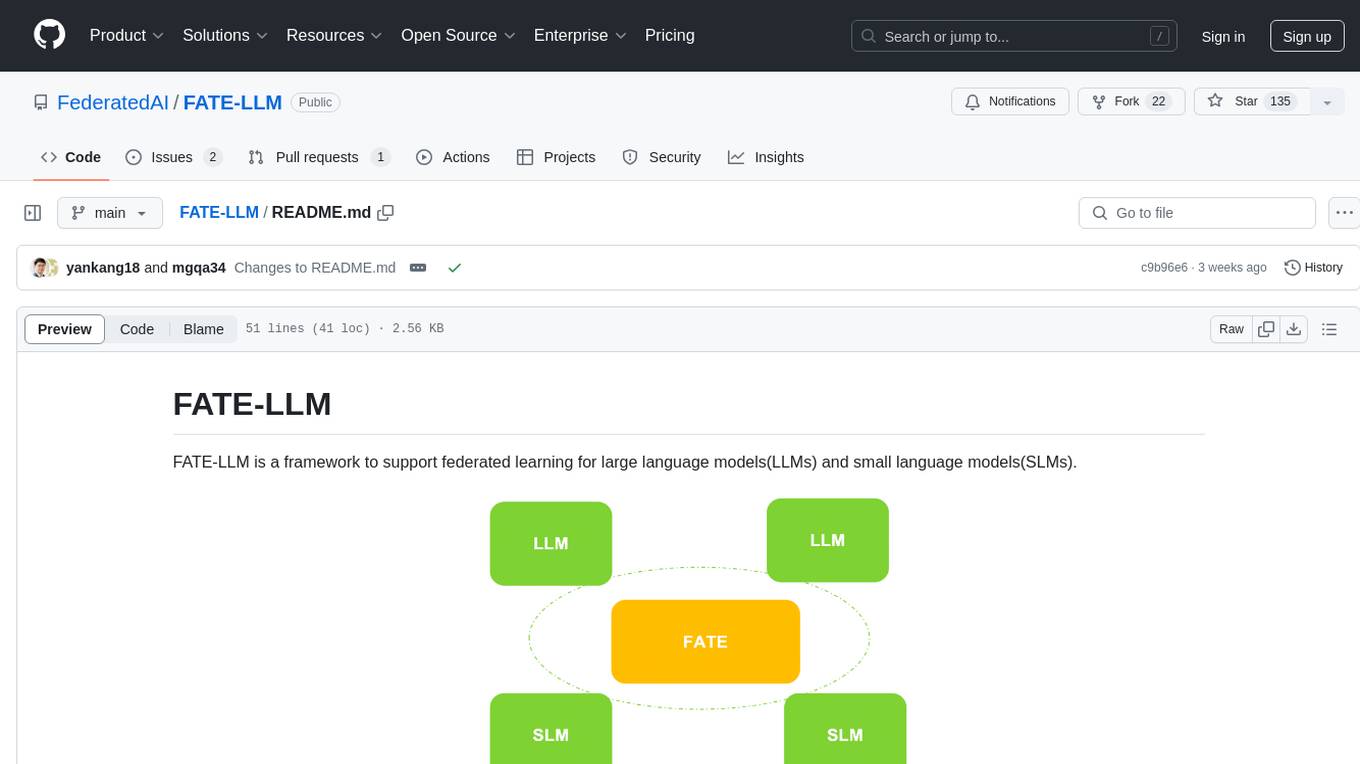

FATE-LLM

FATE-LLM is a framework supporting federated learning for large and small language models. It promotes training efficiency of federated LLMs using Parameter-Efficient methods, protects the IP of LLMs using FedIPR, and ensures data privacy during training and inference through privacy-preserving mechanisms.

AI-Security-and-Privacy-Events

AI-Security-and-Privacy-Events is a curated list of academic events focusing on AI security and privacy. It includes seminars, conferences, workshops, tutorials, special sessions, and covers various topics such as NLP & LLM Security, Privacy and Security in ML, Machine Learning Security, AI System with Confidential Computing, Adversarial Machine Learning, and more.

shimmy

Shimmy is a 5.1MB single-binary local inference server providing OpenAI-compatible endpoints for GGUF models. It offers fast, reliable AI inference with sub-second responses, zero configuration, and automatic port management. Perfect for developers seeking privacy, cost-effectiveness, speed, and easy integration with popular tools like VSCode and Cursor. Shimmy is designed to be invisible infrastructure that simplifies local AI development and deployment.

For similar jobs

LLM-and-Law

This repository is dedicated to summarizing papers related to large language models with the field of law. It includes applications of large language models in legal tasks, legal agents, legal problems of large language models, data resources for large language models in law, law LLMs, and evaluation of large language models in the legal domain.

start-llms

This repository is a comprehensive guide for individuals looking to start and improve their skills in Large Language Models (LLMs) without an advanced background in the field. It provides free resources, online courses, books, articles, and practical tips to become an expert in machine learning. The guide covers topics such as terminology, transformers, prompting, retrieval augmented generation (RAG), and more. It also includes recommendations for podcasts, YouTube videos, and communities to stay updated with the latest news in AI and LLMs.

aiverify

AI Verify is an AI governance testing framework and software toolkit that validates the performance of AI systems against internationally recognised principles through standardised tests. It offers a new API Connector feature to bypass size limitations, test various AI frameworks, and configure connection settings for batch requests. The toolkit operates within an enterprise environment, conducting technical tests on common supervised learning models for tabular and image datasets. It does not define AI ethical standards or guarantee complete safety from risks or biases.

Awesome-LLM-Watermark

This repository contains a collection of research papers related to watermarking techniques for text and images, specifically focusing on large language models (LLMs). The papers cover various aspects of watermarking LLM-generated content, including robustness, statistical understanding, topic-based watermarks, quality-detection trade-offs, dual watermarks, watermark collision, and more. Researchers have explored different methods and frameworks for watermarking LLMs to protect intellectual property, detect machine-generated text, improve generation quality, and evaluate watermarking techniques. The repository serves as a valuable resource for those interested in the field of watermarking for LLMs.

LLM-LieDetector

This repository contains code for reproducing experiments on lie detection in black-box LLMs by asking unrelated questions. It includes Q/A datasets, prompts, and fine-tuning datasets for generating lies with language models. The lie detectors rely on asking binary 'elicitation questions' to diagnose whether the model has lied. The code covers generating lies from language models, training and testing lie detectors, and generalization experiments. It requires access to GPUs and OpenAI API calls for running experiments with open-source models. Results are stored in the repository for reproducibility.

graphrag

The GraphRAG project is a data pipeline and transformation suite designed to extract meaningful, structured data from unstructured text using LLMs. It enhances LLMs' ability to reason about private data. The repository provides guidance on using knowledge graph memory structures to enhance LLM outputs, with a warning about the potential costs of GraphRAG indexing. It offers contribution guidelines, development resources, and encourages prompt tuning for optimal results. The Responsible AI FAQ addresses GraphRAG's capabilities, intended uses, evaluation metrics, limitations, and operational factors for effective and responsible use.

langtest

LangTest is a comprehensive evaluation library for custom LLM and NLP models. It aims to deliver safe and effective language models by providing tools to test model quality, augment training data, and support popular NLP frameworks. LangTest comes with benchmark datasets to challenge and enhance language models, ensuring peak performance in various linguistic tasks. The tool offers more than 60 distinct types of tests with just one line of code, covering aspects like robustness, bias, representation, fairness, and accuracy. It supports testing LLMS for question answering, toxicity, clinical tests, legal support, factuality, sycophancy, and summarization.

Awesome-Jailbreak-on-LLMs

Awesome-Jailbreak-on-LLMs is a collection of state-of-the-art, novel, and exciting jailbreak methods on Large Language Models (LLMs). The repository contains papers, codes, datasets, evaluations, and analyses related to jailbreak attacks on LLMs. It serves as a comprehensive resource for researchers and practitioners interested in exploring various jailbreak techniques and defenses in the context of LLMs. Contributions such as additional jailbreak-related content, pull requests, and issue reports are welcome, and contributors are acknowledged. For any inquiries or issues, contact [email protected]. If you find this repository useful for your research or work, consider starring it to show appreciation.

-589cf4)

-c7688b)

-39c5bb)