EvalAI

Evaluating state of the art in AI

Stars: 1828

EvalAI is an open-source platform for evaluating and comparing machine learning (ML) and artificial intelligence (AI) algorithms at scale. It provides a central leaderboard and submission interface, making it easier for researchers to reproduce results mentioned in papers and perform reliable & accurate quantitative analysis. EvalAI also offers features such as custom evaluation protocols and phases, remote evaluation, evaluation inside environments, CLI support, portability, and faster evaluation.

README:

EvalAI is an open-source platform for evaluating and comparing machine learning (ML) and artificial intelligence (AI) algorithms at scale.

In recent years, it has become increasingly difficult to compare an algorithm solving a given task with other existing approaches. These comparisons suffer from minor differences in algorithm implementation, the use of non-standard dataset splits, and different evaluation metrics. By providing a central leaderboard and submission interface, backed by swift and robust backends based on map-reduce frameworks that speed up evaluation on the fly, EvalAI aims to make it easier for researchers to reproduce results from technical papers and perform reliable and accurate analyses.

- Custom evaluation protocols and phases: We allow the creation of an arbitrary number of evaluation phases and dataset splits, offer compatibility with any programming language, and organize results in both public and private leaderboards.

- Remote evaluation: Certain large-scale challenges need special compute capabilities for evaluation. If a challenge needs extra computational power, challenge organizers can easily add their own cluster of worker nodes to process participant submissions, while we take care of hosting the challenge, handling user submissions, and maintaining the leaderboard.

- Evaluation inside environments: EvalAI lets participants submit code for their agent in the form of Docker images, which are evaluated against test environments on the evaluation server. During evaluation, the worker fetches the image, the test environment, and the model snapshot, and spins up a new container to perform the evaluation.

- CLI support: evalai-cli extends the functionality of the EvalAI web application to your command line, making the platform more accessible and terminal-friendly.

- Portability: EvalAI was designed with scalability and portability in mind from its very inception. Most of its components rely heavily on open-source technologies: Docker, Django, Node.js, and PostgreSQL.

- Faster evaluation: We warm up the worker nodes at start-up by importing the challenge code and pre-loading the dataset in memory. We also split the dataset into small chunks that are evaluated simultaneously on multiple cores. These simple tricks speed up evaluation, in some cases reducing evaluation time by an order of magnitude.
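The two "faster evaluation" tricks, warming up once at start-up and scoring the dataset in chunks, can be illustrated with a minimal Python sketch. It is not EvalAI's worker code. `load_annotations` is a hypothetical stand-in that generates synthetic labels instead of reading a real annotation file, and plain accuracy stands in for whatever metric a challenge defines. Each chunk returns `(correct, total)` counts rather than a per-chunk accuracy, so chunks of unequal size still combine into the exact overall score. The function accepts any `concurrent.futures.Executor`. The example uses a thread pool so it runs anywhere; for CPU-bound scoring across multiple cores you would pass a `ProcessPoolExecutor` instead, which is why `score_chunk` is a picklable top-level function.

```python
from concurrent.futures import Executor, ThreadPoolExecutor
from functools import lru_cache
from typing import List, Sequence, Tuple


@lru_cache(maxsize=None)
def load_annotations() -> Tuple[int, ...]:
    """Hypothetical stand-in for reading a challenge's ground truth.

    Calling this once when the worker starts (the "warm-up") and caching the
    result means every later submission reuses the in-memory copy.
    """
    return tuple(i % 3 for i in range(10_000))


def split_into_chunks(n_items: int, n_chunks: int) -> List[range]:
    """Split indices 0..n_items-1 into at most n_chunks contiguous ranges."""
    size = max(1, -(-n_items // max(1, n_chunks)))  # ceiling division
    return [range(start, min(start + size, n_items)) for start in range(0, n_items, size)]


def score_chunk(chunk: Tuple[Sequence[int], Sequence[int]]) -> Tuple[int, int]:
    """Return (correct, total) for one chunk so partial results add up exactly."""
    predictions, labels = chunk
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct, len(labels)


def evaluate_in_chunks(predictions: Sequence[int], executor: Executor, n_chunks: int = 4) -> float:
    """Accuracy of a submission, computed chunk by chunk on the given executor."""
    labels = load_annotations()
    if len(predictions) != len(labels):
        raise ValueError("submission length does not match the annotations")
    chunks = [
        (predictions[r.start:r.stop], labels[r.start:r.stop])
        for r in split_into_chunks(len(labels), n_chunks)
    ]
    correct = total = 0
    for chunk_correct, chunk_total in executor.map(score_chunk, chunks):
        correct += chunk_correct
        total += chunk_total
    return correct / total if total else 0.0


load_annotations()  # warm-up: pay the loading cost before any submission arrives

if __name__ == "__main__":
    submission = list(load_annotations())
    submission[0] = (submission[0] + 1) % 3  # introduce exactly one wrong prediction
    with ThreadPoolExecutor(max_workers=4) as pool:
        print(evaluate_in_chunks(submission, pool))  # 0.9999
```

Because the per-chunk results are integer counts, the final score does not depend on how many chunks or workers are used.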

Our ultimate goal is to build a centralized platform to host, participate and collaborate in AI challenges organized around the globe and we hope to help in benchmarking progress in AI.
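In the remote-evaluation setup described in the feature list, a worker node supplied by the organizers pulls submissions off a queue, evaluates them, and reports the outcome back. The sketch below shows that loop under explicit assumptions. `Submission`, `run_worker`, `evaluate`, and `post_result` are hypothetical names, not EvalAI's API. A standard-library `queue.Queue` stands in for the real message queue: the setup steps list an sqs service among EvalAI's required containers, but this code does not talk to it.

```python
import queue
from dataclasses import dataclass
from typing import Callable, Dict, List


# Hypothetical record for a queued submission; not EvalAI's actual data model.
@dataclass
class Submission:
    submission_id: int
    phase: str
    predictions: List[int]


Scores = Dict[str, float]


def run_worker(
    submissions: "queue.Queue[Submission]",
    evaluate: Callable[[Submission], Scores],
    post_result: Callable[[int, str, Scores], None],
) -> int:
    """Drain the queue, evaluate each submission, and report the outcome.

    Returns how many submissions were handled. A long-running worker would
    block on submissions.get() instead of returning once the queue is empty.
    """
    handled = 0
    while True:
        try:
            sub = submissions.get_nowait()
        except queue.Empty:
            return handled
        try:
            scores = evaluate(sub)
        except Exception:
            # A broken submission is reported as failed rather than crashing the worker.
            post_result(sub.submission_id, "failed", {})
        else:
            post_result(sub.submission_id, "finished", scores)
        finally:
            submissions.task_done()
        handled += 1


if __name__ == "__main__":
    ground_truth = [0, 1, 1]

    def accuracy(sub: Submission) -> Scores:
        if len(sub.predictions) != len(ground_truth):
            raise ValueError("wrong number of predictions")
        hits = sum(p == t for p, t in zip(sub.predictions, ground_truth))
        return {"accuracy": hits / len(ground_truth)}

    q: "queue.Queue[Submission]" = queue.Queue()
    q.put(Submission(1, "dev", [0, 1, 1]))
    q.put(Submission(2, "dev", [0, 1]))  # malformed: one prediction missing
    leaderboard = {}
    run_worker(q, accuracy, lambda sid, status, scores: leaderboard.update({sid: (status, scores)}))
    print(leaderboard)  # {1: ('finished', {'accuracy': 1.0}), 2: ('failed', {})}
```

Keeping `evaluate` and `post_result` as injected callables separates the organizer-owned compute from the hosted side that stores results and maintains the leaderboard.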

Setting up EvalAI on your local machine is easy. You can set it up using Docker. The steps are:

- Install Docker and docker-compose on your machine.

- Get the source code onto your machine via git:

  git clone https://github.com/Cloud-CV/EvalAI.git evalai && cd evalai

- Build and run the Docker containers. This might take a while.

  docker-compose up --build

  By default, this starts only the required services (db, sqs, and django).

  If you need worker services, start them using:

  docker-compose --profile worker up --build

  If you need statsd-exporter, start it using:

  docker-compose --profile statsd up --build

  To start both optional services, use:

  docker-compose --profile worker --profile statsd up --build

- That's it. Open a web browser and go to http://127.0.0.1:8888. Three users are created by default:

  - SUPERUSER: username: admin, password: password
  - HOST USER: username: host, password: password
  - PARTICIPANT USER: username: participant, password: password
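The `docker-compose` invocations in the setup steps differ only in which `--profile` flags they pass. If you script the setup, a small helper can assemble the right argument list from the profiles you want. `compose_up_command` and `OPTIONAL_PROFILES` are hypothetical names; the flags are exactly those used in the steps above.

```python
import shlex
from typing import Iterable, List

# Optional profiles named in the setup steps; db, sqs, and django start without one.
OPTIONAL_PROFILES = ("worker", "statsd")


def compose_up_command(profiles: Iterable[str] = ()) -> List[str]:
    """Build the `docker-compose ... up --build` argument list for the chosen profiles."""
    cmd = ["docker-compose"]
    for profile in dict.fromkeys(profiles):  # drop duplicates, keep order
        if profile not in OPTIONAL_PROFILES:
            raise ValueError(f"unknown profile: {profile!r}")
        cmd += ["--profile", profile]
    return cmd + ["up", "--build"]


if __name__ == "__main__":
    # Print the command for both optional services; pass the list to
    # subprocess.run(..., check=True) to actually start the containers.
    print(shlex.join(compose_up_command(["worker", "statsd"])))
```

Keeping the command as a list instead of a shell string avoids quoting problems when you hand it to `subprocess.run`. On installations that ship Compose V2 as a Docker plugin, the equivalent command starts with `docker compose` instead of `docker-compose`.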

If you run into any issues during installation, please see our common errors during installation page.
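Before digging into installation errors, it can help to check whether the web app at http://127.0.0.1:8888 is answering at all, since the containers may still be building or starting. The following is a minimal standard-library polling sketch. `wait_for_server` is a hypothetical helper, and its default timeout of 300 seconds is an arbitrary choice.

```python
import http.client
import time
import urllib.error
import urllib.request


def wait_for_server(
    url: str = "http://127.0.0.1:8888",
    timeout: float = 300.0,
    interval: float = 2.0,
) -> bool:
    """Poll `url` until it answers or `timeout` seconds pass; True if it answered."""
    # Bypass any HTTP proxy configured in the environment: we want localhost itself.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    deadline = time.monotonic() + timeout
    while True:
        try:
            with opener.open(url, timeout=interval):
                return True
        except urllib.error.HTTPError:
            # Any HTTP status (even 4xx/5xx) means the server is accepting connections.
            return True
        except (OSError, http.client.HTTPException):
            pass  # refused, reset, or timed out: containers are probably still starting
        remaining = deadline - time.monotonic()
        if remaining <= 0:
            return False
        time.sleep(min(interval, remaining))
```

Here any HTTP response, including an error status, counts as "up" because it shows something is listening on the port. A stricter check could require a 200 status or log in as one of the default users.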

If you are using EvalAI for hosting challenges, please cite the following technical report:

@article{EvalAI,
  title   = {EvalAI: Towards Better Evaluation Systems for AI Agents},
  author  = {Deshraj Yadav and Rishabh Jain and Harsh Agrawal and Prithvijit Chattopadhyay and Taranjeet Singh and Akash Jain and Shiv Baran Singh and Stefan Lee and Dhruv Batra},
  year    = {2019},
  volume  = {arXiv:1902.03570}
}

EvalAI is currently maintained by Rishabh Jain and Gunjan Chhablani. A non-exhaustive list of other major contributors includes Deshraj Yadav, Ram Ramrakhya, Akash Jain, Taranjeet Singh, Shiv Baran Singh, Harsh Agrawal, Prithvijit Chattopadhyay, Devi Parikh, and Dhruv Batra.

If you are interested in contributing to EvalAI, follow our contribution guidelines.


Alternative AI tools for EvalAI

Similar Open Source Tools


awesome-LLM-AIOps

The 'awesome-LLM-AIOps' repository is a curated list of academic research and industrial materials related to Large Language Models (LLM) and Artificial Intelligence for IT Operations (AIOps). It covers various topics such as incident management, log analysis, root cause analysis, incident mitigation, and incident postmortem analysis. The repository provides a comprehensive collection of papers, projects, and tools related to the application of LLM and AI in IT operations, offering valuable insights and resources for researchers and practitioners in the field.

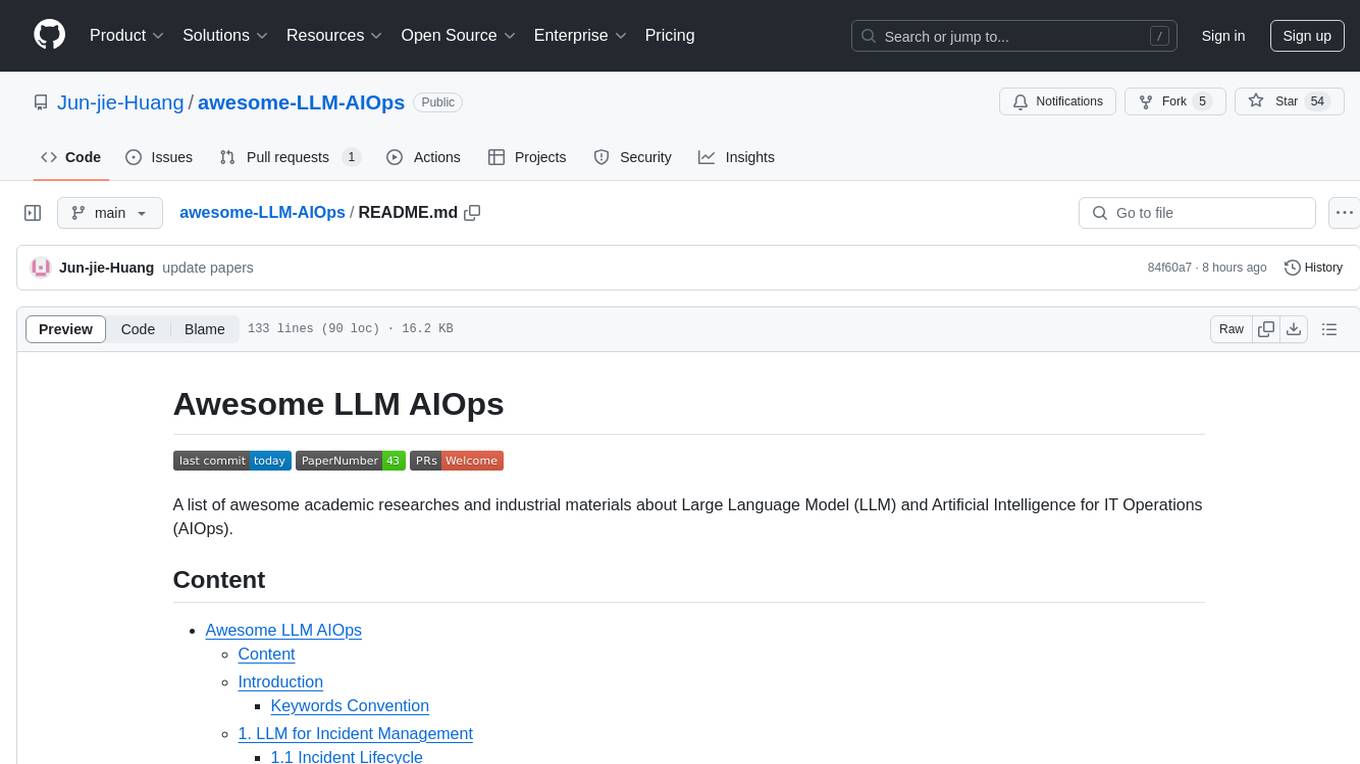

NL2SQL_Handbook

NL2SQL Handbook provides a comprehensive overview of Natural Language to SQL (NL2SQL) advancements, including survey papers, tutorial slides, and a river diagram of NL2SQL methods. It covers the evolution of NL2SQL solutions, module-based methods, benchmark development, and future directions. The repository also offers practical guides for beginners, access to high-performance language models, and evaluation metrics for NL2SQL models.

innoshop

InnoShop is an innovative open-source e-commerce system based on Laravel 12. It supports multiple languages, multiple currencies, and is integrated with OpenAI. The system features plugin mechanisms and theme template development for enhanced user experience and system extensibility. It is globally oriented, user-friendly, and based on the latest technology with deep AI integration.

Awesome-LLM-Ensemble

Awesome-LLM-Ensemble is a collection of papers on LLM Ensemble, focusing on the comprehensive use of multiple large language models to benefit from their individual strengths. It provides a systematic review of recent developments in LLM Ensemble, including taxonomy, methods for ensemble before, during, and after inference, benchmarks, applications, and related surveys.

LLM-for-misinformation-research

LLM-for-misinformation-research is a curated paper list of misinformation research using large language models (LLMs). The repository covers methods for detection and verification, tools for fact-checking complex claims, decision-making and explanation, claim matching, post-hoc explanation generation, and other tasks related to combating misinformation. It includes papers on fake news detection, rumor detection, fact verification, and more, showcasing the application of LLMs in various aspects of misinformation research.

LLM-IR-Bias-Fairness-Survey

LLM-IR-Bias-Fairness-Survey is a collection of papers related to bias and fairness in Information Retrieval (IR) with Large Language Models (LLMs). The repository organizes papers according to a survey paper titled 'Bias and Unfairness in Information Retrieval Systems: New Challenges in the LLM Era'. The survey provides a comprehensive review of emerging issues related to bias and unfairness in the integration of LLMs into IR systems, categorizing mitigation strategies into data sampling and distribution reconstruction approaches.

Awesome-Text2SQL

Awesome Text2SQL is a curated repository containing tutorials and resources for Large Language Models, Text2SQL, Text2DSL, Text2API, Text2Vis, and more. It provides guidelines on converting natural language questions into structured SQL queries, with a focus on NL2SQL. The repository includes information on various models, datasets, evaluation metrics, fine-tuning methods, libraries, and practice projects related to Text2SQL. It serves as a comprehensive resource for individuals interested in working with Text2SQL and related technologies.

LLMFarm

LLMFarm is an iOS and macOS app designed to work with large language models (LLM). It allows users to load different LLMs with specific parameters, test the performance of various LLMs on iOS and macOS, and identify the most suitable model for their projects. The tool is based on ggml and llama.cpp by Georgi Gerganov and incorporates sources from rwkv.cpp by saharNooby, Mia by byroneverson, and LlamaChat by alexrozanski. LLMFarm features support for macOS (13+) and iOS (16+), various inferences and sampling methods, Metal compatibility (not supported on Intel Mac), model setting templates, LoRA adapters support, LoRA finetune support, LoRA export as model support, and more. It also offers a range of inferences including LLaMA, GPTNeoX, Replit, GPT2, Starcoder, RWKV, Falcon, MPT, Bloom, and others. Additionally, it supports multimodal models like LLaVA, Obsidian, and MobileVLM. Users can customize inference options through JSON files and access supported models for download.

code-a2z

Code A2Z is an open-source project designed to empower developers by providing a platform for building, learning, and collaborating through structured modular design and real-time tools. It offers a full-stack platform with React, Vite, MUI on the frontend, and Node.js, Express, MongoDB on the backend. The platform aims to bridge the gap between solo learning and team development by offering real-time editing, project organization, subscription-based updates, and structured contribution systems. Future releases will include AI-driven productivity tools, personalized feeds, and real-time collaboration analytics.

Awesome-LM-SSP

The Awesome-LM-SSP repository is a collection of resources related to the trustworthiness of large models (LMs) across multiple dimensions, with a special focus on multi-modal LMs. It includes papers, surveys, toolkits, competitions, and leaderboards. The resources are categorized into three main dimensions: safety, security, and privacy. Within each dimension, there are several subcategories. For example, the safety dimension includes subcategories such as jailbreak, alignment, deepfake, ethics, fairness, hallucination, prompt injection, and toxicity. The security dimension includes subcategories such as adversarial examples, poisoning, and system security. The privacy dimension includes subcategories such as contamination, copyright, data reconstruction, membership inference attacks, model extraction, privacy-preserving computation, and unlearning.

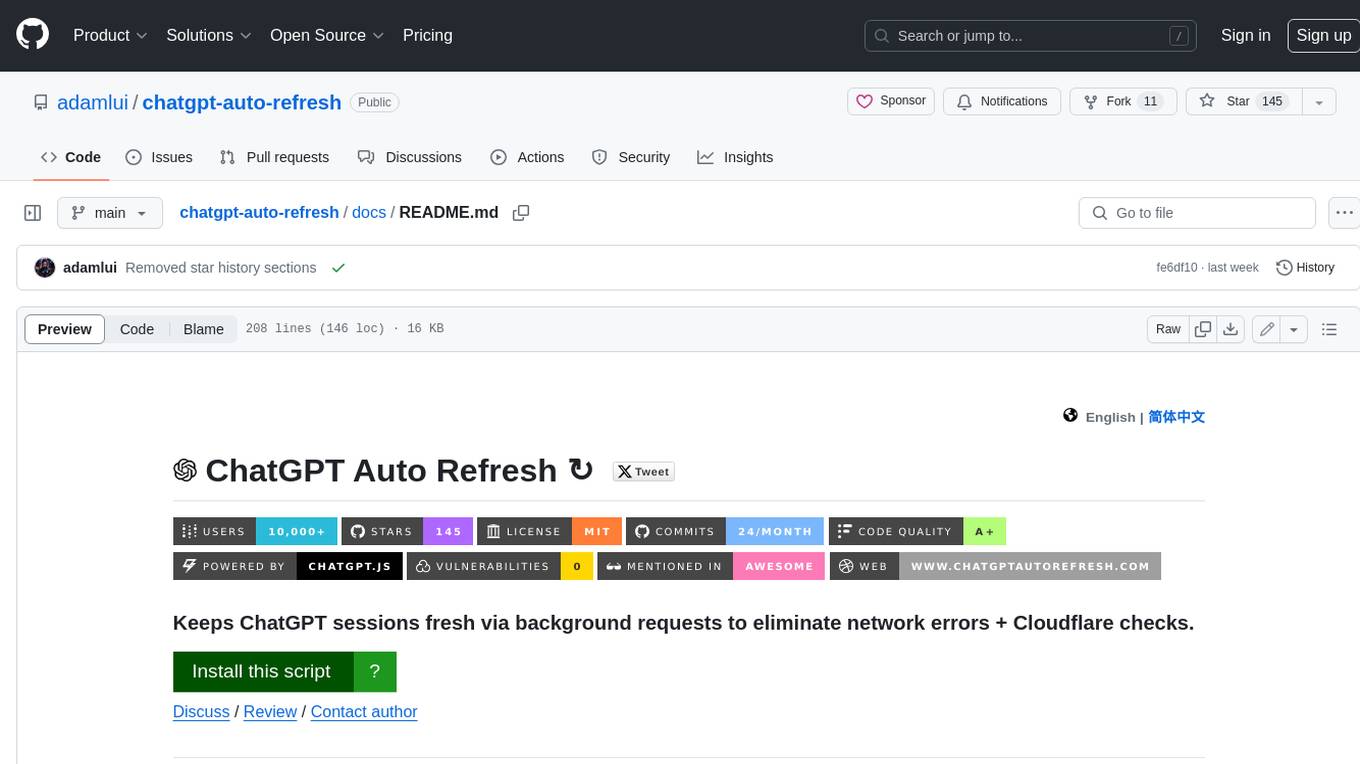

chatgpt-auto-refresh

ChatGPT Auto Refresh is a userscript that keeps ChatGPT sessions fresh by eliminating network errors and Cloudflare checks. It removes the 10-minute time limit from conversations when Chat History is disabled, ensuring a seamless experience. The tool is safe, lightweight, and a time-saver, allowing users to keep their sessions alive without constant copy/paste/refresh actions. It works even in background tabs, providing convenience and efficiency for users interacting with ChatGPT. The tool relies on the chatgpt.js library and is compatible with various browsers using Tampermonkey, making it accessible to a wide range of users.

milvus

Milvus is an open-source vector database built to power embedding similarity search and AI applications. Milvus makes unstructured data search more accessible, and provides a consistent user experience regardless of the deployment environment. Milvus 2.0 is a cloud-native vector database with storage and computation separated by design. All components in this refactored version of Milvus are stateless to enhance elasticity and flexibility. For more architecture details, see Milvus Architecture Overview. Milvus was released under the open-source Apache License 2.0 in October 2019. It is currently a graduate project under LF AI & Data Foundation.

ExplainableAI.jl

ExplainableAI.jl is a Julia package that implements interpretability methods for black-box classifiers, focusing on local explanations and attribution maps in input space. The package requires models to be differentiable with Zygote.jl. It is similar to Captum and Zennit for PyTorch and iNNvestigate for Keras models. Users can analyze and visualize explanations for model predictions, with support for different XAI methods and customization. The package aims to provide transparency and insights into model decision-making processes, making it a valuable tool for understanding and validating machine learning models.

Cyberion-Spark-X

Cyberion-Spark-X is a powerful open-source tool designed for cybersecurity professionals and data analysts. It provides advanced capabilities for analyzing and visualizing large datasets to detect security threats and anomalies. The tool integrates with popular data sources and supports various machine learning algorithms for predictive analytics and anomaly detection. Cyberion-Spark-X is user-friendly and highly customizable, making it suitable for both beginners and experienced professionals in the field of cybersecurity and data analysis.

chinmaykaitade

Chinmay Kaitade is a MERN Stack Developer and AI Enthusiast from India. He is an Electrical Engineer turned Full Stack Developer and AI/ML Enthusiast, passionate about crafting innovative web applications and contributing to Open Source projects. His mission is to build scalable web applications using his full-stack expertise and emerging AI/ML knowledge. Connect with him on LinkedIn for new projects and collaborations.

For similar tasks


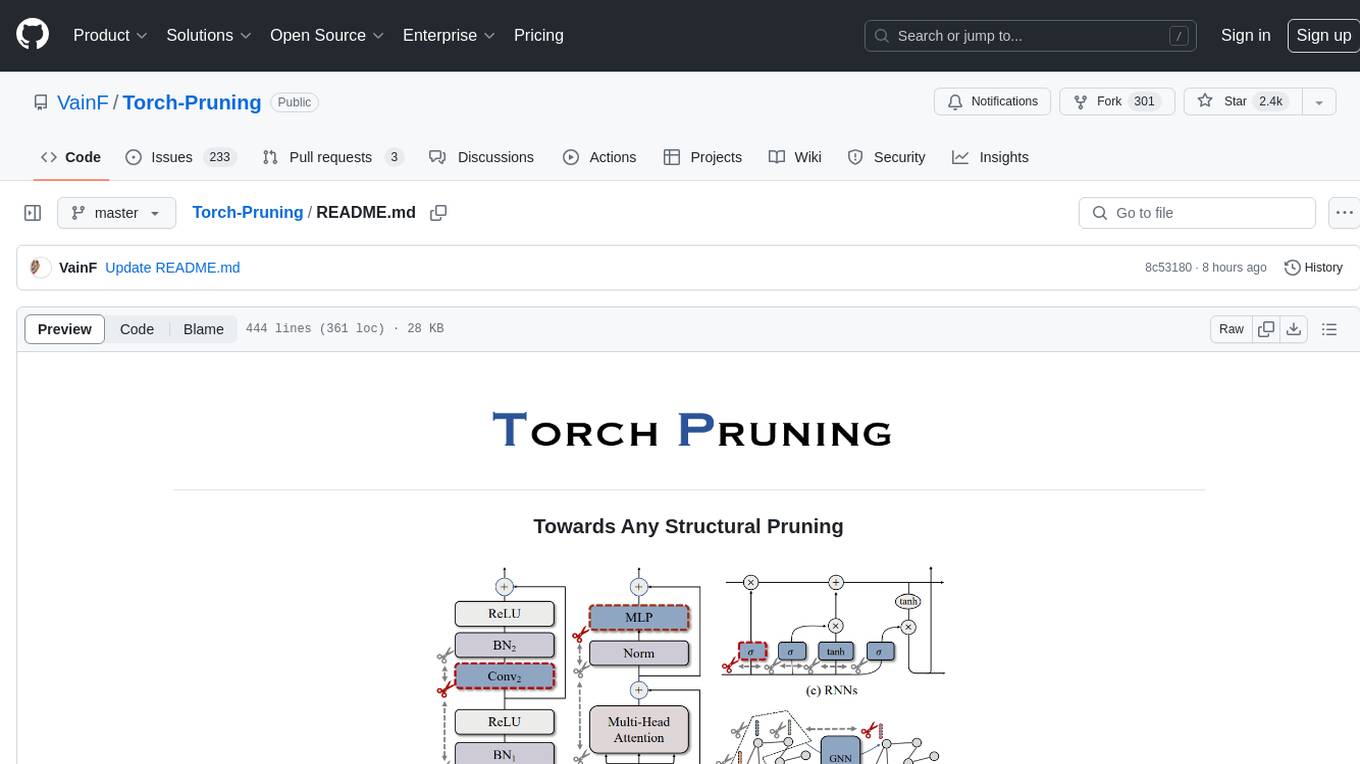

Torch-Pruning

Torch-Pruning (TP) is a library for structural pruning that enables pruning for a wide range of deep neural networks. It uses an algorithm called DepGraph to physically remove parameters. The library supports pruning off-the-shelf models from various frameworks and provides benchmarks for reproducing results. It offers high-level pruners, dependency graph for automatic pruning, low-level pruning functions, and supports various importance criteria and modules. Torch-Pruning is compatible with both PyTorch 1.x and 2.x versions.

For similar jobs

LLM-FineTuning-Large-Language-Models

This repository contains projects and notes on common practical techniques for fine-tuning Large Language Models (LLMs). It includes fine-tuning LLM notebooks, Colab links, LLM techniques and utils, and other smaller language models. The repository also provides links to YouTube videos explaining the concepts and techniques discussed in the notebooks.

lloco

LLoCO is a technique that learns documents offline through context compression and in-domain parameter-efficient finetuning using LoRA, which enables LLMs to handle long context efficiently.

camel

CAMEL is an open-source library designed for the study of autonomous and communicative agents. We believe that studying these agents on a large scale offers valuable insights into their behaviors, capabilities, and potential risks. To facilitate research in this field, we implement and support various types of agents, tasks, prompts, models, and simulated environments.

llm-baselines

LLM-baselines is a modular codebase for experimenting with transformers, inspired by NanoGPT. It provides a quick and easy way to train and evaluate transformer models on a variety of datasets. The codebase is well-documented and easy to use, making it a great resource for researchers and practitioners alike.

python-tutorial-notebooks

This repository contains Jupyter-based tutorials for NLP, ML, AI in Python for classes in Computational Linguistics, Natural Language Processing (NLP), Machine Learning (ML), and Artificial Intelligence (AI) at Indiana University.


Weekly-Top-LLM-Papers

This repository provides a curated list of weekly published Large Language Model (LLM) papers. It includes the most important LLM papers for each week, organized by month and year. The papers are categorized into different time periods, making it easy to find the most recent and relevant research in the field of LLMs.

self-llm

This project is a Chinese tutorial for domestic beginners based on the AutoDL platform, providing full-process guidance for various open-source large models, including environment configuration, local deployment, and efficient fine-tuning. It simplifies the deployment, use, and application process of open-source large models, enabling more ordinary students and researchers to better use open-source large models and helping open and free large models integrate into the lives of ordinary learners faster.