Best AI tools for Running Inference

20 - AI tool Sites

Segwise

Segwise is an AI tool designed to help game developers increase their game's Lifetime Value (LTV) by providing insights into player behavior and metrics. The tool uses AI agents to detect causal LTV drivers, root causes of LTV drops, and opportunities for growth. Segwise offers features such as running causal inference models on player data, hyper-segmenting player data, and providing instant answers to questions about LTV metrics. It also promises seamless integrations with gaming data sources and warehouses, ensuring data ownership and transparent pricing. The tool aims to simplify the process of improving LTV for game developers.

fal.ai

fal.ai is a generative media platform designed for developers to build the next generation of creativity. It offers lightning-fast inference with no compromise on quality, providing access to high-quality generative media models optimized by the fal Inference Engine™. The platform allows developers to fine-tune their own models, leverage real-time infrastructure for new user experiences, and scale to thousands of GPUs as needed. With a focus on developer experience, fal.ai aims to be the fastest AI tool for running diffusion models.

Lamini

Lamini is an enterprise-level LLM platform that offers precise recall with Memory Tuning, enabling teams to achieve over 95% accuracy even with large amounts of specific data. It guarantees JSON output and delivers massive throughput for inference. Lamini is designed to be deployed anywhere, including air-gapped environments, and supports training and inference on Nvidia or AMD GPUs. The platform is known for its factual LLMs and reengineered decoder that ensures 100% schema accuracy in the JSON output.

GPUX

GPUX is a cloud platform that provides access to GPUs for running AI workloads. It offers a variety of features to make it easy to deploy and run AI models, including a user-friendly interface, pre-built templates, and support for a variety of programming languages. GPUX is also committed to providing a sustainable and ethical platform, and it has partnered with organizations such as the Climate Leadership Council to reduce its carbon footprint.

Anycores

Anycores is an AI tool designed to optimize the performance of deep neural networks and reduce the cost of running AI models in the cloud. Its platform offers automated tuning, inference consultation, a zoo of optimized networks, and tools for reducing AI model cost. Anycores focuses on faster execution, claiming inference-time reductions of more than 10x, and on a smaller footprint during model deployment. It is device agnostic, supporting Nvidia and AMD GPUs; Intel, ARM, and AMD CPUs; servers; and edge devices. The tool aims to provide highly optimized, low-footprint networks tailored to specific deployment scenarios.

Modal

Modal is a high-performance cloud platform designed for developers and for AI, data, and ML teams. It offers a serverless environment for running generative AI models, large-scale batch jobs, job queues, and more. With Modal, users can bring their own code and leverage the platform's optimized container file system for fast cold boots and seamless autoscaling. The platform is engineered for large-scale workloads, allowing users to scale to hundreds of GPUs, pay only for what they use, and deploy functions to the cloud in seconds without the need for YAML or Dockerfiles. Modal also provides features for job scheduling, web endpoints, observability, and security compliance.

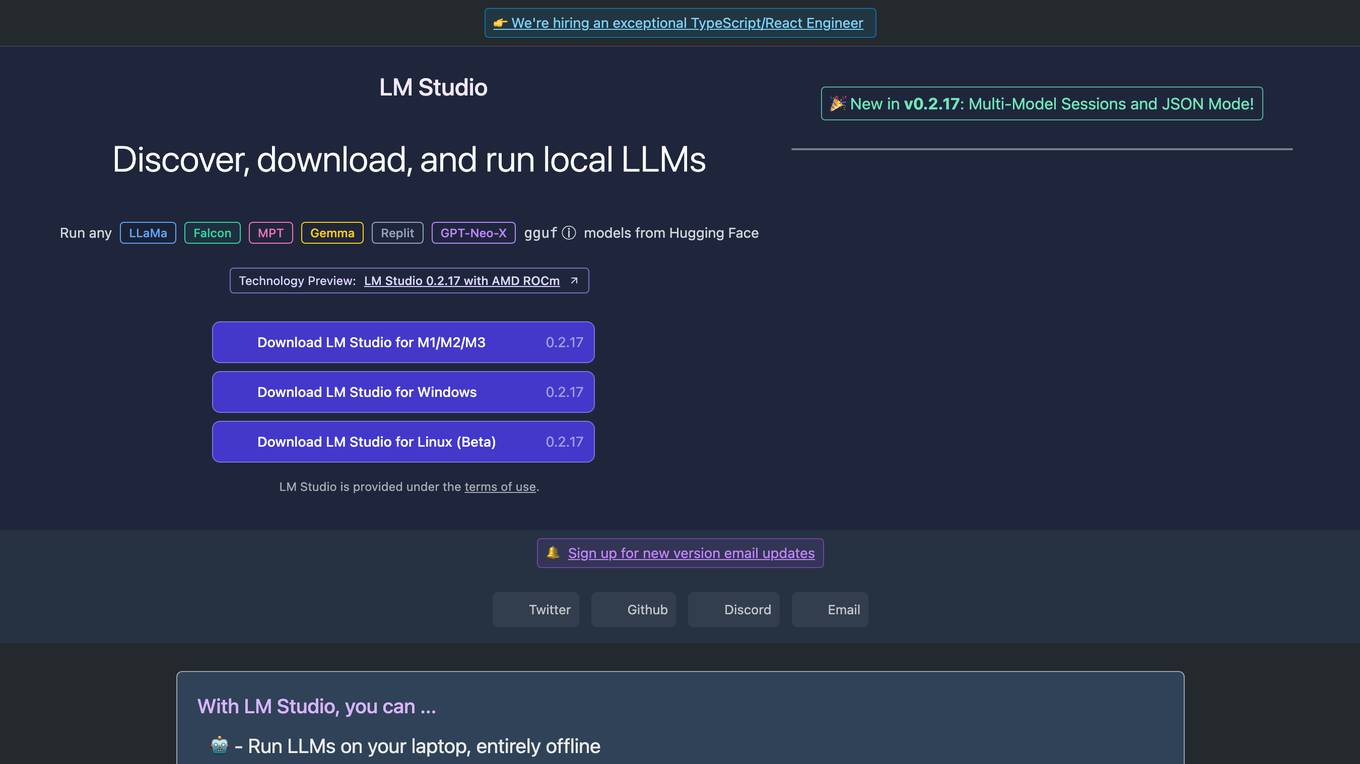

LM Studio

LM Studio is an AI tool designed for discovering, downloading, and running local LLMs (Large Language Models). Users can run LLMs on their laptops offline, use models through an in-app Chat UI or a local server, download compatible model files from Hugging Face repositories, and discover new LLMs. The tool ensures privacy by not collecting data or monitoring user actions, making it suitable for personal and business use. LM Studio supports various models available on Hugging Face, such as ggml Llama, MPT, and StarCoder, with minimum hardware and software requirements specified for each platform.

PicturePerfectAI

PicturePerfectAI is an AI-powered avatar maker that allows users to create customized, life-like avatars for various purposes. With a user-friendly interface and over 100 styles to choose from, users can generate unique avatars that represent their personality or brand. PicturePerfectAI prioritizes quality results by training its own models and running its own GPU servers, offering high-quality avatars at an affordable price. The platform ensures complete data privacy by encrypting user data and deleting uploaded photos and AI models within 24 hours.

Run Recommender

The Run Recommender is a web-based tool that helps runners find the perfect pair of running shoes. It uses a smart algorithm to suggest options based on your input, giving you a starting point in your search for the perfect pair. The Run Recommender is designed to be user-friendly and easy to use. Simply input your shoe width, age, weight, and other details, and the Run Recommender will generate a list of potential shoes that might suit your running style and body. You can also provide information about your running experience, distance, and frequency, and the Run Recommender will use this information to further refine its suggestions. Once you have a list of potential shoes, you can click on each shoe to learn more about it, including its features, benefits, and price. You can also search for the shoe on Amazon to find the best deals.

Cascadeur

Cascadeur is standalone 3D software that lets you create keyframe animation, as well as clean up and edit imported animations. Thanks to its AI-assisted and physics-based tools, you can dramatically speed up the animation process and get high-quality results. It works with .FBX, .DAE, and .USD files, making it easy to integrate into any animation workflow.

Gooey.AI

Gooey.AI is a platform that provides access to a variety of AI models and tools, making it easy for users to build and deploy AI solutions. The platform offers a no-code interface, making it accessible to users of all skill levels. Gooey.AI also provides a community of users who share workflows and examples, making it easy to get started with AI development.

Ollama

Ollama is an AI tool that allows users to access and utilize large language models such as Llama 3, Phi 3, Mistral, Gemma 2, and more. Users can customize and create their own models. The tool is available for macOS, Linux, and Windows platforms, offering a preview version for users to explore and utilize these models for various applications.
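
As an illustration of what using such a locally served model can look like, here is a minimal sketch that sends a prompt to Ollama's REST endpoint `/api/generate`. It assumes a server is already running on the default local port 11434 and that a model tagged `llama3` has been pulled; the helper names `build_generate_request` and `generate` are made up for this example and are not part of Ollama.

```python
import json
import urllib.request

# Assumption: a local Ollama server on its default port, with the "llama3" model pulled.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    """Build a non-streaming POST request for the /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "llama3") -> str:
    """Send the prompt and return the generated text (needs a running server)."""
    request = build_generate_request(prompt, model)
    with urllib.request.urlopen(request, timeout=120) as response:
        return json.loads(response.read())["response"]
```

With the server running, `print(generate("Why is the sky blue?"))` should print the model's answer; without it, the call raises a connection error.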

Obviously AI

Obviously AI is a no-code AI tool that allows users to build and deploy machine learning models without writing any code. It is designed to be easy to use, even for those with no data science experience. Obviously AI offers a variety of features, including model building, model deployment, model monitoring, and integration with other tools. It also provides expert support from a dedicated data scientist.

DORA

DORA is a research program by Google Cloud that focuses on understanding the capabilities driving software delivery and operations performance. It helps teams apply these capabilities to enhance organizational performance. The program introduces the DORA AI Capabilities Model, identifying key technical and cultural practices that amplify the positive impacts of AI on performance. DORA offers resources, guides, and tools like the DORA Quick Check to help organizations improve their software delivery goals.

Rupert AI

Rupert AI is an all-in-one AI platform that allows users to train custom AI models for text, audio, video, and images. The platform streamlines AI workflows by providing access to the latest open-source AI models and tools in a single studio tailored to business needs. Users can automate their AI workflow, generate high-quality AI product photography, and utilize popular AI workflows like the AI Fashion Model Generator and Facebook Ad Testing Tool. Rupert AI aims to revolutionize the way businesses leverage AI technology to enhance marketing visuals, streamline operations, and make informed decisions.

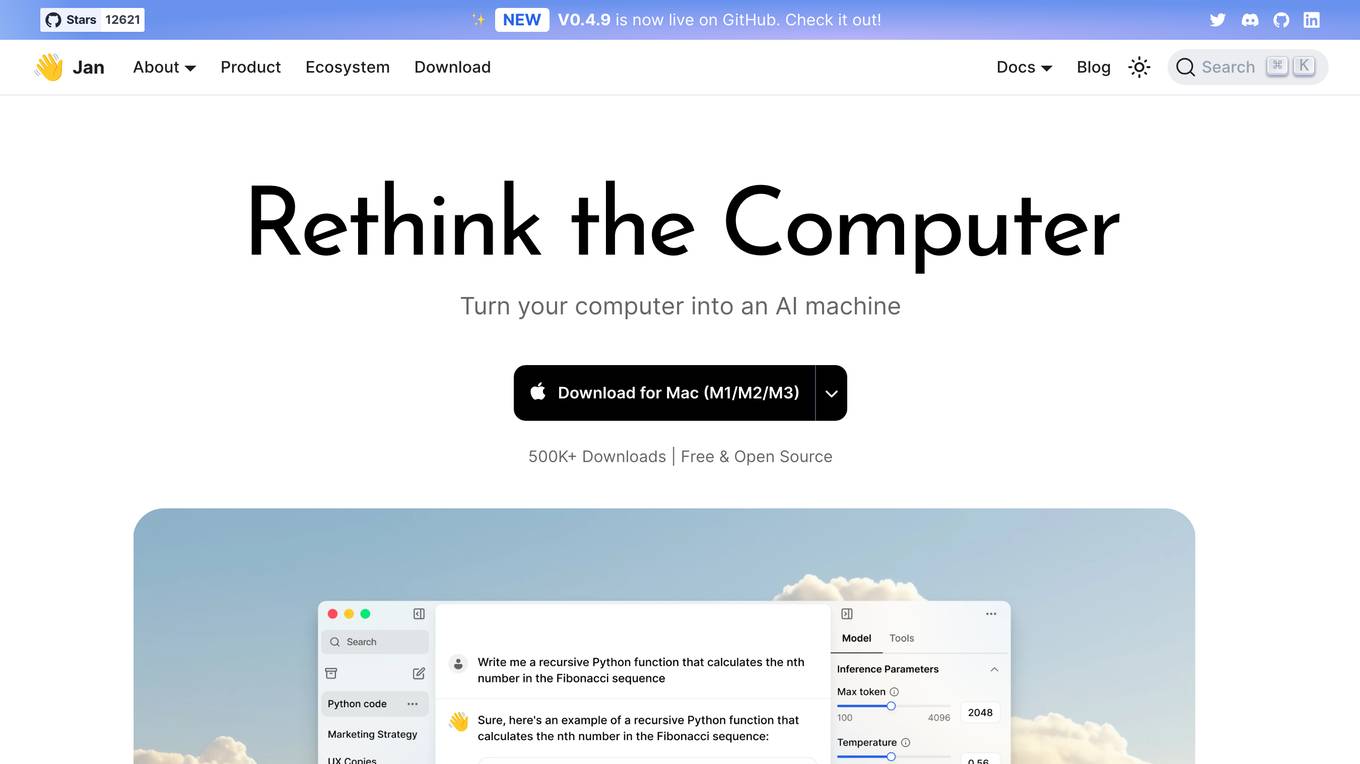

Jan

Jan is an open-source ChatGPT alternative that runs 100% offline. It allows users to chat with AI, download and run powerful models, connect to cloud AIs, set up a local API server, and chat with files. Highly customizable, Jan also offers features like personalized AI assistants, memory, and extensions. The application prioritizes local-first AI, user-owned data, and full customization, making it a versatile tool for AI enthusiasts and developers.

Equixly

Equixly is an AI-powered application designed to help users secure their APIs by identifying vulnerabilities and weaknesses through continuous security testing. The platform offers features such as scalable API PenTesting, attack simulation, mapping of attack surfaces, compliance simplification, and data exposure minimization. Equixly aims to streamline the process of identifying and fixing API security risks, ultimately enabling users to release secure code faster and reduce their attack surface.

HappyPagesAI

HappyPagesAI is an AI coloring page generator that allows users to create personalized coloring pages for children. The application uses AI technology to turn ideas into designs in 3 easy steps, providing a platform for children to explore their creativity and imagination. With a catalog of over 5000 coloring pages available for free download and print, HappyPagesAI aims to offer a fun and educational experience for kids and parents alike.

Milo

Milo is an AI-powered co-pilot for parents, designed to help them manage the chaos of family life. It uses GPT-4, the latest in large language models, to sort and organize information, send reminders, and provide updates. Milo is designed to be accurate and to solve complex problems, and it improves based on user feedback. It can handle tasks such as adding items to a grocery list, giving updates on the week's schedule, and processing screenshots of birthday invitations that users send it.

Verihubs

Verihubs is an AI-based verification system that offers backend infrastructure solutions for digital businesses. It provides services such as deepfake detection, face recognition, liveness detection, data extraction, identity verification, phone number verification, and watchlist screening. The platform helps protect businesses from fraud by verifying user identities and preventing AI-based video and image identity fraud. Verihubs is trusted by over 400 clients worldwide for its secure and reliable services.

1 - Open Source AI Tools

Gaudi-tutorials

The Intel Gaudi Tutorials repository contains source files for tutorials on using PyTorch and PyTorch Lightning on the Intel Gaudi AI Processor. The tutorials cater to users from beginner to advanced levels and cover tasks such as fine-tuning models, running inference, and setting up DeepSpeed for training large language models. To follow along, users need access to an Intel Gaudi 2 accelerator card or node. They then run the Intel Gaudi PyTorch Docker image, clone the tutorial repository, install JupyterLab, and start the JupyterLab server.

20 - OpenAI GPTs

Xアカウント分析GPTs (X Account Analysis GPTs)

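Designed to provide expert advice on running an X (formerly Twitter) account. It analyzes the user's X account data to improve engagement, identify the topics followers are most interested in, and determine the best posting times, helping the user build an effective social media strategy. Specifically, based on X analytics data provided by the user, it analyzes the characteristics of tweets that earned high engagement, points to improve in low-engagement tweets, and tweets with high impressions.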

Running Habit Architect

I'm a running coach who helps you become addicted to running in 2-3 weeks by building your personalized plan.

Pace Assistant

Provides running splits for Strava routes, accounting for distance and elevation changes.
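
To make the idea of elevation-adjusted splits concrete, here is a rough sketch that divides a target finish time across route segments in proportion to an "effort distance". It is not the method this GPT uses. The `Segment` type, the `elevation_adjusted_splits` function, and the `CLIMB_COST` constant (each metre of climb counted as 8 m of flat running) are all illustrative assumptions.

```python
from dataclasses import dataclass

# Hypothetical constant: one metre of climb "costs" as much as 8 m of flat running.
CLIMB_COST = 8.0


@dataclass
class Segment:
    distance_m: float  # horizontal length of the segment in metres
    gain_m: float      # net elevation change in metres (descents count as flat here)


def elevation_adjusted_splits(segments: list[Segment], target_seconds: float) -> list[float]:
    """Split a target time across segments in proportion to effort distance."""
    efforts = [s.distance_m + CLIMB_COST * max(s.gain_m, 0.0) for s in segments]
    total_effort = sum(efforts)
    if total_effort <= 0:
        raise ValueError("route must have a positive total distance")
    return [target_seconds * effort / total_effort for effort in efforts]
```

Under these assumptions, a flat kilometre followed by a kilometre with 125 m of climb has effort distances of 1000 m and 2000 m, so a 15-minute target splits into 5 and 10 minutes.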

AgencyAi

If you are running an agency, use this AI. It answers based on books and podcasts I have used over the years, along with some articles I have written for agencies.

Painting Auto Agent - saysay.ai

Auto painting agent running with LLMermaid. Type "continue" to continue tasks.

Mythological

A helpful assistant for D&D DMs running Dungeons & Dragons campaigns. Create towns, shops, characters, monsters, items, plots, encounters and more! Built for Dungeon Masters building DnD settings.

Dr. Business

An online business expert offering guidance for creating and running digital ventures.

Adept Online Business Builder

A guide for aspiring online entrepreneurs, offering practical advice on setting up and running a business. Please note: The product is independently developed and not affiliated, endorsed, or sponsored by OpenAI.

Live Dwell

I teach Home Economics and help with Cooking, Cleaning, and Running a Household.

AIProductGPT: Add AI to your Product and get a PRD

With simple prompts, AIProductGPT instantly crafts detailed AI-powered requirements (PRD) and mocks so that your team can hit the ground running.

EOS Personal Growth Navigator

Your go-to assistant for integrating EOS (Entrepreneurial Operating System) principles into personal life. Offers expert guidance on utilizing the core EOS tools to drive personal growth and provides structured support for running your own personal annual, quarterly, and weekly L10 meetings.

Swiss Solopreneur Pilot

Comprehensive guide for Swiss solopreneurship, offering formal, well-researched advice.

Marathon Prep Coach

Designs training programs for runners preparing for marathons and other races, tailored to fitness levels.