AI tools for ICL

Related Tools:

MonAi

MonAi is an AI-powered expense tracker that lets users enter expenses conversationally, much like sending a voice message to a friend. The AI automatically categorizes each expense, generates a short description, and records the amount; users then confirm and save the entry, with no login required. The data is stored in the user's private iCloud account, and users can share expense tracking and collaborate on it with others.

Flash Notes

Flash Notes is a flashcard app that offers a personalized, efficient learning experience for advanced learners. It provides AI-powered flashcards, tailored learning algorithms, multi-device synchronization, and a privacy-centric design. Users can convert their notes into dynamic flashcards, get prompts generated by ChatGPT, and learn at their own pace using spaced repetition. Notes are stored in iCloud and synced across all devices using CRDT (conflict-free replicated data type) technology.
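
The listing does not say which spaced repetition algorithm Flash Notes uses. As a rough illustration of the general idea only, the following Python sketch doubles the review interval after each successful recall and resets it after a miss. The `Card` class, the `review` function, and the doubling rule are all assumptions made for this example, not the app's actual scheduler.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Card:
    prompt: str
    answer: str
    interval_days: int = 1          # gap before the next review
    due: date = date(2024, 1, 1)    # placeholder start date


def review(card: Card, today: date, recalled: bool) -> Card:
    """Reschedule a card after a review (hypothetical doubling rule)."""
    # A correct recall doubles the gap; a miss resets it to one day.
    interval = card.interval_days * 2 if recalled else 1
    return Card(card.prompt, card.answer, interval, today + timedelta(days=interval))


card = Card("CRDT", "Conflict-free replicated data type")
card = review(card, date(2024, 1, 1), recalled=True)   # interval 2, due Jan 3
card = review(card, date(2024, 1, 3), recalled=True)   # interval 4, due Jan 7
card = review(card, date(2024, 1, 7), recalled=False)  # interval 1, due Jan 8
print(card.interval_days, card.due)
```

Real schedulers typically also track a per-card difficulty factor; this sketch leaves that out to keep the core loop visible.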

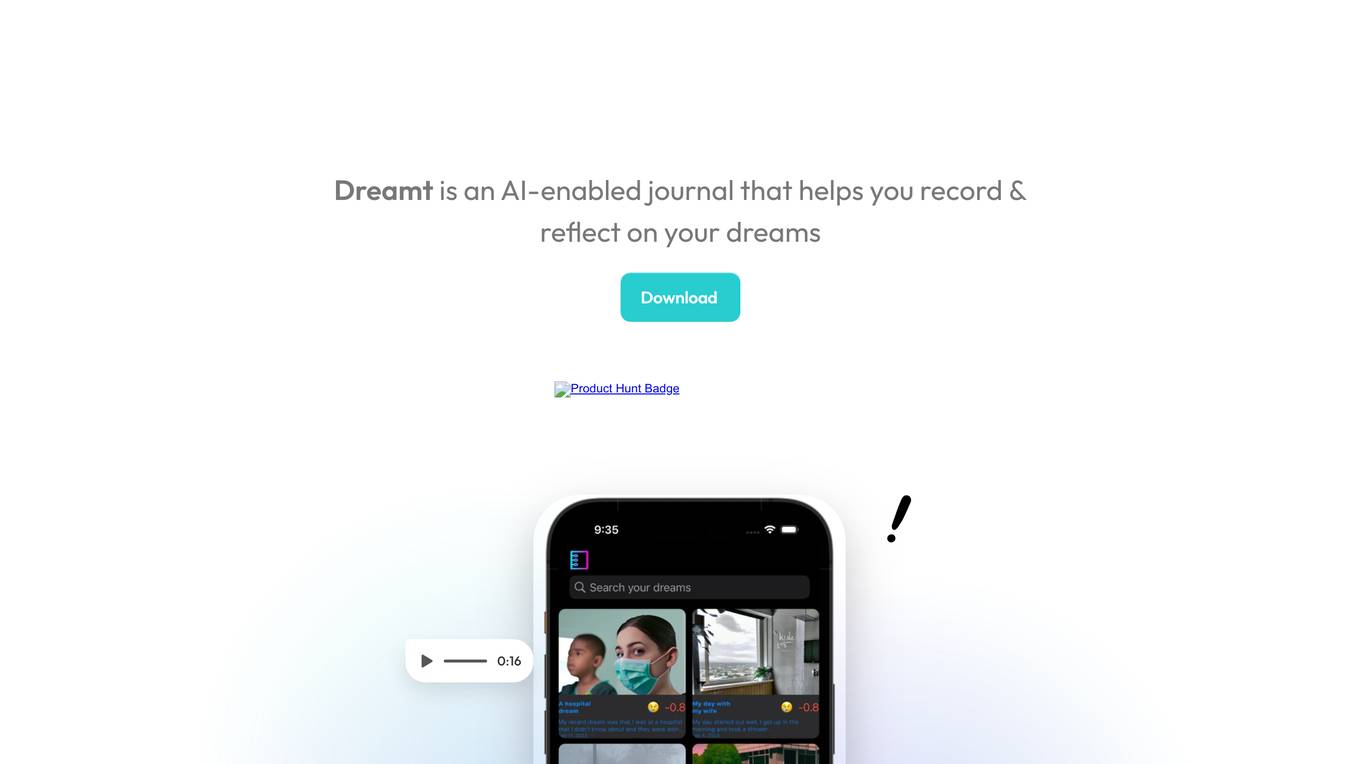

Dreamt

Dreamt is an AI-enabled journal application that allows users to record and reflect on their dreams. Users can input dream entries via text or voice, and the application provides insights on dream data such as hours slept, number of dreams captured, and recurring entities in dreams. Dreamt can also turn dream entries into story images using AI. The application includes SentiMoji, which performs automated sentiment analysis of dreams using emojis, and auto-tags for names of people, places, and organizations. Dreamt also offers advanced search and iCloud backup, and commits to not collecting any user data or using cookies or trackers.

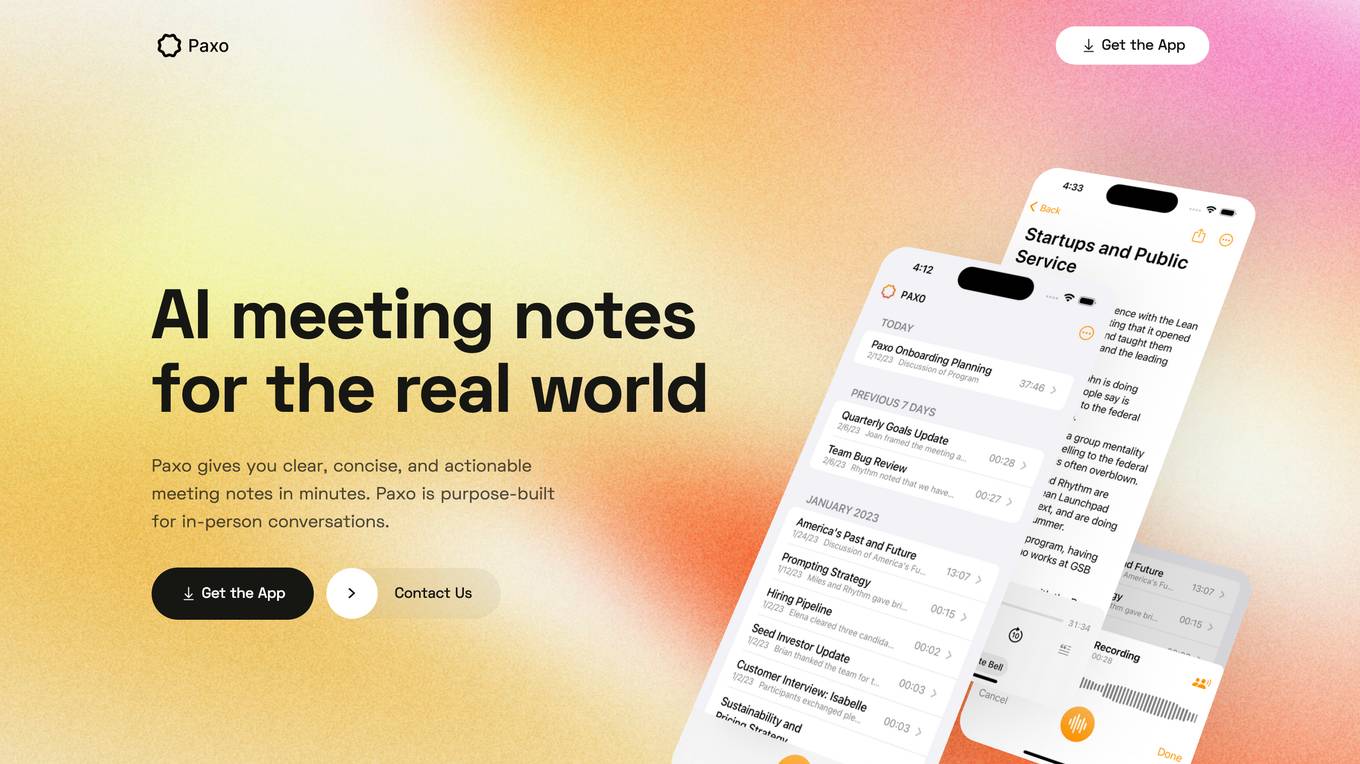

Paxo

Paxo is an AI-powered meeting notes app that provides clear, concise, and actionable meeting notes in minutes. It is purpose-built for in-person conversations and offers features such as voice identification, privacy-first architecture, and easy imports and exports. Paxo helps users stay organized and on top of their game by eliminating messy handwriting, misheard words, and forgotten action items. It is available as an app for iOS devices and syncs across all devices using iCloud.

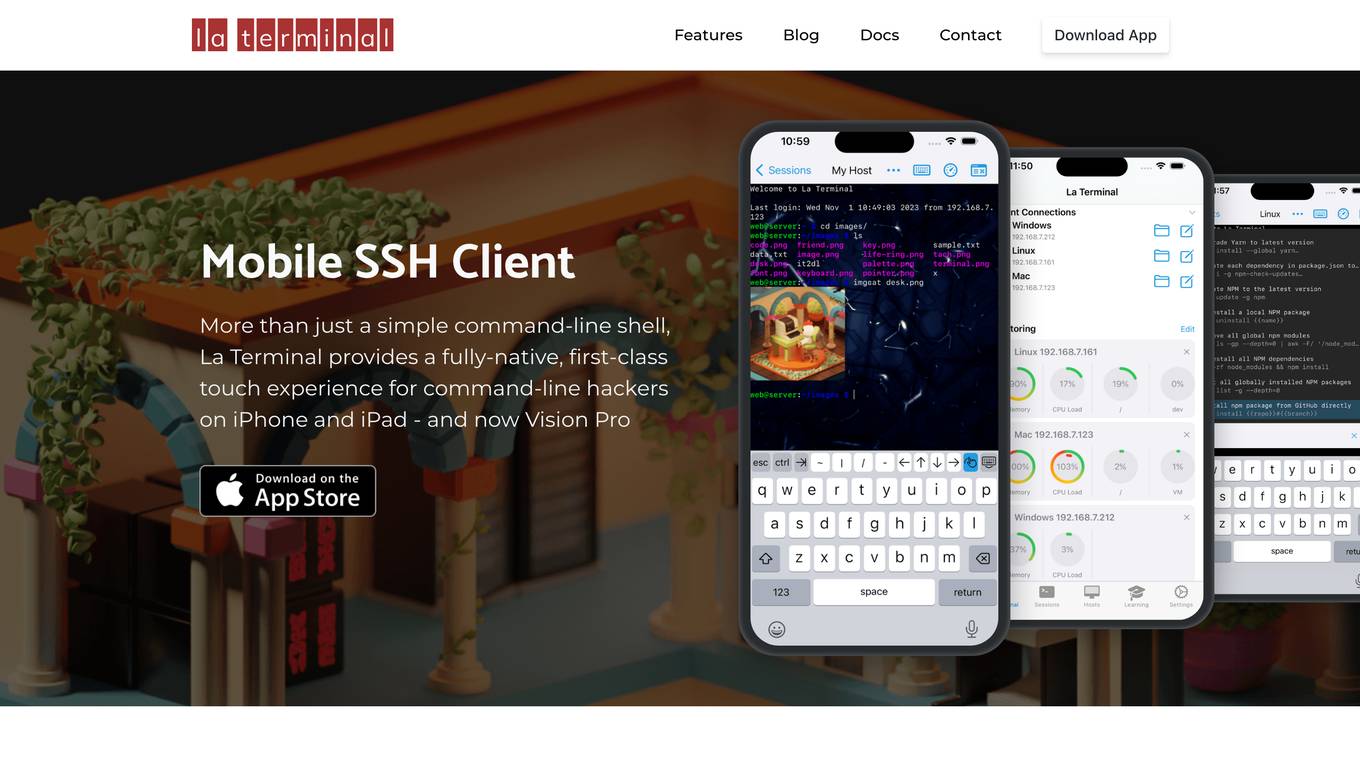

La Terminal

La Terminal is a fully-native, first-class touch experience for command-line hackers on iPhone and iPad. It provides a secure, open-source, and feature-rich environment for managing remote servers, automating tasks, and exploring the command line. With AI assistance from El Copiloto, La Terminal makes it easy to find and execute commands, even for beginners.

SimpleMail

SimpleMail is an AI-powered email writing tool that helps users save time and improve their communication. It offers a range of features, including the ability to compose emails from bullet points, summarize lengthy emails, and generate replies in different tones. SimpleMail is free to use during its open beta period.

SaneBox

SaneBox is an email management service that helps users save time and stay organized by automatically sorting incoming emails into different folders based on their importance. It offers features like BlackHole for removing unwanted senders, Snooze for delaying emails, and Daily Digest for summarizing unimportant emails. SaneBox prioritizes security and privacy, with annual security assessments and encryption of user credentials. Users can access the service on various devices without manual setup. Testimonials highlight the time-saving benefits and effectiveness of SaneBox in managing email overload.

PromptCraft

PromptCraft: Your go-to tool for crafting concise, effective prompts for diverse dialogues with ChatGPT.

Co-LLM-Agents

This repository contains code for building cooperative embodied agents modularly with large language models. The agents are trained to perform tasks in two different environments: ThreeDWorld Multi-Agent Transport (TDW-MAT) and Communicative Watch-And-Help (C-WAH). TDW-MAT is a multi-agent environment where agents must transport objects to a goal position using containers. C-WAH is an extension of the Watch-And-Help challenge, which enables agents to send messages to each other. The code in this repository can be used to train agents to perform tasks in both of these environments.

Time-LLM

Time-LLM is a reprogramming framework that repurposes large language models (LLMs) for time series forecasting. It allows users to treat time series analysis as a 'language task' and effectively leverage pre-trained LLMs for forecasting. The framework involves reprogramming time series data into text representations and providing declarative prompts to guide the LLM reasoning process. Time-LLM supports various backbone models such as Llama-7B, GPT-2, and BERT, offering flexibility in model selection. The tool provides a general framework for repurposing language models for time series forecasting tasks.
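
To make the idea of a declarative prompt concrete, here is a small Python sketch that summarizes a numeric window as text an LLM could receive alongside the reprogrammed series. The function name `build_prompt`, the prompt wording, and the choice of statistics are assumptions made for illustration; they are not taken from the Time-LLM codebase.

```python
from statistics import median


def build_prompt(series: list[float], horizon: int, description: str) -> str:
    """Summarize a numeric window as a text prompt (hypothetical format)."""
    # A crude trend label based only on the first and last values.
    trend = "upward" if series[-1] > series[0] else "downward or flat"
    return (
        f"Dataset: {description}\n"
        f"Task: forecast the next {horizon} steps given the previous {len(series)} steps.\n"
        f"Input statistics: min {min(series)}, max {max(series)}, "
        f"median {median(series)}, overall trend {trend}."
    )


prompt = build_prompt([10.0, 12.0, 11.0, 15.0], horizon=2, description="hourly load")
print(prompt)
```

The prompt carries context and task instructions only; in a setup like the one described, the series itself would still be passed to the model through the reprogramming step.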

llmblueprint

LLM Blueprint is an official implementation of a paper that enables text-to-image generation with complex and detailed prompts. It leverages Large Language Models (LLMs) to extract critical components from text prompts, including bounding box coordinates for foreground objects, detailed textual descriptions for individual objects, and a succinct background context. The tool operates in two phases: Global Scene Generation creates an initial scene using object layouts and background context, and an Iterative Refinement Scheme refines box-level content to align with textual descriptions, ensuring consistency and improving recall compared to baseline diffusion models.

xFinder

xFinder is a model specifically designed to extract key answers from the outputs of large language models (LLMs). It addresses the problem of unreliable evaluation methods by optimizing the key answer extraction module. The model achieves high accuracy and robustness compared to existing frameworks, enhancing the reliability of LLM evaluation. It includes a specialized dataset, the Key Answer Finder (KAF) dataset, for effective training and evaluation. xFinder is suitable for researchers and developers working with LLMs who want to improve answer extraction accuracy.

TokenFormer

TokenFormer is a fully attention-based neural network architecture that leverages tokenized model parameters to enhance architectural flexibility. It aims to maximize the flexibility of neural networks by unifying token-token and token-parameter interactions through the attention mechanism. The architecture allows for incremental model scaling and has shown promising results in language modeling and visual modeling tasks. The codebase is clean, concise, easily readable, state-of-the-art, and relies on minimal dependencies.
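
One way to picture token-parameter interaction is as attention in which the keys and values are learnable parameter tokens rather than other input tokens. The pure-Python sketch below assumes a plain softmax and hypothetical names (`token_parameter_attention`, `key_params`, `value_params`); TokenFormer's actual normalization and implementation may differ.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of scores."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def token_parameter_attention(x, key_params, value_params):
    """Attend from input tokens to parameter tokens.

    x:            n input tokens, each of dimension d_in
    key_params:   m parameter tokens of dimension d_in
    value_params: m parameter tokens of dimension d_out
    """
    out = []
    for token in x:
        # Score the input token against every key parameter token.
        scores = [sum(a * b for a, b in zip(token, k)) for k in key_params]
        weights = softmax(scores)
        # Mix the value parameter tokens with those weights.
        out.append([
            sum(w * v[j] for w, v in zip(weights, value_params))
            for j in range(len(value_params[0]))
        ])
    return out


x = [[1.0, 0.0], [0.0, 1.0]]
keys = [[1.0, 0.0], [0.0, 1.0]]
values = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]]
y = token_parameter_attention(x, keys, values)

# Scaling up: append one more key/value parameter token.
# Input and output dimensions stay the same.
y_bigger = token_parameter_attention(
    x, keys + [[0.5, 0.5]], values + [[7.0, 8.0, 9.0]]
)
print(len(y[0]), len(y_bigger[0]))
```

Because the number of parameter tokens is independent of the input and output dimensions, more of them can be appended without reshaping the layer, which is the property that incremental scaling relies on.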

AutoDAN-Turbo

AutoDAN-Turbo is the official implementation of the ICLR 2025 paper 'AutoDAN-Turbo: A Lifelong Agent for Strategy Self-Exploration to Jailbreak LLMs'. It is a black-box jailbreak method that automatically discovers jailbreak strategies without human intervention, achieving high attack success rates on public benchmarks. The tool can also incorporate existing human-designed strategies, and it outperforms baseline methods.

Co-LLM-Agents

Co-LLM-Agents is a repository containing the code for the paper 'Building Cooperative Embodied Agents Modularly with Large Language Models'. The project focuses on developing cooperative embodied agents using large language models, with a specific emphasis on the ThreeDWorld Multi-Agent Transport environment. The repository provides implementations, installation instructions, and example scripts for running experiments with the CoELA model. It extends the ThreeDWorld Transport Challenge into a multi-agent setting, enabling agents to transport target objects using containers and communicate with each other. Additionally, it includes the Communicative Watch-And-Help challenge, where agents can send messages to each other while performing tasks such as preparing meals, washing dishes, and setting up dinner tables.

Q-Bench

Q-Bench is a benchmark for general-purpose foundation models on low-level vision, focusing on the performance of multi-modality LLMs (MLLMs). It covers three realms of low-level vision: perception, description, and assessment. The benchmark datasets LLVisionQA and LLDescribe were collected for the perception and description tasks, with open submission-based evaluation. Abstract evaluation code is provided for assessment using public datasets. The benchmark can be used through the datasets API for single images and image pairs, which handles downloading automatically.

MME-RealWorld

MME-RealWorld is a benchmark designed to address real-world applications with practical relevance, featuring 13,366 high-resolution images and 29,429 annotations across 43 tasks. It aims to provide substantial recognition challenges and overcome common barriers in existing Multimodal Large Language Model benchmarks, such as small data scale, restricted data quality, and insufficient task difficulty. The dataset offers advantages in data scale, data quality, task difficulty, and real-world utility compared to existing benchmarks. It also includes a Chinese version with additional images and QA pairs focused on Chinese scenarios.

AutoMathText

AutoMathText is an extensive dataset of around 200 GB of mathematical texts autonomously selected by the language model Qwen-72B. It aims to facilitate research in mathematics and artificial intelligence, serve as an educational tool for learning complex mathematical concepts, and provide a foundation for developing AI models specialized in processing mathematical content.

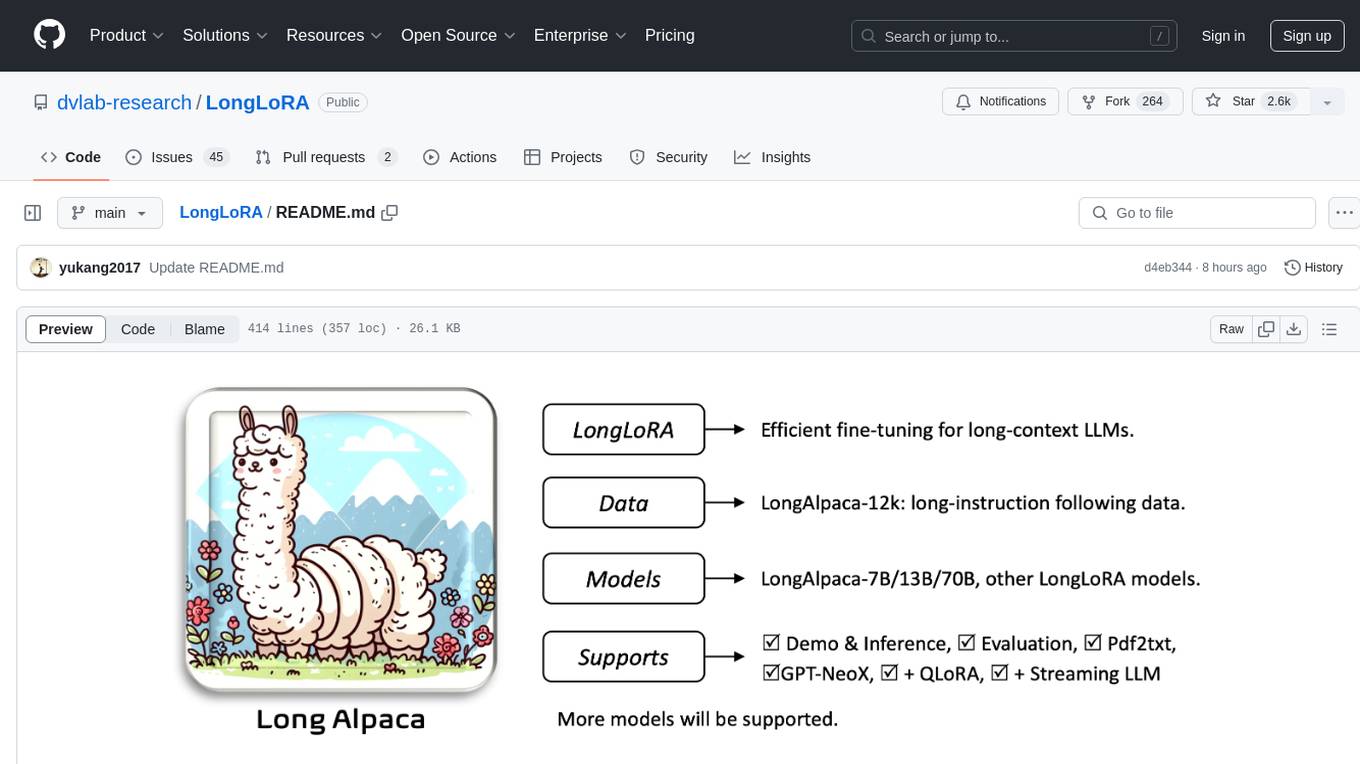

LongLoRA

LongLoRA is a tool for efficient fine-tuning of long-context large language models. It includes the LongAlpaca dataset, which combines collected long QA data with sampled short QA data, models from 7B to 70B with context lengths from 8k to 100k, and support for GPTNeoX models. The tool supports supervised fine-tuning, context extension, and improved LoRA fine-tuning. It provides pre-trained weights, fine-tuning instructions, evaluation methods, local and online demos, streaming inference, and data generation via Pdf2text. The LongLoRA code is licensed under the Apache License 2.0, while the data and weights are under the CC-BY-NC 4.0 License, for research use only.

CogVideo

CogVideo is an open-source repository that provides pretrained text-to-video models for generating videos based on input text. It includes models like CogVideoX-2B and CogVideo, offering powerful video generation capabilities. The repository offers tools for inference, fine-tuning, and model conversion, along with demos showcasing the model's capabilities through CLI, web UI, and online experiences. CogVideo aims to facilitate the creation of high-quality videos from textual descriptions, catering to a wide range of applications.

CogVideo

CogVideo is a Python library for analyzing and processing video data. It provides functionalities for video segmentation, object detection, and tracking. With CogVideo, users can extract meaningful information from video streams, enabling applications in computer vision, surveillance, and video analytics. The library is designed to be user-friendly and efficient, making it suitable for both research and industrial projects.

only_train_once

Only Train Once (OTO) is an automatic, architecture-agnostic DNN training and compression framework that allows users to train a general DNN from scratch or a pretrained checkpoint to achieve high performance and slimmer architecture simultaneously in a one-shot manner without fine-tuning. The framework includes features for automatic structured pruning and erasing operators, as well as hybrid structured sparse optimizers for efficient model compression. OTO provides tools for pruning zero-invariant group partitioning, constructing pruned models, and visualizing pruning and erasing dependency graphs. It supports the HESSO optimizer and offers a sanity check for compliance testing on various DNNs. The repository also includes publications, installation instructions, quick start guides, and a roadmap for future enhancements and collaborations.

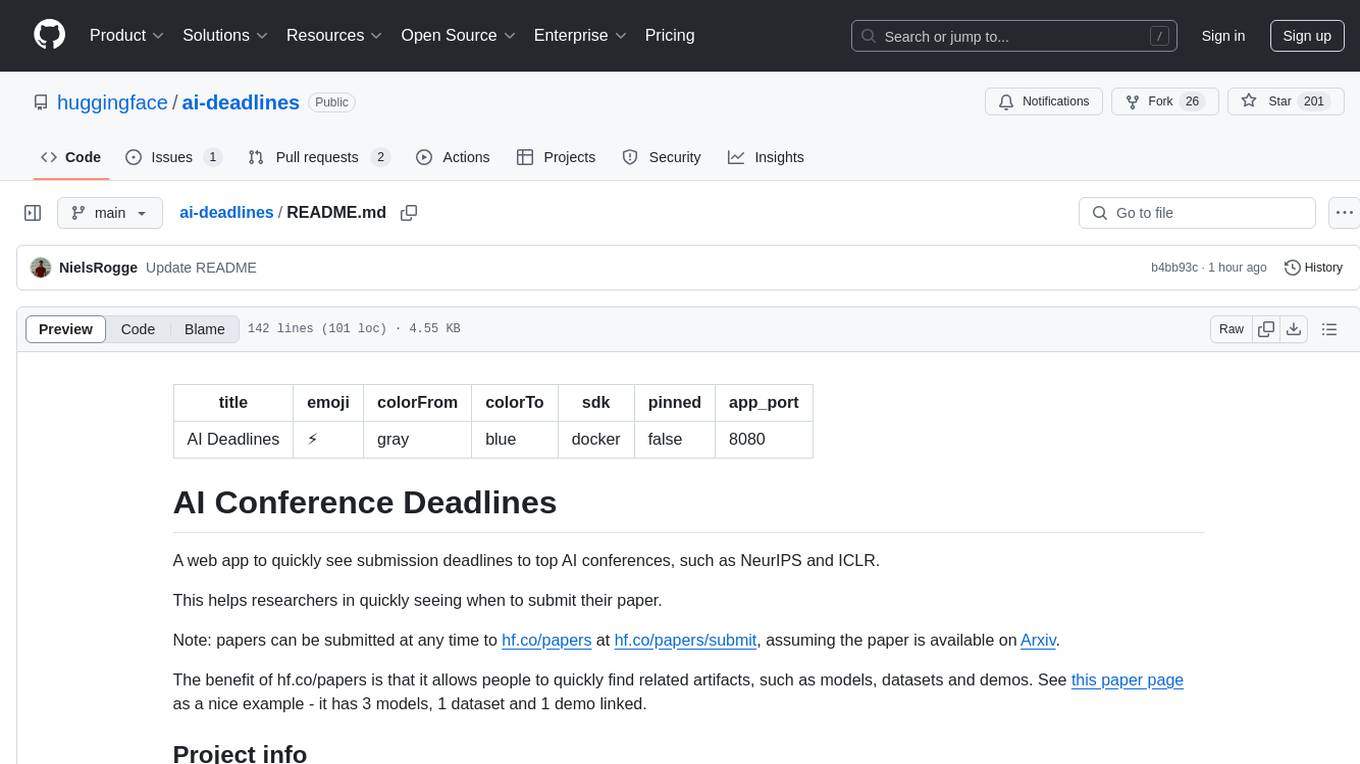

ai-deadlines

AI Deadlines is a web app that displays submission deadlines for top AI conferences such as NeurIPS and ICLR, so researchers know when to submit their papers. The data is fetched from a GitHub repository and updated automatically by a cron job. The project is based on an existing repository and features a new UI. Users can contribute by updating conference deadlines in the provided YAML file. The app can be run locally with Node.js and npm or deployed using Docker. It is built with Vite, TypeScript, React, shadcn-ui, and Tailwind CSS. The project is licensed under MIT.
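
The core logic of a deadline tracker like this can be sketched in a few lines. The example below is in Python purely for illustration (the app itself is built with TypeScript), and the entry fields `title` and `deadline`, the conference names, and the dates are all made-up placeholders; the project's real YAML schema may differ.

```python
from datetime import datetime, timezone

# Hypothetical entries standing in for parsed YAML; not real deadlines.
conferences = [
    {"title": "ExampleConf A", "deadline": "2030-01-11T00:00:00+00:00"},
    {"title": "ExampleConf B", "deadline": "2029-12-01T00:00:00+00:00"},
    {"title": "ExampleConf C", "deadline": "2030-01-04T12:00:00+00:00"},
]


def upcoming(entries, now):
    """Return (title, whole days left) for future deadlines, soonest first."""
    result = []
    for entry in entries:
        deadline = datetime.fromisoformat(entry["deadline"])
        if deadline > now:  # skip deadlines that have already passed
            result.append((entry["title"], (deadline - now).days))
    return sorted(result, key=lambda pair: pair[1])


now = datetime(2030, 1, 1, tzinfo=timezone.utc)
remaining = upcoming(conferences, now)
print(remaining)
```

Storing deadlines with an explicit UTC offset avoids ambiguity when contributors and readers are in different time zones.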

AgentSquare

AgentSquare is the official implementation of the paper 'AgentSquare: Automatic LLM Agent Search in Modular Design Space'. It provides code, prompts, and results for automatic LLM agent search. Users can set up an OpenAI API key, install dependencies, and run tasks such as ALFWorld, WebShop, M3ToolEval, and Sciworld. Users can also contribute new modules to the modular design challenge by standardizing LLM agents with the recommended I/O interfaces. The tool aims to offer a platform for fully exploiting successful agent designs and consolidating the efforts of the LLM agent research community.

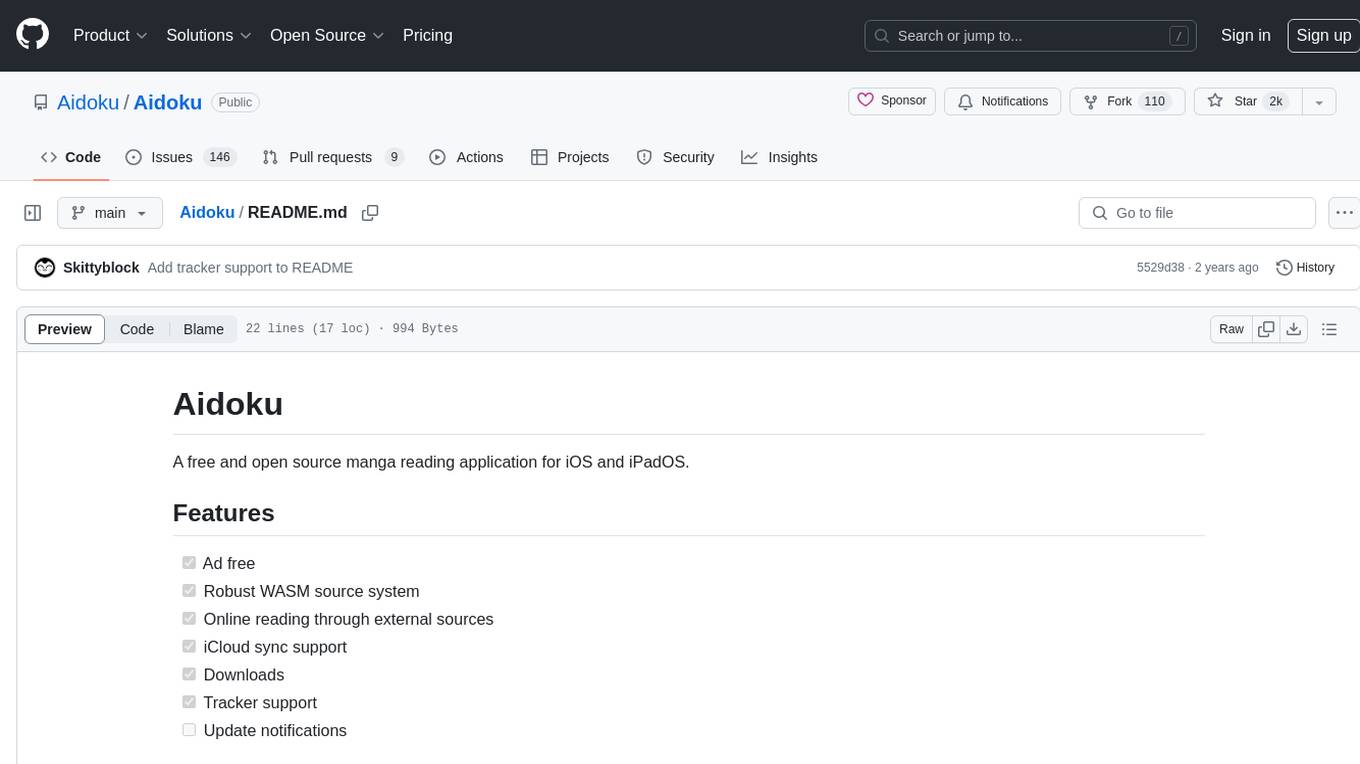

Aidoku

Aidoku is a free and open-source manga reading application for iOS and iPadOS. It offers an ad-free experience, a robust WASM source system, online reading through external sources, iCloud sync, downloads, and tracker support. Users can get the latest IPA from the releases page or join the TestFlight via the Aidoku Discord, which also provides detailed installation instructions. The project welcomes contributions toward planned features and fixes, and translations are crowd-sourced through Weblate.

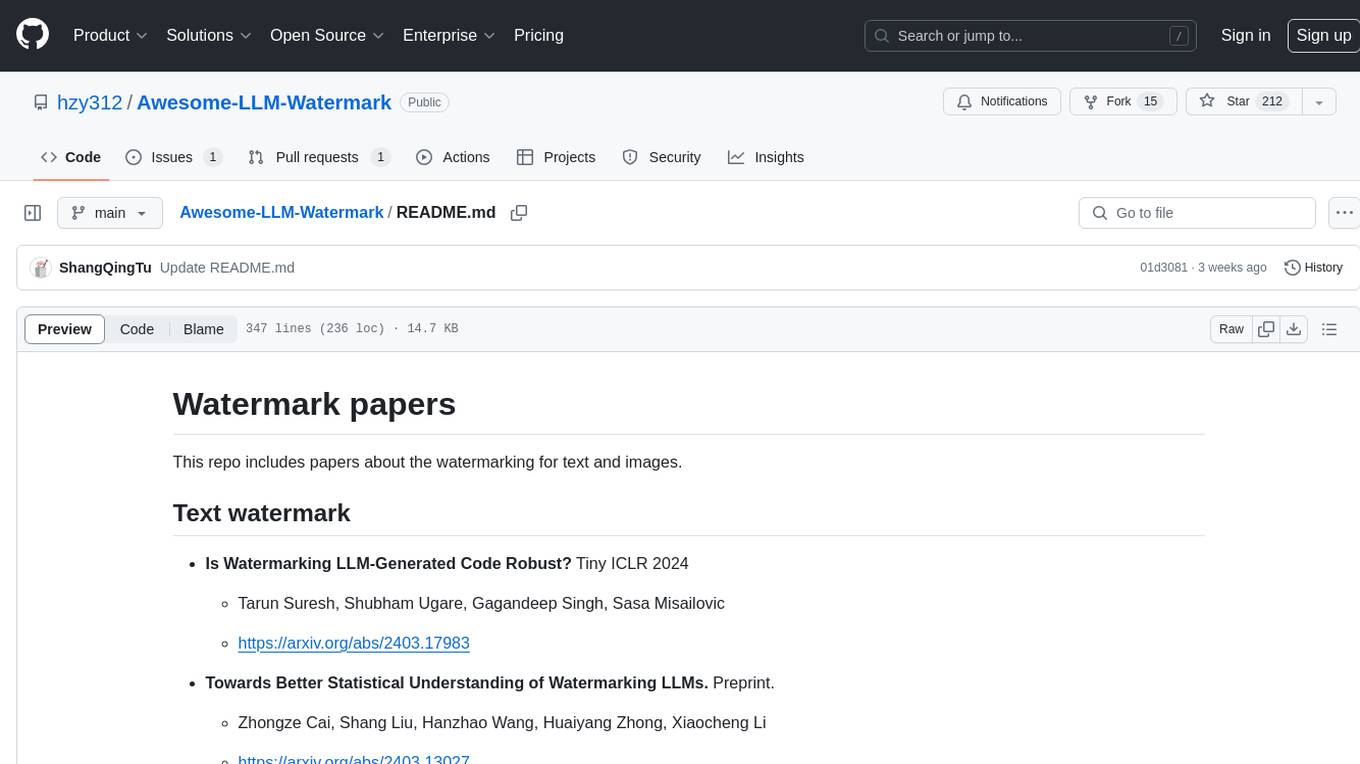

Awesome-LLM-Watermark

This repository contains a collection of research papers related to watermarking techniques for text and images, specifically focusing on large language models (LLMs). The papers cover various aspects of watermarking LLM-generated content, including robustness, statistical understanding, topic-based watermarks, quality-detection trade-offs, dual watermarks, watermark collision, and more. Researchers have explored different methods and frameworks for watermarking LLMs to protect intellectual property, detect machine-generated text, improve generation quality, and evaluate watermarking techniques. The repository serves as a valuable resource for those interested in the field of watermarking for LLMs.

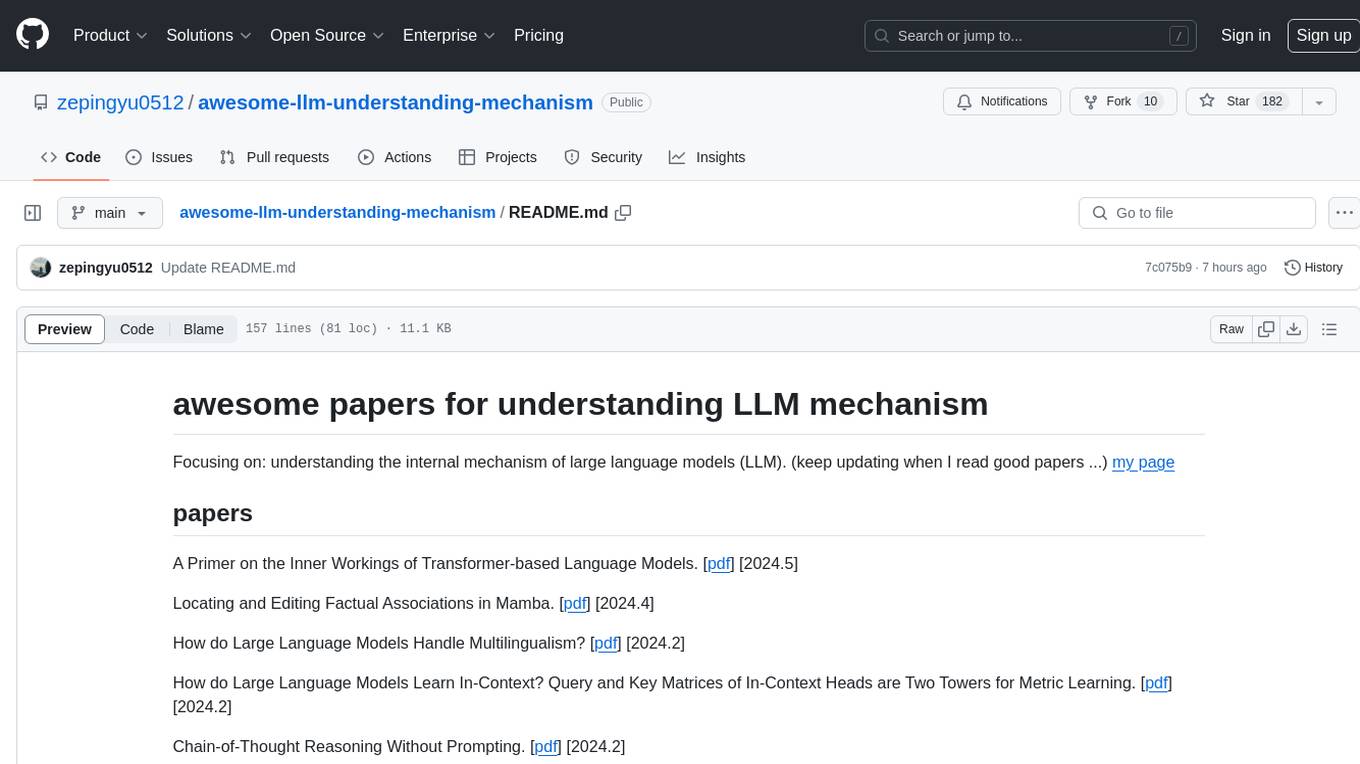

awesome-llm-understanding-mechanism

This repository is a collection of papers focused on understanding the internal mechanisms of large language models (LLMs). It includes research on topics such as how LLMs handle multilingualism, learn in context, and handle factual associations. The repository aims to provide insights into the inner workings of transformer-based language models through a curated list of papers and surveys.