openguardrails

Guard Agent for AI Agents, a.k.a. OpenClaw.

Stars: 237

OpenGuardrails provides runtime security for AI agents by detecting prompt injection, credential leakage, data exfiltration, and behavioral threats in real time. It wraps AI agents with a security layer, intercepts tool calls and messages, scans them against threat detectors and a behavioral rule engine, and blocks or alerts before damage is done. The tool includes a management dashboard for full visibility and an optional local gateway that sanitizes sensitive data before it leaves the machine. It is open source under the Apache 2.0 license.

README:

Runtime Security for AI Agents. Detects prompt injection, credential leakage, data exfiltration, and behavioral threats — in real time, before they execute.

OpenGuardrails wraps your AI agent with a security layer: the agent-side plugin intercepts every tool call and message, scans it against 10 threat detectors and a behavioral rule engine, and blocks or alerts before damage is done. A management dashboard gives you full visibility. An optional local gateway sanitizes sensitive data before it ever leaves your machine.
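The intercept, scan, and decide loop described above can be sketched in a few lines of TypeScript. This is a hypothetical illustration, not the MoltGuard plugin's API. The `ToolCall` and `Verdict` shapes, the three toy regex detectors, and the `scanToolCall` and `guarded` helpers are all assumptions made for this example. The real detectors run in the hosted Core service, not as local regexes.

```typescript
// Hypothetical sketch: these types and detectors are NOT the MoltGuard/OpenGuardrails API.
interface ToolCall {
  tool: string;
  args: Record<string, string>;
}

type Verdict =
  | { action: "allow" }
  | { action: "alert" | "block"; reason: string };

// A detector inspects one tool call and returns a finding description, or null.
interface Detector {
  name: string;
  severity: "alert" | "block";
  check(call: ToolCall): string | null;
}

const anyArg = (call: ToolCall, re: RegExp): boolean =>
  Object.values(call.args).some((v) => re.test(v));

// Toy stand-ins for the real scanners (assumed patterns, far from complete).
const detectors: Detector[] = [
  {
    name: "prompt-injection",
    severity: "block",
    check: (call) =>
      anyArg(call, /ignore (all )?previous instructions/i) ? "instruction-override phrase" : null,
  },
  {
    name: "credential-leakage",
    severity: "block",
    check: (call) => (anyArg(call, /\bAKIA[0-9A-Z]{16}\b/) ? "AWS-style access key ID" : null),
  },
  {
    name: "shell-network",
    severity: "alert",
    check: (call) =>
      call.tool === "shell" && /\b(curl|wget)\b/.test(call.args.command ?? "")
        ? "network command in shell"
        : null,
  },
];

// Run every detector: any "block" finding wins, otherwise the first "alert" is returned.
function scanToolCall(call: ToolCall): Verdict {
  let alert: Verdict | null = null;
  for (const d of detectors) {
    const finding = d.check(call);
    if (finding === null) continue;
    const reason = `${d.name}: ${finding}`;
    if (d.severity === "block") return { action: "block", reason };
    if (alert === null) alert = { action: "alert", reason };
  }
  return alert ?? { action: "allow" };
}

// Scan a call before executing it: report and throw on "block", report and run on "alert".
function guarded(
  call: ToolCall,
  execute: (call: ToolCall) => string,
  report: (v: Verdict) => void = (v) => console.warn("[guard]", v),
): string {
  const verdict = scanToolCall(call);
  if (verdict.action !== "allow") report(verdict);
  if (verdict.action === "block") {
    throw new Error(`Blocked tool call "${call.tool}": ${verdict.reason}`);
  }
  return execute(call);
}
```

Returning the strictest verdict means a single blocking finding stops the call. Alert-level findings let the call run, but they are still reported.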

Open source (Apache 2.0).

Run this in your terminal to install the MoltGuard OpenClaw skill:

npx clawhub@latest install moltguard

Then ask OpenClaw to install and activate it:

Install and activate moltguard

MoltGuard will output a claim link. Open it in your browser, enter your email address and the verification code — you'll receive a confirmation email to complete activation.

That's it. Your agent is now protected and you have 30,000 free detections.

Sign in at openguardrails.com/dashboard to see detected threats, agent behavior graphs, permission policies, and risk events.

10 built-in scanners + a behavioral engine that watches tool call sequences:

Content scanners: Prompt injection · System override · Web attacks · MCP tool poisoning · Malicious code execution · NSFW · PII leakage · Credential leakage · Confidential data · Off-topic drift

Behavioral patterns (cross-call): File read → exfiltration · Credential access → external write · Shell exec after web fetch · Command injection · and more

See architecture.md for the full list.
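A behavioral rule differs from a content scanner because no single call is malicious on its own. The risk lies in the order of the calls. The following minimal TypeScript sketch shows that idea and assumes tool calls have already been classified into coarse event kinds. The `EventKind` names, the three rules (modeled on the patterns listed above), the ordered-subsequence matching, and the 50-event window are illustrative assumptions. They are not the actual rule format of the OpenGuardrails behavioral engine.

```typescript
// Hypothetical event kinds; a real engine would classify raw tool calls into these.
type EventKind =
  | "file_read"
  | "credential_access"
  | "web_fetch"
  | "shell_exec"
  | "external_write";

interface BehaviorRule {
  name: string;
  sequence: EventKind[]; // steps that must occur in this order, not necessarily adjacent
}

// Illustrative rules modeled on the cross-call patterns listed in the README.
const rules: BehaviorRule[] = [
  { name: "file read → exfiltration", sequence: ["file_read", "external_write"] },
  { name: "credential access → external write", sequence: ["credential_access", "external_write"] },
  { name: "shell exec after web fetch", sequence: ["web_fetch", "shell_exec"] },
];

// True if `pattern` appears in `history` as an ordered (possibly non-contiguous) subsequence.
function containsSubsequence(history: readonly EventKind[], pattern: readonly EventKind[]): boolean {
  let i = 0;
  for (const ev of history) {
    if (i < pattern.length && ev === pattern[i]) i++;
  }
  return i === pattern.length;
}

class BehaviorMonitor {
  private readonly history: EventKind[] = [];
  private readonly windowSize: number;

  constructor(windowSize = 50) {
    this.windowSize = windowSize;
  }

  // Record one event and return the rules whose final step is this event
  // and whose full sequence now appears within the recent window.
  observe(ev: EventKind): string[] {
    this.history.push(ev);
    if (this.history.length > this.windowSize) this.history.shift();
    return rules
      .filter((r) => r.sequence[r.sequence.length - 1] === ev)
      .filter((r) => containsSubsequence(this.history, r.sequence))
      .map((r) => r.name);
  }
}
```

Matching an ordered but non-contiguous subsequence lets a rule fire even when unrelated calls happen between the two steps. The window bounds memory use and keeps a read from long ago from tainting every later write.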

The detection engine (Core) is a hosted service — the rest can be self-hosted:

Private dashboard — deploy locally, data stays in SQLite at ~/.openguardrails/:

npm install -g openguardrails

openguardrails dashboard start

AI Security Gateway, which sanitizes PII and credentials locally before they reach any LLM provider:

npm install -g @openguardrails/gateway

openguardrails gateway start

# Point OpenClaw base URL to http://localhost:8900

Apache License 2.0. See LICENSE for details.
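To show what the gateway's local sanitization step involves, the following minimal TypeScript sketch replaces email addresses and two kinds of credentials with numbered placeholders before text is forwarded to a provider. The patterns, the placeholder format, and the `sanitize` function are hypothetical, because the gateway's actual detection rules are not described here. Keeping a local placeholder map, so that values could later be restored, is also an assumption about the design and not a documented feature of `@openguardrails/gateway`.

```typescript
// Hypothetical redaction patterns; a production gateway would need far broader coverage.
const patterns: Array<[label: string, re: RegExp]> = [
  ["EMAIL", /[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}/g],
  ["AWS_KEY", /\bAKIA[0-9A-Z]{16}\b/g],
  ["GITHUB_TOKEN", /\bghp_[A-Za-z0-9]{36}\b/g],
];

interface Sanitized {
  text: string; // what would be forwarded to the LLM provider
  placeholders: Map<string, string>; // placeholder -> original value, kept on this machine
}

// Replace each sensitive match with a numbered placeholder such as <EMAIL_1>.
function sanitize(input: string): Sanitized {
  const placeholders = new Map<string, string>();
  let counter = 0;
  let text = input;
  for (const [label, re] of patterns) {
    text = text.replace(re, (match) => {
      const placeholder = `<${label}_${++counter}>`;
      placeholders.set(placeholder, match);
      return placeholder;
    });
  }
  return { text, placeholders };
}
```

A single counter shared across all patterns keeps each placeholder unique within a request. Regex matching of this kind misses many formats and can produce false positives, so read the sketch as an illustration of where sanitization sits in the request path, not as a usable detector.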

Alternative AI tools for openguardrails

Similar Open Source Tools

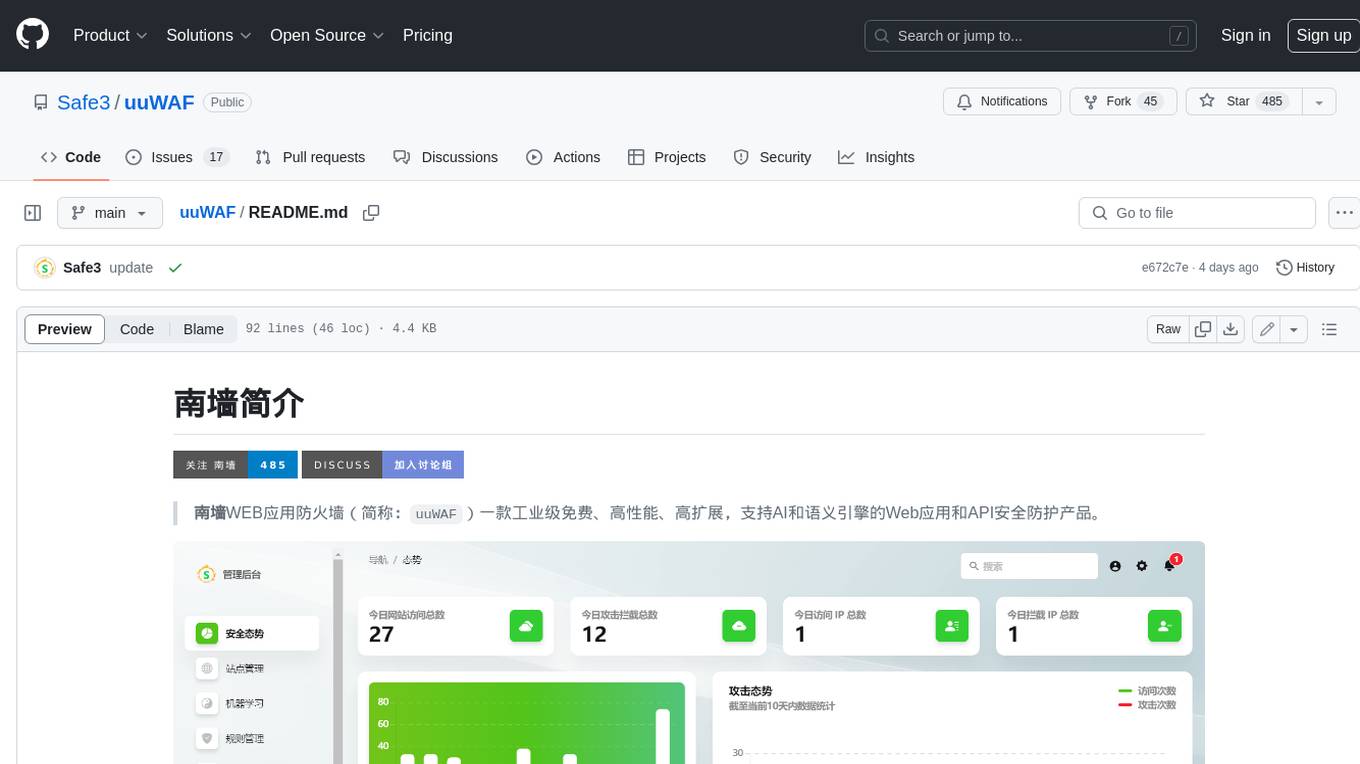

uuWAF

uuWAF is an industrial-grade, free, high-performance, highly extensible web application and API security protection product that supports AI and semantic engines.

uusec-waf

UUSEC WAF is an industrial-grade, free, high-performance, and highly scalable web application and API security protection product that supports AI and semantic engines. It provides intelligent 0-day defense, ultimate CDN acceleration, powerful proactive defense, advanced semantic engine, and advanced rule engine. With features like machine learning technology, cache cleaning, dual layer defense, semantic analysis, and Lua script rule writing, UUSEC WAF offers comprehensive website protection with three-layer defense functions at traffic, system, and runtime layers.

langwatch

LangWatch is a monitoring and analytics platform designed to track, visualize, and analyze interactions with Large Language Models (LLMs). It offers real-time telemetry to optimize LLM cost and latency, a user-friendly interface for deep insights into LLM behavior, user analytics for engagement metrics, detailed debugging capabilities, and guardrails to monitor LLM outputs for issues like PII leaks and toxic language. The platform supports OpenAI and LangChain integrations, simplifying the process of tracing LLM calls and generating API keys for usage. LangWatch also provides documentation for easy integration and self-hosting options for interested users.

bionic-gpt

BionicGPT is an on-premise replacement for ChatGPT, offering the advantages of Generative AI while maintaining strict data confidentiality. BionicGPT can run on your laptop or scale into the data center.

agno

Agno is a lightweight library for building multi-modal agents. It is designed around the core principles of simplicity, uncompromising performance, and agnosticism, allowing users to create blazing fast agents with a minimal memory footprint. Agno supports any model, any provider, and any modality, making it a versatile container for AGI. Its features include lightning-fast agent creation, model-agnostic design, native support for text, image, audio, and video inputs and outputs, memory management, knowledge stores, structured outputs, and real-time monitoring. The library enables users to create autonomous programs that use language models to solve problems, improve responses, and achieve tasks with varying levels of agency and autonomy.

Instrukt

Instrukt is a terminal-based AI integrated environment that allows users to create and instruct modular AI agents, generate document indexes for question-answering, and attach tools to any agent. It provides a platform for users to interact with AI agents in natural language and run them inside secure containers for performing tasks. The tool supports custom AI agents, chat with code and documents, tools customization, prompt console for quick interaction, LangChain ecosystem integration, secure containers for agent execution, and developer console for debugging and introspection. Instrukt aims to make AI accessible to everyone by providing tools that empower users without relying on external APIs and services.

AntSK

AntSK is an AI knowledge base/agent built with .NET 8, Blazor, and Semantic Kernel. It features a semantic kernel for accurate natural language processing, a memory kernel for continuous learning and knowledge storage, a knowledge base for importing and querying knowledge from various document formats, a text-to-image generator integrated with StableDiffusion, GPTs generation for creating personalized GPT models, API interfaces for integrating AntSK into other applications, an open API plugin system for extending functionality, a .NET plugin system for integrating business functions, real-time information retrieval from the internet, model management for adapting and managing different models from different vendors, support for domestic models and databases for operation in a trusted environment, and planned model fine-tuning based on llamafactory.

MyDeviceAI

MyDeviceAI is a personal AI assistant app for iPhone that brings the power of artificial intelligence directly to the device. It focuses on privacy, performance, and personalization by running AI models locally and integrating with privacy-focused web services. The app offers seamless user experience, web search integration, advanced reasoning capabilities, personalization features, chat history access, and broad device support. It requires macOS, Xcode, CocoaPods, Node.js, and a React Native development environment for installation. The technical stack includes React Native framework, AI models like Qwen 3 and BGE Small, SearXNG integration, Redux for state management, AsyncStorage for storage, Lucide for UI components, and tools like ESLint and Prettier for code quality.

Conversation-Knowledge-Mining-Solution-Accelerator

The Conversation Knowledge Mining Solution Accelerator enables customers to leverage intelligence to uncover insights, relationships, and patterns from conversational data. It empowers users to gain valuable knowledge and drive targeted business impact by utilizing Azure AI Foundry, Azure OpenAI, Microsoft Fabric, and Azure Search for topic modeling, key phrase extraction, speech-to-text transcription, and interactive chat experiences.

nebula

Nebula is an advanced, AI-powered penetration testing tool designed for cybersecurity professionals, ethical hackers, and developers. It integrates state-of-the-art AI models into the command-line interface, automating vulnerability assessments and enhancing security workflows with real-time insights and automated note-taking. Nebula revolutionizes penetration testing by providing AI-driven insights, enhanced tool integration, AI-assisted note-taking, and manual note-taking features. It also supports any tool that can be invoked from the CLI, making it a versatile and powerful tool for cybersecurity tasks.

litlytics

LitLytics is an affordable analytics platform that uses LLMs for automated data analysis. It simplifies analytics for teams without data scientists, generates custom pipelines that users can further customize, and keeps data processing costs low. It is scalable and flexible and works with CSV, PDF, and plain-text data.

draive

draive is an open-source Python library designed to simplify and accelerate the development of LLM-based applications. It offers abstract building blocks for connecting functionalities with large language models, flexible integration with various AI solutions, and a user-friendly framework for building scalable data processing pipelines. The library follows a function-oriented design, allowing users to represent complex programs as simple functions. It also provides tools for measuring and debugging functionalities, ensuring type safety and efficient asynchronous operations for modern Python apps.

AgentPilot

Agent Pilot is an open source desktop app for creating, managing, and chatting with AI agents. It features multi-agent, branching chats with various providers through LiteLLM. Users can combine models from different providers, configure interactions, and run code using the built-in Open Interpreter. The tool allows users to create agents, manage chats, work with multi-agent workflows, branching workflows, context blocks, tools, and plugins. It also supports a code interpreter, scheduler, voice integration, and integration with various AI providers. Contributions to the project are welcome, and users can report known issues for improvement.

AgentUp

AgentUp, currently under active development, is a developer-first agent framework for creating AI agents with enterprise-grade infrastructure. It allows developers to define agents through configuration, ensuring consistent behavior across environments. The tool offers secure design, configuration-driven architecture, an extensible ecosystem for customizations, agent-to-agent discovery, asynchronous task architecture, deterministic routing, and MCP support. It supports multiple agent types such as reactive agents and iterative agents, making it suitable for chatbots, interactive applications, research tasks, and more. AgentUp is built by experienced engineers from top tech companies and is designed to make AI agents production-ready, secure, and reliable.

calfkit-sdk

The Calfkit SDK is a Python SDK designed to build event-driven, distributed AI agents. It allows users to compose agents with independent services such as chat, tools, and routing that communicate asynchronously. The SDK enables users to add agent capabilities without coordination, scale each component independently, and stream agent outputs to any downstream system. Calfkit aims to create AI employees that integrate seamlessly into existing systems by providing benefits like loose coupling, horizontal scalability, and event persistence for reliable message delivery.

For similar tasks

last_layer

last_layer is a security library designed to protect LLM applications from prompt injection attacks, jailbreaks, and exploits. It acts as a robust filtering layer to scrutinize prompts before they are processed by LLMs, ensuring that only safe and appropriate content is allowed through. The tool offers ultra-fast scanning with low latency, privacy-focused operation without tracking or network calls, compatibility with serverless platforms, advanced threat detection mechanisms, and regular updates to adapt to evolving security challenges. It significantly reduces the risk of prompt-based attacks and exploits but cannot guarantee complete protection against all possible threats.

Cyberion-Spark-X

Cyberion-Spark-X is a powerful open-source tool designed for cybersecurity professionals and data analysts. It provides advanced capabilities for analyzing and visualizing large datasets to detect security threats and anomalies. The tool integrates with popular data sources and supports various machine learning algorithms for predictive analytics and anomaly detection. Cyberion-Spark-X is user-friendly and highly customizable, making it suitable for both beginners and experienced professionals in the field of cybersecurity and data analysis.

Here-Comes-the-AI-Worm

Large Language Models (LLMs) are now embedded in everyday tools like email assistants, chat apps, and productivity software. This project introduces DonkeyRail, a lightweight guardrail that detects and blocks malicious self-replicating prompts known as RAGworm within GenAI-powered applications. The guardrail is fast, accurate, and practical for real-world GenAI systems, preventing activities like spam, phishing campaigns, and data leaks.

sec-gemini

Sec-Gemini is an experimental cybersecurity-focused AI tool developed by Google. This repository contains SDKs and a CLI for Sec-Gemini, with SDKs available for Python and TypeScript. Additionally, there is a web component provided to facilitate integration on websites.

HydraDragonPlatform

Hydra Dragon Automatic Malware/Executable Analysis Platform offers dynamic and static analysis for Windows, including open-source XDR projects, ClamAV, YARA-X, machine learning AI, behavioral analysis, Unpacker, Deobfuscator, Decompiler, website signatures, Ghidra, Suricata, Sigma, Kernel based protection, and more. It is a Unified Executable Analysis & Detection Framework.

NetSecGame

The NetSecGame (Network Security Game) is a framework for training and evaluation of AI agents in network security tasks. It enables rapid development and testing of AI agents in highly configurable scenarios using the CYST network simulator. The framework includes examples of implemented agents in the submodule NetSecGameAgents.

For similar jobs

awesome-MLSecOps

Awesome MLSecOps is a curated list of open-source tools, resources, and tutorials for MLSecOps (Machine Learning Security Operations). It includes a wide range of security tools and libraries for protecting machine learning models against adversarial attacks, as well as resources for AI security, data anonymization, model security, and more. The repository aims to provide a comprehensive collection of tools and information to help users secure their machine learning systems and infrastructure.

mimir

MIMIR is a Python package designed for measuring memorization in Large Language Models (LLMs). It provides functionalities for conducting experiments related to membership inference attacks on LLMs. The package includes implementations of various attacks such as Likelihood, Reference-based, Zlib Entropy, Neighborhood, Min-K% Prob, Min-K%++, Gradient Norm, and allows users to extend it by adding their own datasets and attacks.

openshield

OpenShield is a firewall designed for AI models to protect against various attacks such as prompt injection, insecure output handling, training data poisoning, model denial of service, supply chain vulnerabilities, sensitive information disclosure, insecure plugin design, excessive agency granting, overreliance, and model theft. It provides rate limiting, content filtering, and keyword filtering for AI models. The tool acts as a transparent proxy between AI models and clients, allowing users to set custom rate limits for OpenAI endpoints and perform tokenizer calculations for OpenAI models. OpenShield also supports Python- and LLM-based rules, with upcoming features including rate limiting per user and model, a prompts manager, content filtering, keyword filtering based on LLM/Vector models, OpenMeter integration, and VectorDB integration. The tool requires an OpenAI API key, Postgres, and Redis for operation.

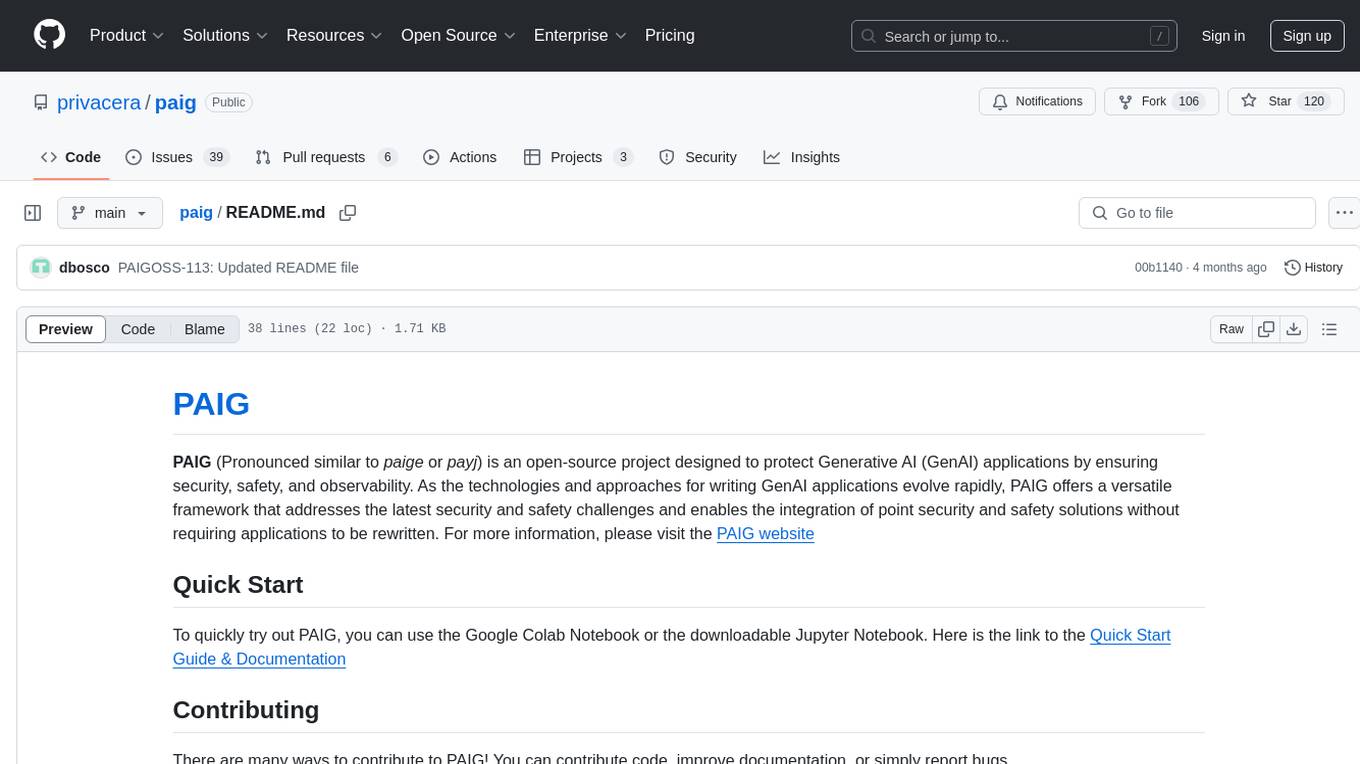

paig

PAIG is an open-source project focused on protecting Generative AI applications by ensuring security, safety, and observability. It offers a versatile framework to address the latest security challenges and integrate point security solutions without rewriting applications. The project aims to provide a secure environment for developing and deploying GenAI applications.

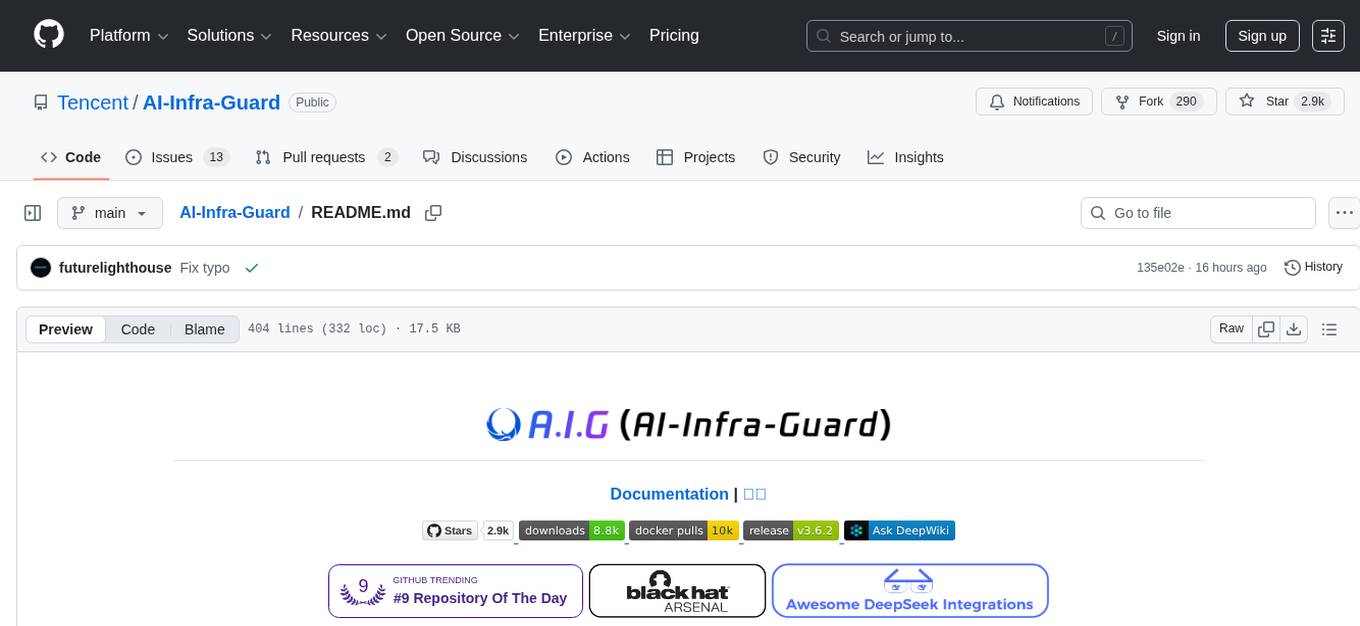

AI-Infra-Guard

A.I.G (AI-Infra-Guard) is an AI red teaming platform by Tencent Zhuque Lab that integrates capabilities such as AI infra vulnerability scan, MCP Server risk scan, and Jailbreak Evaluation. It aims to provide users with a comprehensive, intelligent, and user-friendly solution for AI security risk self-examination. The platform offers features like AI Infra Scan, AI Tool Protocol Scan, and Jailbreak Evaluation, along with a modern web interface, a complete API, multi-language support, and cross-platform deployment, and it is free and open source under the MIT license.

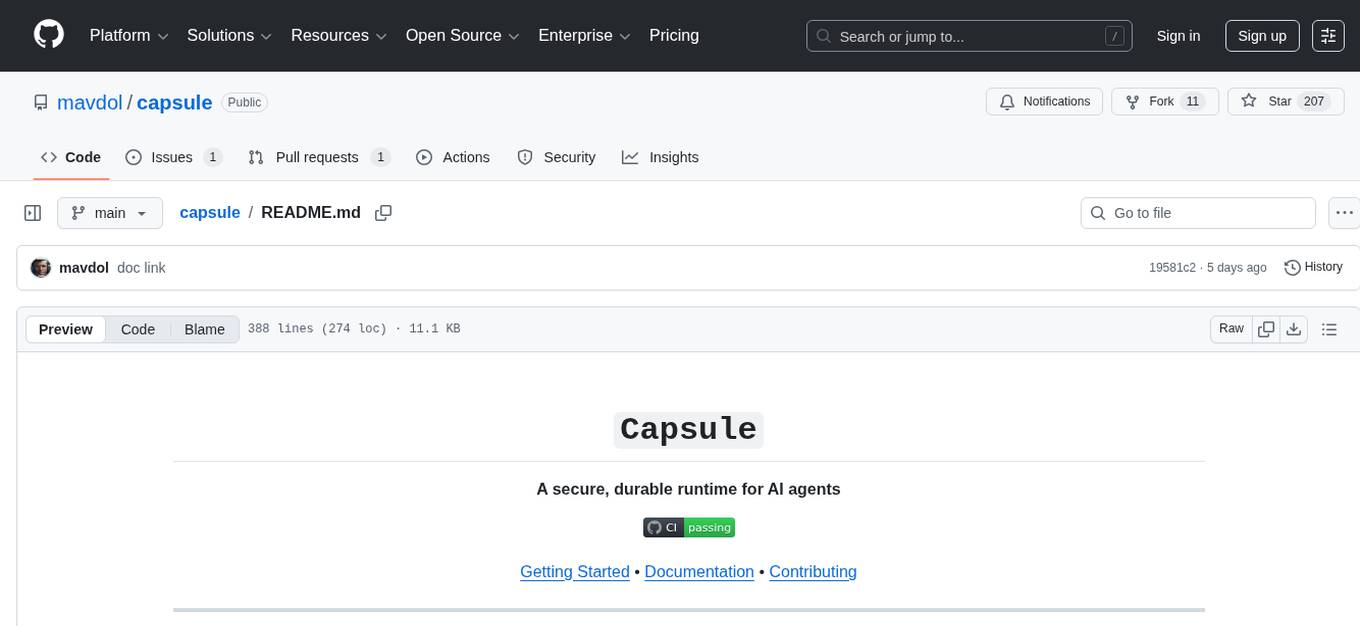

capsule

Capsule is a secure and durable runtime for AI agents, designed to coordinate tasks in isolated environments. It allows for long-running workflows, large-scale processing, autonomous decision-making, and multi-agent systems. Tasks run in WebAssembly sandboxes with isolated execution, resource limits, automatic retries, and lifecycle tracking. It enables safe execution of untrusted code within AI agent systems.

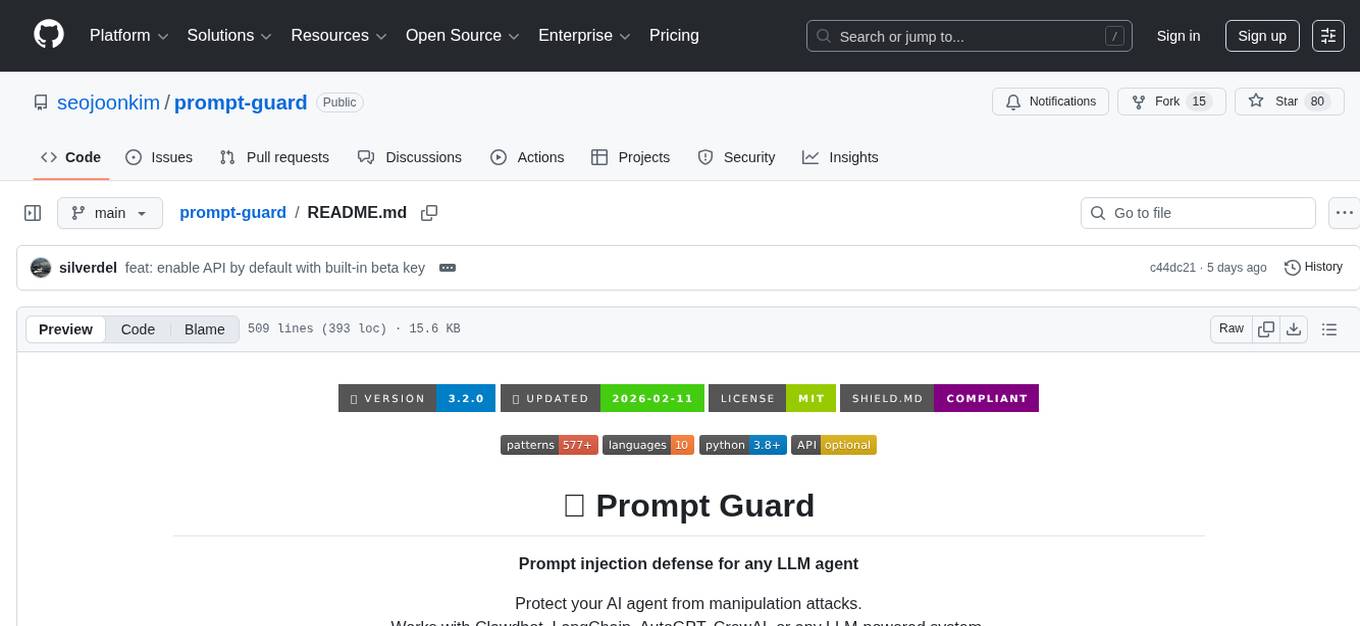

prompt-guard

Prompt Guard is a tool designed to provide prompt injection defense for any LLM agent, protecting AI agents from manipulation attacks. It works with various LLM-powered systems like Clawdbot, LangChain, AutoGPT, CrewAI, etc. The tool offers features such as protection against injection attacks, secret exfiltration, jailbreak attempts, auto-approve & MCP abuse, browser & Unicode injection, skill weaponization defense, encoded & obfuscated payloads detection, output DLP, enterprise DLP, Canary Tokens, JSONL logging, token smuggling defense, severity scoring, and SHIELD.md compliance. It supports multiple languages and provides an API-enhanced mode for advanced detection. The tool can be used via CLI or integrated into Python scripts for analyzing user input and LLM output for potential threats.

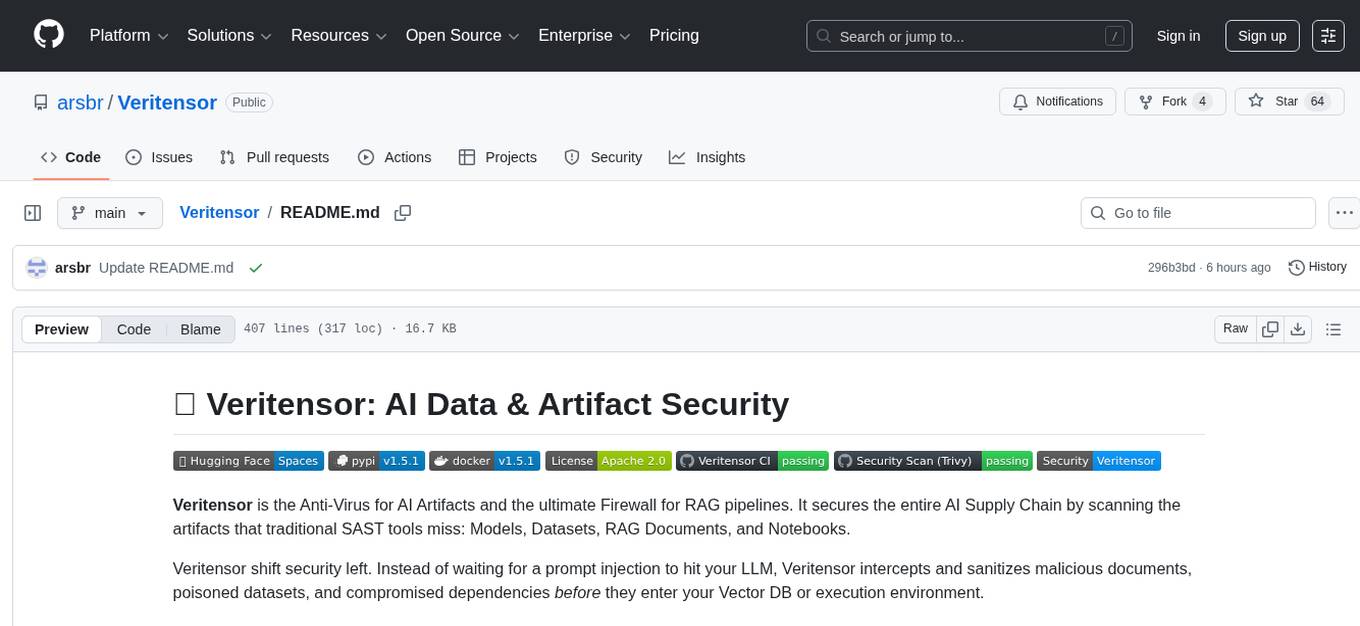

Veritensor

Veritensor is an antivirus tool for AI artifacts and a firewall for RAG pipelines. It secures the AI supply chain by scanning models, datasets, RAG documents, and notebooks for threats that traditional SAST tools may miss. Veritensor shifts security left by intercepting and sanitizing malicious documents, poisoned datasets, and compromised dependencies before they enter the execution environment. It understands the binary and serialized formats used in machine learning, covering models, data and RAG documents, notebooks, and dependencies, and it also addresses governance. The tool offers features like native RAG security integration, high-performance parallel scanning, advanced stealth detection, dataset security, archive inspection, dependency audit, data provenance, identity verification, de-obfuscation engine, magic number validation, smart filtering, and entropy analysis.