moltis

A Rust-native claw you can trust. One binary — sandboxed, secure, auditable. Voice, memory, MCP tools, and multi-channel access built-in.

Stars: 1429

Moltis is a secure, full-featured, Rust-native AI gateway that runs on your own hardware, with sandboxed execution and an auditable codebase. It provides voice, memory, scheduling, Telegram, browser automation, and MCP server support without requiring Node.js or npm. Your keys never leave your machine.

README:

One binary — sandboxed, secure, yours.

Moltis recently hit the front page of Hacker News. Please open an issue for any friction at all. I'm focused on making Moltis excellent.

Secure by design — Your keys never leave your machine. Every command runs in a sandboxed container, never on your host.

Your hardware — Runs on a Mac Mini, a Raspberry Pi, or any server you own. One Rust binary, no Node.js, no npm, no runtime.

Full-featured — Voice, memory, scheduling, Telegram, browser automation, MCP servers — all built-in. No plugin marketplace to get supply-chain attacked through.

Auditable — The agent loop + provider model fits in ~5K lines. The core (excluding the optional web UI) is ~121K lines across modular crates you can audit independently, with 2,300+ tests and zero unsafe code*.

# One-liner install script (macOS / Linux)

curl -fsSL https://www.moltis.org/install.sh | sh

# macOS / Linux via Homebrew

brew install moltis-org/tap/moltis

# Docker (multi-arch: amd64/arm64)

docker pull ghcr.io/moltis-org/moltis:latest

# Or build from source

cargo install moltis --git https://github.com/moltis-org/moltis

| | OpenClaw | PicoClaw | NanoClaw | ZeroClaw | Moltis |
|---|---|---|---|---|---|
| Language | TypeScript | Go | TypeScript | Rust | Rust |
| Agent loop | ~430K LoC | Small | ~500 LoC | ~3.4K LoC | ~5K LoC (runner.rs + model.rs) |
| Full codebase | — | — | — | 1,000+ tests | ~124K LoC (2,300+ tests) |
| Runtime | Node.js + npm | Single binary | Node.js | Single binary (3.4 MB) | Single binary (44 MB) |
| Sandbox | App-level | — | Docker | Docker | Docker + Apple Container |
| Memory safety | GC | GC | GC | Ownership | Ownership, zero unsafe* |
| Auth | Basic | API keys | None | Token + OAuth | Password + Passkey + API keys |
| Voice I/O | Plugin | — | — | — | Built-in (15+ providers) |
| MCP | Yes | — | — | — | Yes (stdio + HTTP/SSE) |
| Hooks | Yes (limited) | — | — | — | 15 event types |
| Skills | Yes (store) | Yes | Yes | Yes | Yes (+ OpenClaw Store) |
| Memory/RAG | Plugin | — | Per-group | SQLite + FTS | SQLite + FTS + vector |

* unsafe is denied workspace-wide. The only exceptions are opt-in FFI wrappers behind the local-embeddings feature flag, not part of the core.
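
The footnote above depends on the difference between Rust's `deny` and `forbid` lint levels: a denied lint can be re-allowed in a narrower scope, while a forbidden one cannot. The sketch below shows that mechanism on a hypothetical module layout. The module and function names are assumptions for illustration, not Moltis crates; only the `local-embeddings` feature name comes from the text.

```rust
// Hypothetical layout for illustration only; these are not Moltis modules.
#[deny(unsafe_code)]
pub mod workspace {
    /// Core code: an `unsafe` block in here is a compile error under `deny`.
    pub mod engine {
        /// Simple rolling checksum, written entirely in safe Rust.
        pub fn checksum(bytes: &[u8]) -> u32 {
            bytes
                .iter()
                .fold(0u32, |acc, b| acc.wrapping_mul(31).wrapping_add(u32::from(*b)))
        }
    }

    /// Opt-in exception: compiled only when the feature is enabled, with the
    /// lint re-allowed for this module alone. `forbid` would reject this
    /// `allow`; `deny` permits it.
    #[cfg(feature = "local-embeddings")]
    #[allow(unsafe_code)]
    pub mod ffi {
        pub fn first(xs: &[f32]) -> Option<f32> {
            if xs.is_empty() {
                return None;
            }
            // SAFETY: the slice was checked to be non-empty above.
            Some(unsafe { *xs.get_unchecked(0) })
        }
    }
}
```

Without the feature enabled, the `ffi` module is not compiled at all, so the default build contains no `unsafe` blocks.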

Core (always compiled):

| Crate | LoC | Role |
|---|---|---|
| moltis (cli) | 2.4K | Entry point, CLI commands |
| moltis-agents | 20.1K | LLM providers, agent loop, streaming |
| moltis-gateway | 29.2K | HTTP/WS server, RPC, auth |
| moltis-chat | 10.2K | Chat engine, agent orchestration |
| moltis-tools | 13.4K | Tool execution, sandbox |
| moltis-config | 5.1K | Configuration, validation |
| moltis-sessions | 2.7K | Session persistence |
| moltis-plugins | 1.4K | Hook dispatch, plugin formats |
| moltis-common | 0.8K | Shared utilities |

Optional (feature-gated or additive):

| Category | Crates | Combined LoC |
|---|---|---|
| Web UI | moltis-web | 4.3K |
| Voice | moltis-voice | 4.7K |
| Memory | moltis-memory, moltis-qmd | 5.8K |
| Channels | moltis-telegram, moltis-channels | 6.4K |
| Browser | moltis-browser | 4.8K |
| Scheduling | moltis-cron | 3.8K |
| Extensibility | moltis-mcp, moltis-skills | 7.4K |
| Auth/OAuth | moltis-oauth, moltis-onboarding | 2.8K |
| Metrics | moltis-metrics | 1.7K |
| Other | moltis-projects, moltis-routing, moltis-protocol, moltis-media, moltis-canvas, moltis-auto-reply | 2.4K |

Use --no-default-features --features lightweight for constrained devices (Raspberry Pi, etc.).

- Zero unsafe code* — denied workspace-wide; only opt-in FFI behind local-embeddings flag
- Sandboxed execution — Docker + Apple Container, per-session isolation
- Secret handling — secrecy::Secret, zeroed on drop, redacted from tool output
- Authentication — password + passkey (WebAuthn), rate-limited, per-IP throttle
- SSRF protection — DNS-resolved, blocks loopback/private/link-local
- Origin validation — rejects cross-origin WebSocket upgrades
- Hook gating — BeforeToolCall hooks can inspect/block any tool invocation
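
To make the SSRF item concrete, here is a minimal sketch of a check that resolves the host first and then rejects loopback, private, and link-local destinations. It uses only the standard library. The function names are hypothetical, and Moltis's actual implementation may differ.

```rust
use std::io;
use std::net::{IpAddr, Ipv4Addr, Ipv6Addr, SocketAddr, ToSocketAddrs};

/// Returns true when outbound requests to this address should be refused.
pub fn is_blocked(ip: IpAddr) -> bool {
    match ip {
        IpAddr::V4(v4) => is_blocked_v4(v4),
        // An IPv4-mapped IPv6 address (::ffff:a.b.c.d) is judged as IPv4.
        IpAddr::V6(v6) => match v6.to_ipv4_mapped() {
            Some(v4) => is_blocked_v4(v4),
            None => is_blocked_v6(v6),
        },
    }
}

fn is_blocked_v4(ip: Ipv4Addr) -> bool {
    ip.is_loopback() || ip.is_private() || ip.is_link_local() || ip.is_unspecified()
}

fn is_blocked_v6(ip: Ipv6Addr) -> bool {
    let first = ip.segments()[0];
    ip.is_loopback()
        || ip.is_unspecified()
        || (first & 0xfe00) == 0xfc00 // unique local, fc00::/7
        || (first & 0xffc0) == 0xfe80 // link-local, fe80::/10
}

/// Resolves `host` and refuses the request if any resolved address is blocked.
pub fn resolve_checked(host: &str, port: u16) -> io::Result<Vec<SocketAddr>> {
    let addrs: Vec<SocketAddr> = (host, port).to_socket_addrs()?.collect();
    if addrs.is_empty() || addrs.iter().any(|a| is_blocked(a.ip())) {
        return Err(io::Error::new(
            io::ErrorKind::PermissionDenied,
            "destination not allowed",
        ));
    }
    Ok(addrs)
}
```

Returning the vetted addresses lets the caller connect to exactly what was checked, instead of resolving the name a second time.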

See Security Architecture for details.
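
Hook gating can be pictured as a chain of inspectors that each tool call must pass before it runs. The sketch below is an assumption-laden illustration: the trait, the `HookDecision` enum, and the `DenySubstring` hook are hypothetical names, not Moltis's real hook API.

```rust
/// Hypothetical types for illustration; the real hook API may differ.
#[derive(Debug, Clone, PartialEq)]
pub enum HookDecision {
    Allow,
    Block(String),
}

/// A pending tool invocation as seen by a hook.
pub struct ToolCall<'a> {
    pub tool: &'a str,
    pub arguments: &'a str,
}

pub trait BeforeToolCall {
    fn inspect(&self, call: &ToolCall<'_>) -> HookDecision;
}

/// Example hook: blocks any call whose arguments contain a given substring.
pub struct DenySubstring {
    pub needle: String,
}

impl BeforeToolCall for DenySubstring {
    fn inspect(&self, call: &ToolCall<'_>) -> HookDecision {
        if call.arguments.contains(self.needle.as_str()) {
            HookDecision::Block(format!("argument contains `{}`", self.needle))
        } else {
            HookDecision::Allow
        }
    }
}

/// Runs hooks in order; the first `Block` wins and later hooks are skipped.
pub fn gate(hooks: &[Box<dyn BeforeToolCall>], call: &ToolCall<'_>) -> HookDecision {
    for hook in hooks {
        if let HookDecision::Block(reason) = hook.inspect(call) {
            return HookDecision::Block(reason);
        }
    }
    HookDecision::Allow
}
```

A caller would execute the tool only when `gate` returns `HookDecision::Allow`, and surface the reason string otherwise.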

- AI Gateway — Multi-provider LLM support (OpenAI Codex, GitHub Copilot, Local), streaming responses, agent loop with sub-agent delegation, parallel tool execution

- Communication — Web UI, Telegram, API access, voice I/O (8 TTS + 7 STT providers), mobile PWA with push notifications

- Memory & Context — Embeddings-powered long-term memory, hybrid vector + full-text search, session persistence with auto-compaction, project context

- Extensibility — MCP servers (stdio + HTTP/SSE), skill system, 15 lifecycle hook events with circuit breaker, destructive command guard

- Operations — Cron scheduling, OpenTelemetry tracing, Prometheus metrics, cloud deploy (Fly.io, DigitalOcean), Tailscale integration
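
The feature list mentions hybrid vector + full-text search but does not say how the two result lists are combined. One common approach is reciprocal rank fusion, sketched here as an assumption and not as Moltis's actual ranking method. The function name and the constant `k` are illustrative.

```rust
use std::collections::HashMap;

/// Fuses several ranked result lists into one ordering.
/// Each list contributes 1 / (k + rank) per document, with rank starting at 1.
pub fn reciprocal_rank_fusion(rankings: &[Vec<&str>], k: f64) -> Vec<(String, f64)> {
    let mut scores: HashMap<&str, f64> = HashMap::new();
    for ranking in rankings {
        for (idx, id) in ranking.iter().enumerate() {
            *scores.entry(*id).or_insert(0.0) += 1.0 / (k + idx as f64 + 1.0);
        }
    }
    let mut fused: Vec<(String, f64)> = scores
        .into_iter()
        .map(|(id, score)| (id.to_string(), score))
        .collect();
    // Highest score first; ties are broken by id so the output is deterministic.
    fused.sort_by(|a, b| b.1.total_cmp(&a.1).then_with(|| a.0.cmp(&b.0)));
    fused
}
```

With vector hits `["a", "b", "c"]`, full-text hits `["b", "d"]`, and `k = 60.0`, document `b` ranks first because it appears in both lists.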

Moltis is a local-first AI gateway — a single Rust binary that sits between you and multiple LLM providers. Everything runs on your machine; no cloud relay required.

    ┌─────────────┐  ┌─────────────┐  ┌─────────────┐
    │   Web UI    │  │  Telegram   │  │   Discord   │
    └──────┬──────┘  └──────┬──────┘  └──────┬──────┘
           │                │                │
           └────────┬───────┴────────┬───────┘
                    │   WebSocket    │
                    ▼                ▼
            ┌─────────────────────────────────┐
            │          Gateway Server         │
            │    (Axum · HTTP · WS · Auth)    │
            ├─────────────────────────────────┤
            │          Chat Service           │
            │  ┌───────────┐ ┌─────────────┐  │
            │  │   Agent   │ │    Tool     │  │
            │  │   Runner  │◄┤   Registry  │  │
            │  └─────┬─────┘ └─────────────┘  │
            │        │                        │
            │  ┌─────▼─────────────────────┐  │
            │  │     Provider Registry     │  │
            │  │  Multiple providers       │  │
            │  │  (Codex · Copilot · Local)│  │
            │  └───────────────────────────┘  │
            ├─────────────────────────────────┤
            │  Sessions  │ Memory  │  Hooks   │
            │  (JSONL)   │ (SQLite)│ (events) │
            └─────────────────────────────────┘
                             │
                     ┌───────▼───────┐
                     │    Sandbox    │
                     │ Docker/Apple  │
                     │   Container   │
                     └───────────────┘

See Quickstart for gateway startup, message flow, sessions, and memory details.
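
The Provider Registry box in the diagram can be read as a name-to-backend lookup placed in front of interchangeable LLM providers. The sketch below shows that pattern with trait objects. The trait, the registry type, and the `EchoProvider` stand-in are hypothetical, and real providers would make network or local-model calls instead of echoing.

```rust
use std::collections::HashMap;

/// Hypothetical provider interface; the real one likely streams and is async.
pub trait Provider {
    fn complete(&self, prompt: &str) -> Result<String, String>;
}

/// Stand-in backend that just echoes the prompt.
pub struct EchoProvider;

impl Provider for EchoProvider {
    fn complete(&self, prompt: &str) -> Result<String, String> {
        Ok(format!("echo: {prompt}"))
    }
}

/// Maps a provider name to its implementation.
#[derive(Default)]
pub struct ProviderRegistry {
    providers: HashMap<String, Box<dyn Provider>>,
}

impl ProviderRegistry {
    pub fn register(&mut self, name: &str, provider: Box<dyn Provider>) {
        self.providers.insert(name.to_string(), provider);
    }

    /// Dispatches a prompt to the named provider, or reports an unknown name.
    pub fn complete(&self, name: &str, prompt: &str) -> Result<String, String> {
        self.providers
            .get(name)
            .ok_or_else(|| format!("unknown provider: {name}"))?
            .complete(prompt)
    }
}
```

Under this design the agent runner depends only on the trait, so adding a backend means registering one more implementation.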

git clone https://github.com/moltis-org/moltis.git

cd moltis

cargo build --release

cargo run --release

Open https://moltis.localhost:3000. On first run, a setup code is printed to the terminal. Enter it in the web UI to set your password or register a passkey.

Optional flags: --config-dir /path/to/config --data-dir /path/to/data

# Docker / OrbStack

docker run -d \
  --name moltis \
  -p 13131:13131 \
  -p 13132:13132 \
  -v moltis-config:/home/moltis/.config/moltis \
  -v moltis-data:/home/moltis/.moltis \
  -v /var/run/docker.sock:/var/run/docker.sock \
  ghcr.io/moltis-org/moltis:latest

Open https://localhost:13131 and complete the setup. See Docker docs for Podman, OrbStack, TLS trust, and persistence details.

| Provider | Deploy |
|---|---|
| DigitalOcean | |

Fly.io (CLI):

fly launch --image ghcr.io/moltis-org/moltis:latest

fly secrets set MOLTIS_PASSWORD="your-password"

All cloud configs use --no-tls because the provider handles TLS termination.

See Cloud Deploy docs for details.

MIT

Alternative AI tools for moltis

Similar Open Source Tools

hyper-mcp

hyper-mcp is a fast and secure MCP server that enables adding AI capabilities to applications through WebAssembly plugins. It supports writing plugins in various languages, distributing them via standard OCI registries, and running them in resource-constrained environments. The tool offers sandboxing with WASM for limiting access, cross-platform compatibility, and deployment flexibility. Security features include sandboxed plugins, memory-safe execution, secure plugin distribution, and fine-grained access control. Users can configure the tool for global or project-specific use, start the server with different transport options, and utilize available plugins for tasks like time calculations, QR code generation, hash generation, IP retrieval, and webpage fetching.

goclaw

goclaw is an AI agent framework written in Go. It provides a complete tool system for FileSystem, Shell, Web, and Browser operations, with Docker sandbox support and permission control. The framework includes a skill system compatible with the OpenClaw and AgentSkills specifications, supporting automatic discovery and environment gating. It also offers persistent session storage; multi-channel support for Telegram, WhatsApp, Feishu, QQ, and WeWork; flexible configuration in YAML or JSON; multiple LLM providers such as OpenAI, Anthropic, and OpenRouter; a WebSocket gateway; cron scheduling; and browser automation based on the Chrome DevTools Protocol.

nullclaw

NullClaw is the smallest fully autonomous AI assistant infrastructure, a static Zig binary that fits on any $5 board, boots in milliseconds, and requires nothing but libc. It features an impossibly small 678 KB static binary with no runtime or framework overhead, near-zero memory usage, instant startup, true portability across different CPU architectures, and a feature-complete stack with 22+ providers, 11 channels, and 18+ tools. The tool is lean by default, secure by design, fully swappable with core systems as vtable interfaces, and offers no lock-in with OpenAI-compatible provider support and pluggable custom endpoints.

runhouse

Runhouse is a tool that allows you to build, run, and deploy production-quality AI apps and workflows on your own compute. It provides simple, powerful APIs for the full lifecycle of AI development, from research to evaluation to production to updates to scaling to management, and across any infra. By automatically packaging your apps into scalable, secure, and observable services, Runhouse can also turn otherwise redundant AI activities into common reusable components across your team or company, which improves cost, velocity, and reproducibility.

qwen-code

Qwen Code is an open-source AI agent optimized for Qwen3-Coder, designed to help users understand large codebases, automate tedious work, and expedite the shipping process. It offers an agentic workflow with rich built-in tools, a terminal-first approach with optional IDE integration, and supports both OpenAI-compatible API and Qwen OAuth authentication methods. Users can interact with Qwen Code in interactive mode, headless mode, IDE integration, and through a TypeScript SDK. The tool can be configured via settings.json, environment variables, and CLI flags, and offers benchmark results for performance evaluation. Qwen Code is part of an ecosystem that includes AionUi and Gemini CLI Desktop for graphical interfaces, and troubleshooting guides are available for issue resolution.

ai-manus

AI Manus is a general-purpose AI agent system that runs tools and operations in a sandbox environment. It deploys with minimal dependencies; supports tools such as Terminal, Browser, File, Web Search, and messaging; allocates a separate sandbox for each task; manages session history; supports stopping and interrupting conversations as well as file upload and download; and is multilingual. The system also provides user login and authentication. The project relies primarily on Docker for development and deployment, has model capability requirements, and recommends Deepseek and GPT models.

google_workspace_mcp

The Google Workspace MCP Server is a production-ready server that integrates major Google Workspace services with AI assistants. It supports single-user and multi-user authentication via OAuth 2.1, making it a powerful backend for custom applications. Built with FastMCP for optimal performance, it features advanced authentication handling, service caching, and streamlined development patterns. The server provides full natural language control over Google Calendar, Drive, Gmail, Docs, Sheets, Slides, Forms, Tasks, and Chat through all MCP clients, AI assistants, and developer tools. It supports free Google accounts and Google Workspace plans with expanded app options like Chat & Spaces. The server also offers private cloud instance options.

capsule

Capsule is a secure and durable runtime for AI agents, designed to coordinate tasks in isolated environments. It allows for long-running workflows, large-scale processing, autonomous decision-making, and multi-agent systems. Tasks run in WebAssembly sandboxes with isolated execution, resource limits, automatic retries, and lifecycle tracking. It enables safe execution of untrusted code within AI agent systems.

koog

Koog is a Kotlin-based framework for building and running AI agents entirely in idiomatic Kotlin. It allows users to create agents that interact with tools, handle complex workflows, and communicate with users. Key features include pure Kotlin implementation, MCP integration, embedding capabilities, custom tool creation, ready-to-use components, intelligent history compression, powerful streaming API, persistent agent memory, comprehensive tracing, flexible graph workflows, modular feature system, scalable architecture, and multiplatform support.

spacebot

Spacebot is an AI agent designed for teams, communities, and multi-user environments. It splits the monolith into specialized processes that delegate tasks, allowing it to handle concurrent conversations, execute tasks, and respond to multiple users simultaneously. Built for Discord, Slack, and Telegram, Spacebot can run coding sessions, manage files, automate web browsing, and search the web. Its memory system is structured and graph-connected, enabling productive knowledge synthesis. With capabilities for task execution, messaging, memory management, scheduling, model routing, and extensible skills, Spacebot offers a comprehensive solution for collaborative work environments.

lightspeed-service

OpenShift LightSpeed (OLS) is an AI powered assistant that runs on OpenShift and provides answers to product questions using backend LLM services. It supports various LLM providers such as OpenAI, Azure OpenAI, OpenShift AI, RHEL AI, and Watsonx. Users can configure the service, manage API keys securely, and deploy it locally or on OpenShift. The project structure includes REST API handlers, configuration loader, LLM providers registry, and more. Additional tools include generating OpenAPI schema, requirements.txt file, and uploading artifacts to an S3 bucket. The project is open source under the Apache 2.0 License.

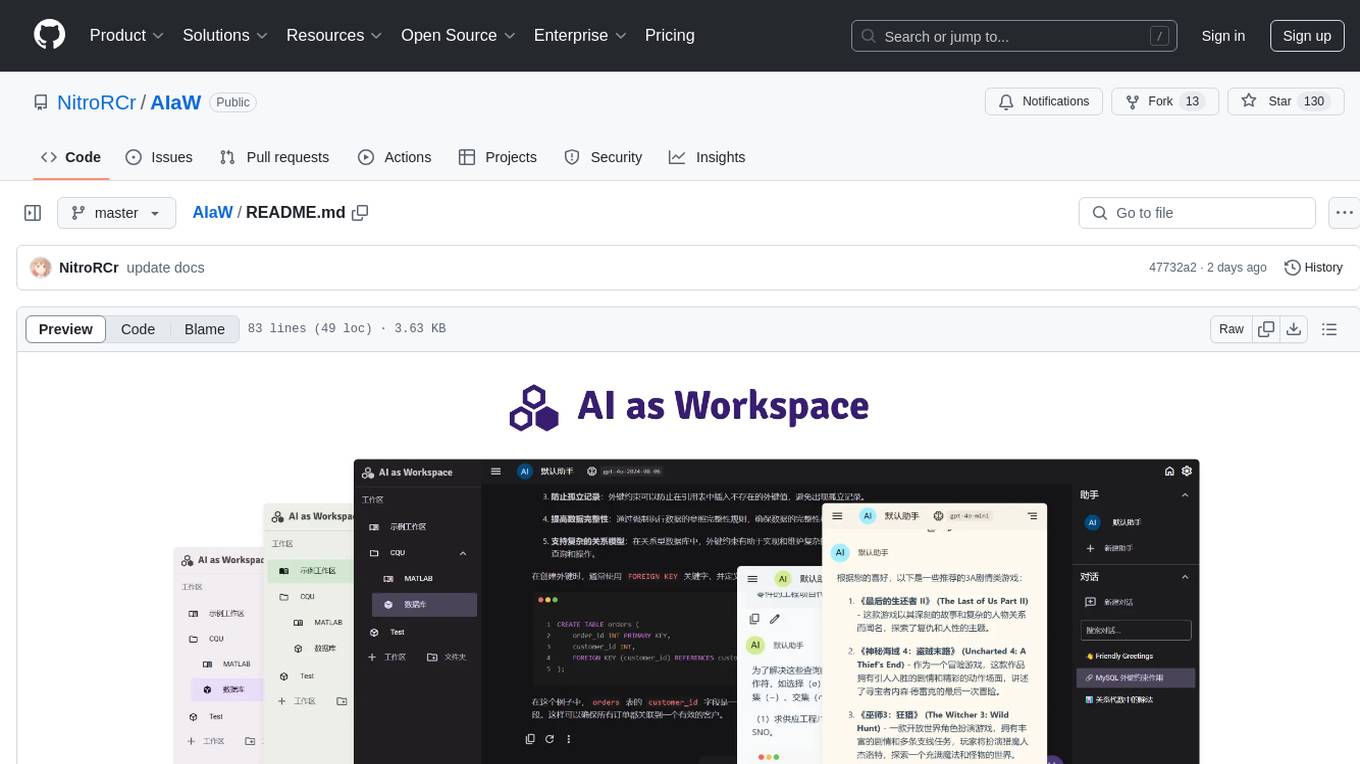

AIaW

AIaW is a full-featured, lightweight, and extensible next-generation LLM client. It supports basic functions such as streaming responses, image uploading, and LaTeX formulas. The tool is cross-platform with a responsive interface design. It supports multiple service providers such as OpenAI, Anthropic, and Google. Users can modify questions, regenerate answers as forks, and view conversations as a tree. It also offers file parsing, video parsing, a plugin system, an assistant market, local storage with real-time cloud sync, and customizable interface themes. Users can create multiple workspaces, use dynamic prompt variables, extend plugins, and benefit from design details such as real-time content preview, optimized code pasting, and support for various file types.

superset

Superset is a turbocharged terminal that allows users to run multiple CLI coding agents simultaneously, isolate tasks in separate worktrees, monitor agent status, and review changes quickly. It supports any CLI-based coding agent and offers features like parallel execution, worktree isolation, agent monitoring, a built-in diff viewer, workspace presets, universal compatibility, quick context switching, and IDE integration. Users can customize keyboard shortcuts, configure workspace setup and teardown, and contribute to the project. The tech stack includes Electron, React, TailwindCSS, Bun, Turborepo, Vite, Biome, Drizzle ORM, Neon, and tRPC. The community provides support through Discord, Twitter, GitHub Issues, and GitHub Discussions.

holmesgpt

HolmesGPT is an open-source DevOps assistant powered by OpenAI or any tool-calling LLM of your choice. It helps in troubleshooting Kubernetes, incident response, ticket management, automated investigation, and runbook automation in plain English. The tool connects to existing observability data, is compliance-friendly, provides transparent results, supports extensible data sources, runbook automation, and integrates with existing workflows. Users can install HolmesGPT using Brew, prebuilt Docker container, Python Poetry, or Docker. The tool requires an API key for functioning and supports OpenAI, Azure AI, and self-hosted LLMs.

axonhub

AxonHub is an all-in-one AI development platform that serves as an AI gateway allowing users to switch between model providers without changing any code. It provides features like vendor lock-in prevention, integration simplification, observability enhancement, and cost control. Users can access any model using any SDK with zero code changes. The platform offers full request tracing, enterprise RBAC, smart load balancing, and real-time cost tracking. AxonHub supports multiple databases, provides a unified API gateway, and offers flexible model management and API key creation for authentication. It also integrates with various AI coding tools and SDKs for seamless usage.

For similar tasks

Botright

Botright is a tool designed for browser automation that focuses on stealth and captcha solving. It uses a real Chromium-based browser for enhanced stealth and offers features like browser fingerprinting and AI-powered captcha solving. The tool is suitable for developers looking to automate browser tasks while maintaining anonymity and bypassing captchas. Botright is available in async mode and can be easily integrated with existing Playwright code. It provides solutions for various captchas such as hCaptcha, reCaptcha, and GeeTest, with high success rates. Additionally, Botright offers browser stealth techniques and supports different browser functionalities for seamless automation.

CoolCline

CoolCline is a proactive programming assistant that combines the best features of Cline, Roo Code, and Bao Cline. It seamlessly collaborates with your command line interface and editor, providing the most powerful AI development experience. It optimizes queries, allows quick switching of LLM Providers, and offers auto-approve options for actions. Users can configure LLM Providers, select different chat modes, perform file and editor operations, integrate with the command line, automate browser tasks, and extend capabilities through the Model Context Protocol (MCP). Context mentions help provide explicit context, and installation is easy through the editor's extension panel or by dragging and dropping the `.vsix` file. Local setup and development instructions are available for contributors.

cursor-tools

cursor-tools is a CLI tool designed to enhance AI agents with advanced skills, such as web search, repository context, documentation generation, GitHub integration, Xcode tools, and browser automation. It provides features like Perplexity for web search, Gemini 2.0 for codebase context, and Stagehand for browser operations. The tool requires API keys for Perplexity AI and Google Gemini, and supports global installation for system-wide access. It offers various commands for different tasks and integrates with Cursor Composer for AI agent usage.

LLM-Navigation

LLM-Navigation is a repository dedicated to documenting learning records related to large models, including basic knowledge, prompt engineering, building effective agents, model expansion capabilities, security measures against prompt injection, and applications in various fields such as AI agent control, browser automation, financial analysis, 3D modeling, and tool navigation using MCP servers. The repository aims to organize and collect information for personal learning and self-improvement through AI exploration.

browser4

Browser4 is a lightning-fast, coroutine-safe browser designed for AI integration with large language models. It offers ultra-fast automation, deep web understanding, and powerful data extraction APIs. Users can automate the browser, extract data at scale, and perform tasks like summarizing products, extracting product details, and finding specific links. The tool is developer-friendly, supports AI-powered automation, and provides advanced features like X-SQL for precise data extraction. It also offers RPA capabilities, browser control, and complex data extraction with X-SQL. Browser4 is suitable for web scraping, data extraction, automation, and AI integration tasks.

sandbox

AIO Sandbox is an all-in-one agent sandbox environment that combines Browser, Shell, File, MCP operations, and VSCode Server in a single Docker container. It provides a unified, secure execution environment for AI agents and developers, with features like unified file system, multiple interfaces, secure execution, zero configuration, and agent-ready MCP-compatible APIs. The tool allows users to run shell commands, perform file operations, automate browser tasks, and integrate with various development tools and services.

motorhead

Motorhead is a memory and information retrieval server for LLMs. It provides three simple APIs to assist with memory handling in chat applications using LLMs. The first API, GET /sessions/:id/memory, returns messages up to a maximum window size. The second API, POST /sessions/:id/memory, allows you to send an array of messages to Motorhead for storage. The third API, DELETE /sessions/:id/memory, deletes the session's message list. Motorhead also features incremental summarization, where it processes half of the maximum window size of messages and summarizes them when the maximum is reached. Additionally, it supports searching by text query using vector search. Motorhead is configurable through environment variables, including the maximum window size, whether to enable long-term memory, the model used for incremental summarization, the server port, your OpenAI API key, and the Redis URL.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers
- Track LLM usage on a per user and per organization basis
- Block or redact requests containing PIIs
- Improve LLM reliability with failovers, retries and caching
- Distribute API keys with rate limits and cost limits for internal development/production use cases
- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.