CoolCline

CoolCline is a proactive programming assistant that combines the best features of Cline, Roo Code, and Bao Cline (thanks to all contributors of the `Clines` projects!). It seamlessly collaborates with your command line interface and editor, providing the most powerful AI development experience.

Stars: 132

CoolCline optimizes queries, allows quick switching of LLM Providers, and offers auto-approve options for actions. Users can configure LLM Providers, select different chat modes, perform file and editor operations, integrate with the command line, automate browser tasks, and extend capabilities through the Model Context Protocol (MCP). Context mentions help provide explicit context, and installation is easy through the editor's extension panel or by dragging and dropping the `.vsix` file. Local setup and development instructions are available for contributors.

README: English | 简体中文
CHANGELOG: English | 简体中文
CONTRIBUTING: English | 简体中文

CoolCline is a proactive programming assistant that combines the best features of Cline and Roo Code, offering the following modes:

- `Agent` Mode: An autonomous AI programming agent with comprehensive capabilities in code understanding, generation, and project management (automatic code reading/editing, command execution, context understanding, task analysis/decomposition, and tool usage). Note: this mode is not affected by the checkboxes in the auto-approval area.
- `Code` Mode: Helps you write, refactor, and fix code and run commands (write code, execute commands)
- `Architect` Mode: Suitable for high-level technical design and system architecture discussions (this mode cannot write code or execute commands)
- `Ask` Mode: Suitable for codebase-related questions and concept exploration (this mode cannot write code or execute commands)
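The restrictions listed above can be summarized as a small capability table. The following Python sketch is only an illustration of that table; the names `ModeCapabilities`, `MODES`, `AUTO_APPROVAL_EXEMPT`, and `can` are hypothetical and are not taken from CoolCline's source.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModeCapabilities:
    """What a chat mode may do (hypothetical model, not CoolCline's code)."""
    write_code: bool
    run_commands: bool


# Values follow the mode descriptions in this README.
MODES = {
    "Agent": ModeCapabilities(write_code=True, run_commands=True),
    "Code": ModeCapabilities(write_code=True, run_commands=True),
    "Architect": ModeCapabilities(write_code=False, run_commands=False),
    "Ask": ModeCapabilities(write_code=False, run_commands=False),
}

# The README states that only Agent mode ignores the auto-approval checkboxes.
AUTO_APPROVAL_EXEMPT = {"Agent"}


def can(mode: str, action: str) -> bool:
    """Return whether `mode` permits `action` ('write_code' or 'run_commands')."""
    return getattr(MODES[mode], action)


if __name__ == "__main__":
    for name in MODES:
        print(name, can(name, "write_code"), can(name, "run_commands"))
```

A table like this makes it easy to see at a glance that `Architect` and `Ask` are read-only modes.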

- Search for `CoolCline` in the VSCode extension marketplace and install it
- If you're installing `CoolCline` for the first time or clicked the `Reset` button at the bottom of the `Settings` ⚙️ page, you'll see the `Welcome` page, where you can set the `Language` (default is English; Chinese, Russian, and other major languages are supported)
- If you've already configured an LLM Provider, you will not see the `Welcome` page; to change the language, open the `Settings` ⚙️ page from the extension's top-right corner

You need to configure at least one LLM Provider before using CoolCline (Required)

- If you're installing `CoolCline` for the first time or clicked the `Reset` button at the bottom of the `Settings` ⚙️ page, you'll see the `Welcome` page, where you can configure an `LLM Provider`
- Based on your chosen LLM Provider, fill in the API Key, Model, and other parameters (some LLM Providers have quick links below the API Key input field to apply for an API Key)
- If you've already configured an LLM Provider, you will not see the `Welcome` page, but you can open the `Settings` ⚙️ page from the extension's top-right corner to adjust it or other options
- The same configurations are synchronized and shared across different pages

I'll mark three levels of using CoolCline: `Basic`, `Advanced`, and `Expert`. These should be interpreted as suggested focus areas rather than strict or rigid standards.

Different role modes adapt to your workflow needs:

- Select different role modes at the bottom of the chat input box
- Autonomous Agent (`Agent` mode): A proactive AI programming agent with the following capabilities:
  - Context Analysis Capabilities:
    - Uses codebase search for broad understanding
    - Automatically uses file reading for detailed inspection
    - Uses definition name lists to understand code structure
    - Uses file lists to explore project organization
    - Uses codebase-wide search to quickly locate relevant code
  - Task Management Capabilities:
    - Automatically breaks down complex tasks into steps
    - Uses new task tools to manage major subtasks
    - Tracks progress and dependencies
    - Uses task completion tools to verify task status
  - Code Operation Capabilities:
    - Uses search and replace for systematic code changes
    - Automatically uses file editing for precise modifications
    - Uses diff application for complex changes
    - Uses content insertion tools for code block management
    - Validates changes and checks for errors
  - Git Snapshot Feature:
    - Uses `save_checkpoint` to save code state snapshots, automatically recording important modification points
    - Uses `restore_checkpoint` to roll back to previous snapshots when needed
    - Uses `get_checkpoint_diff` to view specific changes between snapshots
    - The snapshot feature is independent for each task and does not affect your main Git repository
    - All snapshot operations are performed on hidden branches, keeping the main branch clean
    - You can start by sending one or more of the following messages:
      - "Create a git snapshot before starting this task"
      - "Save current changes as a git snapshot with description 'completed basic functionality'"
      - "Show me the changes between the last two git snapshots"
      - "This change is problematic, roll back to the previous git snapshot"
      - "Compare the differences between the initial git snapshot and current state"
  - Research and Integration Capabilities:
    - Automatically uses browser operations to research solutions and best practices (requires model support for Computer Use)
    - Automatically uses commands (requires manual configuration of allowed commands in the `Settings` ⚙️ page)
    - Automatically uses MCP tools to access external resources and data (requires manual configuration of MCP servers in the `MCP Servers` page)
  - Communication and Validation Capabilities:
    - Provides clear explanations for each operation
    - Uses follow-up questions for clarification
    - Records important changes
    - Uses appropriate tests to validate results

  Note: `Agent` mode is not affected by the checkboxes in the auto-approval area
- Code Assistant (`Code` mode): For writing, refactoring, fixing code, and running commands
- Software Architect (`Architect` mode): For high-level technical design and system architecture (cannot write code or execute commands)
- Technical Assistant (`Ask` mode): For codebase queries and concept discussions (cannot write code or execute commands)
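To make the semantics of the Git snapshot feature described above concrete, here is a toy in-memory model in Python. It is a sketch under assumptions, not CoolCline's implementation: the real feature stores snapshots on hidden Git branches, whereas the hypothetical `CheckpointStore` class below keeps file contents in a list and uses `difflib` for the diff. Only the three method names are taken from this README.

```python
import difflib
from typing import Dict, List, Tuple

Files = Dict[str, str]  # path -> file content


class CheckpointStore:
    """Toy per-task snapshot store (stand-in for the hidden-branch mechanism)."""

    def __init__(self) -> None:
        self._snapshots: List[Tuple[str, Files]] = []

    def save_checkpoint(self, files: Files, description: str = "") -> int:
        """Store a copy of the current files and return the snapshot index."""
        self._snapshots.append((description, dict(files)))
        return len(self._snapshots) - 1

    def restore_checkpoint(self, index: int) -> Files:
        """Return a copy of the files as they were at snapshot `index`."""
        return dict(self._snapshots[index][1])

    def get_checkpoint_diff(self, old: int, new: int) -> str:
        """Return a unified diff of every file that differs between two snapshots."""
        before, after = self._snapshots[old][1], self._snapshots[new][1]
        lines: List[str] = []
        for path in sorted(set(before) | set(after)):
            lines.extend(difflib.unified_diff(
                before.get(path, "").splitlines(),
                after.get(path, "").splitlines(),
                fromfile=f"a/{path}", tofile=f"b/{path}", lineterm=""))
        return "\n".join(lines)


if __name__ == "__main__":
    store = CheckpointStore()
    first = store.save_checkpoint({"app.py": "print('v1')"}, "before task")
    second = store.save_checkpoint({"app.py": "print('v2')"}, "completed basic functionality")
    print(store.get_checkpoint_diff(first, second))
    print(store.restore_checkpoint(first))
```

Because each store instance is separate, snapshots taken for one task never touch another task's history, which mirrors the per-task independence the README describes.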

- Access the `Prompts` page from CoolCline's top-right corner to create custom role modes
- Custom chat modes appear below the `Ask` mode
- Custom roles are saved locally and persist between CoolCline sessions

The switch button is located at the bottom center of the input box.

Dropdown list options are maintained on the `Settings` page.

- Open the `Settings` ⚙️ page; in the top area you will see the settings location, which has a `default` option. Setting this up gives you the dropdown list you want.
- Here, you can create and manage multiple LLM Provider options.
- You can even create separate options for different models of the same LLM Provider, each option saving the complete configuration information of the current LLM Provider.
- After creation, you can switch configurations in real-time at the bottom of the chat input box.
- Configuration information includes: LLM Provider, API Key, Model, and other configuration items related to the LLM Provider.
- The steps to create an LLM Provider option are as follows (step 4 can be interchanged with steps 2 and 3):
  1. Click the + button; the system will automatically copy an option based on the current configuration information, named xx (copy);
  2. Click the ✏️ icon to modify the option name;
  3. Click the ☑️ to save the option name;
  4. Adjust core parameters such as Model as needed (the edit box will automatically save when it loses focus).
- Naming suggestions for option names: It is recommended to use the structure "Provider-ModelVersion-Feature", for example: openrouter-deepseek-v3-free; openrouter-deepseek-r1-free; deepseek-v3-official; deepseek-r1-official.
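The copy, rename, and adjust steps above can be sketched as operations on a small dictionary of options. This Python example is illustrative only: `ProviderOption`, `copy_option`, `rename_option`, and `suggested_name` are hypothetical names, the API key is a placeholder, and CoolCline's real storage format is not described in this README.

```python
from dataclasses import dataclass, replace
from typing import Dict


@dataclass(frozen=True)
class ProviderOption:
    """One saved LLM Provider configuration (hypothetical shape)."""
    name: str
    provider: str
    api_key: str
    model: str


def copy_option(options: Dict[str, ProviderOption], current: str) -> ProviderOption:
    """Mimic the + button: duplicate the current option as '<name> (copy)'."""
    source = options[current]
    clone = replace(source, name=f"{source.name} (copy)")
    options[clone.name] = clone
    return clone


def rename_option(options: Dict[str, ProviderOption], old: str, new: str) -> ProviderOption:
    """Mimic the edit and save icons: store the option under a new name."""
    renamed = replace(options.pop(old), name=new)
    options[new] = renamed
    return renamed


def suggested_name(provider: str, model_version: str, feature: str) -> str:
    """Build a name following the 'Provider-ModelVersion-Feature' suggestion."""
    return "-".join(part.lower() for part in (provider, model_version, feature))


if __name__ == "__main__":
    options = {"default": ProviderOption("default", "openrouter", "sk-placeholder", "deepseek-v3")}
    clone = copy_option(options, "default")
    new_name = suggested_name("OpenRouter", "deepseek-r1", "free")
    renamed = rename_option(options, clone.name, new_name)
    options[new_name] = replace(renamed, model="deepseek-r1")  # step 4: adjust the model
    print(sorted(options))
```

Keeping each option as a complete, immutable record matches the README's point that every option saves the full configuration of its LLM Provider.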

After entering a question in the input box, you can click the ✨ button at the bottom, which will enhance your question content. You can set the LLM Provider used for Prompt Enhancement in the Auxiliary Function Prompt Configuration section on the Prompts page.

Associate the most relevant context to save your token budget

Type @ in the input box when you need to explicitly provide context:

- `@Problems` – Provide workspace errors/warnings for CoolCline to fix
- `@Paste URL to fetch contents` – Fetch documentation from a URL and convert it to Markdown; no need to type `@` manually, just paste the link
- `@Add Folder` – Provide folders to CoolCline; after typing `@`, you can enter the folder name directly for fuzzy search and quick selection
- `@Add File` – Provide files to CoolCline; after typing `@`, you can enter the file name directly for fuzzy search and quick selection
- `@Git Commits` – Provide Git commits or diff lists for CoolCline to analyze code history
- `Add Terminal Content to Context` – No `@` needed; select content in the terminal, right-click, and click `CoolCline:Add Terminal Content to Context`
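The fuzzy search mentioned for `@Add Folder` and `@Add File` is not specified in this README. As a rough idea of how such a picker can behave, the Python sketch below uses simple subsequence matching; this technique, and the helper names `is_subsequence` and `fuzzy_filter`, are assumptions for illustration rather than CoolCline's actual matcher.

```python
from typing import Iterable, List


def is_subsequence(query: str, candidate: str) -> bool:
    """True if the characters of `query` appear in `candidate` in order (case-insensitive)."""
    remaining = iter(candidate.lower())
    # `ch in remaining` advances the iterator, so order is enforced.
    return all(ch in remaining for ch in query.lower())


def fuzzy_filter(query: str, paths: Iterable[str]) -> List[str]:
    """Keep paths matching `query`, shortest first (a simple ranking heuristic)."""
    matches = [p for p in paths if is_subsequence(query, p)]
    return sorted(matches, key=len)


if __name__ == "__main__":
    workspace = ["src/user_service.ts", "src/utils.ts", "README.md"]
    print(fuzzy_filter("usrsvc", workspace))
```

With this kind of matching, typing a few distinctive letters of a file name is enough to narrow the list.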

To use CoolCline assistance in a controlled manner (preventing uncontrolled actions), the application provides three approval options:

- Manual Approval: Review and approve each step to maintain full control; click allow or cancel in application prompts for saves, command execution, etc.
- Auto Approval: Grant CoolCline the ability to run tasks without interruption (recommended in Agent mode for full autonomy)
  - Auto Approval Settings: Check or uncheck the options you want to control above the chat input box or in the settings page
  - To allow automatic command approval: go to the `Settings` page and, in the `Command Line` area, add the commands you want to auto-approve, such as `npm install`, `npm run`, `npm test`, etc.
- Hybrid: Auto-approve specific operations (like file writes) but require confirmation for higher-risk tasks (it is strongly recommended not to configure `git add`, `git commit`, etc.; these should be done manually).

Regardless of your preference, you always have final control over CoolCline's operations.
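One way to picture the command allowlist described above is as a prefix check against the configured entries. The Python sketch below assumes token-wise prefix matching and conservatively refuses anything containing shell chaining operators; both choices, and the function name `is_auto_approved`, are assumptions for illustration and may differ from how CoolCline evaluates commands.

```python
import shlex
from typing import Iterable

# Assumption: chained or substituted commands always need manual approval.
SHELL_OPERATORS = ("&&", "||", ";", "|", "`", "$(")


def is_auto_approved(command: str, allowed_prefixes: Iterable[str]) -> bool:
    """Return True when `command` starts with an allowed prefix, compared token by token."""
    if any(op in command for op in SHELL_OPERATORS):
        return False
    try:
        tokens = shlex.split(command)
    except ValueError:  # e.g. unbalanced quotes
        return False
    for prefix in allowed_prefixes:
        prefix_tokens = shlex.split(prefix)
        if prefix_tokens and tokens[:len(prefix_tokens)] == prefix_tokens:
            return True
    return False


if __name__ == "__main__":
    allowed = ["npm install", "npm run", "npm test"]
    for cmd in ["npm run build", "git commit -m 'wip'", "npm test && git push"]:
        print(cmd, "->", "auto" if is_auto_approved(cmd, allowed) else "ask")
```

Leaving `git add` and `git commit` out of the allowlist, as recommended, means those commands always fall through to manual approval.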

- Use an LLM Provider and Model with good capabilities
- Start with clear high-level task descriptions
- Use `@` to provide clearer, more accurate context from the codebase, files, URLs, Git commits, etc.
- Utilize the Git snapshot feature to manage important changes. You can start by sending one or more of these messages:
  - "Create a git snapshot before starting this task"
  - "Save current changes as a git snapshot with description 'completed basic functionality'"
  - "Show me the changes between the last two git snapshots"
  - "This change is problematic, roll back to the previous git snapshot"
  - "Compare the differences between the initial git snapshot and current state"
- Configure allowed commands in the `Settings` page and MCP servers in the `MCP Servers` page; Agent will automatically use these commands and MCP servers
- It's recommended not to set `git add` or `git commit` commands in the command settings interface; you should control these manually
- Consider switching to specialized modes (Code/Architect/Ask) for specific subtasks when needed
  - Code Mode: Best for direct coding tasks and implementation
  - Architect Mode: Suitable for planning and design discussions
  - Ask Mode: Perfect for learning and exploring concepts

CoolCline can also open browser sessions to:

- Launch local or remote web applications

- Click, type, scroll, and take screenshots

- Collect console logs to debug runtime or UI/UX issues

Perfect for end-to-end testing or visually verifying changes without constant copy-pasting.

- Check `Approve Browser Operations` in the `Auto Approval` area (requires LLM Provider support for Computer Use)
- In the `Settings` page, you can set other options in the `Browser Settings` area

- MCP Official Documentation: https://modelcontextprotocol.io/introduction

Extend CoolCline through the Model Context Protocol (MCP) with commands like:

- "Add a tool to manage AWS EC2 resources."

- "Add a tool to query company Jira."

- "Add a tool to pull latest PagerDuty events."

CoolCline can autonomously build and configure new tools (with your approval) to immediately expand its capabilities.
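For readers unfamiliar with MCP, a tool exposed by an MCP server is invoked with a JSON-RPC 2.0 request whose method is `tools/call`. The Python sketch below only builds such a message; the tool name `query_jira` and its `jql` argument are hypothetical examples inspired by the prompts above, and the helper `build_tool_call` is not part of CoolCline.

```python
import json
from itertools import count
from typing import Any, Dict

_request_ids = count(1)  # JSON-RPC requests need a unique id per call


def build_tool_call(tool_name: str, arguments: Dict[str, Any]) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


if __name__ == "__main__":
    # Hypothetical tool and arguments, for illustration only.
    print(build_tool_call("query_jira", {"jql": "project = OPS AND status = Open"}))
```

In practice the client sends this message to the configured MCP server over its transport and reads the tool result from the matching JSON-RPC response.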

- In the `Settings` page, you can enable sound effects and set the volume, so you'll get audio notifications when tasks complete (allowing you to multitask while CoolCline works)
- In the `Settings` page, you can configure other options

Two installation methods, choose one:

- Search for `CoolCline` in the editor's extension panel to install directly
- Or get the `.vsix` file from Marketplace / Open-VSX and drag and drop it into the editor

Tips:

- For better experience, move the extension to the right side of the screen: Right-click on the CoolCline extension icon -> Move to -> Secondary Sidebar

- If you close the `Secondary Sidebar` and don't know how to reopen it, click the `Toggle Secondary Sidebar` button in the top-right corner of VSCode, or use the keyboard shortcut ctrl + shift + L.

Refer to the instructions in the CONTRIBUTING file: English | 简体中文

We welcome community contributions! Here's how to participate: CONTRIBUTING: English | 简体中文

CoolCline draws inspiration from the excellent features of the `Clines` open source community (thanks to all `Clines` project contributors!).

Please note that CoolCline makes no representations or warranties of any kind concerning any code, models, or other tools provided, any related third-party tools, or any output results. You assume all risk of using any such tools or output; such tools are provided on an "as is" and "as available" basis. Such risks may include but are not limited to intellectual property infringement, network vulnerabilities or attacks, bias, inaccuracies, errors, defects, viruses, downtime, property loss or damage, and/or personal injury. You are solely responsible for your use of any such tools or output, including but not limited to their legality, appropriateness, and results.

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for CoolCline

Similar Open Source Tools

CoolCline

CoolCline is a proactive programming assistant that combines the best features of Cline, Roo Code, and Bao Cline. It seamlessly collaborates with your command line interface and editor, providing the most powerful AI development experience. It optimizes queries, allows quick switching of LLM Providers, and offers auto-approve options for actions. Users can configure LLM Providers, select different chat modes, perform file and editor operations, integrate with the command line, automate browser tasks, and extend capabilities through the Model Context Protocol (MCP). Context mentions help provide explicit context, and installation is easy through the editor's extension panel or by dragging and dropping the `.vsix` file. Local setup and development instructions are available for contributors.

cognita

Cognita is an open-source framework to organize your RAG codebase along with a frontend to play around with different RAG customizations. It provides a simple way to organize your codebase so that it becomes easy to test it locally while also being able to deploy it in a production ready environment. The key issues that arise while productionizing RAG system from a Jupyter Notebook are: 1. **Chunking and Embedding Job** : The chunking and embedding code usually needs to be abstracted out and deployed as a job. Sometimes the job will need to run on a schedule or be trigerred via an event to keep the data updated. 2. **Query Service** : The code that generates the answer from the query needs to be wrapped up in a api server like FastAPI and should be deployed as a service. This service should be able to handle multiple queries at the same time and also autoscale with higher traffic. 3. **LLM / Embedding Model Deployment** : Often times, if we are using open-source models, we load the model in the Jupyter notebook. This will need to be hosted as a separate service in production and model will need to be called as an API. 4. **Vector DB deployment** : Most testing happens on vector DBs in memory or on disk. However, in production, the DBs need to be deployed in a more scalable and reliable way. Cognita makes it really easy to customize and experiment everything about a RAG system and still be able to deploy it in a good way. It also ships with a UI that makes it easier to try out different RAG configurations and see the results in real time. You can use it locally or with/without using any Truefoundry components. However, using Truefoundry components makes it easier to test different models and deploy the system in a scalable way. Cognita allows you to host multiple RAG systems using one app. ### Advantages of using Cognita are: 1. A central reusable repository of parsers, loaders, embedders and retrievers. 2. 
Ability for non-technical users to play with UI - Upload documents and perform QnA using modules built by the development team. 3. Fully API driven - which allows integration with other systems. > If you use Cognita with Truefoundry AI Gateway, you can get logging, metrics and feedback mechanism for your user queries. ### Features: 1. Support for multiple document retrievers that use `Similarity Search`, `Query Decompostion`, `Document Reranking`, etc 2. Support for SOTA OpenSource embeddings and reranking from `mixedbread-ai` 3. Support for using LLMs using `Ollama` 4. Support for incremental indexing that ingests entire documents in batches (reduces compute burden), keeps track of already indexed documents and prevents re-indexing of those docs.

vector-vein

VectorVein is a no-code AI workflow software inspired by LangChain and langflow, aiming to combine the powerful capabilities of large language models and enable users to achieve intelligent and automated daily workflows through simple drag-and-drop actions. Users can create powerful workflows without the need for programming, automating all tasks with ease. The software allows users to define inputs, outputs, and processing methods to create customized workflow processes for various tasks such as translation, mind mapping, summarizing web articles, and automatic categorization of customer reviews.

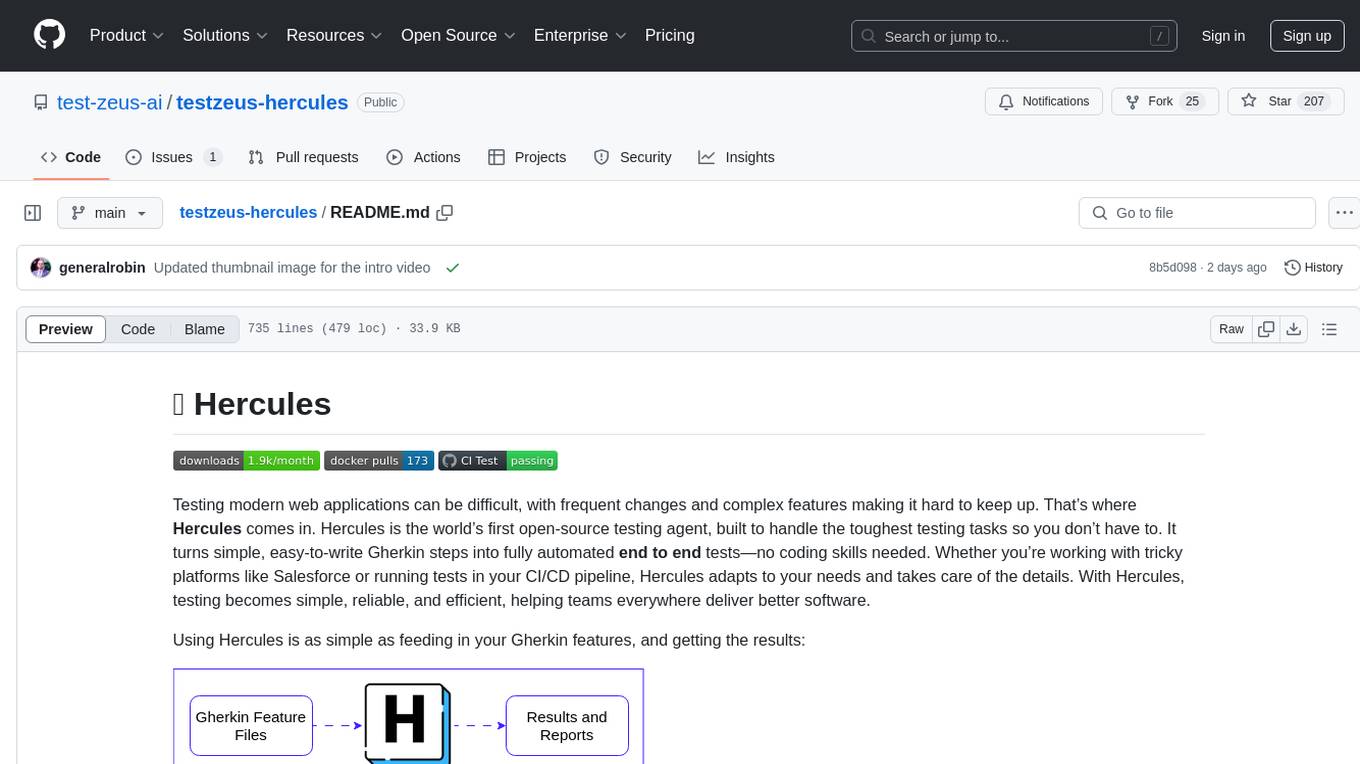

testzeus-hercules

Hercules is the world’s first open-source testing agent designed to handle the toughest testing tasks for modern web applications. It turns simple Gherkin steps into fully automated end-to-end tests, making testing simple, reliable, and efficient. Hercules adapts to various platforms like Salesforce and is suitable for CI/CD pipelines. It aims to democratize and disrupt test automation, making top-tier testing accessible to everyone. The tool is transparent, reliable, and community-driven, empowering teams to deliver better software. Hercules offers multiple ways to get started, including using PyPI package, Docker, or building and running from source code. It supports various AI models, provides detailed installation and usage instructions, and integrates with Nuclei for security testing and WCAG for accessibility testing. The tool is production-ready, open core, and open source, with plans for enhanced LLM support, advanced tooling, improved DOM distillation, community contributions, extensive documentation, and a bounty program.

knowledge-graph-of-thoughts

Knowledge Graph of Thoughts (KGoT) is an innovative AI assistant architecture that integrates LLM reasoning with dynamically constructed knowledge graphs (KGs). KGoT extracts and structures task-relevant knowledge into a dynamic KG representation, iteratively enhanced through external tools such as math solvers, web crawlers, and Python scripts. Such structured representation of task-relevant knowledge enables low-cost models to solve complex tasks effectively. The KGoT system consists of three main components: the Controller, the Graph Store, and the Integrated Tools, each playing a critical role in the task-solving process.

llm-subtrans

LLM-Subtrans is an open source subtitle translator that utilizes LLMs as a translation service. It supports translating subtitles between any language pairs supported by the language model. The application offers multiple subtitle formats support through a pluggable system, including .srt, .ssa/.ass, and .vtt files. Users can choose to use the packaged release for easy usage or install from source for more control over the setup. The tool requires an active internet connection as subtitles are sent to translation service providers' servers for translation.

honcho

Honcho is a platform for creating personalized AI agents and LLM powered applications for end users. The repository is a monorepo containing the server/API for managing database interactions and storing application state, along with a Python SDK. It utilizes FastAPI for user context management and Poetry for dependency management. The API can be run using Docker or manually by setting environment variables. The client SDK can be installed using pip or Poetry. The project is open source and welcomes contributions, following a fork and PR workflow. Honcho is licensed under the AGPL-3.0 License.

Open_Data_QnA

Open Data QnA is a Python library that allows users to interact with their PostgreSQL or BigQuery databases in a conversational manner, without needing to write SQL queries. The library leverages Large Language Models (LLMs) to bridge the gap between human language and database queries, enabling users to ask questions in natural language and receive informative responses. It offers features such as conversational querying with multiturn support, table grouping, multi schema/dataset support, SQL generation, query refinement, natural language responses, visualizations, and extensibility. The library is built on a modular design and supports various components like Database Connectors, Vector Stores, and Agents for SQL generation, validation, debugging, descriptions, embeddings, responses, and visualizations.

LARS

LARS is an application that enables users to run Large Language Models (LLMs) locally on their devices, upload their own documents, and engage in conversations where the LLM grounds its responses with the uploaded content. The application focuses on Retrieval Augmented Generation (RAG) to increase accuracy and reduce AI-generated inaccuracies. LARS provides advanced citations, supports various file formats, allows follow-up questions, provides full chat history, and offers customization options for LLM settings. Users can force enable or disable RAG, change system prompts, and tweak advanced LLM settings. The application also supports GPU-accelerated inferencing, multiple embedding models, and text extraction methods. LARS is open-source and aims to be the ultimate RAG-centric LLM application.

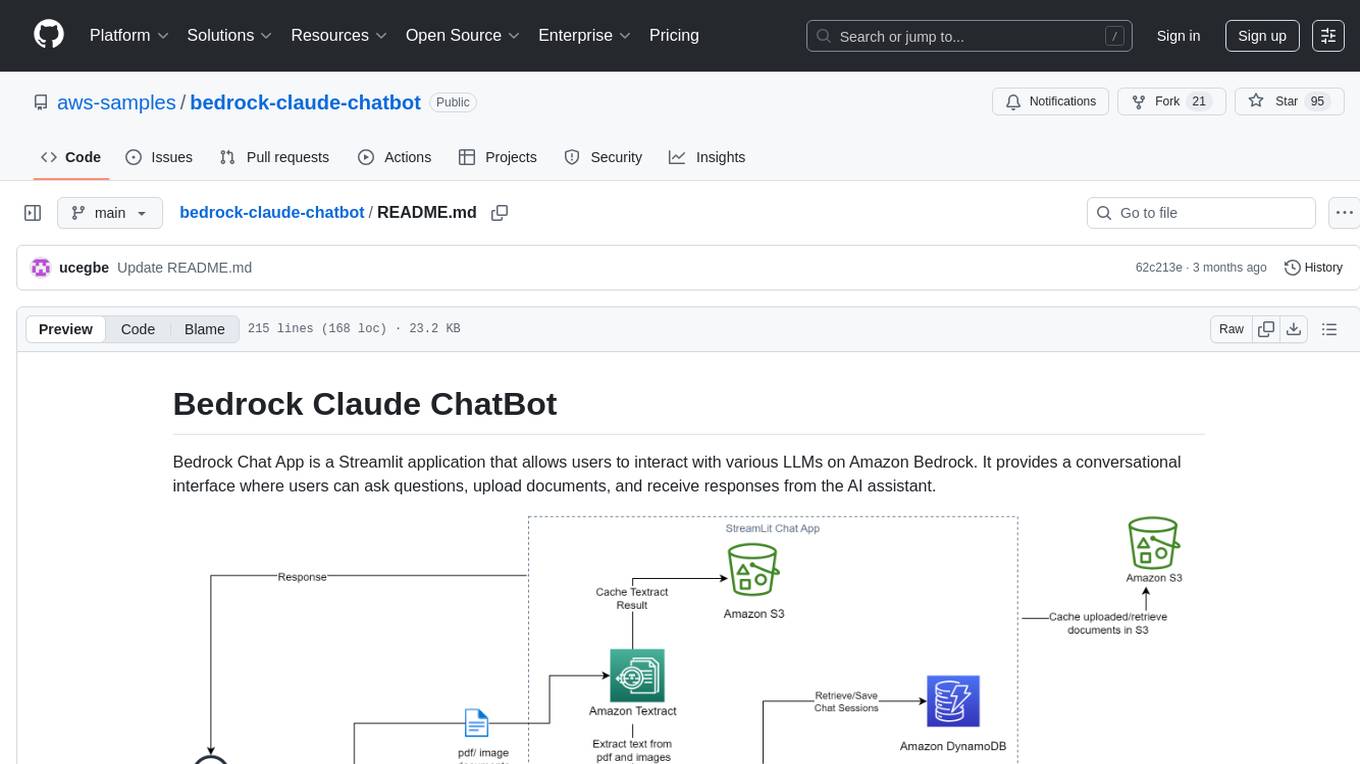

bedrock-claude-chatbot

Bedrock Claude ChatBot is a Streamlit application that provides a conversational interface for users to interact with various Large Language Models (LLMs) on Amazon Bedrock. Users can ask questions, upload documents, and receive responses from the AI assistant. The app features conversational UI, document upload, caching, chat history storage, session management, model selection, cost tracking, logging, and advanced data analytics tool integration. It can be customized using a config file and is extensible for implementing specialized tools using Docker containers and AWS Lambda. The app requires access to Amazon Bedrock Anthropic Claude Model, S3 bucket, Amazon DynamoDB, Amazon Textract, and optionally Amazon Elastic Container Registry and Amazon Athena for advanced analytics features.

vulnerability-analysis

The NVIDIA AI Blueprint for Vulnerability Analysis for Container Security showcases accelerated analysis on common vulnerabilities and exposures (CVE) at an enterprise scale, reducing mitigation time from days to seconds. It enables security analysts to determine software package vulnerabilities using large language models (LLMs) and retrieval-augmented generation (RAG). The blueprint is designed for security analysts, IT engineers, and AI practitioners in cybersecurity. It requires NVAIE developer license and API keys for vulnerability databases, search engines, and LLM model services. Hardware requirements include L40 GPU for pipeline operation and optional LLM NIM and Embedding NIM. The workflow involves LLM pipeline for CVE impact analysis, utilizing LLM planner, agent, and summarization nodes. The blueprint uses NVIDIA NIM microservices and Morpheus Cybersecurity AI SDK for vulnerability analysis.

SeaGOAT

SeaGOAT is a local search tool that leverages vector embeddings to enable you to search your codebase semantically. It is designed to work on Linux, macOS, and Windows and can process files in various formats, including text, Markdown, Python, C, C++, TypeScript, JavaScript, HTML, Go, Java, PHP, and Ruby. SeaGOAT uses a vector database called ChromaDB and a local vector embedding engine to provide fast and accurate search results. It also supports regular expression/keyword-based matches. SeaGOAT is open-source and licensed under an open-source license, and users are welcome to examine the source code, raise concerns, or create pull requests to fix problems.

OSWorld

OSWorld is a benchmarking tool designed to evaluate multimodal agents for open-ended tasks in real computer environments. It provides a platform for running experiments, setting up virtual machines, and interacting with the environment using Python scripts. Users can install the tool on their desktop or server, manage dependencies with Conda, and run benchmark tasks. The tool supports actions like executing commands, checking for specific results, and evaluating agent performance. OSWorld aims to facilitate research in AI by providing a standardized environment for testing and comparing different agent baselines.

AntSK

AntSK is an AI knowledge base/agent built with .Net8+Blazor+SemanticKernel. It features a semantic kernel for accurate natural language processing, a memory kernel for continuous learning and knowledge storage, a knowledge base for importing and querying knowledge from various document formats, a text-to-image generator integrated with StableDiffusion, GPTs generation for creating personalized GPT models, API interfaces for integrating AntSK into other applications, an open API plugin system for extending functionality, a .Net plugin system for integrating business functions, real-time information retrieval from the internet, model management for adapting and managing different models from different vendors, support for domestic models and databases for operation in a trusted environment, and planned model fine-tuning based on llamafactory.

guidellm

GuideLLM is a powerful tool for evaluating and optimizing the deployment of large language models (LLMs). By simulating real-world inference workloads, GuideLLM helps users gauge the performance, resource needs, and cost implications of deploying LLMs on various hardware configurations. This approach ensures efficient, scalable, and cost-effective LLM inference serving while maintaining high service quality. Key features include performance evaluation, resource optimization, cost estimation, and scalability testing.

ai_automation_suggester

An integration for Home Assistant that leverages AI models to understand your unique home environment and propose intelligent automations. By analyzing your entities, devices, areas, and existing automations, the AI Automation Suggester helps you discover new, context-aware use cases you might not have considered, ultimately streamlining your home management and improving efficiency, comfort, and convenience. The tool acts as a personal automation consultant, providing actionable YAML-based automations that can save energy, improve security, enhance comfort, and reduce manual intervention. It turns the complexity of a large Home Assistant environment into actionable insights and tangible benefits.

For similar tasks

Botright

Botright is a tool designed for browser automation that focuses on stealth and captcha solving. It uses a real Chromium-based browser for enhanced stealth and offers features like browser fingerprinting and AI-powered captcha solving. The tool is suitable for developers looking to automate browser tasks while maintaining anonymity and bypassing captchas. Botright is available in async mode and can be easily integrated with existing Playwright code. It provides solutions for various captchas such as hCaptcha, reCaptcha, and GeeTest, with high success rates. Additionally, Botright offers browser stealth techniques and supports different browser functionalities for seamless automation.

CoolCline

CoolCline is a proactive programming assistant that combines the best features of Cline, Roo Code, and Bao Cline. It seamlessly collaborates with your command line interface and editor, providing the most powerful AI development experience. It optimizes queries, allows quick switching of LLM Providers, and offers auto-approve options for actions. Users can configure LLM Providers, select different chat modes, perform file and editor operations, integrate with the command line, automate browser tasks, and extend capabilities through the Model Context Protocol (MCP). Context mentions help provide explicit context, and installation is easy through the editor's extension panel or by dragging and dropping the `.vsix` file. Local setup and development instructions are available for contributors.

cursor-tools

cursor-tools is a CLI tool designed to enhance AI agents with advanced skills, such as web search, repository context, documentation generation, GitHub integration, Xcode tools, and browser automation. It provides features like Perplexity for web search, Gemini 2.0 for codebase context, and Stagehand for browser operations. The tool requires API keys for Perplexity AI and Google Gemini, and supports global installation for system-wide access. It offers various commands for different tasks and integrates with Cursor Composer for AI agent usage.

LLM-Navigation

LLM-Navigation is a repository dedicated to documenting learning records related to large models, including basic knowledge, prompt engineering, building effective agents, model expansion capabilities, security measures against prompt injection, and applications in various fields such as AI agent control, browser automation, financial analysis, 3D modeling, and tool navigation using MCP servers. The repository aims to organize and collect information for personal learning and self-improvement through AI exploration.

browser4

Browser4 is a lightning-fast, coroutine-safe browser designed for AI integration with large language models. It offers ultra-fast automation, deep web understanding, and powerful data extraction APIs. Users can automate the browser, extract data at scale, and perform tasks like summarizing products, extracting product details, and finding specific links. The tool is developer-friendly, supports AI-powered automation, and provides advanced features like X-SQL for precise data extraction. It also offers RPA capabilities, browser control, and complex data extraction with X-SQL. Browser4 is suitable for web scraping, data extraction, automation, and AI integration tasks.

sandbox

AIO Sandbox is an all-in-one agent sandbox environment that combines Browser, Shell, File, MCP operations, and VSCode Server in a single Docker container. It provides a unified, secure execution environment for AI agents and developers, with features like unified file system, multiple interfaces, secure execution, zero configuration, and agent-ready MCP-compatible APIs. The tool allows users to run shell commands, perform file operations, automate browser tasks, and integrate with various development tools and services.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

jupyter-ai

Jupyter AI connects generative AI with Jupyter notebooks. It provides a user-friendly and powerful way to explore generative AI models in notebooks and improve your productivity in JupyterLab and the Jupyter Notebook. Specifically, Jupyter AI offers:

* An `%%ai` magic that turns the Jupyter notebook into a reproducible generative AI playground. This works anywhere the IPython kernel runs (JupyterLab, Jupyter Notebook, Google Colab, Kaggle, VSCode, etc.).
* A native chat UI in JupyterLab that enables you to work with generative AI as a conversational assistant.
* Support for a wide range of generative model providers, including AI21, Anthropic, AWS, Cohere, Gemini, Hugging Face, NVIDIA, and OpenAI.
* Local model support through GPT4All, enabling use of generative AI models on consumer grade machines with ease and privacy.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use case. Here are some use cases for BricksLLM:

* Set LLM usage limits for users on different pricing tiers
* Track LLM usage on a per user and per organization basis
* Block or redact requests containing PII
* Improve LLM reliability with failovers, retries and caching
* Distribute API keys with rate limits and cost limits for internal development/production use cases
* Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.