ipex-llm

Accelerate local LLM inference and finetuning (LLaMA, Mistral, ChatGLM, Qwen, DeepSeek, Mixtral, Gemma, Phi, MiniCPM, Qwen-VL, MiniCPM-V, etc.) on Intel XPU (e.g., local PC with iGPU and NPU, discrete GPU such as Arc, Flex and Max); seamlessly integrate with llama.cpp, Ollama, HuggingFace, LangChain, LlamaIndex, vLLM, DeepSpeed, Axolotl, etc.

Stars: 7638

The `ipex-llm` repository is an LLM acceleration library designed for Intel GPU, NPU, and CPU. It provides seamless integration with various models and tools like llama.cpp, Ollama, HuggingFace transformers, LangChain, LlamaIndex, vLLM, Text-Generation-WebUI, DeepSpeed-AutoTP, FastChat, Axolotl, and more. The library offers optimizations for over 70 models, XPU acceleration, and support for low-bit (FP8/FP6/FP4/INT4) operations. Users can run different models on Intel GPUs, NPU, and CPUs with support for various features like finetuning, inference, serving, and benchmarking.

README:


IPEX-LLM is an LLM acceleration library for Intel GPU (e.g., local PC with iGPU, discrete GPU such as Arc, Flex and Max), NPU and CPU [1].

[!NOTE]

- `IPEX-LLM` provides seamless integration with llama.cpp, Ollama, HuggingFace transformers, LangChain, LlamaIndex, vLLM, Text-Generation-WebUI, DeepSpeed-AutoTP, FastChat, Axolotl, HuggingFace PEFT, HuggingFace TRL, AutoGen, ModelScope, etc.

- 70+ models have been optimized/verified on `ipex-llm` (e.g., Llama, Phi, Mistral, Mixtral, DeepSeek, Qwen, ChatGLM, MiniCPM, Qwen-VL, MiniCPM-V and more), with state-of-the-art LLM optimizations, XPU acceleration and low-bit (FP8/FP6/FP4/INT4) support; see the complete list here.

- [2025/03] We added support for Gemma3 model in the latest llama.cpp Portable Zip.

- [2025/03] We can now run DeepSeek-R1-671B-Q4_K_M with 1 or 2 Arc A770 on Xeon using the latest llama.cpp Portable Zip.

- [2025/02] We added support of llama.cpp Portable Zip for Intel GPU (both Windows and Linux) and NPU (Windows only).

- [2025/02] We added support of Ollama Portable Zip to directly run Ollama on Intel GPU for both Windows and Linux (without the need of manual installations).

- [2025/02] We added support for running vLLM 0.6.6 on Intel Arc GPUs.

- [2025/01] We added the guide for running `ipex-llm` on Intel Arc B580 GPU.

- [2025/01] We added support for running Ollama 0.5.4 on Intel GPU.

- [2024/12] We added both Python and C++ support for Intel Core Ultra NPU (including 100H, 200V, 200K and 200H series).

More updates

- [2024/11] We added support for running vLLM 0.6.2 on Intel Arc GPUs.

- [2024/07] We added support for running Microsoft's GraphRAG using local LLM on Intel GPU; see the quickstart guide here.

- [2024/07] We added extensive support for Large Multimodal Models, including StableDiffusion, Phi-3-Vision, Qwen-VL, and more.

- [2024/07] We added FP6 support on Intel GPU.

- [2024/06] We added experimental NPU support for Intel Core Ultra processors; see the examples here.

- [2024/06] We added extensive support of pipeline parallel inference, which makes it easy to run large-sized LLM using 2 or more Intel GPUs (such as Arc).

- [2024/06] We added support for running RAGFlow with `ipex-llm` on Intel GPU.

- [2024/05] `ipex-llm` now supports Axolotl for LLM finetuning on Intel GPU; see the quickstart here.

- [2024/05] You can now easily run `ipex-llm` inference, serving and finetuning using the Docker images.

- [2024/05] You can now install `ipex-llm` on Windows using just "one command".

- [2024/04] You can now run Open WebUI on Intel GPU using `ipex-llm`; see the quickstart here.

- [2024/04] You can now run Llama 3 on Intel GPU using `llama.cpp` and `ollama` with `ipex-llm`; see the quickstart here.

- [2024/04] `ipex-llm` now supports Llama 3 on both Intel GPU and CPU.

- [2024/04] `ipex-llm` now provides a C++ interface, which can be used as an accelerated backend for running llama.cpp and ollama on Intel GPU.

- [2024/03] `bigdl-llm` has now become `ipex-llm` (see the migration guide here); you may find the original `BigDL` project here.

- [2024/02] `ipex-llm` now supports directly loading models from ModelScope (魔搭).

- [2024/02] `ipex-llm` added initial INT2 support (based on llama.cpp IQ2 mechanism), which makes it possible to run large-sized LLM (e.g., Mixtral-8x7B) on Intel GPU with 16GB VRAM.

- [2024/02] Users can now use `ipex-llm` through Text-Generation-WebUI GUI.

- [2024/02] `ipex-llm` now supports Self-Speculative Decoding, which in practice brings ~30% speedup for FP16 and BF16 inference latency on Intel GPU and CPU respectively.

- [2024/02] `ipex-llm` now supports a comprehensive list of LLM finetuning on Intel GPU (including LoRA, QLoRA, DPO, QA-LoRA and ReLoRA).

- [2024/01] Using `ipex-llm` QLoRA, we managed to finetune LLaMA2-7B in 21 minutes and LLaMA2-70B in 3.14 hours on 8 Intel Max 1550 GPUs for Stanford-Alpaca (see the blog here).

- [2023/12] `ipex-llm` now supports ReLoRA (see "ReLoRA: High-Rank Training Through Low-Rank Updates").

- [2023/12] `ipex-llm` now supports Mixtral-8x7B on both Intel GPU and CPU.

- [2023/12] `ipex-llm` now supports QA-LoRA (see "QA-LoRA: Quantization-Aware Low-Rank Adaptation of Large Language Models").

- [2023/12] `ipex-llm` now supports FP8 and FP4 inference on Intel GPU.

- [2023/11] Initial support for directly loading GGUF, AWQ and GPTQ models into `ipex-llm` is available.

- [2023/11] `ipex-llm` now supports vLLM continuous batching on both Intel GPU and CPU.

- [2023/10] `ipex-llm` now supports QLoRA finetuning on both Intel GPU and CPU.

- [2023/10] `ipex-llm` now supports FastChat serving on both Intel CPU and GPU.

- [2023/09] `ipex-llm` now supports Intel GPU (including iGPU, Arc, Flex and MAX).

- [2023/09] `ipex-llm` tutorial is released.
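The INT2 update above mentions running Mixtral-8x7B on an Intel GPU with 16GB VRAM. A rough back-of-the-envelope check of weight memory at different bit widths might look like the sketch below. The parameter count (about 46.7 billion in total) and the nominal bits per weight are assumptions made for illustration, not figures from this README; real formats add some overhead for scales, and the KV cache and activations need memory too.

```python
def weight_memory_gib(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory needed to hold the weights alone, in GiB."""
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / (1024 ** 3)


# Assumed total parameter count for Mixtral-8x7B (illustrative, not from the README).
MIXTRAL_8X7B_PARAMS = 46.7e9

# Nominal bit widths; actual quantized formats store slightly more than this.
for label, bits in [("FP16", 16), ("INT4", 4), ("INT2", 2)]:
    size = weight_memory_gib(MIXTRAL_8X7B_PARAMS, bits)
    print(f"{label}: ~{size:.1f} GiB of weights")
```

Under these assumptions, only the 2-bit variant leaves headroom on a 16GB card, which is consistent with the claim in the update.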

See demos of running local LLMs on Intel Core Ultra iGPU, Intel Core Ultra NPU, single-card Arc GPU, or multi-card Arc GPUs using ipex-llm below.

| Intel Core Ultra iGPU | Intel Core Ultra NPU | Intel Arc dGPU | 2-Card Intel Arc dGPUs |
|---|---|---|---|
| Ollama (Mistral-7B, Q4_K) | HuggingFace (Llama3.2-3B, SYM_INT4) | TextGeneration-WebUI (Llama3-8B, FP8) | llama.cpp (DeepSeek-R1-Distill-Qwen-32B, Q4_K) |

See the Token Generation Speed on Intel Core Ultra and Intel Arc GPU below [1] (and refer to [2][3][4] for more details).


You may follow the Benchmarking Guide to run ipex-llm performance benchmark yourself.

Please see the Perplexity result below (tested on Wikitext dataset using the script here).

| Perplexity | sym_int4 | q4_k | fp6 | fp8_e5m2 | fp8_e4m3 | fp16 |

|---|---|---|---|---|---|---|

| Llama-2-7B-chat-hf | 6.364 | 6.218 | 6.092 | 6.180 | 6.098 | 6.096 |

| Mistral-7B-Instruct-v0.2 | 5.365 | 5.320 | 5.270 | 5.273 | 5.246 | 5.244 |

| Baichuan2-7B-chat | 6.734 | 6.727 | 6.527 | 6.539 | 6.488 | 6.508 |

| Qwen1.5-7B-chat | 8.865 | 8.816 | 8.557 | 8.846 | 8.530 | 8.607 |

| Llama-3.1-8B-Instruct | 6.705 | 6.566 | 6.338 | 6.383 | 6.325 | 6.267 |

| gemma-2-9b-it | 7.541 | 7.412 | 7.269 | 7.380 | 7.268 | 7.270 |

| Baichuan2-13B-Chat | 6.313 | 6.160 | 6.070 | 6.145 | 6.086 | 6.031 |

| Llama-2-13b-chat-hf | 5.449 | 5.422 | 5.341 | 5.384 | 5.332 | 5.329 |

| Qwen1.5-14B-Chat | 7.529 | 7.520 | 7.367 | 7.504 | 7.297 | 7.334 |
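To read the table as "how much worse than FP16 is each format", the relative perplexity change can be computed per row. The sketch below copies three rows from the table above; the helper name `relative_increase` is hypothetical and only used for this illustration.

```python
# Perplexity values copied from three rows of the table above.
PERPLEXITY = {
    "Llama-2-7B-chat-hf": {
        "sym_int4": 6.364, "q4_k": 6.218, "fp6": 6.092,
        "fp8_e5m2": 6.180, "fp8_e4m3": 6.098, "fp16": 6.096,
    },
    "Mistral-7B-Instruct-v0.2": {
        "sym_int4": 5.365, "q4_k": 5.320, "fp6": 5.270,
        "fp8_e5m2": 5.273, "fp8_e4m3": 5.246, "fp16": 5.244,
    },
    "Llama-3.1-8B-Instruct": {
        "sym_int4": 6.705, "q4_k": 6.566, "fp6": 6.338,
        "fp8_e5m2": 6.383, "fp8_e4m3": 6.325, "fp16": 6.267,
    },
}


def relative_increase(row: dict, fmt: str, baseline: str = "fp16") -> float:
    """Perplexity change of `fmt` relative to `baseline`, in percent."""
    return (row[fmt] - row[baseline]) / row[baseline] * 100.0


for model, row in PERPLEXITY.items():
    changes = ", ".join(
        f"{fmt}: {relative_increase(row, fmt):+.2f}%"
        for fmt in row
        if fmt != "fp16"
    )
    print(f"{model} -> {changes}")
```

In these rows the 4-bit formats show the largest increase over FP16, while FP6 and fp8_e4m3 stay close to the baseline; a slightly negative value (as for FP6 on Llama-2-7B-chat-hf) is a small difference in the measured numbers, not evidence that the lower-precision format is better.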

- Ollama: running Ollama on Intel GPU without the need of manual installations

- llama.cpp: running llama.cpp on Intel GPU without the need of manual installations

- Arc B580: running `ipex-llm` on Intel Arc B580 GPU for Ollama, llama.cpp, PyTorch, HuggingFace, etc.

- NPU: running `ipex-llm` on Intel NPU in both Python/C++ or llama.cpp API.

- PyTorch/HuggingFace: running PyTorch, HuggingFace, LangChain, LlamaIndex, etc. (using Python interface of `ipex-llm`) on Intel GPU for Windows and Linux

- vLLM: running `ipex-llm` in vLLM on both Intel GPU and CPU

- FastChat: running `ipex-llm` in FastChat serving on both Intel GPU and CPU

- Serving on multiple Intel GPUs: running `ipex-llm` serving on multiple Intel GPUs by leveraging DeepSpeed AutoTP and FastAPI

- Text-Generation-WebUI: running `ipex-llm` in `oobabooga` WebUI

- Axolotl: running `ipex-llm` in Axolotl for LLM finetuning

- Benchmarking: running (latency and throughput) benchmarks for `ipex-llm` on Intel CPU and GPU

- GPU Inference in C++: running `llama.cpp`, `ollama`, etc., with `ipex-llm` on Intel GPU

- GPU Inference in Python: running HuggingFace `transformers`, `LangChain`, `LlamaIndex`, `ModelScope`, etc. with `ipex-llm` on Intel GPU

- vLLM on GPU: running `vLLM` serving with `ipex-llm` on Intel GPU

- vLLM on CPU: running `vLLM` serving with `ipex-llm` on Intel CPU

- FastChat on GPU: running `FastChat` serving with `ipex-llm` on Intel GPU

- VSCode on GPU: running and developing `ipex-llm` applications in Python using VSCode on Intel GPU

- GraphRAG: running Microsoft's `GraphRAG` using local LLM with `ipex-llm`

- RAGFlow: running `RAGFlow` (an open-source RAG engine) with `ipex-llm`

- LangChain-Chatchat: running `LangChain-Chatchat` (Knowledge Base QA using RAG pipeline) with `ipex-llm`

- Coding copilot: running `Continue` (coding copilot in VSCode) with `ipex-llm`

- Open WebUI: running `Open WebUI` with `ipex-llm`

- PrivateGPT: running `PrivateGPT` to interact with documents with `ipex-llm`

- Dify platform: running `ipex-llm` in `Dify` (production-ready LLM app development platform)

- Windows GPU: installing `ipex-llm` on Windows with Intel GPU

- Linux GPU: installing `ipex-llm` on Linux with Intel GPU

- For more details, please refer to the full installation guide

- INT4 inference: INT4 LLM inference on Intel GPU and CPU

- FP8/FP6/FP4 inference: FP8, FP6 and FP4 LLM inference on Intel GPU

- INT8 inference: INT8 LLM inference on Intel GPU and CPU

- INT2 inference: INT2 LLM inference (based on llama.cpp IQ2 mechanism) on Intel GPU

- FP16 LLM inference on Intel GPU, with possible self-speculative decoding optimization

- BF16 LLM inference on Intel CPU, with possible self-speculative decoding optimization

- Low-bit models: saving and loading `ipex-llm` low-bit models (INT4/FP4/FP6/INT8/FP8/FP16/etc.)

- GGUF: directly loading GGUF models into `ipex-llm`

- AWQ: directly loading AWQ models into `ipex-llm`

- GPTQ: directly loading GPTQ models into `ipex-llm`


- Tutorials
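The low-bit examples listed above rely on storing weights in a few bits each. As a generic illustration of the idea behind a symmetric 4-bit format (the kind of scheme a name like `sym_int4` suggests), the sketch below quantizes one block of weights with a single shared scale. This is an assumption-laden toy in plain Python, not the kernels or block layout that `ipex-llm` actually uses; the function names are hypothetical.

```python
from typing import List, Tuple


def quantize_sym_int4(block: List[float]) -> Tuple[List[int], float]:
    """Quantize one block of weights to signed 4-bit integers with a shared scale."""
    max_abs = max(abs(w) for w in block)
    if max_abs == 0.0:
        # All-zero block: any scale works, so pick 1.0.
        return [0] * len(block), 1.0
    # Symmetric scheme: zero maps to zero and the largest magnitude maps to +/-7.
    scale = max_abs / 7.0
    # Clamp to the signed 4-bit range [-8, 7] after rounding to the nearest level.
    quantized = [max(-8, min(7, round(w / scale))) for w in block]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate weights from 4-bit integers and the block scale."""
    return [q * scale for q in quantized]


weights = [0.7, -0.3, 0.1, 0.0]
q, scale = quantize_sym_int4(weights)
restored = dequantize(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(q, scale, max_error)
```

Each restored weight differs from the original by at most half a quantization step (`scale / 2`), which is the basic accuracy and size trade-off that the perplexity comparison earlier in this README measures at model level.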

Over 70 models have been optimized/verified on ipex-llm, including LLaMA/LLaMA2, Mistral, Mixtral, Gemma, LLaVA, Whisper, ChatGLM2/ChatGLM3, Baichuan/Baichuan2, Qwen/Qwen-1.5, InternLM and more; see the list below.

| Model | CPU Example | GPU Example | NPU Example |

|---|---|---|---|

| LLaMA | link1, link2 | link | |

| LLaMA 2 | link1, link2 | link | Python link, C++ link |

| LLaMA 3 | link | link | Python link, C++ link |

| LLaMA 3.1 | link | link | |

| LLaMA 3.2 | link | Python link, C++ link | |

| LLaMA 3.2-Vision | link | ||

| ChatGLM | link | ||

| ChatGLM2 | link | link | |

| ChatGLM3 | link | link | |

| GLM-4 | link | link | |

| GLM-4V | link | link | |

| GLM-Edge | link | Python link | |

| GLM-Edge-V | link | ||

| Mistral | link | link | |

| Mixtral | link | link | |

| Falcon | link | link | |

| MPT | link | link | |

| Dolly-v1 | link | link | |

| Dolly-v2 | link | link | |

| Replit Code | link | link | |

| RedPajama | link1, link2 | ||

| Phoenix | link1, link2 | ||

| StarCoder | link1, link2 | link | |

| Baichuan | link | link | |

| Baichuan2 | link | link | Python link |

| InternLM | link | link | |

| InternVL2 | link | ||

| Qwen | link | link | |

| Qwen1.5 | link | link | |

| Qwen2 | link | link | Python link, C++ link |

| Qwen2.5 | link | Python link, C++ link | |

| Qwen-VL | link | link | |

| Qwen2-VL | link | ||

| Qwen2-Audio | link | ||

| Aquila | link | link | |

| Aquila2 | link | link | |

| MOSS | link | ||

| Whisper | link | link | |

| Phi-1_5 | link | link | |

| Flan-t5 | link | link | |

| LLaVA | link | link | |

| CodeLlama | link | link | |

| Skywork | link | ||

| InternLM-XComposer | link | ||

| WizardCoder-Python | link | ||

| CodeShell | link | ||

| Fuyu | link | ||

| Distil-Whisper | link | link | |

| Yi | link | link | |

| BlueLM | link | link | |

| Mamba | link | link | |

| SOLAR | link | link | |

| Phixtral | link | link | |

| InternLM2 | link | link | |

| RWKV4 | link | ||

| RWKV5 | link | ||

| Bark | link | link | |

| SpeechT5 | link | ||

| DeepSeek-MoE | link | ||

| Ziya-Coding-34B-v1.0 | link | ||

| Phi-2 | link | link | |

| Phi-3 | link | link | |

| Phi-3-vision | link | link | |

| Yuan2 | link | link | |

| Gemma | link | link | |

| Gemma2 | link | ||

| DeciLM-7B | link | link | |

| Deepseek | link | link | |

| StableLM | link | link | |

| CodeGemma | link | link | |

| Command-R/cohere | link | link | |

| CodeGeeX2 | link | link | |

| MiniCPM | link | link | Python link, C++ link |

| MiniCPM3 | link | ||

| MiniCPM-V | link | ||

| MiniCPM-V-2 | link | link | |

| MiniCPM-Llama3-V-2_5 | link | Python link | |

| MiniCPM-V-2_6 | link | link | Python link |

| MiniCPM-o-2_6 | link | ||

| Janus-Pro | link | ||

| Moonlight | link | ||

| StableDiffusion | link | ||

| Bce-Embedding-Base-V1 | Python link | ||

| Speech_Paraformer-Large | Python link |

- Please report a bug or raise a feature request by opening a GitHub Issue

- Please report a vulnerability by opening a draft GitHub Security Advisory

[1] Performance varies by use, configuration and other factors. `ipex-llm` may not optimize to the same degree for non-Intel products. Learn more at www.Intel.com/PerformanceIndex.


Similar Open Source Tools

ipex-llm

IPEX-LLM is a PyTorch library for running Large Language Models (LLMs) on Intel CPUs and GPUs with very low latency. It provides seamless integration with various LLM frameworks and tools, including llama.cpp, ollama, Text-Generation-WebUI, HuggingFace transformers, and more. IPEX-LLM has been optimized and verified on over 50 LLM models, including LLaMA, Mistral, Mixtral, Gemma, LLaVA, Whisper, ChatGLM, Baichuan, Qwen, and RWKV. It supports a range of low-bit inference formats, including INT4, FP8, FP4, INT8, INT2, FP16, and BF16, as well as finetuning capabilities for LoRA, QLoRA, DPO, QA-LoRA, and ReLoRA. IPEX-LLM is actively maintained and updated with new features and optimizations, making it a valuable tool for researchers, developers, and anyone interested in exploring and utilizing LLMs.

Open-dLLM

Open-dLLM is the most open release of a diffusion-based large language model, providing pretraining, evaluation, inference, and checkpoints. It introduces Open-dCoder, the code-generation variant of Open-dLLM. The repo offers a complete stack for diffusion LLMs, enabling users to go from raw data to training, checkpoints, evaluation, and inference in one place. It includes pretraining pipeline with open datasets, inference scripts for easy sampling and generation, evaluation suite with various metrics, weights and checkpoints on Hugging Face, and transparent configs for full reproducibility.

NornicDB

NornicDB is a high-performance graph database designed for AI agents and knowledge systems. It is Neo4j-compatible, GPU-accelerated, and features memory that evolves. The database automatically discovers and manages relationships in the data, allowing meaning to emerge from the knowledge graph. NornicDB is suitable for AI agent memory, knowledge graphs, RAG systems, session context, and research tools. It offers features like intelligent memory, auto-relationships, performance benchmarks, vector search, Heimdall AI assistant, APOC functions, and various Docker images for different platforms. The tool is built with Neo4j Bolt protocol, Cypher query engine, memory decay system, GPU acceleration, vector search, auto-relationship engine, and more.

Native-LLM-for-Android

This repository provides a demonstration of running a native Large Language Model (LLM) on Android devices. It supports various models such as Qwen2.5-Instruct, MiniCPM-DPO/SFT, Yuan2.0, Gemma2-it, StableLM2-Chat/Zephyr, and Phi3.5-mini-instruct. The demo models are optimized for extreme execution speed after being converted from HuggingFace or ModelScope. Users can download the demo models from the provided drive link, place them in the assets folder, and follow specific instructions for decompression and model export. The repository also includes information on quantization methods and performance benchmarks for different models on various devices.

new-api

New API is a next-generation large model gateway and AI asset management system. Its features include a new UI, multi-language support, online recharge, key-based usage quota queries, compatibility with the original One API database, model charging by usage count, channel weighted randomization, a data dashboard, token grouping and model restrictions, various authorization login methods, user-specific model rate limiting, request format conversion, and cache billing support. It also supports Rerank models, the OpenAI Realtime API, the Claude Messages format, reasoning effort setting, content reasoning, gpts, Midjourney-Proxy, Suno API, custom channels, Dify, and more.

ai-dev-kit

The AI Dev Kit is a comprehensive toolkit designed to enhance AI-driven development on Databricks. It provides trusted sources for AI coding assistants like Claude Code and Cursor to build faster and smarter on Databricks. The kit includes features such as Spark Declarative Pipelines, Databricks Jobs, AI/BI Dashboards, Unity Catalog, Genie Spaces, Knowledge Assistants, MLflow Experiments, Model Serving, Databricks Apps, and more. Users can choose from different adventures like installing the kit, using the visual builder app, teaching AI assistants Databricks patterns, executing Databricks actions, or building custom integrations with the core library. The kit also includes components like databricks-tools-core, databricks-mcp-server, databricks-skills, databricks-builder-app, and ai-dev-project.

langfuse

Langfuse is a powerful tool that helps you develop, monitor, and test your LLM applications. With Langfuse, you can:

* **Develop:** Instrument your app and start ingesting traces to Langfuse, inspect and debug complex logs, and manage, version, and deploy prompts from within Langfuse.

* **Monitor:** Track metrics (cost, latency, quality) and gain insights from dashboards & data exports, collect and calculate scores for your LLM completions, run model-based evaluations, collect user feedback, and manually score observations in Langfuse.

* **Test:** Track and test app behaviour before deploying a new version, test expected input and output pairs and benchmark performance before deploying, and track versions and releases in your application.

Langfuse is easy to get started with and offers a generous free tier. You can sign up for Langfuse Cloud or deploy Langfuse locally or on your own infrastructure. Langfuse also offers a variety of integrations to make it easy to connect to your LLM applications.

swift

SWIFT (Scalable lightWeight Infrastructure for Fine-Tuning) supports training, inference, evaluation and deployment of nearly **200 LLMs and MLLMs** (multimodal large models). Developers can directly apply our framework to their own research and production environments to realize the complete workflow from model training and evaluation to application. In addition to supporting the lightweight training solutions provided by [PEFT](https://github.com/huggingface/peft), we also provide a complete **Adapters library** to support the latest training techniques such as NEFTune, LoRA+, LLaMA-PRO, etc. This adapter library can be used directly in your own custom workflow without our training scripts. To facilitate use by users unfamiliar with deep learning, we provide a Gradio web-ui for controlling training and inference, as well as accompanying deep learning courses and best practices for beginners. Additionally, we are expanding capabilities for other modalities. Currently, we support full-parameter training and LoRA training for AnimateDiff.

PocketFlow

Pocket Flow is a 100-line minimalist LLM framework designed for (Multi-)Agents, Workflow, RAG, etc. It provides a core abstraction for LLM projects by focusing on computation and communication through a graph structure and shared store. The framework aims to support the development of LLM Agents, such as Cursor AI, by offering a minimal and low-level approach that is well-suited for understanding and usage. Users can install Pocket Flow via pip or by copying the source code, and detailed documentation is available on the project website.

ReGraph

ReGraph is a decentralized AI compute marketplace that connects hardware providers with developers who need inference and training resources. It democratizes access to AI computing power by creating a global network of distributed compute nodes. It is cost-effective, decentralized, easy to integrate, supports multiple models, and offers pay-as-you-go pricing.

DownEdit

DownEdit is a powerful program that allows you to download videos from various social media platforms such as TikTok, Douyin, Kuaishou, and more. With DownEdit, you can easily download videos from user profiles and edit them in bulk. You have the option to flip the videos horizontally or vertically throughout the entire directory with just a single click. Stay tuned for more exciting features coming soon!

END-TO-END-GENERATIVE-AI-PROJECTS

The 'END TO END GENERATIVE AI PROJECTS' repository is a collection of awesome industry projects utilizing Large Language Models (LLM) for various tasks such as chat applications with PDFs, image to speech generation, video transcribing and summarizing, resume tracking, text to SQL conversion, invoice extraction, medical chatbot, financial stock analysis, and more. The projects showcase the deployment of LLM models like Google Gemini Pro, HuggingFace Models, OpenAI GPT, and technologies such as Langchain, Streamlit, LLaMA2, LLaMAindex, and more. The repository aims to provide end-to-end solutions for different AI applications.

awesome-LangGraph

Awesome LangGraph is a curated list of projects, resources, and tools for building stateful, multi-actor applications with LangGraph. It provides valuable resources for developers at all stages of development, from beginners to those building production-ready systems. The repository covers core ecosystem components, LangChain ecosystem, LangGraph platform, official resources, starter templates, pre-built agents, example applications, development tools, community projects, AI assistants, content & media, knowledge & retrieval, finance & business, sustainability, learning resources, companies using LangGraph, contributing guidelines, and acknowledgments.

runanywhere-sdks

RunAnywhere is an on-device AI tool for mobile apps that allows users to run LLMs, speech-to-text, text-to-speech, and voice assistant features locally, ensuring privacy, offline functionality, and fast performance. The tool provides a range of AI capabilities without relying on cloud services, reducing latency and ensuring that no data leaves the device. RunAnywhere offers SDKs for Swift (iOS/macOS), Kotlin (Android), React Native, and Flutter, making it easy for developers to integrate AI features into their mobile applications. The tool supports various models for LLM, speech-to-text, and text-to-speech, with detailed documentation and installation instructions available for each platform.

For similar tasks

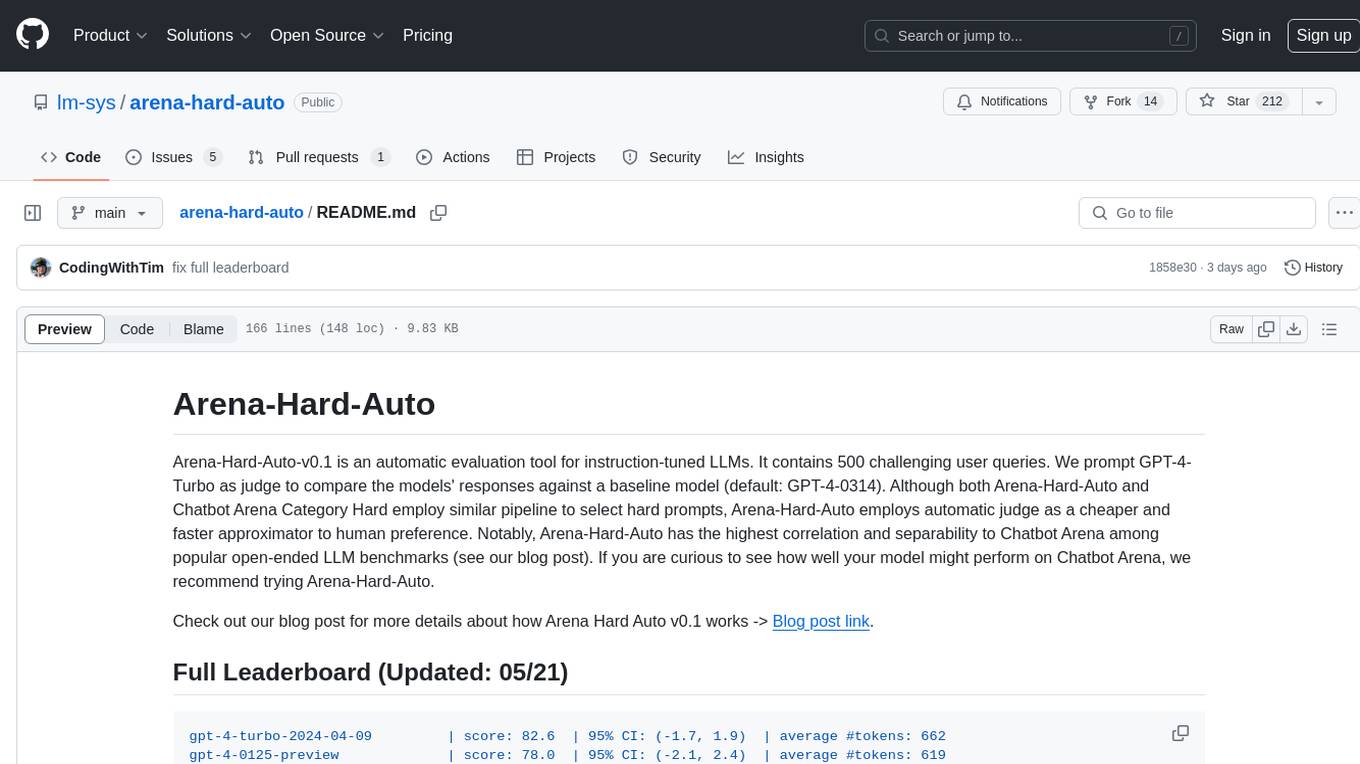

arena-hard-auto

Arena-Hard-Auto-v0.1 is an automatic evaluation tool for instruction-tuned LLMs. It contains 500 challenging user queries. The tool prompts GPT-4-Turbo as a judge to compare models' responses against a baseline model (default: GPT-4-0314). Arena-Hard-Auto employs an automatic judge as a cheaper and faster approximator to human preference. It has the highest correlation and separability to Chatbot Arena among popular open-ended LLM benchmarks. Users can evaluate their models' performance on Chatbot Arena by using Arena-Hard-Auto.

max

The Modular Accelerated Xecution (MAX) platform is an integrated suite of AI libraries, tools, and technologies that unifies commonly fragmented AI deployment workflows. MAX accelerates time to market for the latest innovations by giving AI developers a single toolchain that unlocks full programmability, unparalleled performance, and seamless hardware portability.

ai-hub

AI Hub Project aims to continuously test and evaluate mainstream large language models, while accumulating and managing various effective model invocation prompts. It has integrated all mainstream large language models in China, including OpenAI GPT-4 Turbo, Baidu ERNIE-Bot-4, Tencent ChatPro, MiniMax abab5.5-chat, and more. The project plans to continuously track, integrate, and evaluate new models. Users can access the models through REST services or Java code integration. The project also provides a testing suite for translation, coding, and benchmark testing.

long-context-attention

Long-Context-Attention (YunChang) is a unified sequence parallel approach that combines the strengths of DeepSpeed-Ulysses-Attention and Ring-Attention to provide a versatile and high-performance solution for long context LLM model training and inference. It addresses the limitations of both methods by offering no limitation on the number of heads, compatibility with advanced parallel strategies, and enhanced performance benchmarks. The tool is verified in Megatron-LM and offers best practices for 4D parallelism, making it suitable for various attention mechanisms and parallel computing advancements.

marlin

Marlin is a highly optimized FP16xINT4 matmul kernel designed for large language model (LLM) inference, offering close to ideal speedups up to batchsizes of 16-32 tokens. It is suitable for larger-scale serving, speculative decoding, and advanced multi-inference schemes like CoT-Majority. Marlin achieves optimal performance by utilizing various techniques and optimizations to fully leverage GPU resources, ensuring efficient computation and memory management.

MMC

This repository, MMC, focuses on advancing multimodal chart understanding through large-scale instruction tuning. It introduces a dataset supporting various tasks and chart types, a benchmark for evaluating reasoning capabilities over charts, and an assistant achieving state-of-the-art performance on chart QA benchmarks. The repository provides data for chart-text alignment, benchmarking, and instruction tuning, along with existing datasets used in experiments. Additionally, it offers a Gradio demo for the MMCA model.

Tiktoken

Tiktoken is a high-performance implementation focused on token count operations. It provides various encodings like o200k_base, cl100k_base, r50k_base, p50k_base, and p50k_edit. Users can easily encode and decode text using the provided API. The repository also includes a benchmark console app for performance tracking. Contributions in the form of PRs are welcome.

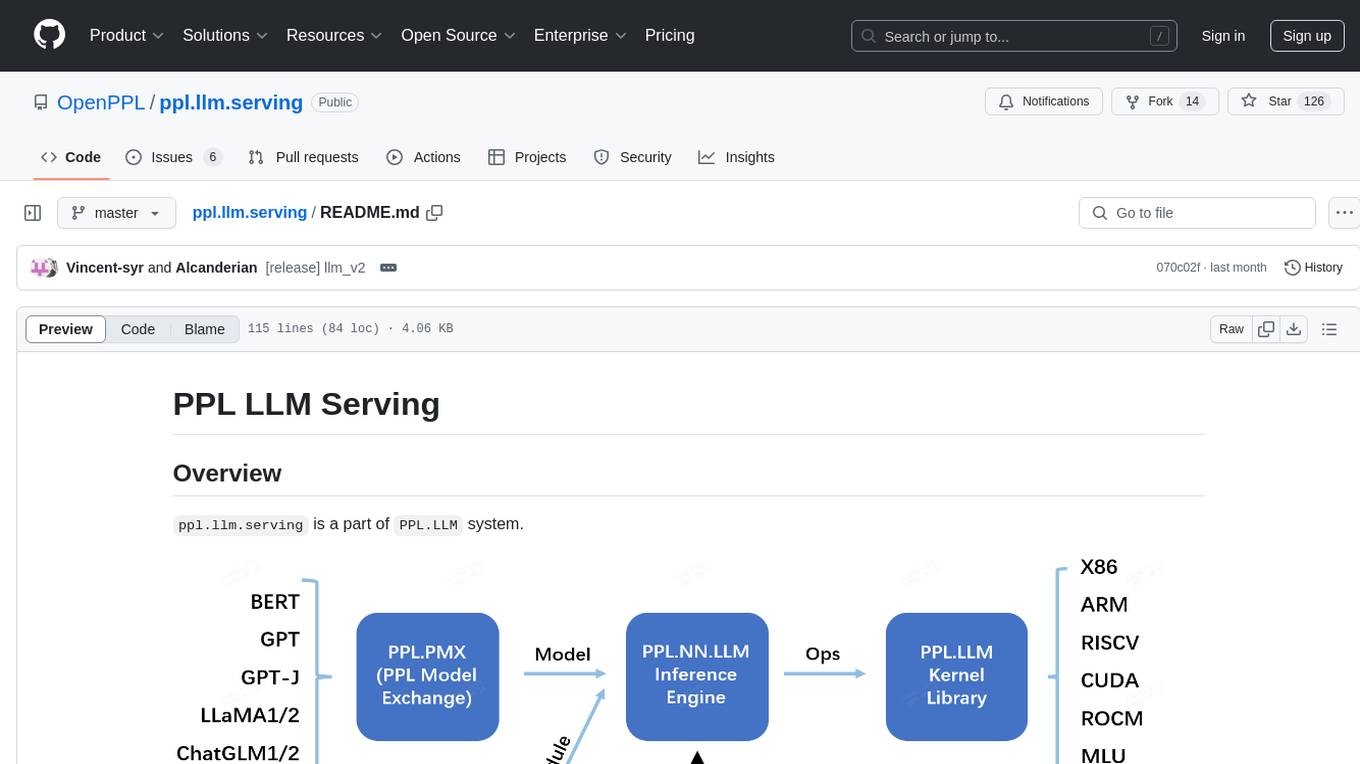

ppl.llm.serving

ppl.llm.serving is a serving component for Large Language Models (LLMs) within the PPL.LLM system. It provides a server based on gRPC and supports inference for LLaMA. The repository includes instructions for prerequisites, quick start guide, model exporting, server setup, client usage, benchmarking, and offline inference. Users can refer to the LLaMA Guide for more details on using this serving component.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models, from images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features:

* Self-contained, with no need for a DBMS or cloud service.

* OpenAPI interface, easy to integrate with existing infrastructure (e.g., Cloud IDE).

* Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.