HuaTuoAI

AI-based image classification for traditional Chinese medicine. This repository contains an artificial intelligence image classification system for Chinese medicine. The goal of the project is to accurately identify and classify Chinese herbs and ingredients from input images.

Stars: 83

HuaTuoAI is an artificial intelligence image classification system specifically designed for traditional Chinese medicine. It utilizes deep learning techniques, such as Convolutional Neural Networks (CNN), to accurately classify Chinese herbs and ingredients based on input images. The project aims to unlock the secrets of plants, depict the unknown realm of Chinese medicine using technology and intelligence, and perpetuate ancient cultural heritage.

README:

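A mysterious treasure is hidden in this repository: an artificial intelligence image classification system built for Chinese medicine. Like a navigator in a fantasy adventure, the project takes in mysterious images and turns them into accurate classifications of Chinese herbs and ingredients. Let us clear the fog of this digital world together, unlock the secrets of plants, and chart the unknown territory of Chinese medicine with technology and intelligence.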

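Copyright 2023 to 2050

Permission is hereby granted, free of charge, to any person obtaining a copy of the HuaTuoAI application (the "Software") to use the Software, subject to the following conditions:

- Users are permitted to copy, modify, merge, publish, distribute, sublicense, and/or sell copies of the Software.

- When using, copying, modifying, and distributing copies of the Software, users must retain the original license notice in a prominent location, including appropriate attribution to the HuaTuoAI application, and must specifically identify the original author by name as 钟智强.

- In derivative works that use code from the Software or draw on its ideas, users must clearly credit the HuaTuoAI application and its corresponding contributions in the relevant code, documentation, or other materials, and provide appropriate attribution to 钟智强, the original author of the HuaTuoAI application.

- Users must not present the HuaTuoAI application as their own work, or imply in any way that the HuaTuoAI application endorses or supports a derivative work.

- Users who wish to make a profit from, or build products on, the Software must obtain written permission from the original author, 钟智强.

- The HuaTuoAI application is provided "as is", without warranties or conditions of any kind, express or implied, including but not limited to warranties or conditions of merchantability, fitness for a particular purpose, and non-infringement. In no event shall the authors or copyright holders of the HuaTuoAI application be liable for any claim, damages, or other liability arising from the use of, or inability to use, the Software.

- The authors or copyright holders of the HuaTuoAI application accept no liability for any claim, damages, or other liability arising from using, copying, modifying, merging, publishing, distributing, sublicensing, and/or selling the Software, whether in contract, tort, or otherwise, even if advised in advance of the possibility of such damages.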

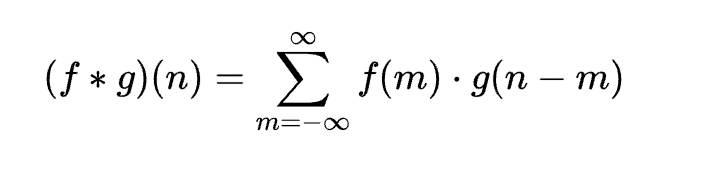

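The license terms and restrictions above apply to the use of all or any part of the functionality of the HuaTuoAI application. Using the HuaTuoAI application constitutes acceptance of the terms and conditions of this license.

- Image classification: The core feature of the project is an image classification model that uses deep learning to classify Chinese herbs and ingredients. The model uses an algorithm called a Convolutional Neural Network (CNN). Specifically, it extracts features from an image through a series of convolutional, pooling, and fully connected layers, then uses the softmax function to assign the image to one of the classes. The mathematical representation of the convolution is as follows:

$$(f * g)(n) = \sum_{m} f(m)\, g(n - m)$$

In this formula, (f * g)(n) is the result of convolving the two functions f and g at position n. The symbol * denotes the convolution operation, and the summation \sum runs over the range of m. At each value of m the formula multiplies f(m) by g(n - m), and the products are added together to give the convolution result (f * g)(n).

- Preprocessing and augmentation: To improve model performance, the project applies robust preprocessing to prepare input images for training and inference. It also uses data augmentation to enlarge the dataset and improve how well the model generalizes across Chinese herbs and ingredients.

- Model evaluation: The repository provides evaluation scripts for assessing the image classification model. They compute accuracy, precision, recall, and F1 score to measure the effectiveness of the classification system.

- Deployment: The repository provides a deployment guide and resources for deploying the image classification model in a production environment, so that the trained model can be used for real-time inference and integrated into existing systems or applications.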
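The convolution sum described in the image classification item can be worked through by hand on short sequences. The following is a minimal one-dimensional sketch in plain Python; the function name `discrete_convolution` and the sample values are illustrative assumptions, not code from the repository. Note that CNN layers in deep learning frameworks typically operate on two-dimensional inputs and compute the closely related cross-correlation, so this shows the formula rather than the project's model.

```python
def discrete_convolution(f, g):
    """Full discrete convolution: (f * g)(n) = sum over m of f(m) * g(n - m)."""
    if not f or not g:
        return []
    result = [0.0] * (len(f) + len(g) - 1)
    for n in range(len(result)):
        for m in range(len(f)):
            k = n - m  # index into g; terms outside g's range contribute nothing
            if 0 <= k < len(g):
                result[n] += f[m] * g[k]
    return result


signal = [1, 2, 3]    # hypothetical input values
kernel = [0, 1, 0.5]  # hypothetical filter weights
print(discrete_convolution(signal, kernel))  # [0.0, 1.0, 2.5, 4.0, 1.5]
```

For example, the entry at n = 2 is f(0)g(2) + f(1)g(1) + f(2)g(0) = 0.5 + 2 + 0 = 2.5.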

| Parameter | Value |
|---|---|
| Loss function | Binary cross-entropy |
| Class mode | Binary |
| Optimizer | RMSProp |
| Deep learning model | Convolutional neural network |
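The table names binary cross-entropy as the loss function. As a rough illustration of what that quantity measures, the sketch below computes it in plain Python. The function name, the clipping constant `eps`, and the sample labels and probabilities are all assumptions made for illustration; none of them come from the project's code.

```python
import math


def binary_cross_entropy(y_true, y_pred, eps=1e-7):
    """Mean of -(y*log(p) + (1-y)*log(1-p)) over all samples."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("y_true and y_pred must be non-empty and equal in length")
    total = 0.0
    for y, p in zip(y_true, y_pred):
        p = min(max(p, eps), 1.0 - eps)  # clip to avoid log(0)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(y_true)


labels = [1, 0, 1, 0]         # hypothetical ground-truth classes
probs = [0.9, 0.1, 0.6, 0.4]  # hypothetical predicted probabilities of class 1
print(binary_cross_entropy(labels, probs))
```

Each term is the negative log of a probability, so for labels restricted to 0 and 1 the result is never negative, and it approaches 0 as predictions approach the labels.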

    Epoch 1/5
    1/1 [==============] - 1s 756ms/step - loss: 4.8716 - accuracy: 0.0000e+00 - val_loss: 4.8392 - val_accuracy: 0.0000e+00
    Epoch 2/5
    1/1 [==============] - 0s 208ms/step - loss: 4.8392 - accuracy: 0.0000e+00 - val_loss: 4.8164 - val_accuracy: 0.2000
    Epoch 3/5
    1/1 [==============] - 0s 200ms/step - loss: 4.8164 - accuracy: 0.2000 - val_loss: 4.7990 - val_accuracy: 0.2000
    Epoch 4/5
    1/1 [==============] - 0s 202ms/step - loss: 4.7990 - accuracy: 0.2000 - val_loss: 4.7838 - val_accuracy: 0.2000
    Epoch 5/5
    1/1 [==============] - 0s 203ms/step - loss: 4.7838 - accuracy: 0.2000 - val_loss: 4.7683 - val_accuracy: 0.2000
    18/18 [============] - 1s 23ms/step - loss: 0.7025 - accuracy: 0.1667 - val_loss: -9.6575 - val_accuracy: 0.2500

1️⃣ Install dependencies

    pip3 install -r requirements.txt

If you run into the following problem (macOS):

    ssl.SSLCertVerificationError: [SSL: CERTIFICATE_VERIFY_FAILED] certificate verify failed: unable to get local issuer certificate

run the following command:

    /Applications/Python\ {your Python version}/Install\ Certificates.command ; exit;

    # I am currently on Python 3.10, so this is my command:
    /Applications/Python\ 3.10/Install\ Certificates.command ; exit;

To train on more data, place the files in the dataset folder [data/images]. For validation, add your input data to the [data/input] folder.

    HuaTuoAI/
    :
    :.. data/
        :.. chinese_medicine.txt
        :.. images/
            :.. 金银花/
                :.. 1.png
                :.. 2.png
                :.. 3.png
            :.. 丁公藤/
                :.. 1.png
                :.. 2.png
                :.. 3.png
            :.. 罗汉果/
                :.. 1.png
                :.. 2.png
                :.. 3.png
            :.. 人参片/
                :.. 1.png
                :.. 2.png
                :.. 3.png
            :.. 绿豆/
                :.. 1.png
                :.. 2.png
                :.. 3.png
        :.. input/
            :.. 金银花/
                :.. 1.png
            :.. 丁公藤/
                :.. 1.png
            :.. 罗汉果/
                :.. 1.png
            :.. 人参片/
                :.. 1.png
            :.. 绿豆/
                :.. 1.png

If you are completely new to Chinese medicine, don't worry: I have compiled a list of Chinese medicines. Click the link here to view it.
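The folder layout above puts each herb's images in a subfolder named after the herb, so the subfolder name can serve as the class label. The sketch below shows one way to read such a tree into (path, label) pairs using only the standard library. The function name `list_labeled_images` and the set of accepted file suffixes are assumptions for illustration; the project's own loading code may work differently.

```python
from pathlib import Path

# Assumed set of image extensions; the layout above only shows .png files.
IMAGE_SUFFIXES = {".png", ".jpg", ".jpeg"}


def list_labeled_images(images_dir):
    """Return (path, label) pairs, using each subfolder name as the class label."""
    root = Path(images_dir)
    samples = []
    for class_dir in sorted(p for p in root.iterdir() if p.is_dir()):
        for image_path in sorted(class_dir.iterdir()):
            if image_path.suffix.lower() in IMAGE_SUFFIXES:
                samples.append((image_path, class_dir.name))
    return samples


if __name__ == "__main__":
    images_root = Path("data/images")
    if images_root.is_dir():
        for path, label in list_labeled_images(images_root):
            print(label, path)
```

The same function can be pointed at `data/input` to list the validation images, since that folder follows the same one-subfolder-per-herb layout.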

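2️⃣ Run the program

    python3 run.py

3️⃣ Run the tests

    python3 -m unittest tests/test.py

Thank you for using this project! Your support is the core driving force behind the continued development of open source.

Every donation goes directly toward:

✅ Server and infrastructure maintenance

✅ New feature development and version iteration

✅ Documentation improvements and community building

Every bit of support adds up. Let us build more powerful open source tools together!

🙌 Thank you for being an important part of the open source community!

💬 After donating, feel free to contact me through social platforms; your name will appear in the project's acknowledgments list!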


Alternative AI tools for HuaTuoAI

Similar Open Source Tools


ai-trend-publish

AI TrendPublish is an AI-based trend discovery and content publishing system that supports multi-source data collection, intelligent summarization, and automatic publishing to WeChat official accounts. It features data collection from various sources, AI-powered content processing using DeepseekAI Together, key information extraction, intelligent title generation, automatic article publishing to WeChat official accounts with custom templates and scheduled tasks, notification system integration with Bark for task status updates and error alerts. The tool offers multiple templates for content customization and is built using Node.js + TypeScript with AI services from DeepseekAI Together, data sources including Twitter/X API and FireCrawl, and uses node-cron for scheduling tasks and EJS as the template engine.

Thor

Thor is a powerful AI model management tool designed for unified management and usage of various AI models. It offers features such as user, channel, and token management, data statistics preview, log viewing, system settings, external chat link integration, and Alipay account balance purchase. Thor supports multiple AI models including OpenAI, Kimi, Starfire, Claudia, Zhilu AI, Ollama, Tongyi Qianwen, AzureOpenAI, and Tencent Hybrid models. It also supports various databases like SqlServer, PostgreSql, Sqlite, and MySql, allowing users to choose the appropriate database based on their needs.

kirara-ai

Kirara AI is a chatbot that supports mainstream large language models and chat platforms. It provides features such as image sending, keyword-triggered replies, multi-account support, personality settings, and support for various chat platforms like QQ, Telegram, Discord, and WeChat. The tool also supports HTTP server for Web API, popular large models like OpenAI and DeepSeek, plugin mechanism, conditional triggers, admin commands, drawing models, voice replies, multi-turn conversations, cross-platform message sending, custom workflows, web management interface, and built-in Frpc intranet penetration.

Streamer-Sales

Streamer-Sales is a large model for live streamers that can explain products based on their characteristics and inspire users to make purchases. It is designed to enhance sales efficiency and user experience, whether for online live sales or offline store promotions. The model can deeply understand product features and create tailored explanations in vivid and precise language, sparking user's desire to purchase. It aims to revolutionize the shopping experience by providing detailed and unique product descriptions to engage users effectively.

meet-libai

The 'meet-libai' project aims to promote and popularize the cultural heritage of the Chinese poet Li Bai by constructing a knowledge graph of Li Bai and training a specialized AI agent using large models. The project includes features such as data preprocessing, knowledge graph construction, question-answering system development, and visual exploration of the graph structure. It also provides code implementations for large models and retrieval-augmented generation (RAG).

goodsKill

The 'goodsKill' project aims to build a complete project framework integrating good technologies and development techniques, mainly focusing on backend technologies. It provides a simulated flash sale project with unified flash sale simulation request interface. The project uses SpringMVC + Mybatis for the overall technology stack, Dubbo3.x for service intercommunication, Nacos for service registration and discovery, and Spring State Machine for data state transitions. It also integrates Spring AI service for simulating flash sale actions.

ddddocr

ddddocr is a Rust version of a simple OCR API server that provides easy deployment for captcha recognition without relying on the OpenCV library. It offers a user-friendly general-purpose captcha recognition Rust library. The tool supports recognizing various types of captchas, including single-line text, transparent black PNG images, target detection, and slider matching algorithms. Users can also import custom OCR training models and utilize the OCR API server for flexible OCR result control and range limitation. The tool is cross-platform and can be easily deployed.

GalTransl

GalTransl is an automated translation tool for Galgames that combines minor innovations in several basic functions with deep utilization of GPT prompt engineering. It is used to create embedded translation patches. The core of GalTransl is a set of automated translation scripts that solve most known issues when using ChatGPT for Galgame translation and improve overall translation quality. It also integrates with other projects to streamline the patch creation process, reducing the learning curve to some extent. Interested users can more easily build machine-translated patches of a certain quality through this project and may try to efficiently build higher-quality localization patches based on this framework.

bce-qianfan-sdk

The Qianfan SDK provides best practices for large model toolchains, allowing AI workflows and AI-native applications to access the Qianfan large model platform elegantly and conveniently. The core capabilities of the SDK fall into three parts: large model reasoning, large model training, and general and extension capabilities:

* `Large model reasoning`: Implements interface encapsulation for reasoning with the Yiyan (ERNIE-Bot) series, open source large models, etc., supporting dialogue, completion, Embedding, etc.
* `Large model training`: Based on platform capabilities, it supports the end-to-end large model training process, including training data, fine-tuning/pre-training, and model services.
* `General and extension`: General capabilities include common AI development tools such as Prompt/Debug/Client. The extension capability builds on the characteristics of Qianfan to adapt to common middleware frameworks.

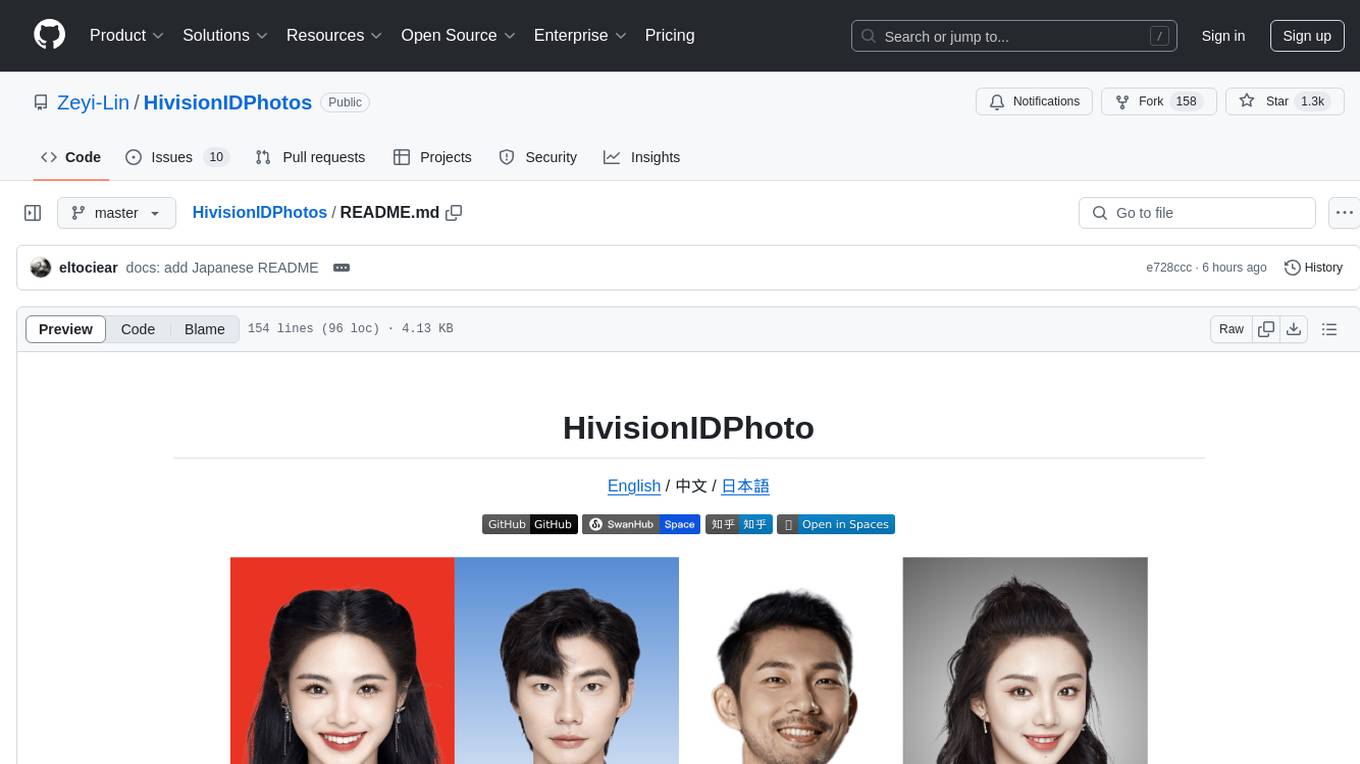

HivisionIDPhotos

HivisionIDPhotos is a practical algorithm for intelligent ID photo creation. It uses a comprehensive model workflow to recognize, cut out, and generate ID photos for various user photo scenarios. The tool offers lightweight cutout, standard ID photo generation for different size specifications, six-inch layout photo generation, beauty enhancement (pending), and intelligent outfit swapping (pending). It aims to solve the problem of producing ID photos at short notice.

prompt-optimizer

Prompt Optimizer is a powerful AI prompt optimization tool that helps you write better AI prompts, improving AI output quality. It supports both web application and Chrome extension usage. The tool features intelligent optimization for prompt words, real-time testing to compare before and after optimization, integration with multiple mainstream AI models, client-side processing for security, encrypted local storage for data privacy, responsive design for user experience, and more.

chatgpt-mirai-qq-bot

Kirara AI is a chatbot that supports mainstream language models and chat platforms. It features various functionalities such as image sending, keyword-triggered replies, multi-account support, content moderation, personality settings, and support for platforms like QQ, Telegram, Discord, and WeChat. It also offers HTTP server capabilities, plugin support, conditional triggers, admin commands, drawing models, voice replies, multi-turn conversations, cross-platform message sending, and custom workflows. The tool can be accessed via HTTP API for integration with other platforms.

TelegramForwarder

Telegram Forwarder is a message forwarding tool that allows you to forward messages from specified chats to other chats without the need for a bot to enter the corresponding channels/groups to listen. It can be used for information stream integration filtering, message reminders, content archiving, and more. The tool supports multiple sources forwarding, keyword filtering in whitelist and blacklist modes, regular expression matching, message content modification, AI processing using major vendors' AI interfaces, media file filtering, and synchronization with a universal forum blocking plugin to achieve three-end blocking.

LotteryMaster

LotteryMaster is a tool designed to fetch lottery data, save it to Excel files, and provide analysis reports including number prediction, number recommendation, and number trends. It supports multiple platforms for access such as Web and mobile App. The tool integrates AI models like Qwen API and DeepSeek for generating analysis reports and trend analysis charts. Users can configure API parameters for controlling randomness, diversity, presence penalty, and maximum tokens. The tool also includes a frontend project based on uniapp + Vue3 + TypeScript for multi-platform applications. It provides a backend service running on Fastify with Node.js, Cheerio.js for web scraping, Pino for logging, xlsx for Excel file handling, and Jest for testing. The project is still in development and some features may not be fully implemented. The analysis reports are for reference only and do not constitute investment advice. Users are advised to use the tool responsibly and avoid addiction to gambling.

For similar tasks


vllm

vLLM is a fast and easy-to-use library for LLM inference and serving. It is designed to be efficient, flexible, and easy to use. vLLM can be used to serve a variety of LLM models, including Hugging Face models. It supports a variety of decoding algorithms, including parallel sampling, beam search, and more. vLLM also supports tensor parallelism for distributed inference and streaming outputs. It is open-source and available on GitHub.

bce-qianfan-sdk

The Qianfan SDK provides best practices for large model toolchains, allowing AI workflows and AI-native applications to access the Qianfan large model platform elegantly and conveniently. The core capabilities of the SDK fall into three parts: large model reasoning, large model training, and general and extension capabilities:

* `Large model reasoning`: Implements interface encapsulation for reasoning with the Yiyan (ERNIE-Bot) series, open source large models, etc., supporting dialogue, completion, Embedding, etc.
* `Large model training`: Based on platform capabilities, it supports the end-to-end large model training process, including training data, fine-tuning/pre-training, and model services.
* `General and extension`: General capabilities include common AI development tools such as Prompt/Debug/Client. The extension capability builds on the characteristics of Qianfan to adapt to common middleware frameworks.

dstack

Dstack is an open-source orchestration engine for running AI workloads in any cloud. It supports a wide range of cloud providers (such as AWS, GCP, Azure, Lambda, TensorDock, Vast.ai, CUDO, RunPod, etc.) as well as on-premises infrastructure. With Dstack, you can easily set up and manage dev environments, tasks, services, and pools for your AI workloads.

tiny-llm-zh

Tiny LLM zh is a project aimed at building a small-parameter Chinese large language model as a quick entry point for learning about large models. The project implements a two-stage training process for large models and subsequent human alignment, including tokenization, pre-training, instruction fine-tuning, human alignment, evaluation, and deployment. It is deployed on the ModelScope Tiny LLM website and features open access to all data and code, including pre-training data and the tokenizer. The project trains a tokenizer using 10GB of Chinese encyclopedia text to build a Tiny LLM vocabulary. It supports training with Transformers and deepspeed, multi-machine and multi-card setups, and Zero optimization techniques. The project has three main branches: llama2_torch, main tiny_llm, and tiny_llm_moe, each with specific modifications and features.

examples

Cerebrium's official examples repository provides practical, ready-to-use examples for building Machine Learning / AI applications on the platform. The repository contains self-contained projects demonstrating specific use cases with detailed instructions on deployment. Examples cover a wide range of categories such as getting started, advanced concepts, endpoints, integrations, large language models, voice, image & video, migrations, application demos, batching, and Python apps.

vector-inference

This repository provides an easy-to-use solution for running inference servers on Slurm-managed computing clusters using vLLM. All scripts in this repository run natively on the Vector Institute cluster environment. Users can deploy models as Slurm jobs, check server status and performance metrics, and shut down models. The repository also supports launching custom models with specific configurations. Additionally, users can send inference requests and set up an SSH tunnel to run inference from a local device.

Gemini

Gemini is an open-source model designed to handle multiple modalities such as text, audio, images, and videos. It utilizes a transformer architecture with special decoders for text and image generation. The model processes input sequences by transforming them into tokens and then decoding them to generate image outputs. Gemini differs from other models by directly feeding image embeddings into the transformer instead of using a visual transformer encoder. The model also includes a component called Codi for conditional generation. Gemini aims to effectively integrate image, audio, and video embeddings to enhance its performance.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models. From images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features:

* Self-contained, with no need for a DBMS or cloud service.
* OpenAPI interface, easy to integrate with existing infrastructure (e.g. Cloud IDE).
* Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.