air

☁️ Live reload for Go apps

Stars: 15778

Air is a live-reloading command line utility for developing Go applications. It provides colorful log output, allows customization of build or any command, supports excluding subdirectories, and allows watching new directories after Air has started. Air can be installed via `go install`, `install.sh`, `goblin.run`, or Docker/Podman. To use Air, simply run `air` in your project root directory and leave it alone to focus on your code. Air has nothing to do with hot-deploy for production.

README:

When I started developing websites in Go with the gin framework, it was a pity that gin lacked a live-reloading function. So I searched around and tried fresh, but it did not seem very flexible, so I decided to rewrite it better. Finally, Air was born. In addition, great thanks to pilu: no fresh, no air :)

Air is yet another live-reloading command line utility for developing Go applications. Run air in your project root directory, leave it alone, and focus on your code.

Note: This tool has nothing to do with hot-deploy for production.

- Colorful log output

- Customize build or any command

- Support excluding subdirectories

- Allow watching new directories after Air started

- Better building process

Support air config fields as arguments:

If you want to configure the build command and the run command, you can pass them as arguments without a config file, as in the following command:

air --build.cmd "go build -o bin/api cmd/run.go" --build.bin "./bin/api"

Use a comma to separate items for arguments that take a list as input:

air --build.cmd "go build -o bin/api cmd/run.go" --build.bin "./bin/api" --build.exclude_dir "templates,build"

With Go 1.22 or higher:

go install github.com/cosmtrek/air@latest

# binary will be $(go env GOPATH)/bin/air

curl -sSfL https://raw.githubusercontent.com/cosmtrek/air/master/install.sh | sh -s -- -b $(go env GOPATH)/bin

# or install it into ./bin/

curl -sSfL https://raw.githubusercontent.com/cosmtrek/air/master/install.sh | sh -s

air -v

Via goblin.run

# binary will be /usr/local/bin/air

curl -sSfL https://goblin.run/github.com/cosmtrek/air | sh

# to put to a custom path

curl -sSfL https://goblin.run/github.com/cosmtrek/air | PREFIX=/tmp sh

Please pull this Docker image cosmtrek/air.

docker/podman run -it --rm \
    -w "<PROJECT>" \
    -e "air_wd=<PROJECT>" \
    -v $(pwd):<PROJECT> \
    -p <PORT>:<APP SERVER PORT> \
    cosmtrek/air \
    -c <CONF>

If you want to use air continuously like a normal app, you can create a function in your ${SHELL}rc (Bash, Zsh, etc…)

air() {
  podman/docker run -it --rm \
    -w "$PWD" -v "$PWD":"$PWD" \
    -p "$AIR_PORT":"$AIR_PORT" \
    docker.io/cosmtrek/air "$@"
}

<PROJECT> is your project path in the container, e.g. /go/example

If you want to enter the container, please add --entrypoint=bash.

For example, one of my projects runs in Docker:

docker run -it --rm \
    -w "/go/src/github.com/cosmtrek/hub" \
    -v $(pwd):/go/src/github.com/cosmtrek/hub \
    -p 9090:9090 \
    cosmtrek/air

Another example:

cd /go/src/github.com/cosmtrek/hub
AIR_PORT=8080 air -c "config.toml"

This will replace $PWD with the current directory; $AIR_PORT is the port to publish, and $@ accepts the arguments of the application itself, for example -c.

For less typing, you could add alias air='~/.air' to your .bashrc or .zshrc.

First enter into your project

cd /path/to/your_project

The simplest usage is to run

# firstly find `.air.toml` in current directory, if not found, use defaults
air -c .air.toml

You can initialize the .air.toml configuration file in the current directory with the default settings by running the following command.

air init

After this, you can just run the air command without additional arguments, and it will use the .air.toml file for configuration.

air

For modifying the configuration refer to the air_example.toml file.
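To try the steps above, Air needs something to rebuild. The following is a minimal sketch of such a program, not anything shipped with Air: the file name main.go and the describe helper are hypothetical. It only prints the arguments it was started with, so each rebuild shows up as a fresh line of output.

```go
// main.go (hypothetical file in the project root)
package main

import (
	"fmt"
	"os"
	"strings"
)

// describe reports the arguments the binary was started with.
func describe(args []string) string {
	if len(args) == 0 {
		return "started with no arguments"
	}
	return "started with arguments: " + strings.Join(args, " ")
}

func main() {
	// os.Args[0] is the path of the built binary; the rest are its arguments.
	fmt.Println(describe(os.Args[1:]))
}
```

With this file in place, editing one of the message strings and saving should trigger a rebuild and rerun, assuming .go files are among the watched extensions in your configuration.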

You can pass arguments for running the built binary by adding them after the air command.

# Will run ./tmp/main bench
air bench

# Will run ./tmp/main server --port 8080
air server --port 8080

You can separate the arguments passed to the air command and the built binary with the -- argument.

# Will run ./tmp/main -h
air -- -h

# Will run air with custom config and pass -h argument to the built binary
air -c .air.toml -- -h

services:
  my-project-with-air:
    image: cosmtrek/air
    # working_dir value has to be the same as the mapped volume
    working_dir: /project-package
    ports:
      - <any>:<any>
    environment:
      - ENV_A=${ENV_A}
      - ENV_B=${ENV_B}
      - ENV_C=${ENV_C}
    volumes:
      - ./project-relative-path/:/project-package/

air -d prints all logs.

Dockerfile

# Choose whatever you want, version >= 1.16

FROM golang:1.22-alpine

WORKDIR /app

RUN go install github.com/cosmtrek/air@latest

COPY go.mod go.sum ./

RUN go mod download

CMD ["air", "-c", ".air.toml"]

docker-compose.yaml

version: "3.8"
services:
  web:
    build:
      context: .
      # Correct the path to your Dockerfile
      dockerfile: Dockerfile
    ports:
      - 8080:3000
    # Important to bind/mount your codebase dir to /app dir for live reload
    volumes:
      - ./:/app

export GOPATH=$HOME/xxxxx

export PATH=$PATH:$GOROOT/bin:$GOPATH/bin

export PATH=$PATH:$(go env GOPATH)/bin # <---- Confirm this line in your profile!!!

Should use \ to escape the `' in the bin. Related issue: #305

[build]

cmd = "/usr/bin/true"

Refer to issue #512 for additional details.

- Ensure your static files are in include_dir, include_ext, or include_file.

- Ensure your HTML has a </body> tag

- Activate the proxy by configuring the following config:

[proxy]

enabled = true

proxy_port = <air proxy port>

app_port = <your server port>

Please note that it requires Go 1.16+ since I use go mod to manage dependencies.

# Fork this project

# Clone it

mkdir -p $GOPATH/src/github.com/cosmtrek

cd $GOPATH/src/github.com/cosmtrek

git clone git@github.com:<YOUR USERNAME>/air.git

# Install dependencies

cd air

make ci

# Explore it and happy hacking!

make install

Pull requests are welcome.

# Checkout to master

git checkout master

# Add the version that needs to be released

git tag v1.xx.x

# Push to remote

git push origin v1.xx.x

# The CI will process and release a new version. Wait about 5 min, and you can fetch the latest version

Huge thanks to the many supporters. I will always remember your kindness.


Similar Open Source Tools


air

Air is a live-reloading command line utility for developing Go applications. It provides colorful log output, customizable build or any command, support for excluding subdirectories, and allows watching new directories after Air started. Users can overwrite specific configuration from arguments and pass runtime arguments for running the built binary. Air can be installed via `go install`, `install.sh`, or `goblin.run`, and can also be used with Docker/Podman. It supports debugging, Docker Compose, and provides a Q&A section for common issues. The tool requires Go 1.16+ for development and welcomes pull requests. Air is released under the GNU General Public License v3.0.

shell-pilot

Shell-pilot is a simple, lightweight shell script designed to interact with various AI models such as OpenAI, Ollama, Mistral AI, LocalAI, ZhipuAI, Anthropic, Moonshot, and Novita AI from the terminal. It enhances intelligent system management without any dependencies, offering features like setting up a local LLM repository, using official models and APIs, viewing history and session persistence, passing input prompts with pipe/redirector, listing available models, setting request parameters, generating and running commands in the terminal, easy configuration setup, system package version checking, and managing system aliases.

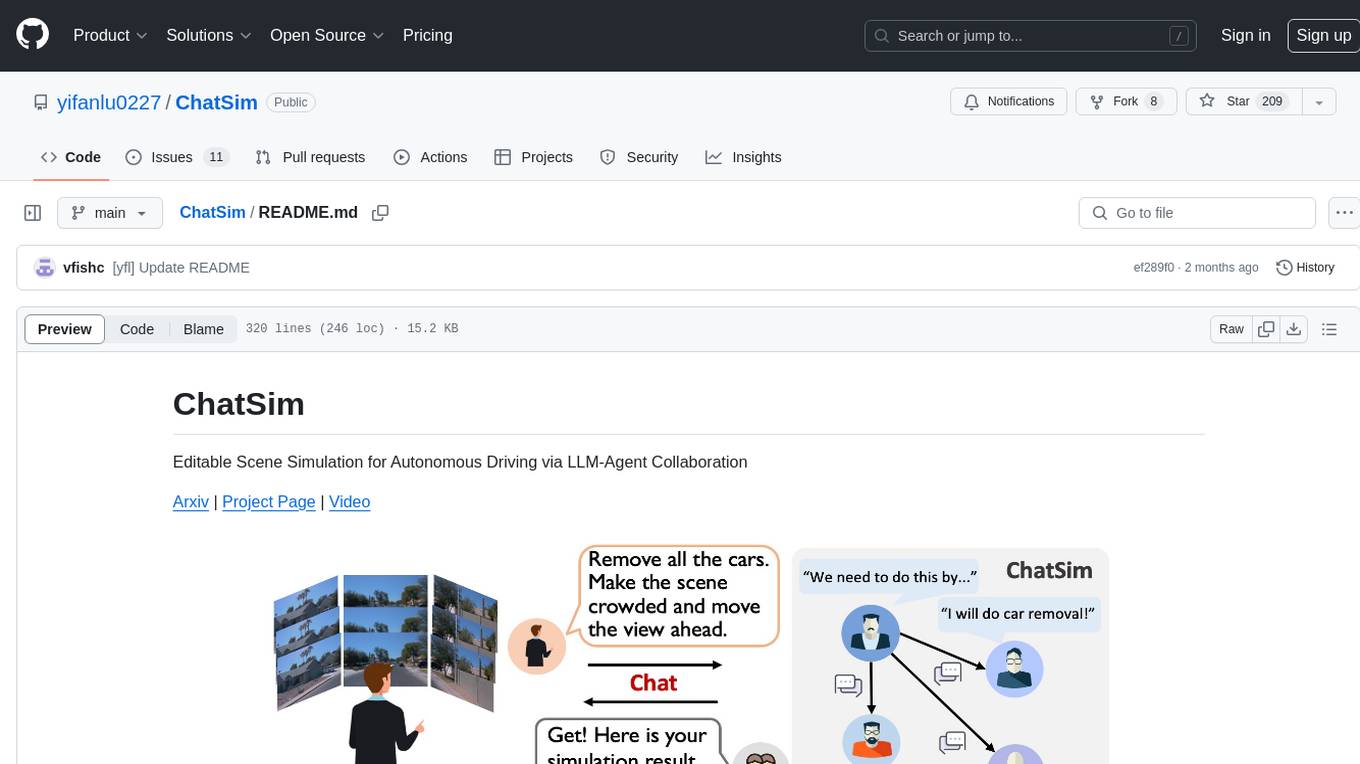

ChatSim

ChatSim is a tool designed for editable scene simulation for autonomous driving via LLM-Agent collaboration. It provides functionalities for setting up the environment, installing necessary dependencies like McNeRF and Inpainting tools, and preparing data for simulation. Users can train models, simulate scenes, and track trajectories for smoother and more realistic results. The tool integrates with Blender software and offers options for training McNeRF models and McLight's skydome estimation network. It also includes a trajectory tracking module for improved trajectory tracking. ChatSim aims to facilitate the simulation of autonomous driving scenarios with collaborative LLM-Agents.

comp

Comp AI is an open-source compliance automation platform designed to assist companies in achieving compliance with standards like SOC 2, ISO 27001, and GDPR. It transforms compliance into an engineering problem solved through code, automating evidence collection, policy management, and control implementation while maintaining data and infrastructure control.

tenere

Tenere is a TUI for large language models (LLMs) written in Rust. It provides syntax highlighting, chat history, saving chats to files, Vim keybindings, copying text from/to clipboard, and supports multiple backends. Users can configure Tenere using a TOML configuration file, set key bindings, and use different LLMs such as ChatGPT, llama.cpp, and ollama. Tenere offers default key bindings for global and prompt modes, with features like starting a new chat, saving chats, scrolling, showing chat history, and quitting the app. Users can interact with the prompt in different modes like Normal, Visual, and Insert, with various key bindings for navigation, editing, and text manipulation.

nosia

Nosia is a platform that allows users to run an AI model on their own data. It is designed to be easy to install and use. Users can follow the provided guides for quickstart, API usage, upgrading, starting, stopping, and troubleshooting. The platform supports custom installations with options for remote Ollama instances, custom completion models, and custom embeddings models. Advanced installation instructions are also available for macOS with a Debian or Ubuntu VM setup. Users can access the platform at 'https://nosia.localhost' and troubleshoot any issues by checking logs and job statuses.

raycast_api_proxy

The Raycast AI Proxy is a tool that acts as a proxy for the Raycast AI application, allowing users to utilize the application without subscribing. It intercepts and forwards Raycast requests to various AI APIs, then reformats the responses for Raycast. The tool supports multiple AI providers and allows for custom model configurations. Users can generate self-signed certificates, add them to the system keychain, and modify DNS settings to redirect requests to the proxy. The tool is designed to work with providers like OpenAI, Azure OpenAI, Google, and more, enabling tasks such as AI chat completions, translations, and image generation.

1.5-Pints

1.5-Pints is a repository that provides a recipe to pre-train models in 9 days, aiming to create AI assistants comparable to Apple OpenELM and Microsoft Phi. It includes model architecture, training scripts, and utilities for 1.5-Pints and 0.12-Pint developed by Pints.AI. The initiative encourages replication, experimentation, and open-source development of Pint by sharing the model's codebase and architecture. The repository offers installation instructions, dataset preparation scripts, model training guidelines, and tools for model evaluation and usage. Users can also find information on finetuning models, converting lit models to HuggingFace models, and running Direct Preference Optimization (DPO) post-finetuning. Additionally, the repository includes tests to ensure code modifications do not disrupt the existing functionality.

k8sgpt

K8sGPT is a tool for scanning your Kubernetes clusters, diagnosing, and triaging issues in simple English. It has SRE experience codified into its analyzers and helps to pull out the most relevant information to enrich it with AI.

alcless

Alcoholless is a lightweight security sandbox for macOS programs, originally designed for securing Homebrew but can be used for any CLI programs. It allows AI agents to run shell commands with reduced risk of breaking the host OS. The tool creates a separate environment for executing commands, syncing changes back to the host directory upon command exit. It uses utilities like sudo, su, pam_launchd, and rsync, with potential future integration of FSKit for file syncing. The tool also generates a sudo configuration for user-specific sandbox access, enabling users to run commands as the sandbox user without a password.

llm-vscode

llm-vscode is an extension designed for all things LLM, utilizing llm-ls as its backend. It offers features such as code completion with 'ghost-text' suggestions, the ability to choose models for code generation via HTTP requests, ensuring prompt size fits within the context window, and code attribution checks. Users can configure the backend, suggestion behavior, keybindings, llm-ls settings, and tokenization options. Additionally, the extension supports testing models like Code Llama 13B, Phind/Phind-CodeLlama-34B-v2, and WizardLM/WizardCoder-Python-34B-V1.0. Development involves cloning llm-ls, building it, and setting up the llm-vscode extension for use.

loz

Loz is a command-line tool that integrates AI capabilities with Unix tools, enabling users to execute system commands and utilize Unix pipes. It supports multiple LLM services like OpenAI API, Microsoft Copilot, and Ollama. Users can run Linux commands based on natural language prompts, enhance Git commit formatting, and interact with the tool in safe mode. Loz can process input from other command-line tools through Unix pipes and automatically generate Git commit messages. It provides features like chat history access, configurable LLM settings, and contribution opportunities.

tiledesk-dashboard

Tiledesk is an open-source live chat platform with integrated chatbots written in Node.js and Express. It is designed to be a multi-channel platform for web, Android, and iOS, and it can be used to increase sales or provide post-sales customer service. Tiledesk's chatbot technology allows for automation of conversations, and it also provides APIs and webhooks for connecting external applications. Additionally, it offers a marketplace for apps and features such as CRM, ticketing, and data export.

backend.ai-webui

Backend.AI Web UI is a user-friendly web and app interface designed to make AI accessible for end-users, DevOps, and SysAdmins. It provides features for session management, inference service management, pipeline management, storage management, node management, statistics, configurations, license checking, plugins, help & manuals, kernel management, user management, keypair management, manager settings, proxy mode support, service information, and integration with the Backend.AI Web Server. The tool supports various devices, offers a built-in websocket proxy feature, and allows for versatile usage across different platforms. Users can easily manage resources, run environment-supported apps, access a web-based terminal, use Visual Studio Code editor, manage experiments, set up autoscaling, manage pipelines, handle storage, monitor nodes, view statistics, configure settings, and more.

AMD-AI

AMD-AI is a repository containing detailed instructions for installing, setting up, and configuring ROCm on Ubuntu systems with AMD GPUs. The repository includes information on installing various tools like Stable Diffusion, ComfyUI, and Oobabooga for tasks like text generation and performance tuning. It provides guidance on adding AMD GPU package sources, installing ROCm-related packages, updating system packages, and finding graphics devices. The instructions are aimed at users with AMD hardware looking to set up their Linux systems for AI-related tasks.


For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

sourcegraph

Sourcegraph is a code search and navigation tool that helps developers read, write, and fix code in large, complex codebases. It provides features such as code search across all repositories and branches, code intelligence for navigation and refactoring, and the ability to fix and refactor code across multiple repositories at once.

open-webui

Open WebUI is an extensible, feature-rich, and user-friendly self-hosted WebUI designed to operate entirely offline. It supports various LLM runners, including Ollama and OpenAI-compatible APIs. For more information, be sure to check out our Open WebUI Documentation.

ray

Ray is a unified framework for scaling AI and Python applications. It consists of a core distributed runtime and a set of AI libraries for simplifying ML compute, including Data, Train, Tune, RLlib, and Serve. Ray runs on any machine, cluster, cloud provider, and Kubernetes, and features a growing ecosystem of community integrations. With Ray, you can seamlessly scale the same code from a laptop to a cluster, making it easy to meet the compute-intensive demands of modern ML workloads.

litgpt

LitGPT is a command-line tool designed to easily finetune, pretrain, evaluate, and deploy 20+ LLMs **on your own data**. It features highly-optimized training recipes for the world's most powerful open-source large-language-models (LLMs).

khoj

Khoj is an open-source, personal AI assistant that extends your capabilities by creating always-available AI agents. You can share your notes and documents to extend your digital brain, and your AI agents have access to the internet, allowing you to incorporate real-time information. Khoj is accessible on Desktop, Emacs, Obsidian, Web, and Whatsapp, and you can share PDF, markdown, org-mode, notion files, and GitHub repositories. You'll get fast, accurate semantic search on top of your docs, and your agents can create deeply personal images and understand your speech. Khoj is self-hostable and always will be.

chronon

Chronon is a platform that simplifies and improves ML workflows by providing a central place to define features, ensuring point-in-time correctness for backfills, simplifying orchestration for batch and streaming pipelines, offering easy endpoints for feature fetching, and guaranteeing and measuring consistency. It offers benefits over other approaches by enabling the use of a broad set of data for training, handling large aggregations and other computationally intensive transformations, and abstracting away the infrastructure complexity of data plumbing.

rag-experiment-accelerator

The RAG Experiment Accelerator is a versatile tool that helps you conduct experiments and evaluations using Azure AI Search and RAG pattern. It offers a rich set of features, including experiment setup, integration with Azure AI Search, Azure Machine Learning, MLFlow, and Azure OpenAI, multiple document chunking strategies, query generation, multiple search types, sub-querying, re-ranking, metrics and evaluation, report generation, and multi-lingual support. The tool is designed to make it easier and faster to run experiments and evaluations of search queries and quality of response from OpenAI, and is useful for researchers, data scientists, and developers who want to test the performance of different search and OpenAI related hyperparameters, compare the effectiveness of various search strategies, fine-tune and optimize parameters, find the best combination of hyperparameters, and generate detailed reports and visualizations from experiment results.