concierge

🚀 The reliability layer for building next gen MCP servers

Stars: 476

Concierge AI is a tool that implements the Model Context Protocol (MCP) to connect AI agents to tools in a standardized way. It ensures deterministic results and reliable tool invocation by progressively disclosing only relevant tools. Users can scaffold new projects or wrap existing MCP servers easily. Concierge works at the MCP protocol level, dynamically changing which tools are returned based on the current workflow step. It allows users to group tools into steps, define transitions, share state between steps, enable semantic search, and run over HTTP. The tool offers features like progressive disclosure, enforced tool ordering, shared state, semantic search, protocol compatibility, session isolation, multiple transports, and a scaffolding CLI for quick project setup.

README:

The Model Context Protocol (MCP) is a standardized way to connect AI agents to tools. Instead of exposing a flat list of every tool on every request, Concierge progressively discloses only what's relevant. Concierge guarantees deterministic results and reliable tool invocation.

[!NOTE] Concierge requires Python 3.9+. We recommend installing with uv for faster dependency resolution, but pip works just as well.

pip install concierge-sdk

Scaffold a new project:

concierge init my-store  # Generate a ready-to-run project
cd my-store              # Enter the project
python main.py           # Start the MCP server

Or wrap an existing MCP server. Two lines, nothing else changes:

# Before
from mcp.server.fastmcp import FastMCP

app = FastMCP("my-server")

# After: just wrap it
from concierge import Concierge

app = Concierge(FastMCP("my-server"))

[!TIP] Concierge works at the MCP protocol level. It dynamically changes which tools are returned by tools/list based on the current workflow step. The agent and client don't need to know Concierge exists; they just see fewer, more relevant tools at each point.
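As a rough mental model of that filtering, and not Concierge's actual implementation, the sketch below keeps a full tool registry and answers a `tools/list`-style request with only the tools mapped to the current step. The names `ALL_TOOLS`, `STAGES`, and `list_tools` are hypothetical and exist only for this illustration.

```python
# Illustrative sketch only: hypothetical names, not Concierge's internals.
ALL_TOOLS = {
    "search_products": "Search the product catalog.",
    "view_product": "Show details for one product.",
    "add_to_cart": "Add a product to the cart.",
    "complete_purchase": "Pay for the items in the cart.",
}

# Assumed mapping from workflow step to the tool names visible in that step.
STAGES = {
    "browse": ["search_products", "view_product"],
    "cart": ["add_to_cart"],
    "checkout": ["complete_purchase"],
}


def list_tools(current_stage: str) -> list[dict]:
    """Return tool descriptors for the current step only."""
    visible = STAGES.get(current_stage, [])
    return [{"name": name, "description": ALL_TOOLS[name]} for name in visible]


if __name__ == "__main__":
    for stage in STAGES:
        print(stage, [tool["name"] for tool in list_tools(stage)])
```

The client issues the same request at every step. Only the server-side notion of the current step changes what comes back.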

from concierge import Concierge
from mcp.server.fastmcp import FastMCP

app = Concierge(FastMCP("my-server"))

# Your @app.tool() decorators stay exactly the same.
# You can additionally add app.stages and app.transitions.

[!NOTE] Wrapping alone gives you progressive tool disclosure immediately. Add app.stages and app.transitions when you want full workflow control, with no changes to your existing tool code.

Instead of exposing everything at once, group related tools together. Only the current step's tools are visible to the agent:

app.stages = {
    "browse": ["search_products", "view_product"],
    "cart": ["add_to_cart", "remove_from_cart", "view_cart"],
    "checkout": ["apply_coupon", "complete_purchase"],
}

Control which steps can follow which. The agent moves forward (or backward) only along paths you allow:

app.transitions = {
    "browse": ["cart"],              # Can only move to cart
    "cart": ["browse", "checkout"],  # Can go back or proceed
    "checkout": [],                  # Terminal step
}

Share state between steps

Pass data between workflow steps without round-tripping through the LLM. State is session-scoped and works across distributed replicas:

# In the "browse" step - save a selection
app.set_state("selected_product", {"id": "p1", "name": "Laptop"})

# In the "cart" step - retrieve it directly
product = app.get_state("selected_product")

Scale with semantic search

When you have hundreds of tools, enable semantic search to collapse your entire API behind two meta-tools:

from concierge import Concierge, Config, ProviderType

app = Concierge("large-api", config=Config(
    provider_type=ProviderType.SEARCH,
    max_results=5,
))

No matter how many tools you register, the agent only ever sees:

search_tools(query: str) → Find tools by description

call_tool(tool_name: str, args: dict) → Execute a discovered tool
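To make the two meta-tool pattern concrete, here is a minimal self-contained sketch. It is an assumption-laden stand-in: a plain word-overlap score replaces real semantic search, and `REGISTRY` and `register` are hypothetical helpers, not part of Concierge. Only the `search_tools` and `call_tool` names and parameters come from the text above.

```python
# Illustrative sketch only: keyword overlap stands in for real semantic search.
from typing import Any, Callable

# Hypothetical registry: tool name -> (description, callable).
REGISTRY: dict[str, tuple[str, Callable[..., Any]]] = {}


def register(name: str, description: str, fn: Callable[..., Any]) -> None:
    """Add a tool to the registry (hypothetical helper)."""
    REGISTRY[name] = (description, fn)


def search_tools(query: str, max_results: int = 5) -> list[str]:
    """Rank tools by how many query words appear in their description."""
    words = set(query.lower().split())
    scored = []
    for name, (description, _fn) in REGISTRY.items():
        score = len(words & set(description.lower().split()))
        if score > 0:
            scored.append((score, name))
    # Highest score first; ties broken alphabetically for stable output.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [name for _score, name in scored[:max_results]]


def call_tool(tool_name: str, args: dict) -> Any:
    """Execute a tool previously discovered via search_tools."""
    _description, fn = REGISTRY[tool_name]
    return fn(**args)


register("get_weather", "get the current weather for a city",
         lambda city: f"Sunny in {city}")
register("convert_currency", "convert an amount between two currencies",
         lambda amount, rate: amount * rate)

if __name__ == "__main__":
    print(search_tools("weather in a city"))
    print(call_tool("get_weather", {"city": "Lisbon"}))
```

The point of the design is that the agent's context holds two tool schemas regardless of registry size. A production version would presumably rank with embeddings rather than word overlap.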

Concierge supports multiple transports. Use streamable HTTP for web deployments:

# Streamable HTTP (recommended for web)
http_app = app.streamable_http_app()

# Or run over stdio (default, for CLI-based clients)
app.run()

[!TIP] All of the above (stages, transitions, state, semantic search) are optional and independent. Use any combination. Start simple and add structure as your workflow grows.

- Progressive Disclosure: Only expose the tools that matter right now. Fewer tools in context means less confusion and lower cost.
- Enforced Tool Ordering: Define which tools unlock which. The agent follows your business logic, not its own guesses.
- Shared State: Pass data between workflow steps server-side. No tool-call chaining through the LLM, no re-injecting data into prompts.
- Semantic Search: For large APIs (100+ tools), collapse everything behind two meta-tools. The agent searches by description, then invokes.
- Protocol Compatible: Wraps any MCP server. Your existing @app.tool() decorators, resources, and prompts work unchanged.
- Session Isolation: Each conversation gets its own workflow state. Atomic, consistent, works across distributed replicas.
- Multiple Transports: Run over stdio, streamable HTTP, or SSE. Deploy anywhere: serverless, containers, bare metal.
- Scaffolding CLI: concierge init generates a ready to run project with tools, stages, and transitions wired up ready to go.
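The Shared State and Session Isolation entries above can be pictured with a small per-session key-value store. This is a single-process sketch under assumed names (`SessionState`, an explicit `session_id` argument). It does not attempt the cross-replica behavior the README describes, and it is not how Concierge stores state.

```python
# Illustrative sketch only: an in-memory, per-session key-value store.
from collections import defaultdict
from typing import Any


class SessionState:
    """Keeps a separate state dictionary for each session ID."""

    def __init__(self) -> None:
        self._data: dict[str, dict[str, Any]] = defaultdict(dict)

    def set_state(self, session_id: str, key: str, value: Any) -> None:
        self._data[session_id][key] = value

    def get_state(self, session_id: str, key: str, default: Any = None) -> Any:
        return self._data[session_id].get(key, default)


store = SessionState()
store.set_state("session-a", "selected_product", {"id": "p1", "name": "Laptop"})

if __name__ == "__main__":
    # The value is visible in the session that wrote it...
    print(store.get_state("session-a", "selected_product"))
    # ...and absent from every other session.
    print(store.get_state("session-b", "selected_product"))
```

Because values stay on the server, a later tool call in the same session can read them without the model having to repeat them in its arguments.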

A complete e-commerce workflow in under 30 lines:

from concierge import Concierge

app = Concierge("shopping")

@app.tool()
def search_products(query: str) -> dict:
    """Search the product catalog."""
    return {"products": [{"id": "p1", "name": "Laptop", "price": 999}]}

@app.tool()
def add_to_cart(product_id: str) -> dict:
    """Add a product to the cart."""
    cart = app.get_state("cart", [])
    cart.append(product_id)
    app.set_state("cart", cart)
    return {"cart": cart}

@app.tool()
def checkout(payment_method: str) -> dict:
    """Complete the purchase."""
    cart = app.get_state("cart", [])
    return {"order_id": "ORD-123", "items": len(cart), "status": "confirmed"}

app.stages = {
    "browse": ["search_products"],
    "cart": ["add_to_cart"],
    "checkout": ["checkout"],
}

app.transitions = {
    "browse": ["cart"],
    "cart": ["browse", "checkout"],
    "checkout": [],
}

app.run()  # Start over stdio

The agent starts at browse. It can move to cart, then to checkout. It cannot call checkout from browse. Concierge enforces this at the protocol level; no prompt engineering is required.
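The enforcement described above behaves like a small state machine. The sketch below mirrors the example's stages and transitions in plain Python so the rules can be exercised without a running server. The `Workflow` class and its `can_call` and `move_to` methods are hypothetical; how an agent actually triggers a step change in Concierge is not shown in this README.

```python
# Illustrative sketch only: a tiny state machine mirroring the example's
# stages and transitions. Not Concierge's implementation.
STAGES = {
    "browse": ["search_products"],
    "cart": ["add_to_cart"],
    "checkout": ["checkout"],
}
TRANSITIONS = {
    "browse": ["cart"],
    "cart": ["browse", "checkout"],
    "checkout": [],
}


class Workflow:
    def __init__(self, start: str = "browse") -> None:
        self.stage = start

    def can_call(self, tool_name: str) -> bool:
        """A tool is callable only if it belongs to the current stage."""
        return tool_name in STAGES[self.stage]

    def move_to(self, target: str) -> None:
        """Change stage, rejecting any transition that is not declared."""
        if target not in TRANSITIONS[self.stage]:
            raise ValueError(f"transition {self.stage} -> {target} is not allowed")
        self.stage = target


if __name__ == "__main__":
    wf = Workflow()
    print(wf.can_call("checkout"))  # checkout is not available while browsing
    wf.move_to("cart")
    wf.move_to("checkout")
    print(wf.can_call("checkout"))  # available once the checkout stage is reached
```

Trying `move_to("checkout")` directly from `browse` raises `ValueError`, which corresponds to the rule that checkout cannot be reached without passing through cart.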

Full guides, API reference, and deployment patterns are available at docs.getconcierge.app.

- Discord: Ask questions, share what you're building, get help.

- Issues: Report bugs or request features.

- Discussions: Longer form discussions and RFCs.

We are building the agentic web. Come join us.

Alternative AI tools for concierge

Similar Open Source Tools

memobase

Memobase is a user profile-based memory system designed to enhance Generative AI applications by enabling them to remember, understand, and evolve with users. It provides structured user profiles, scalable profiling, easy integration with existing LLM stacks, batch processing for speed, and is production-ready. Users can manage users, insert data, get memory profiles, and track user preferences and behaviors. Memobase is ideal for applications that require user analysis, tracking, and personalized interactions.

FlashLearn

FlashLearn is a tool that provides a simple interface and orchestration for incorporating Agent LLMs into workflows and ETL pipelines. It allows data transformations, classifications, summarizations, rewriting, and custom multi-step tasks using LLMs. Each step and task has a compact JSON definition, making pipelines easy to understand and maintain. FlashLearn supports LiteLLM, Ollama, OpenAI, DeepSeek, and other OpenAI-compatible clients.

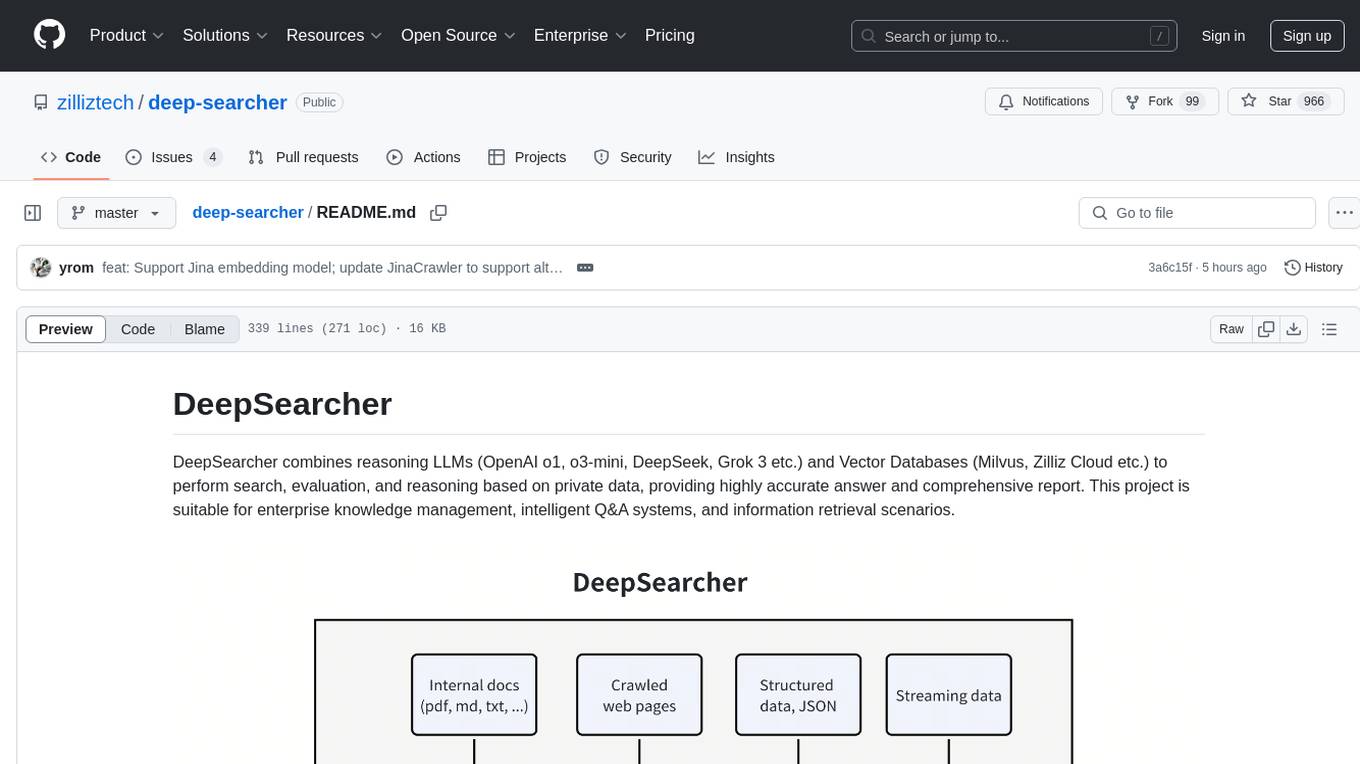

deep-searcher

DeepSearcher is a tool that combines reasoning LLMs and Vector Databases to perform search, evaluation, and reasoning based on private data. It is suitable for enterprise knowledge management, intelligent Q&A systems, and information retrieval scenarios. The tool maximizes the utilization of enterprise internal data while ensuring data security, supports multiple embedding models, and provides support for multiple LLMs for intelligent Q&A and content generation. It also includes features like private data search, vector database management, and document loading with web crawling capabilities under development.

pipelex

Pipelex is an open-source devtool designed to transform how users build repeatable AI workflows. It acts as a Docker or SQL for AI operations, allowing users to create modular 'pipes' using different LLMs for structured outputs. These pipes can be connected sequentially, in parallel, or conditionally to build complex knowledge transformations from reusable components. With Pipelex, users can share and scale proven methods instantly, saving time and effort in AI workflow development.

npi

NPi is an open-source platform providing Tool-use APIs to empower AI agents with the ability to take action in the virtual world. It is currently under active development, and the APIs are subject to change in future releases. NPi offers a command line tool for installation and setup, along with a GitHub app for easy access to repositories. The platform also includes a Python SDK and examples like Calendar Negotiator and Twitter Crawler. Join the NPi community on Discord to contribute to the development and explore the roadmap for future enhancements.

vinagent

Vinagent is a lightweight and flexible library designed for building smart agent assistants across various industries. It provides a simple yet powerful foundation for creating AI-powered customer service bots, data analysis assistants, or domain-specific automation agents. With its modular tool system, users can easily extend their agent's capabilities by integrating a wide range of tools that are self-contained, well-documented, and can be registered dynamically. Vinagent allows users to scale and adapt their agents to new tasks or environments effortlessly.

swarmzero

SwarmZero SDK is a library that simplifies the creation and execution of AI Agents and Swarms of Agents. It supports various LLM Providers such as OpenAI, Azure OpenAI, Anthropic, MistralAI, Gemini, Nebius, and Ollama. Users can easily install the library using pip or poetry, set up the environment and configuration, create and run Agents, collaborate with Swarms, add tools for complex tasks, and utilize retriever tools for semantic information retrieval. Sample prompts are provided to help users explore the capabilities of the agents and swarms. The SDK also includes detailed examples and documentation for reference.

redis-vl-python

The Python Redis Vector Library (RedisVL) is a tailor-made client for AI applications leveraging Redis. It enhances applications with Redis' speed, flexibility, and reliability, incorporating capabilities like vector-based semantic search, full-text search, and geo-spatial search. The library bridges the gap between the emerging AI-native developer ecosystem and the capabilities of Redis by providing a lightweight, elegant, and intuitive interface. It abstracts the features of Redis into a grammar that is more aligned to the needs of today's AI/ML Engineers or Data Scientists.

ai-gateway

LangDB AI Gateway is an open-source enterprise AI gateway built in Rust. It provides a unified interface to all LLMs using the OpenAI API format, focusing on high performance, enterprise readiness, and data control. The gateway offers features like comprehensive usage analytics, cost tracking, rate limiting, data ownership, and detailed logging. It supports various LLM providers and provides OpenAI-compatible endpoints for chat completions, model listing, embeddings generation, and image generation. Users can configure advanced settings, such as rate limiting, cost control, dynamic model routing, and observability with OpenTelemetry tracing. The gateway can be run with Docker Compose and integrated with MCP tools for server communication.

letta

Letta is an open source framework for building stateful LLM applications. It allows users to build stateful agents with advanced reasoning capabilities and transparent long-term memory. The framework is white box and model-agnostic, enabling users to connect to various LLM API backends. Letta provides a graphical interface, the Letta ADE, for creating, deploying, interacting with, and observing agents. Users can access Letta via the REST API, the Python and TypeScript SDKs, and the ADE. Letta supports persistence by storing agent data in a database, with PostgreSQL recommended for data migrations. Users can install Letta using Docker or pip, with Docker defaulting to PostgreSQL and pip defaulting to SQLite. Letta also offers a CLI tool for interacting with agents. The project is open source and welcomes contributions from the community.

sparkle

Sparkle is a tool that streamlines the process of building AI-driven features in applications using Large Language Models (LLMs). It guides users through creating and managing agents, defining tools, and interacting with LLM providers like OpenAI. Sparkle allows customization of LLM provider settings, model configurations, and provides a seamless integration with Sparkle Server for exposing agents via an OpenAI-compatible chat API endpoint.

wtf.nvim

wtf.nvim is a Neovim plugin that enhances diagnostic debugging by providing explanations and solutions for code issues using ChatGPT. It allows users to search the web for answers directly from Neovim, making the debugging process faster and more efficient. The plugin works with any language that has LSP support in Neovim, offering AI-powered diagnostic assistance and seamless integration with various resources for resolving coding problems.

comet-llm

CometLLM is a tool to log and visualize your LLM prompts and chains. Use CometLLM to identify effective prompt strategies, streamline your troubleshooting, and ensure reproducible workflows!

parea-sdk-py

Parea AI provides an SDK to evaluate and monitor AI applications. It allows users to test, evaluate, and monitor their AI models by defining and running experiments. The SDK also enables logging and observability for AI applications, as well as deploying prompts to facilitate collaboration between engineers and subject-matter experts. Users can automatically log calls to OpenAI and Anthropic, create hierarchical traces of their applications, and deploy prompts for integration into their applications.

langchainrb

Langchain.rb is a Ruby library that makes it easy to build LLM-powered applications. It provides a unified interface to a variety of LLMs, vector search databases, and other tools, making it easy to build and deploy RAG (Retrieval Augmented Generation) systems and assistants. Langchain.rb is open source and available under the MIT License.

For similar tasks

git-mcp

GitMCP is a free, open-source service that transforms any GitHub project into a remote Model Context Protocol (MCP) endpoint, allowing AI assistants to access project documentation effortlessly. It empowers AI with semantic search capabilities, requires zero setup, is completely free and private, and serves as a bridge between GitHub repositories and AI assistants.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise-level infrastructure that can power any LLM production use case. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers
- Track LLM usage on a per user and per organization basis
- Block or redact requests containing PII
- Improve LLM reliability with failovers, retries and caching
- Distribute API keys with rate limits and cost limits for internal development/production use cases
- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.