letta

Letta is the platform for building stateful agents: open AI with advanced memory that can learn and self-improve over time.

Stars: 18501

Letta is an open source framework for building stateful LLM applications. It lets users build stateful agents with advanced reasoning capabilities and transparent long-term memory. The framework is white box and model-agnostic, so users can connect to various LLM API backends. Letta provides a graphical interface, the Letta ADE, for creating, deploying, interacting with, and observing agents. Users can access Letta via the REST API, the Python and TypeScript SDKs, and the ADE. Letta persists agent data in a database, with PostgreSQL recommended for data migrations. Users can install Letta using Docker or pip; Docker defaults to PostgreSQL and pip defaults to SQLite. Letta also offers a CLI tool for interacting with agents, and the project welcomes contributions from the community.

README:


- Developer Documentation: Learn how to create agents that learn using Python / TypeScript

- Agent Development Environment (ADE): A no-code UI for building stateful agents

- Letta Desktop: A fully-local version of the ADE, available on macOS and Windows

- Letta Cloud: The fastest way to try Letta, with agents running in the cloud

Or install the Letta SDK (available for both Python and TypeScript):

```shell
pip install letta-client
```

```shell
npm install @letta-ai/letta-client
```

In the example below, we'll create a stateful agent with two memory blocks: one for itself (the persona block) and one for the human. We'll initialize the human memory block with incorrect information and correct the agent in our first message, which will trigger the agent to update its own memory with a tool call.

To run the examples, you'll need a LETTA_API_KEY from Letta Cloud, or you can run your own self-hosted server (see our guide).

```python
from letta_client import Letta

client = Letta(token="LETTA_API_KEY")
# client = Letta(base_url="http://localhost:8283")  # if self-hosting, set your base_url

agent_state = client.agents.create(
    model="openai/gpt-4.1",
    embedding="openai/text-embedding-3-small",
    memory_blocks=[
        {
            "label": "human",
            "value": "The human's name is Chad. They like vibe coding."
        },
        {
            "label": "persona",
            "value": "My name is Sam, a helpful assistant."
        }
    ],
    tools=["web_search", "run_code"]
)

print(agent_state.id)
# agent-d9be...0846

response = client.agents.messages.create(
    agent_id=agent_state.id,
    messages=[
        {
            "role": "user",
            "content": "Hey, nice to meet you, my name is Brad."
        }
    ]
)

# the agent will think, then edit its memory using a tool
for message in response.messages:
    print(message)
```

```typescript
import { LettaClient } from '@letta-ai/letta-client'

const client = new LettaClient({ token: "LETTA_API_KEY" });
// const client = new LettaClient({ baseUrl: "http://localhost:8283" }); // if self-hosting, set your baseUrl

const agentState = await client.agents.create({
  model: "openai/gpt-4.1",
  embedding: "openai/text-embedding-3-small",
  memoryBlocks: [
    {
      label: "human",
      value: "The human's name is Chad. They like vibe coding."
    },
    {
      label: "persona",
      value: "My name is Sam, a helpful assistant."
    }
  ],
  tools: ["web_search", "run_code"]
});

console.log(agentState.id);
// agent-d9be...0846

const response = await client.agents.messages.create(
  agentState.id, {
    messages: [
      {
        role: "user",
        content: "Hey, nice to meet you, my name is Brad."
      }
    ]
  }
);

// the agent will think, then edit its memory using a tool
for (const message of response.messages) {
  console.log(message);
}
```

Letta is made by the creators of MemGPT, a research paper that introduced the concept of the "LLM Operating System" for memory management. The core concepts in Letta for designing stateful agents follow the MemGPT LLM OS principles:

- Memory Hierarchy: Agents have self-editing memory that is split between in-context memory and out-of-context memory

- Memory Blocks: The agent's in-context memory is composed of persistent editable memory blocks

- Agentic Context Engineering: Agents control the context window by using tools to edit, delete, or search for memory

- Perpetual Self-Improving Agents: Every "agent" is a single entity that has a perpetual (infinite) message history
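These concepts can be illustrated with a toy, in-process model. The sketch below is not Letta's implementation. The class names, the `memory_replace` and `archive_search` tools, and the character budget are all hypothetical. Memory blocks are always rendered into the context (in-context memory). Older messages are evicted to an out-of-context archive that the agent can search through a tool.

```python
from dataclasses import dataclass, field


@dataclass
class Block:
    """A labeled, editable piece of in-context memory (hypothetical model)."""
    label: str
    value: str


@dataclass
class ToyAgent:
    blocks: dict[str, Block]
    context_budget: int = 200  # max characters of message history kept in context (illustrative)
    messages: list[str] = field(default_factory=list)  # in-context message history
    archive: list[str] = field(default_factory=list)   # out-of-context storage

    def memory_replace(self, label: str, old: str, new: str) -> None:
        """Hypothetical tool the agent calls to edit one of its own memory blocks."""
        block = self.blocks[label]
        if old not in block.value:
            raise ValueError(f"{old!r} not found in block {label!r}")
        block.value = block.value.replace(old, new)

    def archive_search(self, query: str) -> list[str]:
        """Hypothetical tool the agent calls to search out-of-context memory."""
        return [m for m in self.archive if query.lower() in m.lower()]

    def add_message(self, text: str) -> None:
        """Append a message, evicting the oldest ones once the budget is exceeded."""
        self.messages.append(text)
        while sum(len(m) for m in self.messages) > self.context_budget and len(self.messages) > 1:
            self.archive.append(self.messages.pop(0))

    def compile_context(self) -> str:
        """Render memory blocks first, then the recent message history."""
        rendered = [f"<{b.label}>{b.value}</{b.label}>" for b in self.blocks.values()]
        return "\n".join(rendered + self.messages)


agent = ToyAgent(blocks={
    "human": Block("human", "The human's name is Chad."),
    "persona": Block("persona", "My name is Sam, a helpful assistant."),
})
agent.add_message("user: Hey, nice to meet you, my name is Brad.")
# A real model would decide to call the edit tool; here we call it directly.
agent.memory_replace("human", "Chad", "Brad")
print(agent.compile_context())
```

In this model, nothing in the context window changes unless the agent calls a tool. That is the "agentic context engineering" idea in its simplest form.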

Multi-agent shared memory (full guide)

A single memory block can be attached to multiple agents, which enables powerful multi-agent shared memory setups. For example, you can create two agents that each have their own independent memory blocks in addition to a shared memory block.
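Conceptually, sharing means that both agents hold a reference to the same block rather than a copy. An edit made through one agent is then immediately visible to the other. The minimal sketch below assumes nothing about Letta's internals. `Agent`, `attach`, `read`, and `write` are hypothetical names.

```python
from dataclasses import dataclass, field


@dataclass
class Block:
    label: str
    value: str


@dataclass
class Agent:
    name: str
    blocks: list[Block] = field(default_factory=list)

    def attach(self, block: Block) -> None:
        # Store a reference, not a copy, so edits are visible to every holder.
        self.blocks.append(block)

    def read(self, label: str) -> str:
        return next(b.value for b in self.blocks if b.label == label)

    def write(self, label: str, value: str) -> None:
        next(b for b in self.blocks if b.label == label).value = value


shared = Block("organization", "Nothing here yet, we should update this over time.")
supervisor = Agent("supervisor", [Block("persona", "I am a supervisor")])
worker = Agent("worker", [Block("persona", "I am a worker")])
supervisor.attach(shared)
worker.attach(shared)

# The supervisor edits the shared block; the worker sees the change.
supervisor.write("organization", "Team standup moves to 10am.")
print(worker.read("organization"))
```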

```python
# create a shared memory block
shared_block = client.blocks.create(
    label="organization",
    description="Shared information between all agents within the organization.",
    value="Nothing here yet, we should update this over time."
)

# create a supervisor agent
supervisor_agent = client.agents.create(
    model="anthropic/claude-3-5-sonnet-20241022",
    embedding="openai/text-embedding-3-small",
    # blocks created for this agent
    memory_blocks=[{"label": "persona", "value": "I am a supervisor"}],
    # pre-existing shared block that is "attached" to this agent
    block_ids=[shared_block.id],
)

# create a worker agent
worker_agent = client.agents.create(
    model="openai/gpt-4.1-mini",
    embedding="openai/text-embedding-3-small",
    # blocks created for this agent
    memory_blocks=[{"label": "persona", "value": "I am a worker"}],
    # pre-existing shared block that is "attached" to this agent
    block_ids=[shared_block.id],
)
```

```typescript
// create a shared memory block
const sharedBlock = await client.blocks.create({
  label: "organization",
  description: "Shared information between all agents within the organization.",
  value: "Nothing here yet, we should update this over time."
});

// create a supervisor agent
const supervisorAgent = await client.agents.create({
  model: "anthropic/claude-3-5-sonnet-20241022",
  embedding: "openai/text-embedding-3-small",
  // blocks created for this agent
  memoryBlocks: [{ label: "persona", value: "I am a supervisor" }],
  // pre-existing shared block that is "attached" to this agent
  blockIds: [sharedBlock.id]
});

// create a worker agent
const workerAgent = await client.agents.create({
  model: "openai/gpt-4.1-mini",
  embedding: "openai/text-embedding-3-small",
  // blocks created for this agent
  memoryBlocks: [{ label: "persona", value: "I am a worker" }],
  // pre-existing shared block that is "attached" to this agent
  blockIds: [sharedBlock.id]
});
```

Sleep-time agents (full guide)

In Letta, you can create special sleep-time agents that share the memory of your primary agents but run in the background (like an agent's "subconscious"). You can think of sleep-time agents as a special form of multi-agent architecture.

To enable sleep-time agents for your agent, set the enable_sleeptime flag to true when creating your agent. This will automatically create a sleep-time agent in addition to your main agent which will handle the memory editing, instead of your primary agent.
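One way to picture this division of labor is the toy sketch below. It is not Letta's actual scheduling. The primary agent only appends to the conversation. A separate sleep-time pass later reads the messages it has not yet processed and rewrites a shared memory block. `SharedState`, `primary_turn`, and `sleeptime_pass` are hypothetical, and the keyword check stands in for an LLM call.

```python
from dataclasses import dataclass, field


@dataclass
class SharedState:
    transcript: list[str] = field(default_factory=list)
    memory: dict[str, str] = field(default_factory=dict)
    processed: int = 0  # how many transcript messages the sleep-time pass has consumed


def primary_turn(state: SharedState, user_msg: str) -> None:
    """The primary agent handles the conversation and does no memory editing itself."""
    state.transcript.append(user_msg)


def sleeptime_pass(state: SharedState) -> int:
    """Background pass: fold new messages into memory and return how many were processed."""
    new = state.transcript[state.processed:]
    for msg in new:
        # Stand-in for an LLM deciding what is worth remembering.
        if msg.lower().startswith("i like "):
            current = state.memory.get("human", "")
            state.memory["human"] = (current + "; " if current else "") + msg
    state.processed = len(state.transcript)
    return len(new)


state = SharedState()
primary_turn(state, "I like vibe coding")
primary_turn(state, "What's the weather today?")
processed = sleeptime_pass(state)
print(processed, state.memory)
```

Tracking a `processed` cursor lets the background pass run as often as you like without handling the same message twice.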

```python
agent_state = client.agents.create(
    ...
    enable_sleeptime=True,  # <- enable this flag to create a sleep-time agent
)
```

```typescript
const agentState = await client.agents.create({
  ...
  enableSleeptime: true // <- enable this flag to create a sleep-time agent
});
```

Saving and sharing agents with Agent File (.af) (full guide)

In Letta, all agent data is persisted to disk (Postgres or SQLite), and can be easily imported and exported using the open source Agent File (.af) file format. You can use Agent File to checkpoint your agents, as well as move your agents (and their complete state/memories) between different Letta servers, e.g. between self-hosted Letta and Letta Cloud.
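To illustrate what checkpointing means, here is a minimal sketch that serializes an agent's state to JSON and restores it. The schema and the `export_agent` / `import_agent` helpers are hypothetical and are not the Agent File (.af) format. With Letta you would use the SDK's import and export calls instead.

```python
import json
import tempfile
from pathlib import Path


def export_agent(state: dict, path: Path) -> None:
    """Write a checkpoint of the agent state (hypothetical schema, not .af)."""
    path.write_text(json.dumps({"version": 1, "agent": state}, indent=2))


def import_agent(path: Path) -> dict:
    """Load a checkpoint, rejecting versions this sketch does not understand."""
    payload = json.loads(path.read_text())
    if payload.get("version") != 1:
        raise ValueError(f"unsupported checkpoint version: {payload.get('version')}")
    return payload["agent"]


state = {
    "model": "openai/gpt-4.1",
    "memory_blocks": [{"label": "human", "value": "The human's name is Brad."}],
    "messages": [{"role": "user", "content": "Hey, nice to meet you, my name is Brad."}],
}

with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "agent.json"
    export_agent(state, path)
    restored = import_agent(path)

print(restored == state)  # True
```

The explicit version field means a reader can refuse a file it does not understand instead of silently misloading it.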


```python
# Import your .af file from any location
with open("/path/to/agent/file.af", "rb") as f:
    agent_state = client.agents.import_agent_serialized(file=f)

print(f"Imported agent: {agent_state.id}")

# Export your agent into a serialized schema object (which you can write to a file)
schema = client.agents.export_agent_serialized(agent_id="<AGENT_ID>")
```

```typescript
import { readFileSync } from 'fs';
import { Blob } from 'buffer';

// Import your .af file from any location
const file = new Blob([readFileSync('/path/to/agent/file.af')]);
const agentState = await client.agents.importAgentSerialized(file, {});
console.log(`Imported agent: ${agentState.id}`);

// Export your agent into a serialized schema object (which you can write to a file)
const schema = await client.agents.exportAgentSerialized("<AGENT_ID>");
```

Model Context Protocol (MCP) and custom tools (full guide)

Letta has rich support for MCP tools (Letta acts as an MCP client), as well as custom Python tools. MCP servers can be easily added within the Agent Development Environment (ADE) tool manager UI, as well as via the SDK:
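Custom Python tools are ordinary functions. The rough sketch below shows how a function-calling schema can be derived from a signature and docstring using only the standard library's `inspect` module. It is an illustration, not Letta's actual schema generator. `tool_schema` and the `get_weather` function are hypothetical, and the type mapping is deliberately incomplete.

```python
import inspect

# Map a few Python annotations to JSON Schema types (deliberately incomplete).
_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def tool_schema(fn) -> dict:
    """Build a JSON-Schema-style tool description from a function's signature."""
    sig = inspect.signature(fn)
    props, required = {}, []
    for name, param in sig.parameters.items():
        props[name] = {"type": _JSON_TYPES.get(param.annotation, "string")}
        if param.default is inspect.Parameter.empty:
            required.append(name)  # parameters without defaults are required
    return {
        "name": fn.__name__,
        "description": inspect.getdoc(fn) or "",
        "parameters": {"type": "object", "properties": props, "required": required},
    }


def get_weather(city: str, days: int = 1) -> str:
    """Return a short forecast for a city."""
    return f"Forecast for {city}: sunny for {days} day(s)"


print(tool_schema(get_weather))
```

Because the docstring becomes the tool description the model sees, it is worth writing it for the model rather than only for human readers.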


```python
# List tools from an MCP server
tools = client.tools.list_mcp_tools_by_server(mcp_server_name="weather-server")

# Add a specific tool from the MCP server
tool = client.tools.add_mcp_tool(
    mcp_server_name="weather-server",
    mcp_tool_name="get_weather"
)

# Create agent with MCP tool attached
agent_state = client.agents.create(
    model="openai/gpt-4o-mini",
    embedding="openai/text-embedding-3-small",
    tool_ids=[tool.id]
)

# Or attach tools to an existing agent
client.agents.tools.attach(
    agent_id=agent_state.id,
    tool_id=tool.id
)

# Use the agent with MCP tools
response = client.agents.messages.create(
    agent_id=agent_state.id,
    messages=[
        {
            "role": "user",
            "content": "Use the weather tool to check the forecast"
        }
    ]
)
```

```typescript
// List tools from an MCP server
const tools = await client.tools.listMcpToolsByServer("weather-server");

// Add a specific tool from the MCP server
const tool = await client.tools.addMcpTool("weather-server", "get_weather");

// Create agent with MCP tool
const agentState = await client.agents.create({
  model: "openai/gpt-4o-mini",
  embedding: "openai/text-embedding-3-small",
  toolIds: [tool.id]
});

// Use the agent with MCP tools
const response = await client.agents.messages.create(agentState.id, {
  messages: [
    {
      role: "user",
      content: "Use the weather tool to check the forecast"
    }
  ]
});
```

Filesystem (full guide)

Letta’s filesystem allows you to easily connect your agents to external files, for example research papers, reports, medical records, or any other data in common text formats (.pdf, .txt, .md, .json, etc.).

Once you attach a folder to an agent, the agent will be able to use filesystem tools (open_file, grep_file, search_file) to browse the files to search for information.
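To give a feel for what a search tool like `grep_file` does, here is a hypothetical, in-memory stand-in. It scans named text files for a regular expression and returns the file name, line number, and matching line. It is only an illustration. Letta's tools operate on the files in attached folders, and their actual signatures and output format may differ.

```python
import re


def grep_files(files: dict[str, str], pattern: str, ignore_case: bool = True) -> list[tuple[str, int, str]]:
    """Return (file_name, 1-based line number, line) for every line matching pattern."""
    regex = re.compile(pattern, re.IGNORECASE if ignore_case else 0)
    hits = []
    for name, text in files.items():
        for lineno, line in enumerate(text.splitlines(), start=1):
            if regex.search(line):
                hits.append((name, lineno, line))
    return hits


# Hypothetical file contents for illustration.
files = {
    "my_file.txt": "Quarterly report\nRevenue grew 12%\nHeadcount flat",
    "notes.md": "# Notes\nrevenue target missed in March",
}
for hit in grep_files(files, r"revenue"):
    print(hit)
```

Returning line numbers lets a follow-up `open_file`-style call view just the surrounding region instead of pulling a whole file into context.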


```python
import time

# assumes `client` and `agent_state` from the earlier examples

# get an available embedding_config
embedding_configs = client.embedding_models.list()
embedding_config = embedding_configs[0]

# create the folder
folder = client.folders.create(
    name="my_folder",
    embedding_config=embedding_config
)

# upload a file into the folder
with open("my_file.txt", "rb") as f:
    job = client.folders.files.upload(
        folder_id=folder.id,
        file=f
    )

# wait until the job is completed
while True:
    job = client.jobs.retrieve(job.id)
    if job.status == "completed":
        break
    elif job.status == "failed":
        raise ValueError(f"Job failed: {job.metadata}")
    print(f"Job status: {job.status}")
    time.sleep(1)

# once you attach a folder to an agent, the agent can see all files in it
client.agents.folders.attach(agent_id=agent_state.id, folder_id=folder.id)

response = client.agents.messages.create(
    agent_id=agent_state.id,
    messages=[
        {
            "role": "user",
            "content": "What data is inside of my_file.txt?"
        }
    ]
)

for message in response.messages:
    print(message)
```

```typescript
import { createReadStream } from 'fs';

// get an available embedding_config
const embeddingConfigs = await client.embeddingModels.list();
const embeddingConfig = embeddingConfigs[0];

// create the folder
const folder = await client.folders.create({
  name: "my_folder",
  embeddingConfig: embeddingConfig
});

// upload a file into the folder
const uploadJob = await client.folders.files.upload(
  createReadStream("my_file.txt"),
  folder.id,
);
console.log("file uploaded");

// wait until the job is completed
while (true) {
  const job = await client.jobs.retrieve(uploadJob.id);
  if (job.status === "completed") {
    break;
  } else if (job.status === "failed") {
    throw new Error(`Job failed: ${JSON.stringify(job.metadata)}`);
  }
  console.log(`Job status: ${job.status}`);
  await new Promise((resolve) => setTimeout(resolve, 1000));
}

// list files in the folder
const files = await client.folders.files.list(folder.id);
console.log("Files in folder:", files);

// list passages in the folder
const passages = await client.folders.passages.list(folder.id);
console.log("Passages in folder:", passages);

// once you attach a folder to an agent, the agent can see all files in it
await client.agents.folders.attach(agentState.id, folder.id);

const response = await client.agents.messages.create(
  agentState.id, {
    messages: [
      {
        role: "user",
        content: "What data is inside of my_file.txt?"
      }
    ]
  }
);

for (const message of response.messages) {
  console.log(message);
}
```

Long-running agents (full guide)

When agents need to execute multiple tool calls or perform complex operations (like deep research, data analysis, or multi-step workflows), processing time can vary significantly. Letta supports both a background mode (with resumable streaming) as well as an async mode (with polling) to enable robust long-running agent executions.
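Resuming a background-mode stream depends on each chunk carrying a monotonically increasing `seq_id`, so the client only needs to remember the last one it saw. The self-contained simulation below shows why a saved cursor is enough to resume without losing or duplicating chunks. A hypothetical in-memory `RunLog` stands in for the server, and its `stream(starting_after=...)` merely mirrors the idea of the SDK parameter rather than reproducing the SDK.

```python
from dataclasses import dataclass, field
from typing import Iterator, Optional


@dataclass
class Chunk:
    seq_id: int
    text: str


@dataclass
class RunLog:
    """Hypothetical server-side buffer of a background run's output."""
    chunks: list[Chunk] = field(default_factory=list)

    def stream(self, starting_after: Optional[int] = None) -> Iterator[Chunk]:
        # Replay only chunks strictly after the client's cursor.
        for chunk in self.chunks:
            if starting_after is None or chunk.seq_id > starting_after:
                yield chunk


run = RunLog([Chunk(i, f"token-{i}") for i in range(1, 7)])

received: list[str] = []
last_seq_id: Optional[int] = None
for chunk in run.stream():
    received.append(chunk.text)
    last_seq_id = chunk.seq_id  # persist this cursor as you go
    if chunk.seq_id == 3:
        break  # simulate a dropped connection

# Reconnect and continue from the saved cursor.
for chunk in run.stream(starting_after=last_seq_id):
    received.append(chunk.text)
    last_seq_id = chunk.seq_id

print(received)
```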


```python
stream = client.agents.messages.create_stream(
    agent_id=agent_state.id,
    messages=[
        {
            "role": "user",
            "content": "Run comprehensive analysis on this dataset"
        }
    ],
    stream_tokens=True,
    background=True,
)

run_id = None
last_seq_id = None
for chunk in stream:
    if hasattr(chunk, "run_id") and hasattr(chunk, "seq_id"):
        run_id = chunk.run_id  # Save this to reconnect if your connection drops
        last_seq_id = chunk.seq_id  # Save this as your resumption point for cursor-based pagination
    print(chunk)

# If disconnected, resume from last received seq_id:
for chunk in client.runs.stream(run_id, starting_after=last_seq_id):
    print(chunk)
```

```typescript
const stream = await client.agents.messages.createStream({
  agentId: agentState.id,
  requestBody: {
    messages: [
      {
        role: "user",
        content: "Run comprehensive analysis on this dataset"
      }
    ],
    streamTokens: true,
    background: true,
  }
});

let runId = null;
let lastSeqId = null;
for await (const chunk of stream) {
  if (chunk.run_id && chunk.seq_id) {
    runId = chunk.run_id; // Save this to reconnect if your connection drops
    lastSeqId = chunk.seq_id; // Save this as your resumption point for cursor-based pagination
  }
  console.log(chunk);
}

// If disconnected, resume from last received seq_id
for await (const chunk of client.runs.stream(runId, {startingAfter: lastSeqId})) {
  console.log(chunk);
}
```

Letta is model agnostic and supports using local model providers such as Ollama and LM Studio. You can also easily swap models inside an agent after the agent has been created, by modifying the agent state with the new model provider via the SDK or in the ADE.
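The model handles in the examples on this page follow a `provider/model` pattern, for example `openai/gpt-4.1` or `anthropic/claude-3-5-sonnet-20241022`. The small helpers below (`parse_handle` and `swap_model`, both hypothetical) validate a handle and produce an updated agent config when swapping models. They only manipulate a plain dict and make no SDK calls. The assumption that the provider is everything before the first `/` is ours.

```python
def parse_handle(handle: str) -> tuple[str, str]:
    """Split 'provider/model' at the first '/'; the model part may contain more slashes."""
    provider, sep, model = handle.partition("/")
    if not sep or not provider or not model:
        raise ValueError(f"expected 'provider/model', got {handle!r}")
    return provider, model


def swap_model(config: dict, new_handle: str) -> dict:
    """Return a copy of an agent config pointing at a different model."""
    parse_handle(new_handle)  # validate before changing anything
    return {**config, "model": new_handle}


config = {"model": "openai/gpt-4.1", "embedding": "openai/text-embedding-3-small"}
updated = swap_model(config, "anthropic/claude-3-5-sonnet-20241022")
print(parse_handle(updated["model"]))
```

Returning a new dict instead of mutating `config` keeps the old configuration around, which makes it easy to swap back.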

Note: this repository contains the source code for the core Letta service (API server), not the client SDKs. The client SDKs can be found here: Python, TypeScript.

To install the Letta server from source, fork the repo, clone your fork, then use uv to install from inside the main directory:

```shell
cd letta
uv sync --all-extras
```

To run the Letta server from source, use uv run:

```shell
uv run letta server
```

Letta is an open source project built by over a hundred contributors. There are many ways to get involved in the Letta OSS project!

- Join the Discord: Chat with the Letta devs and other AI developers.

- Chat on our forum: If you're not into Discord, check out our developer forum.

- Follow our socials: Twitter/X, LinkedIn, YouTube

Legal notices: By using Letta and related Letta services (such as the Letta endpoint or hosted service), you are agreeing to our privacy policy and terms of service.


Alternative AI tools for letta

Similar Open Source Tools


magma

Magma is a powerful and flexible framework for building scalable and efficient machine learning pipelines. It provides a simple interface for creating complex workflows, enabling users to easily experiment with different models and data processing techniques. With Magma, users can streamline the development and deployment of machine learning projects, saving time and resources.

sparkle

Sparkle is a tool that streamlines the process of building AI-driven features in applications using Large Language Models (LLMs). It guides users through creating and managing agents, defining tools, and interacting with LLM providers like OpenAI. Sparkle allows customization of LLM provider settings, model configurations, and provides a seamless integration with Sparkle Server for exposing agents via an OpenAI-compatible chat API endpoint.

swarmzero

SwarmZero SDK is a library that simplifies the creation and execution of AI Agents and Swarms of Agents. It supports various LLM Providers such as OpenAI, Azure OpenAI, Anthropic, MistralAI, Gemini, Nebius, and Ollama. Users can easily install the library using pip or poetry, set up the environment and configuration, create and run Agents, collaborate with Swarms, add tools for complex tasks, and utilize retriever tools for semantic information retrieval. Sample prompts are provided to help users explore the capabilities of the agents and swarms. The SDK also includes detailed examples and documentation for reference.

agent-mimir

Agent Mimir is a command line and Discord chat client 'agent' manager for LLMs like ChatGPT that provides the models with access to tooling and a framework with which to accomplish multi-step tasks. It is easy to configure your own agent with a custom personality or profession and to enable access to all tools that are compatible with LangchainJS. Agent Mimir is based on LangchainJS, so every tool or LLM that works with Langchain should also work with Mimir. The tasking system is based on Auto-GPT and BabyAGI, where the agent needs to come up with a plan, iterate over its steps, and review as it completes the task.

agent-toolkit

The Stripe Agent Toolkit enables popular agent frameworks to integrate with Stripe APIs through function calling. It includes support for Python and TypeScript, built on top of Stripe Python and Node SDKs. The toolkit provides tools for LangChain, CrewAI, and Vercel's AI SDK, allowing users to configure actions like creating payment links, invoices, refunds, and more. Users can pass the toolkit as a list of tools to agents for integration with Stripe. Context values can be provided for making requests, such as specifying connected accounts for API calls. The toolkit also supports metered billing for Vercel's AI SDK, enabling billing events submission based on customer ID and input/output meters.

aiavatarkit

AIAvatarKit is a tool for building AI-based conversational avatars quickly. It supports various platforms like VRChat and cluster, along with real-world devices. The tool is extensible, allowing unlimited capabilities based on user needs. It requires VOICEVOX API, Google or Azure Speech Services API keys, and Python 3.10. Users can start conversations out of the box and enjoy seamless interactions with the avatars.

UniChat

UniChat is a pipeline tool for creating online and offline chat-bots in Unity. It leverages Unity.Sentis and text vector embedding technology to enable offline mode text content search based on vector databases. The tool includes a chain toolkit for embedding LLM and Agent in games, along with middleware components for Text to Speech, Speech to Text, and Sub-classifier functionalities. UniChat also offers a tool for invoking tools based on ReActAgent workflow, allowing users to create personalized chat scenarios and character cards. The tool provides a comprehensive solution for designing flexible conversations in games while maintaining developer's ideas.

parea-sdk-py

Parea AI provides an SDK to evaluate and monitor AI applications. It allows users to test, evaluate, and monitor their AI models by defining and running experiments. The SDK also enables logging and observability for AI applications, as well as deploying prompts to facilitate collaboration between engineers and subject-matter experts. Users can automatically log calls to OpenAI and Anthropic, create hierarchical traces of their applications, and deploy prompts for integration into their applications.

vinagent

Vinagent is a lightweight and flexible library designed for building smart agent assistants across various industries. It provides a simple yet powerful foundation for creating AI-powered customer service bots, data analysis assistants, or domain-specific automation agents. With its modular tool system, users can easily extend their agent's capabilities by integrating a wide range of tools that are self-contained, well-documented, and can be registered dynamically. Vinagent allows users to scale and adapt their agents to new tasks or environments effortlessly.

FlashLearn

FlashLearn is a tool that provides a simple interface and orchestration for incorporating Agent LLMs into workflows and ETL pipelines. It allows data transformations, classifications, summarizations, rewriting, and custom multi-step tasks using LLMs. Each step and task has a compact JSON definition, making pipelines easy to understand and maintain. FlashLearn supports LiteLLM, Ollama, OpenAI, DeepSeek, and other OpenAI-compatible clients.

langchainrb

Langchain.rb is a Ruby library that makes it easy to build LLM-powered applications. It provides a unified interface to a variety of LLMs, vector search databases, and other tools, making it easy to build and deploy RAG (Retrieval Augmented Generation) systems and assistants. Langchain.rb is open source and available under the MIT License.

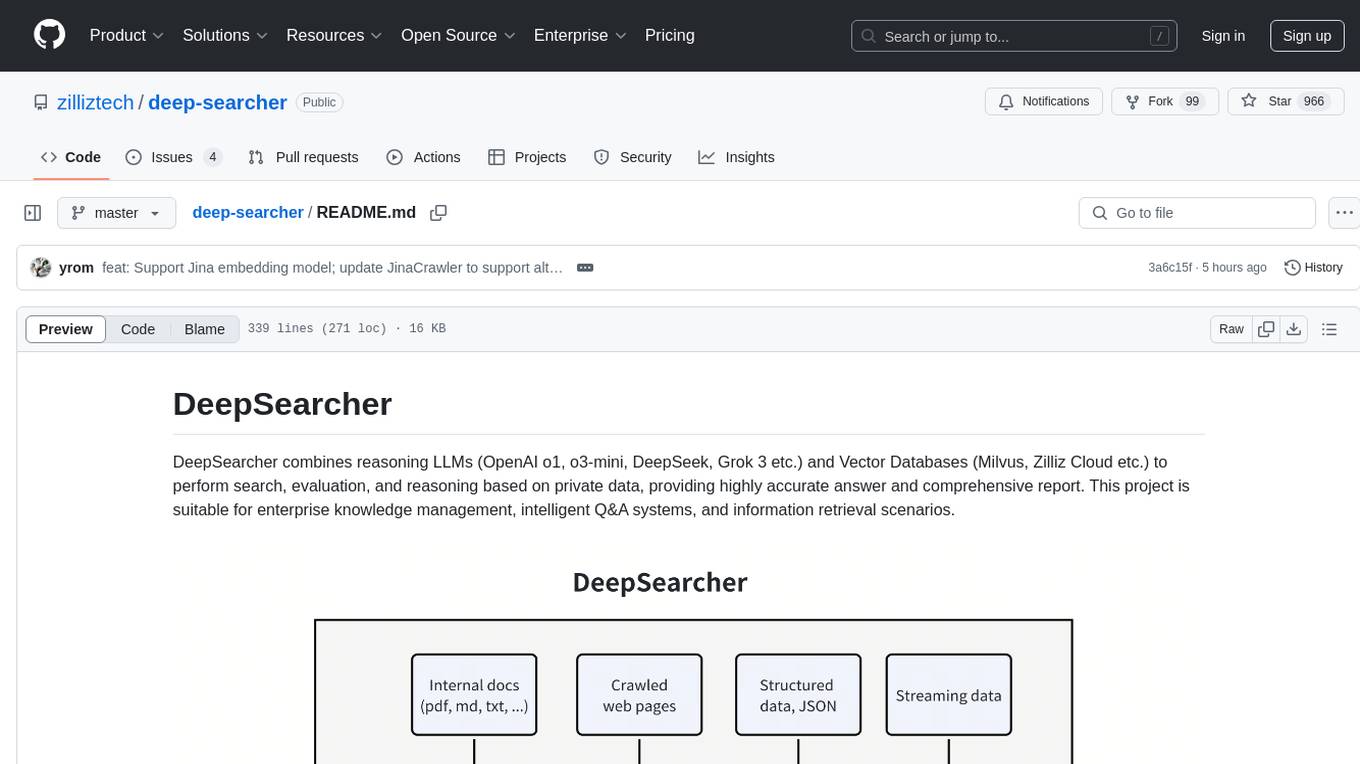

deep-searcher

DeepSearcher is a tool that combines reasoning LLMs and Vector Databases to perform search, evaluation, and reasoning based on private data. It is suitable for enterprise knowledge management, intelligent Q&A systems, and information retrieval scenarios. The tool maximizes the utilization of enterprise internal data while ensuring data security, supports multiple embedding models, and provides support for multiple LLMs for intelligent Q&A and content generation. It also includes features like private data search, vector database management, and document loading with web crawling capabilities under development.

promptic

Promptic is a tool designed for LLM app development, providing a productive and pythonic way to build LLM applications. It leverages LiteLLM, allowing flexibility to switch LLM providers easily. Promptic focuses on building features by providing type-safe structured outputs, easy-to-build agents, streaming support, automatic prompt caching, and built-in conversation memory.

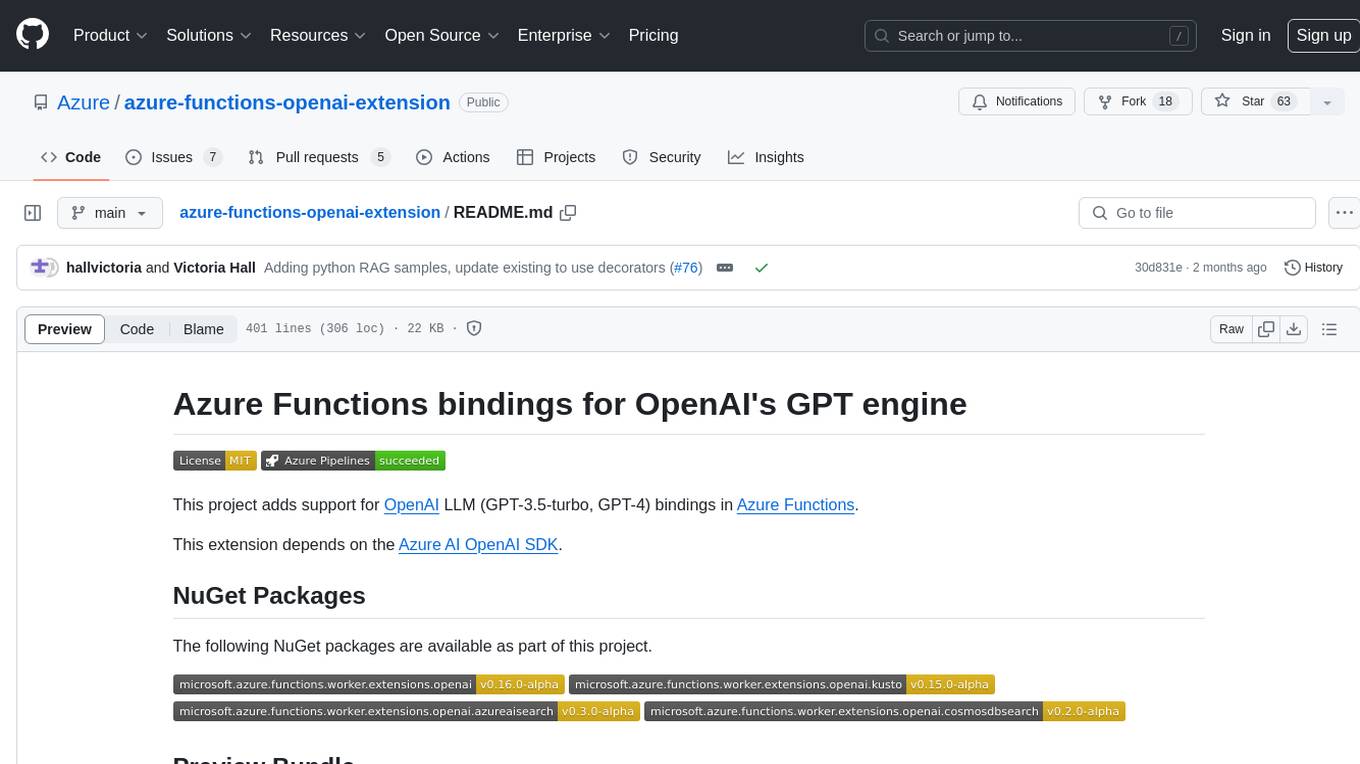

azure-functions-openai-extension

Azure Functions OpenAI Extension is a project that adds support for OpenAI LLM (GPT-3.5-turbo, GPT-4) bindings in Azure Functions. It provides NuGet packages for various functionalities like text completions, chat completions, assistants, embeddings generators, and semantic search. The project requires .NET 6 SDK or greater, Azure Functions Core Tools v4.x, and specific settings in Azure Function or local settings for development. It offers features like text completions, chat completion, assistants with custom skills, embeddings generators for text relatedness, and semantic search using vector databases. The project also includes examples in C# and Python for different functionalities.

npi

NPi is an open-source platform providing Tool-use APIs to empower AI agents with the ability to take action in the virtual world. It is currently under active development, and the APIs are subject to change in future releases. NPi offers a command line tool for installation and setup, along with a GitHub app for easy access to repositories. The platform also includes a Python SDK and examples like Calendar Negotiator and Twitter Crawler. Join the NPi community on Discord to contribute to the development and explore the roadmap for future enhancements.

For similar tasks


SuperAGI

SuperAGI is an open-source framework designed to build, manage, and run autonomous AI agents. It enables developers to create production-ready and scalable agents, extend agent capabilities with toolkits, and interact with agents through a graphical user interface. The framework allows users to connect to multiple Vector DBs, optimize token usage, store agent memory, utilize custom fine-tuned models, and automate tasks with predefined steps. SuperAGI also provides a marketplace for toolkits that enable agents to interact with external systems and third-party plugins.

AutoAgent

AutoAgent is a fully-automated and zero-code framework that enables users to create and deploy LLM agents through natural language alone. It is a top performer on the GAIA Benchmark, equipped with a native self-managing vector database, and allows for easy creation of tools, agents, and workflows without any coding. AutoAgent seamlessly integrates with a wide range of LLMs and supports both function-calling and ReAct interaction modes. It is designed to be dynamic, extensible, customized, and lightweight, serving as a personal AI assistant.

AgentUp

AgentUp is an active development tool that provides a developer-first agent framework for creating AI agents with enterprise-grade infrastructure. It allows developers to define agents with configuration, ensuring consistent behavior across environments. The tool offers secure design, configuration-driven architecture, extensible ecosystem for customizations, agent-to-agent discovery, asynchronous task architecture, deterministic routing, and MCP support. It supports multiple agent types like reactive agents and iterative agents, making it suitable for chatbots, interactive applications, research tasks, and more. AgentUp is built by experienced engineers from top tech companies and is designed to make AI agents production-ready, secure, and reliable.

NeMo-Agent-Toolkit

NVIDIA NeMo Agent toolkit is a flexible, lightweight, and unifying library that allows you to easily connect existing enterprise agents to data sources and tools across any framework. It is framework agnostic, promotes reusability, enables rapid development, provides profiling capabilities, offers observability features, includes an evaluation system, features a user interface for interaction, and supports the Model Context Protocol (MCP). With NeMo Agent toolkit, users can move quickly, experiment freely, and ensure reliability across all agent-driven projects.

agent-service-toolkit

The AI Agent Service Toolkit is a comprehensive toolkit designed for running an AI agent service using LangGraph, FastAPI, and Streamlit. It includes a LangGraph agent, a FastAPI service, a client for interacting with the service, and a Streamlit app for providing a chat interface. The project offers a template for building and running agents with the LangGraph framework, showcasing a complete setup from agent definition to user interface. Key features include LangGraph Agent with latest features, FastAPI Service, Advanced Streaming support, Streamlit Interface, Multiple Agent Support, Asynchronous Design, Content Moderation, RAG Agent implementation, Feedback Mechanism, Docker Support, and Testing. The repository structure includes directories for defining agents, protocol schema, core modules, service, client, Streamlit app, and tests.

agent-shell

Agent-Shell is a native Emacs shell designed to interact with LLM agents powered by ACP (Agent Client Protocol). With Agent-Shell, users can chat with various ACP-driven agents like Gemini CLI, Claude Code, Auggie, Mistral Vibe, and more. The tool provides a seamless interface for communication and interaction with these agents within the Emacs environment.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM: * Set LLM usage limits for users on different pricing tiers * Track LLM usage on a per user and per organization basis * Block or redact requests containing PIIs * Improve LLM reliability with failovers, retries and caching * Distribute API keys with rate limits and cost limits for internal development/production use cases * Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.