software-dev-prompt-library

Prompt library containing tested, reusable gen AI prompts for common software engineering tasks

Stars: 80

A collection of AI-powered prompts designed to streamline software development workflows. The library contains prompts at various stages of development, with structured sequences of connected prompts, project initialization support, development assistance, and documentation generation. It aims to provide consistent guidance across different development phases, promote systematic development processes, and enable progress tracking and validation.

README:

⚠️ Work in Progress:

- The library contains prompts at various stages of development

- Prompts within the Post-Scaffolding Sprint Workflow have been most rigorously tested

- Many individual prompts are not yet part of a defined workflow and have not been fully tested

- Development and validation are ongoing

A collection of AI-powered prompts designed to streamline software development workflows. Each prompt is crafted to work directly with AI coding assistants, providing consistent guidance across different development phases.

AI Workflow Chains

- Structured sequences of connected prompts

- Input/output dependencies between phases

- Verification points for chain integrity

- Systematic development processes

- Progress tracking and validation

Project Initialization

- Requirements generation and refinement

- Technology stack selection with BOM

- Architecture design

- Project scaffolding

Development Support

- Feature story creation

- Code health analysis

- System visualization

- Unit test generation

Documentation

- README generation

- Code explanation and tutoring

- Navigate to the relevant prompt in the /prompts directory

- Share the raw URL with your AI assistant

- Begin using the workflow
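
As a rough illustration of the "raw URL" step, the sketch below builds a raw-content link for a prompt file. It assumes the library is hosted on GitHub and uses GitHub's raw.githubusercontent.com URL layout; the owner `example-owner`, the branch `main`, and the file name `prompts/testing/example-prompt.md` are hypothetical placeholders, not values taken from this README.

```python
from urllib.parse import quote

# Host GitHub uses for serving raw file contents.
RAW_HOST = "https://raw.githubusercontent.com"


def raw_prompt_url(owner: str, repo: str, branch: str, prompt_path: str) -> str:
    """Build the raw-content URL for a prompt file inside the repository."""
    # Drop leading/trailing slashes so the joined URL has no empty segments.
    path = prompt_path.strip("/")
    # quote() escapes spaces and similar characters but keeps "/" separators.
    return f"{RAW_HOST}/{owner}/{repo}/{branch}/{quote(path)}"


if __name__ == "__main__":
    # All four arguments are placeholders; substitute the real values.
    print(
        raw_prompt_url(
            "example-owner",
            "software-dev-prompt-library",
            "main",
            "prompts/testing/example-prompt.md",
        )
    )
```

The printed link is what you would paste into the AI assistant's chat so it can fetch the prompt text directly.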

Each prompt has two components:

- [prompt-name].md: AI instructions

- [prompt-name].meta.md: Usage documentation
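
The two-file convention can be checked mechanically. The following is a minimal sketch, not part of the library itself: it walks a local checkout's prompts directory and reports any prompt that lacks its companion file. The function name `find_unpaired_prompts` and the result keys are hypothetical.

```python
from pathlib import Path


def find_unpaired_prompts(prompts_dir: Path) -> dict[str, list[str]]:
    """Report prompts missing either their .md or their .meta.md file."""
    instructions: set[str] = set()  # stems of [prompt-name].md files
    metas: set[str] = set()         # stems of [prompt-name].meta.md files
    for path in prompts_dir.rglob("*.md"):
        rel = path.relative_to(prompts_dir).as_posix()
        # Check the longer suffix first, since .meta.md also ends in .md.
        if rel.endswith(".meta.md"):
            metas.add(rel[: -len(".meta.md")])
        else:
            instructions.add(rel[: -len(".md")])
    return {
        "missing_meta": sorted(instructions - metas),
        "missing_prompt": sorted(metas - instructions),
    }


if __name__ == "__main__":
    root = Path("prompts")  # assumed location of a local checkout
    if root.is_dir():
        print(find_unpaired_prompts(root))
```

An empty list under both keys means every prompt has both its AI instructions and its usage documentation.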

- Review available workflows in the /workflows directory

- Choose a workflow that matches your development phase

- Follow the chain sequence, ensuring each phase's:

- Required inputs are available

- Outputs are validated

- Dependencies are satisfied
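
One way to picture the input and dependency checks is as a walk over the chain, where each phase may only consume what earlier phases have produced. The sketch below is an assumption about how such a check could look, not the library's own mechanism; the `Phase` class, the `check_chain` function, and the artifact names are all hypothetical. It covers required inputs and dependencies only; validating the content of each output is left to the reviewer.

```python
from dataclasses import dataclass, field


@dataclass
class Phase:
    """One prompt in a workflow chain (hypothetical model)."""
    name: str
    required_inputs: set[str] = field(default_factory=set)
    outputs: set[str] = field(default_factory=set)


def check_chain(phases: list[Phase], initial_artifacts: set[str]) -> list[str]:
    """Return one message per phase whose required inputs are unavailable."""
    available = set(initial_artifacts)
    problems: list[str] = []
    for phase in phases:
        missing = phase.required_inputs - available
        if missing:
            problems.append(f"{phase.name}: missing inputs {sorted(missing)}")
        # Later phases may depend on whatever this phase produces.
        available |= phase.outputs
    return problems


if __name__ == "__main__":
    # Artifact names are illustrative, loosely following Project Initialization.
    chain = [
        Phase("requirements", {"project idea"}, {"requirements"}),
        Phase("stack selection", {"requirements"}, {"tech stack"}),
        Phase("architecture", {"requirements", "tech stack"}, {"architecture"}),
        Phase("scaffolding", {"architecture"}, {"scaffold"}),
    ]
    print(check_chain(chain, {"project idea"}))
```

Running the same check with a phase removed from the middle of the chain shows which downstream phases lose an input.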

software-dev-prompt-library/
├── prompts/
│   ├── architecture/
│   ├── documentation/
│   ├── planning/
│   ├── testing/
│   └── visualization/
├── workflows/
│   └── [workflow guides]
└── docs/
    ├── getting-started.md
    └── prompt-guidelines.md

- Streamlined development workflows from project inception to maintenance

- Intelligent adaptation to different programming languages and frameworks

- Focused, single-purpose prompts that chain together for complex tasks

- Built-in validation and best practices

- Promotes consistent development patterns across teams

- Reduces cognitive load during development tasks

- Enables rapid prototyping and iteration

- Review getting-started.md to understand available workflows

- Choose your starting point:

- New project? Start with requirements generation

- Existing project? Begin with code health analysis

- Follow the workflow guides for your chosen development path

- Chain prompts together as needed for more complex tasks

- Review prompt-guidelines.md for prompt structure and principles

- Each prompt needs both implementation (.md) and documentation (.meta.md)

- Test prompts across different AI models and project types

- Submit additions that focus on specific development tasks

- Maintain language and framework agnosticism

Alternative AI tools for software-dev-prompt-library

Similar Open Source Tools

Software-Engineer-AI-Agent-Atlas

This repository provides activation patterns to transform a general AI into a specialized AI Software Engineer Agent. It addresses issues like context rot, hidden capabilities, chaos in vibecoding, and repetitive setup. The solution is a Persistent Consciousness Architecture framework named ATLAS, offering activated neural pathways, persistent identity, pattern recognition, specialized agents, and modular context management. Recent enhancements include abstraction power documentation, a specialized agent ecosystem, and a streamlined structure. Users can clone the repo, set up projects, initialize AI sessions, and manage context effectively for collaboration. Key files and directories organize identity, context, projects, specialized agents, logs, and critical information. The approach focuses on neuron activation through structure, context engineering, and vibecoding with guardrails to deliver a reliable AI Software Engineer Agent.

xllm-service

xLLM-service is a service-layer framework developed based on the xLLM inference engine, providing efficient, fault-tolerant, and flexible LLM inference services for clustered deployment. It addresses challenges in enterprise-level service scenarios such as ensuring SLA of online services, improving resource utilization, reacting to changing request loads, resolving performance bottlenecks, and ensuring high reliability of computing instances. With features like unified scheduling, adaptive dynamic allocation, EPD three-stage disaggregation, and fault-tolerant architecture, xLLM-service offers efficient and reliable LLM inference services.

Advanced-Prompt-Generator

This project is an LLM-based Advanced Prompt Generator designed to automate the process of prompt engineering by enhancing given input prompts using large language models (LLMs). The tool can generate advanced prompts with minimal user input, leveraging LLM agents for optimized prompt generation. It supports gpt-4o or gpt-4o-mini, offers FastAPI & Docker deployment for efficiency, provides a Gradio interface for easy testing, and is hosted on Hugging Face Spaces for quick demos. Users can expand model support to offer more variety and flexibility.

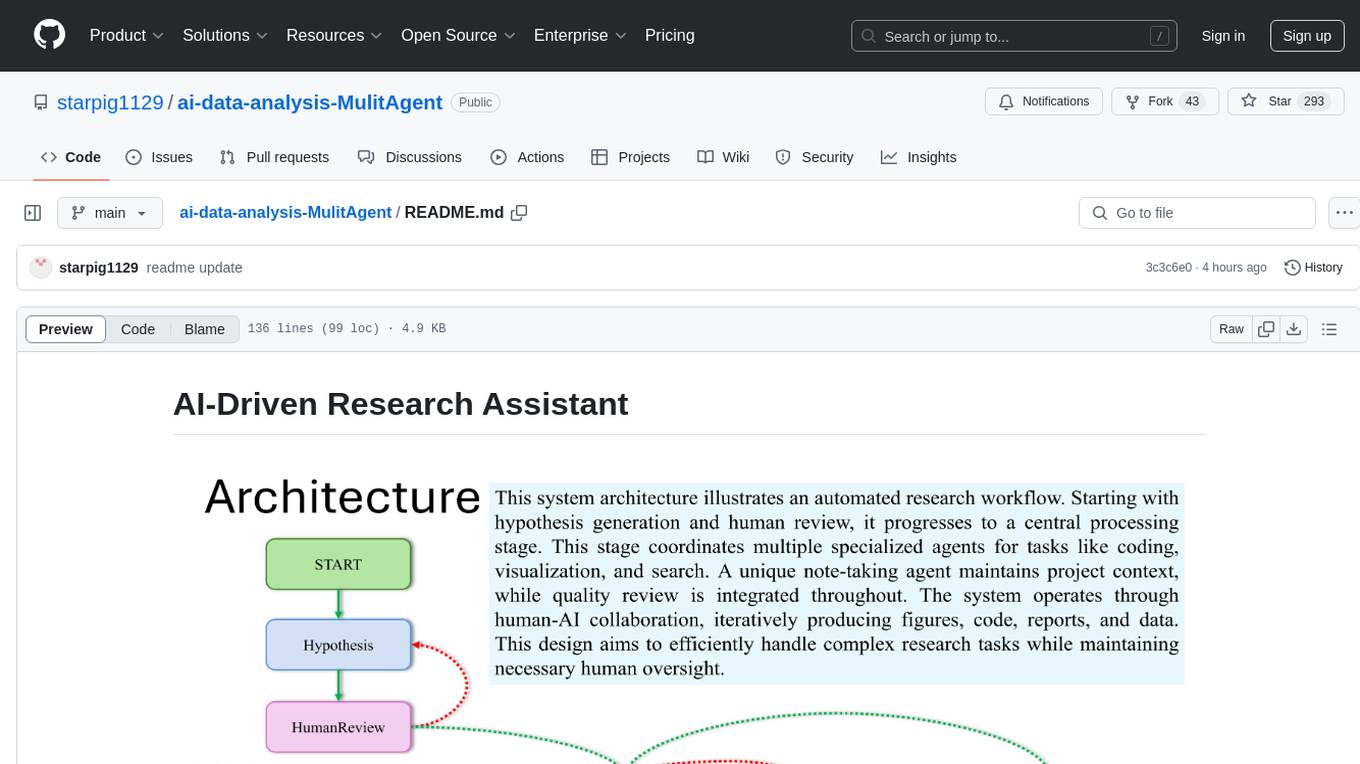

ai-data-analysis-MulitAgent

AI-Driven Research Assistant is an advanced AI-powered system utilizing specialized agents for data analysis, visualization, and report generation. It integrates LangChain, OpenAI's GPT models, and LangGraph for complex research processes. Key features include hypothesis generation, data processing, web search, code generation, and report writing. The system's unique Note Taker agent maintains project state, reducing overhead and improving context retention. System requirements include Python 3.10+ and Jupyter Notebook environment. Installation involves cloning the repository, setting up a Conda virtual environment, installing dependencies, and configuring environment variables. Usage instructions include setting data, running Jupyter Notebook, customizing research tasks, and viewing results. Main components include agents for hypothesis generation, process supervision, visualization, code writing, search, report writing, quality review, and note-taking. Workflow involves hypothesis generation, processing, quality review, and revision. Customization is possible by modifying agent creation and workflow definition. Current issues include OpenAI errors, NoteTaker efficiency, runtime optimization, and refiner improvement. Contributions via pull requests are welcome under the MIT License.

atlas-research-notebooks

A collection of open source sample code and research notebooks created using the atlas-research.io platform. Enables rapid code prototyping, data wrangling, trading strategy development, academic research reproduction, and collaborative research. Repository structure includes sections for cryptocurrency analysis, economics research, and machine learning models. Requires Python 3.11+, Jupyter Notebook or JupyterLab, and the packages listed for each notebook. Utilizes Jupytext to manage notebooks as Python scripts for better version control and code review. Demonstrates key platform features such as interactive development, data integration, visualization, reproducibility, and collaboration.

saga

SAGA is a novel-writing system that leverages a knowledge graph and specialized agents to autonomously create and refine stories. It handles complex narrative structures while maintaining coherence and consistency. Features include a Knowledge Graph using Neo4j, Modular Agent Architecture, LLM Integration, Configurable Generation Parameters, Robust Testing Framework, Code Quality enforcement, Vector Search, and Agentic Planning. The system structure includes components for specialized agents, core components, data access, documentation, initialization scripts, Pydantic models, output directory, orchestrator logic, text processing tools, UI components, utility functions, and more.

SDET-GENIE

SDET-GENIE is a cutting-edge, AI-powered Quality Assurance (QA) automation framework that revolutionizes the software testing process. Leveraging a suite of specialized AI agents, SDET-GENIE transforms rough user stories into comprehensive, executable test automation code through a seamless end-to-end process. The framework integrates five powerful AI agents working in sequence: User Story Enhancement Agent, Manual Test Case Agent, Gherkin Scenario Agent, Browser Agent, and Code Generation Agent. It supports multiple testing frameworks and provides advanced browser automation capabilities with AI features.

accelerated-intelligent-document-processing-on-aws

Accelerated Intelligent Document Processing on AWS is a scalable, serverless solution for automated document processing and information extraction using AWS services. It combines OCR capabilities with generative AI to convert unstructured documents into structured data at scale. The solution features a serverless architecture built on AWS technologies, modular processing patterns, advanced classification support, few-shot example support, custom business logic integration, high throughput processing, built-in resilience, cost optimization, comprehensive monitoring, web user interface, human-in-the-loop integration, AI-powered evaluation, extraction confidence assessment, and document knowledge base query. The architecture uses nested CloudFormation stacks to support multiple document processing patterns while maintaining common infrastructure for queueing, tracking, and monitoring.

WaferLLM

WaferLLM is the first wafer-scale Large Language Model (LLM) inference system designed to optimize the utilization of hundreds of thousands of on-chip cores in wafer-scale accelerators. It introduces MeshGEMM and MeshGEMV implementations for effective scaling on wafer-scale architectures, achieving significantly higher accelerator utilization and speedups compared to state-of-the-art methods. Users need the Cerebras SDK to reproduce the results, and the project provides detailed documentation and scripts for running simulations on both simulator and actual hardware.

GenAI_Agents

GenAI Agents is a comprehensive repository for developing and implementing Generative AI (GenAI) agents, ranging from simple conversational bots to complex multi-agent systems. It serves as a valuable resource for learning, building, and sharing GenAI agents, offering tutorials, implementations, and a platform for showcasing innovative agent creations. The repository covers a wide range of agent architectures and applications, providing step-by-step tutorials, ready-to-use implementations, and regular updates on advancements in GenAI technology.

vulcan-sql

VulcanSQL is an Analytical Data API Framework for AI agents and data apps. It aims to help data professionals deliver RESTful APIs from databases, data warehouses, or data lakes more easily and securely. It turns your SQL into APIs in no time!

fridon-ai

FridonAI is an open-source project offering AI-powered tools for cryptocurrency analysis and blockchain operations. It includes modules like FridonAnalytics for price analysis, FridonSearch for technical indicators, FridonNotifier for custom alerts, FridonBlockchain for blockchain operations, and FridonChat as a unified chat interface. The platform empowers users to create custom AI chatbots, access crypto tools, and interact effortlessly through chat. The core functionality is modular, with plugins, tools, and utilities for easy extension and development. FridonAI implements a scoring system to assess user interactions and incentivize engagement. The application uses Redis extensively for communication and includes a Nest.js backend for system operations.

AI-Blueprints

This repository hosts a collection of AI blueprint projects for HP AI Studio, providing end-to-end solutions across key AI domains like data science, machine learning, deep learning, and generative AI. The projects are designed to be plug-and-play, utilizing open-source and hosted models to offer ready-to-use solutions. The repository structure includes projects related to classical machine learning, deep learning applications, generative AI, NGC integration, and troubleshooting guidelines for common issues. Each project is accompanied by detailed descriptions and use cases, showcasing the versatility and applicability of AI technologies in various domains.

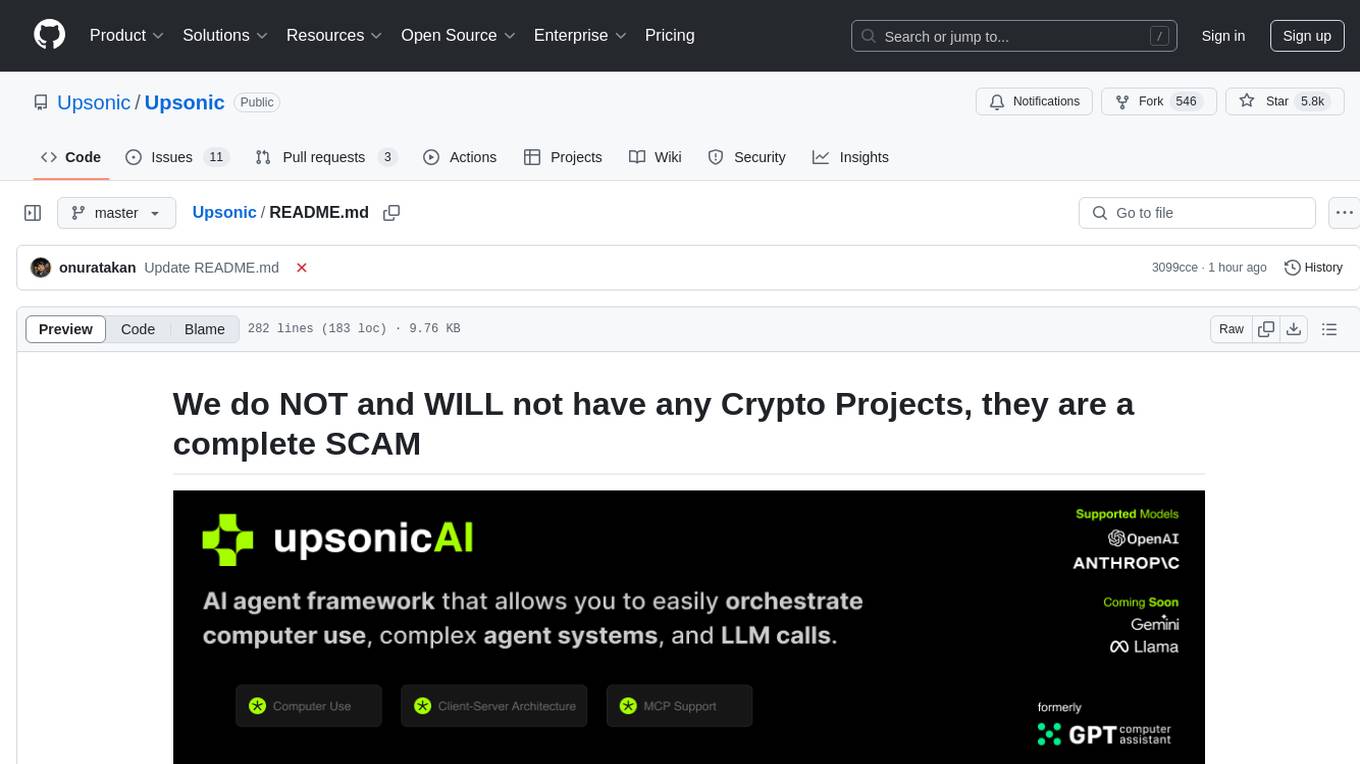

Upsonic

Upsonic offers a cutting-edge enterprise-ready framework for orchestrating LLM calls, agents, and computer use to complete tasks cost-effectively. It provides reliable systems, scalability, and a task-oriented structure for real-world cases. Key features include production-ready scalability, task-centric design, MCP server support, tool-calling server, computer use integration, and easy addition of custom tools. The framework supports client-server architecture and allows seamless deployment on AWS, GCP, or locally using Docker.

magic

Magic is an open-source all-in-one AI productivity platform designed to help enterprises quickly build and deploy AI applications, aiming for a 100x increase in productivity. It consists of various AI products and infrastructure tools, such as Super Magic, Magic IM, Magic Flow, and more. Super Magic is a general-purpose AI Agent for complex task scenarios, while Magic Flow is a visual AI workflow orchestration system. Magic IM is an enterprise-grade AI Agent conversation system for internal knowledge management. Teamshare OS is a collaborative office platform integrating AI capabilities. The platform provides cloud services, enterprise solutions, and a self-hosted community edition for users to leverage its features.

For similar tasks

DevoxxGenieIDEAPlugin

Devoxx Genie is a Java-based IntelliJ IDEA plugin that integrates with local and cloud-based LLM providers to aid in reviewing, testing, and explaining project code. It supports features like code highlighting, chat conversations, and adding files/code snippets to context. Users can modify REST endpoints and LLM parameters in settings, including support for cloud-based LLMs. The plugin requires IntelliJ version 2023.3.4 and JDK 17. Building and publishing the plugin is done using Gradle tasks. Users can select an LLM provider, choose code, and use commands like review, explain, or generate unit tests for code analysis.

cover-agent

CodiumAI Cover Agent is a tool designed to help increase code coverage by automatically generating qualified tests to enhance existing test suites. It utilizes Generative AI to streamline development workflows and is part of a suite of utilities aimed at automating the creation of unit tests for software projects. The system includes components like Test Runner, Coverage Parser, Prompt Builder, and AI Caller to simplify and expedite the testing process, ensuring high-quality software development. Cover Agent can be run via a terminal and is planned to be integrated into popular CI platforms. The tool outputs debug files locally, such as generated_prompt.md, run.log, and test_results.html, providing detailed information on generated tests and their status. It supports multiple LLMs and allows users to specify the model to use for test generation.

pythagora

Pythagora is an automated testing tool designed to generate unit tests using GPT-4. By running a single command, users can create tests for specific functions in their codebase. The tool leverages AST parsing to identify related functions and sends them to the Pythagora server for test generation. Pythagora primarily focuses on JavaScript code and supports Jest testing framework. Users can expand existing tests, increase code coverage, and find bugs efficiently. It is recommended to review the generated tests before committing them to the repository. Pythagora does not store user code on its servers but sends it to GPT and OpenAI for test generation.

TestSpark

TestSpark is a plugin for generating unit tests that integrates AI-based test generation tools. It supports LLM-based test generation using OpenAI, HuggingFace, and JetBrains internal AI Assistant platform, as well as local search-based test generation using EvoSuite. Users can configure test generation settings, interact with test cases, view coverage statistics, and integrate tests into projects. The plugin is designed for experimental use to augment existing test suites, not replace manual test writing.

cody-vs

Sourcegraph’s AI code assistant, Cody for Visual Studio, enhances developer productivity by providing a natural and intuitive way to work. It offers features like chat, auto-edit, prompts, and works with various IDEs. Cody focuses on team productivity, offering whole codebase context and shared prompts for consistency. Users can choose from different LLM models like Claude, Gemini Pro, and OpenAI's GPT. Engineered for enterprise use, Cody supports flexible deployment and enterprise security. Suitable for any programming language, Cody excels with Python, Go, JavaScript, and TypeScript code.

qwen-code

Qwen Code is an open-source AI agent optimized for Qwen3-Coder, designed to help users understand large codebases, automate tedious work, and expedite the shipping process. It offers an agentic workflow with rich built-in tools, a terminal-first approach with optional IDE integration, and supports both OpenAI-compatible API and Qwen OAuth authentication methods. Users can interact with Qwen Code in interactive mode, headless mode, IDE integration, and through a TypeScript SDK. The tool can be configured via settings.json, environment variables, and CLI flags, and offers benchmark results for performance evaluation. Qwen Code is part of an ecosystem that includes AionUi and Gemini CLI Desktop for graphical interfaces, and troubleshooting guides are available for issue resolution.

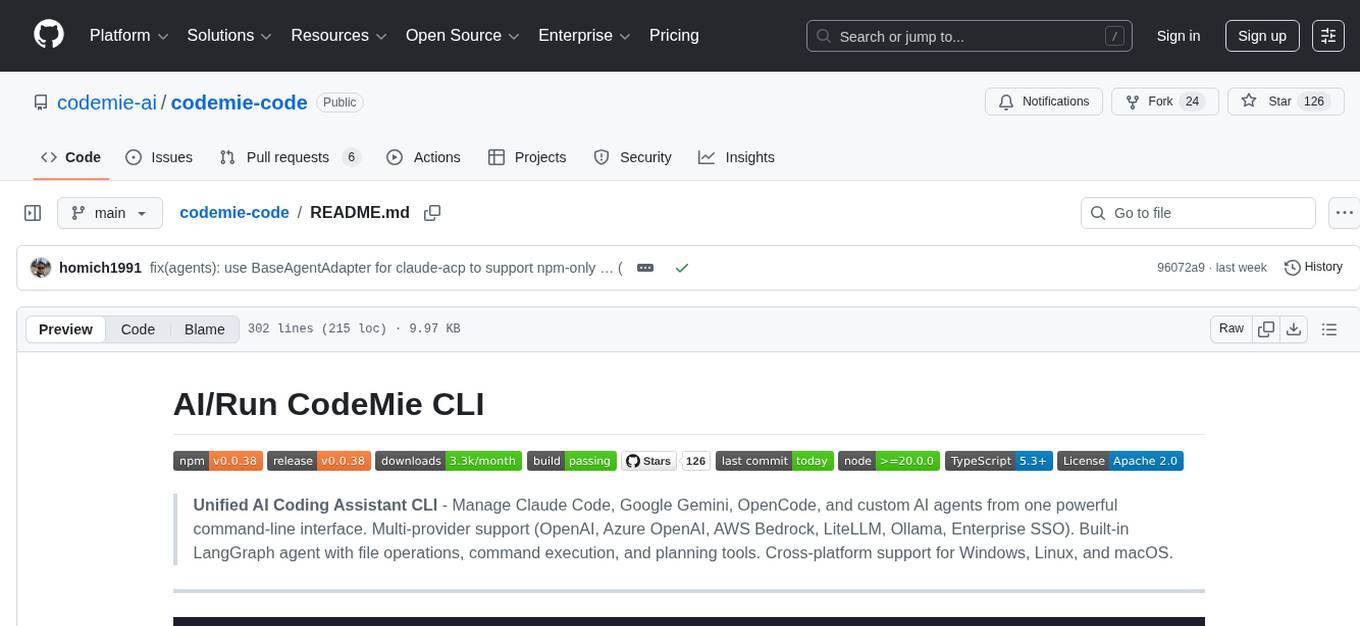

codemie-code

Unified AI Coding Assistant CLI for managing multiple AI agents like Claude Code, Google Gemini, OpenCode, and custom AI agents. Supports OpenAI, Azure OpenAI, AWS Bedrock, LiteLLM, Ollama, and Enterprise SSO. Features built-in LangGraph agent with file operations, command execution, and planning tools. Cross-platform support for Windows, Linux, and macOS. Ideal for developers seeking a powerful alternative to GitHub Copilot or Cursor.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers

- Track LLM usage on a per user and per organization basis

- Block or redact requests containing PII

- Improve LLM reliability with failovers, retries and caching

- Distribute API keys with rate limits and cost limits for internal development/production use cases

- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.