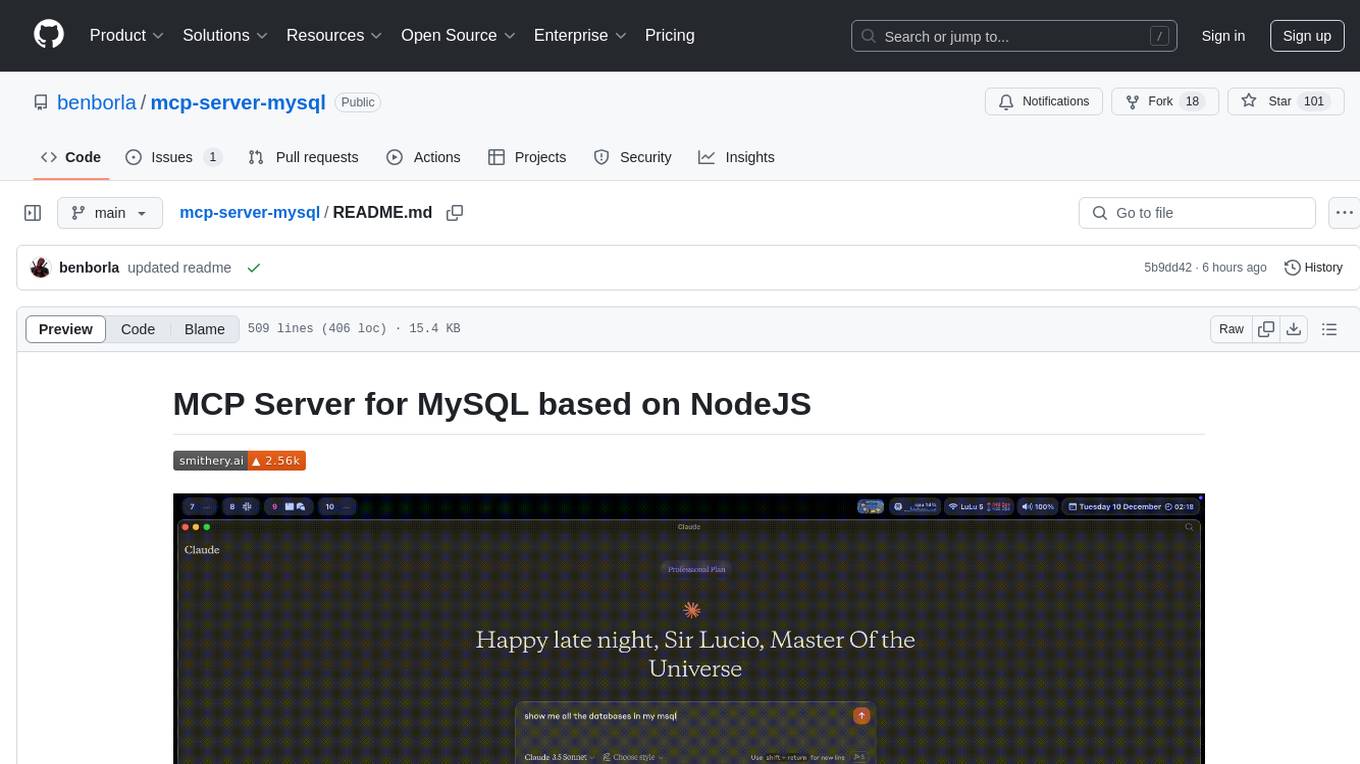

mcp-server-mysql

A Model Context Protocol server that provides read-only access to MySQL databases. This server enables LLMs to inspect database schemas and execute read-only queries.

Stars: 1230

The MCP Server for MySQL based on NodeJS is a Model Context Protocol server that provides access to MySQL databases. It enables users to inspect database schemas and execute SQL queries. The server offers tools for executing SQL queries, providing comprehensive database information, security features like SQL injection prevention, performance optimizations, monitoring, and debugging capabilities. Users can configure the server using environment variables and advanced options. The server supports multi-DB mode, schema-specific permissions, and includes troubleshooting guidelines for common issues. Contributions are welcome, and the project roadmap includes enhancing query capabilities, security features, performance optimizations, monitoring, and expanding schema information.

README:

🚀 This is a modified version optimized for Claude Code with SSH tunnel support

Original Author: @benborla29

Original Repository: https://github.com/benborla/mcp-server-mysql

License: MIT

- ✅ Claude Code Integration - Optimized for use with Anthropic's Claude Code CLI

- ✅ SSH Tunnel Support - Built-in support for SSH tunnels to remote databases

- ✅ Auto-start/stop Hooks - Automatic tunnel management with Claude start/stop

- ✅ DDL Operations - Added MYSQL_DISABLE_READ_ONLY_TRANSACTIONS for CREATE TABLE support

- ✅ Multi-Project Setup - Easy configuration for multiple projects with different databases

- Read the Setup Guide: See PROJECT_SETUP_GUIDE.md for detailed instructions

- Configure SSH Tunnels: Set up automatic SSH tunnels for remote databases

- Use with Claude: Integrated MCP server works seamlessly with Claude Code

A Model Context Protocol server that provides access to MySQL databases through SSH tunnels. This server enables Claude and other LLMs to inspect database schemas and execute SQL queries securely.

- Requirements

- Installation

- Components

- Configuration

- Environment Variables

- Multi-DB Mode

- Schema-Specific Permissions

- Testing

- Troubleshooting

- Contributing

- License

- Node.js v20 or higher

- MySQL 5.7 or higher (MySQL 8.0+ recommended)

- MySQL user with appropriate permissions for the operations you need

- For write operations: MySQL user with INSERT, UPDATE, and/or DELETE privileges

There are several ways to install and configure the MCP server. The most common is through Smithery: https://smithery.ai/server/@benborla29/mcp-server-mysql

For Cursor IDE, you can install this MCP server as follows:

- Visit https://smithery.ai/server/@benborla29/mcp-server-mysql

- Follow the instructions for Cursor

MCP Get provides a centralized registry of MCP servers and simplifies the installation process.

Codex CLI installation is similar to the Claude Code installation below:

codex mcp add mcp_server_mysql \

--env MYSQL_HOST="127.0.0.1" \

--env MYSQL_PORT="3306" \

--env MYSQL_USER="root" \

--env MYSQL_PASS="your_password" \

--env MYSQL_DB="your_database" \

--env ALLOW_INSERT_OPERATION="false" \

--env ALLOW_UPDATE_OPERATION="false" \

--env ALLOW_DELETE_OPERATION="false" \

-- npx -y @benborla29/mcp-server-mysql

If you already have this MCP server configured in Claude Desktop, you can import it automatically:

claude mcp add-from-claude-desktop

This will show an interactive dialog where you can select your mcp_server_mysql server to import with all existing configuration.

Using NPM/PNPM Global Installation:

First, install the package globally:

# Using npm

npm install -g @benborla29/mcp-server-mysql

# Using pnpm

pnpm add -g @benborla29/mcp-server-mysql

Then add the server to Claude Code:

claude mcp add mcp_server_mysql \

-e MYSQL_HOST="127.0.0.1" \

-e MYSQL_PORT="3306" \

-e MYSQL_USER="root" \

-e MYSQL_PASS="your_password" \

-e MYSQL_DB="your_database" \

-e ALLOW_INSERT_OPERATION="false" \

-e ALLOW_UPDATE_OPERATION="false" \

-e ALLOW_DELETE_OPERATION="false" \

-- npx @benborla29/mcp-server-mysql

Using Local Repository (for development):

If you're running from a cloned repository:

claude mcp add mcp_server_mysql \

-e MYSQL_HOST="127.0.0.1" \

-e MYSQL_PORT="3306" \

-e MYSQL_USER="root" \

-e MYSQL_PASS="your_password" \

-e MYSQL_DB="your_database" \

-e ALLOW_INSERT_OPERATION="false" \

-e ALLOW_UPDATE_OPERATION="false" \

-e ALLOW_DELETE_OPERATION="false" \

-e PATH="/path/to/node/bin:/usr/bin:/bin" \

-e NODE_PATH="/path/to/node/lib/node_modules" \

-- /path/to/node /full/path/to/mcp-server-mysql/dist/index.js

Replace:

- /path/to/node with your Node.js binary path (find with `which node`)

- /full/path/to/mcp-server-mysql with the full path to your cloned repository

- Update MySQL credentials to match your environment

Using Unix Socket Connection:

For local MySQL instances using Unix sockets:

claude mcp add mcp_server_mysql \

-e MYSQL_SOCKET_PATH="/tmp/mysql.sock" \

-e MYSQL_USER="root" \

-e MYSQL_PASS="your_password" \

-e MYSQL_DB="your_database" \

-e ALLOW_INSERT_OPERATION="false" \

-e ALLOW_UPDATE_OPERATION="false" \

-e ALLOW_DELETE_OPERATION="false" \

-- npx @benborla29/mcp-server-mysql

Consider which scope to use based on your needs:

# Local scope (default) - only available in current project

claude mcp add mcp_server_mysql [options...]

# User scope - available across all your projects

claude mcp add mcp_server_mysql -s user [options...]

# Project scope - shared with team members via .mcp.json

claude mcp add mcp_server_mysql -s project [options...]

For database servers with credentials, local or user scope is recommended to keep credentials private.

After adding the server, verify it's configured correctly:

# List all configured servers

claude mcp list

# Get details for your MySQL server

claude mcp get mcp_server_mysql

# Check server status within Claude Code

/mcp

For multi-database mode, omit the MYSQL_DB environment variable:

claude mcp add mcp_server_mysql_multi \

-e MYSQL_HOST="127.0.0.1" \

-e MYSQL_PORT="3306" \

-e MYSQL_USER="root" \

-e MYSQL_PASS="your_password" \

-e MULTI_DB_WRITE_MODE="false" \

-- npx @benborla29/mcp-server-mysql

For advanced features, add additional environment variables:

claude mcp add mcp_server_mysql \

-e MYSQL_HOST="127.0.0.1" \

-e MYSQL_PORT="3306" \

-e MYSQL_USER="root" \

-e MYSQL_PASS="your_password" \

-e MYSQL_DB="your_database" \

-e MYSQL_POOL_SIZE="10" \

-e MYSQL_QUERY_TIMEOUT="30000" \

-e MYSQL_CACHE_TTL="60000" \

-e MYSQL_RATE_LIMIT="100" \

-e MYSQL_SSL="true" \

-e ALLOW_INSERT_OPERATION="false" \

-e ALLOW_UPDATE_OPERATION="false" \

-e ALLOW_DELETE_OPERATION="false" \

-e MYSQL_ENABLE_LOGGING="true" \

-- npx @benborla29/mcp-server-mysql

- Server Connection Issues: Use the /mcp command in Claude Code to check server status and authenticate if needed.

- Path Issues: If using a local repository, ensure Node.js paths are correctly set:

# Find your Node.js path

which node

# For PATH environment variable

echo "$(which node)/../"

# For NODE_PATH environment variable

echo "$(which node)/../../lib/node_modules"

- Permission Errors: Ensure your MySQL user has appropriate permissions for the operations you've enabled.

- Server Not Starting: Check Claude Code logs or run the server directly to debug:

# Test the server directly

npx @benborla29/mcp-server-mysql

For manual installation:

# Using npm

npm install -g @benborla29/mcp-server-mysql

# Using pnpm

pnpm add -g @benborla29/mcp-server-mysql

After manual installation, you'll need to configure your LLM application to use the MCP server (see Configuration section below).

If you want to clone and run this MCP server directly from the source code, follow these steps:

- Clone the repository

git clone https://github.com/benborla/mcp-server-mysql.git

cd mcp-server-mysql

- Install dependencies

npm install

# or

pnpm install

- Build the project

npm run build

# or

pnpm run build

- Configure Claude Desktop

Add the following to your Claude Desktop configuration file (claude_desktop_config.json):

{

"mcpServers": {

"mcp_server_mysql": {

"command": "/path/to/node",

"args": [

"/full/path/to/mcp-server-mysql/dist/index.js"

],

"env": {

"MYSQL_HOST": "127.0.0.1",

"MYSQL_PORT": "3306",

"MYSQL_USER": "root",

"MYSQL_PASS": "your_password",

"MYSQL_DB": "your_database",

"ALLOW_INSERT_OPERATION": "false",

"ALLOW_UPDATE_OPERATION": "false",

"ALLOW_DELETE_OPERATION": "false",

"PATH": "/path/to/node/bin:/usr/bin:/bin", // <--- Important to add the following, run in your terminal `echo "$(which node)/../"` to get the path

"NODE_PATH": "/path/to/node/lib/node_modules" // <--- Important to add the following, run in your terminal `echo "$(which node)/../../lib/node_modules"`

}

}

}

}

Replace:

- /path/to/node with the full path to your Node.js binary (find it with `which node`)

- /full/path/to/mcp-server-mysql with the full path to where you cloned the repository

- Set the MySQL credentials to match your environment

- Test the server

# Run the server directly to test

node dist/index.js

If it connects to MySQL successfully, you're ready to use it with Claude Desktop.

To run in remote mode, you'll need to provide environment variables to the npx script.

- Create an env file in your preferred directory

# create .env file

touch .env

- Copy-paste the example file from this repository

- Set the MySQL credentials to match your environment

- Set IS_REMOTE_MCP=true

- Set REMOTE_SECRET_KEY to a secure string.

- Provide a custom PORT if needed. Default is 3000.

- Load the variables in the current session (set -a exports them so that the npx process can see them):

set -a; source .env; set +a

- Run the server

npx @benborla29/mcp-server-mysql

- Configure your agent to connect to the MCP server with the following configuration:

{ "mcpServers": { "mysql": { "url": "http://your-host:3000/mcp", "type": "streamableHttp", "headers": { "Authorization": "Bearer <REMOTE_SECRET_KEY>" } } } }

- mysql_query

- Execute SQL queries against the connected database

- Input: sql (string): The SQL query to execute

- By default, limited to READ ONLY operations

- Optional write operations (when enabled via configuration):

  - INSERT: Add new data to tables (requires ALLOW_INSERT_OPERATION=true)

  - UPDATE: Modify existing data (requires ALLOW_UPDATE_OPERATION=true)

  - DELETE: Remove data (requires ALLOW_DELETE_OPERATION=true)

- All operations are executed within a transaction with proper commit/rollback handling

- Supports prepared statements for secure parameter handling

- Configurable query timeouts and result pagination

- Built-in query execution statistics
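The flag-based gating described above could be sketched as follows. This is a minimal illustration under stated assumptions, not the server's actual implementation: `WriteFlags` and `isStatementAllowed` are hypothetical names, and the classification is deliberately naive (it looks only at the first keyword of the statement and rejects anything it does not recognize).

```typescript
// Hypothetical sketch: gate a statement on the ALLOW_*_OPERATION flags.
// Flag values are strings because they come from environment variables.
type WriteFlags = {
  ALLOW_INSERT_OPERATION?: string;
  ALLOW_UPDATE_OPERATION?: string;
  ALLOW_DELETE_OPERATION?: string;
};

function isStatementAllowed(sql: string, flags: WriteFlags): boolean {
  // Naive classification: upper-cased first word of the statement.
  const keyword = (sql.trim().split(/\s+/)[0] || "").toUpperCase();
  switch (keyword) {
    case "SELECT":
    case "SHOW":
    case "DESCRIBE":
    case "EXPLAIN":
      return true; // read-only statements are always allowed
    case "INSERT":
      return flags.ALLOW_INSERT_OPERATION === "true";
    case "UPDATE":
      return flags.ALLOW_UPDATE_OPERATION === "true";
    case "DELETE":
      return flags.ALLOW_DELETE_OPERATION === "true";
    default:
      return false; // anything unrecognized is rejected in this sketch
  }
}
```

With no flags set, `isStatementAllowed("INSERT INTO t VALUES (1)", {})` returns `false`, which mirrors the read-only default.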

The server provides comprehensive database information:

- Table Schemas

- JSON schema information for each table

- Column names and data types

- Index information and constraints

- Foreign key relationships

- Table statistics and metrics

- Automatically discovered from database metadata

- SQL injection prevention through prepared statements

- Query whitelisting/blacklisting capabilities

- Rate limiting for query execution

- Query complexity analysis

- Configurable connection encryption

- Read-only transaction enforcement

- Optimized connection pooling

- Query result caching

- Large result set streaming

- Query execution plan analysis

- Configurable query timeouts

- Comprehensive query logging

- Performance metrics collection

- Error tracking and reporting

- Health check endpoints

- Query execution statistics

If you installed using Smithery, your configuration is already set up. You can view or modify it with:

smithery configure @benborla29/mcp-server-mysql

When reconfiguring, you can update any of the MySQL connection details as well as the write operation settings:

- Basic connection settings:

- MySQL Host, Port, User, Password, Database

- SSL/TLS configuration (if your database requires secure connections)

- Write operation permissions:

- Allow INSERT Operations: Set to true if you want to allow adding new data

- Allow UPDATE Operations: Set to true if you want to allow updating existing data

- Allow DELETE Operations: Set to true if you want to allow deleting data

For security reasons, all write operations are disabled by default. Only enable these settings if you specifically need Claude to modify your database data.

For more control over the MCP server's behavior, you can use these advanced configuration options:

{

"mcpServers": {

"mcp_server_mysql": {

"command": "/path/to/npx/binary/npx",

"args": [

"-y",

"@benborla29/mcp-server-mysql"

],

"env": {

// Basic connection settings

"MYSQL_HOST": "127.0.0.1",

"MYSQL_PORT": "3306",

"MYSQL_USER": "root",

"MYSQL_PASS": "",

"MYSQL_DB": "db_name",

"PATH": "/path/to/node/bin:/usr/bin:/bin",

// Performance settings

"MYSQL_POOL_SIZE": "10",

"MYSQL_QUERY_TIMEOUT": "30000",

"MYSQL_CACHE_TTL": "60000",

// Security settings

"MYSQL_RATE_LIMIT": "100",

"MYSQL_MAX_QUERY_COMPLEXITY": "1000",

"MYSQL_SSL": "true",

// Monitoring settings

"MYSQL_ENABLE_LOGGING": "true",

"MYSQL_LOG_LEVEL": "info",

"MYSQL_METRICS_ENABLED": "true",

// Write operation flags

"ALLOW_INSERT_OPERATION": "false",

"ALLOW_UPDATE_OPERATION": "false",

"ALLOW_DELETE_OPERATION": "false"

}

}

}

}

- MYSQL_SOCKET_PATH: Unix socket path for local connections (e.g., "/tmp/mysql.sock")

- MYSQL_HOST: MySQL server host (default: "127.0.0.1") - ignored if MYSQL_SOCKET_PATH is set

- MYSQL_PORT: MySQL server port (default: "3306") - ignored if MYSQL_SOCKET_PATH is set

- MYSQL_USER: MySQL username (default: "root")

- MYSQL_PASS: MySQL password

- MYSQL_DB: Target database name (leave empty for multi-DB mode)
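The precedence between the socket path and host/port can be illustrated with a small sketch. The function and type names below are hypothetical and chosen for illustration only; the defaults are the ones listed above, and how the server resolves these values internally is not shown in this README.

```typescript
// Hypothetical sketch: pick a connection target from environment-style settings.
type Env = Record<string, string | undefined>;

type ConnectionTarget =
  | { kind: "socket"; socketPath: string }
  | { kind: "tcp"; host: string; port: number };

function resolveConnectionTarget(env: Env): ConnectionTarget {
  const socketPath = env.MYSQL_SOCKET_PATH;
  if (socketPath) {
    // MYSQL_HOST and MYSQL_PORT are ignored when a socket path is set.
    return { kind: "socket", socketPath: socketPath };
  }
  return {
    kind: "tcp",
    host: env.MYSQL_HOST || "127.0.0.1", // documented default
    port: parseInt(env.MYSQL_PORT || "3306", 10), // documented default
  };
}
```

Passing the environment in as a plain object, rather than reading it globally, keeps the function easy to test.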

For scenarios requiring frequent credential rotation or temporary connections, you can use a MySQL connection string instead of individual environment variables:

- MYSQL_CONNECTION_STRING: MySQL CLI-format connection string (e.g., mysql --default-auth=mysql_native_password -A -hHOST -PPORT -uUSER -pPASS database_name)

When MYSQL_CONNECTION_STRING is provided, it takes precedence over individual connection settings. This is particularly useful for:

- Rotating credentials that expire frequently

- Temporary database connections

- Quick testing with different database configurations

Note: For security, this should only be set via environment variables, not stored in version-controlled configuration files. Consider using the prompt input type in Claude Code's MCP configuration for credentials that expire.
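To make the CLI-style format concrete, here is a rough sketch of how such a string could be split into its parts. It is an assumption-laden illustration, not the server's parser: `parseCliConnectionString` is a hypothetical name, only the `-h`, `-P`, `-u`, and `-p` short options from the example above are recognized, and quoting or values containing spaces are not handled.

```typescript
// Hypothetical sketch: parse "mysql -hHOST -PPORT -uUSER -pPASS database_name".
type ParsedConnection = {
  host?: string;
  port?: number;
  user?: string;
  password?: string;
  database?: string;
};

function parseCliConnectionString(input: string): ParsedConnection {
  const result: ParsedConnection = {};
  const tokens = input.trim().split(/\s+/);
  for (let i = 0; i < tokens.length; i++) {
    const token = tokens[i];
    if (i === 0 && token === "mysql") continue; // leading program name
    if (token.indexOf("--") === 0) continue; // long options are ignored here
    if (token.charAt(0) === "-") {
      const flag = token.charAt(1);
      const value = token.slice(2);
      if (flag === "h") result.host = value;
      else if (flag === "P") result.port = parseInt(value, 10);
      else if (flag === "u") result.user = value;
      else if (flag === "p") result.password = value;
      // other short flags such as -A carry no connection data and are skipped
      continue;
    }
    // The first bare token is taken as the database name.
    if (result.database === undefined) result.database = token;
  }
  return result;
}
```

Note that the option letters are case-sensitive: `-P` is the port and `-p` is the password, as in the mysql command-line client.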

- MYSQL_POOL_SIZE: Connection pool size (default: "10")

- MYSQL_QUERY_TIMEOUT: Query timeout in milliseconds (default: "30000")

- MYSQL_CACHE_TTL: Cache time-to-live in milliseconds (default: "60000")

- MYSQL_QUEUE_LIMIT: Maximum number of queued connection requests (default: "100")

- MYSQL_CONNECT_TIMEOUT: Connection timeout in milliseconds (default: "10000")
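As an illustration of what a time-to-live setting such as MYSQL_CACHE_TTL means in practice, the sketch below shows a tiny cache whose entries expire after a fixed number of milliseconds. The `TtlCache` class is hypothetical and is not taken from the server's code; the current time is passed in explicitly so the behavior is deterministic.

```typescript
// Hypothetical sketch: a result cache with lazy, time-based expiry.
type CacheEntry<V> = { value: V; expiresAt: number };

class TtlCache<V> {
  // Object.create(null) avoids collisions with Object.prototype keys.
  private entries: { [key: string]: CacheEntry<V> } = Object.create(null);
  private ttlMs: number;

  constructor(ttlMs: number) {
    this.ttlMs = ttlMs;
  }

  set(key: string, value: V, nowMs: number): void {
    this.entries[key] = { value: value, expiresAt: nowMs + this.ttlMs };
  }

  get(key: string, nowMs: number): V | undefined {
    const entry = this.entries[key];
    if (entry === undefined) return undefined;
    if (nowMs >= entry.expiresAt) {
      delete this.entries[key]; // expired: evict lazily on read
      return undefined;
    }
    return entry.value;
  }
}
```

With a TTL of 60000, a value stored at time 0 is still returned at 59999 ms and is treated as missing from 60000 ms onward.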

- MYSQL_RATE_LIMIT: Maximum queries per minute (default: "100")

- MYSQL_MAX_QUERY_COMPLEXITY: Maximum query complexity score (default: "1000")

- MYSQL_SSL: Enable SSL/TLS encryption (default: "false")

- MYSQL_SSL_CA: Path to SSL CA certificate file (PEM format). Only used when MYSQL_SSL=true. Required for connecting to MySQL instances with self-signed certificates or custom CAs.

- ALLOW_INSERT_OPERATION: Enable INSERT operations (default: "false")

- ALLOW_UPDATE_OPERATION: Enable UPDATE operations (default: "false")

- ALLOW_DELETE_OPERATION: Enable DELETE operations (default: "false")

- ALLOW_DDL_OPERATION: Enable DDL operations (default: "false")

- MYSQL_DISABLE_READ_ONLY_TRANSACTIONS: [NEW] Disable read-only transaction enforcement (default: "false"). ⚠️ Security Warning: Only enable this if you need full write capabilities and trust the LLM with your database

- SCHEMA_INSERT_PERMISSIONS: Schema-specific INSERT permissions

- SCHEMA_UPDATE_PERMISSIONS: Schema-specific UPDATE permissions

- SCHEMA_DELETE_PERMISSIONS: Schema-specific DELETE permissions

- SCHEMA_DDL_PERMISSIONS: Schema-specific DDL permissions

- MULTI_DB_WRITE_MODE: Enable write operations in multi-DB mode (default: "false")
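A "maximum queries per minute" limit like MYSQL_RATE_LIMIT can be enforced in several ways. The sketch below uses a sliding one-minute window; whether the server uses a sliding window, a fixed window, or something else is not stated here, so treat this purely as an illustration. `QueryRateLimiter` is a hypothetical name.

```typescript
// Hypothetical sketch: sliding-window limiter for "N queries per minute".
class QueryRateLimiter {
  private timestamps: number[] = [];
  private maxPerMinute: number;

  constructor(maxPerMinute: number) {
    this.maxPerMinute = maxPerMinute;
  }

  // Returns true and records the query if it fits in the current window.
  tryAcquire(nowMs: number): boolean {
    const windowStart = nowMs - 60000;
    // Forget queries that fell out of the last 60 seconds.
    this.timestamps = this.timestamps.filter((t) => t > windowStart);
    if (this.timestamps.length >= this.maxPerMinute) {
      return false; // limit reached: reject without recording
    }
    this.timestamps.push(nowMs);
    return true;
  }
}
```

Rejected queries are not recorded, so a client that keeps retrying does not extend its own lockout.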

- MYSQL_TIMEZONE: Set the timezone for date/time values. Accepts formats like +08:00 (UTC+8), -05:00 (UTC-5), Z (UTC), or local (system timezone). Useful for ensuring consistent date/time handling across different server locations.

- MYSQL_DATE_STRINGS: When set to "true", returns date/datetime values as strings instead of JavaScript Date objects. This preserves the exact database values without any timezone conversion, which is particularly useful for:

  - Applications that need precise control over date formatting

  - Cross-timezone database operations

  - Avoiding JavaScript Date timezone quirks
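The MYSQL_TIMEZONE formats listed above can be read as UTC offsets. As a small sketch, the helper below converts them to minutes. `parseTimezoneOffsetMinutes` is a hypothetical name; returning `null` for `local` (meaning "defer to the system timezone") and throwing on any other input are assumptions made for this example.

```typescript
// Hypothetical sketch: interpret a MYSQL_TIMEZONE value as a UTC offset.
// Returns minutes east of UTC, or null for "local" (system timezone).
function parseTimezoneOffsetMinutes(tz: string): number | null {
  if (tz === "local") return null;
  if (tz === "Z") return 0;
  const match = /^([+-])(\d{2}):(\d{2})$/.exec(tz);
  if (match === null) {
    throw new Error("Unsupported MYSQL_TIMEZONE value: " + tz);
  }
  const sign = match[1] === "-" ? -1 : 1;
  return sign * (parseInt(match[2], 10) * 60 + parseInt(match[3], 10));
}
```

For instance, `+08:00` maps to 480 and `-05:00` maps to -300.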

- MYSQL_ENABLE_LOGGING: Enable query logging (default: "false")

- MYSQL_LOG_LEVEL: Logging level (default: "info")

- MYSQL_METRICS_ENABLED: Enable performance metrics (default: "false")

- IS_REMOTE_MCP: Enable remote MCP mode (default: "false")

- REMOTE_SECRET_KEY: Secret key for remote MCP authentication (default: ""). If not provided, remote MCP mode will be disabled.

- PORT: Port number for the remote MCP server (default: 3000)
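How the three remote-mode variables interact can be summarized in a short sketch. The names `RemoteSettings` and `resolveRemoteSettings` are hypothetical; the rules encoded here (remote mode requires both IS_REMOTE_MCP=true and a non-empty REMOTE_SECRET_KEY, and PORT defaults to 3000) are taken from the descriptions above.

```typescript
// Hypothetical sketch: derive remote-mode settings from environment-style input.
type Env = Record<string, string | undefined>;

type RemoteSettings =
  | { enabled: false }
  | { enabled: true; secretKey: string; port: number };

function resolveRemoteSettings(env: Env): RemoteSettings {
  const secretKey = env.REMOTE_SECRET_KEY || "";
  // Without a secret key, remote mode stays disabled even if requested.
  if (env.IS_REMOTE_MCP !== "true" || secretKey === "") {
    return { enabled: false };
  }
  return {
    enabled: true,
    secretKey: secretKey,
    port: parseInt(env.PORT || "3000", 10), // documented default
  };
}
```

A client would then send the same key in an `Authorization: Bearer <REMOTE_SECRET_KEY>` header, as in the agent configuration shown earlier.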

MCP-Server-MySQL supports connecting to multiple databases when no specific database is set. This allows the LLM to query any database the MySQL user has access to. For full details, see README-MULTI-DB.md.

To enable multi-DB mode, simply leave the MYSQL_DB environment variable empty. In multi-DB mode, queries require schema qualification:

-- Use fully qualified table names

SELECT * FROM database_name.table_name;

-- Or use USE statements to switch between databases

USE database_name;

SELECT * FROM table_name;

For fine-grained control over database operations, MCP-Server-MySQL now supports schema-specific permissions. This allows different databases to have different levels of access (read-only, read-write, etc.).

SCHEMA_INSERT_PERMISSIONS=development:true,test:true,production:false

SCHEMA_UPDATE_PERMISSIONS=development:true,test:true,production:false

SCHEMA_DELETE_PERMISSIONS=development:false,test:true,production:false

SCHEMA_DDL_PERMISSIONS=development:false,test:true,production:false

For complete details and security recommendations, see README-MULTI-DB.md.
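The `schema:true,schema:false` format used by the SCHEMA_*_PERMISSIONS variables can be parsed along these lines. This is a sketch rather than the server's code: both function names are hypothetical, malformed entries are simply skipped, and falling back to a global default for schemas that are not listed is an assumption of this example (see README-MULTI-DB.md for the actual rules).

```typescript
// Hypothetical sketch: parse "development:true,test:true,production:false".
function parseSchemaPermissions(raw: string): { [schema: string]: boolean } {
  const permissions: { [schema: string]: boolean } = Object.create(null);
  for (const pair of raw.split(",")) {
    const trimmed = pair.trim();
    if (trimmed === "") continue;
    const separator = trimmed.lastIndexOf(":");
    if (separator === -1) continue; // malformed entry: skipped in this sketch
    const schema = trimmed.slice(0, separator).trim();
    const value = trimmed.slice(separator + 1).trim();
    permissions[schema] = value === "true";
  }
  return permissions;
}

// Assumed rule: a schema-specific entry wins, otherwise use the global flag.
function isAllowedForSchema(
  permissions: { [schema: string]: boolean },
  schema: string,
  globalDefault: boolean
): boolean {
  const specific = permissions[schema];
  return specific === undefined ? globalDefault : specific;
}
```

For the SCHEMA_DELETE_PERMISSIONS example above, `development` and `production` resolve to `false` and `test` resolves to `true`.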

Before running tests, you need to set up the test database and seed it with test data:

- Create Test Database and User

-- Connect as root and create test database

CREATE DATABASE IF NOT EXISTS mcp_test;

-- Create test user with appropriate permissions

CREATE USER IF NOT EXISTS 'mcp_test'@'localhost' IDENTIFIED BY 'mcp_test_password';

GRANT ALL PRIVILEGES ON mcp_test.* TO 'mcp_test'@'localhost';

FLUSH PRIVILEGES;

- Run Database Setup Script

# Run the database setup script

pnpm run setup:test:db

This will create the necessary tables and seed data. The script is located in scripts/setup-test-db.ts

- Configure Test Environment

Create a .env.test file in the project root (if not existing):

MYSQL_HOST=127.0.0.1

MYSQL_PORT=3306

MYSQL_USER=mcp_test

MYSQL_PASS=mcp_test_password

MYSQL_DB=mcp_test

- Update package.json Scripts

Add these scripts to your package.json:

{ "scripts": { "setup:test:db": "ts-node scripts/setup-test-db.ts", "pretest": "pnpm run setup:test:db", "test": "vitest run", "test:watch": "vitest", "test:coverage": "vitest run --coverage" } }

The project includes a comprehensive test suite to ensure functionality and reliability:

# First-time setup

pnpm run setup:test:db

# Run all tests

pnpm test

The evals package loads an MCP client that then runs the index.ts file, so there is no need to rebuild between tests. You can load environment variables by prefixing the npx command. Full documentation can be found at MCP Evals.

OPENAI_API_KEY=your-key npx mcp-eval evals.ts index.ts

- Connection Issues

- Verify MySQL server is running and accessible

- Check credentials and permissions

- Ensure SSL/TLS configuration is correct if enabled

- Try connecting with a MySQL client to confirm access

- Performance Issues

- Adjust connection pool size

- Configure query timeout values

- Enable query caching if needed

- Check query complexity settings

- Monitor server resource usage

- Security Restrictions

- Review rate limiting configuration

- Check query whitelist/blacklist settings

- Verify SSL/TLS settings

- Ensure the user has appropriate MySQL permissions

- Path Resolution

If you encounter an error "Could not connect to MCP server mcp-server-mysql", explicitly set the path of all required binaries:

{ "env": { "PATH": "/path/to/node/bin:/usr/bin:/bin" } }

Where can I find my node bin path? Run the following command to get it:

For PATH

echo "$(which node)/../"

For NODE_PATH

echo "$(which node)/../../lib/node_modules"

- Claude Desktop Specific Issues

- If you see "Server disconnected" logs in Claude Desktop, check the logs at ~/Library/Logs/Claude/mcp-server-mcp_server_mysql.log

- Ensure you're using the absolute path to both the Node binary and the server script

- Check if your .env file is being properly loaded; use explicit environment variables in the configuration

- Try running the server directly from the command line to see if there are connection issues

- If you need write operations (INSERT, UPDATE, DELETE), set the appropriate flags to "true" in your configuration:

"env": {

"ALLOW_INSERT_OPERATION": "true", // Enable INSERT operations

"ALLOW_UPDATE_OPERATION": "true", // Enable UPDATE operations

"ALLOW_DELETE_OPERATION": "true" // Enable DELETE operations

}

- Ensure your MySQL user has the appropriate permissions for the operations you're enabling

- For direct execution configuration, use:

{ "mcpServers": { "mcp_server_mysql": { "command": "/full/path/to/node", "args": [ "/full/path/to/mcp-server-mysql/dist/index.js" ], "env": { "MYSQL_HOST": "127.0.0.1", "MYSQL_PORT": "3306", "MYSQL_USER": "root", "MYSQL_PASS": "your_password", "MYSQL_DB": "your_database" } } } }

- Authentication Issues

- For MySQL 8.0+, ensure the server supports the caching_sha2_password authentication plugin

- Check if your MySQL user is configured with the correct authentication method

- Try creating a user with legacy authentication if needed:

CREATE USER 'user'@'localhost' IDENTIFIED WITH mysql_native_password BY 'password';

@lizhuangs

- I am encountering the error `Error [ERR_MODULE_NOT_FOUND]: Cannot find package 'dotenv' imported from`. Try this workaround:

npx -y -p @benborla29/mcp-server-mysql -p dotenv mcp-server-mysql

Thanks to @lizhuangs

Contributions are welcome! Please feel free to submit a Pull Request to https://github.com/benborla/mcp-server-mysql

- Clone the repository

- Install dependencies: pnpm install

- Build the project: pnpm run build

- Run tests: pnpm test

We're actively working on enhancing this MCP server. Check our CHANGELOG.md for details on planned features, including:

- Enhanced query capabilities with prepared statements

- Advanced security features

- Performance optimizations

- Comprehensive monitoring

- Expanded schema information

If you'd like to contribute to any of these areas, please check the issues on GitHub or open a new one to discuss your ideas.

- Fork the repository

- Create a feature branch: git checkout -b feature/your-feature-name

- Commit your changes: git commit -am 'Add some feature'

- Push to the branch: git push origin feature/your-feature-name

- Submit a pull request

This MCP server is licensed under the MIT License. See the LICENSE file for details.


Alternative AI tools for mcp-server-mysql

Similar Open Source Tools


mcp-redis

The Redis MCP Server is a natural language interface designed for agentic applications to efficiently manage and search data in Redis. It integrates seamlessly with MCP (Model Context Protocol) clients, enabling AI-driven workflows to interact with structured and unstructured data in Redis. The server supports natural language queries, seamless MCP integration, full Redis support for various data types, search and filtering capabilities, scalability, and lightweight design. It provides tools for managing data stored in Redis, such as string, hash, list, set, sorted set, pub/sub, streams, JSON, query engine, and server management. Installation can be done from PyPI or GitHub, with options for testing, development, and Docker deployment. Configuration can be via command line arguments or environment variables. Integrations include OpenAI Agents SDK, Augment, Claude Desktop, and VS Code with GitHub Copilot. Use cases include AI assistants, chatbots, data search & analytics, and event processing. Contributions are welcome under the MIT License.

flapi

flAPI is a powerful service that automatically generates read-only APIs for datasets by utilizing SQL templates. Built on top of DuckDB, it offers features like automatic API generation, support for Model Context Protocol (MCP), connecting to multiple data sources, caching, security implementation, and easy deployment. The tool allows users to create APIs without coding and enables the creation of AI tools alongside REST endpoints using SQL templates. It supports unified configuration for REST endpoints and MCP tools/resources, concurrent servers for REST API and MCP server, and automatic tool discovery. The tool also provides DuckLake-backed caching for modern, snapshot-based caching with features like full refresh, incremental sync, retention, compaction, and audit logs.

mcphost

MCPHost is a CLI host application that enables Large Language Models (LLMs) to interact with external tools through the Model Context Protocol (MCP). It acts as a host in the MCP client-server architecture, allowing language models to access external tools and data sources, maintain consistent context across interactions, and execute commands safely. The tool supports interactive conversations with Claude 3.5 Sonnet and Ollama models, multiple concurrent MCP servers, dynamic tool discovery and integration, configurable server locations and arguments, and a consistent command interface across model types.

pgedge-postgres-mcp

The pgedge-postgres-mcp repository contains a set of tools and scripts for managing and monitoring PostgreSQL databases in an edge computing environment. It provides functionalities for automating database tasks, monitoring database performance, and ensuring data integrity in edge computing scenarios. The tools are designed to be lightweight and efficient, making them suitable for resource-constrained edge devices. With pgedge-postgres-mcp, users can easily deploy and manage PostgreSQL databases in edge computing environments with minimal overhead.

open-edison

OpenEdison is a secure MCP control panel that connects AI to data/software with additional security controls to reduce data exfiltration risks. It helps address the lethal trifecta problem by providing visibility, monitoring potential threats, and alerting on data interactions. The tool offers features like data leak monitoring, controlled execution, easy configuration, visibility into agent interactions, a simple API, and Docker support. It integrates with LangGraph, LangChain, and plain Python agents for observability and policy enforcement. OpenEdison helps gain observability, control, and policy enforcement for AI interactions with systems of records, existing company software, and data to reduce risks of AI-caused data leakage.

mcphub.nvim

MCPHub.nvim is a powerful Neovim plugin that integrates MCP (Model Context Protocol) servers into your workflow. It offers a centralized config file for managing servers and tools, with an intuitive UI for testing resources. Ideal for LLM integration, it provides programmatic API access and interactive testing through the `:MCPHub` command.

mcp

Model Context Protocol (MCP) server providing Vuetify component information and documentation to any MCP-compatible client or IDE. The Vuetify MCP server bridges the gap between Vuetify's component library and AI-assisted development environments, enabling seamless access to Vuetify's extensive component ecosystem directly within your development workflow. Gain AI-powered assistance that understands Vuetify's component structure, styling conventions, and implementation details.

postman-mcp-server

The Postman MCP Server connects Postman to AI tools, enabling AI agents and assistants to access workspaces, manage collections and environments, evaluate APIs, and automate workflows through natural language interactions. It supports various tool configurations like Minimal, Full, and Code, catering to users with different needs. The server offers authentication via OAuth for the best developer experience and fastest setup. Use cases include API testing, code synchronization, collection management, workspace and environment management, automatic spec creation, and client code generation. Designed for developers integrating AI tools with Postman's context and features, supporting quick natural language queries to advanced agent workflows.

sonarqube-mcp-server

The SonarQube MCP Server is a Model Context Protocol (MCP) server that enables seamless integration with SonarQube Server or Cloud for code quality and security. It supports the analysis of code snippets directly within the agent context. The server provides various tools for analyzing code, managing issues, accessing metrics, and interacting with SonarQube projects. It also supports advanced features like dependency risk analysis, enterprise portfolio management, and system health checks. The server can be configured for different transport modes, proxy settings, and custom certificates. Telemetry data collection can be disabled if needed.

mcp

Semgrep MCP Server is a beta server under active development for using Semgrep to scan code for security vulnerabilities. It provides a Model Context Protocol (MCP) for various coding tools to get specialized help in tasks. Users can connect to Semgrep AppSec Platform, scan code for vulnerabilities, customize Semgrep rules, analyze and filter scan results, and compare results. The tool is published on PyPI as semgrep-mcp and can be installed using pip, pipx, uv, poetry, or other methods. It supports CLI and Docker environments for running the server. Integration with VS Code is also available for quick installation. The project welcomes contributions and is inspired by core technologies like Semgrep and MCP, as well as related community projects and tools.

supergateway

Supergateway is a tool that allows running MCP stdio-based servers over SSE (Server-Sent Events) with one command. It is useful for remote access, debugging, or connecting to SSE-based clients when your MCP server only speaks stdio. The tool supports running in SSE to Stdio mode as well, where it connects to a remote SSE server and exposes a local stdio interface for downstream clients. Supergateway can be used with ngrok to share local MCP servers with remote clients and can also be run in a Docker containerized deployment. It is designed with modularity in mind, ensuring compatibility and ease of use for AI tools exchanging data.

ocode

OCode is a sophisticated terminal-native AI coding assistant that provides deep codebase intelligence and autonomous task execution. It seamlessly works with local Ollama models, bringing enterprise-grade AI assistance directly to your development workflow. OCode offers core capabilities such as terminal-native workflow, deep codebase intelligence, autonomous task execution, direct Ollama integration, and an extensible plugin layer. It can perform tasks like code generation & modification, project understanding, development automation, data processing, system operations, and interactive operations. The tool includes specialized tools for file operations, text processing, data processing, system operations, development tools, and integration. OCode enhances conversation parsing, offers smart tool selection, and provides performance improvements for coding tasks.

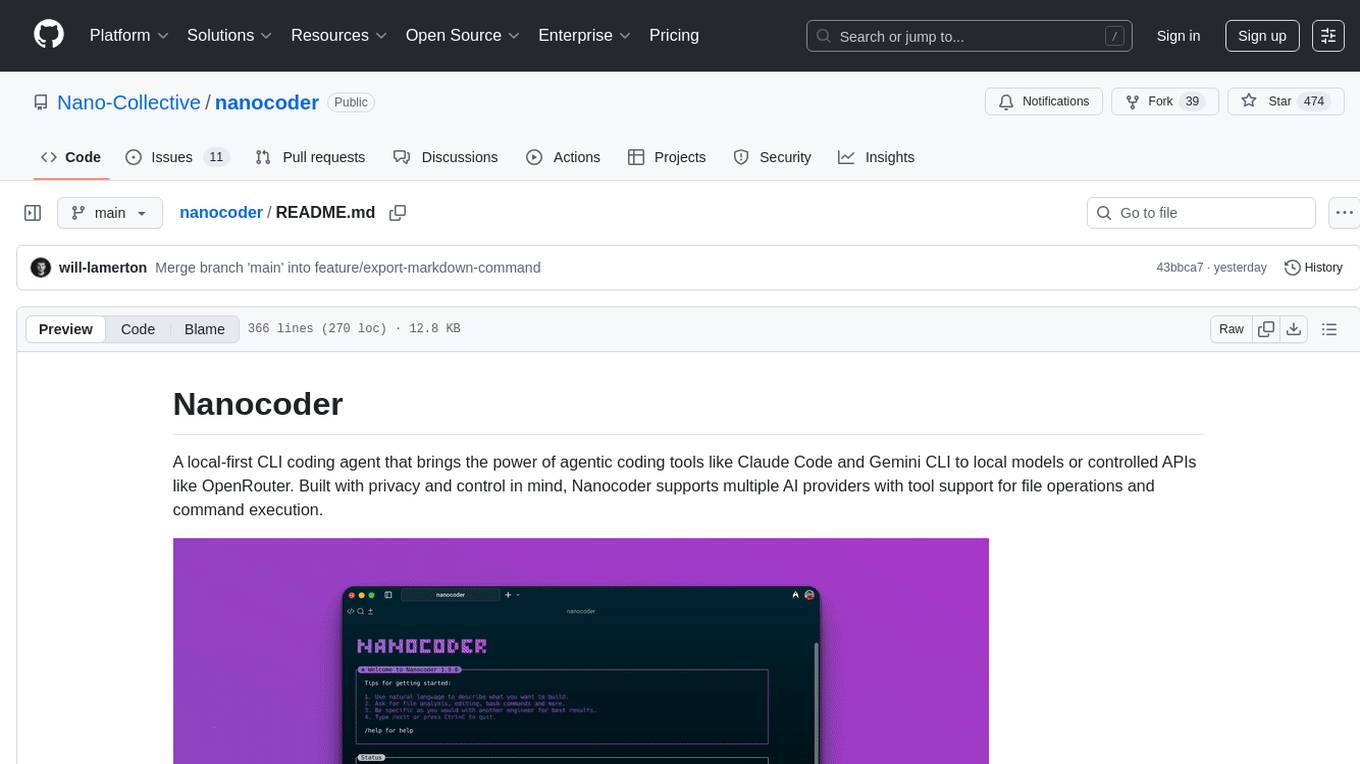

nanocoder

Nanocoder is a versatile code editor designed for beginners and experienced programmers alike. It provides a user-friendly interface with features such as syntax highlighting, code completion, and error checking. With Nanocoder, you can easily write and debug code in various programming languages, making it an ideal tool for learning, practicing, and developing software projects. Whether you are a student, hobbyist, or professional developer, Nanocoder offers a seamless coding experience to boost your productivity and creativity.

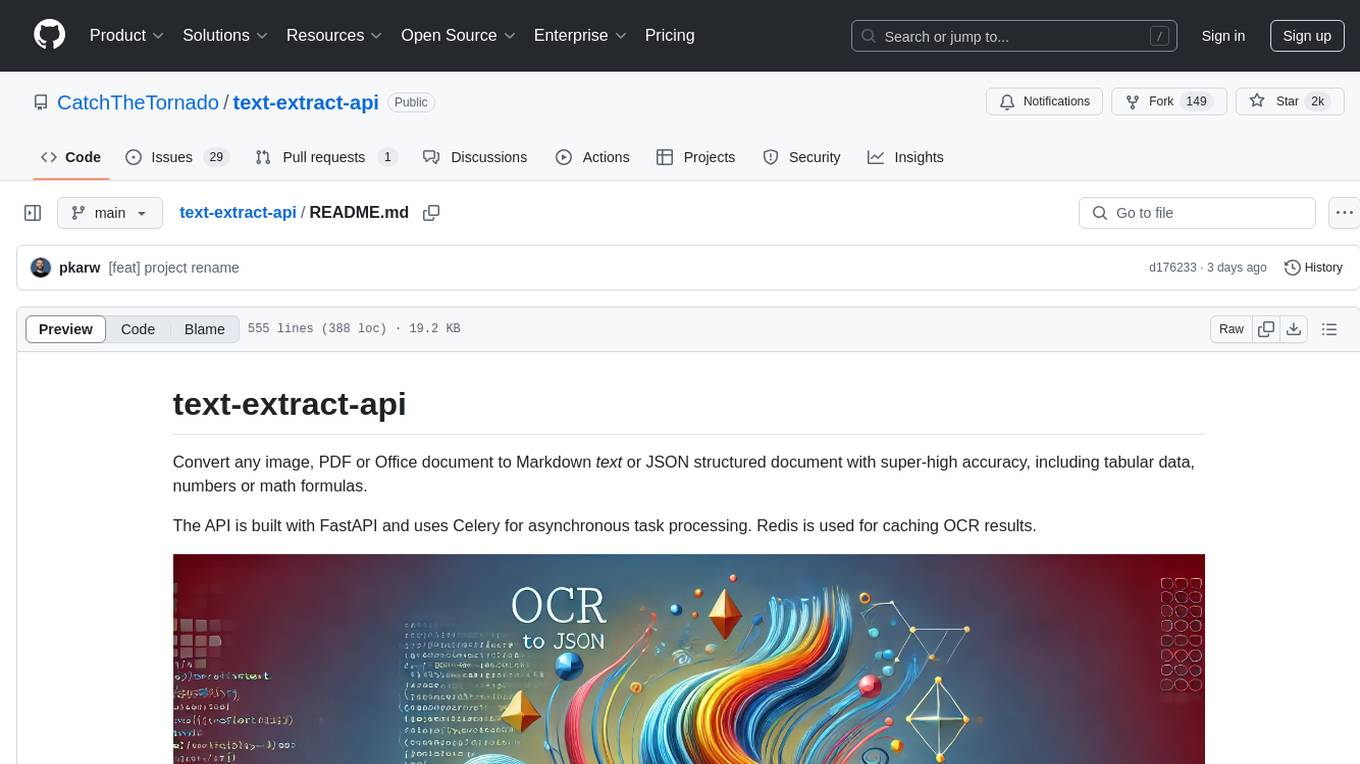

text-extract-api

The text-extract-api converts images, PDFs, or Office documents to Markdown text or JSON structured documents with high accuracy. It is built using FastAPI and utilizes Celery for asynchronous task processing, with Redis for caching OCR results. The tool provides features such as PDF/Office to Markdown and JSON conversion, improving OCR results with Llama, removing Personally Identifiable Information from documents, distributed queue processing, caching using Redis, switchable storage strategies, and a CLI tool for task management. Users can run the tool locally or on cloud services, with support for GPU processing. The tool also offers an online demo for testing purposes.

context7

Context7 is a powerful tool for analyzing and visualizing data in various formats. It provides a user-friendly interface for exploring datasets, generating insights, and creating interactive visualizations. With advanced features such as data filtering, aggregation, and customization, Context7 is suitable for both beginners and experienced data analysts. The tool supports a wide range of data sources and formats, making it versatile for different use cases. Whether you are working on exploratory data analysis, data visualization, or data storytelling, Context7 can help you uncover valuable insights and communicate your findings effectively.

For similar tasks

hass-ollama-conversation

The Ollama Conversation integration adds a conversation agent powered by Ollama in Home Assistant. This agent can be used in automations to query information provided by Home Assistant about your house, including areas, devices, and their states. Users can install the integration via HACS and configure settings such as API timeout, model selection, context size, maximum tokens, and other parameters to fine-tune the responses generated by the AI language model. Contributions to the project are welcome, and discussions can be held on the Home Assistant Community platform.

rclip

rclip is a command-line photo search tool powered by OpenAI's CLIP neural network. It lets users search for images with text queries, find similar images, and combine multiple queries. The tool extracts features from photos to enable searching and indexing, with options for previewing results in supported terminals or custom viewers. Users can install rclip on Linux, macOS, and Windows using different installation methods. The repository follows the Conventional Commits standard and welcomes contributions from the community.

honcho

Honcho is a platform for creating personalized AI agents and LLM-powered applications for end users. The repository is a monorepo containing the server/API for managing database interactions and storing application state, along with a Python SDK. It utilizes FastAPI for user context management and Poetry for dependency management. The API can be run using Docker or manually by setting environment variables. The client SDK can be installed using pip or Poetry. The project is open source and welcomes contributions, following a fork and PR workflow. Honcho is licensed under the AGPL-3.0 License.

core

OpenSumi is a framework designed to help users quickly build AI Native IDE products. It provides a set of tools and templates for creating Cloud IDEs, Desktop IDEs based on Electron, the CodeBlitz web IDE Framework, Lite Web IDEs in the browser, and Mini-App-like IDEs. The framework also offers documentation for users to refer to and a detailed guide on contributing to the project. OpenSumi encourages contributions from the community and provides a platform for users to report bugs, contribute code, or improve documentation. The project is licensed under the MIT license and contains third-party code under other open source licenses.

yolo-ios-app

The Ultralytics YOLO iOS App GitHub repository offers an advanced object detection tool leveraging YOLOv8 models for iOS devices. Users can transform their devices into intelligent detection tools to explore the world in a new and exciting way. The app provides real-time detection capabilities with multiple AI models to choose from, ranging from 'nano' to 'x-large'. Contributors are welcome to participate in this open-source project, and licensing options include AGPL-3.0 for open-source use and an Enterprise License for commercial integration. Users can easily set up the app by following the provided steps, including cloning the repository, adding YOLOv8 models, and running the app on their iOS devices.

PyAirbyte

PyAirbyte brings the power of Airbyte to every Python developer by providing a set of utilities to use Airbyte connectors in Python. It enables users to easily manage secrets, work with various connectors like GitHub, Shopify, and Postgres, and contribute to the project. PyAirbyte is not a replacement for Airbyte but complements it, supporting data orchestration frameworks like Airflow and Snowpark. Users can develop ETL pipelines and import connectors from local directories. The tool simplifies data integration tasks for Python developers.

md-agent

MD-Agent is an LLM-agent-based toolset for Molecular Dynamics. It uses Langchain and a collection of tools to set up and execute molecular dynamics simulations, particularly in OpenMM. The tool assists in environment setup, installation, and usage by providing detailed steps. It also requires API keys for certain functionalities, such as OpenAI and paper-qa for literature searches. Contributions to the project are welcome, with a detailed Contributor's Guide available for interested individuals.

For similar jobs

lollms-webui

LoLLMs WebUI (Lord of Large Language Multimodal Systems: One tool to rule them all) is a user-friendly interface for accessing and using various LLMs (Large Language Models) and other AI models for a wide range of tasks. With over 500 AI expert conditionings across diverse domains and more than 2500 fine-tuned models over multiple domains, LoLLMs WebUI provides an immediate resource for any problem, from car repair to coding assistance, legal matters, medical diagnosis, entertainment, and more. The UI offers light and dark modes, integration with the GitHub repository, and support for different personalities. It also provides thumb up/down rating; copying, editing, and removing messages; local database storage; and searching, exporting, and deleting multiple discussions.

Azure-Analytics-and-AI-Engagement

The Azure-Analytics-and-AI-Engagement repository provides packaged Industry Scenario DREAM Demos with ARM templates (containing a demo web application, Power BI reports, Synapse resources, AML Notebooks, etc.) that can be deployed in a customer’s subscription using the CAPE tool within a few hours. Partners can also deploy DREAM Demos in their own subscriptions using DPoC.

minio

MinIO is a high-performance object storage system released under the GNU Affero General Public License v3.0. It is API compatible with the Amazon S3 cloud storage service. Use MinIO to build high-performance infrastructure for machine learning, analytics, and application data workloads.

mage-ai

Mage is an open-source data pipeline tool for transforming and integrating data. It offers an easy developer experience, engineering best practices built-in, and data as a first-class citizen. Mage makes it easy to build, preview, and launch data pipelines, and provides observability and scaling capabilities. It supports data integrations, streaming pipelines, and dbt integration.

AiTreasureBox

AiTreasureBox is a versatile AI tool that provides a collection of pre-trained models and algorithms for various machine learning tasks. It simplifies the process of implementing AI solutions by offering ready-to-use components that can be easily integrated into projects. With AiTreasureBox, users can quickly prototype and deploy AI applications without the need for extensive knowledge in machine learning or deep learning. The tool covers a wide range of tasks such as image classification, text generation, sentiment analysis, object detection, and more. It is designed to be user-friendly and accessible to both beginners and experienced developers, making AI development more efficient and accessible to a wider audience.

tidb

TiDB is an open-source distributed SQL database that supports Hybrid Transactional and Analytical Processing (HTAP) workloads. It is MySQL compatible and features horizontal scalability, strong consistency, and high availability.

airbyte

Airbyte is an open-source data integration platform that makes it easy to move data from any source to any destination. With Airbyte, you can build and manage data pipelines without writing any code. Airbyte provides a library of pre-built connectors that make it easy to connect to popular data sources and destinations. You can also create your own connectors using Airbyte's no-code Connector Builder or low-code CDK. Airbyte is used by data engineers and analysts at companies of all sizes to build and manage their data pipelines.

labelbox-python

Labelbox is a data-centric AI platform for enterprises to develop, optimize, and use AI to solve problems and power new products and services. Enterprises use Labelbox to curate data, generate high-quality human feedback data for computer vision and LLMs, evaluate model performance, and automate tasks by combining AI and human-centric workflows. The academic & research community uses Labelbox for cutting-edge AI research.