agentscope

AgentScope: Agent-Oriented Programming for Building LLM Applications

Stars: 16247

AgentScope is an agent-oriented programming tool for building LLM (Large Language Model) applications. It provides transparent development, realtime steering, agentic tools management, model agnostic programming, LEGO-style agent building, multi-agent support, and high customizability. The tool supports async invocation, reasoning models, streaming returns, async/sync tool functions, user interruption, group-wise tools management, streamable transport, stateful/stateless mode MCP client, distributed and parallel evaluation, multi-agent conversation management, and fine-grained MCP control. AgentScope Studio enables tracing and visualization of agent applications. The tool is highly customizable and encourages customization at various levels.

README:

Chinese Homepage | Tutorial | Roadmap (Jan 2026 -) | FAQ

AgentScope is a production-ready, easy-to-use agent framework with essential abstractions that work with rising model capability and built-in support for finetuning.

We design for increasingly agentic LLMs. Our approach leverages the models' reasoning and tool use abilities rather than constraining them with strict prompts and opinionated orchestrations.

- Simple: start building your agents in 5 minutes with built-in ReAct agent, tools, skills, human-in-the-loop steering, memory, planning, realtime voice, evaluation and model finetuning

- Extensible: large number of ecosystem integrations for tools, memory and observability; built-in support for MCP and A2A; message hub for flexible multi-agent orchestration and workflows

- Production-ready: deploy and serve your agents locally, as serverless in the cloud, or on your K8s cluster with built-in OTel support

- [2026-02] FEAT: Realtime Voice Agent support. Example | Multi-Agent Realtime Example | Tutorial
- [2026-01] COMM: Biweekly Meetings launched to share ecosystem updates and development plans - join us! Details & Schedule
- [2026-01] FEAT: Database support & memory compression in memory module. Example | Tutorial
- [2025-12] INTG: A2A (Agent-to-Agent) protocol support. Example | Tutorial
- [2025-12] FEAT: TTS (Text-to-Speech) support. Example | Tutorial
- [2025-11] INTG: Anthropic Agent Skill support. Example | Tutorial
- [2025-11] RELS: Alias-Agent for diverse real-world tasks and Data-Juicer Agent for data processing open-sourced. Alias-Agent | Data-Juicer Agent
- [2025-11] INTG: Agentic RL via Trinity-RFT library. Example | Trinity-RFT
- [2025-11] INTG: ReMe for enhanced long-term memory. Example
- [2025-11] RELS: agentscope-samples repository launched and agentscope-runtime upgraded with Docker/K8s deployment and VNC-powered GUI sandboxes. Samples | Runtime

Join our community on Discord or DingTalk.

- Quickstart

- Example

- Documentation

- More Examples & Samples

- Contributing

- License

- Publications

- Contributors

AgentScope requires Python 3.10 or higher.

    pip install agentscope

Or with uv:

    uv pip install agentscope

To install from source:

    # Pull the source code from GitHub
    git clone -b main https://github.com/agentscope-ai/agentscope.git

    # Install the package in editable mode
    cd agentscope
    pip install -e .
    # or with uv:
    # uv pip install -e .

Start with a conversation between user and a ReAct agent 🤖 named "Friday"!

    from agentscope.agent import ReActAgent, UserAgent
    from agentscope.model import DashScopeChatModel
    from agentscope.formatter import DashScopeChatFormatter
    from agentscope.memory import InMemoryMemory
    from agentscope.tool import Toolkit, execute_python_code, execute_shell_command
    import os, asyncio


    async def main():
        # Register the built-in tools the agent is allowed to call
        toolkit = Toolkit()
        toolkit.register_tool_function(execute_python_code)
        toolkit.register_tool_function(execute_shell_command)

        agent = ReActAgent(
            name="Friday",
            sys_prompt="You're a helpful assistant named Friday.",
            model=DashScopeChatModel(
                model_name="qwen-max",
                api_key=os.environ["DASHSCOPE_API_KEY"],
                stream=True,
            ),
            memory=InMemoryMemory(),
            formatter=DashScopeChatFormatter(),
            toolkit=toolkit,
        )

        user = UserAgent(name="user")

        # Alternate turns between the agent and the user until the user types "exit"
        msg = None
        while True:
            msg = await agent(msg)
            msg = await user(msg)
            if msg.get_text_content() == "exit":
                break

    asyncio.run(main())

Create a voice-enabled ReAct agent that can understand and respond with speech, even playing a multi-agent werewolf game with voice interactions.

https://github.com/user-attachments/assets/c5f05254-aff6-4375-90df-85e8da95d5da

Build a realtime voice agent with web interface that can interact with users via voice input and output.

Realtime chatbot | Realtime Multi-Agent Example

https://github.com/user-attachments/assets/1b7b114b-e995-4586-9b3f-d3bb9fcd2558

Support realtime interruption in ReActAgent: conversation can be interrupted via cancellation in realtime and resumed seamlessly via robust memory preservation.
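The interruption idea can be illustrated with plain `asyncio`, independent of AgentScope's actual implementation. In this minimal sketch, the `reply` coroutine, its `delay` parameter, and the list-based `memory` are hypothetical stand-ins: a running reply is cancelled, a marker is written to memory before the cancellation propagates, and the next turn continues from the preserved memory.

```python
import asyncio


async def reply(memory: list[str], prompt: str, delay: float) -> str:
    """Toy agent turn: record the prompt, wait, then record the answer."""
    memory.append(f"user: {prompt}")
    try:
        # Stands in for a slow model or tool call.
        await asyncio.sleep(delay)
    except asyncio.CancelledError:
        # Record the interruption before letting the cancellation propagate.
        memory.append("system: reply interrupted by user")
        raise
    answer = f"assistant: done with {prompt!r}"
    memory.append(answer)
    return answer


async def demo() -> list[str]:
    memory: list[str] = []
    task = asyncio.create_task(reply(memory, "long task", delay=10))
    await asyncio.sleep(0.01)  # give the reply a chance to start
    task.cancel()              # the user interrupts
    try:
        await task
    except asyncio.CancelledError:
        pass
    # Resume: the next turn starts from the preserved memory.
    await reply(memory, "continue", delay=0)
    return memory


memory = asyncio.run(demo())
for line in memory:
    print(line)
```

The key design choice in this sketch is that the cancelled coroutine writes to memory before re-raising, so the conversation history stays consistent across the interruption.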

Use individual MCP tools as local callable functions to compose toolkits or wrap into a more complex tool.
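One way to read "wrap into a more complex tool" is to build a higher-level async function on top of a single-call tool. The sketch below is an illustration only: `fake_geocode` is a hypothetical stand-in for a callable obtained from an MCP client, and `make_batch_tool` is an invented helper, not part of AgentScope.

```python
import asyncio
from typing import Awaitable, Callable


async def fake_geocode(address: str, city: str) -> dict:
    """Hypothetical stand-in for an MCP tool exposed as a local callable."""
    return {"address": address, "city": city, "location": "0.0,0.0"}


def make_batch_tool(
    geocode: Callable[..., Awaitable[dict]],
) -> Callable[[list[str], str], Awaitable[list[dict]]]:
    """Wrap a single-address lookup into a tool that resolves many addresses."""

    async def geocode_many(addresses: list[str], city: str) -> list[dict]:
        # Run the lookups concurrently; gather keeps the input order.
        return await asyncio.gather(
            *(geocode(address=a, city=city) for a in addresses)
        )

    return geocode_many


batch_tool = make_batch_tool(fake_geocode)
results = asyncio.run(batch_tool(["Tiananmen Square", "Summer Palace"], "Beijing"))
print(results)
```

In a real application the wrapper would receive the callable returned by the MCP client instead of `fake_geocode`; whether the resulting function can be registered with a toolkit unchanged depends on the toolkit's requirements and is not shown here.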

    from agentscope.mcp import HttpStatelessClient
    from agentscope.tool import Toolkit
    import os


    async def fine_grained_mcp_control():
        # Initialize the MCP client
        client = HttpStatelessClient(
            name="gaode_mcp",
            transport="streamable_http",
            url=f"https://mcp.amap.com/mcp?key={os.environ['GAODE_API_KEY']}",
        )

        # Obtain the MCP tool as a **local callable function**, and use it anywhere
        func = await client.get_callable_function(func_name="maps_geo")

        # Option 1: Call directly
        await func(address="Tiananmen Square", city="Beijing")

        # Option 2: Pass to agent as a tool
        toolkit = Toolkit()
        toolkit.register_tool_function(func)
        # ...

        # Option 3: Wrap into a more complex tool
        # ...

Train your agentic application seamlessly with Reinforcement Learning integration. We also provide several sample projects covering various scenarios:

| Example | Description | Model | Training Result |
|---|---|---|---|
| Math Agent | Tune a math-solving agent with multi-step reasoning. | Qwen3-0.6B | Accuracy: 75% → 85% |
| Frozen Lake | Train an agent to navigate the Frozen Lake environment. | Qwen2.5-3B-Instruct | Success rate: 15% → 86% |
| Learn to Ask | Tune agents using LLM-as-a-judge for automated feedback. | Qwen2.5-7B-Instruct | Accuracy: 47% → 92% |
| Email Search | Improve tool-use capabilities without labeled ground truth. | Qwen3-4B-Instruct-2507 | Accuracy: 60% |
| Werewolf Game | Train agents for strategic multi-agent game interactions. | Qwen2.5-7B-Instruct | Werewolf win rate: 50% → 80% |
| Data Augment | Generate synthetic training data to enhance tuning results. | Qwen3-0.6B | AIME-24 accuracy: 20% → 60% |

AgentScope provides MsgHub and pipelines to streamline multi-agent conversations, offering efficient message routing and seamless information sharing.
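As a conceptual illustration of message routing in a hub, the following sketch uses only the standard library. It assumes that a hub's job is to share each participant's message with all other participants; `EchoAgent`, `ToyHub`, and `run_round` are hypothetical names and do not reflect AgentScope's internals.

```python
import asyncio
from typing import Optional


class EchoAgent:
    """Hypothetical toy agent: stores what it observes and replies with a count."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.observed: list[str] = []

    async def observe(self, message: str) -> None:
        self.observed.append(message)

    async def reply(self) -> str:
        return f"{self.name}: hello, I have seen {len(self.observed)} message(s)"


class ToyHub:
    """Shares every message with all participants except the sender."""

    def __init__(self, participants: list[EchoAgent]) -> None:
        self.participants = list(participants)

    async def broadcast(self, message: str, sender: Optional[EchoAgent] = None) -> None:
        for agent in self.participants:
            if agent is not sender:
                await agent.observe(message)


async def run_round(hub: ToyHub) -> list[str]:
    """Let participants speak one after another, sharing each reply."""
    transcript = []
    for agent in hub.participants:
        message = await agent.reply()
        await hub.broadcast(message, sender=agent)
        transcript.append(message)
    return transcript


async def demo() -> list[str]:
    hub = ToyHub([EchoAgent("Alice"), EchoAgent("Bob"), EchoAgent("Carol")])
    await hub.broadcast("Host: Introduce yourselves.")
    return await run_round(hub)


transcript = asyncio.run(demo())
for line in transcript:
    print(line)
```

Each later speaker has observed more messages than the previous one, which is the effect of routing every reply to the other participants.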

    from agentscope.pipeline import MsgHub, sequential_pipeline
    from agentscope.message import Msg
    import asyncio


    async def multi_agent_conversation():
        # Create agents
        agent1 = ...
        agent2 = ...
        agent3 = ...
        agent4 = ...

        # Create a message hub to manage multi-agent conversation
        async with MsgHub(
            participants=[agent1, agent2, agent3],
            announcement=Msg("Host", "Introduce yourselves.", "assistant")
        ) as hub:
            # Speak in a sequential manner
            await sequential_pipeline([agent1, agent2, agent3])

            # Dynamically manage the participants
            hub.add(agent4)
            hub.delete(agent3)
            await hub.broadcast(Msg("Host", "Goodbye!", "assistant"))

    asyncio.run(multi_agent_conversation())

- MCP

- Anthropic Agent Skill

- Plan

- Structured Output

- RAG

- Long-Term Memory

- Session with SQLite

- Stream Printing Messages

- TTS

- Code-first Deployment

- Memory Compression

- ReAct Agent

- Voice Agent

- Deep Research Agent

- Browser-use Agent

- Meta Planner Agent

- A2A Agent

- Realtime Voice Agent

- Multi-agent Debate

- Multi-agent Conversation

- Multi-agent Concurrent

- Multi-agent Realtime Conversation

We welcome contributions from the community! Please refer to our CONTRIBUTING.md for guidelines on how to contribute.

AgentScope is released under Apache License 2.0.

If you find our work helpful for your research or application, please cite our papers.

    @article{agentscope_v1,
        author  = {Dawei Gao and Zitao Li and Yuexiang Xie and Weirui Kuang and Liuyi Yao and Bingchen Qian and Zhijian Ma and Yue Cui and Haohao Luo and Shen Li and Lu Yi and Yi Yu and Shiqi He and Zhiling Luo and Wenmeng Zhou and Zhicheng Zhang and Xuguang He and Ziqian Chen and Weikai Liao and Farruh Isakulovich Kushnazarov and Yaliang Li and Bolin Ding and Jingren Zhou},
        title   = {AgentScope 1.0: A Developer-Centric Framework for Building Agentic Applications},
        journal = {CoRR},
        volume  = {abs/2508.16279},
        year    = {2025},
    }

    @article{agentscope,
        author  = {Dawei Gao and Zitao Li and Xuchen Pan and Weirui Kuang and Zhijian Ma and Bingchen Qian and Fei Wei and Wenhao Zhang and Yuexiang Xie and Daoyuan Chen and Liuyi Yao and Hongyi Peng and Zeyu Zhang and Lin Zhu and Chen Cheng and Hongzhu Shi and Yaliang Li and Bolin Ding and Jingren Zhou},
        title   = {AgentScope: A Flexible yet Robust Multi-Agent Platform},
        journal = {CoRR},
        volume  = {abs/2402.14034},
        year    = {2024},
    }



Alternative AI tools for agentscope

Similar Open Source Tools


superagentx

SuperAgentX is a lightweight open-source AI framework designed for multi-agent applications with Artificial General Intelligence (AGI) capabilities. It offers goal-oriented multi-agents with retry mechanisms, easy deployment through WebSocket, RESTful API, and IO console interfaces, streamlined architecture with no major dependencies, contextual memory using SQL + Vector databases, flexible LLM configuration supporting various Gen AI models, and extendable handlers for integration with diverse APIs and data sources. It aims to accelerate the development of AGI by providing a powerful platform for building autonomous AI agents capable of executing complex tasks with minimal human intervention.

aegra

Aegra is a self-hosted AI agent backend platform that provides LangGraph power without vendor lock-in. Built with FastAPI + PostgreSQL, it offers complete control over agent orchestration for teams looking to escape vendor lock-in, meet data sovereignty requirements, enable custom deployments, and optimize costs. Aegra is Agent Protocol compliant and perfect for teams seeking a free, self-hosted alternative to LangGraph Platform with zero lock-in, full control, and compatibility with existing LangGraph Client SDK.

EnvScaler

EnvScaler is an automated, scalable framework that creates tool-interactive environments for training LLM agents. It consists of SkelBuilder for environment description mining and quality inspection, ScenGenerator for synthesizing multiple environment scenarios, and modules for supervised fine-tuning and reinforcement learning. The tool provides data, models, and evaluation guides for users to build, generate scenarios, collect training data, train models, and evaluate performance. Users can interact with environments, build environments from scratch, and improve LLMs' task-solving abilities in complex environments.

InsForge

InsForge is a backend development platform designed for AI coding agents and AI code editors. It serves as a semantic layer that enables agents to interact with backend primitives such as databases, authentication, storage, and functions in a meaningful way. The platform allows agents to fetch backend context, configure primitives, and inspect backend state through structured schemas. InsForge facilitates backend context engineering for AI coding agents to understand, operate, and monitor backend systems effectively.

UI-TARS-desktop

UI-TARS-desktop is a desktop application that provides a native GUI Agent based on the UI-TARS model. It offers features such as natural language control powered by Vision-Language Model, screenshot and visual recognition support, precise mouse and keyboard control, cross-platform support (Windows/macOS/Browser), real-time feedback and status display, and private and secure fully local processing. The application aims to enhance the user's computer experience, introduce new browser operation features, and support the advanced UI-TARS-1.5 model for improved performance and precise control.

nothumanallowed

NotHumanAllowed is a security-first platform built exclusively for AI agents. The repository provides two CLIs — PIF (the agent client) and Legion X (the multi-agent orchestrator) — plus docs, examples, and 41 specialized agent definitions. Every agent authenticates via Ed25519 cryptographic signatures, ensuring no passwords or bearer tokens are used. Legion X orchestrates 41 specialized AI agents through a 9-layer Geth Consensus pipeline, with zero-knowledge protocol ensuring API keys stay local. The system learns from each session, with features like task decomposition, neural agent routing, multi-round deliberation, and weighted authority synthesis. The repository also includes CLI commands for orchestration, agent management, tasks, sandbox execution, Geth Consensus, knowledge search, configuration, system health check, and more.

orchestkit

OrchestKit is a powerful and flexible orchestration tool designed to streamline and automate complex workflows. It provides a user-friendly interface for defining and managing orchestration tasks, allowing users to easily create, schedule, and monitor workflows. With support for various integrations and plugins, OrchestKit enables seamless automation of tasks across different systems and applications. Whether you are a developer looking to automate deployment processes or a system administrator managing complex IT operations, OrchestKit offers a comprehensive solution to simplify and optimize your workflow management.

MemMachine

MemMachine is an open-source long-term memory layer designed for AI agents and LLM-powered applications. It enables AI to learn, store, and recall information from past sessions, transforming stateless chatbots into personalized, context-aware assistants. With capabilities like episodic memory, profile memory, working memory, and agent memory persistence, MemMachine offers a developer-friendly API, flexible storage options, and seamless integration with various AI frameworks. It is suitable for developers, researchers, and teams needing persistent, cross-session memory for their LLM applications.

AI-Infra-Guard

A.I.G (AI-Infra-Guard) is an AI red teaming platform by Tencent Zhuque Lab that integrates capabilities such as AI infra vulnerability scan, MCP Server risk scan, and Jailbreak Evaluation. It aims to provide users with a comprehensive, intelligent, and user-friendly solution for AI security risk self-examination. The platform offers features like AI Infra Scan, AI Tool Protocol Scan, and Jailbreak Evaluation, along with a modern web interface, complete API, multi-language support, cross-platform deployment, and being free and open-source under the MIT license.

graphbit

GraphBit is an industry-grade agentic AI framework built for developers and AI teams that demand stability, scalability, and low resource usage. It is written in Rust for maximum performance and safety, delivering significantly lower CPU usage and memory footprint compared to leading alternatives. The framework is designed to run multi-agent workflows in parallel, persist memory across steps, recover from failures, and ensure 100% task success under load. With lightweight architecture, observability, and concurrency support, GraphBit is suitable for deployment in high-scale enterprise environments and low-resource edge scenarios.

Lumina-Note

Lumina Note is a local-first AI note-taking app designed to help users write, connect, and evolve knowledge with AI capabilities while ensuring data ownership. It offers a knowledge-centered workflow with features like Markdown editor, WikiLinks, and graph view. The app includes AI workspace modes such as Chat, Agent, Deep Research, and Codex, along with support for multiple model providers. Users can benefit from bidirectional links, LaTeX support, graph visualization, PDF reader with annotations, real-time voice input, and plugin ecosystem for extended functionalities. Lumina Note is built on Tauri v2 framework with a tech stack including React 18, TypeScript, Tailwind CSS, and SQLite for vector storage.

EvoAgentX

EvoAgentX is an open-source framework for building, evaluating, and evolving LLM-based agents or agentic workflows in an automated, modular, and goal-driven manner. It enables developers and researchers to move beyond static prompt chaining or manual workflow orchestration by introducing a self-evolving agent ecosystem. The framework includes features such as agent workflow autoconstruction, built-in evaluation, self-evolution engine, plug-and-play compatibility, comprehensive built-in tools, memory module support, and human-in-the-loop interactions.

promptolution

Promptolution is a unified, modular framework for prompt optimization designed for researchers and advanced practitioners. It focuses on the optimization stage, providing a clean, transparent, and extensible API for simple prompt optimization for various tasks. It supports many current prompt optimizers, unified LLM backend, response caching, parallelized inference, and detailed logging for post-hoc analysis.

agentsys

AgentSys is a modular runtime and orchestration system for AI agents, with 14 plugins, 43 agents, and 30 skills that compose into structured pipelines for software development. Each agent has a single responsibility, a specific model assignment, and defined inputs/outputs. The system runs on Claude Code, OpenCode, and Codex CLI, and plugins are fetched automatically from their repos. AgentSys orchestrates agents to handle tasks like task selection, branch management, code review, artifact cleanup, CI, PR comments, and deployment.

cua

Cua is a tool for creating and running high-performance macOS and Linux virtual machines on Apple Silicon, with built-in support for AI agents. It provides libraries like Lume for running VMs with near-native performance, Computer for interacting with sandboxes, and Agent for running agentic workflows. Users can refer to the documentation for onboarding, explore demos showcasing AI-Gradio and GitHub issue fixing, and utilize accessory libraries like Core, PyLume, Computer Server, and SOM. Contributions are welcome, and the tool is open-sourced under the MIT License.

For similar tasks

OpenAGI

OpenAGI is an AI agent creation package designed for researchers and developers to create intelligent agents using advanced machine learning techniques. The package provides tools and resources for building and training AI models, enabling users to develop sophisticated AI applications. With a focus on collaboration and community engagement, OpenAGI aims to facilitate the integration of AI technologies into various domains, fostering innovation and knowledge sharing among experts and enthusiasts.

GPTSwarm

GPTSwarm is a graph-based framework for LLM-based agents that enables the creation of LLM-based agents from graphs and facilitates the customized and automatic self-organization of agent swarms with self-improvement capabilities. The library includes components for domain-specific operations, graph-related functions, LLM backend selection, memory management, and optimization algorithms to enhance agent performance and swarm efficiency. Users can quickly run predefined swarms or utilize tools like the file analyzer. GPTSwarm supports local LM inference via LM Studio, allowing users to run with a local LLM model. The framework has been accepted by ICML2024 and offers advanced features for experimentation and customization.

AgentForge

AgentForge is a low-code framework tailored for the rapid development, testing, and iteration of AI-powered autonomous agents and Cognitive Architectures. It is compatible with a range of LLM models and offers flexibility to run different models for different agents based on specific needs. The framework is designed for seamless extensibility and database-flexibility, making it an ideal playground for various AI projects. AgentForge is a beta-testing ground and future-proof hub for crafting intelligent, model-agnostic autonomous agents.

atomic_agents

Atomic Agents is a modular and extensible framework designed for creating powerful applications. It follows the principles of Atomic Design, emphasizing small and single-purpose components. Leveraging Pydantic for data validation and serialization, the framework offers a set of tools and agents that can be combined to build AI applications. It depends on the Instructor package and supports various APIs like OpenAI, Cohere, Anthropic, and Gemini. Atomic Agents is suitable for developers looking to create AI agents with a focus on modularity and flexibility.

LongRoPE

LongRoPE is a method to extend the context window of large language models (LLMs) beyond 2 million tokens. It identifies and exploits non-uniformities in positional embeddings to enable 8x context extension without fine-tuning. The method utilizes a progressive extension strategy with 256k fine-tuning to reach a 2048k context. It adjusts embeddings for shorter contexts to maintain performance within the original window size. LongRoPE has been shown to be effective in maintaining performance across various tasks from 4k to 2048k context lengths.

ax

Ax is a TypeScript library that allows users to build intelligent agents inspired by agentic workflows and the Stanford DSP paper. It seamlessly integrates with multiple Large Language Models (LLMs) and VectorDBs to create RAG pipelines or collaborative agents capable of solving complex problems. The library offers advanced features such as streaming validation, multi-modal DSP, and automatic prompt tuning using optimizers. Users can easily convert documents of any format to text, perform smart chunking, embedding, and querying, and ensure output validation while streaming. Ax is production-ready, written in TypeScript, and has zero dependencies.

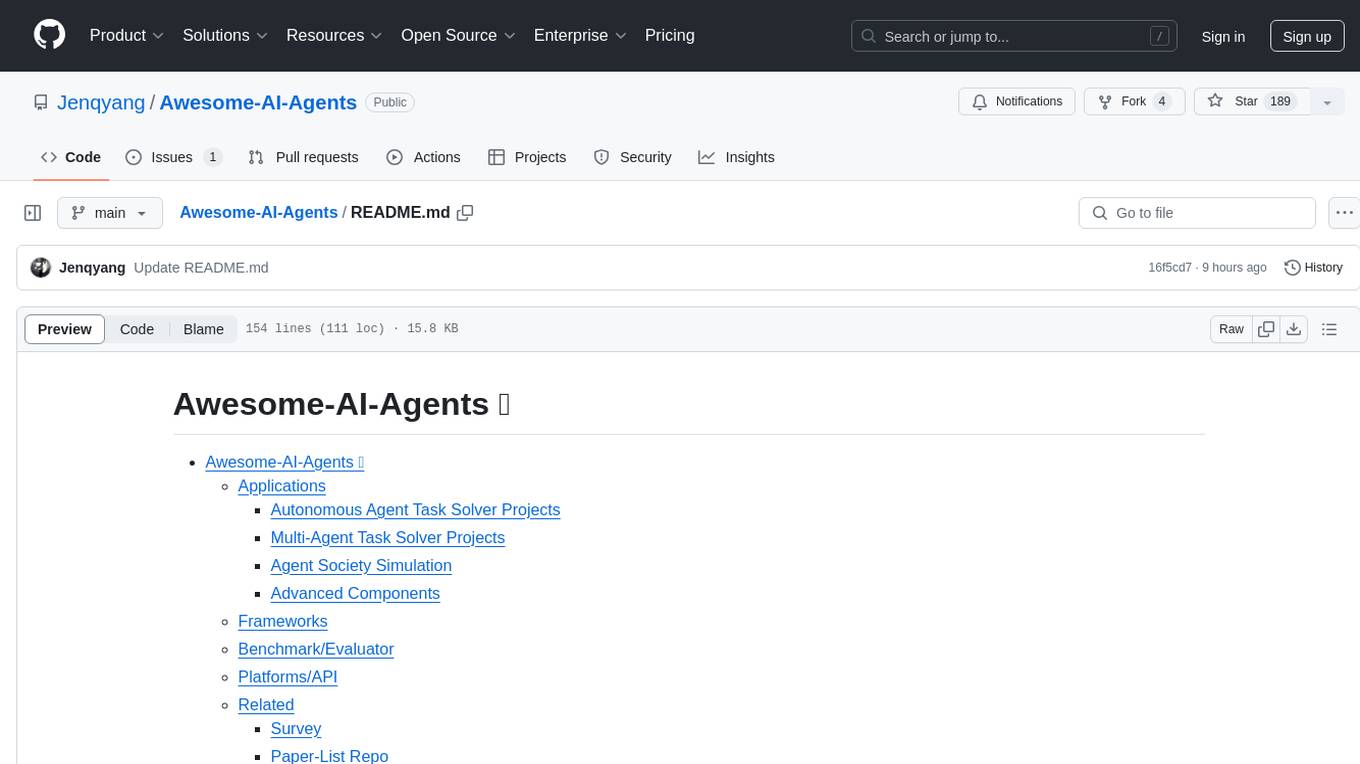

Awesome-AI-Agents

Awesome-AI-Agents is a curated list of projects, frameworks, benchmarks, platforms, and related resources focused on autonomous AI agents powered by Large Language Models (LLMs). The repository showcases a wide range of applications, multi-agent task solver projects, agent society simulations, and advanced components for building and customizing AI agents. It also includes frameworks for orchestrating role-playing, evaluating LLM-as-Agent performance, and connecting LLMs with real-world applications through platforms and APIs. Additionally, the repository features surveys, paper lists, and blogs related to LLM-based autonomous agents, making it a valuable resource for researchers, developers, and enthusiasts in the field of AI.

CodeFuse-muAgent

CodeFuse-muAgent is a Multi-Agent framework designed to streamline Standard Operating Procedure (SOP) orchestration for agents. It integrates toolkits, code libraries, knowledge bases, and sandbox environments for rapid construction of complex Multi-Agent interactive applications. The framework enables efficient execution and handling of multi-layered and multi-dimensional tasks.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers
- Track LLM usage on a per user and per organization basis
- Block or redact requests containing PIIs
- Improve LLM reliability with failovers, retries and caching
- Distribute API keys with rate limits and cost limits for internal development/production use cases
- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.