quivr-mobile

The Quivr React Native Client is a mobile application built using React Native that provides users with the ability to upload files and engage in chat conversations using the Quivr backend API.

Stars: 63

Quivr-Mobile is a React Native mobile application that allows users to upload files and engage in chat conversations using the Quivr backend API. It supports features like file upload and chatting with a language model about uploaded data. The project uses technologies like React Native, React Native Paper, and React Native Navigation. Users can follow the installation steps to set up the client and contribute to the project by opening issues or submitting pull requests following the existing coding style.

README:

This project has not been updated in the last year and does not work with the current version of quivr because of the huge api changes.

The Quivr React Native Client is a mobile application built using React Native that provides users with the ability to upload files and engage in chat conversations using the Quivr backend API.

https://github.com/iMADi-ARCH/quivr-mobile/assets/61308761/878da303-b056-4c14-a3c4-f29e7e375d45

- React Native (with expo)

- React Native Paper

- React Native Navigation

- File Upload: Users can easily upload files to the Quivr backend API using the client.

- Chat with your brain: Talk to a language model about your uploaded data

Follow the steps below to install and run the Quivr React Native Client:

- Clone the repository:

git clone https://github.com/iMADi-ARCH/quivr-mobile.git- Navigate to the project directory:

cd quivr-mobile- Install the required dependencies:

yarn install-

Set environment variables: Change the variables inside

.envrc.examplefile with your own.a. Option A: Using

direnv-

Install direnv - https://direnv.net/#getting-started

-

Copy

.envrc.exampleto.envrccp .envrc.example .envrc

-

Allow reading

.envrcdirenv allow .

b. Option B: Set system wide environment variables by copying the content of

.envrcand placing it at the bottom of your shell file e.g..bashrcor.zshrc -

-

Configure the backend API endpoints: Open the

config.tsfile and update theBACKEND_PORTandPROD_BACKEND_DOMAINconstants with the appropriate values corresponding to your Quivr backend. -

Run the application:

yarn expo startThen you can press a to run the app on an android emulator (given you already have Android studio setup)

Contributions to the Quivr React Native Client are welcome! If you encounter any issues or want to add features, please open an issue on the GitHub repository.

When contributing code, please follow the existing coding style and submit a pull request for review.

- Stan Girard for making such a wonderful api 🫶

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for quivr-mobile

Similar Open Source Tools

quivr-mobile

Quivr-Mobile is a React Native mobile application that allows users to upload files and engage in chat conversations using the Quivr backend API. It supports features like file upload and chatting with a language model about uploaded data. The project uses technologies like React Native, React Native Paper, and React Native Navigation. Users can follow the installation steps to set up the client and contribute to the project by opening issues or submitting pull requests following the existing coding style.

blinkid-react-native

BlinkID SDK wrapper for React Native provides best-in-class ID scanning software for cross-platform apps built with React Native. It offers complete guidance on installing and linking BlinkID library with iOS and Android apps. The SDK requires a valid license key for scanning, with offline data extraction. It supports React Native v0.71.2 and includes installation and linking instructions for iOS and Android. The repository also contains a script to create a sample React Native project and dependencies. Video tutorials demonstrate using documentVerificationOverlay and CombinedRecognizer for scanning various document types.

raggenie

RAGGENIE is a low-code RAG builder tool designed to simplify the creation of conversational AI applications. It offers out-of-the-box plugins for connecting to various data sources and building conversational AI on top of them, including integration with pre-built agents for actions. The tool is open-source under the MIT license, with a current focus on making it easy to build RAG applications and future plans for maintenance, monitoring, and transitioning applications from pilots to production.

bolt-python-ai-chatbot

The 'bolt-python-ai-chatbot' is a Slack chatbot app template that allows users to integrate AI-powered conversations into their Slack workspace. Users can interact with the bot in conversations and threads, send direct messages for private interactions, use commands to communicate with the bot, customize bot responses, and store user preferences. The app supports integration with Workflow Builder, custom language models, and different AI providers like OpenAI, Anthropic, and Google Cloud Vertex AI. Users can create user objects, manage user states, and select from various AI models for communication.

serverless-pdf-chat

The serverless-pdf-chat repository contains a sample application that allows users to ask natural language questions of any PDF document they upload. It leverages serverless services like Amazon Bedrock, AWS Lambda, and Amazon DynamoDB to provide text generation and analysis capabilities. The application architecture involves uploading a PDF document to an S3 bucket, extracting metadata, converting text to vectors, and using a LangChain to search for information related to user prompts. The application is not intended for production use and serves as a demonstration and educational tool.

chatflow

Chatflow is a tool that provides a chat interface for users to interact with systems using natural language. The engine understands user intent and executes commands for tasks, allowing easy navigation of complex websites/products. This approach enhances user experience, reduces training costs, and boosts productivity.

LLM_AppDev-HandsOn

This repository showcases how to build a simple LLM-based chatbot for answering questions based on documents using retrieval augmented generation (RAG) technique. It also provides guidance on deploying the chatbot using Podman or on the OpenShift Container Platform. The workshop associated with this repository introduces participants to LLMs & RAG concepts and demonstrates how to customize the chatbot for specific purposes. The software stack relies on open-source tools like streamlit, LlamaIndex, and local open LLMs via Ollama, making it accessible for GPU-constrained environments.

aiarena-web

aiarena-web is a website designed for running the aiarena.net infrastructure. It consists of different modules such as core functionality, web API endpoints, frontend templates, and a module for linking users to their Patreon accounts. The website serves as a platform for obtaining new matches, reporting results, featuring match replays, and connecting with Patreon supporters. The project is licensed under GPLv3 in 2019.

whisplay-ai-chatbot

Whisplay-AI-Chatbot is a pocket-sized AI chatbot device built using a Raspberry Pi Zero 2w. It features a PiSugar Whisplay HAT with an LCD screen, on-board speaker, and microphone. Users can interact with the chatbot by pressing a button, speaking, and receiving responses, similar to a futuristic walkie-talkie. The tool supports various functionalities such as adjusting volume autonomously, resetting conversation history, local ASR and TTS capabilities, image generation, and integration with APIs like Google Gemini and Grok. It also offers support for LLM8850 AI Accelerator for offline capabilities like ASR, TTS, and LLM API. The chatbot saves conversation history and generated images in a data folder, and users can customize the tool with different enclosure cases available for Pi02 and Pi5 models.

shipstation

ShipStation is an AI-based website and agents generation platform that optimizes landing page websites and generic connect-anything-to-anything services. It enables seamless communication between service providers and integration partners, offering features like user authentication, project management, code editing, payment integration, and real-time progress tracking. The project architecture includes server-side (Node.js) and client-side (React with Vite) components. Prerequisites include Node.js, npm or yarn, Anthropic API key, Supabase account, Tavily API key, and Razorpay account. Setup instructions involve cloning the repository, setting up Supabase, configuring environment variables, and starting the backend and frontend servers. Users can access the application through the browser, sign up or log in, create landing pages or portfolios, and get websites stored in an S3 bucket. Deployment to Heroku involves building the client project, committing changes, and pushing to the main branch. Contributions to the project are encouraged, and the license encourages doing good.

jaison-core

J.A.I.son is a Python project designed for generating responses using various components and applications. It requires specific plugins like STT, T2T, TTSG, and TTSC to function properly. Users can customize responses, voice, and configurations. The project provides a Discord bot, Twitch events and chat integration, and VTube Studio Animation Hotkeyer. It also offers features for managing conversation history, training AI models, and monitoring conversations.

ultimate-rvc

Ultimate RVC is an extension of AiCoverGen, offering new features and improvements for generating audio content using RVC. It is designed for users looking to integrate singing functionality into AI assistants/chatbots/vtubers, create character voices for songs or books, and train voice models. The tool provides easy setup, voice conversion enhancements, TTS functionality, voice model training suite, caching system, UI improvements, and support for custom configurations. It is available for local and Google Colab use, with a PyPI package for easy access. The tool also offers CLI usage and customization through environment variables.

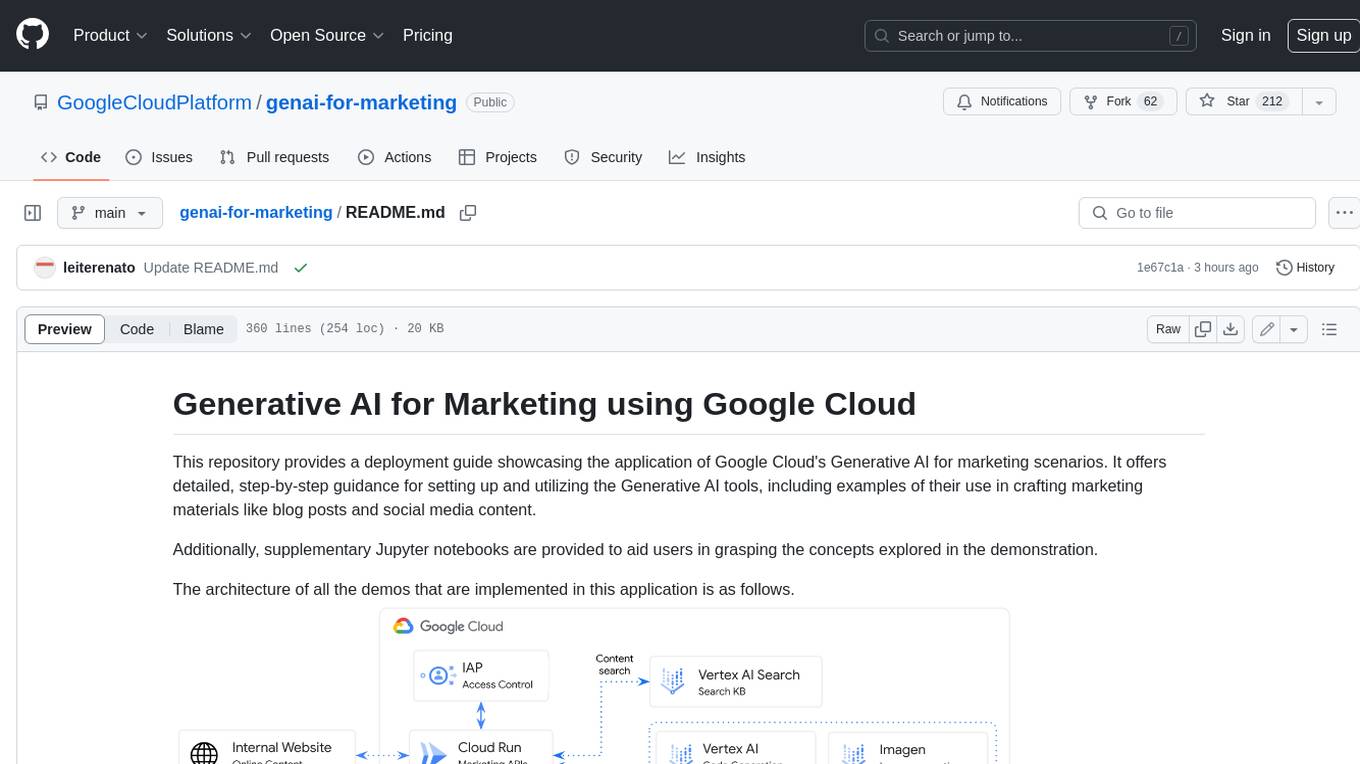

genai-for-marketing

This repository provides a deployment guide for utilizing Google Cloud's Generative AI tools in marketing scenarios. It includes step-by-step instructions, examples of crafting marketing materials, and supplementary Jupyter notebooks. The demos cover marketing insights, audience analysis, trendspotting, content search, content generation, and workspace integration. Users can access and visualize marketing data, analyze trends, improve search experience, and generate compelling content. The repository structure includes backend APIs, frontend code, sample notebooks, templates, and installation scripts.

geti-sdk

The Intel® Geti™ SDK is a python package that enables teams to rapidly develop AI models by easing the complexities of model development and enhancing collaboration between teams. It provides tools to interact with an Intel® Geti™ server via the REST API, allowing for project creation, downloading, uploading, deploying for local inference with OpenVINO, setting project and model configuration, launching and monitoring training jobs, and media upload and prediction. The SDK also includes tutorial-style Jupyter notebooks demonstrating its usage.

geti-sdk

The Intel® Geti™ SDK is a python package that enables teams to rapidly develop AI models by easing the complexities of model development and fostering collaboration. It provides tools to interact with an Intel® Geti™ server via the REST API, allowing for project creation, downloading, uploading, deploying for local inference with OpenVINO, configuration management, training job monitoring, media upload, and prediction. The repository also includes tutorial-style Jupyter notebooks demonstrating SDK usage.

vertex-ai-creative-studio

GenMedia Creative Studio is an application showcasing the capabilities of Google Cloud Vertex AI generative AI creative APIs. It includes features like Gemini for prompt rewriting and multimodal evaluation of generated images. The app is built with Mesop, a Python-based UI framework, enabling rapid development of web and internal apps. The Experimental folder contains stand-alone applications and upcoming features demonstrating cutting-edge generative AI capabilities, such as image generation, prompting techniques, and audio/video tools.

For similar tasks

NaLLM

The NaLLM project repository explores the synergies between Neo4j and Large Language Models (LLMs) through three primary use cases: Natural Language Interface to a Knowledge Graph, Creating a Knowledge Graph from Unstructured Data, and Generating a Report using static and LLM data. The repository contains backend and frontend code organized for easy navigation. It includes blog posts, a demo database, instructions for running demos, and guidelines for contributing. The project aims to showcase the potential of Neo4j and LLMs in various applications.

lobe-icons

Lobe Icons is a collection of popular AI / LLM Model Brand SVG logos and icons. It features lightweight and scalable icons designed with highly optimized scalable vector graphics (SVG) for optimal performance. The collection is tree-shakable, allowing users to import only the icons they need to reduce the overall bundle size of their projects. Lobe Icons has an active community of designers and developers who can contribute and seek support on platforms like GitHub and Discord. The repository supports a wide range of brands across different models, providers, and applications, with more brands continuously being added through contributions. Users can easily install Lobe UI with the provided commands and integrate it with NextJS for server-side rendering. Local development can be done using Github Codespaces or by cloning the repository. Contributions are welcome, and users can contribute code by checking out the GitHub Issues. The project is MIT licensed and maintained by LobeHub.

ibm-generative-ai

IBM Generative AI Python SDK is a tool designed for the Tech Preview program for IBM Foundation Models Studio. It brings IBM Generative AI (GenAI) into Python programs, offering various operations and types. Users can start a trial version or request a demo via the provided link. The SDK was recently rewritten and released under V2 in 2024, with a migration guide available. Contributors are welcome to participate in the open-source project by contributing documentation, tests, bug fixes, and new functionality.

ollama4j

Ollama4j is a Java library that serves as a wrapper or binding for the Ollama server. It facilitates communication with the Ollama server and provides models for deployment. The tool requires Java 11 or higher and can be installed locally or via Docker. Users can integrate Ollama4j into Maven projects by adding the specified dependency. The tool offers API specifications and supports various development tasks such as building, running unit tests, and integration tests. Releases are automated through GitHub Actions CI workflow. Areas of improvement include adhering to Java naming conventions, updating deprecated code, implementing logging, using lombok, and enhancing request body creation. Contributions to the project are encouraged, whether reporting bugs, suggesting enhancements, or contributing code.

openkore

OpenKore is a custom client and intelligent automated assistant for Ragnarok Online. It is a free, open source, and cross-platform program (Linux, Windows, and MacOS are supported). To run OpenKore, you need to download and extract it or clone the repository using Git. Configure OpenKore according to the documentation and run openkore.pl to start. The tool provides a FAQ section for troubleshooting, guidelines for reporting issues, and information about botting status on official servers. OpenKore is developed by a global team, and contributions are welcome through pull requests. Various community resources are available for support and communication. Users are advised to comply with the GNU General Public License when using and distributing the software.

quivr-mobile

Quivr-Mobile is a React Native mobile application that allows users to upload files and engage in chat conversations using the Quivr backend API. It supports features like file upload and chatting with a language model about uploaded data. The project uses technologies like React Native, React Native Paper, and React Native Navigation. Users can follow the installation steps to set up the client and contribute to the project by opening issues or submitting pull requests following the existing coding style.

python-projects-2024

Welcome to `OPEN ODYSSEY 1.0` - an Open-source extravaganza for Python and AI/ML Projects. Collaborating with MLH (Major League Hacking), this repository welcomes contributions in the form of fixing outstanding issues, submitting bug reports or new feature requests, adding new projects, implementing new models, and encouraging creativity. Follow the instructions to contribute by forking the repository, cloning it to your PC, creating a new folder for your project, and making a pull request. The repository also features a special Leaderboard for top contributors and offers certificates for all participants and mentors. Follow `OPEN ODYSSEY 1.0` on social media for swift approval of your quest.

evalite

Evalite is a TypeScript-native, local-first tool designed for testing LLM-powered apps. It allows users to view documentation and join a Discord community. To contribute, users need to create a .env file with an OPENAI_API_KEY, run the dev command to check types, run tests, and start the UI dev server. Additionally, users can run 'evalite watch' on examples in the 'packages/example' directory. Note that running 'pnpm build' in the root and 'npm link' in 'packages/evalite' may be necessary for the global 'evalite' command to work.

For similar jobs

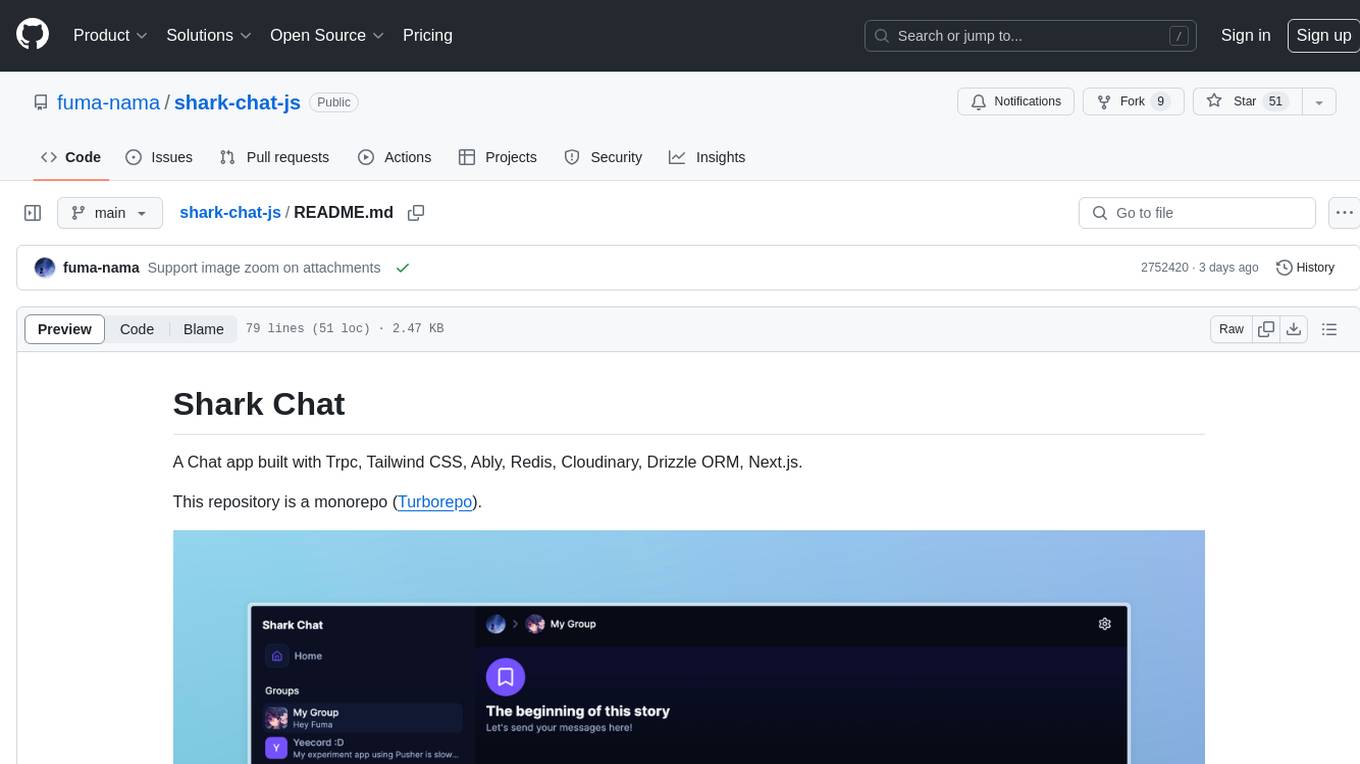

shark-chat-js

Shark Chat is a feature-rich chat application built with Trpc, Tailwind CSS, Ably, Redis, Cloudinary, Drizzle ORM, and Next.js. It allows users to create, update, and delete chat groups, send messages with markdown support, reference messages, embed links, send images/files, have direct messages, manage group members, upload images, receive notifications, use AI-powered features, delete accounts, and switch between light and dark modes. The project is 100% TypeScript and can be played with online or locally after setting up various third-party services.

quivr-mobile

Quivr-Mobile is a React Native mobile application that allows users to upload files and engage in chat conversations using the Quivr backend API. It supports features like file upload and chatting with a language model about uploaded data. The project uses technologies like React Native, React Native Paper, and React Native Navigation. Users can follow the installation steps to set up the client and contribute to the project by opening issues or submitting pull requests following the existing coding style.

jiwu-mall-chat-tauri

Jiwu Chat Tauri APP is a desktop chat application based on Nuxt3 + Tauri + Element Plus framework. It provides a beautiful user interface with integrated chat and social functions. It also supports AI shopping chat and global dark mode. Users can engage in real-time chat, share updates, and interact with AI customer service through this application.

nonebot-plugin-marshoai

nonebot-plugin-marshoai is a chatbot plugin that utilizes the OpenAI standard format API, such as the GitHub Models API, to enable chat functionalities. The plugin features the character Marsho, a cute cat girl, for engaging conversations. It supports OneBot adapters and GitHub Models API, with limited validation for other adapters. Developed by Melobot.

OpenClawChineseTranslation

OpenClaw Chinese Translation is a localization project that provides a fully Chinese interface for the OpenClaw open-source personal AI assistant platform. It allows users to interact with their AI assistant through chat applications like WhatsApp, Telegram, and Discord to manage daily tasks such as emails, calendars, and files. The project includes both CLI command-line and dashboard web interface fully translated into Chinese.

Protofy

Protofy is a full-stack, batteries-included low-code enabled web/app and IoT system with an API system and real-time messaging. It is based on Protofy (protoflow + visualui + protolib + protodevices) + Expo + Next.js + Tamagui + Solito + Express + Aedes + Redbird + Many other amazing packages. Protofy can be used to fast prototype Apps, webs, IoT systems, automations, or APIs. It is a ultra-extensible CMS with supercharged capabilities, mobile support, and IoT support (esp32 thanks to esphome).

react-native-vision-camera

VisionCamera is a powerful, high-performance Camera library for React Native. It features Photo and Video capture, QR/Barcode scanner, Customizable devices and multi-cameras ("fish-eye" zoom), Customizable resolutions and aspect-ratios (4k/8k images), Customizable FPS (30..240 FPS), Frame Processors (JS worklets to run facial recognition, AI object detection, realtime video chats, ...), Smooth zooming (Reanimated), Fast pause and resume, HDR & Night modes, Custom C++/GPU accelerated video pipeline (OpenGL).

dev-conf-replay

This repository contains information about various IT seminars and developer conferences in South Korea, allowing users to watch replays of past events. It covers a wide range of topics such as AI, big data, cloud, infrastructure, devops, blockchain, mobility, games, security, mobile development, frontend, programming languages, open source, education, and community events. Users can explore upcoming and past events, view related YouTube channels, and access additional resources like free programming ebooks and data structures and algorithms tutorials.