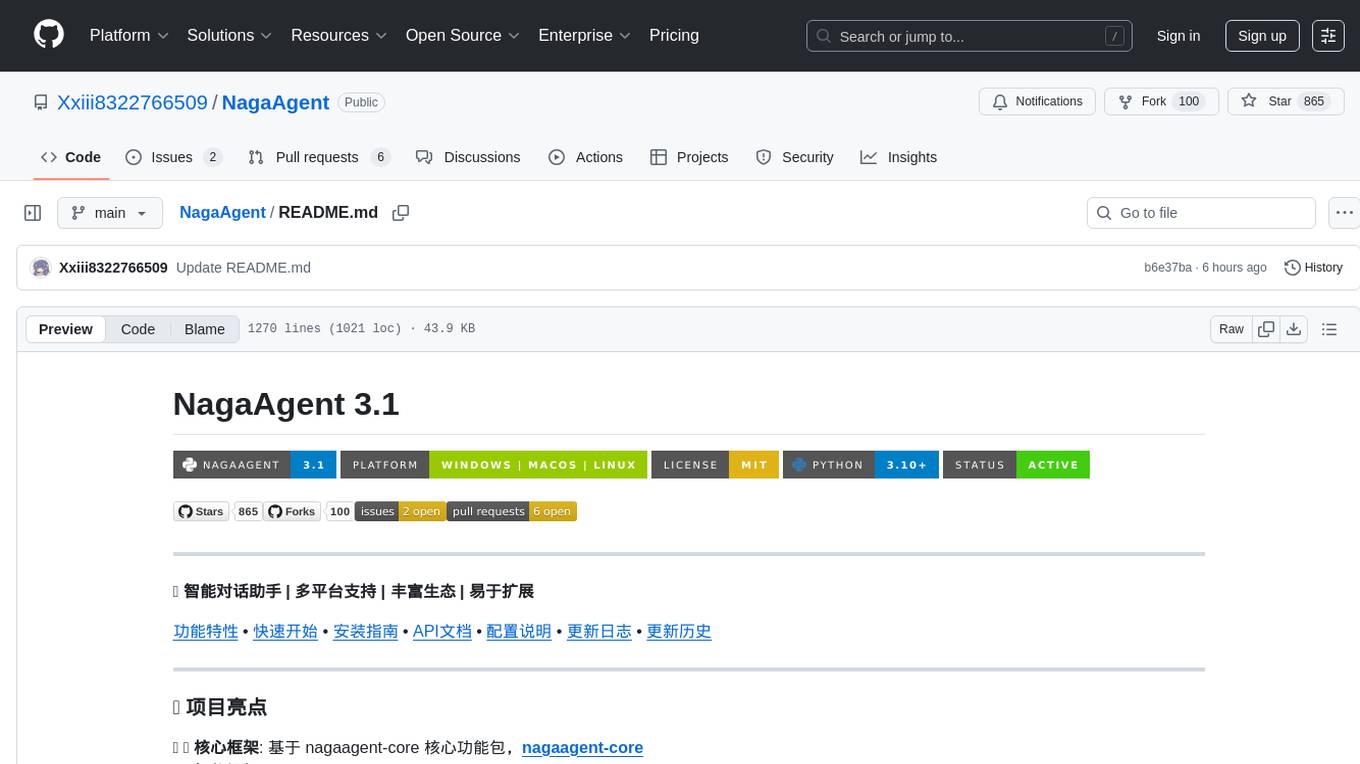

AgentChat

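AgentChat is an LLM-based agent communication platform with a built-in default Agent and support for user-defined Agents. Through multi-turn dialogue and task collaboration, Agents can understand and help complete complex tasks. The project integrates LangChain, Function Call, the MCP protocol, RAG, Memory, Milvus, and ElasticSearch for efficient knowledge retrieval and tool invocation, and uses FastAPI for the high-performance backend service.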

Stars: 363

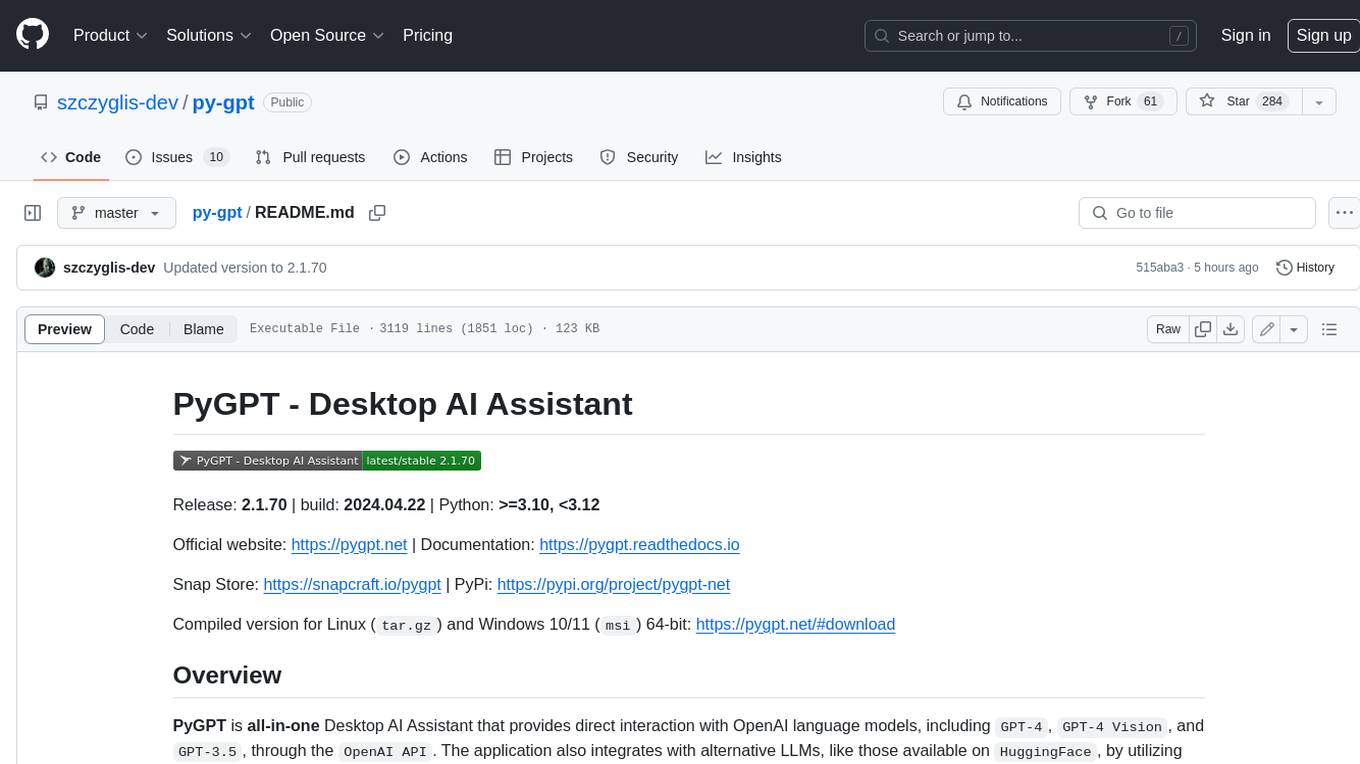

AgentChat is a modern intelligent conversation system built on large language models, providing rich AI conversation features. The system adopts a front-end and back-end separation architecture, supporting various AI models, knowledge base retrieval, tool invocation, MCP server integration, and other advanced functions. It features multiple model support, intelligent agents supporting collaboration, precise knowledge retrieval with RAG technology, a tool ecosystem with customizable extensions, MCP integration supporting Model Context Protocol servers, real-time conversation with smooth user experience, and a modern interface based on Vue 3 and Element Plus.

README:

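AgentChat is a modern intelligent conversation system built on large language models that offers a rich set of AI conversation features. It uses a decoupled front-end/back-end architecture and supports multiple AI models, knowledge base retrieval, tool invocation, MCP server integration, and other advanced features.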

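- 🤖 Multi-model support: integrates mainstream large language models such as OpenAI, DeepSeek, and Qwen
- 🧠 Intelligent Agents: supports multi-Agent collaboration with reasoning and decision-making capabilities
- 📚 Knowledge base retrieval: RAG provides precise knowledge retrieval and question answering
- 🔧 Tool ecosystem: ships with a variety of practical tools and supports custom extensions
- 🌐 MCP integration: supports Model Context Protocol servers
- 💬 Real-time conversation: streaming responses for a smooth chat experience
- 🎨 Modern interface: a polished UI built on Vue 3 and Element Plus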
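To make the knowledge base retrieval bullet more concrete, here is a minimal sketch of similarity-based retrieval. The toy bag-of-words `embed` function, the sample documents, and the prompt string are all assumptions for illustration; AgentChat itself relies on real embedding models and a vector database, and this is not its actual code.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding (assumption): a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Return the top_k documents ranked by similarity to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:top_k]


# Hypothetical knowledge base entries.
docs = [
    "FastAPI serves the backend API",
    "Milvus stores vector embeddings",
    "Vue 3 renders the frontend interface",
]
context = retrieve("where are vector embeddings stored", docs, top_k=1)
# The retrieved text is then placed into the prompt sent to the model.
prompt = f"Answer using this context: {context[0]}"
```

The retrieval step only selects context; answer quality still depends on the model that receives the prompt.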

🎨 Interface preview - experience a modern intelligent conversation system

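- Real-time weather lookup and forecasts
- AI-driven image generation service
- Agents on the platform support multi-round tool calls: when tool C depends on the result of tool B, and tool B depends on the result of tool A, the tools are invoked in the order A --> B --> C
- Supports the Model Context Protocol; custom MCP services can be uploaded
- Intelligent knowledge management that gives Agents rich external knowledge
- Intelligent parsing of PDF, Markdown, Docx, Txt, and other formats
- A rich built-in tool set that keeps growing
- Multi-model support with flexible configuration of different AI services
- Get the latest AI news, with support for generating image-based daily reports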
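The multi-round tool call order described above (A before B before C) can be sketched as a dependency-ordered run. The three tool functions and the `dependencies` mapping are hypothetical stand-ins; in a real agent the model decides each call step by step, so this only illustrates the ordering, not AgentChat's implementation.

```python
from graphlib import TopologicalSorter
from typing import Callable


def run_tools(
    tools: dict[str, Callable[..., str]],
    dependencies: dict[str, list[str]],
) -> tuple[list[str], dict[str, str]]:
    """Run tools so that each one executes after the tools it depends on."""
    # TopologicalSorter takes a mapping of node -> predecessors.
    order = list(TopologicalSorter(dependencies).static_order())
    results: dict[str, str] = {}
    for name in order:
        # Feed each tool the results of its dependencies, in declared order.
        inputs = [results[dep] for dep in dependencies.get(name, [])]
        results[name] = tools[name](*inputs)
    return order, results


# Hypothetical tools: C needs B's result, and B needs A's result.
tools = {
    "A": lambda: "Beijing",
    "B": lambda city: f"weather in {city}: sunny",
    "C": lambda report: f"email sent: {report}",
}
dependencies = {"C": ["B"], "B": ["A"], "A": []}

order, results = run_tools(tools, dependencies)
```

A cyclic dependency makes `static_order()` raise `graphlib.CycleError`, which is a reasonable place to stop and report the problem.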

⚠️ Starting with AgentChat v2.2.0, LangChain has been upgraded to 1.0, and the code has changed substantially!

| 🔄 Version | 📦 LangChain version | 🔧 Compatibility | 📝 Notes |
|---|---|---|---|
| v2.1.x and below | 0.x | Uses the legacy LangChain API | |
| v2.2.0+ | 1.0+ | ✅ Latest version | Major update with significant API changes |

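Upgrade notes:

- 🔄 LangChain 1.0 introduces major API changes
- 📚 The configuration of some tools and Agents has been updated
- 🛠️ Check the migration guide for the detailed changes
- 💡 New users should start directly with the latest version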
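The compatibility table above can be expressed as a small check. This is a sketch under assumptions: the function names are hypothetical, the PyPI package name `langchain` is assumed, and the version rule is only what the table states (v2.2.0+ expects LangChain 1.0+, earlier releases expect 0.x).

```python
from importlib import metadata
from typing import Optional


def required_langchain_major(agentchat_version: str) -> int:
    """Return the LangChain major version implied by the compatibility table."""
    # Keep only "major.minor", so strings like "v2.1.x" still parse.
    major, minor = (int(p) for p in agentchat_version.lstrip("v").split(".")[:2])
    return 1 if (major, minor) >= (2, 2) else 0


def installed_major(package: str = "langchain") -> Optional[int]:
    """Return the installed major version of a package, or None if it is absent."""
    try:
        return int(metadata.version(package).split(".")[0])
    except metadata.PackageNotFoundError:
        return None


def is_compatible(agentchat_version: str) -> bool:
    """True when the installed LangChain matches what this release expects."""
    installed = installed_major()
    if installed is None:
        return False
    expected = required_langchain_major(agentchat_version)
    # v2.2.0+ needs 1.0 or newer; older releases need the 0.x series.
    return installed >= 1 if expected == 1 else installed == 0
```

Running `is_compatible("v2.2.0")` before starting the backend gives an early warning when an old environment is reused after an upgrade.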

⭐ Comprehensive AI services - from conversation to tools, from knowledge to decision-making

- Model Context Protocol integration
- Secure authentication and access control
- Modern technical architecture

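| 🌟 Feature | 📝 Description | 🔧 Technology |
|---|---|---|
| Streaming responses | Content is generated in real time for a better user experience | Server-Sent Events |
| Vector retrieval | Semantic-level knowledge retrieval | ChromaDB + Embedding |
| Async processing | High-concurrency task handling | FastAPI + AsyncIO |
| Modular design | Loosely coupled architecture that is easy to extend | Microservice architecture |
| Smart caching | Redis caching for faster responses | Redis + smart caching strategy |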
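As an illustration of the streaming row in the table above, the sketch below wraps text chunks in the Server-Sent Events wire format. The `{"content": ...}` payload shape and the `[DONE]` end marker are assumptions borrowed from common LLM APIs, not something this README specifies.

```python
import json
from typing import Iterable, Iterator


def sse_events(chunks: Iterable[str]) -> Iterator[str]:
    """Wrap text chunks as Server-Sent Events frames."""
    for chunk in chunks:
        # Each SSE frame is a "data:" line terminated by a blank line.
        payload = json.dumps({"content": chunk}, ensure_ascii=False)
        yield f"data: {payload}\n\n"
    # Assumed end-of-stream marker so the client knows when to stop reading.
    yield "data: [DONE]\n\n"


frames = list(sse_events(["Hel", "lo"]))
```

In a FastAPI application, a generator like this would typically be returned through `StreamingResponse` with `media_type="text/event-stream"`, so the browser can render tokens as they arrive.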

Backend:

- Framework: FastAPI (Python 3.12+)
- AI integration: LangChain, OpenAI, Anthropic
- Databases: MySQL 8.0, Redis 7.0
- Vector databases: ChromaDB, Milvus
- Search engine: Elasticsearch
- Document processing: PyMuPDF, Unstructured
- Async tasks: Celery
- Deployment: Docker, Gunicorn, Uvicorn

Frontend:

- Framework: Vue 3.4+ (Composition API)
- UI components: Element Plus
- State management: Pinia
- Routing: Vue Router 4
- Build tool: Vite 5
- Language: TypeScript
- Styling: SCSS
- Markdown: md-editor-v3

Development tools:

- Package management: Poetry (backend), npm (frontend)
- Code formatting: Black, Prettier
- Type checking: mypy, TypeScript
- Containerization: Docker, Docker Compose

🏗️ Complete project architecture - modular design with clear separation of responsibilities

🔍 Full project structure:

AgentChat/  # 🏠 Project root
├── 📄 README.md  # 📖 Project documentation
├── 📄 LICENSE  # ⚖️ Open-source license
├── 📄 .gitignore  # 🚫 Git ignore rules
├── 📄 pyproject.toml  # 🐍 Python project configuration
├── 📄 requirements.txt  # 📦 Python dependency list
│
├── 📁 .vscode/  # 🔧 VSCode editor settings
├── 📁 .idea/  # 💡 JetBrains IDE settings
│
├── 📁 docs/  # 📚 Project documentation directory
│   ├── 📄 API_Documentation_v3.0.md  # 🔄 Latest API documentation
│   ├── 📄 API_Documentation_v2.0.md  # 📋 v2.0 API documentation
│   └── 📄 API_Documentation_v1.0.md  # 📝 v1.0 API documentation
│
├── 📁 docker/  # 🐳 Containerization configuration
│   ├── 📄 Dockerfile  # 🐳 Docker image build file
│   └── 📄 docker-compose.yml  # 🔧 Docker Compose configuration
│
└── 📁 src/  # 💻 Source code directory

    ├── 📁 backend/  # 🔧 Backend service
    │   ├── 📁 chroma_db/  # 🗄️ ChromaDB vector database
    │   └── 📁 agentchat/  # 🤖 Core backend application
    │       ├── 📄 __init__.py  # 🐍 Python package initializer
    │       ├── 📄 main.py  # 🚀 FastAPI application entry point
    │       ├── 📄 settings.py  # ⚙️ Application settings
    │       ├── 📄 config.yaml  # 📋 YAML configuration file
    │       │
    │       ├── 📁 api/  # 🌐 API routing layer
    │       │   ├── 📄 __init__.py
    │       │   ├── 📄 router.py  # 🔀 Main router configuration
    │       │   ├── 📄 JWT.py  # 🔐 JWT authentication handling
    │       │   ├── 📁 v1/  # 📊 v1 API endpoints
    │       │   ├── 📁 services/  # 🔧 Service-layer APIs
    │       │   └── 📁 errcode/  # ❌ Error code definitions
    │       │
    │       ├── 📁 core/  # 🏗️ Core functionality
    │       │   ├── 📄 __init__.py
    │       │   └── 📁 models/  # 🧠 AI model management
    │       │
    │       ├── 📁 database/  # 🗃️ Database layer
    │       │   ├── 📄 __init__.py  # 🔗 Database connection setup
    │       │   ├── 📄 init_data.py  # 🏗️ Database initialization script
    │       │   ├── 📁 models/  # 📊 Data model definitions
    │       │   └── 📁 dao/  # 💾 Data access objects
    │       │
    │       ├── 📁 services/  # 🎯 Business service layer
    │       │   ├── 📄 __init__.py
    │       │   ├── 📄 retrieval.py  # 🔍 Information retrieval service
    │       │   ├── 📄 rag_handler.py  # 📚 RAG handling service
    │       │   ├── 📄 aliyun_oss.py  # ☁️ Alibaba Cloud OSS service
    │       │   ├── 📄 redis.py  # 💾 Redis cache service
    │       │   ├── 📁 rag/  # 📖 Retrieval-augmented generation (RAG)
    │       │   ├── 📁 mars/  # 🚀 Mars agent service
    │       │   ├── 📁 mcp/  # 🔌 MCP protocol service
    │       │   ├── 📁 mcp_agent/  # 🤖 MCP Agent service
    │       │   ├── 📁 mcp_openai/  # 🧠 MCP OpenAI integration
    │       │   ├── 📁 deepsearch/  # 🕵️ Deep search service
    │       │   ├── 📁 transform_paper/  # 📄 Paper conversion service
    │       │   ├── 📁 autobuild/  # 🏗️ Auto-build service
    │       │   └── 📁 rewrite/  # ✏️ Content rewriting service
    │       │
    │       ├── 📁 tools/  # 🛠️ Tool integrations
    │       │   ├── 📄 __init__.py  # 🧰 Tool registration and management
    │       │   ├── 📁 arxiv/  # 📚 ArXiv paper tool
    │       │   ├── 📁 delivery/  # 📦 Parcel tracking tool
    │       │   ├── 📁 web_search/  # 🔍 Web search tool
    │       │   ├── 📁 get_weather/  # 🌤️ Weather lookup tool
    │       │   ├── 📁 send_email/  # 📧 Email sending tool
    │       │   ├── 📁 text2image/  # 🎨 Text-to-image tool
    │       │   ├── 📁 image2text/  # 👁️ Image-to-text tool
    │       │   ├── 📁 convert_to_pdf/  # 📄 PDF conversion tool
    │       │   ├── 📁 convert_to_docx/  # 📝 Word conversion tool
    │       │   ├── 📁 resume_optimizer/  # 📋 Resume optimization tool
    │       │   ├── 📁 rag_data/  # 📊 RAG data processing tool
    │       │   └── 📁 crawl_web/  # 🕷️ Web crawling tool
    │       │
    │       ├── 📁 mcp_servers/  # 🖥️ MCP server collection
    │       ├── 📁 prompts/  # 💬 Prompt template library
    │       ├── 📁 config/  # ⚙️ Configuration directory
    │       ├── 📁 schema/  # 📋 Data schema definitions
    │       ├── 📁 data/  # 💾 Data storage directory
    │       ├── 📁 utils/  # 🧰 Shared utility functions
    │       └── 📁 test/  # 🧪 Test code directory
    │

    └── 📁 frontend/  # 🎨 Frontend application
        ├── 📄 package.json  # 📦 Node.js project configuration
        ├── 📄 package-lock.json  # 🔒 Dependency version lock
        ├── 📄 tsconfig.json  # 🔧 TypeScript configuration
        ├── 📄 tsconfig.app.json  # 📱 App TypeScript configuration
        ├── 📄 tsconfig.node.json  # 🔧 Node environment TypeScript configuration
        ├── 📄 vite.config.ts  # ⚡ Vite build configuration
        ├── 📄 index.html  # 🌐 HTML entry file
        ├── 📄 .gitignore  # 🚫 Frontend Git ignore rules
        ├── 📄 README.md  # 📖 Frontend documentation
        ├── 📄 DEBUGGING_GUIDE.md  # 🐛 Debugging guide
        ├── 📄 auto-imports.d.ts  # 🔄 Auto-import type declarations
        ├── 📄 components.d.ts  # 🧩 Component type declarations
        │
        ├── 📁 public/  # 🌍 Static assets directory
        │
        └── 📁 src/  # 💻 Frontend source code
            ├── 📄 main.ts  # 🚀 Vue application entry point
            ├── 📄 App.vue  # 🏠 Root component
            ├── 📄 style.css  # 🎨 Global styles
            ├── 📄 type.ts  # 📋 TypeScript type definitions
            ├── 📄 vite-env.d.ts  # 🔧 Vite environment type declarations
            │
            ├── 📁 components/  # 🧩 Reusable component library
            │   ├── 📁 agentCard/  # 🤖 Agent card component
            │   ├── 📁 commonCard/  # 🃏 Generic card component
            │   ├── 📁 dialog/  # 💬 Dialog component
            │   ├── 📁 drawer/  # 📜 Drawer component
            │   └── 📁 historyCard/  # 📜 History card
            │
            ├── 📁 pages/  # 📄 Page components
            │   ├── 📄 index.vue  # 🏠 Home page
            │   ├── 📁 agent/  # 🤖 Agent management page
            │   ├── 📁 configuration/  # ⚙️ Configuration page
            │   ├── 📁 construct/  # 🏗️ Build page
            │   ├── 📁 conversation/  # 💬 Conversation page
            │   ├── 📁 homepage/  # 🏠 Homepage module
            │   ├── 📁 knowledge/  # 📚 Knowledge base page
            │   ├── 📁 login/  # 🔐 Login page
            │   ├── 📁 mars/  # 🚀 Mars conversation page
            │   ├── 📁 mcp-server/  # 🖥️ MCP server page
            │   ├── 📁 model/  # 🧠 Model management page
            │   ├── 📁 notFound/  # ❓ 404 page
            │   ├── 📁 profile/  # 👤 User profile page
            │   └── 📁 tool/  # 🛠️ Tool management page
            │
            ├── 📁 router/  # 🛣️ Routing configuration
            ├── 📁 store/  # 🗄️ State management (Pinia)
            ├── 📁 apis/  # 🌐 API definitions
            ├── 📁 utils/  # 🧰 Utility function library
            └── 📁 assets/  # 🖼️ Static assets (images, fonts, etc.)

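| 📂 Category | 📈 Count | 📝 Description |
|---|---|---|
| Backend modules | 15+ | Core modules such as API, services, tools, and database |
| Frontend pages | 12+ | Complete user interface and interaction pages |
| Built-in tools | 10+ | Covers search, documents, images, communication, and more |
| AI models | 5+ | Supports mainstream large language models and embedding models |
| MCP services | Several | Extensible MCP protocol servers |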

📝 Detailed code statistics by file extension

| 🔍 File type | 📁 Files | 📄 Total lines | 📉 Min lines | 📈 Max lines | 📊 Avg lines |
|---|---|---|---|---|---|
| 🐍 Python | 247 | 19,599 | 0 | 1,039 | 79 |
| 🎨 Vue | 31 | 21,907 | 12 | 2,588 | 706 |
| 📰 Markdown | 8 | 3,475 | 5 | 1,079 | 434 |
| ⚡ TypeScript | 46 | 2,103 | 1 | 212 | 45 |
| 📋 TXT | 1 | 539 | 539 | 539 | 539 |
| 📦 JSON | 11 | 348 | 7 | 110 | 31 |
| ⚙️ TOML | 1 | 328 | 328 | 328 | 328 |
| 🎨 CSS | 1 | 176 | 176 | 176 | 176 |
| 🔧 YML | 2 | 177 | 52 | 125 | 88 |
| 📋 YAML | 2 | 152 | 35 | 117 | 76 |
| ⚙️ CONF | 1 | 101 | 101 | 101 | 101 |
| 🚀 Shell | 2 | 87 | 35 | 52 | 43 |
| 🚦 PROD | 1 | 41 | 41 | 41 | 41 |
| 🚫 GitIgnore | 1 | 24 | 24 | 24 | 24 |
| 🌐 HTML | 1 | 13 | 13 | 13 | 13 |
| 🐳 DockerIgnore | 1 | 10 | 10 | 10 | 10 |

📊 Total: 356 files, 48,560 lines of code

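| 🎯 Tech stack | 📈 Share | 🔥 Highlights |
|---|---|---|
| 🎨 Frontend (Vue+TS) | 45.1% | Modern responsive UI with strong TypeScript typing |
| 🐍 Backend (Python) | 40.4% | High-performance async services with rich AI integration |
| 📚 Documentation (MD) | 7.2% | Complete project documentation and API reference |
| ⚙️ Configuration (JSON/YAML) | 7.3% | Flexible configuration management and deployment support |

💡 The project uses a decoupled front-end/back-end architecture, with a clear code structure and thorough documentation

🎯 Three deployment options to choose from - one-command Docker deployment | local development | production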

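| 🛠️ Component | 🔢 Required version | 📝 Description |
|---|---|---|
| Python | 3.12+ | Backend runtime |
| Node.js | 18+ | Frontend build environment |
| MySQL | 8.0+ | Primary database |
| Redis | 7.0+ | Cache and session storage |
| Docker | 20.10+ | Containerized deployment (recommended) |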
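Before installing, it can help to check the local environment against the table above. The sketch below only verifies the Python version and whether the `node` and `docker` commands are on `PATH`; the function names are hypothetical, and it does not check MySQL, Redis, or the exact Node.js and Docker versions.

```python
import shutil
import sys

MIN_PYTHON = (3, 12)  # From the requirements table: Python 3.12+


def python_ok(version_info=sys.version_info) -> bool:
    """True when the interpreter meets the minimum Python version."""
    return tuple(version_info[:2]) >= MIN_PYTHON


def missing_commands(commands=("node", "docker")) -> list[str]:
    """Return the commands that cannot be found on PATH."""
    return [c for c in commands if shutil.which(c) is None]


if __name__ == "__main__":
    print("Python version OK:", python_ok())
    print("Missing commands:", missing_commands() or "none")
```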

💫 Docker deployment steps:

# 1️⃣ Clone the project
git clone https://github.com/Shy2593666979/AgentChat.git
cd AgentChat

# 2️⃣ Configure API keys
cp src/backend/agentchat/config.yaml.example src/backend/agentchat/config.yaml
# Edit the configuration file and fill in your API keys

# 3️⃣ Start everything with one command
cd docker
docker-compose up --build -d

# Check service status
docker-compose ps

# View logs
docker-compose logs -f app

🎊 Done! Visit http://localhost:8090 to get started!

👨‍💻 Local development steps:

# 1️⃣ Clone the project
git clone https://github.com/Shy2593666979/AgentChat.git
cd AgentChat

# Install dependencies with pip
pip install -r requirements.txt

Create and edit the configuration file src/backend/agentchat/config.yaml.

# Backend service
cd src/backend
uvicorn agentchat.main:app --port 7860 --host 0.0.0.0

# New terminal - frontend service
cd src/frontend
npm install
npm run dev

| 🎯 Service | 🔗 Address | 📝 Description |
|---|---|---|
| Frontend UI | localhost:8090 | User interface |
| Backend API | localhost:7860 | API service |
| API docs | localhost:7860/docs | Swagger documentation |
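Once both services are started, a quick port probe can confirm they are listening on the addresses in the table above. This is a minimal sketch using only the standard library; the `SERVICES` mapping and function name are illustrative, and a successful TCP connection only shows that something is listening, not that the application is healthy.

```python
import socket

# Ports taken from the service table above.
SERVICES = {"frontend": 8090, "backend": 7860}


def is_listening(port: int, host: str = "127.0.0.1", timeout: float = 0.5) -> bool:
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    for name, port in SERVICES.items():
        state = "up" if is_listening(port) else "down"
        print(f"{name} (localhost:{port}): {state}")
```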

🎯 Flexible deployment options - a complete path from development and testing to production

Because of an issue in an installed dependency (the fix script mentioned below targets fastapi_jwt_auth), locate the affected file in your virtual environment and replace its contents with the following configuration code:

from datetime import timedelta
from typing import Optional, Union, Sequence, List

from pydantic import (
    BaseModel,
    validator,
    StrictBool,
    StrictInt,
    StrictStr
)


class LoadConfig(BaseModel):
    authjwt_token_location: Optional[List[StrictStr]] = ['headers']
    authjwt_secret_key: Optional[StrictStr] = None
    authjwt_public_key: Optional[StrictStr] = None
    authjwt_private_key: Optional[StrictStr] = None
    authjwt_algorithm: Optional[StrictStr] = "HS256"
    authjwt_decode_algorithms: Optional[List[StrictStr]] = None
    authjwt_decode_leeway: Optional[Union[StrictInt,timedelta]] = 0
    authjwt_encode_issuer: Optional[StrictStr] = None
    authjwt_decode_issuer: Optional[StrictStr] = None
    authjwt_decode_audience: Optional[Union[StrictStr,Sequence[StrictStr]]] = None
    authjwt_denylist_enabled: Optional[StrictBool] = False
    authjwt_denylist_token_checks: Optional[List[StrictStr]] = ['access','refresh']
    authjwt_header_name: Optional[StrictStr] = "Authorization"
    authjwt_header_type: Optional[StrictStr] = "Bearer"
    authjwt_access_token_expires: Optional[Union[StrictBool,StrictInt,timedelta]] = timedelta(minutes=15)
    authjwt_refresh_token_expires: Optional[Union[StrictBool,StrictInt,timedelta]] = timedelta(days=30)
    # Options for creating cookies
    authjwt_access_cookie_key: Optional[StrictStr] = "access_token_cookie"
    authjwt_refresh_cookie_key: Optional[StrictStr] = "refresh_token_cookie"
    authjwt_access_cookie_path: Optional[StrictStr] = "/"
    authjwt_refresh_cookie_path: Optional[StrictStr] = "/"
    authjwt_cookie_max_age: Optional[StrictInt] = None
    authjwt_cookie_domain: Optional[StrictStr] = None
    authjwt_cookie_secure: Optional[StrictBool] = False
    authjwt_cookie_samesite: Optional[StrictStr] = None
    # Options for double-submit CSRF protection
    authjwt_cookie_csrf_protect: Optional[StrictBool] = True
    authjwt_access_csrf_cookie_key: Optional[StrictStr] = "csrf_access_token"
    authjwt_refresh_csrf_cookie_key: Optional[StrictStr] = "csrf_refresh_token"
    authjwt_access_csrf_cookie_path: Optional[StrictStr] = "/"
    authjwt_refresh_csrf_cookie_path: Optional[StrictStr] = "/"
    authjwt_access_csrf_header_name: Optional[StrictStr] = "X-CSRF-Token"
    authjwt_refresh_csrf_header_name: Optional[StrictStr] = "X-CSRF-Token"
    authjwt_csrf_methods: Optional[List[StrictStr]] = ['POST','PUT','PATCH','DELETE']

    @validator('authjwt_access_token_expires')
    def validate_access_token_expires(cls, v):
        if v is True:
            raise ValueError("The 'authjwt_access_token_expires' only accept value False (bool)")
        return v

    @validator('authjwt_refresh_token_expires')
    def validate_refresh_token_expires(cls, v):
        if v is True:
            raise ValueError("The 'authjwt_refresh_token_expires' only accept value False (bool)")
        return v

    @validator('authjwt_denylist_token_checks', each_item=True)
    def validate_denylist_token_checks(cls, v):
        if v not in ['access','refresh']:
            raise ValueError("The 'authjwt_denylist_token_checks' must be between 'access' or 'refresh'")
        return v

    @validator('authjwt_token_location', each_item=True)
    def validate_token_location(cls, v):
        if v not in ['headers','cookies']:
            raise ValueError("The 'authjwt_token_location' must be between 'headers' or 'cookies'")
        return v

    @validator('authjwt_cookie_samesite')
    def validate_cookie_samesite(cls, v):
        if v not in ['strict','lax','none']:
            raise ValueError("The 'authjwt_cookie_samesite' must be between 'strict', 'lax', 'none'")
        return v

    @validator('authjwt_csrf_methods', each_item=True)
    def validate_csrf_methods(cls, v):
        if v.upper() not in ["GET", "HEAD", "POST", "PUT", "DELETE", "PATCH"]:
            raise ValueError("The 'authjwt_csrf_methods' must be between http request methods")
        return v.upper()

    class Config:
        str_min_length = 1
        str_strip_whitespace = True

Finding the file by hand is tedious, so the project provides a script that patches the source directly:

python scripts/fix_fastapi_jwt_auth.py  # Run the fix script (all dependencies must be installed first)
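If you prefer to patch the file by hand, a small helper can report where an installed package lives in the current environment. This is a sketch under assumptions: the import name `fastapi_jwt_auth` is inferred from the fix script's name, the file name `config.py` in the usage note is a guess because the path is not given above, and `find_module_file` is a hypothetical helper, not part of AgentChat.

```python
import importlib.util
from pathlib import Path
from typing import Optional


def find_module_file(module_name: str, filename: str = "__init__.py") -> Optional[Path]:
    """Return the path of `filename` inside an installed top-level package, or None."""
    spec = importlib.util.find_spec(module_name)
    # Plain modules and missing packages have no package directory to search.
    if spec is None or not spec.submodule_search_locations:
        return None
    for location in spec.submodule_search_locations:
        candidate = Path(location) / filename
        if candidate.is_file():
            return candidate
    return None
```

For example, `find_module_file("fastapi_jwt_auth", "config.py")` would print the path to edit if that package and file exist in the active virtual environment, and `None` otherwise.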

This project is released under the MIT License, which means you are free to use, modify, and distribute it 🎉

