MiniCPM-V

MiniCPM-Llama3-V 2.5: A GPT-4V Level Multimodal LLM on Your Phone

Stars: 8161

MiniCPM-V is a series of end-side multimodal LLMs designed for vision-language understanding. The models take image and text inputs and produce text outputs. The series includes MiniCPM-Llama3-V 2.5, an 8B-parameter model that surpasses several proprietary models in overall benchmark performance, and MiniCPM-V 2.0, a lighter 2B-parameter model. The models offer strong OCR capabilities, low hallucination rates, high-resolution image processing, and efficient deployment on end-side devices. MiniCPM-Llama3-V 2.5 additionally supports multimodal conversation in over 30 languages.

README:


Join our 💬 WeChat

MiniCPM-Llama3-V 2.5 🤗 🤖 | MiniCPM-V 2.0 🤗 🤖 | MiniCPM-Llama3-V 2.5 Technical Report

MiniCPM-V is a series of end-side multimodal LLMs (MLLMs) designed for vision-language understanding. The models take image and text as inputs and provide high-quality text outputs. Since February 2024, we have released 4 versions of the model, aiming to achieve strong performance and efficient deployment. The most notable models in this series currently include:

- MiniCPM-Llama3-V 2.5: 🔥🔥🔥 The latest and most capable model in the MiniCPM-V series. With a total of 8B parameters, the model surpasses proprietary models such as GPT-4V-1106, Gemini Pro, Qwen-VL-Max and Claude 3 in overall performance. Equipped with enhanced OCR and instruction-following capabilities, the model also supports multimodal conversation in over 30 languages, including English, Chinese, French, Spanish, and German. With the help of quantization, compilation optimizations, and several efficient inference techniques on CPUs and NPUs, MiniCPM-Llama3-V 2.5 can be efficiently deployed on end-side devices.

- MiniCPM-V 2.0: The lightest model in the MiniCPM-V series. With 2B parameters, it surpasses larger models such as Yi-VL 34B, CogVLM-Chat 17B, and Qwen-VL-Chat 10B in overall performance. It accepts image inputs of any aspect ratio and up to 1.8 million pixels (e.g., 1344x1344), achieving performance comparable to Gemini Pro in scene-text understanding and matching GPT-4V in low hallucination rates.

- [2024.08.03] MiniCPM-Llama3-V 2.5 technical report is released! See here.

- [2024.07.19] MiniCPM-Llama3-V 2.5 supports vLLM now! See here.

- [2024.05.28] 🚀🚀🚀 MiniCPM-Llama3-V 2.5 features are now fully supported in llama.cpp and ollama! Please pull the latest code from our forks (llama.cpp, ollama). GGUF models in various sizes are available here. The MiniCPM-Llama3-V 2.5 series is not yet supported by the official repositories, and we are working hard to get our PRs merged. Please stay tuned!

- [2024.05.28] 💫 We now support LoRA fine-tuning for MiniCPM-Llama3-V 2.5, using only 2 V100 GPUs! See more statistics here.

- [2024.05.23] 🔍 We've released a comprehensive comparison between Phi-3-vision-128k-instruct and MiniCPM-Llama3-V 2.5, including benchmark evaluations, multilingual capabilities, and inference efficiency 🌟📊🌍🚀. Click here to view more details.

- [2024.05.23] 🔥🔥🔥 MiniCPM-V tops GitHub Trending and Hugging Face Trending! Our demo, recommended by Hugging Face Gradio’s official account, is available here. Come and try it out!

- [2024.06.03] You can now run MiniCPM-Llama3-V 2.5 on multiple low-VRAM GPUs (12 GB or 16 GB) by distributing the model's layers across them. For more details, check this link.

- [2024.05.25] MiniCPM-Llama3-V 2.5 now supports streaming outputs and customized system prompts. Try it here!

- [2024.05.24] We release the MiniCPM-Llama3-V 2.5 GGUF, which supports llama.cpp inference and delivers smooth decoding at 6-8 tokens/s on mobile phones. Try it now!

- [2024.05.20] We open-source MiniCPM-Llama3-V 2.5! It has improved OCR capability and supports 30+ languages, making it the first end-side MLLM to achieve GPT-4V-level performance. We provide efficient inference and simple fine-tuning. Try it now!

- [2024.04.23] MiniCPM-V-2.0 supports vLLM now! Click here to view more details.

- [2024.04.18] We create a Hugging Face Space to host the MiniCPM-V 2.0 demo here!

- [2024.04.17] MiniCPM-V-2.0 supports deploying WebUI Demo now!

- [2024.04.15] MiniCPM-V-2.0 now also supports fine-tuning with the SWIFT framework!

- [2024.04.12] We open-source MiniCPM-V 2.0, which achieves comparable performance with Gemini Pro in understanding scene text and outperforms strong Qwen-VL-Chat 9.6B and Yi-VL 34B on OpenCompass, a comprehensive evaluation over 11 popular benchmarks. Click here to view the MiniCPM-V 2.0 technical blog.

- [2024.03.14] MiniCPM-V now supports fine-tuning with the SWIFT framework. Thanks to Jintao for the contribution!

- [2024.03.01] MiniCPM-V now can be deployed on Mac!

- [2024.02.01] We open-source MiniCPM-V and OmniLMM-12B, which support efficient end-side deployment and powerful multimodal capabilities, respectively.

- MiniCPM-Llama3-V 2.5

- MiniCPM-V 2.0

- Chat with Our Demo on Gradio

- Install

- Inference

- Fine-tuning

- TODO

- 🌟 Star History

- Citation

MiniCPM-Llama3-V 2.5 is the latest model in the MiniCPM-V series. The model is built on SigLip-400M and Llama3-8B-Instruct with a total of 8B parameters. It exhibits a significant performance improvement over MiniCPM-V 2.0. Notable features of MiniCPM-Llama3-V 2.5 include:

- 🔥 Leading Performance. MiniCPM-Llama3-V 2.5 achieves an average score of 65.1 on OpenCompass, a comprehensive evaluation over 11 popular benchmarks. With only 8B parameters, it surpasses widely used proprietary models such as GPT-4V-1106, Gemini Pro, Claude 3 and Qwen-VL-Max, and greatly outperforms other Llama 3-based MLLMs.

- 💪 Strong OCR Capabilities. MiniCPM-Llama3-V 2.5 can process images with any aspect ratio and up to 1.8 million pixels (e.g., 1344x1344), scoring 700+ on OCRBench and surpassing proprietary models such as GPT-4o, GPT-4V-0409, Qwen-VL-Max and Gemini Pro. Based on recent user feedback, MiniCPM-Llama3-V 2.5 now offers enhanced full-text OCR extraction, table-to-markdown conversion, and other high-utility capabilities, along with stronger instruction-following and complex reasoning abilities for a better multimodal interaction experience.

- 🏆 Trustworthy Behavior. Leveraging the latest RLAIF-V method (the newest technique in the RLHF-V [CVPR'24] series), MiniCPM-Llama3-V 2.5 exhibits more trustworthy behavior. It achieves a 10.3% hallucination rate on Object HalBench, lower than GPT-4V-1106 (13.6%) and the best result within the open-source community. Data released.

- 🌏 Multilingual Support. Thanks to the strong multilingual capabilities of Llama 3 and the cross-lingual generalization technique from VisCPM, MiniCPM-Llama3-V 2.5 extends its bilingual (Chinese-English) multimodal capabilities to over 30 languages, including German, French, Spanish, Italian, and Korean. All Supported Languages.

- 🚀 Efficient Deployment. MiniCPM-Llama3-V 2.5 systematically employs model quantization, CPU optimizations, NPU optimizations and compilation optimizations, achieving high-efficiency deployment on end-side devices. For mobile phones with Qualcomm chips, we have integrated the NPU acceleration framework QNN into llama.cpp for the first time. After systematic optimization, MiniCPM-Llama3-V 2.5 achieves a 150x acceleration in end-side MLLM image encoding and a 3x speedup in language decoding.

- 💫 Easy Usage. MiniCPM-Llama3-V 2.5 can be easily used in various ways: (1) llama.cpp and ollama support for efficient CPU inference on local devices, (2) GGUF format quantized models in 16 sizes, (3) efficient LoRA fine-tuning with only 2 V100 GPUs, (4) streaming output, (5) quick local WebUI demo setup with Gradio and Streamlit, and (6) interactive demos on HuggingFace Spaces.

Results on TextVQA, DocVQA, OCRBench, OpenCompass, MME, MMBench, MMMU, MathVista, LLaVA Bench, RealWorld QA, Object HalBench:

| Model | Size | OCRBench | TextVQA val | DocVQA test | OpenCompass | MME | MMB test (en) | MMB test (cn) | MMMU val | MathVista | LLaVA Bench | RealWorld QA | Object HalBench |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Proprietary | | | | | | | | | | | | | |
| Gemini Pro | - | 680 | 74.6 | 88.1 | 62.9 | 2148.9 | 73.6 | 74.3 | 48.9 | 45.8 | 79.9 | 60.4 | - |
| GPT-4V (2023.11.06) | - | 645 | 78.0 | 88.4 | 63.5 | 1771.5 | 77.0 | 74.4 | 53.8 | 47.8 | 93.1 | 63.0 | 86.4 |
| Open-source | | | | | | | | | | | | | |
| Mini-Gemini | 2.2B | - | 56.2 | 34.2* | - | 1653.0 | - | - | 31.7 | - | - | - | - |
| Qwen-VL-Chat | 9.6B | 488 | 61.5 | 62.6 | 51.6 | 1860.0 | 61.8 | 56.3 | 37.0 | 33.8 | 67.7 | 49.3 | 56.2 |
| DeepSeek-VL-7B | 7.3B | 435 | 64.7* | 47.0* | 54.6 | 1765.4 | 73.8 | 71.4 | 38.3 | 36.8 | 77.8 | 54.2 | - |
| Yi-VL-34B | 34B | 290 | 43.4* | 16.9* | 52.2 | 2050.2 | 72.4 | 70.7 | 45.1 | 30.7 | 62.3 | 54.8 | 79.3 |
| CogVLM-Chat | 17.4B | 590 | 70.4 | 33.3* | 54.2 | 1736.6 | 65.8 | 55.9 | 37.3 | 34.7 | 73.9 | 60.3 | 73.6 |
| TextMonkey | 9.7B | 558 | 64.3 | 66.7 | - | - | - | - | - | - | - | - | - |
| Idefics2 | 8.0B | - | 73.0 | 74.0 | 57.2 | 1847.6 | 75.7 | 68.6 | 45.2 | 52.2 | 49.1 | 60.7 | - |
| Bunny-LLama-3-8B | 8.4B | - | - | - | 54.3 | 1920.3 | 77.0 | 73.9 | 41.3 | 31.5 | 61.2 | 58.8 | - |
| LLaVA-NeXT Llama-3-8B | 8.4B | - | - | 78.2 | - | 1971.5 | - | - | 41.7 | 37.5 | 80.1 | 60.0 | - |
| Phi-3-vision-128k-instruct | 4.2B | 639* | 70.9 | - | - | 1537.5* | - | - | 40.4 | 44.5 | 64.2* | 58.8* | - |
| MiniCPM-V 1.0 | 2.8B | 366 | 60.6 | 38.2 | 47.5 | 1650.2 | 64.1 | 62.6 | 38.3 | 28.9 | 51.3 | 51.2 | 78.4 |
| MiniCPM-V 2.0 | 2.8B | 605 | 74.1 | 71.9 | 54.5 | 1808.6 | 69.1 | 66.5 | 38.2 | 38.7 | 69.2 | 55.8 | 85.5 |
| MiniCPM-Llama3-V 2.5 | 8.5B | 725 | 76.6 | 84.8 | 65.1 | 2024.6 | 77.2 | 74.2 | 45.8 | 54.3 | 86.7 | 63.5 | 89.7 |
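The Object HalBench column can be cross-checked against the hallucination rates quoted in the feature list. The figures line up if the column reports 100 minus the hallucination rate; that reading is inferred from the numbers here, not stated in this README. A minimal sketch of the arithmetic (the function name is hypothetical):

```python
def halbench_score(hallucination_rate_pct: float) -> float:
    """Convert a hallucination rate (in percent) to a 'higher is better' score.

    Assumption: the table column equals 100 minus the hallucination rate.
    """
    return round(100.0 - hallucination_rate_pct, 1)


# Hallucination rates quoted in the feature list.
rates = {"MiniCPM-Llama3-V 2.5": 10.3, "GPT-4V-1106": 13.6}

for model, rate in rates.items():
    print(f"{model}: {rate}% hallucination -> score {halbench_score(rate)}")
```

Under this assumption the two rates map to 89.7 and 86.4, which are the values shown in the table for these models.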

We deploy MiniCPM-Llama3-V 2.5 on end devices. The demo video is a raw, unedited screen recording on a Xiaomi 14 Pro.

Click to view more details of MiniCPM-V 2.0

MiniCPM-V 2.0 is an efficient version with promising performance for deployment. The model is built on SigLip-400M and MiniCPM-2.4B, connected by a perceiver resampler. MiniCPM-V 2.0 has several notable features:

- 🔥 State-of-the-art Performance. MiniCPM-V 2.0 achieves state-of-the-art performance on multiple benchmarks (including OCRBench, TextVQA, MME, MMB, MathVista, etc.) among models under 7B parameters. It even outperforms the strong Qwen-VL-Chat 9.6B, CogVLM-Chat 17.4B, and Yi-VL 34B on OpenCompass, a comprehensive evaluation over 11 popular benchmarks. Notably, MiniCPM-V 2.0 shows strong OCR capability, achieving performance comparable to Gemini Pro in scene-text understanding and state-of-the-art performance on OCRBench among open-source models.

- 🏆 Trustworthy Behavior. LMMs are known to suffer from hallucination, often generating text that is not factually grounded in the image. MiniCPM-V 2.0 is the first end-side LMM aligned via multimodal RLHF for trustworthy behavior (using the recent RLHF-V [CVPR'24] series technique). This allows the model to match GPT-4V in preventing hallucinations on Object HalBench.

- 🌟 High-Resolution Images at Any Aspect Ratio. MiniCPM-V 2.0 can accept images of up to 1.8 million pixels (e.g., 1344x1344) at any aspect ratio. This enables better perception of fine-grained visual information such as small objects and optical characters, and is achieved via a recent technique from LLaVA-UHD.

- ⚡️ High Efficiency. MiniCPM-V 2.0 can be efficiently deployed on most GPU cards and personal computers, and even on end devices such as mobile phones. For visual encoding, we compress the image representations into far fewer tokens via a perceiver resampler. This allows MiniCPM-V 2.0 to operate with favorable memory cost and speed during inference, even when dealing with high-resolution images.

- 🙌 Bilingual Support. MiniCPM-V 2.0 offers strong bilingual multimodal capabilities in both English and Chinese. This is enabled by generalizing multimodal capabilities across languages, a technique from VisCPM [ICLR'24].
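To make the 1.8 million pixel figure concrete: 1344x1344 is 1,806,336 pixels, and the same budget can be spent on other aspect ratios. The sketch below only illustrates that arithmetic. The helper name and the downscaling rule are assumptions for illustration; the model's actual preprocessing (based on LLaVA-UHD) is not shown in this README.

```python
import math

# Pixel budget taken from the 1344x1344 example in the text.
MAX_PIXELS = 1344 * 1344  # 1,806,336


def fit_to_budget(width: int, height: int, max_pixels: int = MAX_PIXELS) -> tuple[int, int]:
    """Return (width, height) scaled down, preserving aspect ratio, to fit max_pixels.

    Images already within the budget are returned unchanged.
    """
    if width * height <= max_pixels:
        return width, height
    scale = math.sqrt(max_pixels / (width * height))
    # Floor both sides so the result never exceeds the budget.
    return max(1, math.floor(width * scale)), max(1, math.floor(height * scale))


print(fit_to_budget(1344, 1344))  # within budget, returned unchanged
print(fit_to_budget(4000, 3000))  # downscaled, aspect ratio close to 4:3
print(fit_to_budget(448, 4032))   # a tall 1:9 strip with the same pixel count
```

The last call shows why "any aspect ratio" matters: a 448x4032 strip has exactly the same pixel count as a 1344x1344 square.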

We deploy MiniCPM-V 2.0 on end devices. The demo video is a raw, unedited screen recording on a Xiaomi 14 Pro.

| Model | Introduction and Guidance |

|---|---|

| MiniCPM-V 1.0 | Document |

| OmniLMM-12B | Document |

We provide online and local demos powered by Hugging Face Gradio, a popular model deployment framework. It supports streaming outputs, progress bars, queuing, alerts, and other useful features.

Click here to try out the online demo of MiniCPM-Llama3-V 2.5 | MiniCPM-V 2.0 on HuggingFace Spaces.

You can easily build your own local WebUI demo with Gradio using the following commands.

pip install -r requirements.txt

# For NVIDIA GPUs, run:

python web_demo_2.5.py --device cuda

# For Mac with MPS (Apple silicon or AMD GPUs), run:

PYTORCH_ENABLE_MPS_FALLBACK=1 python web_demo_2.5.py --device mps

- Clone this repository and navigate to the source folder

git clone https://github.com/OpenBMB/MiniCPM-V.git

cd MiniCPM-V

- Create conda environment

conda create -n MiniCPM-V python=3.10 -y

conda activate MiniCPM-V

- Install dependencies

pip install -r requirements.txt

| Model | Device | Memory | Description | Download |
|---|---|---|---|---|
| MiniCPM-Llama3-V 2.5 | GPU | 19 GB | The latest version, achieving state-of-the-art end-side multimodal performance. | 🤗 |
| MiniCPM-Llama3-V 2.5 gguf | CPU | 5 GB | The gguf version, with lower memory usage and faster inference. | 🤗 |
| MiniCPM-Llama3-V 2.5 int4 | GPU | 8 GB | The int4 quantized version, with lower GPU memory usage. | 🤗 |
| MiniCPM-V 2.0 | GPU | 8 GB | Light version, balancing performance and computation cost. | 🤗 |
| MiniCPM-V 1.0 | GPU | 7 GB | Lightest version, achieving the fastest inference. | 🤗 |
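The memory column in the table above can guide which variant to load. As a rough sketch, the helper below filters the table by device type and available memory. The function and constant names are hypothetical, and the figures are copied from the table rather than measured.

```python
# (name, device, memory in GB), copied from the model table.
VARIANTS = [
    ("MiniCPM-Llama3-V 2.5", "GPU", 19),
    ("MiniCPM-Llama3-V 2.5 gguf", "CPU", 5),
    ("MiniCPM-Llama3-V 2.5 int4", "GPU", 8),
    ("MiniCPM-V 2.0", "GPU", 8),
    ("MiniCPM-V 1.0", "GPU", 7),
]


def variants_that_fit(device: str, available_gb: float) -> list[str]:
    """List variants for `device` whose listed memory is within `available_gb`."""
    return [
        name
        for name, dev, mem_gb in VARIANTS
        if dev == device and mem_gb <= available_gb
    ]


print(variants_that_fit("GPU", 12))  # a single 12 GB card
print(variants_that_fit("CPU", 8))   # CPU-only machine with 8 GB free
```

On a single 12 GB GPU this leaves the int4 build and the two smaller models; the full-precision MiniCPM-Llama3-V 2.5 needs either a larger card or the multi-GPU setup described later.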

Refer to the following code to run the model.

from chat import MiniCPMVChat, img2base64

import torch

import json

torch.manual_seed(0)

chat_model = MiniCPMVChat('openbmb/MiniCPM-Llama3-V-2_5')

im_64 = img2base64('./assets/airplane.jpeg')

# First round chat

msgs = [{"role": "user", "content": "Tell me the model of this aircraft."}]

inputs = {"image": im_64, "question": json.dumps(msgs)}

answer = chat_model.chat(inputs)

print(answer)

# Second round chat

# pass history context of multi-turn conversation

msgs.append({"role": "assistant", "content": answer})

msgs.append({"role": "user", "content": "Introduce something about Airbus A380."})

inputs = {"image": im_64, "question": json.dumps(msgs)}

answer = chat_model.chat(inputs)

print(answer)

You will get the following output:

"The aircraft in the image is an Airbus A380, which can be identified by its large size, double-deck structure, and the distinctive shape of its wings and engines. The A380 is a wide-body aircraft known for being the world's largest passenger airliner, designed for long-haul flights. It has four engines, which are characteristic of large commercial aircraft. The registration number on the aircraft can also provide specific information about the model if looked up in an aviation database."

"The Airbus A380 is a double-deck, wide-body, four-engine jet airliner made by Airbus. It is the world's largest passenger airliner and is known for its long-haul capabilities. The aircraft was developed to improve efficiency and comfort for passengers traveling over long distances. It has two full-length passenger decks, which can accommodate more passengers than a typical single-aisle airplane. The A380 has been operated by airlines such as Lufthansa, Singapore Airlines, and Emirates, among others. It is widely recognized for its unique design and significant impact on the aviation industry."

You can run MiniCPM-Llama3-V 2.5 on multiple low-VRAM GPUs (12 GB or 16 GB) by distributing the model's layers across them. Please refer to this tutorial for detailed instructions on how to load the model and run inference using multiple low-VRAM GPUs.
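One common way to spread layers across GPUs is a device map that assigns each transformer layer to a GPU index. The sketch below builds such a mapping with an even split. The module name pattern `llm.model.layers.{i}` and the helper name are assumptions for illustration (Llama3-8B has 32 decoder layers); the tutorial remains the authoritative source for the real module names and for where the vision encoder and embeddings should be placed.

```python
def even_layer_map(num_layers: int, num_gpus: int, prefix: str = "llm.model.layers") -> dict[str, int]:
    """Assign contiguous blocks of layers to GPUs as evenly as possible.

    `prefix` is a hypothetical module path; adjust it to the real model.
    """
    if num_layers <= 0 or num_gpus <= 0:
        raise ValueError("num_layers and num_gpus must be positive")
    base, extra = divmod(num_layers, num_gpus)
    device_map = {}
    layer = 0
    for gpu in range(num_gpus):
        # The first `extra` GPUs take one additional layer.
        count = base + (1 if gpu < extra else 0)
        for _ in range(count):
            device_map[f"{prefix}.{layer}"] = gpu
            layer += 1
    return device_map


device_map = even_layer_map(num_layers=32, num_gpus=2)
print(device_map["llm.model.layers.0"], device_map["llm.model.layers.31"])
```

A dictionary of this shape (module name to device) is the kind of value that `from_pretrained` in Hugging Face Transformers accepts as `device_map`. In practice the remaining modules need entries as well, and an uneven split may work better if one GPU also hosts the vision encoder.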

An example of running MiniCPM-Llama3-V 2.5 on 💻 Mac with MPS (Apple silicon or AMD GPUs):

# test.py: needs more than 16 GB of memory.

import torch

from PIL import Image

from transformers import AutoModel, AutoTokenizer

model = AutoModel.from_pretrained('openbmb/MiniCPM-Llama3-V-2_5', trust_remote_code=True, low_cpu_mem_usage=True)

model = model.to(device='mps')

tokenizer = AutoTokenizer.from_pretrained('openbmb/MiniCPM-Llama3-V-2_5', trust_remote_code=True)

model.eval()

image = Image.open('./assets/hk_OCR.jpg').convert('RGB')

question = 'Where is this photo taken?'

msgs = [{'role': 'user', 'content': question}]

answer, context, _ = model.chat(

    image=image,

    msgs=msgs,

    context=None,

    tokenizer=tokenizer,

    sampling=True

)

print(answer)

Run with command:

PYTORCH_ENABLE_MPS_FALLBACK=1 python test.py

MiniCPM-Llama3-V 2.5 and MiniCPM-V 2.0 can be deployed on mobile phones running Android. 🚀 Click MiniCPM-Llama3-V 2.5 / MiniCPM-V 2.0 to install the APK.

MiniCPM-Llama3-V 2.5 can now run with llama.cpp! See our fork of llama.cpp for more details. This implementation supports smooth inference at 6-8 tokens/s on mobile phones (test environment: Xiaomi 14 Pro + Snapdragon 8 Gen 3).
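To get a feel for what 6 to 8 tokens per second means in practice, the decoding time for a reply can be estimated from its length. This is back-of-the-envelope arithmetic only: it ignores image encoding and prompt processing time, and the 200-token reply length is an arbitrary example.

```python
def decode_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Estimated time to decode `num_tokens` at a steady rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


reply_tokens = 200  # arbitrary example length
slow = decode_seconds(reply_tokens, 6.0)
fast = decode_seconds(reply_tokens, 8.0)
print(f"{reply_tokens} tokens: about {fast:.0f} to {slow:.0f} seconds")
```

At these rates a 200-token answer takes roughly 25 to 33 seconds to stream.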

vLLM now officially supports MiniCPM-V 2.0 and MiniCPM-Llama3-V 2.5. To use it:

- Clone the official vLLM:

git clone https://github.com/vllm-project/vllm.git

- Install vLLM:

cd vllm

pip install -e .

- Install timm:

pip install timm==0.9.10

- Run the example (if you load the model from a local path, please update the model code to the latest version on Hugging Face):

python examples/minicpmv_example.py

We support simple fine-tuning with Hugging Face for MiniCPM-V 2.0 and MiniCPM-Llama3-V 2.5.

We now support fine-tuning the MiniCPM-V series with the SWIFT framework. SWIFT supports training, inference, evaluation and deployment of nearly 200 LLMs and MLLMs. It supports the lightweight training solutions provided by PEFT and a complete Adapters Library including techniques such as NEFTune, LoRA+ and LLaMA-PRO.

Best Practices: MiniCPM-V 1.0, MiniCPM-V 2.0

- [x] MiniCPM-V fine-tuning support

- [ ] Code release for real-time interactive assistant

- This repository is released under the Apache-2.0 License.

- The usage of MiniCPM-V model weights must strictly follow MiniCPM Model License.md.

- The models and weights of MiniCPM are completely free for academic research. After filling out a "questionnaire" for registration, they are also available for free commercial use.

As LMMs, MiniCPM-V models (including OmniLMM) generate content by learning from a large amount of multimodal corpora, but they cannot comprehend, express personal opinions, or make value judgements. Anything generated by MiniCPM-V models does not represent the views and positions of the model developers.

We will not be liable for any problems arising from the use of MiniCPM-V models, including but not limited to data security issues, risk of public opinion, or any risks and problems arising from the misdirection, misuse, or dissemination of the model.

This project is developed by the following institutions:

👏 Welcome to explore other multimodal projects of our team:

VisCPM | RLHF-V | LLaVA-UHD | RLAIF-V

If you find our model/code/paper helpful, please consider citing our papers 📝 and starring us ⭐️!

@article{yu2023rlhf,

title={Rlhf-v: Towards trustworthy mllms via behavior alignment from fine-grained correctional human feedback},

author={Yu, Tianyu and Yao, Yuan and Zhang, Haoye and He, Taiwen and Han, Yifeng and Cui, Ganqu and Hu, Jinyi and Liu, Zhiyuan and Zheng, Hai-Tao and Sun, Maosong and others},

journal={arXiv preprint arXiv:2312.00849},

year={2023}

}

@article{viscpm,

title={Large Multilingual Models Pivot Zero-Shot Multimodal Learning across Languages},

author={Jinyi Hu and Yuan Yao and Chongyi Wang and Shan Wang and Yinxu Pan and Qianyu Chen and Tianyu Yu and Hanghao Wu and Yue Zhao and Haoye Zhang and Xu Han and Yankai Lin and Jiao Xue and Dahai Li and Zhiyuan Liu and Maosong Sun},

journal={arXiv preprint arXiv:2308.12038},

year={2023}

}

@article{xu2024llava-uhd,

title={{LLaVA-UHD}: an LMM Perceiving Any Aspect Ratio and High-Resolution Images},

author={Xu, Ruyi and Yao, Yuan and Guo, Zonghao and Cui, Junbo and Ni, Zanlin and Ge, Chunjiang and Chua, Tat-Seng and Liu, Zhiyuan and Huang, Gao},

journal={arXiv preprint arXiv:2403.11703},

year={2024}

}

@article{yu2024rlaifv,

title={RLAIF-V: Aligning MLLMs through Open-Source AI Feedback for Super GPT-4V Trustworthiness},

author={Yu, Tianyu and Zhang, Haoye and Yao, Yuan and Dang, Yunkai and Chen, Da and Lu, Xiaoman and Cui, Ganqu and He, Taiwen and Liu, Zhiyuan and Chua, Tat-Seng and Sun, Maosong},

journal={arXiv preprint arXiv:2405.17220},

year={2024}

}

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for MiniCPM-V

Similar Open Source Tools

MiniCPM-V

MiniCPM-V is a series of end-side multimodal LLMs designed for vision-language understanding. The models take image and text inputs to provide high-quality text outputs. The series includes models like MiniCPM-Llama3-V 2.5 with 8B parameters surpassing proprietary models, and MiniCPM-V 2.0, a lighter model with 2B parameters. The models support over 30 languages, efficient deployment on end-side devices, and have strong OCR capabilities. They achieve state-of-the-art performance on various benchmarks and prevent hallucinations in text generation. The models can process high-resolution images efficiently and support multilingual capabilities.

buffer-of-thought-llm

Buffer of Thoughts (BoT) is a thought-augmented reasoning framework designed to enhance the accuracy, efficiency, and robustness of large language models (LLMs). It introduces a meta-buffer to store high-level thought-templates distilled from problem-solving processes, enabling adaptive reasoning for efficient problem-solving. The framework includes a buffer-manager to dynamically update the meta-buffer, ensuring scalability and stability. BoT achieves significant performance improvements on reasoning-intensive tasks and demonstrates superior generalization ability and robustness while being cost-effective compared to other methods.

LLaVA-MORE

LLaVA-MORE is a new family of Multimodal Language Models (MLLMs) that integrates recent language models with diverse visual backbones. The repository provides a unified training protocol for fair comparisons across all architectures and releases training code and scripts for distributed training. It aims to enhance Multimodal LLM performance and offers various models for different tasks. Users can explore different visual backbones like SigLIP and methods for managing image resolutions (S2) to improve the connection between images and language. The repository is a starting point for expanding the study of Multimodal LLMs and enhancing new features in the field.

BitBLAS

BitBLAS is a library for mixed-precision BLAS operations on GPUs, for example, the $W_{wdtype}A_{adtype}$ mixed-precision matrix multiplication where $C_{cdtype}[M, N] = A_{adtype}[M, K] \times W_{wdtype}[N, K]$. BitBLAS aims to support efficient mixed-precision DNN model deployment, especially the $W_{wdtype}A_{adtype}$ quantization in large language models (LLMs), for example, the $W_{UINT4}A_{FP16}$ in GPTQ, the $W_{INT2}A_{FP16}$ in BitDistiller, the $W_{INT2}A_{INT8}$ in BitNet-b1.58. BitBLAS is based on techniques from our accepted submission at OSDI'24.

ColossalAI

Colossal-AI is a deep learning system for large-scale parallel training. It provides a unified interface to scale sequential code of model training to distributed environments. Colossal-AI supports parallel training methods such as data, pipeline, tensor, and sequence parallelism and is integrated with heterogeneous training and zero redundancy optimizer.

UniCoT

Uni-CoT is a unified reasoning framework that extends Chain-of-Thought (CoT) principles to the multimodal domain, enabling Multimodal Large Language Models (MLLMs) to perform interpretable, step-by-step reasoning across both text and vision. It decomposes complex multimodal tasks into structured, manageable steps that can be executed sequentially or in parallel, allowing for more scalable and systematic reasoning.

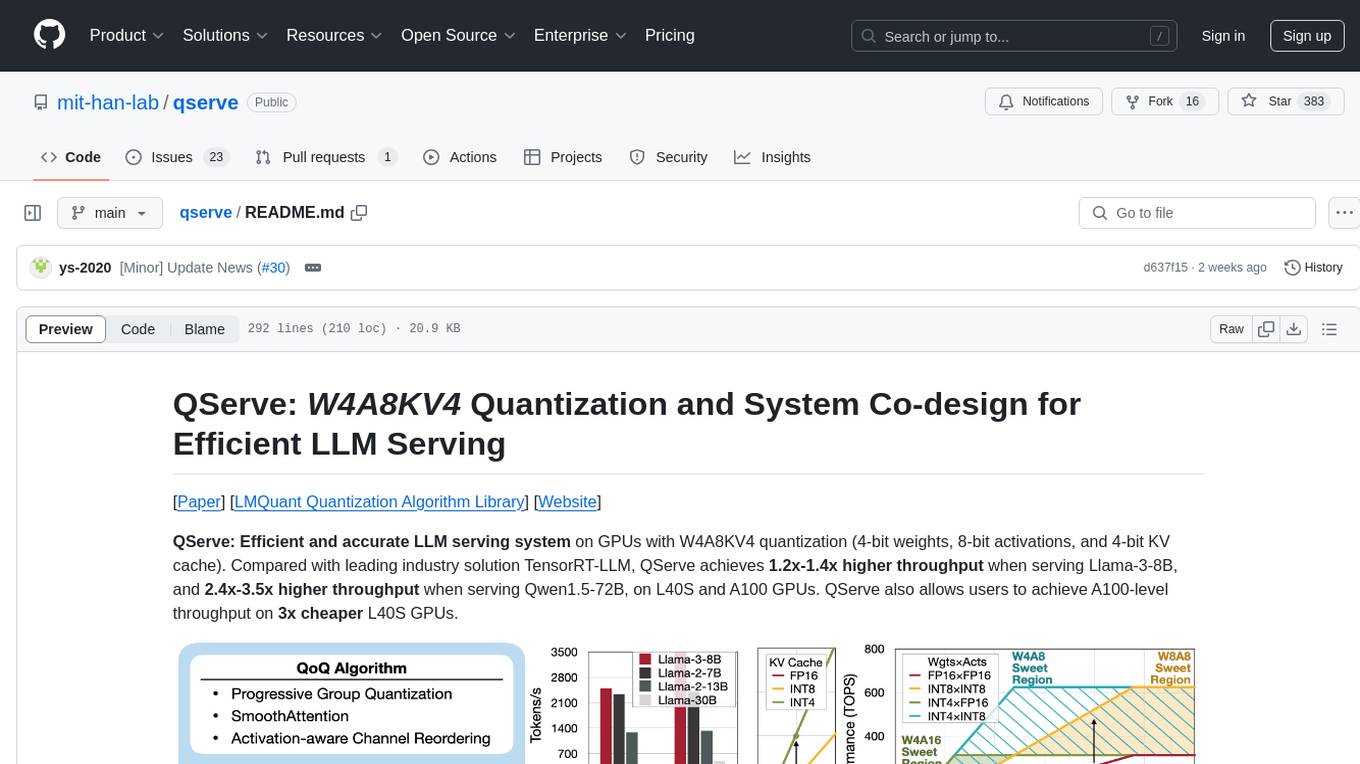

qserve

QServe is a serving system designed for efficient and accurate Large Language Models (LLM) on GPUs with W4A8KV4 quantization. It achieves higher throughput compared to leading industry solutions, allowing users to achieve A100-level throughput on cheaper L40S GPUs. The system introduces the QoQ quantization algorithm with 4-bit weight, 8-bit activation, and 4-bit KV cache, addressing runtime overhead challenges. QServe improves serving throughput for various LLM models by implementing compute-aware weight reordering, register-level parallelism, and fused attention memory-bound techniques.

ShapeLLM

ShapeLLM is the first 3D Multimodal Large Language Model designed for embodied interaction, exploring a universal 3D object understanding with 3D point clouds and languages. It supports single-view colored point cloud input and introduces a robust 3D QA benchmark, 3D MM-Vet, encompassing various variants. The model extends the powerful point encoder architecture, ReCon++, achieving state-of-the-art performance across a range of representation learning tasks. ShapeLLM can be used for tasks such as training, zero-shot understanding, visual grounding, few-shot learning, and zero-shot learning on 3D MM-Vet.

IDvs.MoRec

This repository contains the source code for the SIGIR 2023 paper 'Where to Go Next for Recommender Systems? ID- vs. Modality-based Recommender Models Revisited'. It provides resources for evaluating foundation, transferable, multi-modal, and LLM recommendation models, along with datasets, pre-trained models, and training strategies for IDRec and MoRec using in-batch debiased cross-entropy loss. The repository also offers large-scale datasets, code for SASRec with in-batch debias cross-entropy loss, and information on joining the lab for research opportunities.

Q-Bench

Q-Bench is a benchmark for general-purpose foundation models on low-level vision, focusing on multi-modality LLMs performance. It includes three realms for low-level vision: perception, description, and assessment. The benchmark datasets LLVisionQA and LLDescribe are collected for perception and description tasks, with open submission-based evaluation. An abstract evaluation code is provided for assessment using public datasets. The tool can be used with the datasets API for single images and image pairs, allowing for automatic download and usage. Various tasks and evaluations are available for testing MLLMs on low-level vision tasks.

VILA

VILA is a family of open Vision Language Models optimized for efficient video understanding and multi-image understanding. It includes models like NVILA, LongVILA, VILA-M3, VILA-U, and VILA-1.5, each offering specific features and capabilities. The project focuses on efficiency, accuracy, and performance in various tasks related to video, image, and language understanding and generation. VILA models are designed to be deployable on diverse NVIDIA GPUs and support long-context video understanding, medical applications, and multi-modal design.

TokenPacker

TokenPacker is a novel visual projector that compresses visual tokens by 75%∼89% with high efficiency. It adopts a 'coarse-to-fine' scheme to generate condensed visual tokens, achieving comparable or better performance across diverse benchmarks. The tool includes TokenPacker for general use and TokenPacker-HD for high-resolution image understanding. It provides training scripts, checkpoints, and supports various compression ratios and patch numbers.

HuatuoGPT-II

HuatuoGPT2 is an innovative domain-adapted medical large language model that excels in medical knowledge and dialogue proficiency. It showcases state-of-the-art performance in various medical benchmarks, surpassing GPT-4 in expert evaluations and fresh medical licensing exams. The open-source release includes HuatuoGPT2 models in 7B, 13B, and 34B versions, training code for one-stage adaptation, partial pre-training and fine-tuning instructions, and evaluation methods for medical response capabilities and professional pharmacist exams. The tool aims to enhance LLM capabilities in the Chinese medical field through open-source principles.

agentscope

AgentScope is a multi-agent platform designed to empower developers to build multi-agent applications with large-scale models. It features three high-level capabilities: Easy-to-Use, High Robustness, and Actor-Based Distribution. AgentScope provides a list of `ModelWrapper` to support both local model services and third-party model APIs, including OpenAI API, DashScope API, Gemini API, and ollama. It also enables developers to rapidly deploy local model services using libraries such as ollama (CPU inference), Flask + Transformers, Flask + ModelScope, FastChat, and vllm. AgentScope supports various services, including Web Search, Data Query, Retrieval, Code Execution, File Operation, and Text Processing. Example applications include Conversation, Game, and Distribution. AgentScope is released under Apache License 2.0 and welcomes contributions.

Cherry_LLM

Cherry Data Selection project introduces a self-guided methodology for LLMs to autonomously discern and select cherry samples from open-source datasets, minimizing manual curation and cost for instruction tuning. The project focuses on selecting impactful training samples ('cherry data') to enhance LLM instruction tuning by estimating instruction-following difficulty. The method involves phases like 'Learning from Brief Experience', 'Evaluating Based on Experience', and 'Retraining from Self-Guided Experience' to improve LLM performance.

For similar tasks

MiniCPM-V

MiniCPM-V is a series of end-side multimodal LLMs designed for vision-language understanding. The models take image and text inputs to provide high-quality text outputs. The series includes models like MiniCPM-Llama3-V 2.5 with 8B parameters surpassing proprietary models, and MiniCPM-V 2.0, a lighter model with 2B parameters. The models support over 30 languages, efficient deployment on end-side devices, and have strong OCR capabilities. They achieve state-of-the-art performance on various benchmarks and prevent hallucinations in text generation. The models can process high-resolution images efficiently and support multilingual capabilities.

ChatGPT-Next-Web

ChatGPT Next Web is a well-designed cross-platform ChatGPT web UI tool that supports Claude, GPT4, and Gemini Pro models. It allows users to deploy their private ChatGPT applications with ease. The tool offers features like one-click deployment, compact client for Linux/Windows/MacOS, compatibility with self-deployed LLMs, privacy-first approach with local data storage, markdown support, responsive design, fast loading speed, prompt templates, awesome prompts, chat history compression, multilingual support, and more.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models. From images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features: * Self-contained, with no need for a DBMS or cloud service. * OpenAPI interface, easy to integrate with existing infrastructure (e.g Cloud IDE). * Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.