SWE-AF

Autonomous software engineering fleet of AI agents for production-grade PRs on AgentField: plan, code, test, and ship.

Stars: 212

SWE-AF is an autonomous engineering team runtime built on AgentField, designed to spin up a full engineering team that can scope, build, adapt, and ship complex software end-to-end. It enables autonomous software engineering factories, scaling from simple goals to multi-issue programs with hundreds to thousands of agent invocations. SWE-AF offers one-call DX for quick deployment and adaptive factory control using three nested control loops to adapt to task difficulty in real-time. It features a factory architecture, continual learning, agent-scale parallelism, fleet-scale orchestration with AgentField, explicit compromise tracking, and long-run reliability.

README:

Autonomous Engineering Team Runtime Built on AgentField

Pronounced: "swee-AF" (one word)

One API call → full engineering team → shipped code.

Quick Start • Why SWE-AF • In Action • Factory Control • Benchmark • Modes • API • Architecture

One API call spins up a full autonomous engineering team — product managers, architects, coders, reviewers, testers — that scopes, builds, adapts, and ships complex software end to end. SWE-AF is a first step toward autonomous software engineering factories, scaling from simple goals to hard multi-issue programs with hundreds to thousands of agent invocations.

curl -X POST http://localhost:8080/api/v1/execute/async/swe-planner.build \

-H "Content-Type: application/json" \

-d @- <<'JSON'

{

"input": {

"goal": "Refactor and harden auth + billing flows",

"repo_url": "https://github.com/user/my-project",

"config": {

"runtime": "claude_code",

"models": {

"default": "sonnet",

"coder": "opus",

"qa": "opus"

},

"enable_learning": true

}

}

}

JSON

Swap models.default and any role key (coder, qa, architect, etc.) to any model your runtime supports.
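
The same request can be assembled from Python. This is a minimal sketch using only the standard library: the helper name `build_request` is hypothetical (it is not part of SWE-AF), while the endpoint and payload fields are copied from the curl call above. It constructs the request without sending it.

```python
import json
import urllib.request
from typing import Optional

# Endpoint copied from the curl example; adjust host and port for your deployment.
BUILD_URL = "http://localhost:8080/api/v1/execute/async/swe-planner.build"


def build_request(goal: str, repo_url: str, config: Optional[dict] = None) -> urllib.request.Request:
    """Assemble (but do not send) a POST request for swe-planner.build.

    Hypothetical helper for illustration; not part of SWE-AF.
    """
    payload = {"input": {"goal": goal, "repo_url": repo_url}}
    if config:
        payload["input"]["config"] = config
    return urllib.request.Request(
        BUILD_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = build_request(
    "Refactor and harden auth + billing flows",
    "https://github.com/user/my-project",
    config={
        "runtime": "claude_code",
        "models": {"default": "sonnet", "coder": "opus", "qa": "opus"},
        "enable_learning": True,
    },
)
# To submit, call urllib.request.urlopen(req) while the control plane is running.
```

Changing a role's model is then a one-key edit in the `models` dictionary.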

SWE-AF works in two modes: point it at a single repository, or orchestrate coordinated changes across multiple repos in one build.

Single-repository mode is the default. Pass repo_url (remote) or repo_path (local) and SWE-AF handles the rest:

curl -X POST http://localhost:8080/api/v1/execute/async/swe-planner.build \

-H "Content-Type: application/json" \

-d '{

"input": {

"goal": "Add JWT auth",

"repo_url": "https://github.com/user/my-project"

}

}'

When your work spans multiple codebases (a primary app plus shared libraries, monorepo sub-projects, or dependent microservices), pass config.repos as an array with roles:

curl -X POST http://localhost:8080/api/v1/execute/async/swe-planner.build \

-H "Content-Type: application/json" \

-d '{

"input": {

"goal": "Add JWT auth across API and shared-lib",

"config": {

"repos": [

{

"repo_url": "https://github.com/org/main-app",

"role": "primary"

},

{

"repo_url": "https://github.com/org/shared-lib",

"role": "dependency"

}

],

"runtime": "claude_code",

"models": {

"default": "sonnet"

}

}

}

}'

Roles:

- `primary`: The main application. Changes here drive the build; failures block progress.

- `dependency`: Libraries or services modified to support the primary repo. Failures are captured but don't block.

Use cases:

- Primary app + shared SDK or utilities library

- Monorepo sub-projects that live in separate repos

- Feature spanning multiple microservices (e.g., API + worker queue)
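
For scripted use, the multi-repo payload can be generated rather than hand-written. The sketch below is illustrative only: `multi_repo_input` is a hypothetical helper, and the single-primary layout is an assumption based on the example request above, not a documented constraint.

```python
def multi_repo_input(goal: str, primary_url: str, dependency_urls: list[str]) -> dict:
    """Build the request body for a multi-repo build (hypothetical helper).

    Assumes one `primary` repo plus any number of `dependency` repos,
    mirroring the curl example; the real schema may allow other layouts.
    """
    repos = [{"repo_url": primary_url, "role": "primary"}]
    repos += [{"repo_url": url, "role": "dependency"} for url in dependency_urls]
    return {"input": {"goal": goal, "config": {"repos": repos}}}


payload = multi_repo_input(
    "Add JWT auth across API and shared-lib",
    "https://github.com/org/main-app",
    ["https://github.com/org/shared-lib"],
)
```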

Rust-based Python compiler benchmark (built autonomously):

| Metric | CPython (subprocess) | RustPython (SWE-AF) | Improvement |

|---|---|---|---|

| Steady-state execution | Baseline (~19ms) | Optimized in-process runtime | 88.3x-602.3x faster |

| Geometric mean | 1.0x baseline | 253.8x | 253.8x |

| Peak throughput | ~52 ops/s | 31,807 ops/s | ~612x |

Measurement methodology

Throughput comparison measures different execution models: CPython subprocess spawn (~19ms per call → ~52 ops/s) vs RustPython pre-warmed interpreter pool (in-process). This is the real-world tradeoff the system was built to optimize — replacing repeated subprocess invocations with a persistent pool for short-snippet execution.
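
The throughput figures follow from simple arithmetic on the numbers in the table. As a quick check (variable names are ours): a ~19 ms spawn cost caps the subprocess baseline near 52 ops/s, and dividing the reported peak by that rounded baseline gives the ~612x ratio. Using the unrounded 1/0.019 baseline gives roughly 604x instead, so the headline ratio is sensitive to rounding.

```python
subprocess_latency_s = 0.019              # ~19 ms per CPython subprocess call (from the table)
baseline_ops = 1 / subprocess_latency_s   # about 52.6 ops/s, reported as ~52
pool_ops = 31_807                         # peak throughput reported for the in-process pool

speedup_rounded = pool_ops / 52           # ratio against the rounded ~52 ops/s baseline
speedup_exact = pool_ops / baseline_ops   # ratio against 1 / 19 ms directly

print(f"baseline: {baseline_ops:.1f} ops/s")
print(f"speedup vs rounded baseline: {speedup_rounded:.0f}x")
print(f"speedup vs exact baseline:   {speedup_exact:.0f}x")
```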

Artifact trail includes 175 tracked autonomous agents across planning, coding, review, merge, and verification.

Details: examples/llm-rust-python-compiler-sonnet/README.md

Most agent frameworks wrap a single coder loop. SWE-AF is a coordinated engineering factory — planning, execution, and governance agents run as a control stack that adapts in real time.

- Hardness-aware execution — easy issues pass through quickly, while hard issues trigger deeper adaptation and DAG-level replanning instead of blind retries.

- Factory architecture — not a single-agent wrapper. Planning, execution, and governance agents run as a coordinated control stack.

- Multi-model, multi-provider: assign different models per role (`coder: opus`, `qa: haiku`). Works with Claude, OpenRouter, OpenAI, and Google.

- Continual learning: with `enable_learning=true`, conventions and failure patterns discovered early are injected into downstream issues.

- Agent-scale parallelism: dependency-level scheduling + isolated git worktrees allow large fan-out without branch collisions.

- Fleet-scale orchestration — many SWE-AF nodes can run continuously in parallel via AgentField, driving thousands of agent invocations across concurrent builds.

- Explicit compromise tracking — when scope is relaxed, debt is typed, severity-rated, and propagated.

- Long-run reliability: checkpointed execution supports `resume_build` after crashes or interruptions.
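
One way to picture dependency-level scheduling is to group issues into levels, where every issue in a level depends only on issues from earlier levels and can therefore run in parallel with its peers. The sketch below uses the standard-library `graphlib` module; it is not SWE-AF's scheduler, and the issue names are hypothetical.

```python
from graphlib import TopologicalSorter


def dependency_levels(deps: dict[str, set[str]]) -> list[list[str]]:
    """Group issues into levels that could run in parallel.

    `deps` maps each issue to the set of issues it depends on.
    """
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    levels = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # issues whose dependencies are all done
        levels.append(ready)
        sorter.done(*ready)  # finishing a level unlocks the next one
    return levels


# Hypothetical issue DAG for illustration.
issues = {
    "jwt-utils": set(),
    "auth-middleware": {"jwt-utils"},
    "billing-hooks": {"jwt-utils"},
    "integration-tests": {"auth-middleware", "billing-hooks"},
}
levels = dependency_levels(issues)
# levels == [["jwt-utils"], ["auth-middleware", "billing-hooks"], ["integration-tests"]]
```

In this picture, each issue within a level would get its own git worktree, which is what keeps parallel coders from colliding on a branch.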

PR #179: Go SDK DID/VC Registration — built entirely by SWE-AF (Claude runtime with haiku-class models). One API call, zero human code.

| Metric | Value |

|---|---|

| Issues completed | 10/10 |

| Tests passing | 217 |

| Acceptance criteria | 34/34 |

| Agent invocations | 79 |

| Model | claude-haiku-4-5 |

| Total cost | $19.23 |

Cost breakdown by agent role

| Role | Cost | % |

|---|---|---|

| Coder | $5.88 | 30.6% |

| Code Reviewer | $3.48 | 18.1% |

| QA | $1.78 | 9.2% |

| GitHub PR | $1.66 | 8.6% |

| Integration Tester | $1.59 | 8.3% |

| Merger | $1.22 | 6.3% |

| Workspace Ops | $1.77 | 9.2% |

| Planning (PM + Arch + TL + Sprint) | $0.79 | 4.1% |

| Verifier + Finalize | $0.34 | 1.8% |

| Synthesizer | $0.05 | 0.2% |

79 invocations, 2,070 conversation turns. Planning agents scope and decompose; coders work in parallel isolated worktrees; reviewers and QA validate each issue; merger integrates branches; verifier checks acceptance criteria against the PRD.
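
The per-invocation averages implied by these totals can be checked with a few lines of arithmetic. The figures come from the tables above; the variable names are ours.

```python
total_cost = 19.23   # USD, from the summary table
invocations = 79
turns = 2070

cost_per_invocation = total_cost / invocations   # about $0.24 per agent invocation
turns_per_invocation = turns / invocations       # about 26 conversation turns each
coder_share = 5.88 / total_cost * 100            # coder's share of total cost, in percent

print(f"${cost_per_invocation:.2f} per invocation, "
      f"{turns_per_invocation:.1f} turns per invocation, "
      f"coder share {coder_share:.1f}%")
```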

Claude & open-source models supported: Run builds with either runtime and tune models per role in one flat config map.

- `runtime: "claude_code"` maps to the Claude backend.

- `runtime: "open_code"` maps to the OpenCode backend (OpenRouter/OpenAI/Google/Anthropic model IDs).

SWE-AF uses three nested control loops to adapt to task difficulty in real time:

| Loop | Scope | Trigger | Action |

|---|---|---|---|

| Inner loop | Single issue | QA/review fails | Coder retries with feedback |

| Middle loop | Single issue | Inner loop exhausted | `run_issue_advisor` retries with a new approach, splits work, or accepts with debt |

| Outer loop | Remaining DAG | Escalated failures | `run_replanner` restructures remaining issues and dependencies |

This is the core factory-control behavior: control agents supervise worker agents and continuously reshape the plan as reality changes.
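
The inner and middle loops can be sketched for a single issue as follows. This is a simplified illustration under stated assumptions, not SWE-AF's implementation: `run_issue`, `attempt`, and `advise` are hypothetical names, the budget defaults are taken from the configuration table, and work splitting is omitted. An `"escalated"` result is what would hand the issue to the outer replanning loop.

```python
from dataclasses import dataclass


@dataclass
class Budgets:
    max_coding_iterations: int = 5    # inner-loop retry budget
    max_advisor_invocations: int = 2  # middle-loop advisor budget


def run_issue(attempt, advise, budgets=None):
    """Return 'approved', 'accepted_with_debt', or 'escalated' for one issue.

    attempt(approach) -> bool         one coder pass followed by QA/review
    advise(approach)  -> str or None  a new approach, or None to accept with debt
    """
    budgets = budgets or Budgets()
    approach = "initial"
    advisor_calls = 0
    while True:
        # Inner loop: the coder retries until QA/review passes or the budget runs out.
        for _ in range(budgets.max_coding_iterations):
            if attempt(approach):
                return "approved"
        # Middle loop: the advisor picks a new approach or accepts the debt.
        if advisor_calls >= budgets.max_advisor_invocations:
            return "escalated"  # outer loop: the replanner reshapes the remaining DAG
        advisor_calls += 1
        new_approach = advise(approach)
        if new_approach is None:
            return "accepted_with_debt"
        approach = new_approach


# Demo with stand-in callables: the first approach never passes, the advisor's does.
attempts = []


def fake_attempt(approach):
    attempts.append(approach)
    return approach == "smaller-scope"


outcome = run_issue(fake_attempt, lambda approach: "smaller-scope")
print(outcome, len(attempts))  # prints: approved 6
```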

One click deploys SWE-AF + AgentField control plane + PostgreSQL. Set two environment variables in Railway:

- `CLAUDE_CODE_OAUTH_TOKEN`: run `claude setup-token` in the Claude Code CLI (uses Pro/Max subscription credits)

- `GH_TOKEN`: GitHub personal access token with `repo` scope for draft PR creation

Once deployed, trigger a build:

curl -X POST https://<control-plane>.up.railway.app/api/v1/execute/async/swe-planner.build \

-H "Content-Type: application/json" \

-H "X-API-Key: this-is-a-secret" \

-d '{"input": {"goal": "Add JWT auth", "repo_url": "https://github.com/user/my-repo"}}'

- Python 3.12+

- AgentField control plane (`af`)

- AI provider API key (Anthropic, OpenRouter, OpenAI, or Google)

python3.12 -m venv .venv

source .venv/bin/activate

python -m pip install --upgrade pip

python -m pip install -e ".[dev]"

af # starts AgentField control plane on :8080

python -m swe_af # registers node id "swe-planner"

# Default (uses Claude)

curl -X POST http://localhost:8080/api/v1/execute/async/swe-planner.build \

-H "Content-Type: application/json" \

-d @- <<'JSON'

{

"input": {

"goal": "Add JWT auth to all API endpoints",

"repo_url": "https://github.com/user/my-project"

}

}

JSON

# With open-source runtime + flat role map

curl -X POST http://localhost:8080/api/v1/execute/async/swe-planner.build \

-H "Content-Type: application/json" \

-d @- <<'JSON'

{

"input": {

"goal": "Add JWT auth",

"repo_url": "https://github.com/user/my-project",

"config": {

"runtime": "open_code",

"models": {

"default": "openrouter/minimax/minimax-m2.5"

}

}

}

}

JSON

# Local workspace mode (repo_path) + targeted role override

curl -X POST http://localhost:8080/api/v1/execute/async/swe-planner.build \

-H "Content-Type: application/json" \

-d @- <<'JSON'

{

"input": {

"goal": "Refactor and harden auth + billing flows",

"repo_path": "/path/to/repo",

"config": {

"runtime": "claude_code",

"models": {

"default": "sonnet",

"coder": "opus",

"qa": "opus"

},

"enable_learning": true

}

}

}

JSON

For OpenRouter with open_code, use model IDs in openrouter/<provider>/<model> format (for example openrouter/minimax/minimax-m2.5).
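
A small pre-flight check can catch a missing `openrouter/` prefix before a build is submitted. This is a sketch: `is_openrouter_model_id` is a hypothetical helper, and the three-segment rule is an assumption drawn from the format shown above rather than a documented validation rule.

```python
def is_openrouter_model_id(model_id: str) -> bool:
    """Check for the openrouter/<provider>/<model> shape (assumed rule)."""
    parts = model_id.split("/")
    return len(parts) == 3 and parts[0] == "openrouter" and all(parts)


print(is_openrouter_model_id("openrouter/minimax/minimax-m2.5"))  # True
print(is_openrouter_model_id("minimax/minimax-m2.5"))             # False
```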

- Architecture is generated and reviewed before coding starts

- Issues are dependency-sorted and run in parallel across isolated worktrees

- Each issue gets dedicated coder, tester, and reviewer passes

- Failed issues trigger advisor-driven adaptation (split, re-scope, or escalate)

- Escalations trigger replanning of the remaining DAG

- End result is merged, integration-tested, and verified against acceptance criteria

Typical runs spin up 400-500+ agent instances across planning, execution, QA, and verification. For larger DAGs and repeated adaptation/replanning cycles, SWE-AF can scale into the high hundreds to thousands of agent invocations in a single build.

95/100 with haiku and MiniMax: SWE-AF scored 95/100 with both Claude haiku-class routing ($20) and MiniMax M2.5 via open runtime ($6), outperforming Claude Code sonnet (73), Codex o3 (62), and Claude Code haiku (59) on the same prompt.

| Dimension | SWE-AF (haiku) | SWE-AF (MiniMax) | CC Sonnet | Codex (o3) | CC Haiku |

|---|---|---|---|---|---|

| Functional (30) | 30 | 30 | 30 | 30 | 30 |

| Structure (20) | 20 | 20 | 10 | 10 | 10 |

| Hygiene (20) | 20 | 20 | 16 | 10 | 7 |

| Git (15) | 15 | 15 | 2 | 2 | 2 |

| Quality (15) | 10 | 10 | 15 | 10 | 10 |

| Total | 95 | 95 | 73 | 62 | 59 |

| Cost | ~$20 | ~$6 | ? | ? | ? |

| Time | ~30-40 min | 43 min | ? | ? | ? |

Full benchmark details and reproduction

Same prompt tested across multiple agents. SWE-AF with Claude runtime (haiku-class model mapping) used 400+ agent instances; SWE-AF with MiniMax M2.5 via open runtime achieved identical quality at 70% cost savings.

Prompt used for all agents:

Build a Node.js CLI todo app with add, list, complete, and delete commands. Data should persist to a JSON file. Initialize git, write tests, and commit your work.

| Dimension | Points | What it measures |

|---|---|---|

| Functional | 30 | CLI behavior and passing tests |

| Structure | 20 | Modular source layout and test organization |

| Hygiene | 20 | `.gitignore`, clean status, no junk artifacts |

| Git | 15 | Commit discipline and message quality |

| Quality | 15 | Error handling, package metadata, README quality |
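
The totals in the scores table can be reproduced by summing the per-dimension points against this rubric. The snippet below is a quick consistency check; the numbers are copied from the tables above, and the structure and names are ours.

```python
# Maximum points per dimension, from the rubric table.
RUBRIC = {"Functional": 30, "Structure": 20, "Hygiene": 20, "Git": 15, "Quality": 15}

# Per-agent scores in rubric order, from the scores table.
SCORES = {
    "SWE-AF (haiku)": [30, 20, 20, 15, 10],
    "SWE-AF (MiniMax)": [30, 20, 20, 15, 10],
    "CC Sonnet": [30, 10, 16, 2, 15],
    "Codex (o3)": [30, 10, 10, 2, 10],
    "CC Haiku": [30, 10, 7, 2, 10],
}


def total(points: list[int]) -> int:
    """Sum the points after checking each is within its dimension's maximum."""
    for earned, maximum in zip(points, RUBRIC.values()):
        if not 0 <= earned <= maximum:
            raise ValueError(f"score {earned} outside 0..{maximum}")
    return sum(points)


totals = {agent: total(points) for agent, points in SCORES.items()}
print(totals)
```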

# SWE-AF (Claude runtime, haiku-class mapping) - $20, 30-40 min

curl -X POST http://localhost:8080/api/v1/execute/async/swe-planner.build \

-H "Content-Type: application/json" \

-d @- <<'JSON'

{

"input": {

"goal": "Build a Node.js CLI todo app with add, list, complete, and delete commands. Data should persist to a JSON file. Initialize git, write tests, and commit your work.",

"repo_path": "/tmp/swe-af-output",

"config": {

"runtime": "claude_code",

"models": {

"default": "haiku"

}

}

}

}

JSON

# SWE-AF (MiniMax M2.5 via OpenRouter runtime) - $6, 43 min

curl -X POST http://localhost:8080/api/v1/execute/async/swe-planner.build \

-H "Content-Type: application/json" \

-d @- <<'JSON'

{

"input": {

"goal": "Build a Node.js CLI todo app with add, list, complete, and delete commands. Data should persist to a JSON file. Initialize git, write tests, and commit your work.",

"repo_path": "/workspaces/todo-app-benchmark",

"config": {

"runtime": "open_code",

"models": {

"default": "openrouter/minimax/minimax-m2.5"

}

}

}

}

JSON

# Claude Code (haiku)

claude -p "Build a Node.js CLI todo app with add, list, complete, and delete commands. Data should persist to a JSON file. Initialize git, write tests, and commit your work." --model haiku --dangerously-skip-permissions

# Claude Code (sonnet)

claude -p "Build a Node.js CLI todo app with add, list, complete, and delete commands. Data should persist to a JSON file. Initialize git, write tests, and commit your work." --model sonnet --dangerously-skip-permissions

# Codex (gpt-5.3-codex)

codex exec "Build a Node.js CLI todo app with add, list, complete, and delete commands. Data should persist to a JSON file. Initialize git, write tests, and commit your work." --full-auto

MiniMax M2.5 Measured Metrics (Feb 2026):

- 99.22% code coverage (only agent with measured coverage)

- 4 custom error types (TodoError, ValidationError, NotFoundError, StorageError)

- 999 LOC, 4 modules, 74 tests, 9 commits

Production Quality Analysis: Objective comparison of measurable metrics across all agents.

Benchmark assets, logs, evaluator, and generated projects live in examples/agent-comparison/.

cp .env.example .env

# Add your API key: ANTHROPIC_API_KEY, OPENROUTER_API_KEY, OPENAI_API_KEY, or GOOGLE_API_KEY

# Optionally add GH_TOKEN for draft PR workflow

docker compose up -d

Submit a build:

# Default (Claude)

curl -X POST http://localhost:8080/api/v1/execute/async/swe-planner.build \

-H "Content-Type: application/json" \

-d @- <<'JSON'

{

"input": {

"goal": "Add JWT auth",

"repo_url": "https://github.com/user/my-repo"

}

}

JSON

# With open-source runtime (set OPENROUTER_API_KEY in .env)

curl -X POST http://localhost:8080/api/v1/execute/async/swe-planner.build \

-H "Content-Type: application/json" \

-d @- <<'JSON'

{

"input": {

"goal": "Add JWT auth",

"repo_url": "https://github.com/user/my-repo",

"config": {

"runtime": "open_code",

"models": {

"default": "openrouter/minimax/minimax-m2.5"

}

}

}

}

JSON

# Local workspace mode (repo_path)

curl -X POST http://localhost:8080/api/v1/execute/async/swe-planner.build \

-H "Content-Type: application/json" \

-d @- <<'JSON'

{

"input": {

"goal": "Add JWT auth",

"repo_path": "/workspaces/my-repo"

}

}

JSON

Scale workers:

docker compose up --scale swe-agent=3 -d

Use a host control plane instead of the Docker control-plane service:

docker compose -f docker-compose.local.yml up -d

Pass repo_url instead of repo_path to let SWE-AF clone and open a draft PR after execution.

curl -X POST http://localhost:8080/api/v1/execute/async/swe-planner.build \

-H "Content-Type: application/json" \

-d @- <<'JSON'

{

"input": {

"repo_url": "https://github.com/user/my-project",

"goal": "Add comprehensive test coverage",

"config": {

"runtime": "claude_code",

"models": {

"default": "sonnet",

"coder": "opus",

"qa": "opus"

}

}

}

}

JSON

Requirements:

- `GH_TOKEN` in `.env` with `repo` scope

- Repo access for that token

Agent endpoints

Core async endpoints (each returns an execution_id immediately):

# Full build: plan -> execute -> verify

POST /api/v1/execute/async/swe-planner.build

# Plan only

POST /api/v1/execute/async/swe-planner.plan

# Execute a prebuilt plan

POST /api/v1/execute/async/swe-planner.execute

# Resume after interruption

POST /api/v1/execute/async/swe-planner.resume_build

Monitoring:

curl http://localhost:8080/api/v1/executions/<execution_id>

Every specialist is also callable directly:

POST /api/v1/execute/async/swe-planner.<agent>
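
Because the async endpoints return immediately, a client usually polls the executions endpoint until the run finishes. The sketch below shows one way to do that. The response shape is an assumption: the `status` field and the `completed`/`failed` values are placeholders not confirmed by this README, so check an actual response and adjust. `wait_for_execution` and `fetch_execution` are hypothetical helper names.

```python
import json
import time
import urllib.request

BASE_URL = "http://localhost:8080"  # control plane address from the examples above


def fetch_execution(execution_id: str) -> dict:
    """GET the execution record from the monitoring endpoint."""
    url = f"{BASE_URL}/api/v1/executions/{execution_id}"
    with urllib.request.urlopen(url) as resp:
        return json.load(resp)


def wait_for_execution(execution_id, fetch=fetch_execution, interval_s=10.0,
                       max_polls=360, terminal=("completed", "failed")):
    """Poll until the record's `status` is terminal (assumed field and values)."""
    for _ in range(max_polls):
        record = fetch(execution_id)
        if record.get("status") in terminal:
            return record
        time.sleep(interval_s)
    raise TimeoutError(f"execution {execution_id} still running after {max_polls} polls")
```

The `fetch` parameter is injectable so the loop can be exercised without a running control plane.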

Agent execution flow

| Agent | In -> Out |
|---|---|
| `run_product_manager` | goal -> PRD |
| `run_architect` | PRD -> architecture |
| `run_tech_lead` | architecture -> review |
| `run_sprint_planner` | architecture -> issue DAG |
| `run_issue_writer` | issue spec -> detailed issue |
| `run_coder` | issue + worktree -> code + tests + commit |
| `run_qa` | worktree -> test results |
| `run_code_reviewer` | worktree -> quality/security review |
| `run_qa_synthesizer` | QA + review -> FIX / APPROVE / BLOCK |
| `run_issue_advisor` | failure context -> adapt / split / accept / escalate |
| `run_replanner` | build state + failures -> restructured plan |
| `run_merger` | branches -> merged output |
| `run_integration_tester` | merged repo -> integration results |
| `run_verifier` | repo + PRD -> acceptance pass/fail |
| `generate_fix_issues` | failed criteria -> targeted fix issues |
| `run_github_pr` | branch -> push + draft PR |

Configuration

Pass config to build or execute. Full schema: swe_af/execution/schemas.py

| Key | Default | Description |
|---|---|---|
| `runtime` | `"claude_code"` | Model runtime: `"claude_code"` or `"open_code"` |
| `models` | `null` | Flat role-model map (`default` + role keys below) |
| `max_coding_iterations` | `5` | Inner-loop retry budget |
| `max_advisor_invocations` | `2` | Middle-loop advisor budget |
| `max_replans` | `2` | Build-level replanning budget |
| `enable_issue_advisor` | `true` | Enable issue adaptation |
| `enable_replanning` | `true` | Enable global replanning |
| `enable_learning` | `false` | Enable cross-issue shared memory (continual learning) |
| `agent_timeout_seconds` | `2700` | Per-agent timeout |
| `agent_max_turns` | `150` | Tool-use turn budget |
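
To see what a partial `config` amounts to once defaults are applied, the table can be mirrored as a dictionary and overlaid with your overrides. This is an illustrative sketch only: the authoritative schema is `swe_af/execution/schemas.py`, the table may not list every key (for example `repos`), and `effective_config` is a hypothetical helper.

```python
# Defaults copied from the configuration table; not the real schema object.
DEFAULTS = {
    "runtime": "claude_code",
    "models": None,
    "max_coding_iterations": 5,
    "max_advisor_invocations": 2,
    "max_replans": 2,
    "enable_issue_advisor": True,
    "enable_replanning": True,
    "enable_learning": False,
    "agent_timeout_seconds": 2700,
    "agent_max_turns": 150,
}


def effective_config(overrides: dict) -> dict:
    """Overlay user overrides on the documented defaults (top-level keys only)."""
    return {**DEFAULTS, **overrides}


cfg = effective_config({"max_coding_iterations": 6, "enable_learning": True})
print(cfg["max_coding_iterations"], cfg["agent_timeout_seconds"])  # prints: 6 2700
```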

Model Role Keys

models supports:

- `default`

- `pm`, `architect`, `tech_lead`, `sprint_planner`

- `coder`, `qa`, `code_reviewer`, `qa_synthesizer`

- `replan`, `retry_advisor`, `issue_writer`, `issue_advisor`

- `verifier`, `git`, `merger`, `integration_tester`

Resolution order

runtime defaults < models.default < models.<role>
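
Read left to right, later entries win: a role-specific key overrides `models.default`, which overrides the runtime's built-in default. A minimal sketch of that lookup follows; `resolve_model` is a hypothetical helper, and the runtime default is passed in as a parameter because its actual value is not stated here.

```python
from typing import Optional


def resolve_model(role: str, models: Optional[dict], runtime_default: str) -> str:
    """Pick the model for a role: models.<role>, then models.default, then the runtime default."""
    models = models or {}
    return models.get(role) or models.get("default") or runtime_default


models = {"default": "sonnet", "coder": "opus"}
print(resolve_model("coder", models, "runtime-default"))     # opus (role key wins)
print(resolve_model("verifier", models, "runtime-default"))  # sonnet (falls back to default)
print(resolve_model("verifier", None, "runtime-default"))    # runtime-default
```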

Config examples

Minimal:

{

"runtime": "claude_code"

}

Fully customized:

{

"runtime": "open_code",

"models": {

"default": "minimax/minimax-m2.5",

"pm": "openrouter/qwen/qwen-2.5-72b-instruct",

"architect": "openrouter/qwen/qwen-2.5-72b-instruct",

"coder": "deepseek/deepseek-chat",

"qa": "deepseek/deepseek-chat",

"verifier": "openrouter/qwen/qwen-2.5-72b-instruct"

},

"max_coding_iterations": 6,

"enable_learning": true

}

Artifacts

.artifacts/

├── plan/ # PRD, architecture, issue specs

├── execution/ # checkpoints, per-issue logs, agent outputs

└── verification/ # acceptance criteria results

Development

make test

make check

make clean

make clean-examples

Security and Community

- Contribution guide: `docs/CONTRIBUTING.md`

- Code of conduct: `CODE_OF_CONDUCT.md`

- Security policy: `SECURITY.md`

- Changelog: `CHANGELOG.md`

- License: Apache-2.0

SWE-AF is built on AgentField as a first step from single-agent harnesses to autonomous software engineering factories.

Similar Open Source Tools

shodh-memory

Shodh-Memory is a cognitive memory system designed for AI agents to persist memory across sessions, learn from experience, and run entirely offline. It features Hebbian learning, activation decay, and semantic consolidation, packed into a single ~17MB binary. Users can deploy it on cloud, edge devices, or air-gapped systems to enhance the memory capabilities of AI agents.

mengram

Mengram is an AI memory tool that goes beyond storing facts by also capturing episodic events and procedural workflows that evolve from failures. It offers multi-user isolation, a knowledge graph, and integrates with various tools like LangChain and CrewAI. Users can add conversations to automatically extract facts, events, and workflows. Mengram provides a cognitive profile based on all memories and allows importing existing data from tools like ChatGPT and Obsidian. It offers REST API for adding and searching memories, along with smart triggers and memory agents for personalized experiences. The tool is free for commercial use under the Apache 2.0 license.

agent-security-scanner-mcp

The 'agent-security-scanner-mcp' is a security scanner designed for AI coding agents and autonomous assistants. It scans code for vulnerabilities, detects hallucinated packages, and blocks prompt injection. The tool supports two versions: ProofLayer (lightweight) and Full Version (advanced) with different features and capabilities. It provides various tools for scanning code, fixing vulnerabilities, checking package legitimacy, and detecting prompt injection. The scanner also includes specific tools for scanning MCP servers, OpenClaw skills, and integrating with OpenClaw for autonomous AI threat detection. The tool utilizes AST analysis, taint tracking, and cross-file analysis to provide accurate security assessments. It supports multiple languages and ecosystems, offering comprehensive security coverage for various development environments.

Code

A3S Code is an embeddable AI coding agent framework in Rust that allows users to build agents capable of reading, writing, and executing code with tool access, planning, and safety controls. It is production-ready with features like permission system, HITL confirmation, skill-based tool restrictions, and error recovery. The framework is extensible with 19 trait-based extension points and supports lane-based priority queue for scalable multi-machine task distribution.

zeroclaw

ZeroClaw is a fast, small, and fully autonomous AI assistant infrastructure built with Rust. It features a lean runtime, cost-efficient deployment, fast cold starts, and a portable architecture. It is secure by design, fully swappable, and works with OpenAI-compatible providers. The tool is designed for low-cost boards and small cloud instances, with a memory footprint of less than 5MB. It is suitable for deploying AI assistants and for setups where providers, channels, and tools need to be swapped freely.

flyto-core

Flyto-core is a powerful Python library for geospatial analysis and visualization. It provides a wide range of tools for working with geographic data, including support for various file formats, spatial operations, and interactive mapping. With Flyto-core, users can easily load, manipulate, and visualize spatial data to gain insights and make informed decisions. Whether you are a GIS professional, a data scientist, or a developer, Flyto-core offers a versatile and user-friendly solution for geospatial tasks.

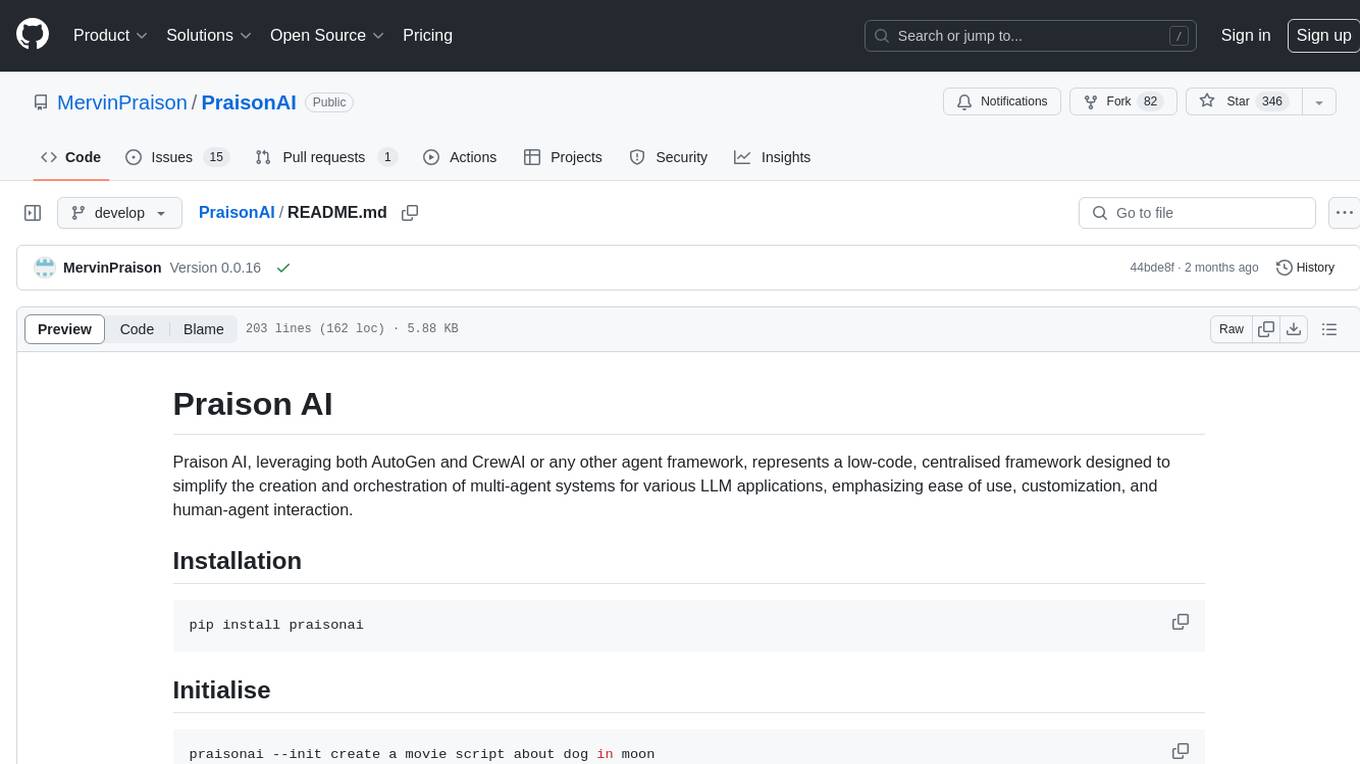

PraisonAI

Praison AI is a low-code, centralised framework that simplifies the creation and orchestration of multi-agent systems for various LLM applications. It emphasizes ease of use, customization, and human-agent interaction. The tool leverages AutoGen and CrewAI frameworks to facilitate the development of AI-generated scripts and movie concepts. Users can easily create, run, test, and deploy agents for scriptwriting and movie concept development. Praison AI also provides options for full automatic mode and integration with OpenAI models for enhanced AI capabilities.

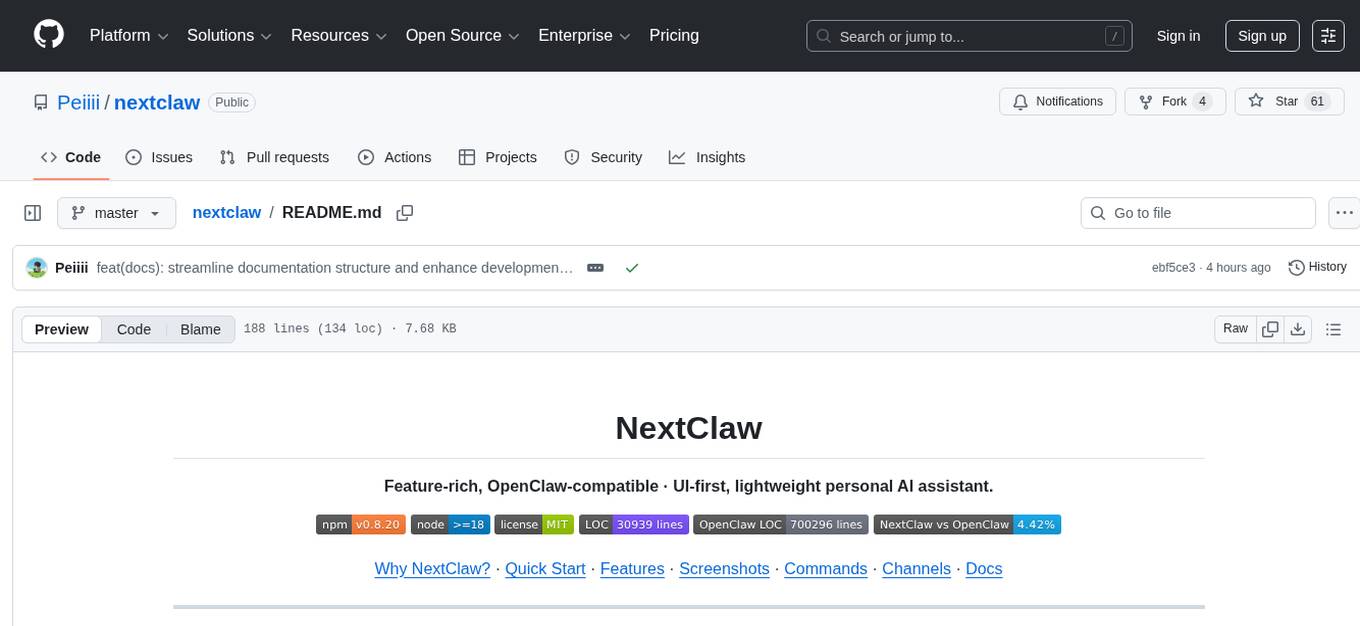

nextclaw

NextClaw is a feature-rich, OpenClaw-compatible personal AI assistant designed for quick trials, secondary machines, or anyone who wants multi-channel + multi-provider capabilities with low maintenance overhead. It offers a UI-first workflow, lightweight codebase, and easy configuration through a built-in UI. The tool supports various providers like OpenRouter, OpenAI, MiniMax, Moonshot, and more, along with channels such as Telegram, Discord, WhatsApp, and others. Users can perform tasks like web search, command execution, memory management, and scheduling with Cron + Heartbeat. NextClaw aims to provide a user-friendly experience with minimal setup and maintenance requirements.

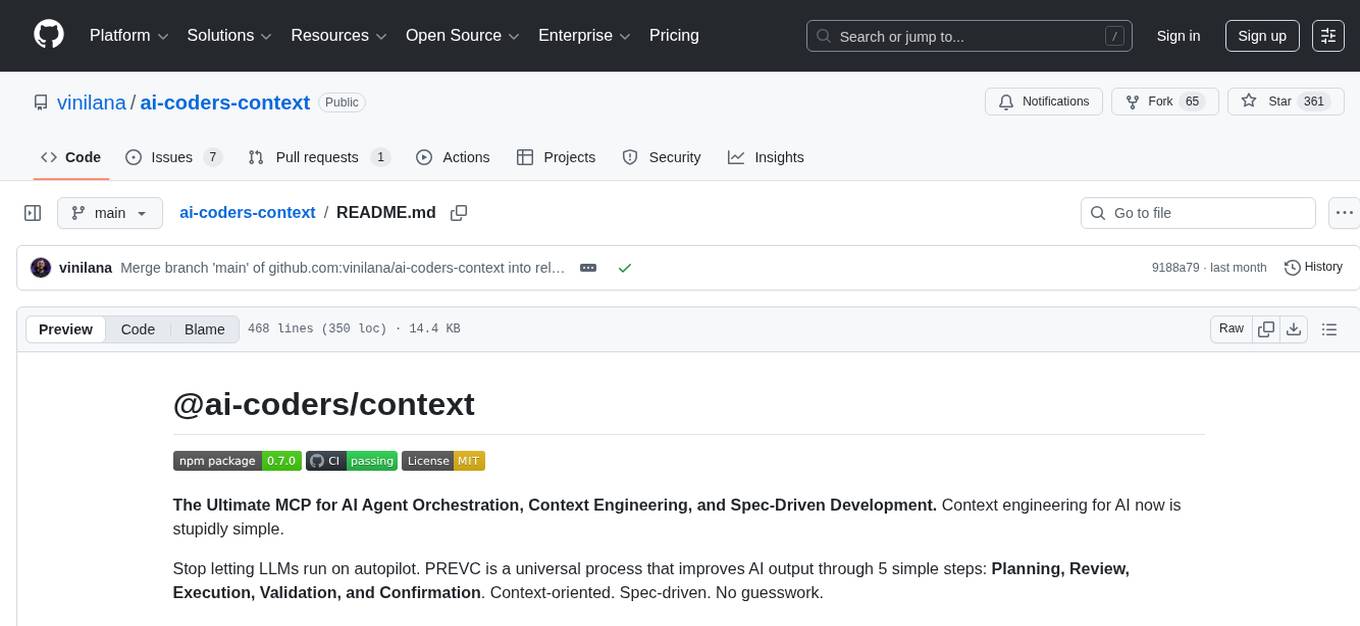

ai-coders-context

The @ai-coders/context repository provides the Ultimate MCP for AI Agent Orchestration, Context Engineering, and Spec-Driven Development. It simplifies context engineering for AI by offering a universal process called PREVC, which consists of Planning, Review, Execution, Validation, and Confirmation steps. The tool aims to address the problem of context fragmentation by introducing a single `.context/` directory that works universally across different tools. It enables users to create structured documentation, generate agent playbooks, manage workflows, provide on-demand expertise, and sync across various AI tools. The tool follows a structured, spec-driven development approach to improve AI output quality and ensure reproducible results across projects.

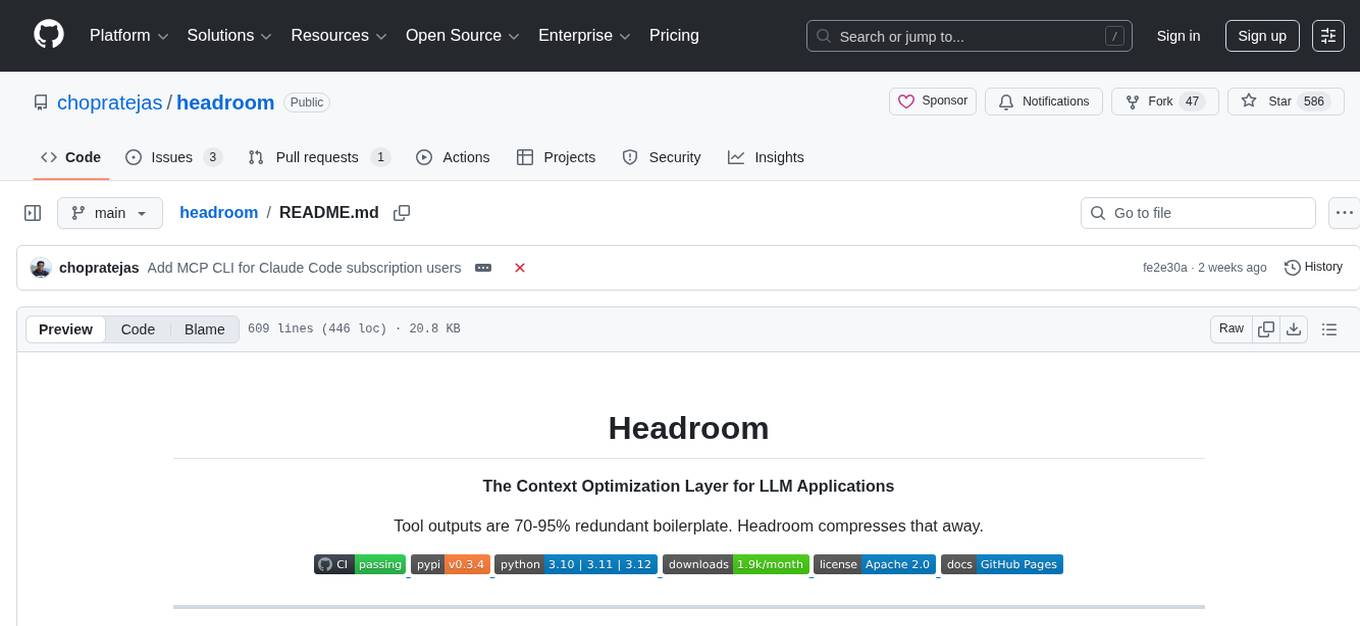

headroom

Headroom is a tool designed to optimize the context layer for Large Language Models (LLMs) applications by compressing redundant boilerplate outputs. It intercepts context from tool outputs, logs, search results, and intermediate agent steps, stabilizes dynamic content like timestamps and UUIDs, removes low-signal content, and preserves original data for retrieval only when needed by the LLM. It ensures provider caching works efficiently by aligning prompts for cache hits. The tool works as a transparent proxy with zero code changes, offering significant savings in token count and enabling reversible compression for various types of content like code, logs, JSON, and images. Headroom integrates seamlessly with frameworks like LangChain, Agno, and MCP, supporting features like memory, retrievers, agents, and more.

AnyCrawl

AnyCrawl is a high-performance crawling and scraping toolkit designed for SERP crawling, web scraping, site crawling, and batch tasks. It offers multi-threading and multi-process capabilities for high performance. The tool also provides AI extraction for structured data extraction from pages, making it LLM-friendly and easy to integrate and use.

LocalAGI

LocalAGI is a powerful, self-hostable AI Agent platform that allows you to design AI automations without writing code. It provides a complete drop-in replacement for OpenAI's Responses APIs with advanced agentic capabilities. With LocalAGI, you can create customizable AI assistants, automations, chat bots, and agents that run 100% locally, without the need for cloud services or API keys. The platform offers features like no-code agents, web-based interface, advanced agent teaming, connectors for various platforms, comprehensive REST API, short & long-term memory capabilities, planning & reasoning, periodic tasks scheduling, memory management, multimodal support, extensible custom actions, fully customizable models, observability, and more.

Conduit

Conduit is a unified Swift 6.2 SDK for local and cloud LLM inference, providing a single Swift-native API that can target Anthropic, OpenRouter, Ollama, MLX, HuggingFace, and Apple’s Foundation Models without rewriting your prompt pipeline. It allows switching between local, cloud, and system providers with minimal code changes, supports downloading models from HuggingFace Hub for local MLX inference, generates Swift types directly from LLM responses, offers privacy-first options for on-device running, and is built with Swift 6.2 concurrency features like actors, Sendable types, and AsyncSequence.

skylos

Skylos is a privacy-first SAST tool for Python, TypeScript, and Go that bridges the gap between traditional static analysis and AI agents. It detects dead code, security vulnerabilities (SQLi, SSRF, Secrets), and code quality issues with high precision. Skylos uses a hybrid engine (AST + optional Local/Cloud LLM) to eliminate false positives, verify via runtime, find logic bugs, and provide context-aware audits. It offers automated fixes, end-to-end remediation, and 100% local privacy. The tool supports taint analysis, secrets detection, vulnerability checks, dead code detection and cleanup, agentic AI and hybrid analysis, codebase optimization, operational governance, and runtime verification.

sparrow

Sparrow is an innovative open-source solution for efficient data extraction and processing from various documents and images. It seamlessly handles forms, invoices, receipts, and other unstructured data sources. Sparrow stands out with its modular architecture, offering independent services and pipelines all optimized for robust performance. One of Sparrow's critical features is its pluggable architecture: you can easily integrate and run data extraction pipelines using tools and frameworks like LlamaIndex, Haystack, or Unstructured. Sparrow enables local LLM data extraction pipelines through Ollama or Apple MLX. Sparrow also provides an API that processes and transforms your data into structured output, ready to be integrated with custom workflows. With Sparrow Agents you can build independent LLM agents and invoke them from your system through the API.

**List of available agents:**

- **llamaindex** - RAG pipeline with LlamaIndex for PDF processing

- **vllamaindex** - RAG pipeline with LlamaIndex multimodal for image processing

- **vprocessor** - RAG pipeline with OCR and LlamaIndex for image processing

- **haystack** - RAG pipeline with Haystack for PDF processing

- **fcall** - Function call pipeline

- **unstructured-light** - RAG pipeline with Unstructured and LangChain, supports PDF and image processing

- **unstructured** - RAG pipeline with Weaviate vector DB query, Unstructured and LangChain, supports PDF and image processing

- **instructor** - RAG pipeline with Unstructured and Instructor libraries, supports PDF and image processing. Works great for JSON response generation

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud-native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise-level infrastructure that can power any LLM production use case. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers

- Track LLM usage on a per-user and per-organization basis

- Block or redact requests containing PII

- Improve LLM reliability with failovers, retries and caching

- Distribute API keys with rate limits and cost limits for internal development/production use cases

- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.