blog

🇻🇳 VNTechies Dev Blog - Kho tài nguyên và mã nguồn với sứ mệnh đào tạo kiến thức, định hướng nghề nghiệp cho cộng đồng Cloud ☁️ DevOps 🚀 và AI

Stars: 74

VNTechies Blog is a platform dedicated to sharing open-source resources and providing knowledge and career guidance for the Cloud and DevOps community. The blog encourages contributions and support through donations. All content on the blog and repository is licensed under Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

README:

🇻🇳 VNTechies Dev Blog - Kho tài nguyên mã nguồn mở với sứ mệnh đào tạo kiến thức, định hướng nghề nghiệp cho cộng đồng Cloud ☁️ DevOps 🚀

Cảm ơn các đồng chí 🔥

Cách bạn có thể đóng góp cho dự án này.

Nếu bạn thấy dự án này hữu ích, xin hãy ủng hộ VNTechies một ly cà phê. Toàn bộ các đóng góp sẽ được sử dụng để duy trì dự án này.

Tất cả các bài viết trên VNTechies Dev Blog và các file trong repository này đều có lincense Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

License này chỉ cho phép người khác có thể thực hiện đăng tải lại, chỉnh sửa và xây dựng dựa trên nội dung gốc cho mục đích phi thương mại kèm theo điều kiện ghi công cho tác giả chẳng hạn như: nêu tên tác giả, dẫn link tới tác phẩm gốc hoặc theo yêu cầu riêng của tác giả; Ngoài ra, các bản phân phối, sửa đổi bắt buộc phải gắn cùng license với tác phẩm gốc.

Template Tailwind Nextjs Starter Blog by Timothy Lin

Copyright (c) 2023 VNTechies

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for blog

Similar Open Source Tools

blog

VNTechies Blog is a platform dedicated to sharing open-source resources and providing knowledge and career guidance for the Cloud and DevOps community. The blog encourages contributions and support through donations. All content on the blog and repository is licensed under Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

Ai-Hoshino

Ai Hoshino - MD is a WhatsApp bot tool with features like voice and text interaction, group configuration, anti-delete, anti-link, personalized welcome messages, chatbot functionality, sticker creation, sub-bot integration, RPG game, YouTube music and video downloads, and more. The tool is actively maintained by Starlights Team and offers a range of functionalities for WhatsApp users.

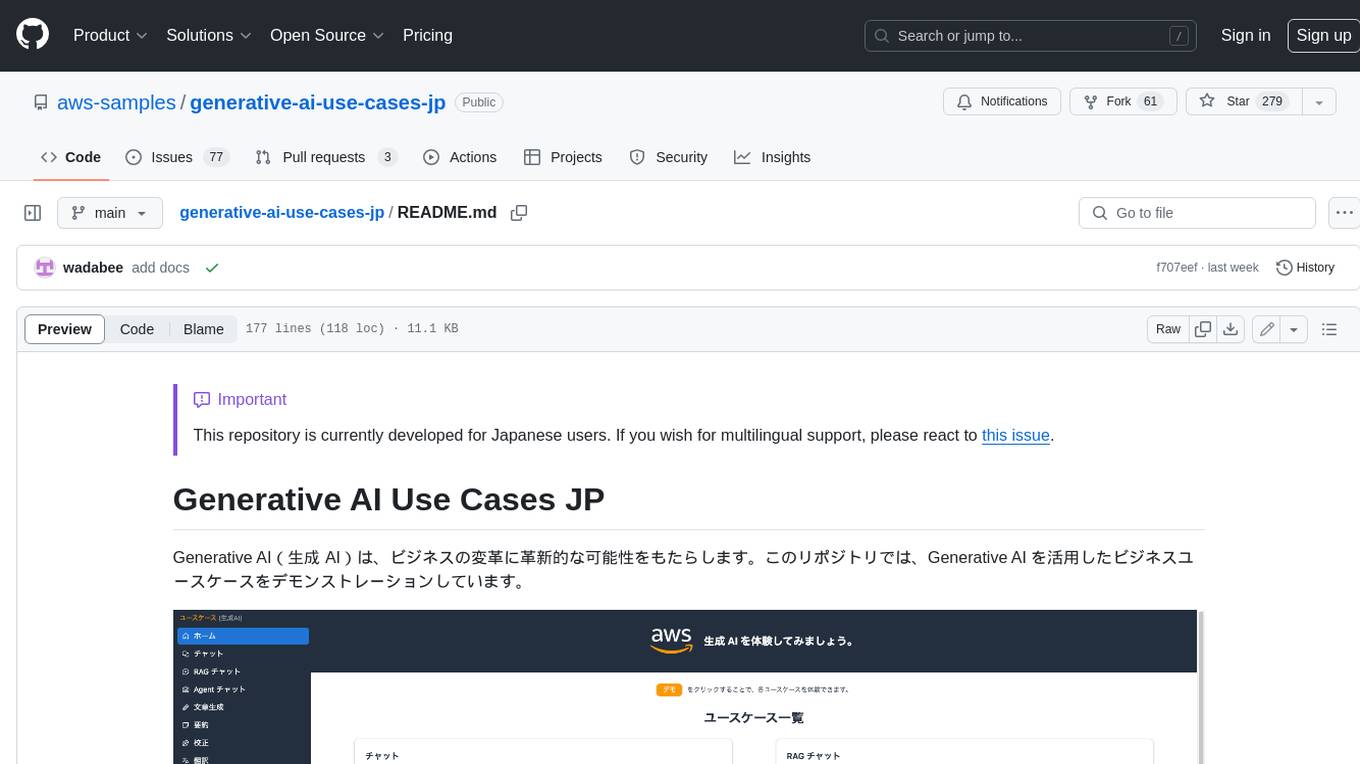

generative-ai-use-cases-jp

Generative AI (生成 AI) brings revolutionary potential to transform businesses. This repository demonstrates business use cases leveraging Generative AI.

Thor

Thor is a powerful AI model management tool designed for unified management and usage of various AI models. It offers features such as user, channel, and token management, data statistics preview, log viewing, system settings, external chat link integration, and Alipay account balance purchase. Thor supports multiple AI models including OpenAI, Kimi, Starfire, Claudia, Zhilu AI, Ollama, Tongyi Qianwen, AzureOpenAI, and Tencent Hybrid models. It also supports various databases like SqlServer, PostgreSql, Sqlite, and MySql, allowing users to choose the appropriate database based on their needs.

mangaba_ai

Mangaba AI is a minimalist repository for creating intelligent and versatile AI agents with A2A (Agent-to-Agent) and MCP (Model Context Protocol) protocols. It supports any AI provider, facilitates communication between agents, manages context effectively, and offers integrated functionalities like chat, analysis, and translation. The setup is straightforward with only 2 steps to get started. The repository includes scripts for automated configuration, manual setup, and environment validation. Users can easily chat with context, analyze text, translate, and interact with multiple agents using A2A protocol. The MCP protocol handles advanced context management automatically, categorizing context types and priorities. The repository also provides examples, documentation, and a comprehensive wiki in Brazilian Portuguese for beginners and developers.

md_design

Nakidka is a tool for 1C:Enterprise 8 that allows for quick creation of forms based on text descriptions. It uses a simple and understandable syntax similar to Markdown, and also supports visual design of interface elements.

goodsKill

The 'goodsKill' project aims to build a complete project framework integrating good technologies and development techniques, mainly focusing on backend technologies. It provides a simulated flash sale project with unified flash sale simulation request interface. The project uses SpringMVC + Mybatis for the overall technology stack, Dubbo3.x for service intercommunication, Nacos for service registration and discovery, and Spring State Machine for data state transitions. It also integrates Spring AI service for simulating flash sale actions.

ChatGPT-On-CS

This project is an intelligent dialogue customer service tool based on a large model, which supports access to platforms such as WeChat, Qianniu, Bilibili, Douyin Enterprise, Douyin, Doudian, Weibo chat, Xiaohongshu professional account operation, Xiaohongshu, Zhihu, etc. You can choose GPT3.5/GPT4.0/ Lazy Treasure Box (more platforms will be supported in the future), which can process text, voice and pictures, and access external resources such as operating systems and the Internet through plug-ins, and support enterprise AI applications customized based on their own knowledge base.

aios-core

Synkra AIOS is a Framework for Universal AI Agents powered by AI. It is founded on Agent-Driven Agile Development, offering revolutionary capabilities for AI-driven development and more. Transform any domain with specialized AI expertise: software development, entertainment, creative writing, business strategy, personal well-being, and more. The framework follows a clear hierarchy of priorities: CLI First, Observability Second, UI Third. The CLI is where intelligence resides, all execution, decisions, and automation happen there. Observability is for observing and monitoring what happens in the CLI in real-time. The UI is for specific management and visualizations when necessary. The two key innovations of Synkra AIOS are Planejamento Agêntico and Desenvolvimento Contextualizado por Engenharia, which eliminate inconsistency in planning and loss of context in AI-assisted development.

nonebot_plugin_naturel_gpt

NoneBotPluginNaturelGPT is a plugin for NoneBot that enhances the GPT chat AI with more human-like characteristics. It supports multiple customizable personalities, preset sharing, and various features to improve chat interactions. Users can create personalized chat experiences, enable context-aware conversations, and benefit from features like long-term memory, user-specific impressions, and data persistence. The plugin also allows for personality switching, custom trigger words, content blocking, and more. It offers extensive capabilities for enhancing chat interactions and enabling AI to actively participate in conversations.

siliconflow-plugin

SiliconFlow-PLUGIN (SF-PLUGIN) is a versatile AI integration plugin for the Yunzai robot framework, supporting multiple AI services and models. It includes features such as AI drawing, intelligent conversations, real-time search, text-to-speech synthesis, resource management, link handling, video parsing, group functions, WebSocket support, and Jimeng-Api interface. The plugin offers functionalities for drawing, conversation, search, image link retrieval, video parsing, group interactions, and more, enhancing the capabilities of the Yunzai framework.

FastGPT

FastGPT is a knowledge base Q&A system based on the LLM large language model, providing out-of-the-box data processing, model calling and other capabilities. At the same time, you can use Flow to visually arrange workflows to achieve complex Q&A scenarios!

forksilly.doc

ForkSilly.doc is a repository mainly for storing documentation of ForkSilly, an Android project developed using React Native/Expo. It is suitable for users with experience in SillyTavern. The project is self-shared and may not accept feature requests. It is designed for pure text cards, illustration cards, and Stable Diffusion text-image. It is compatible with SillyTavern V2 character cards, world books, regex, presets, and chat records. Users can import and export at any time. The tool supports various customization options such as chat font, background image, and quick toggle of preset entries. It also allows the use of various OpenAI-compatible APIs and provides built-in storage management features. Users can utilize text-image functionality and access free text-image services like pollinations.ai. Additionally, it supports Stable Diffusion text-image features and integration with silicon-based flow and Gemini embedding models. The tool does not support TTS or connecting to NAI.

Tianji

Tianji is a free, non-commercial artificial intelligence system developed by SocialAI for tasks involving worldly wisdom, such as etiquette, hospitality, gifting, wishes, communication, awkwardness resolution, and conflict handling. It includes four main technical routes: pure prompt, Agent architecture, knowledge base, and model training. Users can find corresponding source code for these routes in the tianji directory to replicate their own vertical domain AI applications. The project aims to accelerate the penetration of AI into various fields and enhance AI's core competencies.

nekro-agent

Nekro Agent is an AI chat plugin and proxy execution bot that is highly scalable, offers high freedom, and has minimal deployment requirements. It features context-aware chat for group/private chats, custom character settings, sandboxed execution environment, interactive image resource handling, customizable extension development interface, easy deployment with docker-compose, integration with Stable Diffusion for AI drawing capabilities, support for various file types interaction, hot configuration updates and command control, native multimodal understanding, visual application management control panel, CoT (Chain of Thought) support, self-triggered timers and holiday greetings, event notification understanding, and more. It allows for third-party extensions and AI-generated extensions, and includes features like automatic context trigger based on LLM, and a variety of basic commands for bot administrators.

For similar tasks

airswap-web

AirSwap Web is a peer-to-peer web frontend for AirSwap, an open developer community focused on decentralized trading systems. The repository utilizes React and NodeJS v16, with Yarn as the package manager. Designers and developers can contribute and earn through this platform, with detailed instructions available in the CONTRIBUTING file and the Discord server. The tool aims to facilitate decentralized trading and foster collaboration within the community.

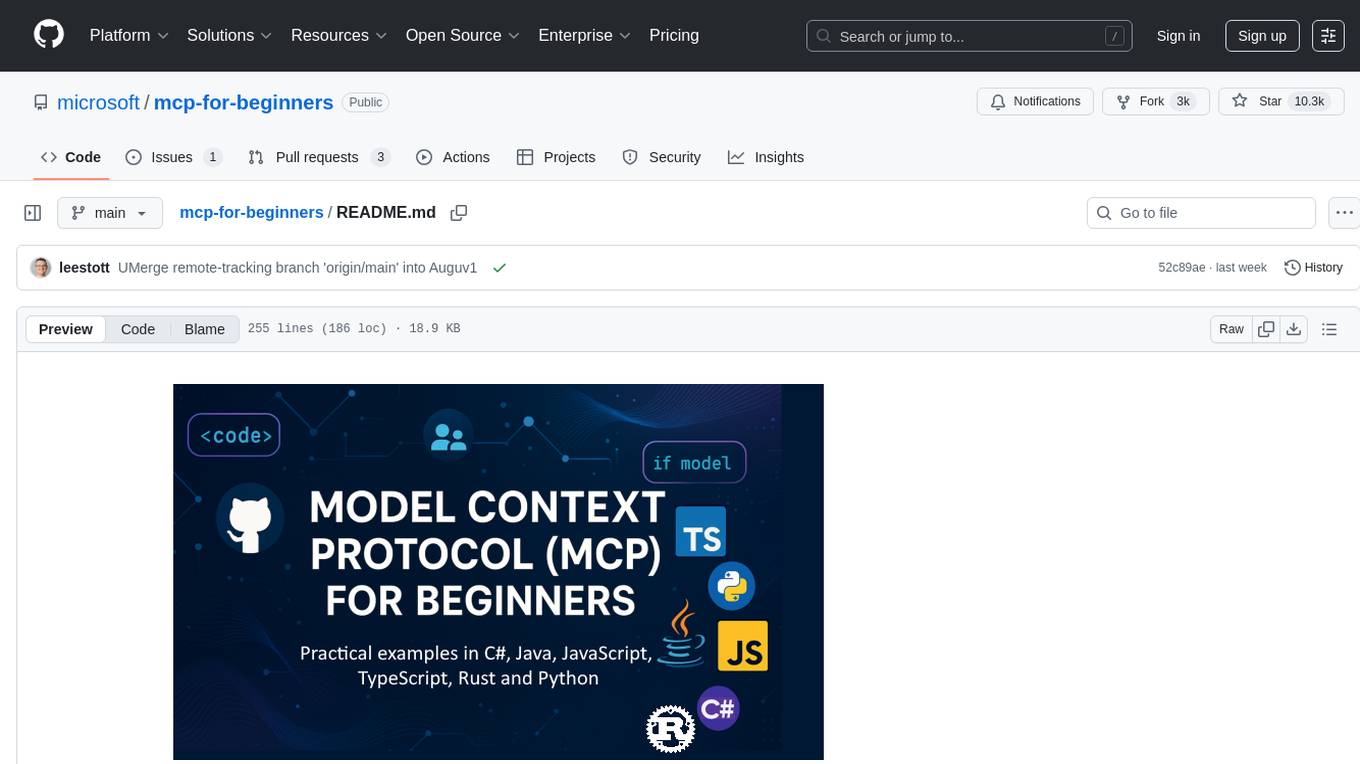

mcp-for-beginners

The Model Context Protocol (MCP) Curriculum for Beginners is an open-source framework designed to standardize interactions between AI models and client applications. It offers a structured learning path with practical coding examples and real-world use cases in popular programming languages like C#, Java, JavaScript, Rust, Python, and TypeScript. Whether you're an AI developer, system architect, or software engineer, this guide provides comprehensive resources for mastering MCP fundamentals and implementation strategies.

blog

VNTechies Blog is a platform dedicated to sharing open-source resources and providing knowledge and career guidance for the Cloud and DevOps community. The blog encourages contributions and support through donations. All content on the blog and repository is licensed under Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

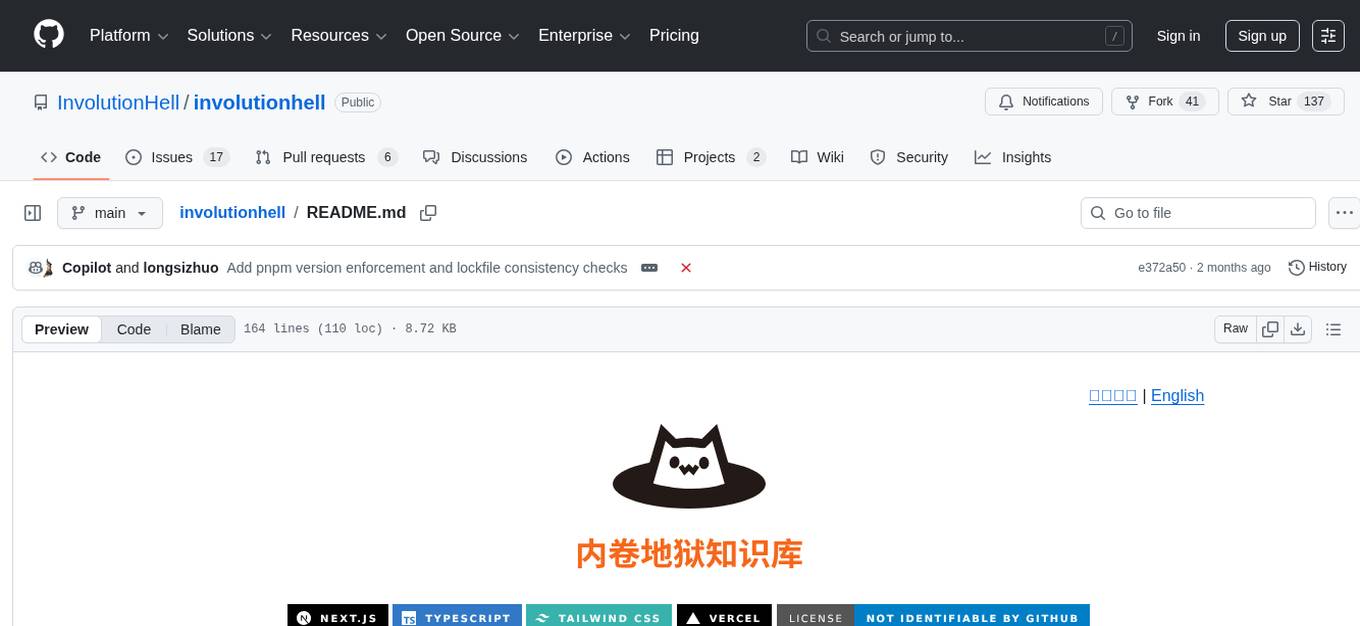

involutionhell

Involution Hell is a non-profit, open-source platform designed to help students share and access study materials, course notes, and project experiences. The platform features a high-performance site built with Next.js App Router and Fumadocs UI, supports multiple languages and a 'file as navigation' directory structure, and automates deployment, image migration, and content validation to reduce maintenance costs.

For similar jobs

AirGo

AirGo is a front and rear end separation, multi user, multi protocol proxy service management system, simple and easy to use. It supports vless, vmess, shadowsocks, and hysteria2.

mosec

Mosec is a high-performance and flexible model serving framework for building ML model-enabled backend and microservices. It bridges the gap between any machine learning models you just trained and the efficient online service API. * **Highly performant** : web layer and task coordination built with Rust 🦀, which offers blazing speed in addition to efficient CPU utilization powered by async I/O * **Ease of use** : user interface purely in Python 🐍, by which users can serve their models in an ML framework-agnostic manner using the same code as they do for offline testing * **Dynamic batching** : aggregate requests from different users for batched inference and distribute results back * **Pipelined stages** : spawn multiple processes for pipelined stages to handle CPU/GPU/IO mixed workloads * **Cloud friendly** : designed to run in the cloud, with the model warmup, graceful shutdown, and Prometheus monitoring metrics, easily managed by Kubernetes or any container orchestration systems * **Do one thing well** : focus on the online serving part, users can pay attention to the model optimization and business logic

llm-code-interpreter

The 'llm-code-interpreter' repository is a deprecated plugin that provides a code interpreter on steroids for ChatGPT by E2B. It gives ChatGPT access to a sandboxed cloud environment with capabilities like running any code, accessing Linux OS, installing programs, using filesystem, running processes, and accessing the internet. The plugin exposes commands to run shell commands, read files, and write files, enabling various possibilities such as running different languages, installing programs, starting servers, deploying websites, and more. It is powered by the E2B API and is designed for agents to freely experiment within a sandboxed environment.

pezzo

Pezzo is a fully cloud-native and open-source LLMOps platform that allows users to observe and monitor AI operations, troubleshoot issues, save costs and latency, collaborate, manage prompts, and deliver AI changes instantly. It supports various clients for prompt management, observability, and caching. Users can run the full Pezzo stack locally using Docker Compose, with prerequisites including Node.js 18+, Docker, and a GraphQL Language Feature Support VSCode Extension. Contributions are welcome, and the source code is available under the Apache 2.0 License.

learn-generative-ai

Learn Cloud Applied Generative AI Engineering (GenEng) is a course focusing on the application of generative AI technologies in various industries. The course covers topics such as the economic impact of generative AI, the role of developers in adopting and integrating generative AI technologies, and the future trends in generative AI. Students will learn about tools like OpenAI API, LangChain, and Pinecone, and how to build and deploy Large Language Models (LLMs) for different applications. The course also explores the convergence of generative AI with Web 3.0 and its potential implications for decentralized intelligence.

gcloud-aio

This repository contains shared codebase for two projects: gcloud-aio and gcloud-rest. gcloud-aio is built for Python 3's asyncio, while gcloud-rest is a threadsafe requests-based implementation. It provides clients for Google Cloud services like Auth, BigQuery, Datastore, KMS, PubSub, Storage, and Task Queue. Users can install the library using pip and refer to the documentation for usage details. Developers can contribute to the project by following the contribution guide.

fluid

Fluid is an open source Kubernetes-native Distributed Dataset Orchestrator and Accelerator for data-intensive applications, such as big data and AI applications. It implements dataset abstraction, scalable cache runtime, automated data operations, elasticity and scheduling, and is runtime platform agnostic. Key concepts include Dataset and Runtime. Prerequisites include Kubernetes version > 1.16, Golang 1.18+, and Helm 3. The tool offers features like accelerating remote file accessing, machine learning, accelerating PVC, preloading dataset, and on-the-fly dataset cache scaling. Contributions are welcomed, and the project is under the Apache 2.0 license with a vendor-neutral approach.

aiges

AIGES is a core component of the Athena Serving Framework, designed as a universal encapsulation tool for AI developers to deploy AI algorithm models and engines quickly. By integrating AIGES, you can deploy AI algorithm models and engines rapidly and host them on the Athena Serving Framework, utilizing supporting auxiliary systems for networking, distribution strategies, data processing, etc. The Athena Serving Framework aims to accelerate the cloud service of AI algorithm models and engines, providing multiple guarantees for cloud service stability through cloud-native architecture. You can efficiently and securely deploy, upgrade, scale, operate, and monitor models and engines without focusing on underlying infrastructure and service-related development, governance, and operations.