zeptoclaw

Final form of claw family (Wannabe)

Stars: 307

ZeptoClaw is an ultra-lightweight personal AI assistant that offers a compact Rust binary with 29 tools, 8 channels, 9 providers, and container isolation. It focuses on integrations, security, and size discipline without compromising on performance. With features like container isolation, prompt injection detection, secret leak scanner, policy engine, input validator, and more, ZeptoClaw ensures secure AI agent execution. It supports migration from OpenClaw, deployment on various platforms, and configuration of LLM providers. ZeptoClaw is designed for efficient AI assistance with minimal resource consumption and maximum security.

README:

Ultra-lightweight personal AI assistant.

```
$ zeptoclaw agent --stream -m "Analyze our API for security issues"

🤖 ZeptoClaw — Streaming analysis...

  [web_fetch] Fetching API docs...
  [shell] Running integration tests...
  [longterm_memory] Storing findings...

→ Found 12 endpoints, 3 missing auth headers, 1 open redirect
→ Saved findings to long-term memory under "api-audit"

✓ Analysis complete in 4.2s
```

We studied the best AI assistants — and their tradeoffs. OpenClaw's integrations without the 100MB. NanoClaw's security without the TypeScript bundle. PicoClaw's size without the bare-bones feature set. One Rust binary with 29 tools, 8 channels, 9 providers, and container isolation.

We studied what works — and what doesn't.

OpenClaw proved an AI assistant can handle 12 channels and 100+ skills. But it costs 100MB and 400K lines. NanoClaw proved security-first is possible. But it's still 50MB of TypeScript. PicoClaw proved AI assistants can run on $10 hardware. But it stripped out everything to get there.

ZeptoClaw took notes. The integrations, the security, the size discipline — without the tradeoffs each one made. One 4MB Rust binary that starts in 50ms, uses 6MB of RAM, and ships with container isolation, prompt injection detection, and a circuit breaker provider stack.

AI agents execute code. Most frameworks trust that nothing will go wrong.

The OpenClaw ecosystem has seen CVE-2026-25253 (CVSS 8.8 — cross-site WebSocket hijacking to RCE), ClawHavoc (341 malicious skills, 9,000+ compromised installations), and 42,000 exposed instances with auth bypass. ZeptoClaw was built with this threat model in mind.

| Layer | What it does |
|---|---|
| Container Isolation | Every shell command runs in Docker or Apple Container, not on your host |
| Prompt Injection Detection | Aho-Corasick multi-pattern matcher (17 patterns) + 4 regex rules |
| Secret Leak Scanner | 22 regex patterns catch API keys, tokens, and credentials before they reach the LLM |
| Policy Engine | 7 rules blocking system file access, crypto key extraction, SQL injection, encoded exploits |
| Input Validator | 100KB limit, null byte detection, whitespace ratio analysis, repetition detection |
| Shell Blocklist | Regex patterns blocking reverse shells, rm -rf, privilege escalation |
| SSRF Prevention | DNS pinning, private IP blocking, scheme validation for all web requests |
| Tool Approval Gate | Require explicit confirmation before executing dangerous tools |

Every layer runs by default. No flags to remember, no config to enable.
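To make the Input Validator row concrete, here is a minimal Python sketch of the four checks it names: size limit, null bytes, whitespace ratio, and repetition. Only the 100KB limit comes from the table above. The function name `validate_input`, the 0.9 whitespace threshold, and the run-length repetition heuristic are assumptions for illustration and are not taken from ZeptoClaw's Rust source.

```python
MAX_BYTES = 100 * 1024        # 100KB limit, from the table above
MAX_WHITESPACE_RATIO = 0.9    # assumed threshold
MAX_REPEAT_RUN = 1000         # assumed: longest allowed run of one repeated character


def _longest_run(text: str) -> int:
    """Length of the longest run of a single repeated character."""
    longest = current = 0
    previous = None
    for ch in text:
        current = current + 1 if ch == previous else 1
        previous = ch
        longest = max(longest, current)
    return longest


def validate_input(text: str) -> list[str]:
    """Return the list of problems found; an empty list means the input passed."""
    problems = []
    if len(text.encode("utf-8")) > MAX_BYTES:
        problems.append("too_large")
    if "\x00" in text:
        problems.append("null_byte")
    if text:
        whitespace = sum(1 for ch in text if ch.isspace())
        if whitespace / len(text) > MAX_WHITESPACE_RATIO:
            problems.append("mostly_whitespace")
    if _longest_run(text) > MAX_REPEAT_RUN:
        problems.append("repetition")
    return problems
```

Returning a list of problem names instead of a single boolean keeps each check independent, so a caller can log every reason an input was rejected.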

```bash
# One-liner (macOS / Linux)
curl -fsSL https://raw.githubusercontent.com/qhkm/zeptoclaw/main/install.sh | sh

# Homebrew
brew install qhkm/tap/zeptoclaw

# Docker
docker pull ghcr.io/qhkm/zeptoclaw:latest

# Build from source
cargo install zeptoclaw --git https://github.com/qhkm/zeptoclaw
```

```bash
# Interactive setup (walks you through API keys, channels, workspace)
zeptoclaw onboard

# Talk to your agent
zeptoclaw agent -m "Hello, set up my workspace"

# Stream responses token-by-token
zeptoclaw agent --stream -m "Explain async Rust"

# Use a built-in template
zeptoclaw agent --template researcher -m "Search for Rust agent frameworks"

# Process prompts in batch
zeptoclaw batch --input prompts.txt --output results.jsonl

# Start as a Telegram/Slack/Discord/Webhook gateway
zeptoclaw gateway

# With full container isolation per request
zeptoclaw gateway --containerized
```

Already running OpenClaw? ZeptoClaw can import your config and skills in one command.

```bash
# Auto-detect OpenClaw installation (~/.openclaw, ~/.clawdbot, ~/.moldbot)
zeptoclaw migrate

# Specify path manually
zeptoclaw migrate --from /path/to/openclaw

# Preview what would be migrated (no files written)
zeptoclaw migrate --dry-run

# Non-interactive (skip confirmation prompts)
zeptoclaw migrate --yes
```

The migration command:

- Converts provider API keys, model settings, and channel configs
- Copies skills to `~/.zeptoclaw/skills/`
- Backs up your existing ZeptoClaw config before overwriting
- Validates the migrated config and reports any issues
- Lists features that can't be automatically ported

Supports JSON and JSON5 config files (comments, trailing commas, unquoted keys).

```bash
curl -fsSL https://zeptoclaw.com/setup.sh | bash
```

Installs the binary and prints next steps. Run `zeptoclaw onboard` to configure providers and channels.

ZeptoClaw supports 9 LLM providers. All OpenAI-compatible endpoints work out of the box.

| Provider | Config key | Setup |
|---|---|---|
| Anthropic | `anthropic` | `api_key` |
| OpenAI | `openai` | `api_key` |
| OpenRouter | `openrouter` | `api_key` |
| Groq | `groq` | `api_key` |
| Ollama | `ollama` | `api_key` (any value) |
| VLLM | `vllm` | `api_key` (any value) |
| Google Gemini | `gemini` | `api_key` |
| NVIDIA NIM | `nvidia` | `api_key` |
| Zhipu (GLM) | `zhipu` | `api_key` |

Configure in ~/.zeptoclaw/config.json or via environment variables:

```json
{
  "providers": {
    "openrouter": { "api_key": "sk-or-..." },
    "ollama": { "api_key": "ollama" }
  },
  "agents": { "defaults": { "model": "anthropic/claude-sonnet-4" } }
}
```

```bash
export ZEPTOCLAW_PROVIDERS_GROQ_API_KEY=gsk_...
```

Any provider's base URL can be overridden with `api_base` for proxies or self-hosted endpoints. See the provider docs for full details.
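The environment variable above appears to mirror the JSON path `providers.groq.api_key`. The Python sketch below shows one way such a mapping could work. The naming rule is inferred from that single example, the function `apply_provider_env` is hypothetical, and letting environment values override the file is an assumption, not documented ZeptoClaw behavior.

```python
import json

PREFIX = "ZEPTOCLAW_PROVIDERS_"


def apply_provider_env(config: dict, environ: dict) -> dict:
    """Return a copy of config with ZEPTOCLAW_PROVIDERS_<NAME>_<FIELD> values applied."""
    merged = json.loads(json.dumps(config))  # cheap deep copy for JSON-like data
    providers = merged.setdefault("providers", {})
    for name, value in environ.items():
        if not name.startswith(PREFIX):
            continue
        # The first segment is the provider key and the remainder is the field,
        # so GROQ_API_KEY becomes provider "groq", field "api_key".
        provider, _, field = name[len(PREFIX):].lower().partition("_")
        if provider and field:
            providers.setdefault(provider, {})[field] = value
    return merged
```

A caller would typically pass the parsed `config.json` and `dict(os.environ)`; the original config dictionary is left unmodified.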

| Feature | What it does |
|---|---|
| Multi-Provider LLM | 9 providers with SSE streaming, retry with backoff, auto-failover |
| 29 Tools + Plugins | Shell, filesystem, web, git, stripe, PDF, transcription, Android ADB, and more |
| Agent Swarms | Delegate to sub-agents with parallel dispatch, aggregation, and cost-aware routing |
| Batch Mode | Process hundreds of prompts from text/JSONL files with template support |
| Agent Modes | Observer, Assistant, and Autonomous modes with category-based tool access control |
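The "retry with backoff, auto-failover" behavior in the Multi-Provider LLM row can be sketched as a loop over providers. This is a generic illustration in Python, not ZeptoClaw's implementation: the function name, retry count, and delays are assumptions, and each provider is modeled as a plain callable that takes a prompt and returns text.

```python
import time
from typing import Callable, Sequence


def complete_with_failover(
    providers: Sequence[Callable[[str], str]],
    prompt: str,
    retries: int = 2,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Try each provider in order, retrying with exponential backoff before failing over."""
    last_error = None
    for call in providers:
        for attempt in range(retries + 1):
            try:
                return call(prompt)
            except Exception as exc:  # a real client would catch narrower errors
                last_error = exc
                if attempt < retries:
                    # Delay doubles on each retry: base_delay, 2x, 4x, ...
                    sleep(base_delay * 2 ** attempt)
    raise RuntimeError("all providers failed") from last_error
```

The `sleep` parameter is injectable so the backoff schedule can be checked in tests without waiting.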

| Feature | What it does |
|---|---|
| 8-Channel Gateway | Telegram, Slack, Discord, WhatsApp, Lark, Email, Webhook, and CLI on a unified message bus |
| Plugin System | JSON manifest plugins auto-discovered from `~/.zeptoclaw/plugins/` |
| Hooks | `before_tool`, `after_tool`, `on_error` with Log, Block, and Notify actions |
| Cron & Heartbeat | Schedule recurring tasks, proactive check-ins, background spawning |
| Memory & History | Workspace memory, long-term key-value store, conversation history |
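As a rough illustration of how the three hook events and three actions could interact, the Python sketch below dispatches hooks for a tool call. Everything here is hypothetical: the `Hook` fields, the `"*"` wildcard, and the `run_hooks` function are invented for the example and do not reflect ZeptoClaw's actual hook configuration format.

```python
from dataclasses import dataclass


@dataclass
class Hook:
    event: str   # "before_tool", "after_tool", or "on_error"
    tool: str    # tool name to match, or "*" for any tool (assumed wildcard)
    action: str  # "log", "block", or "notify"


def run_hooks(hooks: list[Hook], event: str, tool: str, log: list[str]) -> bool:
    """Apply matching hooks in order; return False if any hook blocks the tool."""
    allowed = True
    for hook in hooks:
        if hook.event != event or hook.tool not in ("*", tool):
            continue
        if hook.action == "block":
            allowed = False
        else:
            # "log" and "notify" are both recorded here; a real system
            # would send notifications to a channel instead.
            log.append(f"{hook.action}: {event} {tool}")
    return allowed
```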

| Feature | What it does |
|---|---|
| Container Isolation | Shell execution in Docker or Apple Container per request |
| Tool Approval Gate | Policy-based gating that requires confirmation for dangerous tools |
| SSRF Prevention | DNS pinning, private IP blocking, scheme validation |
| Shell Blocklist | Regex patterns blocking reverse shells, rm -rf, privilege escalation |
| Token Budget & Cost | Per-session budget enforcement, per-model cost estimation for 8 models |
| Telemetry | Prometheus + JSON metrics export, structured logging, per-tenant tracing |
| Multi-Tenant | Hundreds of tenants on one VPS with isolated workspaces, ~6MB RAM each |
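Two of the SSRF checks listed above, scheme validation and private IP blocking, can be illustrated with Python's standard library. This is a sketch under assumptions: `is_url_allowed` is a hypothetical helper, it only inspects hosts that are already IP literals, and it leaves out DNS pinning (resolving a hostname once and connecting to that same address), which a real implementation would need before trusting a hostname.

```python
import ipaddress
from urllib.parse import urlsplit

ALLOWED_SCHEMES = {"http", "https"}  # assumed allow-list


def is_url_allowed(url: str) -> bool:
    """Reject non-HTTP schemes and IP-literal hosts in private or local ranges."""
    parts = urlsplit(url)
    if parts.scheme not in ALLOWED_SCHEMES or not parts.hostname:
        return False
    try:
        address = ipaddress.ip_address(parts.hostname)
    except ValueError:
        # Not an IP literal; a real check would resolve and pin the address first.
        return True
    return not (
        address.is_private
        or address.is_loopback
        or address.is_link_local
        or address.is_reserved
        or address.is_multicast
        or address.is_unspecified
    )
```

The link-local check is what blocks cloud metadata endpoints such as `169.254.169.254`, a common SSRF target.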

Full documentation — zeptoclaw.com/docs covers configuration, environment variables, CLI reference, deployment guides, and more.

ZeptoClaw is inspired by projects in the open-source AI agent ecosystem — OpenClaw, NanoClaw, and PicoClaw — each taking a different approach to the same problem. ZeptoClaw's contribution is Rust's memory safety, async performance, and container isolation for production multi-tenant deployments.

```bash
cargo test                    # 2,300+ tests
cargo clippy -- -D warnings
cargo fmt -- --check
```

Apache 2.0 (see LICENSE)

ZeptoClaw — Because your AI assistant shouldn't need more RAM than your text editor.

Built by Aisar Labs


Alternative AI tools for zeptoclaw

Similar Open Source Tools


pocketpaw

PocketPaw is a lightweight and user-friendly tool designed for managing and organizing your digital assets. It provides a simple interface for users to easily categorize, tag, and search for files across different platforms. With PocketPaw, you can efficiently organize your photos, documents, and other files in a centralized location, making it easier to access and share them. Whether you are a student looking to organize your study materials, a professional managing project files, or a casual user wanting to declutter your digital space, PocketPaw is the perfect solution for all your file management needs.

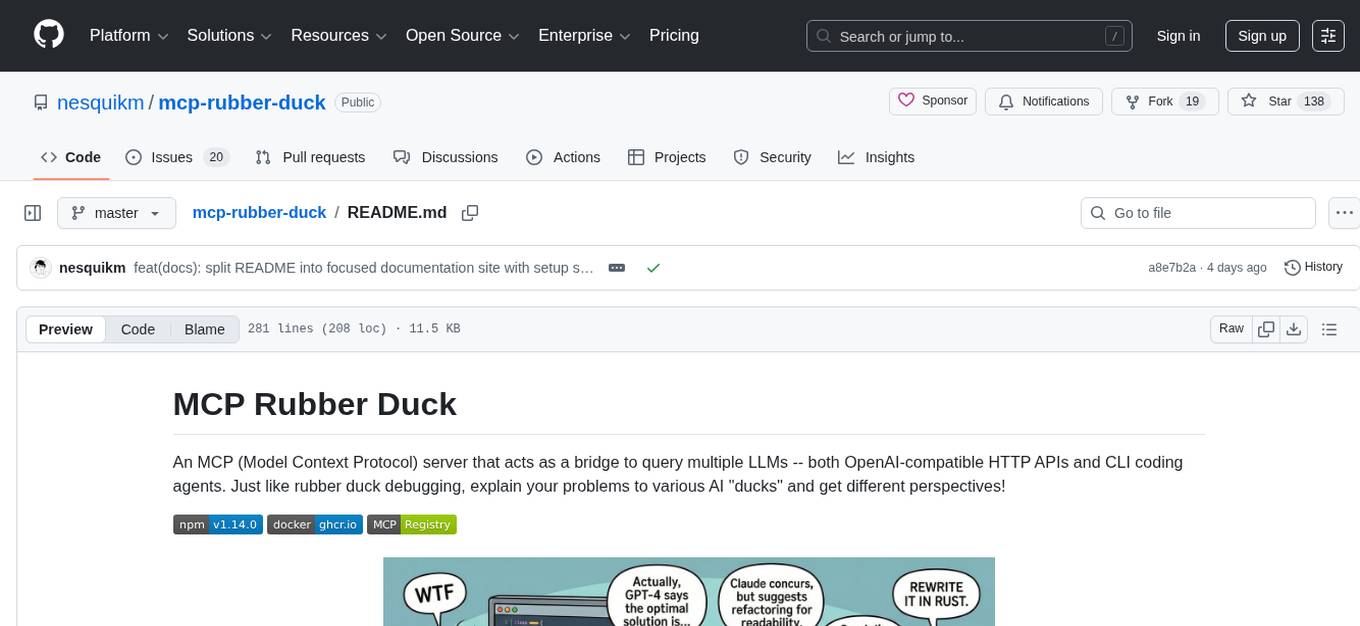

mcp-rubber-duck

MCP Rubber Duck is a Model Context Protocol server that acts as a bridge to query multiple LLMs, including OpenAI-compatible HTTP APIs and CLI coding agents. Users can explain their problems to various AI 'ducks' to get different perspectives. The tool offers features like universal OpenAI compatibility, CLI agent support, conversation management, multi-duck querying, consensus voting, LLM-as-Judge evaluation, structured debates, health monitoring, usage tracking, and more. It supports various HTTP providers like OpenAI, Google Gemini, Anthropic, Groq, Together AI, Perplexity, and CLI providers like Claude Code, Codex, Gemini CLI, Grok, Aider, and custom agents. Users can install the tool globally, configure it using environment variables, and access interactive UIs for comparing ducks, voting, debating, and usage statistics. The tool provides multiple tools for asking questions, chatting, clearing conversations, listing ducks, comparing responses, voting, judging, iterating, debating, and more. It also offers prompt templates for different analysis purposes and extensive documentation for setup, configuration, tools, prompts, CLI providers, MCP Bridge, guardrails, Docker deployment, troubleshooting, contributing, license, acknowledgments, changelog, registry & directory, and support.

aegra

Aegra is a self-hosted AI agent backend platform that provides LangGraph power without vendor lock-in. Built with FastAPI + PostgreSQL, it offers complete control over agent orchestration for teams looking to escape vendor lock-in, meet data sovereignty requirements, enable custom deployments, and optimize costs. Aegra is Agent Protocol compliant and perfect for teams seeking a free, self-hosted alternative to LangGraph Platform with zero lock-in, full control, and compatibility with existing LangGraph Client SDK.

ruby_llm-agents

RubyLLM::Agents is a production-ready Rails engine for building, managing, and monitoring LLM-powered AI agents. It seamlessly integrates with Rails apps, providing features like automatic execution tracking, cost analytics, budget controls, and a real-time dashboard. Users can build intelligent AI agents in Ruby using a clean DSL and support various LLM providers like OpenAI GPT-4, Anthropic Claude, and Google Gemini. The engine offers features such as agent DSL configuration, execution tracking, cost analytics, reliability with retries and fallbacks, budget controls, multi-tenancy support, async execution with Ruby fibers, real-time dashboard, streaming, conversation history, image operations, alerts, and more.

skylos

Skylos is a privacy-first SAST tool for Python, TypeScript, and Go that bridges the gap between traditional static analysis and AI agents. It detects dead code, security vulnerabilities (SQLi, SSRF, Secrets), and code quality issues with high precision. Skylos uses a hybrid engine (AST + optional Local/Cloud LLM) to eliminate false positives, verify via runtime, find logic bugs, and provide context-aware audits. It offers automated fixes, end-to-end remediation, and 100% local privacy. The tool supports taint analysis, secrets detection, vulnerability checks, dead code detection and cleanup, agentic AI and hybrid analysis, codebase optimization, operational governance, and runtime verification.

nothumanallowed

NotHumanAllowed is a security-first platform built exclusively for AI agents. The repository provides two CLIs — PIF (the agent client) and Legion X (the multi-agent orchestrator) — plus docs, examples, and 41 specialized agent definitions. Every agent authenticates via Ed25519 cryptographic signatures, ensuring no passwords or bearer tokens are used. Legion X orchestrates 41 specialized AI agents through a 9-layer Geth Consensus pipeline, with zero-knowledge protocol ensuring API keys stay local. The system learns from each session, with features like task decomposition, neural agent routing, multi-round deliberation, and weighted authority synthesis. The repository also includes CLI commands for orchestration, agent management, tasks, sandbox execution, Geth Consensus, knowledge search, configuration, system health check, and more.

ai-coders-context

The @ai-coders/context repository provides the Ultimate MCP for AI Agent Orchestration, Context Engineering, and Spec-Driven Development. It simplifies context engineering for AI by offering a universal process called PREVC, which consists of Planning, Review, Execution, Validation, and Confirmation steps. The tool aims to address the problem of context fragmentation by introducing a single `.context/` directory that works universally across different tools. It enables users to create structured documentation, generate agent playbooks, manage workflows, provide on-demand expertise, and sync across various AI tools. The tool follows a structured, spec-driven development approach to improve AI output quality and ensure reproducible results across projects.

dexto

Dexto is a lightweight runtime for creating and running AI agents that turn natural language into real-world actions. It serves as the missing intelligence layer for building AI applications, standalone chatbots, or as the reasoning engine inside larger products. Dexto features a powerful CLI and Web UI for running AI agents, supports multiple interfaces, allows hot-swapping of LLMs from various providers, connects to remote tool servers via the Model Context Protocol, is config-driven with version-controlled YAML, offers production-ready core features, extensibility for custom services, and enables multi-agent collaboration via MCP and A2A.

terminator

Terminator is an AI-powered desktop automation tool that is open source, MIT-licensed, and cross-platform. It works across all apps and browsers, inspired by GitHub Actions & Playwright. It is 100x faster than generic AI agents, with over 95% success rate and no vendor lock-in. Users can create automations that work across any desktop app or browser, achieve high success rates without costly consultant armies, and pre-train workflows as deterministic code.

agents

Cloudflare Agents is a framework for building intelligent, stateful agents that persist, think, and evolve at the edge of the network. It allows for maintaining persistent state and memory, real-time communication, processing and learning from interactions, autonomous operation at global scale, and hibernating when idle. The project is actively evolving with focus on core agent framework, WebSocket communication, HTTP endpoints, React integration, and basic AI chat capabilities. Future developments include advanced memory systems, WebRTC for audio/video, email integration, evaluation framework, enhanced observability, and self-hosting guide.

agentscope

AgentScope is a multi-agent platform designed to empower developers to build multi-agent applications with large-scale models. It features three high-level capabilities: Easy-to-Use, High Robustness, and Actor-Based Distribution. AgentScope provides a list of `ModelWrapper` to support both local model services and third-party model APIs, including OpenAI API, DashScope API, Gemini API, and ollama. It also enables developers to rapidly deploy local model services using libraries such as ollama (CPU inference), Flask + Transformers, Flask + ModelScope, FastChat, and vllm. AgentScope supports various services, including Web Search, Data Query, Retrieval, Code Execution, File Operation, and Text Processing. Example applications include Conversation, Game, and Distribution. AgentScope is released under Apache License 2.0 and welcomes contributions.

promptolution

Promptolution is a unified, modular framework for prompt optimization designed for researchers and advanced practitioners. It focuses on the optimization stage, providing a clean, transparent, and extensible API for simple prompt optimization for various tasks. It supports many current prompt optimizers, unified LLM backend, response caching, parallelized inference, and detailed logging for post-hoc analysis.

orchestkit

OrchestKit is a powerful and flexible orchestration tool designed to streamline and automate complex workflows. It provides a user-friendly interface for defining and managing orchestration tasks, allowing users to easily create, schedule, and monitor workflows. With support for various integrations and plugins, OrchestKit enables seamless automation of tasks across different systems and applications. Whether you are a developer looking to automate deployment processes or a system administrator managing complex IT operations, OrchestKit offers a comprehensive solution to simplify and optimize your workflow management.

Unreal_mcp

Unreal Engine MCP Server is a comprehensive Model Context Protocol (MCP) server that allows AI assistants to control Unreal Engine through a native C++ Automation Bridge plugin. It is built with TypeScript, C++, and Rust (WebAssembly). The server provides various features for asset management, actor control, editor control, level management, animation & physics, visual effects, sequencer, graph editing, audio, system operations, and more. It offers dynamic type discovery, graceful degradation, on-demand connection, command safety, asset caching, metrics rate limiting, and centralized configuration. Users can install the server using NPX or by cloning and building it. Additionally, the server supports WebAssembly acceleration for computationally intensive operations and provides an optional GraphQL API for complex queries. The repository includes documentation, community resources, and guidelines for contributing.

llamafarm

LlamaFarm is a comprehensive AI framework that empowers users to build powerful AI applications locally, with full control over costs and deployment options. It provides modular components for RAG systems, vector databases, model management, prompt engineering, and fine-tuning. Users can create differentiated AI products without needing extensive ML expertise, using simple CLI commands and YAML configs. The framework supports local-first development, production-ready components, strategy-based configuration, and deployment anywhere from laptops to the cloud.

For similar tasks


utcp-specification

The Universal Tool Calling Protocol (UTCP) Specification repository contains the official documentation for a modern and scalable standard that enables AI systems and clients to discover and interact with tools across different communication protocols. It defines tool discovery mechanisms, call formats, provider configuration, authentication methods, and response handling.

shai

shai is a coding agent written in Rust that serves as a pair programming buddy in the terminal. It can be used to run code, provide suggestions, and act as a shell assistant. Users can configure providers, run headless, create custom agents, and interact with OVHCloud endpoints for AI capabilities.

spacebot

Spacebot is an AI agent designed for teams, communities, and multi-user environments. It splits the monolith into specialized processes that delegate tasks, allowing it to handle concurrent conversations, execute tasks, and respond to multiple users simultaneously. Built for Discord, Slack, and Telegram, Spacebot can run coding sessions, manage files, automate web browsing, and search the web. Its memory system is structured and graph-connected, enabling productive knowledge synthesis. With capabilities for task execution, messaging, memory management, scheduling, model routing, and extensible skills, Spacebot offers a comprehensive solution for collaborative work environments.

For similar jobs

zep

Zep is a long-term memory service for AI Assistant apps. With Zep, you can provide AI assistants with the ability to recall past conversations, no matter how distant, while also reducing hallucinations, latency, and cost. Zep persists and recalls chat histories, and automatically generates summaries and other artifacts from these chat histories. It also embeds messages and summaries, enabling you to search Zep for relevant context from past conversations. Zep does all of this asynchronously, ensuring these operations don't impact your user's chat experience. Data is persisted to a database, allowing you to scale out when growth demands. Zep also provides a simple, easy-to-use abstraction for document vector search called Document Collections. This is designed to complement Zep's core memory features, but is not designed to be a general-purpose vector database. Zep allows you to be more intentional about constructing your prompt: 1. automatically adding a few recent messages, with the number customized for your app; 2. a summary of recent conversations prior to the messages above; 3. and/or contextually relevant summaries or messages surfaced from the entire chat session; 4. and/or relevant business data from Zep Document Collections.

doc2plan

doc2plan is a browser-based application that helps users create personalized learning plans by extracting content from documents. It features a Creator for manual or AI-assisted plan construction and a Viewer for interactive plan navigation. Users can extract chapters, key topics, generate quizzes, and track progress. The application includes AI-driven content extraction, quiz generation, progress tracking, plan import/export, assistant management, customizable settings, viewer chat with text-to-speech and speech-to-text support, and integration with various Retrieval-Augmented Generation (RAG) models. It aims to simplify the creation of comprehensive learning modules tailored to individual needs.

whatsapp-chatgpt

This repository contains a WhatsApp bot that utilizes OpenAI's GPT and DALL-E 2 to respond to user inputs. Users can interact with the bot through voice messages, which are transcribed and responded to. The bot requires Node.js, npm, an OpenAI API key, and a WhatsApp account. It uses Puppeteer to run a real instance of WhatsApp Web to reduce the chance of being blocked. However, there is still a risk of being blocked, because WhatsApp does not allow bots or unofficial clients on its platform. The bot is not free to use, and users will be charged by OpenAI for each request made.

OmniSteward

OmniSteward is an AI-powered steward system based on large language models that can interact with users through voice or text to help control smart home devices and computer programs. It supports multi-turn dialogue, tool calling for complex tasks, multiple LLM models, voice recognition, smart home control, computer program management, online information retrieval, command line operations, and file management. The system is highly extensible, allowing users to customize and share their own tools.

chatgpt-wechat

ChatGPT-WeChat is a personal assistant application that can be safely used on WeChat through enterprise WeChat without the risk of being banned. The project is open source and free, with no paid sections or external traffic operations except for advertising on the author's public account '积木成楼'. It supports various features such as secure usage on WeChat, multi-channel customer service message integration, proxy support, session management, rapid message response, voice and image messaging, drawing capabilities, private data storage, plugin support, and more. Users can also develop their own capabilities following the rules provided. The project is currently in development with stable versions available for use.

mcp-agent

mcp-agent is a simple, composable framework designed to build agents using the Model Context Protocol. It handles the lifecycle of MCP server connections and implements patterns for building production-ready AI agents in a composable way. The framework also includes OpenAI's Swarm pattern for multi-agent orchestration in a model-agnostic manner, making it the simplest way to build robust agent applications. It is purpose-built for the shared protocol MCP, lightweight, and closer to an agent pattern library than a framework. mcp-agent allows developers to focus on the core business logic of their AI applications by handling mechanics such as server connections, working with LLMs, and supporting external signals like human input.

Gmail-MCP-Server

Gmail AutoAuth MCP Server is a Model Context Protocol (MCP) server designed for Gmail integration in Claude Desktop. It supports auto authentication and enables AI assistants to manage Gmail through natural language interactions. The server provides comprehensive features for sending emails, reading messages, managing labels, searching emails, and batch operations. It offers full support for international characters, email attachments, and Gmail API integration. Users can install and authenticate the server via Smithery or manually with Google Cloud Project credentials. The server supports both Desktop and Web application credentials, with global credential storage for convenience. It also includes Docker support and instructions for cloud server authentication.

Operit

Operit AI is a fully functional AI assistant application for mobile devices, running independently on Android devices with powerful tool invocation capabilities. It offers over 40 built-in tools for file system operations, HTTP requests, system operations, UI automation, and media processing. The app combines these tools with rich plugins to enable a wide range of tasks, from simple to complex, providing a comprehensive experience of a smartphone AI assistant.