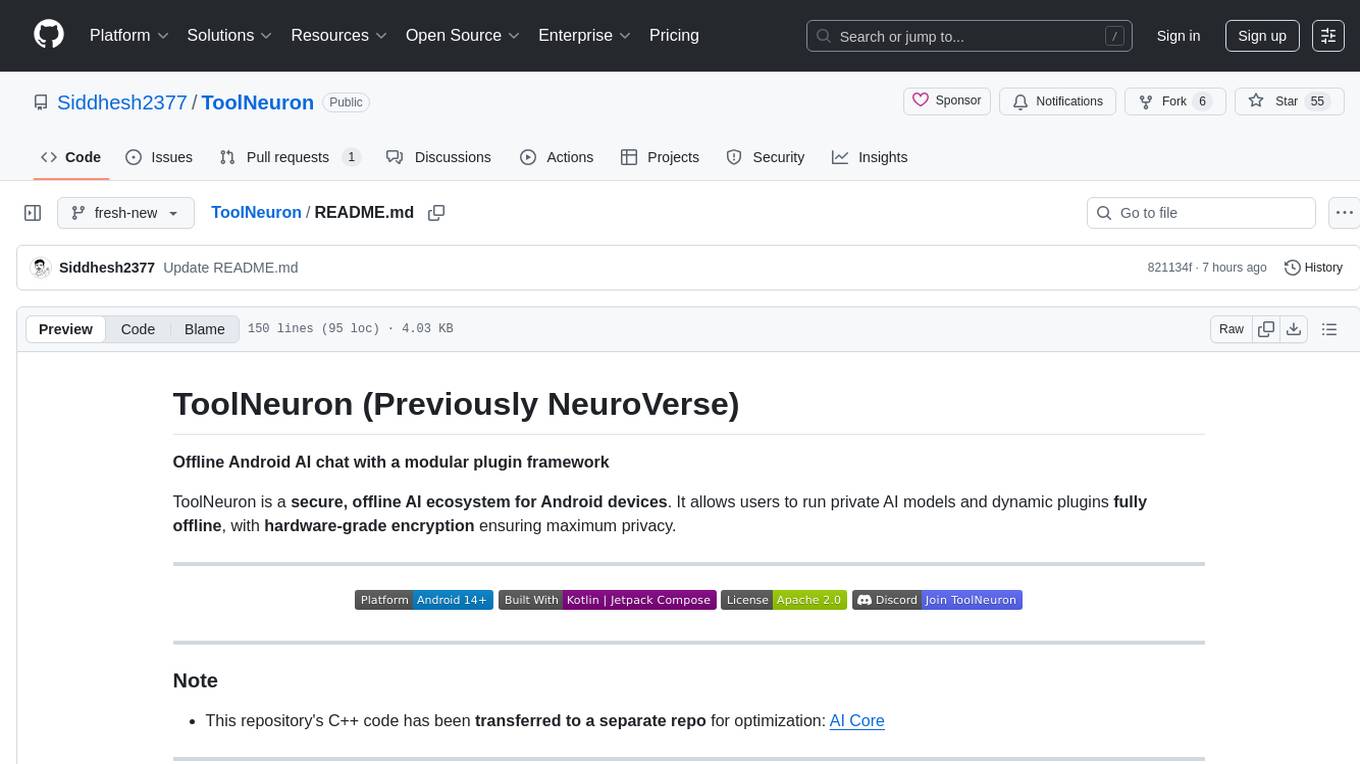

ChordMiniApp

Chord recognition, beat tracking, guitar diagrams, and lyrics transcription application with context-aware LLM analysis for uploaded audio and YouTube videos


ChordMini is an advanced music analysis platform with AI-powered chord recognition, beat detection, and synchronized lyrics. It features a clean and intuitive interface for YouTube search, chord progression visualization, interactive guitar diagrams with accurate fingering patterns, lead sheet with AI assistant for synchronized lyrics transcription, and various add-on features like Roman Numeral Analysis, Key Modulation Signals, Simplified Chord Notation, and Enhanced Chord Correction. The tool requires Node.js, Python 3.9+, and a Firebase account for setup. It offers a hybrid backend architecture for local development and production deployments, with features like beat detection, chord recognition, lyrics processing, rate limiting, and audio processing supporting MP3, WAV, and FLAC formats. ChordMini provides a comprehensive music analysis workflow from user input to visualization, including dual input support, environment-aware processing, intelligent caching, advanced ML pipeline, and rich visualization options.

README:

Advanced music analysis platform with AI-powered chord recognition, beat detection, and synchronized lyrics.

Clean, intuitive interface for YouTube search, URL input, and recent video access.

Chord progression visualization with synchronized beat detection and grid layout with add-on features: Roman Numeral Analysis, Key Modulation Signals, Simplified Chord Notation, and Enhanced Chord Correction.

Interactive guitar chord diagrams with accurate fingering patterns from the official @tombatossals/chords-db database, featuring multiple chord positions and synchronized beat grid integration.

Synchronized lyrics transcription with AI chatbot for contextual music analysis and translation support.

Prerequisites:

- Node.js 18+ and npm

- Python 3.9+ (for backend)

- Firebase account (free tier)

Quick start:

1. Clone and install

```bash
# Clone with submodules in one command (for fresh clones)
git clone --recursive https://github.com/ptnghia-j/ChordMiniApp.git
cd ChordMiniApp
npm install

# Check that the model submodules were checked out
ls -la python_backend/models/Beat-Transformer/
ls -la python_backend/models/Chord-CNN-LSTM/
ls -la python_backend/models/ChordMini/
```

Install FluidSynth for MIDI synthesis:

```bash
# --- Windows ---
choco install fluidsynth
# --- macOS ---
brew install fluidsynth
# --- Linux (Debian/Ubuntu-based) ---
sudo apt update
sudo apt install fluidsynth
```

2. Environment setup

```bash
cp .env.example .env.local
```

Edit .env.local:

```bash
NEXT_PUBLIC_PYTHON_API_URL=http://localhost:5001
NEXT_PUBLIC_FIREBASE_API_KEY=your_firebase_api_key
NEXT_PUBLIC_FIREBASE_AUTH_DOMAIN=your_project.firebaseapp.com
NEXT_PUBLIC_FIREBASE_PROJECT_ID=your_project_id
NEXT_PUBLIC_FIREBASE_STORAGE_BUCKET=your_project.appspot.com
NEXT_PUBLIC_FIREBASE_MESSAGING_SENDER_ID=your_sender_id
NEXT_PUBLIC_FIREBASE_APP_ID=your_app_id
```
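Before starting the dev server it can help to confirm that every variable above is actually filled in. The sketch below is a hypothetical standalone helper, not part of ChordMiniApp: it assumes simple KEY=VALUE lines and treats any value that still starts with your_ as an unfilled placeholder copied from the example.

```python
from typing import Dict, List

# Variables listed in the .env.local example above
REQUIRED_KEYS = [
    "NEXT_PUBLIC_PYTHON_API_URL",
    "NEXT_PUBLIC_FIREBASE_API_KEY",
    "NEXT_PUBLIC_FIREBASE_AUTH_DOMAIN",
    "NEXT_PUBLIC_FIREBASE_PROJECT_ID",
    "NEXT_PUBLIC_FIREBASE_STORAGE_BUCKET",
    "NEXT_PUBLIC_FIREBASE_MESSAGING_SENDER_ID",
    "NEXT_PUBLIC_FIREBASE_APP_ID",
]


def parse_env(text: str) -> Dict[str, str]:
    """Parse simple KEY=VALUE lines, skipping blank lines and # comments."""
    env: Dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)  # split on the first '=' only; URLs keep their ':'
        env[key.strip()] = value.strip().strip('"').strip("'")
    return env


def missing_or_placeholder(env: Dict[str, str]) -> List[str]:
    """Return required keys that are absent, empty, or still a your_* placeholder."""
    problems = []
    for key in REQUIRED_KEYS:
        value = env.get(key, "")
        if not value or value.startswith("your_"):
            problems.append(key)
    return problems
```

Reading .env.local into parse_env and passing the result to missing_or_placeholder lists the keys that still need real values; an empty list means every required variable is set.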

3. Start Python backend (Terminal 1)

```bash
cd python_backend
python -m venv myenv
source myenv/bin/activate  # On Windows: myenv\Scripts\activate
pip install -r requirements.txt
python app.py
```

4. Start frontend (Terminal 2)

```bash
npm run dev
```

5. Open application

Visit http://localhost:3000

Firebase setup:

1. Create Firebase project

- Visit Firebase Console

- Click "Create a project"

- Follow the setup wizard

2. Enable Firestore Database

- Go to "Firestore Database" in the sidebar

- Click "Create database"

- Choose "Start in test mode" for development

3. Get Firebase configuration

- Go to Project Settings (gear icon)

- Scroll down to "Your apps"

- Click "Add app" → Web app

- Copy the configuration values to your .env.local

4. Create Firestore collections

The app uses the following Firestore collections. They are created automatically on first write (no manual creation required):

- transcriptions: Beat and chord analysis results (docId: ${videoId}_${beatModel}_${chordModel})

- translations: Lyrics translation cache (docId: cacheKey based on content hash)

- lyrics: Music.ai transcription results (docId: videoId)

- keyDetections: Musical key analysis cache (docId: cacheKey)

- audioFiles: Audio file metadata and URLs (docId: videoId)
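The document-ID patterns above determine when a cached result can be reused. The helpers below are a hypothetical sketch that mirrors those patterns; the function names, the model identifiers in the example, and the SHA-256 scheme for the translation cacheKey are assumptions (this README only says the key is "based on content hash").

```python
import hashlib
import re

# YouTube video IDs are 11 characters drawn from letters, digits, '-' and '_'
VIDEO_ID_RE = re.compile(r"[A-Za-z0-9_-]{11}")


def _check_video_id(video_id: str) -> str:
    if not VIDEO_ID_RE.fullmatch(video_id):
        raise ValueError(f"not an 11-character YouTube video ID: {video_id!r}")
    return video_id


def transcription_doc_id(video_id: str, beat_model: str, chord_model: str) -> str:
    """transcriptions/${videoId}_${beatModel}_${chordModel}: one document per model pair."""
    return f"{_check_video_id(video_id)}_{beat_model}_{chord_model}"


def video_doc_id(video_id: str) -> str:
    """lyrics and audioFiles documents are keyed by the bare video ID."""
    return _check_video_id(video_id)


def translation_cache_key(lyrics: str, target_language: str) -> str:
    """Hypothetical content-hash key: identical lyrics and target language map to
    the same document, so a repeated translation request becomes a cache hit."""
    payload = f"{target_language}\n{lyrics}".encode("utf-8")
    return hashlib.sha256(payload).hexdigest()
```

Because the model names are part of the transcriptions key, switching the beat or chord model yields a different document and therefore a fresh analysis rather than a stale cached one.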

5. Enable Anonymous Authentication

- In Firebase Console: Authentication → Sign-in method → enable Anonymous

6. Configure Firebase Storage

- Set environment variable:

```bash
NEXT_PUBLIC_FIREBASE_STORAGE_BUCKET=your_project_id.appspot.com
```

- Folder structure:

  - audio/ for audio files

  - video/ for optional video files

- Filename pattern requirement: filenames must include the 11-character YouTube video ID in brackets, e.g. audio_[VIDEOID]_timestamp.mp3 (enforced by Storage rules)

- File size limits (enforced by Storage rules):

  - Audio: up to 50MB

  - Video: up to 100MB
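The Storage rules reject uploads that break the filename or size requirements above, so a client-side pre-check can fail fast with a clearer message. This is a hypothetical sketch, not code from ChordMiniApp: the real enforcement lives in Firebase Storage rules, the folder names and limits come from this README, and reading "50MB" as 50 × 1024 × 1024 bytes is an assumption.

```python
import re
from typing import List

MB = 1024 * 1024  # assumption: limits are expressed in binary megabytes
LIMITS = {"audio": 50 * MB, "video": 100 * MB}
# An 11-character YouTube video ID wrapped in square brackets, anywhere in the name
BRACKETED_ID = re.compile(r"\[[A-Za-z0-9_-]{11}\]")


def check_upload(folder: str, filename: str, size_bytes: int) -> List[str]:
    """Return rule violations for an upload; an empty list means it looks valid."""
    errors = []
    if folder not in LIMITS:
        errors.append(f"unknown folder {folder!r}; expected audio/ or video/")
    elif size_bytes > LIMITS[folder]:
        errors.append(f"{size_bytes} bytes exceeds the {LIMITS[folder] // MB}MB {folder} limit")
    if not BRACKETED_ID.search(filename):
        errors.append("filename must contain the video ID in brackets, e.g. audio_[VIDEOID]_timestamp.mp3")
    return errors
```

For example, check_upload("audio", "audio_[dQw4w9WgXcQ]_1700000000.mp3", 5 * MB) returns an empty list, while the same name without brackets returns one violation.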

Set the API keys as environment variables:

Music.ai API:

```bash
# 1. Sign up at music.ai
# 2. Get API key from dashboard
# 3. Add to .env.local
NEXT_PUBLIC_MUSIC_AI_API_KEY=your_key_here
```

Google Gemini API:

```bash
# 1. Visit Google AI Studio
# 2. Generate API key
# 3. Add to .env.local
NEXT_PUBLIC_GEMINI_API_KEY=your_key_here
```

To be added in next version...

ChordMiniApp uses a hybrid backend architecture:

For local development, you must run the Python backend on localhost:5001:

- URL: http://localhost:5001

- Port note: Uses port 5001 to avoid a conflict with the macOS AirPlay/AirTunes service on port 5000

Production deployment configuration depends on your VPS; set the backend URL in the NEXT_PUBLIC_PYTHON_API_URL environment variable.

Backend prerequisites:

- Python 3.9+ (Python 3.9-3.11 recommended)

- Virtual environment (venv or conda)

- Git for cloning dependencies

- System dependencies (varies by OS)

Local backend setup:

1. Navigate to backend directory

```bash
cd python_backend
```

2. Create virtual environment

```bash
python -m venv myenv
# Activate virtual environment
# On macOS/Linux:
source myenv/bin/activate
# On Windows:
myenv\Scripts\activate
```

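3. Install dependencies

```bash
# Install madmom's build prerequisites (Cython, NumPy) first; the version
# specifiers are quoted so the shell does not treat ">" as a redirect
pip install --no-cache-dir "Cython>=0.29.0" numpy==1.22.4
pip install --no-cache-dir "madmom>=0.16.1"
pip install --no-cache-dir -r requirements.txt
```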

4. Start local backend on port 5001

```bash
python app.py
```

The backend will start on http://localhost:5001 and should display:

```
Starting Flask app on port 5001
App is ready to serve requests
Note: Using port 5001 to avoid conflict with macOS AirPlay/AirTunes on port 5000
```

5. Verify backend is running

Open a new terminal and test the backend:

```bash
curl http://localhost:5001/health
# Should return: {"status": "healthy"}
```

6. Start frontend development server

```bash
# In the main project directory (new terminal)
npm run dev
```

The frontend will automatically connect to http://localhost:5001 based on your .env.local configuration.
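The curl health check above, and the lsof/netstat port checks shown later, can also be scripted with Python's standard library so they behave the same on macOS, Linux, and Windows. This sketch is hypothetical (it does not ship with ChordMiniApp) and assumes only what this README states: the backend listens on http://localhost:5001 and answers GET /health with {"status": "healthy"}.

```python
import json
import socket
import urllib.request


def port_in_use(host: str, port: int, timeout: float = 1.0) -> bool:
    """True if something accepts TCP connections on host:port."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        sock.settimeout(timeout)
        return sock.connect_ex((host, port)) == 0


def is_healthy(body: str) -> bool:
    """Interpret a /health response body; anything but {"status": "healthy"} is unhealthy."""
    try:
        return json.loads(body).get("status") == "healthy"
    except (ValueError, AttributeError):  # invalid JSON, or JSON that is not an object
        return False


def check_backend(base_url: str = "http://localhost:5001", timeout: float = 5.0) -> bool:
    """GET {base_url}/health and report whether the backend says it is healthy."""
    url = base_url.rstrip("/") + "/health"
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return is_healthy(resp.read().decode("utf-8"))
    except OSError:  # connection refused, timeouts, and HTTP errors (URLError is an OSError)
        return False
```

If check_backend() returns False while port_in_use("localhost", 5001) returns True, some other process is probably holding the port.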

Backend features:

- Beat Detection: Beat-Transformer and madmom models

- Chord Recognition: Chord-CNN-LSTM, BTC-SL, BTC-PL models

- Lyrics Processing: Genius.com integration

- Rate Limiting: IP-based rate limiting with Flask-Limiter

- Audio Processing: Support for MP3, WAV, FLAC formats
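"IP-based rate limiting" means each client IP gets a request budget per time window. The class below is an illustrative fixed-window limiter written from scratch; it is not Flask-Limiter's code or API, and the limit and window values are hypothetical. It keeps counters in memory; the optional REDIS_URL setting below presumably moves this state into Redis so several backend processes share the same counters.

```python
import time
from typing import Callable, Dict, Tuple


class FixedWindowLimiter:
    """Allow at most `limit` requests per IP in each `window_seconds` window."""

    def __init__(self, limit: int, window_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.limit = limit
        self.window = window_seconds
        self.clock = clock  # injectable so tests can control time
        # ip -> (index of the window the count belongs to, requests seen in it)
        self._counts: Dict[str, Tuple[int, int]] = {}

    def allow(self, ip: str) -> bool:
        window_index = int(self.clock() // self.window)
        stored_index, count = self._counts.get(ip, (window_index, 0))
        if stored_index != window_index:  # a new window started: reset the count
            count = 0
        if count >= self.limit:
            return False  # over budget; a Flask app would answer HTTP 429 here
        self._counts[ip] = (window_index, count + 1)
        return True
```

Fixed windows are the simplest strategy but allow short bursts at window boundaries; sliding-window variants smooth that out at the cost of more bookkeeping.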

Create a .env file in the python_backend directory:

```bash
# Optional: Redis URL for distributed rate limiting
REDIS_URL=redis://localhost:6379

# Optional: Genius API for lyrics
GENIUS_ACCESS_TOKEN=your_genius_token

# Flask configuration
FLASK_MAX_CONTENT_LENGTH_MB=150
CORS_ORIGINS=http://localhost:3000,http://127.0.0.1:3000
```

Troubleshooting:

Backend connectivity issues:

```bash
# 1. Verify backend is running
curl http://localhost:5001/health
# Expected: {"status": "healthy"}

# 2. Check if port 5001 is in use
lsof -i :5001                  # macOS/Linux
netstat -ano | findstr :5001   # Windows

# 3. Verify environment configuration
cat .env.local | grep PYTHON_API_URL
# Expected: NEXT_PUBLIC_PYTHON_API_URL=http://localhost:5001

# 4. Check for macOS AirTunes conflict (if using port 5000)
curl -I http://localhost:5000/health
# If you see "Server: AirTunes", that's the conflict we're avoiding
```

Frontend connection errors:

```bash
# Check browser console for errors like:
# "Failed to fetch" or "Network Error"
# This usually means the backend is not running on port 5001

# Restart both frontend and backend:
# Terminal 1 (Backend):
cd python_backend && python app.py

# Terminal 2 (Frontend):
npm run dev
```

Import errors:

```bash
# Ensure virtual environment is activated
source myenv/bin/activate   # macOS/Linux
myenv\Scripts\activate      # Windows

# Reinstall dependencies
pip install -r requirements.txt
```

Application workflow:

ChordMini provides a comprehensive music analysis workflow from user input to visualization. This diagram shows the complete user journey and data processing pipeline:

```mermaid
graph TD
    %% User Entry Points
    A[User Input] --> B{Input Type}
    B -->|YouTube URL/Search| C[YouTube Search Interface]
    B -->|Direct Audio File| D[File Upload Interface]

    %% YouTube Workflow
    C --> E[YouTube Search API]
    E --> F[Video Selection]
    F --> G[Navigate to /analyze/videoId]

    %% Direct Upload Workflow
    D --> H[Vercel Blob Upload]
    H --> I[Navigate to /analyze with blob URL]

    %% Cache Check Phase
    G --> J{Firebase Cache Check}
    J -->|Cache Hit| K[Load Cached Results]
    J -->|Cache Miss| L[Audio Extraction Pipeline]

    %% Audio Extraction (Environment-Aware)
    L --> M{Environment Detection}
    M -->|Development| N[yt-dlp Service]
    M -->|Production| O[yt-mp3-go Service]
    N --> P[Audio URL Generation]
    O --> P

    %% ML Analysis Pipeline
    P --> Q[Parallel ML Processing]
    I --> Q
    Q --> R[Beat Detection]
    Q --> S[Chord Recognition]
    Q --> T[Key Detection]

    %% ML Backend Processing
    R --> U[Beat-Transformer/madmom Models]
    S --> V[Chord-CNN-LSTM/BTC Models]
    T --> W[Gemini AI Key Analysis]

    %% Results Processing
    U --> X[Beat Data]
    V --> Y[Chord Progression Data]
    W --> Z[Musical Key & Corrections]

    %% Synchronization & Caching
    X --> AA[Chord-Beat Synchronization]
    Y --> AA
    Z --> AA
    AA --> BB[Firebase Result Caching]
    AA --> CC[Real-time Visualization]

    %% Visualization Tabs
    CC --> DD[Beat & Chord Map Tab]
    CC --> EE[Guitar Chords Tab - Beta]
    CC --> FF[Lyrics & Chords Tab - Beta]

    %% Beat & Chord Map Features
    DD --> GG[Interactive Chord Grid]
    DD --> HH[Synchronized Audio Player]
    DD --> II[Real-time Beat Highlighting]

    %% Guitar Chords Features
    EE --> JJ[Animated Chord Progression]
    EE --> KK[Guitar Chord Diagrams]
    EE --> LL[Responsive Design 7/5/3/2/1]

    %% Lyrics Features
    FF --> MM[Lyrics Transcription]
    FF --> NN[Multi-language Translation]
    FF --> OO[Word-level Synchronization]

    %% External API Integration
    MM --> PP[Music.ai Transcription]
    NN --> QQ[Gemini AI Translation]

    %% Cache Integration
    K --> CC
    BB --> RR[Enhanced Metadata Storage]

    %% Error Handling & Fallbacks
    L --> SS{Extraction Failed?}
    SS -->|Yes| TT[Fallback Service]
    SS -->|No| P
    TT --> P

    %% Styling
    style A fill:#e1f5fe,stroke:#1976d2,stroke-width:3px
    style CC fill:#c8e6c9,stroke:#388e3c,stroke-width:3px
    style EE fill:#fff3e0,stroke:#f57c00,stroke-width:2px
    style FF fill:#f3e5f5,stroke:#7b1fa2,stroke-width:2px
    style Q fill:#ffebee,stroke:#d32f2f,stroke-width:2px
    style AA fill:#e8f5e8,stroke:#4caf50,stroke-width:2px

    %% Environment Indicators
    style N fill:#e3f2fd,stroke:#1976d2
    style O fill:#e8f5e8,stroke:#4caf50
```

Dual input support:

- YouTube Integration: URL/search → video selection → analysis

- Direct Upload: Audio file → blob storage → immediate analysis

Environment-aware processing:

- Development: localhost:5001 Python backend with yt-dlp (avoiding macOS AirTunes port conflict)

- Production: Google Cloud Run backend with yt-mp3-go

Intelligent caching:

- Firebase Cache: Analysis results with enhanced metadata

- Cache Hit: Instant loading of previous analyses

- Cache Miss: Full ML processing pipeline

Advanced ML pipeline:

- Parallel Processing: Beat detection + chord recognition + key analysis

- Multiple Models: Beat-Transformer/madmom, Chord-CNN-LSTM/BTC variants

- AI Integration: Gemini AI for key detection and enharmonic corrections

Rich visualization:

- Beat & Chord Map: Interactive grid with synchronized playback

- Guitar Chords [Beta]: Responsive chord diagrams (7/5/3/2/1 layouts)

- Lyrics & Chords [Beta]: Multi-language transcription with word-level sync
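The diagram and the bullets above describe a cache-first flow: look up a Firebase document, and only on a miss extract audio, run beat, chord, and key analysis in parallel, align chords to beats, and cache the result. The following is a minimal Python sketch of that control flow under stated assumptions, not ChordMiniApp's implementation: every function name and model identifier is hypothetical, a dict stands in for Firestore, the extractor and the three analyzers are injected callables, and "chord-beat synchronization" is assumed to mean labelling each beat with the chord segment that contains it.

```python
from bisect import bisect_right
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List, Tuple

Chord = Tuple[float, str]  # (segment start time in seconds, chord label)


def sync_chords_to_beats(beats: List[float], chords: List[Chord]) -> List[str]:
    """Label each beat with the chord segment containing it ("N" before the first chord).

    Assumes `chords` is sorted by start time.
    """
    starts = [start for start, _ in chords]
    labels = []
    for beat in beats:
        i = bisect_right(starts, beat) - 1  # last chord starting at or before this beat
        labels.append(chords[i][1] if i >= 0 else "N")
    return labels


def analyze(
    video_id: str,
    cache: Dict[str, dict],
    extract_audio: Callable[[str], str],
    detect_beats: Callable[[str], List[float]],
    recognize_chords: Callable[[str], List[Chord]],
    detect_key: Callable[[str], str],
    beat_model: str = "beat-transformer",  # hypothetical model identifiers
    chord_model: str = "chord-cnn-lstm",
) -> dict:
    doc_id = f"{video_id}_{beat_model}_{chord_model}"
    if doc_id in cache:  # cache hit: no extraction and no ML work
        return cache[doc_id]

    audio_url = extract_audio(video_id)  # stands in for yt-dlp / yt-mp3-go
    # The diagram runs these three branches in parallel; the sketch does the same
    with ThreadPoolExecutor(max_workers=3) as pool:
        beats_future = pool.submit(detect_beats, audio_url)
        chords_future = pool.submit(recognize_chords, audio_url)
        key_future = pool.submit(detect_key, audio_url)
        beats = beats_future.result()
        chords = chords_future.result()
        key = key_future.result()

    result = {
        "beats": beats,
        "key": key,
        "beat_chords": sync_chords_to_beats(beats, chords),
    }
    cache[doc_id] = result  # stands in for the Firebase result cache
    return result
```

Injecting the extractor and analyzers keeps the control flow testable without any models; a second call with the same video and model pair returns the cached result without re-extracting audio.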

Tech stack:

Frontend:

- Next.js 15.3.1 - React framework with App Router

- React 19 - UI library with latest features

- TypeScript - Type-safe development

- Tailwind CSS - Utility-first styling

- Framer Motion - Smooth animations and transitions

- Chart.js - Data visualization for audio analysis

- @tombatossals/react-chords - Guitar chord diagram visualization with official chord database

- React Player - Video playback integration

Backend:

- Python Flask - Lightweight backend framework

- Google Cloud Run - Serverless container deployment

- Custom ML Models - Chord recognition and beat detection

Database and storage:

- Firebase Firestore - NoSQL database for caching

- Vercel Blob - File storage for audio processing

External services:

- YouTube Search API - github.com/damonwonghv/youtube-search-api

- yt-dlp - github.com/yt-dlp/yt-dlp - YouTube audio extraction

- yt-mp3-go - github.com/vukan322/yt-mp3-go - Alternative audio extraction

- LRClib - github.com/tranxuanthang/lrclib - Lyrics synchronization

- Music.ai SDK - AI-powered music transcription

- Google Gemini API - AI language model for translations

Features:

Music analysis:

- Beat Detection - Automatic tempo and beat tracking

- Chord Recognition - AI-powered chord progression analysis

- Key Detection - Musical key identification with Gemini AI

Guitar chord diagrams:

- Accurate Chord Database Integration - Official @tombatossals/chords-db with verified chord fingering patterns

- Enhanced Chord Recognition - Support for both ML model colon notation (C:minor) and standard notation (Cm)

- Interactive Chord Diagrams - Visual guitar fingering patterns with correct fret positions and finger placements

- Responsive Design - Adaptive chord count (7/5/3/2/1 for xl/lg/md/sm/xs)

- Smooth Animations - transitions with optimized scaling

- Unicode Notation - Proper musical symbols (♯, ♭) with enharmonic equivalents

Lyrics:

- Synchronized Lyrics - Time-aligned lyrics display

- Multi-language Support - Translation with Gemini AI

- Word-level Timing - Precise synchronization with Music.ai

User interface:

- Dark/Light Mode - Automatic theme switching

- Responsive Design - Mobile-first approach

- Performance Optimized - Lazy loading and code splitting

- Offline Support - Service worker for caching
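The Enhanced Chord Recognition and Unicode Notation items describe accepting both the models' colon notation (C:minor) and standard notation (Cm) and displaying accidentals as ♯/♭. The normalizer below is a hypothetical sketch of that idea: the quality-to-suffix table is an assumption covering a few common chord qualities, "N" is assumed to mean "no chord", and slash chords/inversions and enharmonic respelling are not handled.

```python
from typing import Tuple

# Assumed mapping from colon-notation qualities to lead-sheet suffixes
SUFFIXES = {
    "maj": "", "major": "",
    "min": "m", "minor": "m",
    "7": "7", "maj7": "maj7", "min7": "m7",
    "dim": "dim", "aug": "aug", "sus4": "sus4",
}
ACCIDENTALS = {"#": "♯", "b": "♭"}


def split_root(symbol: str) -> Tuple[str, str]:
    """Split standard notation into root and suffix: 'Bb7' -> ('Bb', '7')."""
    i = 1
    while i < len(symbol) and symbol[i] in "#b":
        i += 1
    return symbol[:i], symbol[i:]


def unicode_root(root: str) -> str:
    """Replace ASCII accidentals after the note letter: 'C#' -> 'C♯', 'Bb' -> 'B♭'."""
    return root[0].upper() + "".join(ACCIDENTALS.get(ch, ch) for ch in root[1:])


def to_standard(label: str) -> str:
    """Normalize 'C:minor' or 'Cm' style labels to lead-sheet symbols with Unicode accidentals."""
    label = label.strip()
    if label in ("", "N"):
        return "N.C."  # lead-sheet convention for "no chord"
    if ":" in label:
        root, quality = label.split(":", 1)
        suffix = SUFFIXES.get(quality, quality)  # unknown qualities are kept verbatim
    else:
        root, suffix = split_root(label)  # already standard notation
    return unicode_root(root) + suffix
```

Normalizing both notations to one form before display means downstream lookups, such as matching a chord against a diagram database, only need to handle a single spelling.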

ChordMiniApp provides production-ready Docker images for easy deployment:

```bash
# Production deployment with Docker Compose
curl -O https://raw.githubusercontent.com/ptnghia-j/ChordMiniApp/main/docker-compose.prod.yml
docker-compose -f docker-compose.prod.yml up -d
```

Available on multiple registries:

- Docker Hub: ptnghia/chordmini-frontend:latest, ptnghia/chordmini-backend:latest

- GitHub Container Registry: ghcr.io/ptnghia-j/chordminiapp/frontend:latest

Deployment targets:

- Cloud platforms: AWS, GCP, Azure, DigitalOcean

- Container orchestration: Kubernetes, Docker Swarm

- Edge computing: Raspberry Pi 4+ (ARM64 support)

For custom deployments, see the Local Setup section above.

We welcome contributions! Please see our Contributing Guidelines for details.


Alternative AI tools for ChordMiniApp

Similar Open Source Tools


ai-real-estate-assistant

AI Real Estate Assistant is a modern platform that uses AI to assist real estate agencies in helping buyers and renters find their ideal properties. It features multiple AI model providers, intelligent query processing, advanced search and retrieval capabilities, and enhanced user experience. The tool is built with a FastAPI backend and Next.js frontend, offering semantic search, hybrid agent routing, and real-time analytics.

opcode

opcode is a powerful desktop application built with Tauri 2 that serves as a command center for interacting with Claude Code. It offers a visual GUI for managing Claude Code sessions, creating custom agents, tracking usage, and more. Users can navigate projects, create specialized AI agents, monitor usage analytics, manage MCP servers, create session checkpoints, edit CLAUDE.md files, and more. The tool bridges the gap between command-line tools and visual experiences, making AI-assisted development more intuitive and productive.

leetcode-py

A Python package to generate professional LeetCode practice environments. Features automated problem generation from LeetCode URLs, beautiful data structure visualizations (TreeNode, ListNode, GraphNode), and comprehensive testing with 10+ test cases per problem. Built with professional development practices including CI/CD, type hints, and quality gates. The tool provides a modern Python development environment with production-grade features such as linting, test coverage, logging, and CI/CD pipeline. It also offers enhanced data structure visualization for debugging complex structures, flexible notebook support, and a powerful CLI for generating problems anywhere.

routilux

Routilux is a powerful event-driven workflow orchestration framework designed for building complex data pipelines and workflows effortlessly. It offers features like event queue architecture, flexible connections, built-in state management, robust error handling, concurrent execution, persistence & recovery, and simplified API. Perfect for tasks such as data pipelines, API orchestration, event processing, workflow automation, microservices coordination, and LLM agent workflows.

mistral.rs

Mistral.rs is a fast LLM inference platform written in Rust. It supports inference on a variety of devices, quantization, and an easy-to-use application with an OpenAI-API-compatible HTTP server and Python bindings.

chat-ollama

ChatOllama is an open-source chatbot based on LLMs (Large Language Models). It supports a wide range of language models, including Ollama served models, OpenAI, Azure OpenAI, and Anthropic. ChatOllama supports multiple types of chat, including free chat with LLMs and chat with LLMs based on a knowledge base. Key features of ChatOllama include Ollama models management, knowledge bases management, chat, and commercial LLMs API keys management.

evi-run

evi-run is a powerful, production-ready multi-agent AI system built on Python using the OpenAI Agents SDK. It offers instant deployment, ultimate flexibility, built-in analytics, Telegram integration, and scalable architecture. The system features memory management, knowledge integration, task scheduling, multi-agent orchestration, custom agent creation, deep research, web intelligence, document processing, image generation, DEX analytics, and Solana token swap. It supports flexible usage modes like private, free, and pay mode, with upcoming features including NSFW mode, task scheduler, and automatic limit orders. The technology stack includes Python 3.11, OpenAI Agents SDK, Telegram Bot API, PostgreSQL, Redis, and Docker & Docker Compose for deployment.

botserver

General Bots is a self-hosted AI automation platform and LLM conversational platform focused on convention over configuration and code-less approaches. It serves as the core API server handling LLM orchestration, business logic, database operations, and multi-channel communication. The platform offers features like multi-vendor LLM API, MCP + LLM Tools Generation, Semantic Caching, Web Automation Engine, Enterprise Data Connectors, and Git-like Version Control. It enforces a ZERO TOLERANCE POLICY for code quality and security, with strict guidelines for error handling, performance optimization, and code patterns. The project structure includes modules for core functionalities like Rhai BASIC interpreter, security, shared types, tasks, auto task system, file operations, learning system, and LLM assistance.

openwhispr

OpenWhispr is an open source desktop dictation application that converts speech to text using OpenAI Whisper. It features both local and cloud processing options for maximum flexibility and privacy. The application supports multiple AI providers, customizable hotkeys, agent naming, and various AI processing models. It offers a modern UI built with React 19, TypeScript, and Tailwind CSS v4, and is optimized for speed using Vite and modern tooling. Users can manage settings, view history, configure API keys, and download/manage local Whisper models. The application is cross-platform, supporting macOS, Windows, and Linux, and offers features like automatic pasting, draggable interface, global hotkeys, and compound hotkeys.

asktube

AskTube is an AI-powered YouTube video summarizer and QA assistant that utilizes Retrieval Augmented Generation (RAG) technology. It offers a comprehensive solution with Q&A functionality and aims to provide a user-friendly experience for local machine usage. The project integrates various technologies including Python, JS, Sanic, Peewee, Pytubefix, Sentence Transformers, Sqlite, Chroma, and NuxtJs/DaisyUI. AskTube supports multiple providers for analysis, AI services, and speech-to-text conversion. The tool is designed to extract data from YouTube URLs, store embedding chapter subtitles, and facilitate interactive Q&A sessions with enriched questions. It is not intended for production use but rather for end-users on their local machines.

flashinfer

FlashInfer is a library for Large Language Models that provides high-performance implementations of LLM GPU kernels such as FlashAttention, PageAttention and LoRA. FlashInfer focuses on LLM serving and inference, and delivers state-of-the-art performance across diverse scenarios.

local-cocoa

Local Cocoa is a privacy-focused tool that runs entirely on your device, turning files into memory to spark insights and power actions. It offers features like fully local privacy, multimodal memory, vector-powered retrieval, intelligent indexing, vision understanding, hardware acceleration, focused user experience, integrated notes, and auto-sync. The tool combines file ingestion, intelligent chunking, and local retrieval to build a private on-device knowledge system. The ultimate goal includes more connectors like Google Drive integration, voice mode for local speech-to-text interaction, and a plugin ecosystem for community tools and agents. Local Cocoa is built using Electron, React, TypeScript, FastAPI, llama.cpp, and Qdrant.

ToolNeuron

ToolNeuron is a secure, offline AI ecosystem for Android devices that allows users to run private AI models and dynamic plugins fully offline, with hardware-grade encryption ensuring maximum privacy. It enables users to have an offline-first experience, add capabilities without app updates through pluggable tools, and ensures security by design with strict plugin validation and sandboxing.

ito

Ito is an intelligent voice assistant that provides seamless voice dictation to any application on your computer. It works in any app, offers global keyboard shortcuts, real-time transcription, and instant text insertion. It is smart and adaptive with features like custom dictionary, context awareness, multi-language support, and intelligent punctuation. Users can customize trigger keys, audio preferences, and privacy controls. It also offers data management features like a notes system, interaction history, cloud sync, and export capabilities. Ito is built as a modern Electron application with a multi-process architecture and utilizes technologies like React, TypeScript, Rust, gRPC, and AWS CDK.

finite-monkey-engine

FiniteMonkey is an advanced vulnerability mining engine powered purely by GPT, requiring no prior knowledge base or fine-tuning. Its effectiveness significantly surpasses most current related research approaches. The tool is task-driven, prompt-driven, and focuses on prompt design, leveraging 'deception' and hallucination as key mechanics. It has helped identify vulnerabilities worth over $60,000 in bounties. The tool requires PostgreSQL database, OpenAI API access, and Python environment for setup. It supports various languages like Solidity, Rust, Python, Move, Cairo, Tact, Func, Java, and Fake Solidity for scanning. FiniteMonkey is best suited for logic vulnerability mining in real projects, not recommended for academic vulnerability testing. GPT-4-turbo is recommended for optimal results with an average scan time of 2-3 hours for medium projects. The tool provides detailed scanning results guide and implementation tips for users.


For similar jobs

promptflow

**Prompt flow** is a suite of development tools designed to streamline the end-to-end development cycle of LLM-based AI applications, from ideation, prototyping, testing, evaluation to production deployment and monitoring. It makes prompt engineering much easier and enables you to build LLM apps with production quality.

deepeval

DeepEval is a simple-to-use, open-source LLM evaluation framework specialized for unit testing LLM outputs. It incorporates various metrics such as G-Eval, hallucination, answer relevancy, RAGAS, etc., and runs locally on your machine for evaluation. It provides a wide range of ready-to-use evaluation metrics, allows for creating custom metrics, integrates with any CI/CD environment, and enables benchmarking LLMs on popular benchmarks. DeepEval is designed for evaluating RAG and fine-tuning applications, helping users optimize hyperparameters, prevent prompt drifting, and transition from OpenAI to hosting their own Llama2 with confidence.

MegaDetector

MegaDetector is an AI model that identifies animals, people, and vehicles in camera trap images (which also makes it useful for eliminating blank images). This model is trained on several million images from a variety of ecosystems. MegaDetector is just one of many tools that aims to make conservation biologists more efficient with AI. If you want to learn about other ways to use AI to accelerate camera trap workflows, check out our of the field, affectionately titled "Everything I know about machine learning and camera traps".

leapfrogai

LeapfrogAI is a self-hosted AI platform designed to be deployed in air-gapped resource-constrained environments. It brings sophisticated AI solutions to these environments by hosting all the necessary components of an AI stack, including vector databases, model backends, API, and UI. LeapfrogAI's API closely matches that of OpenAI, allowing tools built for OpenAI/ChatGPT to function seamlessly with a LeapfrogAI backend. It provides several backends for various use cases, including llama-cpp-python, whisper, text-embeddings, and vllm. LeapfrogAI leverages Chainguard's apko to harden base python images, ensuring the latest supported Python versions are used by the other components of the stack. The LeapfrogAI SDK provides a standard set of protobuffs and python utilities for implementing backends and gRPC. LeapfrogAI offers UI options for common use-cases like chat, summarization, and transcription. It can be deployed and run locally via UDS and Kubernetes, built out using Zarf packages. LeapfrogAI is supported by a community of users and contributors, including Defense Unicorns, Beast Code, Chainguard, Exovera, Hypergiant, Pulze, SOSi, United States Navy, United States Air Force, and United States Space Force.

llava-docker

This Docker image for LLaVA (Large Language and Vision Assistant) provides a convenient way to run LLaVA locally or on RunPod. LLaVA is a powerful AI tool that combines natural language processing and computer vision capabilities. With this Docker image, you can easily access LLaVA's functionalities for various tasks, including image captioning, visual question answering, text summarization, and more. The image comes pre-installed with LLaVA v1.2.0, Torch 2.1.2, xformers 0.0.23.post1, and other necessary dependencies. You can customize the model used by setting the MODEL environment variable. The image also includes a Jupyter Lab environment for interactive development and exploration. Overall, this Docker image offers a comprehensive and user-friendly platform for leveraging LLaVA's capabilities.

carrot

The 'carrot' repository on GitHub provides a list of free and user-friendly ChatGPT mirror sites for easy access. The repository includes sponsored sites offering various GPT models and services. Users can find and share sites, report errors, and access stable and recommended sites for ChatGPT usage. The repository also includes a detailed list of ChatGPT sites, their features, and accessibility options, making it a valuable resource for ChatGPT users seeking free and unlimited GPT services.

TrustLLM

TrustLLM is a comprehensive study of trustworthiness in LLMs, including principles for different dimensions of trustworthiness, established benchmark, evaluation, and analysis of trustworthiness for mainstream LLMs, and discussion of open challenges and future directions. Specifically, we first propose a set of principles for trustworthy LLMs that span eight different dimensions. Based on these principles, we further establish a benchmark across six dimensions including truthfulness, safety, fairness, robustness, privacy, and machine ethics. We then present a study evaluating 16 mainstream LLMs in TrustLLM, consisting of over 30 datasets. The document explains how to use the trustllm python package to help you assess the performance of your LLM in trustworthiness more quickly. For more details about TrustLLM, please refer to project website.

AI-YinMei

AI-YinMei is an AI virtual anchor (VTuber) development tool (NVIDIA GPU version). It supports FastGPT knowledge-base chat with a complete LLM stack of [fastgpt] + [one-api] + [Xinference]; replies to bilibili live-stream bullet comments and welcome messages for viewers entering the stream; speech synthesis with Microsoft edge-tts, Bert-VITS2, and GPT-SoVITS; expression control through Vtuber Studio; stable-diffusion-webui paintings output to the OBS live room, with NSFW image filtering (public-NSFW-y-distinguish); web and image search via DuckDuckGo (requires a proxy/VPN) or Baidu image search (no proxy needed); an AI reply chat box [html plug-in]; AI singing with Auto-Convert-Music and a playlist [html plug-in]; dancing, expression video playback, head-touching actions, and gift-triggered actions; automatic dancing when singing starts and cyclic swaying during chat and singing; multi-scene, background-music, and automatic day/night scene switching; and, when singing and painting are enabled, lets the AI judge the content automatically.